In this assignment, you will simulate and train some very simple neural networks

using the Fast Artificial Neural Network software package.

The first task is to design a neural network that computes the logical OR

function. This function takes two inputs and gives an output of 1 if either

input is 1; otherwise, it gives an output of 0:

| Input 1 | Input 2 | Output |
| --- | --- | --- |
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 1 |

- Load fannExplorer. Using a web browser, open the URL http://ilinux1.eecs.berkeley.edu:2718/fannExplorer.html. You will see the Fast Artificial Neural Network Explorer (fannExplorer) running as a Macromedia Flash application in your browser.

- Load project files.

- File > Load Neural Network : Select or.net

- File > Load Training Data : Select or.train

- File > Load Test Data : Select or.test

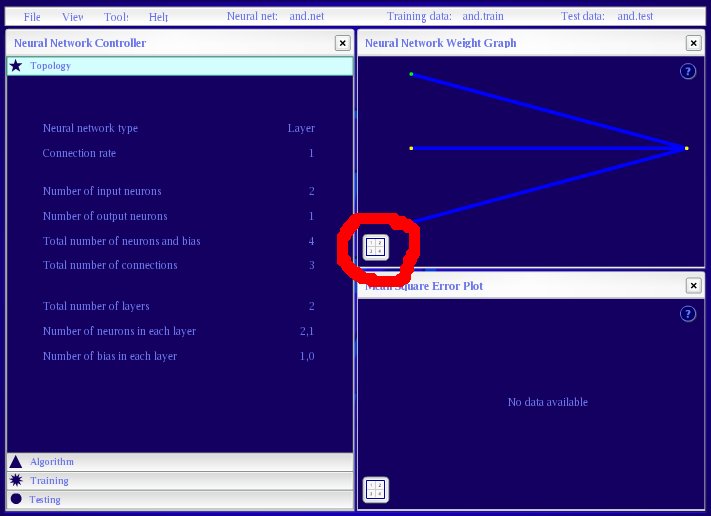

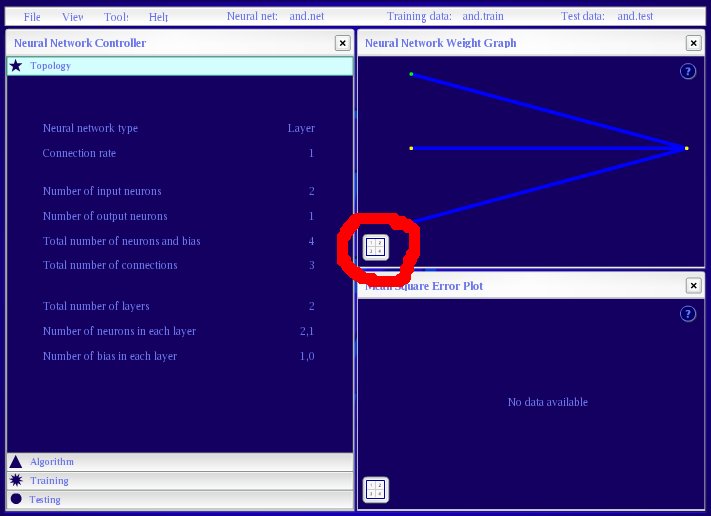

- Examine the network architecture and activation displays.

Notice the Neural Network Weight Graph in the upper-right window.

You see two input nodes (yellow), one bias node (green), and one output node.

Mousing over the question mark

brings up a helpful legend.

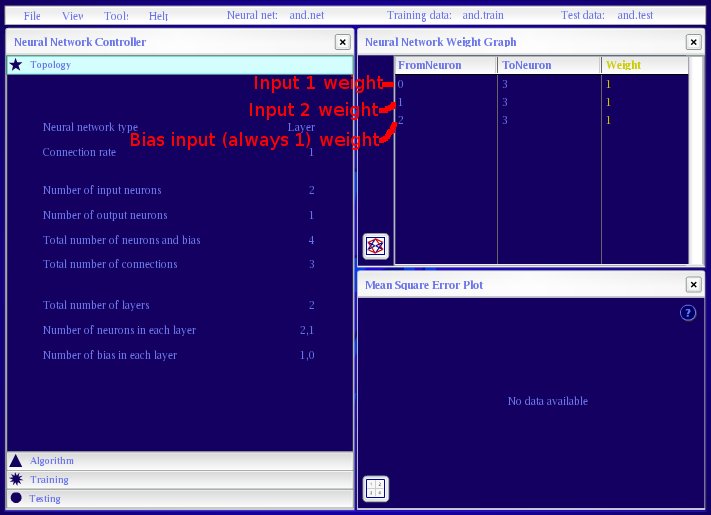

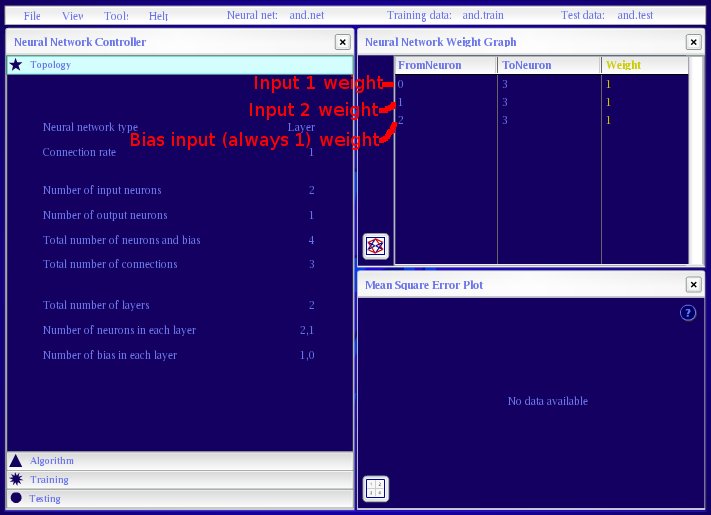

Click on the icon in the lower-left: now you see the network weights in the form of a table.

- Set the network to compute the OR function shown above.

Click on the weights in the network weights table to change them.

Set the weights such that the 4 possible OR inputs produce the correct OR outputs.

The bias node's output is fixed at 1. It can act as a threshold for the output

node.

This network is configured to use neurons with a "threshold" function: a neuron's output is 1 if the weighted sum of its inputs (including the bias) is at least 0, and 0 otherwise. Specifically,

    activation = sum(weight * input)
    if activation >= 0
        output = 1
    else
        output = 0
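The threshold rule can be sketched in a few lines of Python, which may help when checking hand-chosen weights before typing them into the weights table. This is only an illustration of the rule as stated in the text ("at least 0"), not fannExplorer's own code; the function names `threshold_unit` and `truth_table` are hypothetical, and the example weights are deliberately chosen to compute AND rather than the OR function asked for here.

```python
def threshold_unit(inputs, weights, bias_weight):
    """Return 1 if the weighted sum, including the bias, is at least 0; else 0."""
    # The bias node's output is fixed at 1, so it contributes bias_weight * 1.
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias_weight * 1
    return 1 if activation >= 0 else 0


def truth_table(weights, bias_weight):
    """Evaluate the unit on all four binary input patterns."""
    return {
        (a, b): threshold_unit((a, b), weights, bias_weight)
        for a in (0, 1)
        for b in (0, 1)
    }


# Illustrative weights only: these compute AND, not OR.
for pattern, output in truth_table((1.0, 1.0), -1.5).items():
    print(pattern, "->", output)
```

Writing out the activation for each of the four patterns in this way is also the kind of calculation the hand-in asks you to show.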

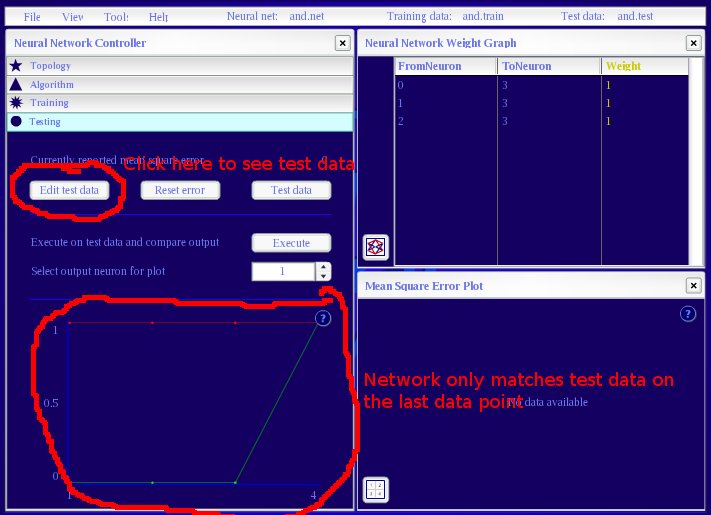

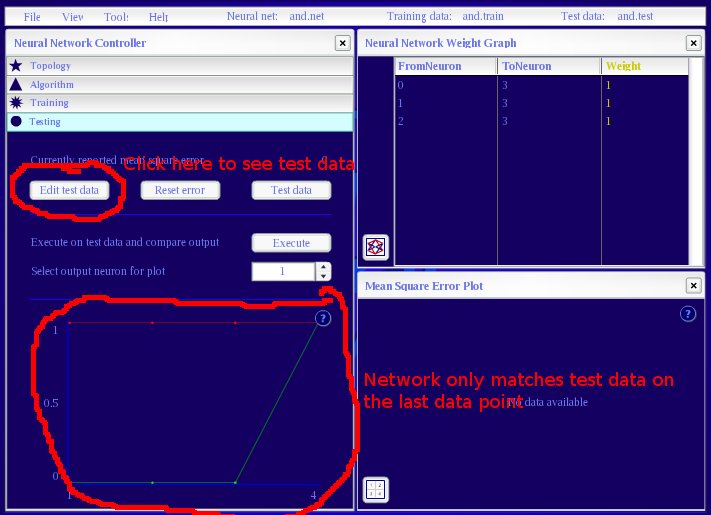

- Check the network behavior.

In the Neural Network Controller window, pull up the Testing pane.

Click on Execute and look at the resulting plot. The green line shows the desired output

and the red line shows the actual output. Verify that they match.

- Note: if you want to save your network, choose a filename that includes your student ID or some other unique identifier. Every student's networks are saved on the server, so a unique name keeps students from overwriting each other's saved networks.

To hand in:

- State the weights you chose.

- Report the output for each input pattern, and show how the network

works by illustrating the calculations that produced each output.

Now try to make the network learn the OR

function. Instead of defining the network by hand, you will set the system with

random initial weights that will be adjusted during training.

Although you probably don't know yet how exactly the program learns

these weights, you will by the end of next week: the algorithm is called

"back-propagation," and a short, non-mathematical description can be found

here.

2a. Learning without hidden nodes

- Randomize the weights.

In the Neural Network Controller window, pull up the Training pane.

Click on Randomize to assign small random weights to each edge. (The randomness

would be essential for neural nets that have hidden nodes.)

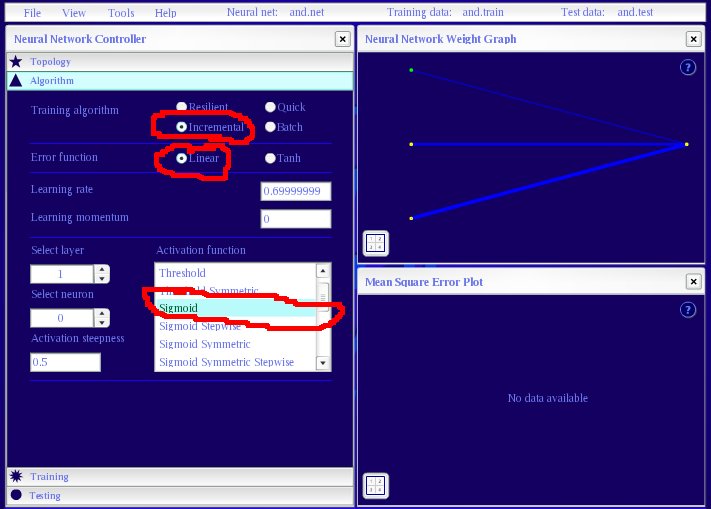

- Choose the algorithm.

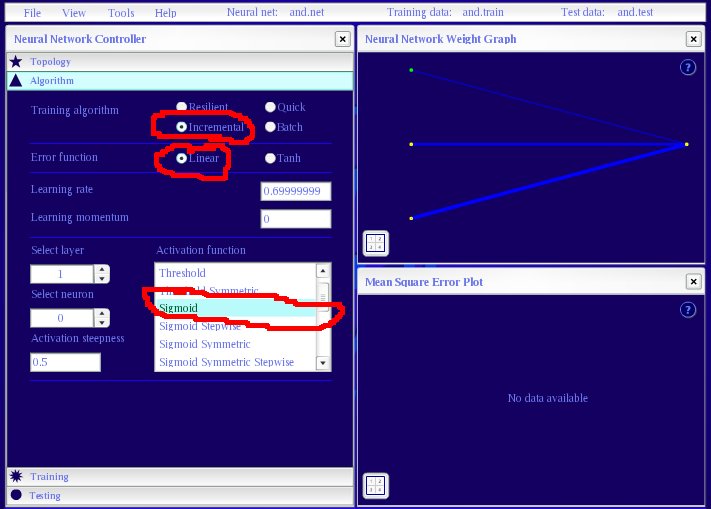

In the Neural Network Controller window, pull up the Algorithm pane.

Select Incremental for the training algorithm, and Linear for the error

function. Also select the neuron's activation function to be Sigmoid.

- Train the network. Click on Train. In the Error Plot in the lower-right window, note

the number of epochs trained and the final error value. You can click on the icon in the lower-left corner

to see the exact numbers. With Incremental as the training algorithm, each epoch consists of

a pass through all the training patterns, with the weights updated after each training pattern.

With Linear for the error function, the error in the error plot is the average of the squared error

for each pattern. You should leave the Maximum number of training epochs value alone. If you

make it too high, you can overload the server.

- Experiment with training options. In the Algorithm pane, experiment with different

values for the training algorithm, error function, learning rate, and learning momentum. Mousing

over the controls may elicit some helpful explanations.

Note how the settings affect the rate of convergence during training, and what weights are learned

by the network. Keep a record of the parameters you try and how they affect your results.
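To make the Incremental setting concrete, here is a minimal Python sketch of one way such training could look for the network without hidden nodes: weights are updated after every pattern, and the error reported per epoch is the average squared error over the patterns. It is a sketch under stated assumptions, not fannExplorer's implementation: it assumes a standard logistic sigmoid, a plain gradient (delta-rule) update with no momentum, initial weights drawn from [-0.1, 0.1], and a learning rate of 0.7. All names are hypothetical.

```python
import math
import random

OR_PATTERNS = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def predict(weights, pattern):
    """Output of a single sigmoid unit; the last weight belongs to the bias node."""
    inputs = tuple(pattern) + (1.0,)
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)))


def train_incremental(patterns, learning_rate=0.7, max_epochs=1000, seed=0):
    """Train one sigmoid unit, updating the weights after each pattern."""
    rng = random.Random(seed)
    weights = [rng.uniform(-0.1, 0.1) for _ in range(3)]  # assumed init range
    mse_history = []
    for _ in range(max_epochs):
        squared_errors = []
        for pattern, target in patterns:
            inputs = tuple(pattern) + (1.0,)
            output = predict(weights, pattern)
            error = target - output
            squared_errors.append(error * error)
            # Gradient step for squared error through the sigmoid.
            delta = error * output * (1.0 - output)
            weights = [w + learning_rate * delta * x for w, x in zip(weights, inputs)]
        # One epoch = one pass through all patterns; record the average squared error.
        mse_history.append(sum(squared_errors) / len(squared_errors))
    return weights, mse_history


weights, mse_history = train_incremental(OR_PATTERNS)
print("first-epoch error:", mse_history[0])
print("final error:", mse_history[-1])
```

Changing `learning_rate` or `seed` in this sketch is a rough offline analogue of the experiments with the Algorithm pane and the Randomize button.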

A few notes:

- You will need to choose a consistent criterion for what it means to learn in

this network. You might choose something based on the error in the error plot;

this would mean that the error falls below a particular threshold

each time. Alternatively, you could require that each pattern produces the right

output within some constant amount. (You can view this by looking at the

Test pane.)

- For whatever error criterion you choose, you should require that the network satisfy it within a fixed number of training epochs (for example, 1000). Thus, failing to learn means not meeting that criterion within that many epochs.
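The two kinds of criterion described in these notes can be written down as simple checks. The sketch below is one possible formulation; the function names and the default thresholds (0.01 for the average squared error, 0.2 for the per-pattern tolerance) are arbitrary assumptions chosen for illustration, not values prescribed by the assignment.

```python
def mean_squared_error(outputs, targets):
    """Average of the squared error over all patterns."""
    return sum((o - t) ** 2 for o, t in zip(outputs, targets)) / len(targets)


def learned_by_error(outputs, targets, max_error=0.01):
    """Criterion 1: the average squared error falls below a threshold."""
    return mean_squared_error(outputs, targets) < max_error


def learned_by_tolerance(outputs, targets, tolerance=0.2):
    """Criterion 2: every pattern's output is within a constant of its target."""
    return all(abs(o - t) <= tolerance for o, t in zip(outputs, targets))


# Hypothetical network outputs for the four OR patterns, for illustration only.
targets = [0, 1, 1, 1]
outputs = [0.1, 0.9, 0.9, 0.95]
print(learned_by_error(outputs, targets), learned_by_tolerance(outputs, targets))
```

Whichever check you adopt, apply it with the same thresholds and the same epoch limit to every run so that your results are comparable.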

To hand in:

- Briefly describe the learning criterion you used.

- Turn in a record of the parameters that you tried as well

as an impressionistic account of what happened. Include an example solution.

- For what range of settings does the network reliably learn the OR

function?

- For what range of settings does the network learn about 75% of the time?

(That is, for about 75% of your training runs with new, random initial weights.)

(Hint: you may want to look for correlations between the randomly

initialized weights you get and the resulting learning behavior.)
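If you keep your record of runs as a list of settings and outcomes, the fraction of successful runs per setting can be tallied with a short script. The sketch below assumes each record is a hypothetical tuple of (learning rate, momentum, whether the run met your criterion); the sample records are made-up placeholders that show the format, not real results.

```python
from collections import defaultdict


def success_rates(records):
    """Map each (learning_rate, momentum) setting to its fraction of successful runs."""
    counts = defaultdict(lambda: [0, 0])  # setting -> [successes, total runs]
    for learning_rate, momentum, learned in records:
        entry = counts[(learning_rate, momentum)]
        entry[0] += int(learned)
        entry[1] += 1
    return {setting: wins / runs for setting, (wins, runs) in counts.items()}


# Placeholder records for illustration only; replace them with your own runs.
example_records = [
    (0.7, 0.0, True),
    (0.7, 0.0, True),
    (0.7, 0.0, False),
    (0.7, 0.0, True),
]
print(success_rates(example_records))
```

A setting with a rate near 0.75 over a reasonable number of runs would be a candidate answer to the "about 75% of the time" question.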

2b. Learning with hidden nodes

- Reconfigure the network architecture to add two hidden nodes.

Select File > New Neural Network. Choose 2 input neurons, 1 output neuron,

1 hidden layer, and 2 neurons in the first hidden layer. Click Create.

You will see two bias nodes, one for the input layer and one for the hidden layer.

- Experiment with adjusting the same parameters.

Repeat (2a) with two intermediate units between the input and output.
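The structure of the new network, with one bias node feeding the hidden layer and a second feeding the output node, can be sketched as a forward pass. This is an illustrative sketch only: it assumes logistic sigmoid units in both layers and small random initial weights in [-0.1, 0.1], and the names `make_network` and `forward` are hypothetical rather than part of fannExplorer.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def make_network(rng, n_inputs=2, n_hidden=2):
    """Create random weights; each unit has one extra weight for its bias node."""
    hidden = [
        [rng.uniform(-0.1, 0.1) for _ in range(n_inputs + 1)]
        for _ in range(n_hidden)
    ]
    output = [rng.uniform(-0.1, 0.1) for _ in range(n_hidden + 1)]
    return hidden, output


def forward(network, inputs):
    """Propagate one input pattern through the hidden layer to the output node."""
    hidden_weights, output_weights = network
    layer_in = list(inputs) + [1.0]  # bias node for the input layer
    hidden_out = [
        sigmoid(sum(w * x for w, x in zip(unit_weights, layer_in)))
        for unit_weights in hidden_weights
    ]
    layer_hidden = hidden_out + [1.0]  # bias node for the hidden layer
    return sigmoid(sum(w * x for w, x in zip(output_weights, layer_hidden)))


network = make_network(random.Random(0))
for pattern in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(pattern, forward(network, pattern))
```

Counting the weights in this sketch (three per hidden unit plus three for the output node, nine in total) gives a sense of how many more parameters training must now adjust compared with the three weights in 2a.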

To hand in:

- Once again, turn in a record of the parameters that you

tried, a general account of what happened, and an example solution.

- How much does this new network help?

The second task is the logical SAME function, which is 1 if and only if the inputs are identical:

| Input 1 | Input 2 | Output |
| --- | --- | --- |
| 0 | 0 | 1 |
| 1 | 0 | 0 |
| 0 | 1 | 0 |
| 1 | 1 | 1 |

- Load same.train and same.test. Then repeat Parts 1 and 2 for the SAME function: design the weights by hand as in Part 1, then train the network as in Part 2, both without and with two hidden units.

To hand in:

- Turn in records of the parameters that you tried, a

general account of what happened, and an example solution.

- How do the results differ in this case? Explain why.