Lab 14: Spark

Due at 11:59pm on 12/03/2015.

Starter Files

Download lab14.zip. Inside the archive, you will find starter files for the questions in this lab, along with a copy of the OK autograder.

Submission

By the end of this lab, you should have submitted the lab with python3 ok --submit. You may submit more than once before the deadline; only the final submission will be graded.

- You will be conducting this lab on the Databricks Spark platform which can be accessed via a browser.

- After completing each question on Databricks, you will copy a token into lab14.py.

- After completing the lab, you may run OK as usual.

MapReduce

In this lab, we'll be writing MapReduce applications using Apache Spark.

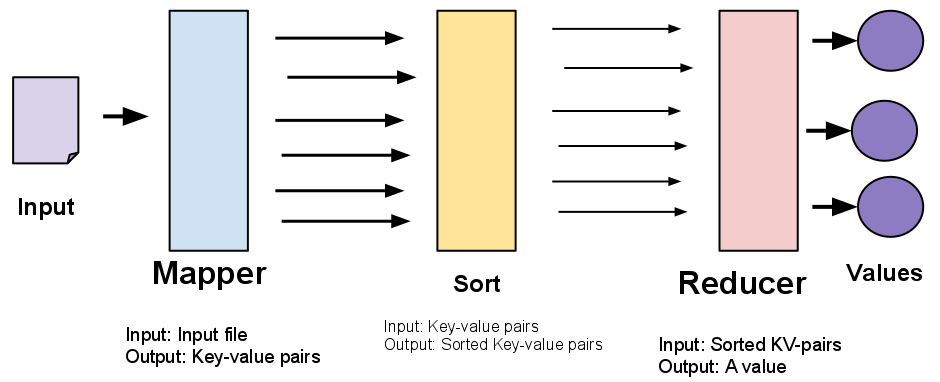

A MapReduce application is defined by a mapper function and a reducer function.

- A mapper takes a single input value and returns a list of key-value pairs.

- A reducer takes an iterable over all values for a common key and outputs a single value.
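To make the two roles concrete, here is a minimal pure-Python sketch of a word count written in the mapper/reducer style described above. It does not use Spark: the `map_reduce` driver, the function names, and the sample input are all assumptions made for illustration, and a real framework would run the map and reduce phases in parallel across many machines.

```python
from collections import defaultdict

def mapper(line):
    """Take a single input value (a line of text) and return a list of
    (key, value) pairs: one (word, 1) pair per word in the line."""
    return [(word.lower(), 1) for word in line.split()]

def reducer(values):
    """Take an iterable over all values for a common key and return
    a single value: here, the total count for that word."""
    return sum(values)

def map_reduce(inputs, mapper, reducer):
    """Hypothetical sequential driver standing in for the framework."""
    # Map phase: apply the mapper to every input and group values by key.
    groups = defaultdict(list)
    for value in inputs:
        for key, mapped_value in mapper(value):
            groups[key].append(mapped_value)
    # Reduce phase: collapse each key's values into a single value.
    return {key: reducer(values) for key, values in groups.items()}

lines = ["the quick brown fox", "the lazy dog", "The end"]
counts = map_reduce(lines, mapper, reducer)
print(counts["the"])  # 3
```

Note that the mapper never sees more than one input value and the reducer never sees more than one key, which is what lets a framework distribute the work.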

The following diagram summarizes the entire MapReduce pipeline:

Apache Spark and Databricks

Spark is a framework that builds on MapReduce. The AMPLab (here at Cal!) first developed this framework to improve an open source implementation of MapReduce that ran on Hadoop. In this lab, we will run Spark on the Databricks platform, which will demonstrate how you can write programs that harness parallel processing on big data.

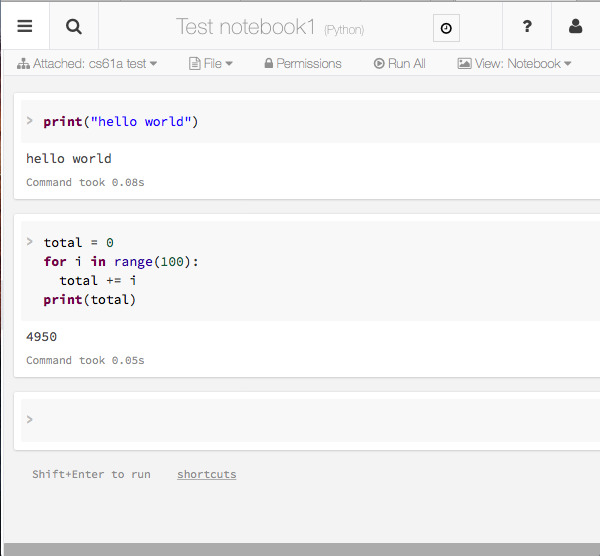

Databricks is a company that was founded out of UC Berkeley by the creators of Spark. They have been generous enough to donate computing resources for the entire class to write Spark code in Databricks notebooks. A Databricks notebook is very similar to an IPython notebook, which is a Python instance that you can interact with inside a web browser. Databricks and IPython notebooks can contain code, text, and even images!

Here is a screencap of a notebook. You can see that the code is organized into cells. We can run all the cells by pressing the 'Run All' button, or we can run a single cell by pressing Shift+Enter when the cursor is in the desired cell.

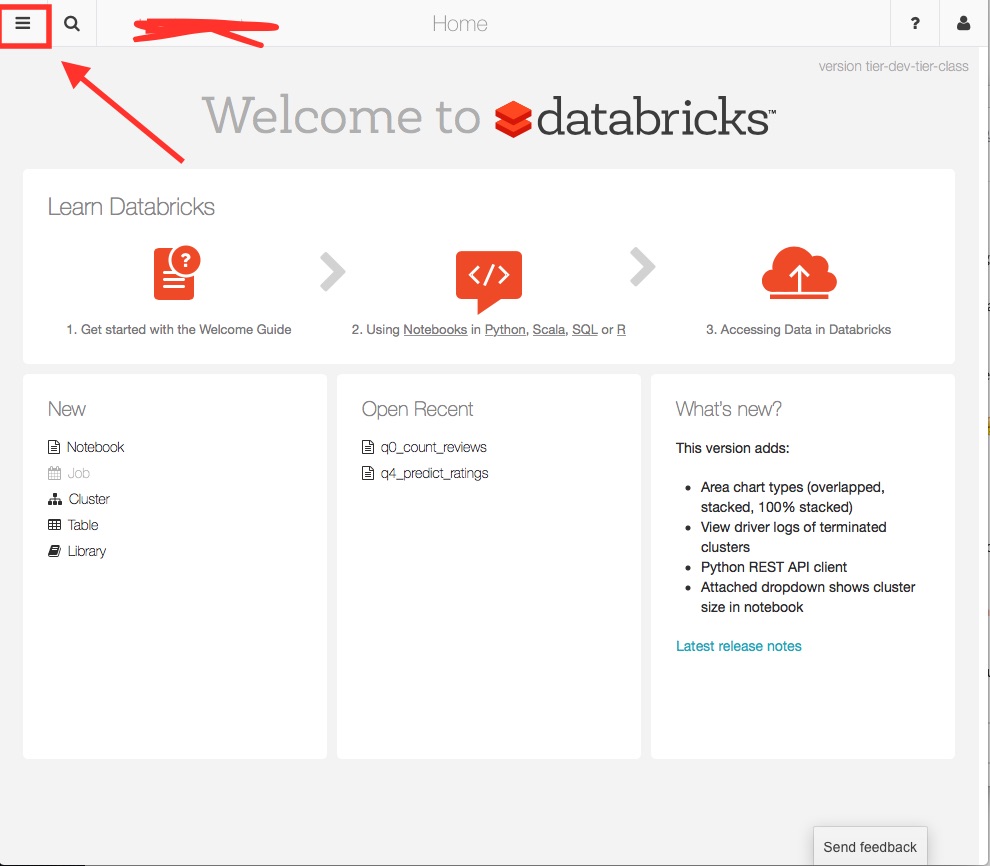

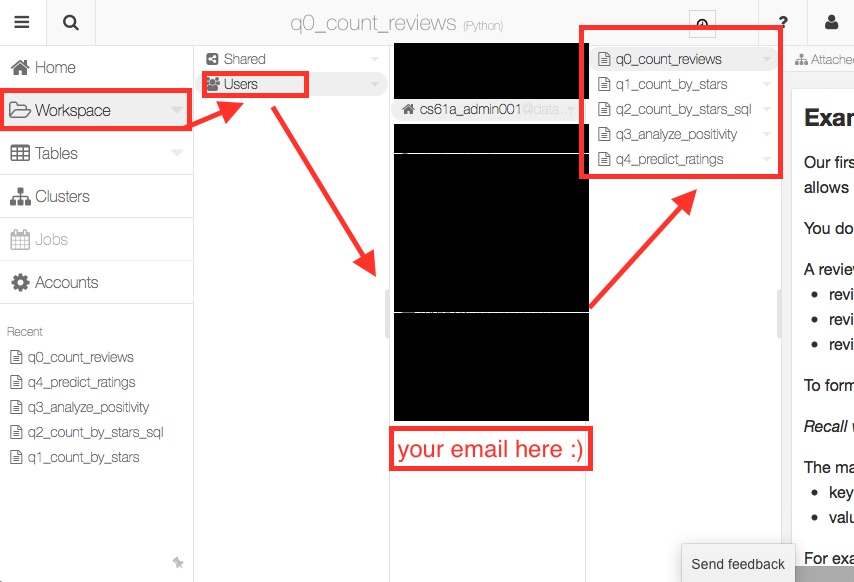

To access the notebooks, visit our CS61A shard here. Your username is your bCourses email address, where you should have received an email with a password.

You will be greeted by this page, where you should press the labeled button:

Once you do, follow the series of steps:

- Press Workspace

- Press Users

- Select your user account

- Select the lab question you wish to work on

Now that you know how to access the notebooks, check out Q0 as an example and complete Q1-Q3, which are mandatory. Q4 is optional, but you should complete it for your benefit.

For Q1-Q3: Once you complete a question and pass all of its tests, copy the token shown in the last cell into your lab14.py as a string.

FAQ

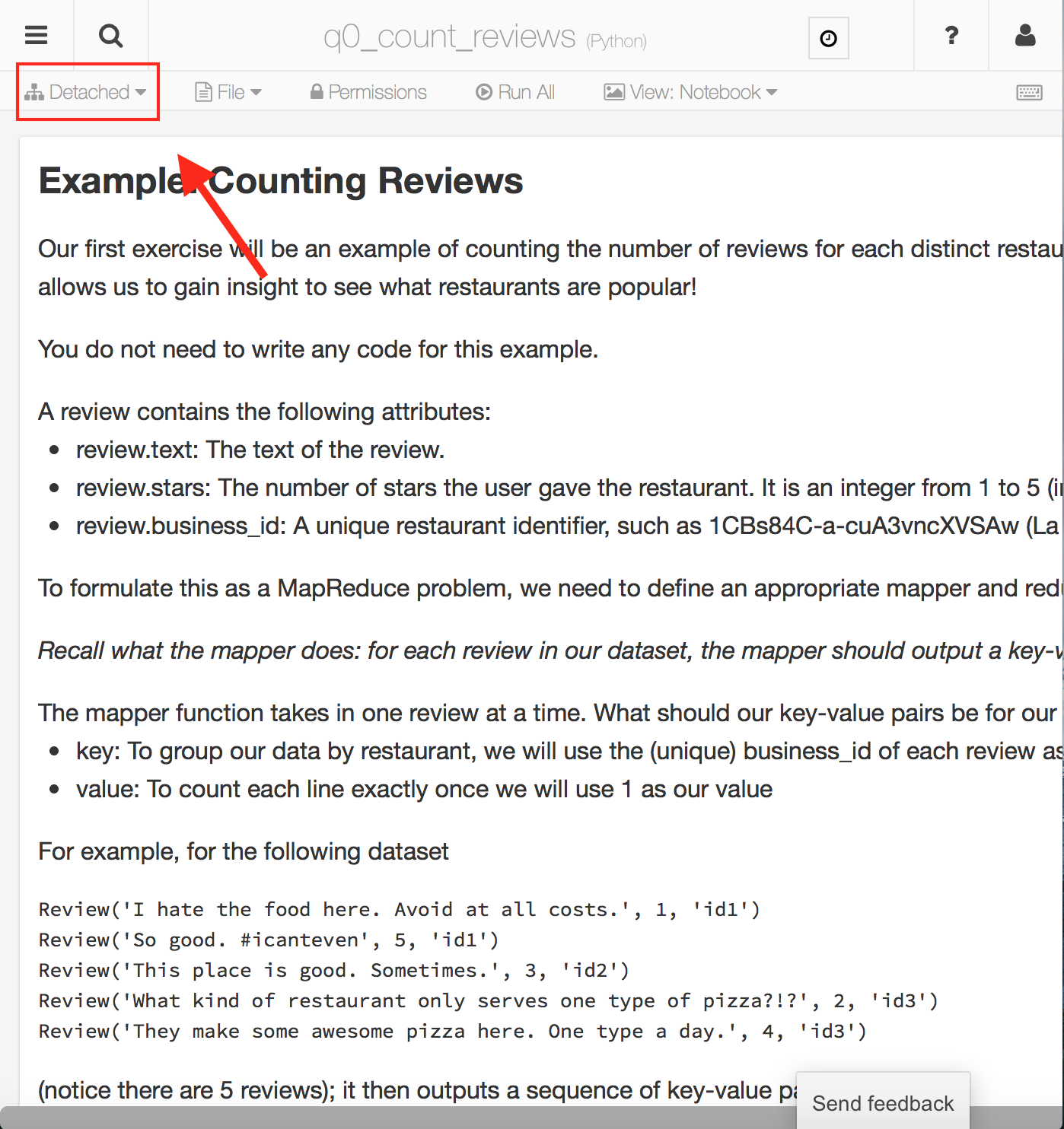

If your notebook says detached:

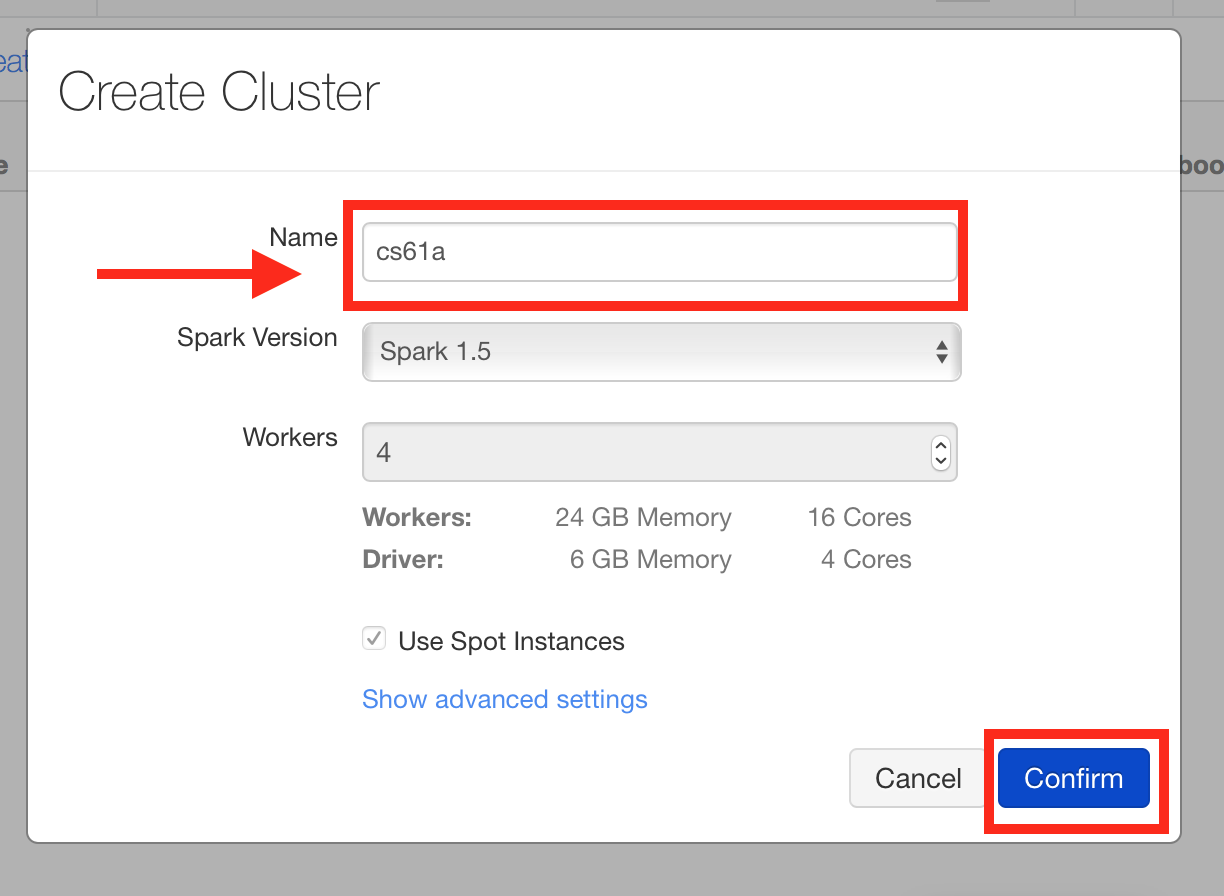

You should attach a cluster. If no cluster exists, then you should create one with the name "cs61a".

Creating a cluster

First, press the menu that says "detached":

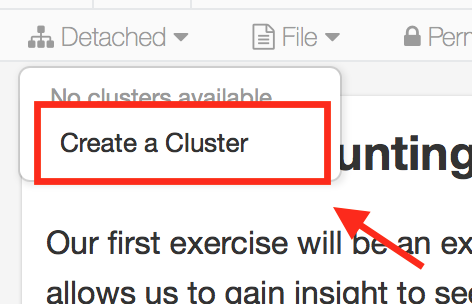

Next press "Create a Cluster":

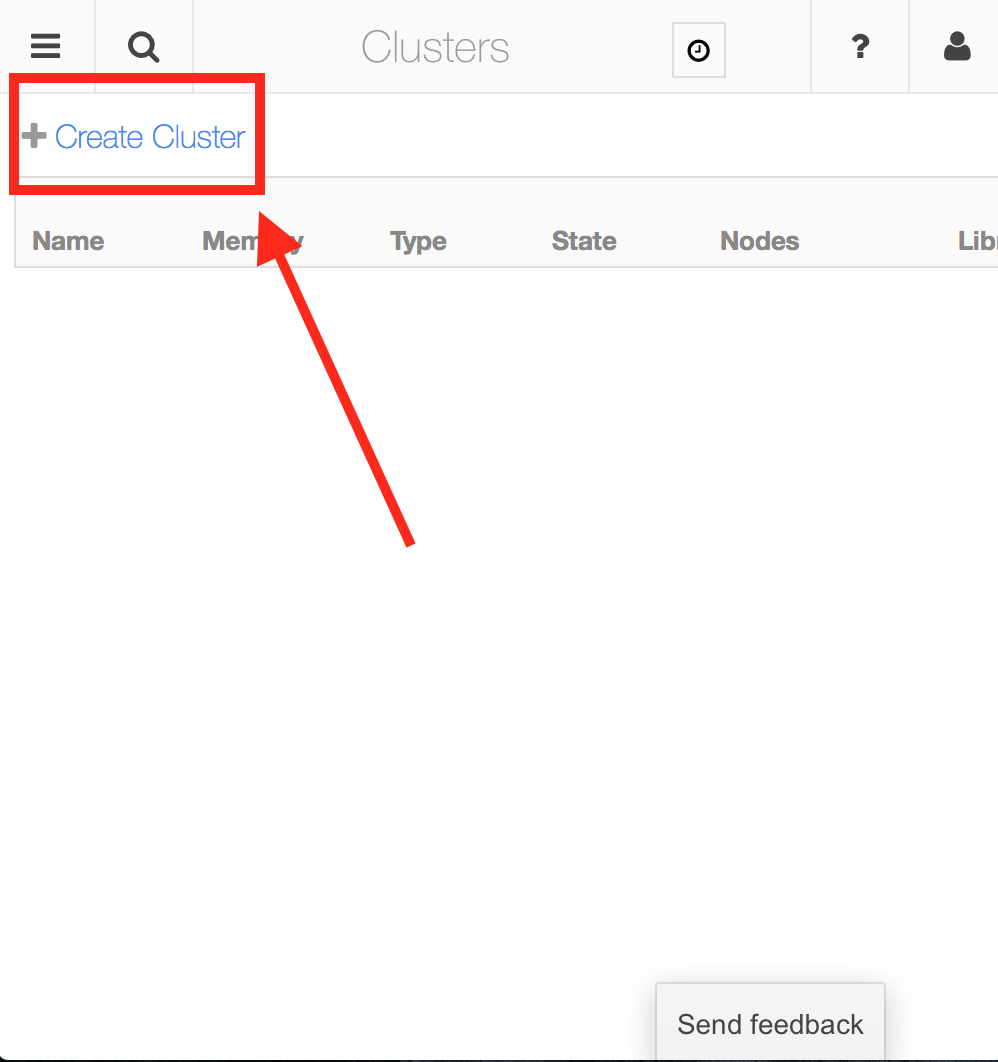

Then, on the new page, press "Create Cluster":

For the name, type in "cs61a" and press confirm.