I used both single-scale and multi-scale approaches for the images below. The multi-scale approach runs a naive search within a small delta window, then upscales the image and the shifts accordingly, and continues iteratively. The project also includes explorations of various color alterations: auto-contrast, auto white balance, and different bases for alignment. It includes some border experimentation, but in the end naive border detection beat a convolutional approach on ROI (efficiency per unit time).
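The coarse-to-fine search described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the function names (`ssd`, `naive_search`, `pyramid_align`), the sum-of-squared-differences score, the 2x2 block-mean downsampling, and the `delta` and `min_size` defaults are all assumptions. Note that `np.roll` wraps pixels around the edges, which a real implementation would likely mask or crop away.

```python
import numpy as np

def ssd(a, b):
    """Sum of squared differences between two equally sized arrays."""
    return float(np.sum((a - b) ** 2))

def naive_search(ref, img, center=(0, 0), delta=3):
    """Try every shift within +/-delta of `center`; return the best (dy, dx)."""
    best, best_score = center, np.inf
    for dy in range(center[0] - delta, center[0] + delta + 1):
        for dx in range(center[1] - delta, center[1] + delta + 1):
            # np.roll wraps around the edges (assumed acceptable for a sketch)
            score = ssd(ref, np.roll(img, (dy, dx), axis=(0, 1)))
            if score < best_score:
                best, best_score = (dy, dx), score
    return best

def halve(x):
    """Downsample by 2 in each dimension using 2x2 block means."""
    h, w = (x.shape[0] // 2) * 2, (x.shape[1] // 2) * 2
    return x[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def pyramid_align(ref, img, min_size=32, delta=3):
    """Solve at half resolution, double the shift, then refine locally."""
    if min(ref.shape) <= min_size:
        return naive_search(ref, img, (0, 0), delta)
    dy, dx = pyramid_align(halve(ref), halve(img), min_size, delta)
    return naive_search(ref, img, (2 * dy, 2 * dx), delta)
```

The returned `(dy, dx)` is the roll to apply to `img` so that it lines up with `ref`. Because each level only searches a `delta` window around the doubled coarse estimate, the cost stays small even for large displacements.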

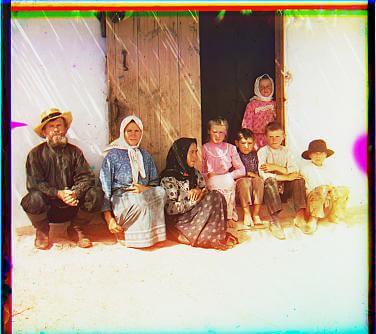

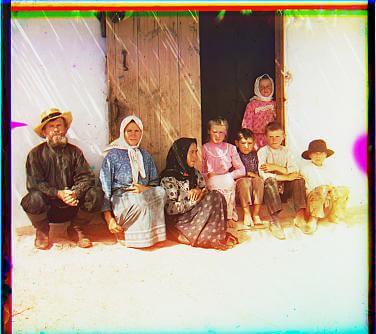

Problems: I found many issues with both Emir and Village, so I cut off margins more aggressively (lower thresholds, bigger margin with the naive cut). The colors in the others looked pale, so I pursued more color alterations below.
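One way a naive border cut with thresholds and an extra margin might look is sketched below. The write-up does not spell out the actual rule, so everything here is an assumption: the helper names (`border_width`, `naive_crop`), the idea of flagging edge rows and columns whose mean is nearly black or nearly white, and the default values for `dark`, `bright`, `max_frac`, and `extra`.

```python
import numpy as np

def border_width(means, dark, bright, limit):
    """Count leading entries of `means` that are nearly black or nearly white."""
    n = 0
    while n < limit and (means[n] < dark or means[n] > bright):
        n += 1
    return n

def naive_crop(channel, dark=0.15, bright=0.9, max_frac=0.2, extra=2):
    """Trim border-like rows/columns from each side, plus a fixed extra margin."""
    h, w = channel.shape
    rows, cols = channel.mean(axis=1), channel.mean(axis=0)
    top = border_width(rows, dark, bright, int(h * max_frac))
    bottom = border_width(rows[::-1], dark, bright, int(h * max_frac))
    left = border_width(cols, dark, bright, int(w * max_frac))
    right = border_width(cols[::-1], dark, bright, int(w * max_frac))
    # `extra` is the hypothetical knob for cutting more aggressively
    return channel[top + extra:h - bottom - extra, left + extra:w - right - extra]
```

In this sketch, cutting "more aggressively" would correspond to loosening the `dark` and `bright` thresholds so that more edge rows count as border, or raising `extra`.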

Bells and Whistles: (1) auto-contrast, (2) auto white balance, (3) different basis for colors (no visible improvement), and (4) different border algorithms (no visible improvement).
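For readers unfamiliar with the first two items, here is a minimal sketch of common versions of each. The exact variants used in this project are not stated, so the percentile stretch for auto-contrast, the gray-world rule for white balance, and the names `auto_contrast` and `gray_world` are assumptions. Images are assumed to be float arrays in the range 0 to 1.

```python
import numpy as np

def auto_contrast(img, low=1.0, high=99.0):
    """Stretch intensities so the low/high percentiles map to 0 and 1."""
    lo, hi = np.percentile(img, [low, high])
    if hi <= lo:
        return img.copy()  # flat image: nothing to stretch
    return np.clip((img - lo) / (hi - lo), 0.0, 1.0)

def gray_world(img):
    """Scale each channel of an HxWx3 image so its mean matches the overall mean."""
    means = img.reshape(-1, 3).mean(axis=0)
    gains = means.mean() / np.maximum(means, 1e-8)
    return np.clip(img * gains, 0.0, 1.0)
```

The percentile stretch ignores a small fraction of extreme pixels, which helps when leftover border pixels would otherwise pin the range. Gray-world assumes the scene averages to a neutral gray, which may not hold for every plate.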

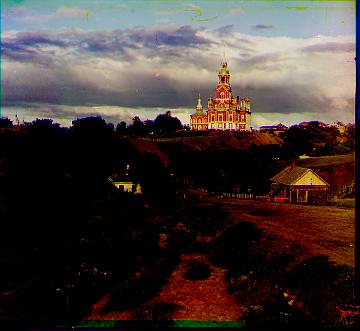

Failures: The algorithm did not align Village and Emir correctly. Looking at the images, the difference in darkness is just too large. I suspect an edge detector or gradient-based method would be more effective. Personally, I was more interested in exploring color, though.
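As an untested illustration of the gradient-based idea suggested above (it was not part of this project), one could align on gradient magnitude instead of raw intensity. The function name `gradient_magnitude` is hypothetical, and whether this actually fixes Emir and Village is an assumption.

```python
import numpy as np

def gradient_magnitude(channel):
    """Per-pixel gradient magnitude from finite differences."""
    gy, gx = np.gradient(channel.astype(float))
    return np.hypot(gx, gy)
```

A constant brightness offset between two channels disappears in the gradient, so the alignment score would depend on where edges fall rather than on how dark each plate is.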

name, dx1, dy1, t1, dx2, dy2, t2

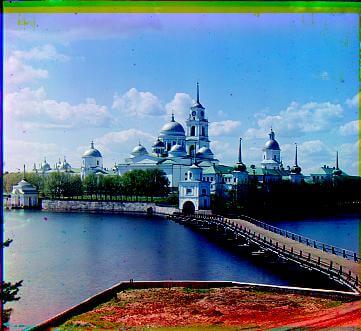

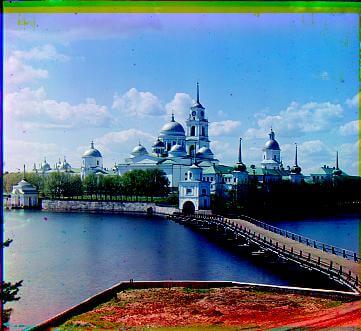

Cathedral, 1, 0, 0.69, -2, 5, 0.68

Emir, -17, 136, 12.3, 290, 186, 11.5, 90.95

Harvesters, -3, 59, 100.65, 3, -105, 100.83

Icon, -5, 47, 110.17, -23, 300, 104.92

Lady, -10, 37, 101.93, 6, -50, 104.3

Monastery, 1, -1, 0.72, -2, 10, 0.89

Nativity, 0, -3, 0.72, -1, 4, 0.78

Self_Portrait, 7, 91, 104.88, 2, -44, 105.52

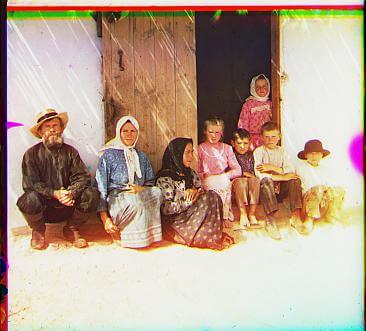

Settlers, -1, 1, 0.73, 0, 0, 0.89

Three Generations, 0, 51, 99.41, -5, -46, 99.47

Train, -3720, -3132, 98.30, 7, -97, 95.80

Turkmen, 6, 53, 116.65, -4, -50, 104.87

Village, -7, -1, 123, 13.47, -67, 183, 11.44

00090r, -212, -190, 0.78, -215, -65, 0.75

01790r, -207, -190, 0.73, -207, -179, 0.73

01043r, -1, 12, 0.04, -1, 26, 0.05