Part 1: Frequency Domain

Part 1.1: Warmup

Overview

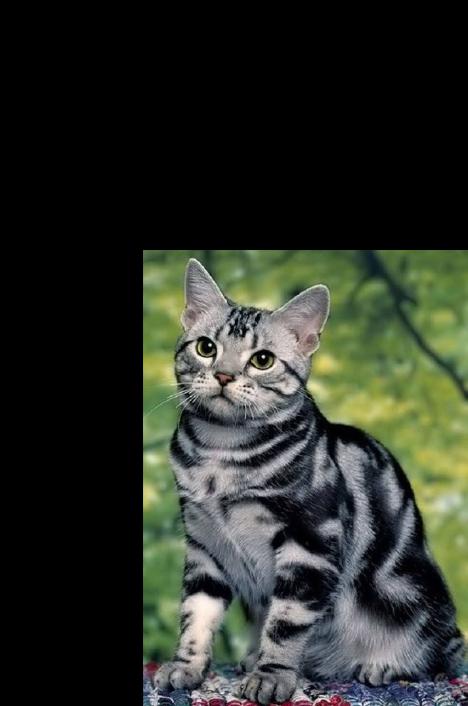

For the warmup, the task was to take a blurry image and sharpen it using the unsharp masking technique. A Gaussian filter extracts the low frequencies of the image, and subtracting these from the original image leaves the high frequencies. These high frequencies are then added back (possibly scaled) to the original image. Because the "sharpness", or edges, of an image lives essentially in its high frequencies, boosting them makes the image appear sharper.
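The steps above can be sketched in a few lines of Python. This is only an illustration, not the project's actual code: the function name `unsharp_mask`, the parameters `sigma` and `alpha`, and the choice of NumPy with `scipy.ndimage.gaussian_filter` are all assumptions, and the image is assumed to be a single-channel float array in [0, 1].

```python
import numpy as np
from scipy.ndimage import gaussian_filter


def unsharp_mask(image, sigma=2.0, alpha=1.0):
    """Sharpen a grayscale float image in [0, 1] (hypothetical helper).

    sigma: width of the Gaussian low-pass filter (assumed value).
    alpha: how strongly the high frequencies are boosted (assumed value).
    """
    image = np.asarray(image, dtype=np.float64)
    low = gaussian_filter(image, sigma=sigma)  # low frequencies
    high = image - low                         # high frequencies (edges, detail)
    # Add the scaled high frequencies back and keep the result displayable.
    return np.clip(image + alpha * high, 0.0, 1.0)
```

With `alpha=0` the image comes back unchanged; larger values exaggerate edges more. For a color image, one option would be to run this on each channel separately.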

Results


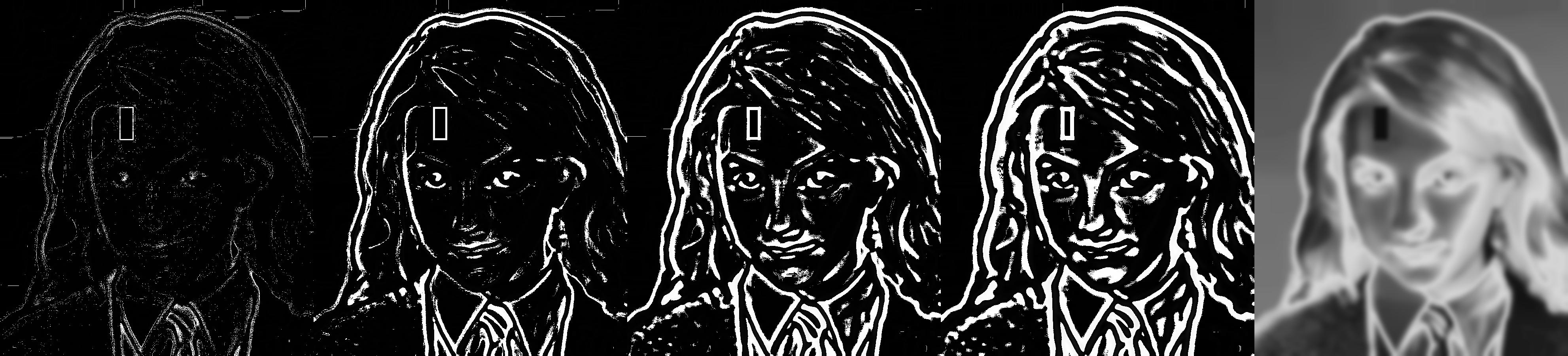

Part 1.2: Hybrid Image

Overview

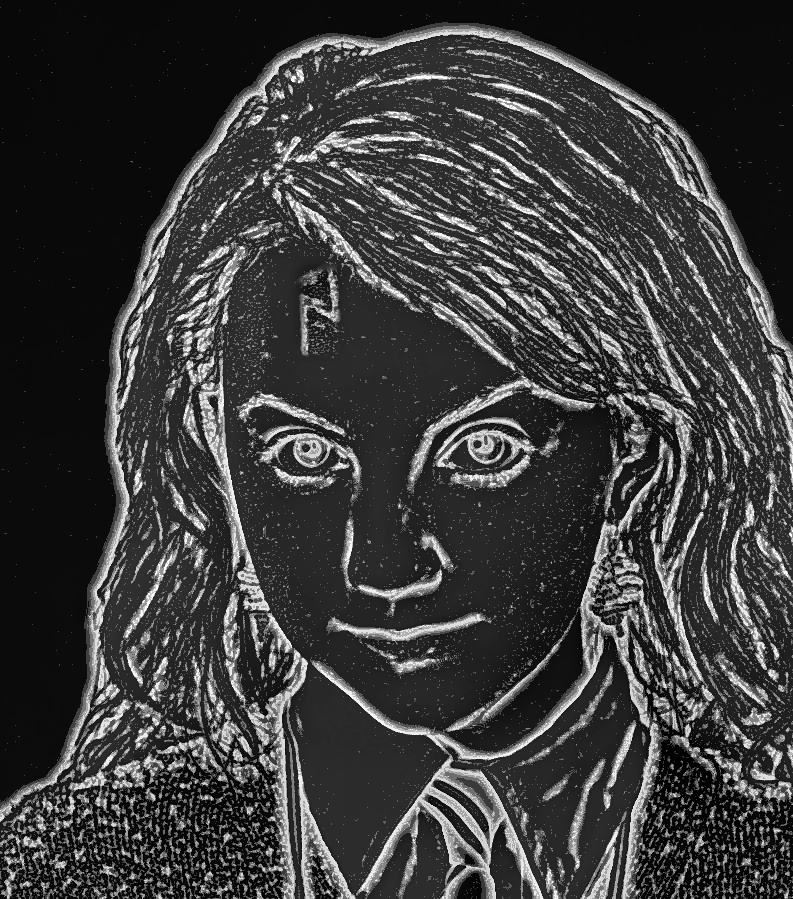

Hybrid images are static images that appear different depending on the distance from which they are viewed. For humans, high frequencies dominate perception when they are visible, but at a distance, only the low frequencies can be resolved. Therefore, by combining the high frequencies of one image with the low frequencies of another, the perceived image changes between close-up and far-away viewing.
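A rough sketch of that combination, plus the log-magnitude Fourier view used for the frequency analysis in the results, might look like the following. The names `hybrid_image` and `log_magnitude`, the two cutoff values, and the use of NumPy/SciPy are assumptions for illustration; both inputs are assumed to be already aligned, same-sized grayscale float arrays in [0, 1].

```python
import numpy as np
from scipy.ndimage import gaussian_filter


def hybrid_image(im_far, im_near, sigma_low=6.0, sigma_high=3.0):
    """Combine the low frequencies of im_far with the high frequencies of im_near.

    sigma_low / sigma_high are assumed cutoffs; in practice they are tuned per pair.
    """
    im_far = np.asarray(im_far, dtype=np.float64)
    im_near = np.asarray(im_near, dtype=np.float64)
    low = gaussian_filter(im_far, sigma=sigma_low)               # seen from far away
    high = im_near - gaussian_filter(im_near, sigma=sigma_high)  # seen up close
    return np.clip(low + high, 0.0, 1.0)


def log_magnitude(image, eps=1e-8):
    """Log magnitude of the 2D Fourier transform, zero frequency at the center."""
    spectrum = np.fft.fftshift(np.fft.fft2(image))
    return np.log(np.abs(spectrum) + eps)  # eps avoids log(0)
```

Calling `log_magnitude` on the inputs, the filtered images, and the hybrid gives the kind of frequency plots shown alongside the results.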

Results


Input images:

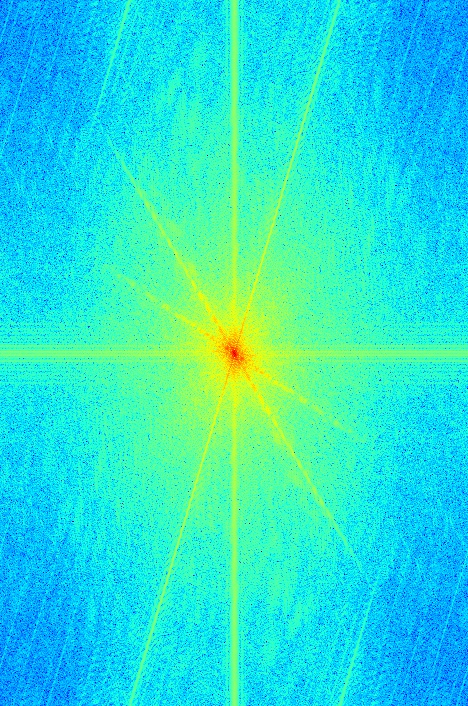

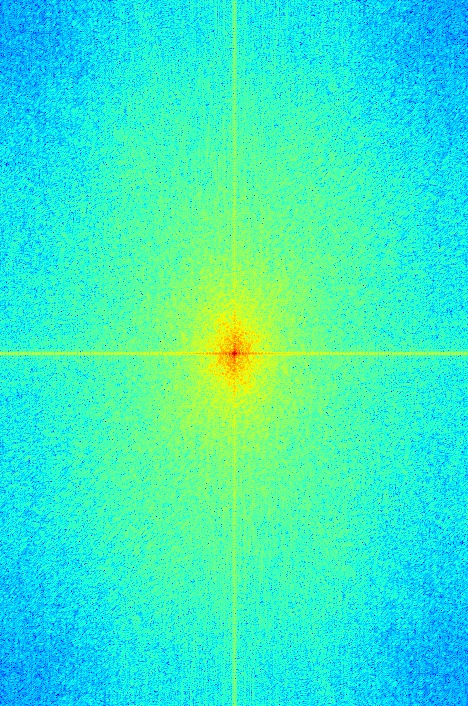

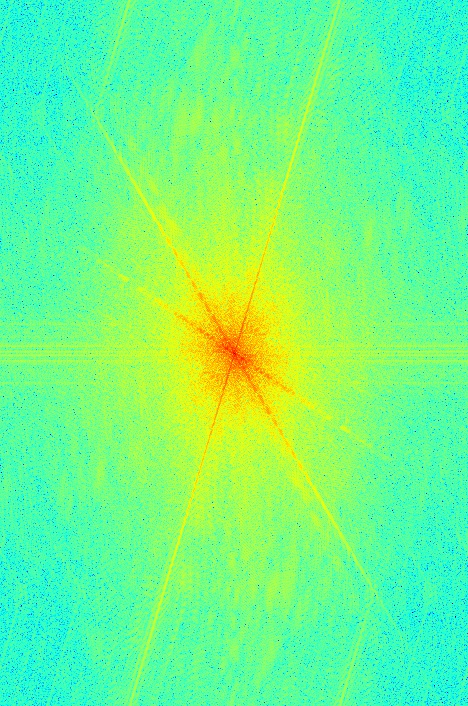

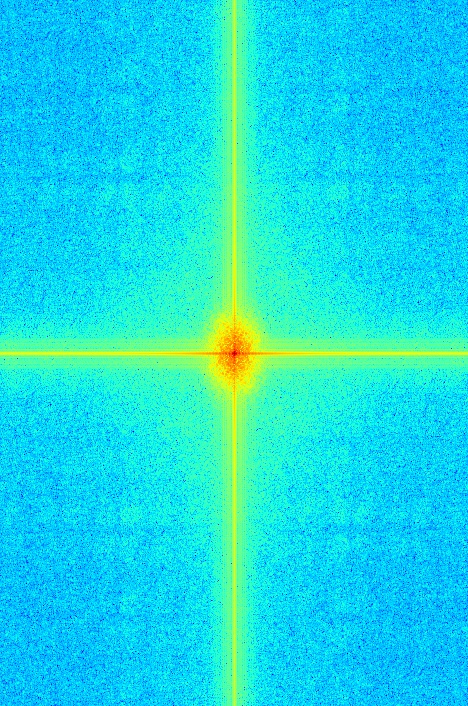

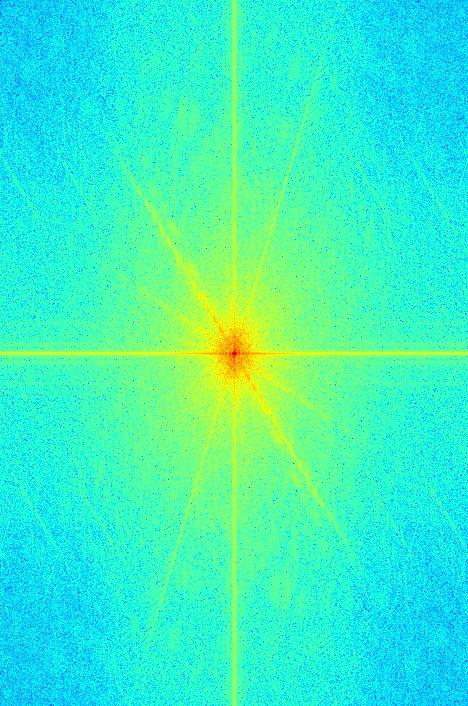

Images + Frequency Analysis (log magnitude of Fourier transform) after alignment:

Filtered Images + Frequency Analysis:

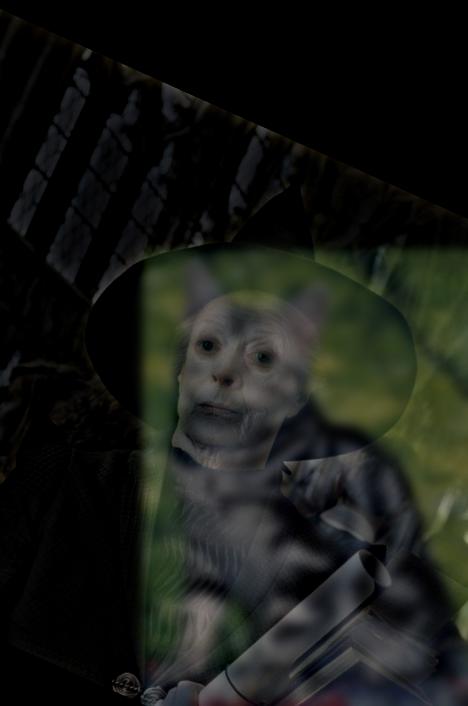

Resulting Hybrid Image + Frequency Analysis:

Additional Results

The Hermione expression hybrid image actually does work alright - from up close, you can see her mouth turned down, and from far away, you see her mouth turned up. The problem is, because the 2 input images were very different in size, once they were aligned, the mouths ended up being in rather different positions (I decided to align by the eyes, so Hermione wouldn't look like a multi-eyed alien). So rather than having one sad mouth close-up and one happy mouth far away, it looks kind of like Hermione has 2 mouths, even from close-up. Which, though alright, isn't really what I was going for.

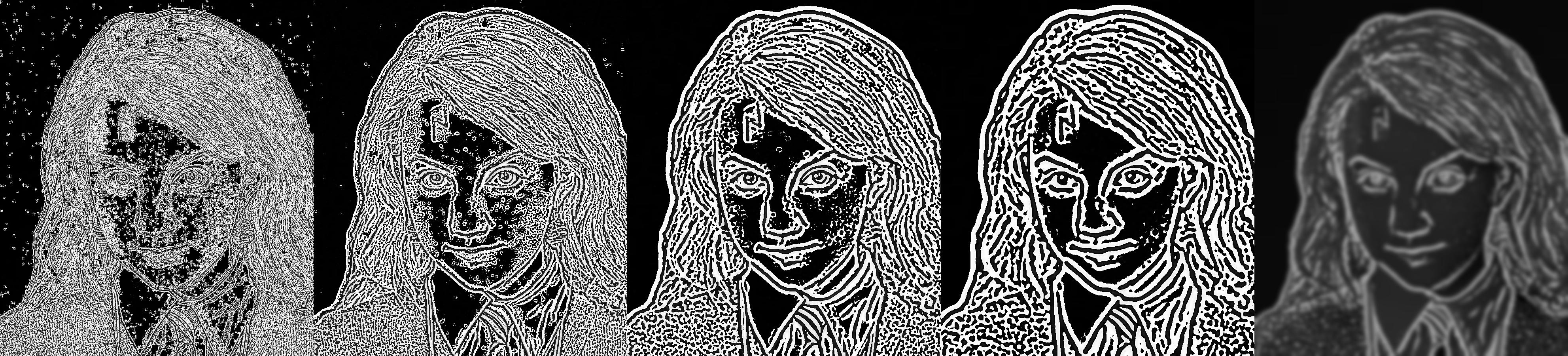

Part 1.3: Gaussian and Laplacian Stacks

Overview

A Gaussian stack is a stack of copies of a single image, to which a low-pass filter (Gaussian filter) is repeatedly applied without downsampling. Thus, each level keeps fewer and fewer of the image's high frequencies, and the image appears smoother and smoother.

A Laplacian stack is built from the same repeatedly filtered image, but each level keeps what the low-pass filter removed: the difference between two consecutive levels of the Gaussian stack. Thus, the stack consists of the different bands of frequencies of the image, starting from the high frequencies, and ending with all the remaining lower frequencies.

These stacks can be used to analyze the frequencies of images.
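Both stacks can be sketched as below. The function names, the number of levels, and the fixed `sigma` per level are assumptions made for illustration (some implementations increase sigma at each level instead); the input is assumed to be a grayscale float array.

```python
import numpy as np
from scipy.ndimage import gaussian_filter


def gaussian_stack(image, levels=5, sigma=2.0):
    """Level 0 is the image; each later level blurs the previous one again."""
    stack = [np.asarray(image, dtype=np.float64)]
    for _ in range(levels - 1):
        stack.append(gaussian_filter(stack[-1], sigma=sigma))
    return stack


def laplacian_stack(image, levels=5, sigma=2.0):
    """Band-pass levels (differences of Gaussian levels) plus a low-pass residual."""
    g = gaussian_stack(image, levels, sigma)
    bands = [g[i] - g[i + 1] for i in range(levels - 1)]
    bands.append(g[-1])  # the remaining low frequencies
    return bands
```

Because the differences telescope, summing every level of the Laplacian stack gives back the original image, which is a handy sanity check.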

Results


Part 1.4: Multiresolution Blending

Overview

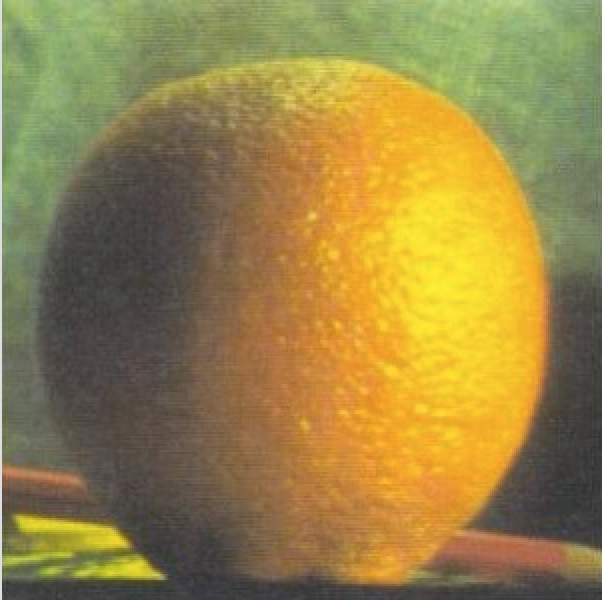

When 2 images are blended together, the line where they join is called a seam. We can make this seam smooth by gradually transitioning from one image to the other around it. Using multiresolution blending, we make this transition separately at each frequency band, making for a much smoother seam.
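One way to sketch this per-band blending is with Laplacian stacks of the two images and a Gaussian stack of the mask, so that the mask gets softer as the frequency band gets lower. Everything named here (`multires_blend`, `_gaussian_stack`, `levels`, `sigma`) is a hypothetical choice for illustration, and the inputs are assumed to be same-sized grayscale float arrays in [0, 1], with `mask` equal to 1 where the first image should show.

```python
import numpy as np
from scipy.ndimage import gaussian_filter


def _gaussian_stack(image, levels, sigma):
    # Repeatedly blur without downsampling.
    stack = [np.asarray(image, dtype=np.float64)]
    for _ in range(levels - 1):
        stack.append(gaussian_filter(stack[-1], sigma=sigma))
    return stack


def _laplacian_stack(image, levels, sigma):
    # Differences of consecutive Gaussian levels, plus the low-pass residual.
    g = _gaussian_stack(image, levels, sigma)
    return [g[i] - g[i + 1] for i in range(levels - 1)] + [g[-1]]


def multires_blend(im_a, im_b, mask, levels=5, sigma=2.0):
    """Blend im_a (where mask is 1) with im_b (where mask is 0), band by band."""
    la = _laplacian_stack(im_a, levels, sigma)
    lb = _laplacian_stack(im_b, levels, sigma)
    gm = _gaussian_stack(mask, levels, sigma)  # progressively softer mask
    out = np.zeros_like(la[0])
    for band_a, band_b, m in zip(la, lb, gm):
        out += m * band_a + (1.0 - m) * band_b
    return np.clip(out, 0.0, 1.0)
```

The sharp mask is applied to the finest details and the blurriest mask to the coarsest band, so fine texture switches over quickly while broad color and lighting shift gradually.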

Results

Part 2: Gradient Domain Fusion

Overview

In this part, we seek to use gradient domain fusion to seamlessly blend an object from a source image into a target image. To avoid obvious seams, we focus on the gradients of the source image, instead of the raw intensity of the pixels. Since human perception is generally more sensitive to gradients than to the actual intensity values, we find values for the target pixels that most closely match the gradients of our source image.

This technique, known as Poisson blending, uses least squares to find the appropriate values for the pixels. Though we are deliberately ignoring the source pixel intensities, we preserve the source gradients in the blended image, leading to a smooth, seamless new image.

Part 2.1: Toy Problem

Overview

To warm up for blending images with Poisson blending, we first start with a toy problem: recreate an image from its original gradients.

To do this, we compute the x and y gradients from an image s, then use these gradient values, and the intensity of the top-left corner, to solve a least squares problem for the pixel intensities of the "new" image.

Our objectives can be summarized as follows (s is the source image, and v is the "new" image):

1. Minimize ( v(x+1,y)-v(x,y) - (s(x+1,y)-s(x,y)) )^2 , aka "let the x-gradients of v match the x-gradients of s".

2. Minimize ( v(x,y+1)-v(x,y) - (s(x,y+1)-s(x,y)) )^2 , aka "let the y-gradients of v match the y-gradients of s".

3. Minimize (v(0,0)-s(0,0))^2 , aka "let the top left corners match in color".
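The three objectives above can be stacked into one sparse system A v = b, one row per objective, and handed to a least squares solver. The sketch below is an assumption about how this could be set up, not the project's actual code: the function name, the loop-based construction, and the choice of `scipy.sparse.linalg.lsqr` are all illustrative. Note that arrays are indexed `[y, x]`, so `s[y, x + 1]` corresponds to s(x+1,y).

```python
import numpy as np
from scipy.sparse import lil_matrix
from scipy.sparse.linalg import lsqr


def reconstruct_from_gradients(s):
    """Rebuild a grayscale image from its gradients and top-left pixel."""
    s = np.asarray(s, dtype=np.float64)
    h, w = s.shape
    idx = np.arange(h * w).reshape(h, w)   # pixel (y, x) -> unknown number
    n_eq = h * (w - 1) + (h - 1) * w + 1
    A = lil_matrix((n_eq, h * w))          # sparse: only 1 or 2 entries per row
    b = np.zeros(n_eq)
    e = 0
    # Objective 1: x-gradients of v match x-gradients of s.
    for y in range(h):
        for x in range(w - 1):
            A[e, idx[y, x + 1]] = 1.0
            A[e, idx[y, x]] = -1.0
            b[e] = s[y, x + 1] - s[y, x]
            e += 1
    # Objective 2: y-gradients of v match y-gradients of s.
    for y in range(h - 1):
        for x in range(w):
            A[e, idx[y + 1, x]] = 1.0
            A[e, idx[y, x]] = -1.0
            b[e] = s[y + 1, x] - s[y, x]
            e += 1
    # Objective 3: top-left corners match.
    A[e, idx[0, 0]] = 1.0
    b[e] = s[0, 0]
    v = lsqr(A.tocsr(), b, atol=1e-10, btol=1e-10)[0]
    return v.reshape(h, w)
```

Keeping A sparse matters here: a dense matrix would need roughly (2·h·w) × (h·w) entries, which gets out of hand quickly even for small images.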

Results


Part 2.2: Poisson Blending

Overview

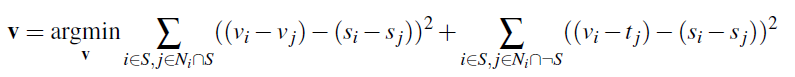

Now, we blend real images together using Poisson blending and least squares. The idea is the same as described in 2.1; we still want to match x and y-gradients as closely as possible.

But this time, we create a mask for the source image region we wish to blend into the target. For the pixels inside this mask, we use the source gradients to solve for new values. The blending constraints are thus:

We take the NSEW neighbors of each pixel in the mask, and match the source image gradients. If a neighbor isn't in the mask, its value is already set as its value in the target image. Setting up these constraints for every pixel in the mask creates a large matrix equation, which we solve using least squares. The resulting values are the values of each pixel in the mask.
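A compact sketch of these constraints is given below, under several simplifying assumptions that are mine rather than the project's: the source has already been aligned and cropped to the same size as the target, both are single-channel float arrays, the boolean `mask` does not touch the image border, and the names `poisson_blend`, `coo_matrix`, and `lsqr` are just one possible choice of tools.

```python
import numpy as np
from scipy.sparse import coo_matrix
from scipy.sparse.linalg import lsqr


def poisson_blend(source, target, mask):
    """Solve for the masked pixels so their gradients match the source."""
    source = np.asarray(source, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    mask = np.asarray(mask, dtype=bool)
    ys, xs = np.nonzero(mask)
    idx = -np.ones(mask.shape, dtype=int)
    idx[ys, xs] = np.arange(len(ys))       # masked pixel -> unknown number
    rows, cols, vals, b = [], [], [], []
    e = 0
    for y, x in zip(ys, xs):
        for dy, dx in ((-1, 0), (1, 0), (0, 1), (0, -1)):  # N, S, E, W
            ny, nx = y + dy, x + dx
            grad = source[y, x] - source[ny, nx]
            rows.append(e); cols.append(idx[y, x]); vals.append(1.0)
            if mask[ny, nx]:
                # Neighbor is also unknown: v_i - v_j = s_i - s_j
                rows.append(e); cols.append(idx[ny, nx]); vals.append(-1.0)
                b.append(grad)
            else:
                # Neighbor is fixed to the target: v_i = s_i - s_j + t_j
                b.append(grad + target[ny, nx])
            e += 1
    A = coo_matrix((vals, (rows, cols)), shape=(e, len(ys))).tocsr()
    v = lsqr(A, np.asarray(b), atol=1e-10, btol=1e-10)[0]
    out = target.copy()
    out[ys, xs] = v                        # paste the solved values into the mask
    return out
```

For color images, one option is to run this once per channel. A useful check: if the source is just the target plus a constant brightness offset, the blend returns the target unchanged, since only gradients are matched.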

Results

Additional Results

The Poisson blends work best when the background of the object in the source image and the surrounding area in the target image are of similar color. In the failed Yule Ball image, one can see that the background of the penguin chick is a bit different from the floor of the Yule Ball - the floor is kind of a consistent gray, whereas the snow fades from white to blue. Matching the gradients up on the boundary thus is a little harder, and because we're using least squares, this error propagates a bit into the interior of the image.

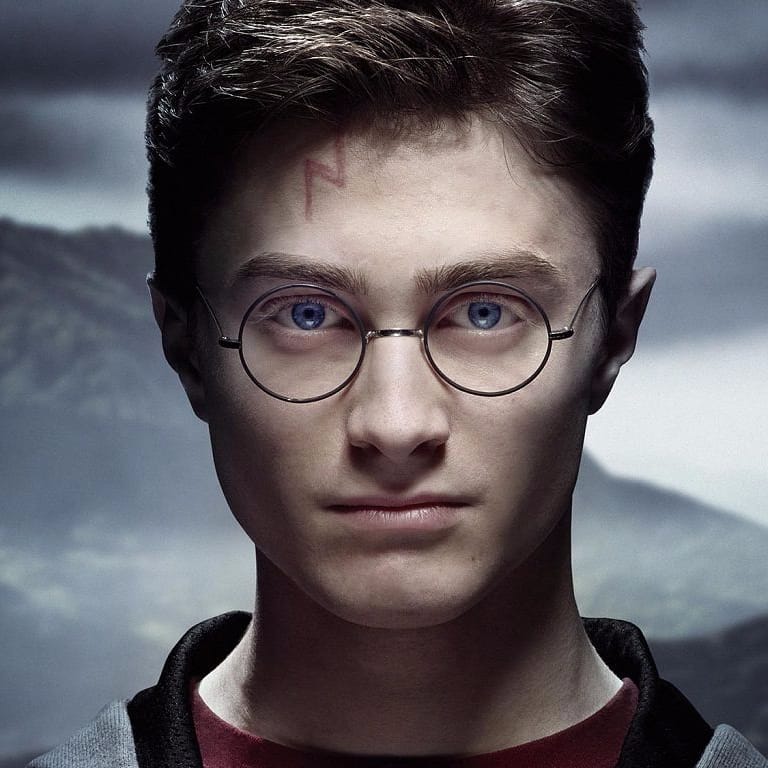

Laplacian vs Gradient Domain Blending

In this case, the Poisson blend doesn't look very good. This is because the scar from Harry is very shadowy, whereas Luna, the target image, is very brightly lit. The two backgrounds don't match very well, so least squares doesn't work out too well either. So in this particular case, the Laplacian blend is better.

In general, Poisson and Laplacian might fare better in different situations. As mentioned before, gradient domain blending can change the color and intensity of the original source image, so if maintaining color integrity is a top priority, Laplacian pyramid blending might be a better pick. In the blending seam area though, Laplacian actually includes frequencies of both images - so if the source and target images have vastly different frequencies, putting them together with Laplacian might result in a rather funky image. In that case, Poisson blending might be a better choice.

Final Thoughts

The most important thing I learned from this project was to use software packages and functions intelligently. Do the research. Else you'll be staring at your computer for a million years while it whirs and burns and still nothing happens.