For this section I applied a Gaussian filter with sigma = 5 to my chosen image to create a blurred copy. Subtracting this blurred copy from the original image left only the detail (the high frequencies). I then weighted this detail by alpha (chosen per image) and added it back to the original, which produced a sharper, more detailed image.
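The sharpening step can be sketched roughly as follows. This is a minimal illustration and not the exact code used for the results here: it assumes NumPy and SciPy, a float image scaled to [0, 1], and a hypothetical function name `sharpen` with an arbitrary default alpha.

```python
import numpy as np
from scipy.ndimage import gaussian_filter

def sharpen(image, sigma=5.0, alpha=1.0):
    """Unsharp masking on a float image in [0, 1], shape (H, W) or (H, W, C)."""
    image = np.asarray(image, dtype=np.float64)
    # Blur only the two spatial axes; a sigma of 0 leaves any channel axis untouched.
    sigmas = (sigma, sigma) + (0,) * (image.ndim - 2)
    blurred = gaussian_filter(image, sigma=sigmas)
    detail = image - blurred              # what the blur removed
    return np.clip(image + alpha * detail, 0.0, 1.0)
```

A larger alpha exaggerates edges more strongly, and the final clip keeps the result in the displayable range.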

For this section I applied the Gaussian filter to the base image, which blurs it, and then overlaid the details of the other image (Daenerys) on top. I chose sigma values of 2.5 and 4.5 through experimentation. The end product looks like a hybrid of the two images.
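One way to write the hybrid step is sketched here, assuming NumPy and SciPy and two aligned grayscale float images of the same size. The function name `hybrid` and its parameter names are hypothetical, and the text does not say which of the two sigma values belongs to which image, so both are left as required arguments.

```python
import numpy as np
from scipy.ndimage import gaussian_filter

def hybrid(base, detail_src, sigma_low, sigma_high):
    """Low frequencies of `base` plus high frequencies of `detail_src`.

    Both inputs are 2-D float arrays in [0, 1] with the same shape.
    """
    base = np.asarray(base, dtype=np.float64)
    detail_src = np.asarray(detail_src, dtype=np.float64)
    low = gaussian_filter(base, sigma_low)                        # blurred base
    high = detail_src - gaussian_filter(detail_src, sigma_high)   # detail only
    return np.clip(low + high, 0.0, 1.0)
```

Up close the high-frequency detail dominates, while from far away only the blurred base remains visible.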

The second example (rose + sunflower) was not as successful as the first. The inner portion of the rose, including its inner petals, takes away the distinguishing texture at the center of the sunflower. Without this texture we lose the sunflower's most recognizable feature and cannot identify it, even at a higher resolution.

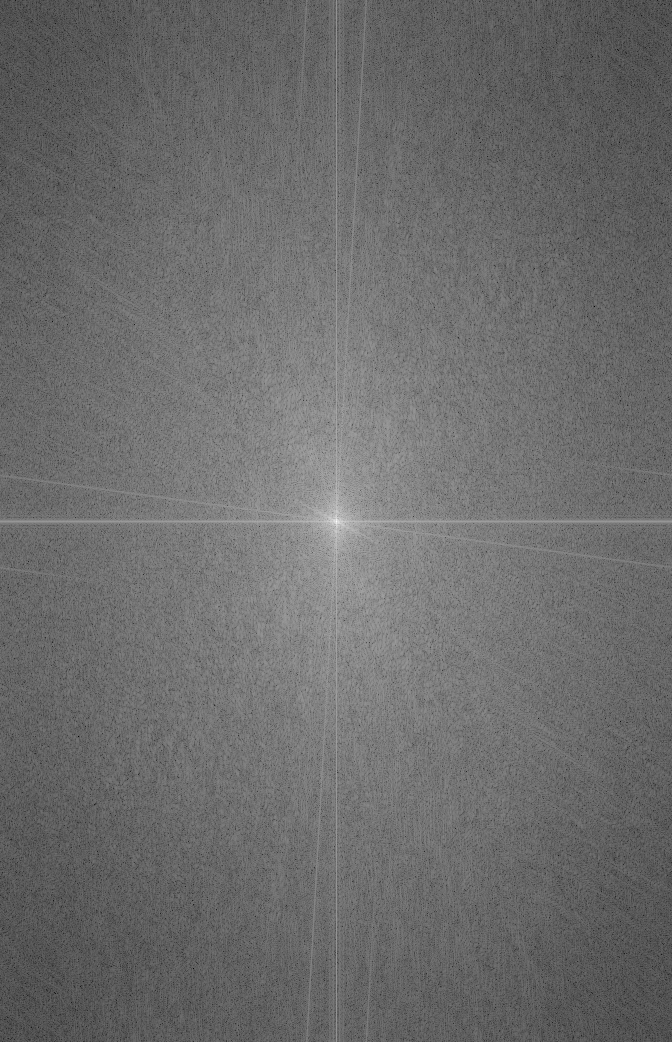

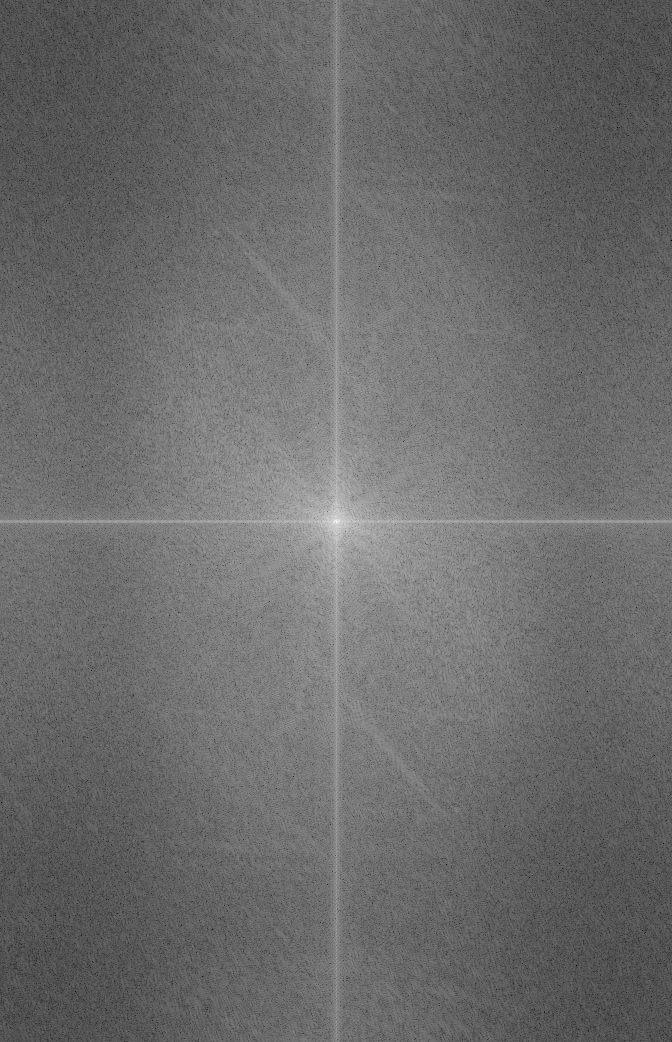

In this section I derive the Laplacian image at level i by subtracting the (i+1)-th Gaussian level from the i-th Gaussian level.
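The relation between the two stacks can be sketched as follows, assuming NumPy and SciPy and a 2-D grayscale float image. The function names, the per-level sigma of 2, and the choice to keep the coarsest Gaussian level as the last Laplacian entry are assumptions made for illustration.

```python
import numpy as np
from scipy.ndimage import gaussian_filter

def gaussian_stack(image, levels, sigma=2.0):
    """Level 0 is the image; each later level blurs the previous one (no downsampling)."""
    stack = [np.asarray(image, dtype=np.float64)]
    for _ in range(levels):
        stack.append(gaussian_filter(stack[-1], sigma))
    return stack

def laplacian_stack(image, levels, sigma=2.0):
    """L_i = G_i - G_{i+1}, with the coarsest Gaussian level appended last."""
    g = gaussian_stack(image, levels, sigma)
    lap = [g[i] - g[i + 1] for i in range(levels)]
    lap.append(g[-1])
    return lap
```

Because the differences telescope, summing every entry of the Laplacian stack gives back the original image.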

For this section I used the Laplacian and Gaussian stacks from 1.3 to blend images together. The first example is the sample apple and orange pair. It uses a simple mask that cuts the image in half, and the two halves are then joined along the seam.
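A rough sketch of stack-based blending with a half-and-half mask is given here. It assumes NumPy and SciPy, 2-D grayscale float images in [0, 1], and hypothetical helper names (`stacks`, `blend`); the number of levels and the sigma are illustrative values, not the ones used for the results.

```python
import numpy as np
from scipy.ndimage import gaussian_filter

def stacks(image, levels, sigma):
    """Return (gaussian, laplacian) stacks of a 2-D float image."""
    g = [np.asarray(image, dtype=np.float64)]
    for _ in range(levels):
        g.append(gaussian_filter(g[-1], sigma))
    lap = [g[i] - g[i + 1] for i in range(levels)] + [g[-1]]
    return g, lap

def blend(a, b, mask, levels=5, sigma=2.0):
    """Take `a` where mask is 1 and `b` where mask is 0, band by band."""
    _, lap_a = stacks(a, levels, sigma)
    _, lap_b = stacks(b, levels, sigma)
    mask_g, _ = stacks(mask, levels, sigma)   # progressively softer masks
    out = sum(m * la + (1.0 - m) * lb
              for m, la, lb in zip(mask_g, lap_a, lap_b))
    return np.clip(out, 0.0, 1.0)

# Vertical half-and-half mask, as in the apple and orange example.
h, w = 64, 64
mask = np.zeros((h, w))
mask[:, : w // 2] = 1.0
```

Coarse bands are mixed with a heavily blurred mask and fine bands with a sharper one, which is what hides the seam.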

The second example uses a more complex mask: we mask out only the cottage and paste it onto the image of the sunflower field. This example works well because the background behind the cottage matches the trees in the sunflower field where the images were merged.

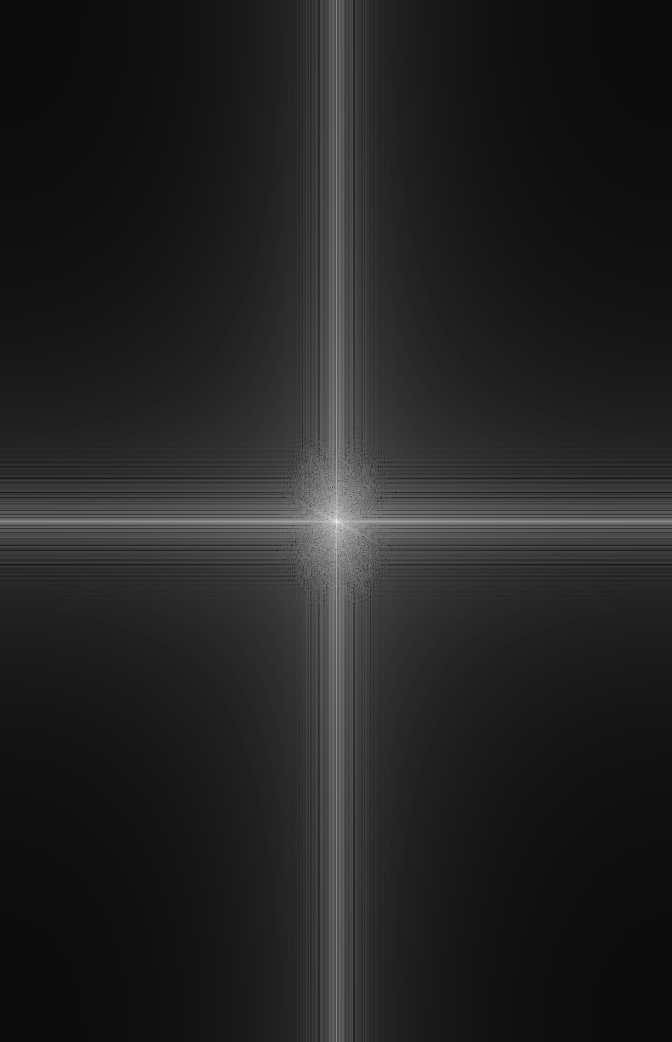

The third blend doesn't show great results. The bird is extremely washed out by the sky background. This is probably because the bird is placed on a light background, which blurs it out too much.

The toy problem involved setting up a least-squares problem in which we match the gradients between adjacent pixels of the toy picture (both horizontally and vertically) and also match the top-left pixel exactly. The reconstruction is close but not exact: although we minimize the differences, the pixels are slightly darker in the reconstructed image.
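The setup described above can be sketched as a sparse least-squares system. This is an illustrative version assuming NumPy and SciPy and a small 2-D grayscale float image; the function name `reconstruct` and the solver tolerances are assumptions, and the loops are written for clarity rather than speed.

```python
import numpy as np
from scipy.sparse import lil_matrix
from scipy.sparse.linalg import lsqr

def reconstruct(src):
    """Rebuild a 2-D float image from its x/y gradients plus one pinned pixel."""
    src = np.asarray(src, dtype=np.float64)
    h, w = src.shape
    idx = np.arange(h * w).reshape(h, w)      # pixel -> unknown index
    n_eq = h * (w - 1) + (h - 1) * w + 1
    A = lil_matrix((n_eq, h * w))
    b = np.zeros(n_eq)
    e = 0
    for y in range(h):
        for x in range(w - 1):                # horizontal gradients
            A[e, idx[y, x + 1]] = 1.0
            A[e, idx[y, x]] = -1.0
            b[e] = src[y, x + 1] - src[y, x]
            e += 1
    for y in range(h - 1):
        for x in range(w):                    # vertical gradients
            A[e, idx[y + 1, x]] = 1.0
            A[e, idx[y, x]] = -1.0
            b[e] = src[y + 1, x] - src[y, x]
            e += 1
    A[e, idx[0, 0]] = 1.0                     # pin the top-left intensity
    b[e] = src[0, 0]
    v = lsqr(A.tocsr(), b, atol=1e-10, btol=1e-10)[0]
    return v.reshape(h, w)
```

The gradient equations alone only determine the image up to a constant offset; the single pinned pixel is what fixes the overall brightness.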

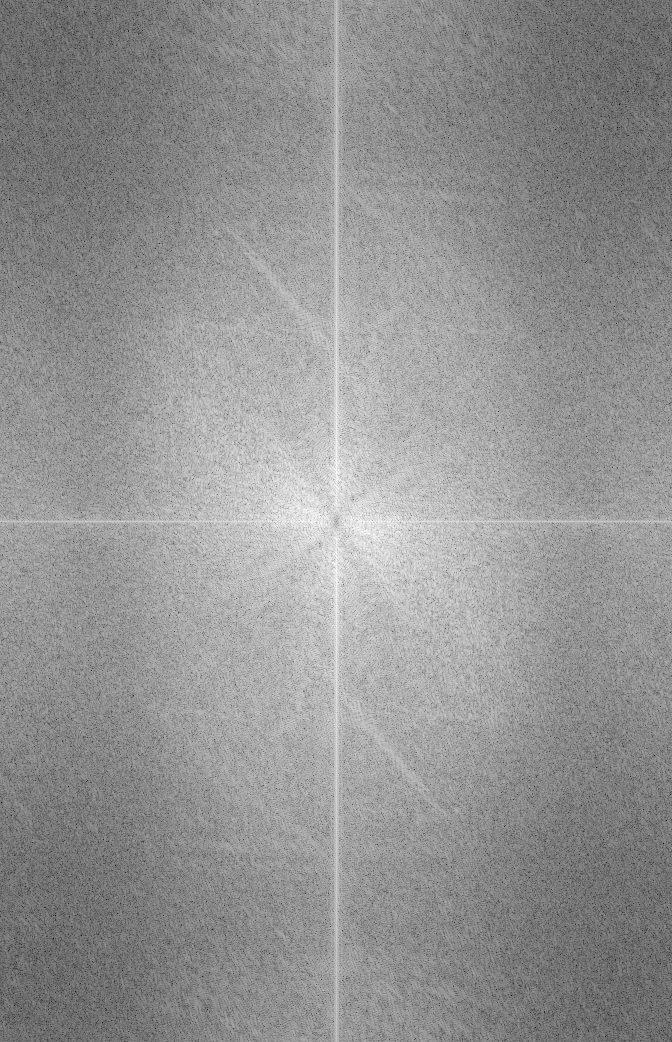

For this section I used least squares to blend a source image into a target image. The first and last examples turned out well and smoothly blend a foreground image onto a background. I tried to pick source images whose surroundings were already similar to the target so that they would paint onto the target more smoothly. In the last example (the two girls on the mountain), I also include the raw image with the source pasted in but not blended. Here you can see that they were already on a green background, which blended smoothly into the mountain scenery.

The middle picture (peacock at the Taj Mahal) isn't as smooth because the original peacock was on dark green grass, while the target image is much more intense and very blue. As a result the peacock's coloring is skewed heavily toward blue.
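The least-squares blend can be sketched for a single channel as follows. This is a hedged illustration, not the code behind the examples: it assumes NumPy and SciPy, source and target arrays that are already aligned and equally sized, and a boolean mask that does not touch the image border. The name `poisson_blend` is hypothetical.

```python
import numpy as np
from scipy.sparse import lil_matrix
from scipy.sparse.linalg import lsqr

def poisson_blend(source, target, mask):
    """Blend one channel of `source` into `target` where `mask` is True."""
    source = np.asarray(source, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    mask = np.asarray(mask, dtype=bool)
    ys, xs = np.nonzero(mask)
    idx = -np.ones(mask.shape, dtype=int)
    idx[ys, xs] = np.arange(len(ys))          # masked pixel -> unknown index
    A = lil_matrix((4 * len(ys), len(ys)))
    b = np.zeros(4 * len(ys))
    e = 0
    for y, x in zip(ys, xs):
        for dy, dx in ((-1, 0), (1, 0), (0, -1), (0, 1)):
            ny, nx = y + dy, x + dx
            A[e, idx[y, x]] = 1.0
            b[e] = source[y, x] - source[ny, nx]   # source gradient to match
            if mask[ny, nx]:
                A[e, idx[ny, nx]] = -1.0           # neighbour is also unknown
            else:
                b[e] += target[ny, nx]             # neighbour is fixed by the target
            e += 1
    v = lsqr(A.tocsr(), b, atol=1e-10, btol=1e-10)[0]
    out = target.copy()
    out[ys, xs] = v
    return out
```

Since only gradients inside the mask are preserved and the boundary values come from the target, any overall color difference between source and target is absorbed into the pasted region, which is consistent with the blue shift seen on the peacock. For a color image the same function would be run once per channel.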

Here we compare the ship and bird example from the Laplacian blending with the same images blended using Poisson blending. Poisson blending is far better at blending these images: it doesn't wash out the bird to match its background but rather adjusts its intensity to match the background.