Overview

In this project, we will explore how to morph images into one another dynamically, as well as how to average images. We will do this by defining correspondence points, creating a mesh for the average image, and finally morphing the images together. This allows us to do all kinds of cool things, like this Michael Schrute over here!

1: Face Morphing

Approach

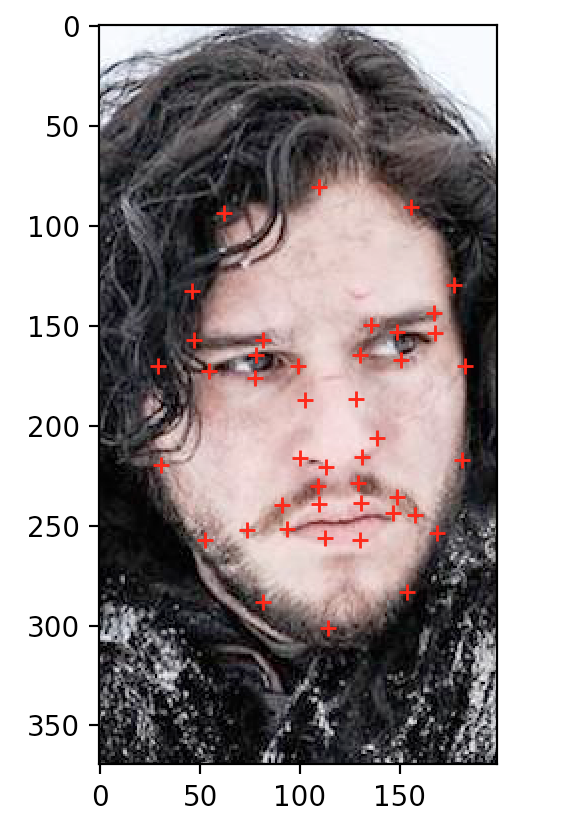

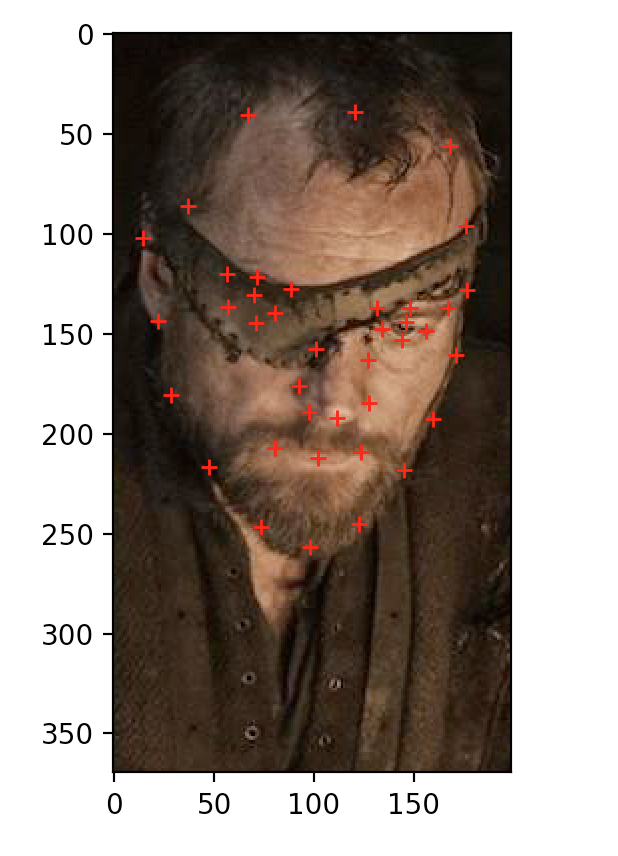

There are a few main steps to morphing two faces together. We begin by letting the user define correspondence points in the two images. These give us reference points for morphing the images.

Given this framework, we must now figure out how to morph our images into their mean. To do this, we first construct a mesh for the mean image; the one we used for this project was the triangular Delaunay mesh, which looks like the following:

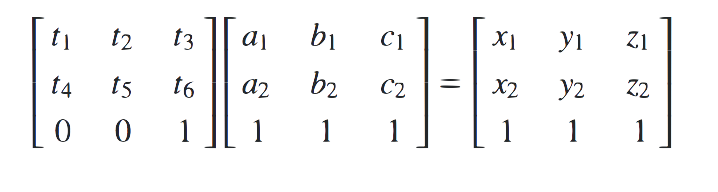

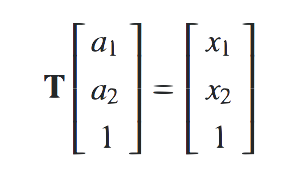
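A minimal sketch of how such a mesh could be built, assuming NumPy and SciPy are available. The point arrays `points_a` and `points_b` are hypothetical stand-ins for the user-defined correspondence points, and `scipy.spatial.Delaunay` is one possible triangulation routine, not necessarily the one used in this project.

```python
import numpy as np
from scipy.spatial import Delaunay

# Hypothetical correspondence points, one (x, y) row per landmark.
# Row i of points_a corresponds to row i of points_b.
points_a = np.array([[0, 0], [100, 0], [0, 100], [100, 100], [30, 50]], dtype=float)
points_b = np.array([[0, 0], [100, 0], [0, 100], [100, 100], [50, 60]], dtype=float)

# Mean shape: the midpoint of each pair of corresponding points.
mean_points = 0.5 * (points_a + points_b)

# Triangulate the mean shape once. Each row of tri.simplices holds the
# three point indices that make up one triangle.
tri = Delaunay(mean_points)

# Reusing the same index triples on both source shapes yields matching
# triangles in every image, each with shape (n_triangles, 3, 2).
triangles_a = points_a[tri.simplices]
triangles_b = points_b[tri.simplices]
triangles_mean = mean_points[tri.simplices]
```

Triangulating the mean shape, rather than either source shape, keeps the triangles from becoming too distorted in either image.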

Now that we have this triangular mesh, we must figure out how to find the corresponding triangles in the source images. To do this, we will define a transformation matrix T which maps the vertices of a triangle in our mean mesh to the corresponding triangle vertices in one of our source images, like the following:
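One way to compute such a T is to write both triangles in homogeneous coordinates and solve the resulting 3x3 linear system. The sketch below assumes NumPy; the function name `compute_affine` and the example triangles are hypothetical and only illustrate the idea.

```python
import numpy as np

def compute_affine(tri_from, tri_to):
    """Return the 3x3 matrix T such that T @ [x, y, 1] maps each vertex
    of tri_from onto the matching vertex of tri_to.

    Both arguments are 3x2 arrays of (x, y) vertices.
    """
    tri_from = np.asarray(tri_from, dtype=float)
    tri_to = np.asarray(tri_to, dtype=float)
    # Stack the vertices as homogeneous columns: [[x1 x2 x3], [y1 y2 y3], [1 1 1]].
    src = np.vstack([tri_from.T, np.ones(3)])
    dst = np.vstack([tri_to.T, np.ones(3)])
    # T @ src = dst, so T = dst @ inv(src). A degenerate (zero-area)
    # triangle makes src singular and raises numpy.linalg.LinAlgError.
    return dst @ np.linalg.inv(src)

# Hypothetical triangles: one from the mean mesh, one from a source image.
mean_tri = [[0, 0], [1, 0], [0, 1]]
source_tri = [[2, 3], [4, 3], [2, 6]]
T = compute_affine(mean_tri, source_tri)

# Any point inside the mean triangle can now be mapped into the source image.
mapped = T @ np.array([0.5, 0.5, 1.0])
```

Because three vertex pairs give six equations for the six unknowns of an affine map, T is fully determined whenever the triangle has nonzero area.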

Finally, we apply this transformation to every point within each triangle of our mean mesh to find the corresponding points in our source images, and combine the sampled pixels with the proper weighting on intensity, like the following:
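The whole step can be sketched as an inverse warp followed by a cross-dissolve. This is a simplified illustration assuming NumPy only: it uses nearest-neighbor sampling and a brute-force barycentric test to find the pixels in each triangle, and every function and parameter name (`warp_to_shape`, `morph`, `warp_frac`, `dissolve_frac`) is hypothetical rather than taken from the project code.

```python
import numpy as np

def affine_from_triangles(tri_from, tri_to):
    """3x3 affine matrix mapping the vertices of tri_from onto tri_to."""
    src = np.vstack([np.asarray(tri_from, dtype=float).T, np.ones(3)])
    dst = np.vstack([np.asarray(tri_to, dtype=float).T, np.ones(3)])
    return dst @ np.linalg.inv(src)

def pixels_in_triangle(tri, height, width):
    """Integer pixel coordinates inside tri, returned as a 2xN array of (x, y)."""
    ys, xs = np.mgrid[0:height, 0:width]
    pts = np.vstack([xs.ravel(), ys.ravel(), np.ones(xs.size)])
    # Barycentric coordinates of every pixel with respect to the triangle.
    verts = np.vstack([np.asarray(tri, dtype=float).T, np.ones(3)])
    bary = np.linalg.inv(verts) @ pts
    inside = np.all(bary >= -1e-9, axis=0)
    return pts[:2, inside].astype(int)

def warp_to_shape(image, src_tris, dst_tris):
    """Inverse-warp image so each source triangle lands on its target triangle."""
    h, w = image.shape[:2]
    out = np.zeros_like(image)
    for src_tri, dst_tri in zip(src_tris, dst_tris):
        # Map target pixels back into the source image (inverse warping).
        T = affine_from_triangles(dst_tri, src_tri)
        xy = pixels_in_triangle(dst_tri, h, w)
        if xy.shape[1] == 0:
            continue
        src_xy = (T @ np.vstack([xy, np.ones(xy.shape[1])]))[:2]
        # Nearest-neighbor sampling, clamped to the image bounds.
        sx = np.clip(np.rint(src_xy[0]).astype(int), 0, w - 1)
        sy = np.clip(np.rint(src_xy[1]).astype(int), 0, h - 1)
        out[xy[1], xy[0]] = image[sy, sx]
    return out

def morph(im_a, im_b, tris_a, tris_b, warp_frac, dissolve_frac):
    """Warp both images to an intermediate shape, then blend their intensities."""
    tris_a = np.asarray(tris_a, dtype=float)
    tris_b = np.asarray(tris_b, dtype=float)
    tris_mid = (1 - warp_frac) * tris_a + warp_frac * tris_b
    warped_a = warp_to_shape(im_a, tris_a, tris_mid)
    warped_b = warp_to_shape(im_b, tris_b, tris_mid)
    return (1 - dissolve_frac) * warped_a + dissolve_frac * warped_b

# Tiny usage example: two 4x4 float images sharing the same two-triangle mesh.
tris = np.array([[[0, 0], [3, 0], [0, 3]],
                 [[3, 0], [3, 3], [0, 3]]], dtype=float)
im_a = np.zeros((4, 4))
im_b = np.ones((4, 4))
halfway = morph(im_a, im_b, tris, tris, warp_frac=0.5, dissolve_frac=0.5)
```

Setting both fractions to 0.5 gives the halfway face, and sweeping them from 0 to 1 produces the frames of a morph sequence. Inverse warping is used so that every output pixel receives a value and no holes appear.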

Face Morph Results

Let's take a look at some of the results from this morphing technique:

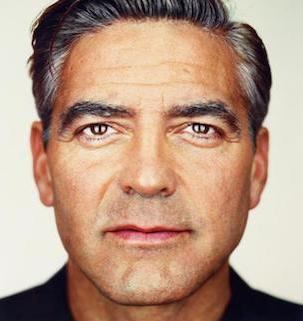

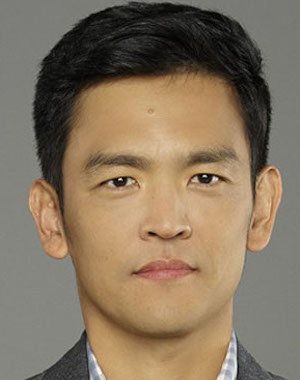

My friend Jing always gets told he looks like Harold from Harold and Kumar, so I thought I'd do some face morphing to test it out:

2: The Mean Face

Approach

Here we will explore the mean face of a dataset, which gives us a novel way to understand visual data and lets us reuse some of our previous morphing techniques. To do this, we will first compute the average face in the dataset by taking the average of all the facial geometries and pixel intensities, and finally morph each face into that average.
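The averaging itself can be sketched in a few lines, assuming NumPy and assuming the landmarks and images are already loaded into arrays of matching size. The function name `mean_face` and the array layouts are hypothetical.

```python
import numpy as np

def mean_face(landmarks, images):
    """Average a stack of face shapes and a stack of face images.

    landmarks: array of shape (n_faces, n_points, 2), one (x, y) per landmark.
    images:    array of shape (n_faces, H, W) or (n_faces, H, W, C).
    """
    landmarks = np.asarray(landmarks, dtype=float)
    images = np.asarray(images, dtype=float)
    mean_shape = landmarks.mean(axis=0)   # average geometry, shape (n_points, 2)
    mean_image = images.mean(axis=0)      # average pixel values, shape (H, W[, C])
    return mean_shape, mean_image

# Tiny usage example with two hypothetical "faces".
shapes = [[[0, 0], [10, 0]],
          [[2, 2], [14, 4]]]
pixels = [np.zeros((2, 2)), np.full((2, 2), 4.0)]
avg_shape, avg_image = mean_face(shapes, pixels)
```

If each face is first warped into `mean_shape` before the pixel average is taken, the image stack passed in would be the warped images; which ordering is used is a design choice this sketch leaves open.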

2.1: Calculating the Mean Face

First we will calculate the mean face of the population. In this example, we used frontal images from the FEI Face database. To get the mean face, we compute the average of all the facial geometries (provided by the dataset), as well as the average of all the pixel values. This gives us a sense of what the "mean" person in this dataset looks like. Here are our results:

2.2: Some "Mean" Morphing

We're now going to morph some of the faces in our dataset into the mean face. Here are some of the halfway images between an image in our dataset and the "mean" image:

Now we're going to morph a picture of me into the geometry of the mean face and vice versa. To do this, we follow the normal morphing procedure, but without using alpha blending to blend the image colors. Here are the results:

We can see some artifacts caused by the fact that the mean face and my face were positioned differently. We will explore this more by extrapolating past the mean geometry to create a caricature of myself. Here is the caricatured image of myself:
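The extrapolation behind a caricature like this can be sketched as moving along the line through the mean shape and my shape, with a weight outside the range [0, 1]. This assumes NumPy; the function name `extrapolate_shape`, the parameter `alpha`, and the example points are hypothetical, and the weight actually used for the image here is not stated.

```python
import numpy as np

def extrapolate_shape(my_points, mean_points, alpha):
    """Move along the line from the mean shape through my shape.

    alpha = 0 returns the mean shape, alpha = 1 returns my shape, and
    values outside [0, 1] extrapolate beyond either end of that line.
    """
    my_points = np.asarray(my_points, dtype=float)
    mean_points = np.asarray(mean_points, dtype=float)
    return mean_points + alpha * (my_points - mean_points)

# Hypothetical landmarks: one point that sits 2 pixels right of the mean.
mine = [[10.0, 10.0]]
mean = [[8.0, 10.0]]
exaggerated = extrapolate_shape(mine, mean, alpha=1.5)
```

The resulting points would then serve as the target geometry for the same triangle-by-triangle warp used in the morphing section.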

3: Bells & Whistles

3.1: Game of Thrones: The Hall of Faces (Musical Gif)

Since I'm a huge fan of Game of Thrones, I decided to make a video face morph of some of my favorite characters:

3.2: Micah Morphage

Next I decided to do some morphing of my friend Micah into the "mean" East Asian woman:
