Lightfield Camera

Depth Refocusing and Aperture Adjustments

Nancy Li

Overview

Unlike a normal camera, a lightfield camera captures multiple images of the same scene from slightly different positions, which enables us to apply some pretty cool digital effects after the picture is taken. In this project, the goal is to use images from the Stanford Light Field Archive to simulate refocusing at different depths and adjusting the aperture.

Depth Refocusing

Each image is taken from an (x, y) position in a 17x17 grid. Here, we shift each image by alpha * (dx, dy), where (dx, dy) is the offset of that image's grid position from the center image (positioned at (8, 8)). All the shifted images are then averaged together to produce the resulting image. Alpha is a parameter that can be adjusted to change the depth at which the result is in focus.
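The shift-and-average step can be sketched as follows. This is a minimal illustration, not the exact code used for the results: the function name `refocus`, the dictionary layout keyed by grid position, the rounding to whole-pixel shifts, and the mapping of dx to columns and dy to rows are all assumptions. It uses `np.roll`, which wraps pixels around the border, so a real implementation would probably crop the edges or use a sub-pixel shift instead.

```python
import numpy as np

def refocus(images, alpha, center=(8, 8)):
    """Shift each sub-aperture image by alpha * (dx, dy), then average.

    images: dict mapping a grid position (x, y) to an array of shape
            (H, W) or (H, W, C); this layout is an assumption.
    alpha:  refocusing parameter; alpha = 0 is a plain average.
    center: grid position of the reference image.
    """
    cx, cy = center
    total = None
    for (x, y), img in images.items():
        dx, dy = x - cx, y - cy
        # Assumption: round to whole pixels; dx moves columns, dy moves rows.
        shift_x = int(round(alpha * dx))
        shift_y = int(round(alpha * dy))
        # np.roll wraps around at the borders (a simplification).
        shifted = np.roll(img.astype(np.float64), (shift_y, shift_x), axis=(0, 1))
        total = shifted if total is None else total + shifted
    return total / len(images)
```

Sweeping `alpha` over a range such as `np.arange(0, 3.25, 0.25)` and saving each result as a frame would give a refocusing sequence like the ones shown here. The sign convention for the shift depends on how the dataset orders its grid coordinates, so it may need to be flipped.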

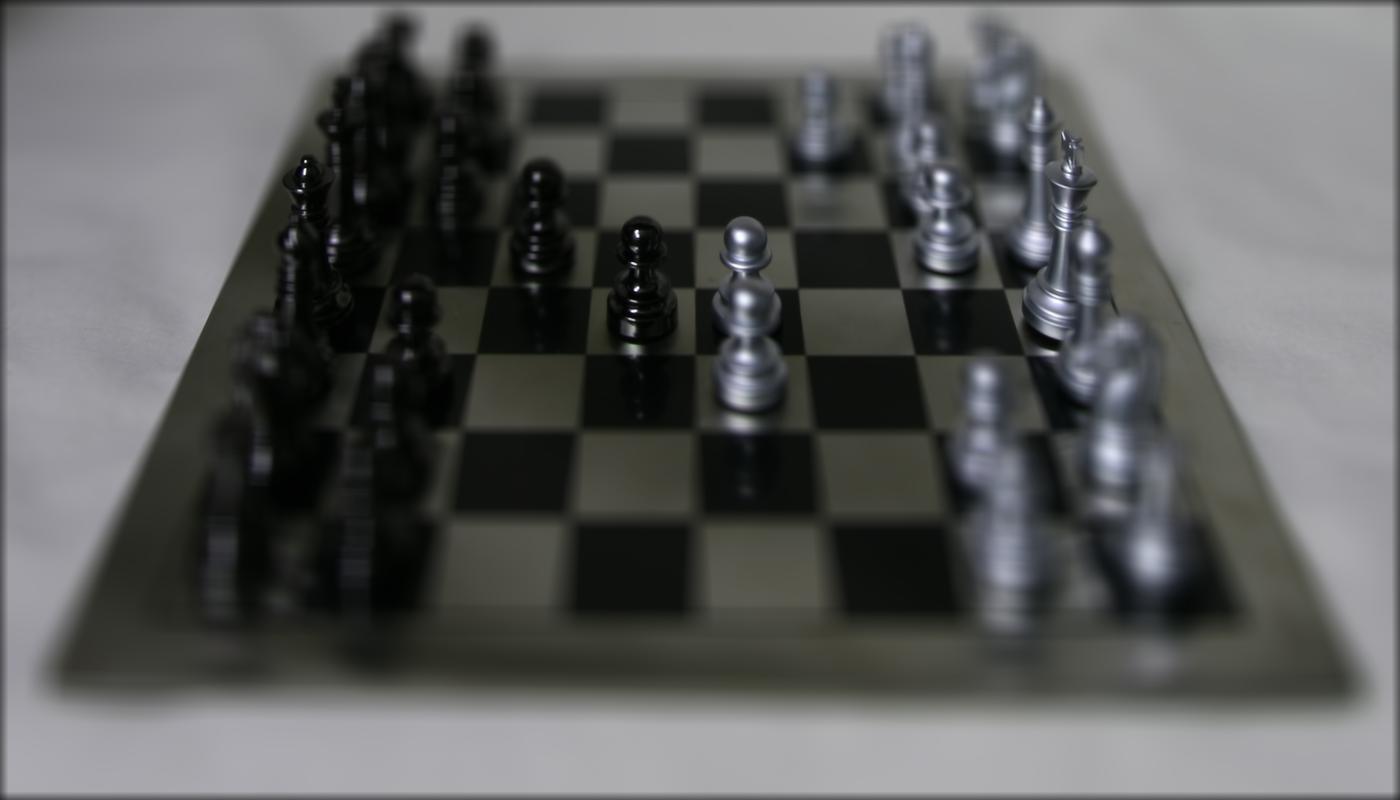

Depth refocusing of the chessboard. Alpha is chosen from [0, 3] with 0.25 steps.

Frames shown: Alpha=0, Alpha=1, Alpha=2, Alpha=3

Depth refocusing of jellybeans. Alpha is chosen from [-5, 0] with 0.5 steps.

Frames shown: Alpha=-5, Alpha=-4, Alpha=-3, Alpha=-2, Alpha=-1, Alpha=0

Aperture Adjustment

In this part, we simulate changing the aperture for the same scene. The approach is to pick a center image from the 17x17 grid and then average the images within a radius r of it, with r in the range [0, 9]. The radius r controls how many images are included in the average. Including more images that are farther from the center image simulates increasing the aperture of the camera, which gives a shallower depth of field and a blurry foreground effect.
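A minimal sketch of this averaging is shown below. The function name `simulate_aperture` and the dictionary layout are hypothetical, and "within a radius r" is interpreted here as Euclidean distance on the grid; a square (Chebyshev) neighborhood would be an equally plausible reading.

```python
import numpy as np

def simulate_aperture(images, r, center=(8, 8)):
    """Average the sub-aperture images whose grid position lies within r of center.

    images: dict mapping a grid position (x, y) to an image array
            (this layout is an assumption).
    r:      radius on the grid; r = 0 keeps only the center image.
    """
    cx, cy = center
    selected = [
        img.astype(np.float64)
        for (x, y), img in images.items()
        # Assumption: Euclidean distance on the grid defines the aperture.
        if (x - cx) ** 2 + (y - cy) ** 2 <= r ** 2
    ]
    return np.mean(selected, axis=0)
```

With r = 0 the output is just the center image, which corresponds to the smallest aperture; each increase in r adds a ring of neighboring views to the average.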

My results are shown below. The frames correspond to r = 0, 1, 2, 3, 4, 5, 6, 7, 8.