Creating an image mosaic involves combining several images of the same scene. All of the images should be shot from the same point of view (keeping the camera in place but rotating it about its optical center) and have overlapping fields of view. When these constraints hold, the transformation between each pair of images is a homography of the form p' = Hp, which we can recover.

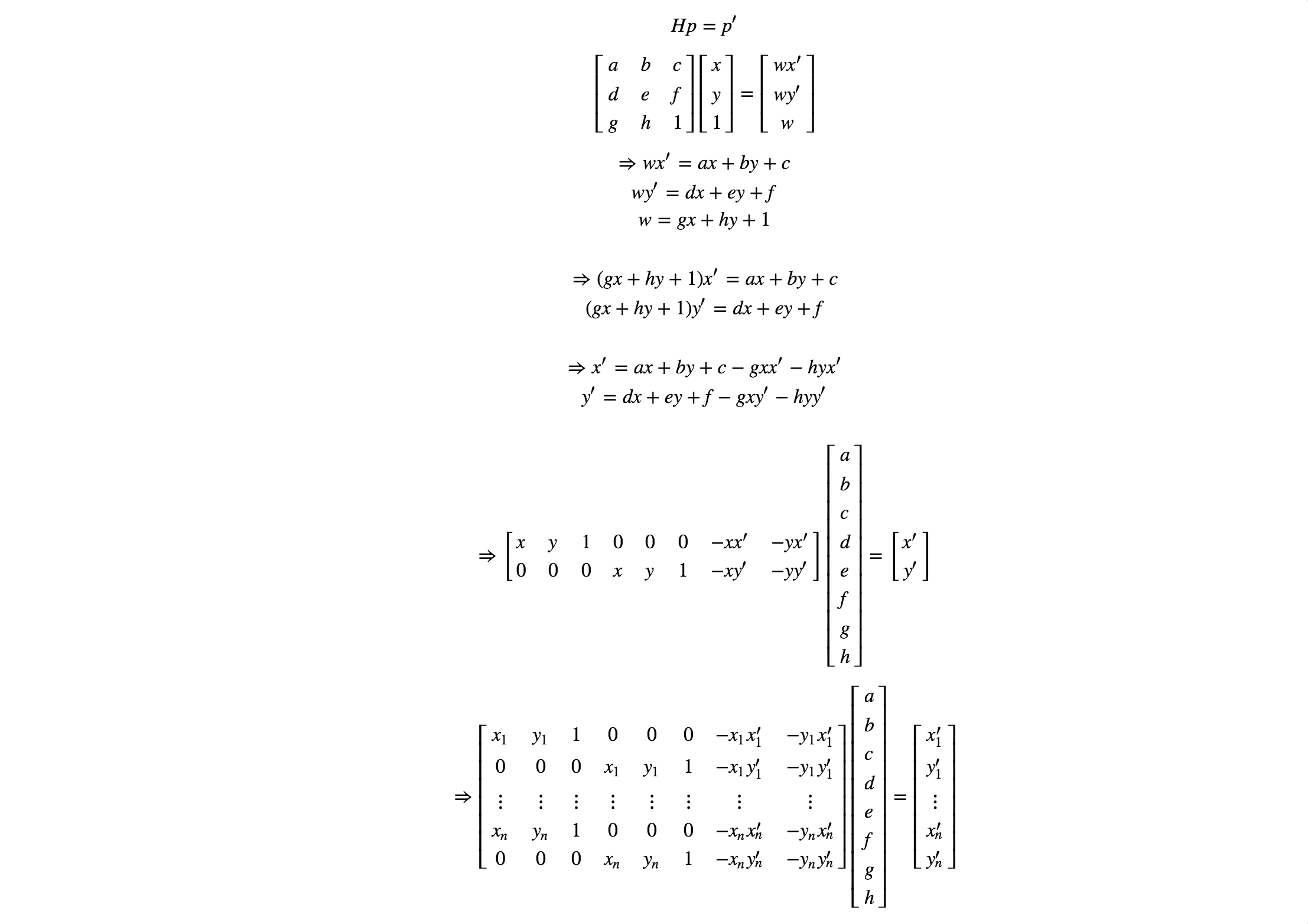

To compute the entries of H, we must select correspondences (points common to the two images) between each pair of images. Once its scale is fixed, H has 8 unknowns, and each correspondence contributes two equations, so 4 correspondences are the minimum; with more than 4 the system is overdetermined and we solve for H by least squares. Below, we show how p' = Hp can be rewritten in the form Ah = b to be solved by a least-squares solver.
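One way to write out that rearrangement is sketched here. It assumes the common normalization h_33 = 1 (consistent with appending 1 to h afterwards); the entry names h_11 through h_32 are notation introduced for this sketch. Each correspondence (x_i, y_i) to (x_i', y_i') contributes two rows of A and two entries of b:

```latex
\begin{aligned}
x_i' &= \frac{h_{11}x_i + h_{12}y_i + h_{13}}{h_{31}x_i + h_{32}y_i + 1},
\qquad
y_i' = \frac{h_{21}x_i + h_{22}y_i + h_{23}}{h_{31}x_i + h_{32}y_i + 1} \\[6pt]
\begin{bmatrix}
x_i & y_i & 1 & 0 & 0 & 0 & -x_i x_i' & -y_i x_i' \\
0 & 0 & 0 & x_i & y_i & 1 & -x_i y_i' & -y_i y_i'
\end{bmatrix}
\begin{bmatrix}
h_{11} \\ h_{12} \\ h_{13} \\ h_{21} \\ h_{22} \\ h_{23} \\ h_{31} \\ h_{32}
\end{bmatrix}
&=
\begin{bmatrix} x_i' \\ y_i' \end{bmatrix}
\end{aligned}
```

Stacking these row pairs for n correspondences gives a 2n x 8 matrix A and a length-2n vector b.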

Solving for h, appending 1, and then reshaping it to a 3x3 matrix gives us the homography H that satisfies p' = Hp, i.e. maps each point (xi,yi) to its correspondence (xi',yi').
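The solve-append-reshape step can be sketched in NumPy as follows. This is a minimal illustration, not necessarily the code used for the results here: the function names `compute_homography` and `apply_homography` are hypothetical, and the sketch assumes the h_33 = 1 normalization and the p' = Hp direction.

```python
import numpy as np

def compute_homography(pts, pts_prime):
    """Least-squares H (3x3, H[2, 2] = 1) such that pts_prime ~ H @ pts."""
    pts = np.asarray(pts, dtype=float)
    pts_prime = np.asarray(pts_prime, dtype=float)
    if len(pts) < 4 or pts.shape != pts_prime.shape:
        raise ValueError("need at least 4 correspondences of matching shape")
    rows, rhs = [], []
    for (x, y), (xp, yp) in zip(pts, pts_prime):
        # Two equations per correspondence, one for x' and one for y'.
        rows.append([x, y, 1, 0, 0, 0, -x * xp, -y * xp])
        rows.append([0, 0, 0, x, y, 1, -x * yp, -y * yp])
        rhs.extend([xp, yp])
    h, *_ = np.linalg.lstsq(np.array(rows), np.array(rhs), rcond=None)
    # Append the fixed entry h_33 = 1 and reshape the 9 values to 3x3.
    return np.append(h, 1.0).reshape(3, 3)

def apply_homography(H, pts):
    """Map (N, 2) points through H, dividing out the homogeneous coordinate."""
    pts = np.asarray(pts, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))]) @ H.T
    return homog[:, :2] / homog[:, 2:3]
```

With exactly 4 correspondences in general position the system is square and the fit is exact; with more, `np.linalg.lstsq` returns the least-squares solution.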

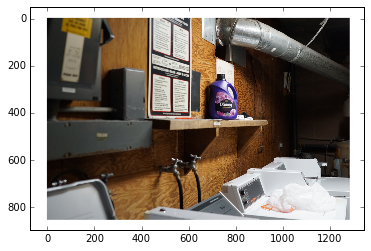

To check that our method of recovering homographies is correct, we attempt to "rectify" an image. We take an image of a planar surface and warp it so that the plane is fronto-parallel. We define the corresponding set of points (xi,yi) by inferring the fronto-parallel shape of the object.
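The warp itself is not spelled out above. One common approach is inverse warping: every output pixel is mapped back through the inverse homography and the source image is sampled there. The sketch below assumes that approach with nearest-neighbor sampling; `warp_image` and its convention (H maps source pixel coordinates to output coordinates) are choices made for this illustration.

```python
import numpy as np

def warp_image(img, H, out_shape):
    """Inverse-warp img onto an (out_h, out_w) canvas.

    H maps source (x, y) to output (x, y), so each output pixel finds its
    source location through inv(H). Returns the canvas and a validity mask.
    """
    out_h, out_w = out_shape
    ys, xs = np.mgrid[0:out_h, 0:out_w]
    dest = np.stack([xs.ravel(), ys.ravel(), np.ones(xs.size)])
    src = np.linalg.inv(H) @ dest
    # Nearest-neighbor sampling; interpolation would give smoother results.
    sx = np.rint(src[0] / src[2]).astype(int)
    sy = np.rint(src[1] / src[2]).astype(int)
    valid = (sx >= 0) & (sx < img.shape[1]) & (sy >= 0) & (sy < img.shape[0])
    out = np.zeros((out_h, out_w) + img.shape[2:], dtype=img.dtype)
    flat = out.reshape(out_h * out_w, *img.shape[2:])  # view into out
    flat[valid] = img[sy[valid], sx[valid]]
    return out, valid.reshape(out_h, out_w)
```

Iterating over output pixels rather than source pixels avoids holes in the result, because every output location receives a value whenever its preimage lies inside the source image.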

Finally, we can use homographies to create image mosaics. Below, we compare simply overlaying the images vs. using alpha blending to blend the seams of each pair of images.

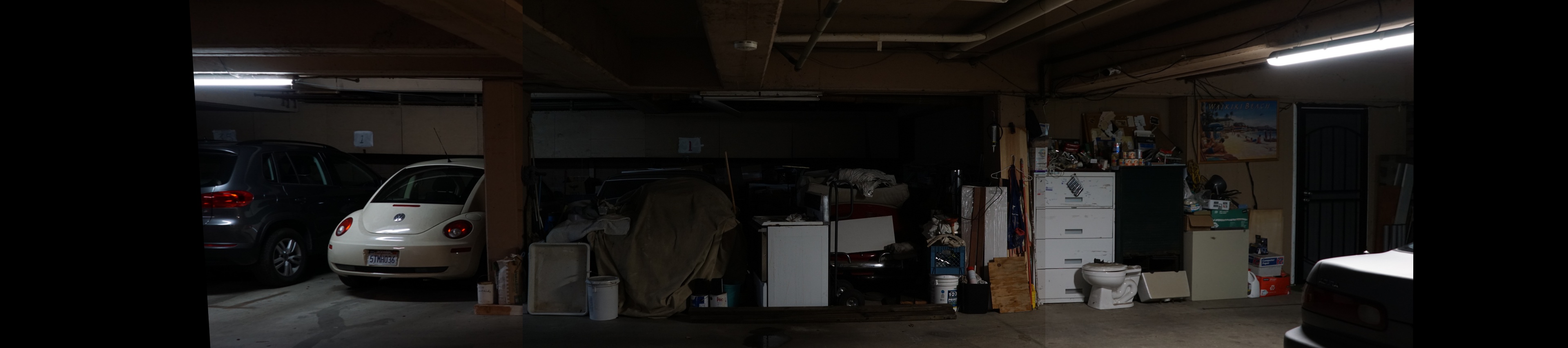

No blending

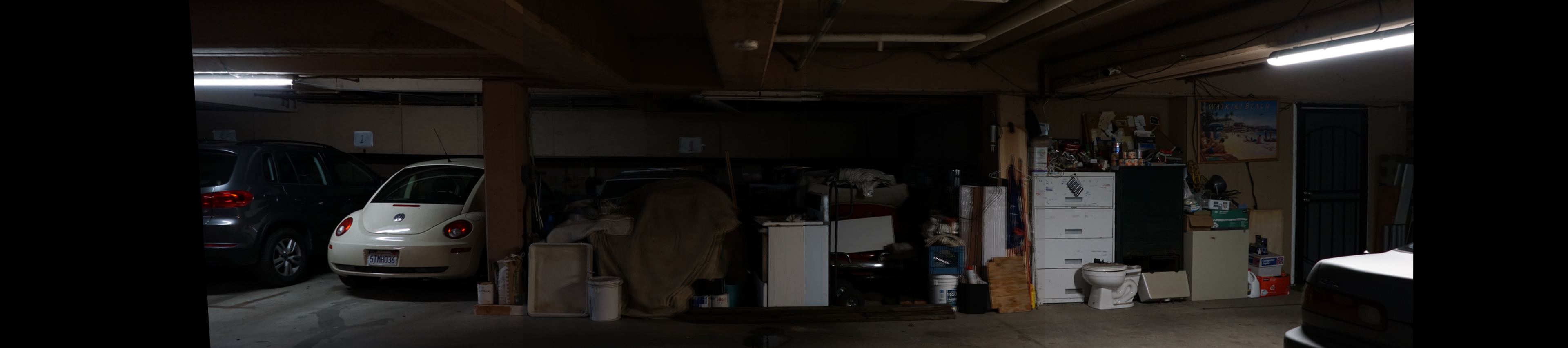

Alpha blending

[Figure pairs: two more mosaics, no blending vs. alpha blending]
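The exact weighting used for the blended mosaics is not stated here. As a rough sketch, alpha blending can be written as a per-pixel weighted average of the two warped images, with weights that fall off toward each image's borders so the seam fades out. Both `feather_weights` and `alpha_blend` are hypothetical helpers, and the linear falloff is an assumption.

```python
import numpy as np

def feather_weights(height, width):
    """Weights that peak at the image centre and fall off linearly to the borders."""
    wy = 1.0 - np.abs((np.arange(height) + 0.5) / height * 2.0 - 1.0)
    wx = 1.0 - np.abs((np.arange(width) + 0.5) / width * 2.0 - 1.0)
    return np.outer(wy, wx)

def alpha_blend(img_a, img_b, w_a, w_b):
    """Weighted average of two aligned images of equal size.

    w_a and w_b are 2-D weight maps; a weight of 0 means the image does not
    cover that pixel. Pixels covered by neither image come out as 0.
    """
    if img_a.ndim == 3:
        # Broadcast the 2-D weights over the colour channels.
        w_a, w_b = w_a[..., None], w_b[..., None]
    total = w_a + w_b
    return (w_a * img_a + w_b * img_b) / np.where(total > 0, total, 1.0)
```

In a mosaic, each weight map would be warped with the same homography as its image, so that the weights stay aligned with the pixels they describe.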

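It is interesting that the transformation between each pair of images in a panorama can be captured by a single 3x3 matrix H acting linearly on homogeneous coordinates, as long as the images are related by a projective transform (here, a camera rotating about its optical center).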

In Part 1, creating an image mosaic required manually selecting correspondences between each pair of images. This was problematic because selecting points by hand is both time-consuming and limited in accuracy.

The goal of this part of the project is to build a system that creates mosaics automatically by identifying matching features between pairs of images. To do so, we follow the paper "Multi-Image Matching using Multi-Scale Oriented Patches" by Brown et al. (with some simplifications).

Thus, we implement the following steps: 1) Detect corner features 2) Extract a feature descriptor for each feature point 3) Match those feature descriptors between two images 4) Use RANSAC to compute a robust homography estimate 5) Stitch images to produce a mosaic (as in Part 1).

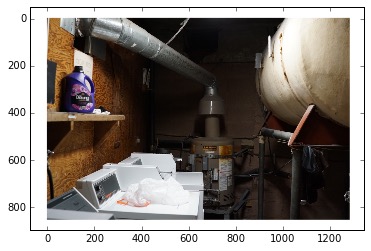

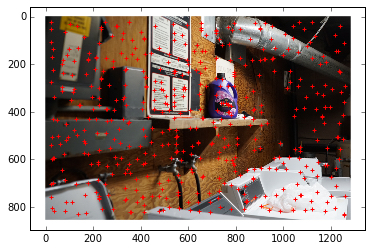

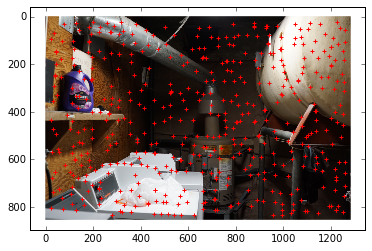

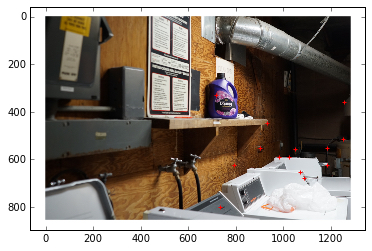

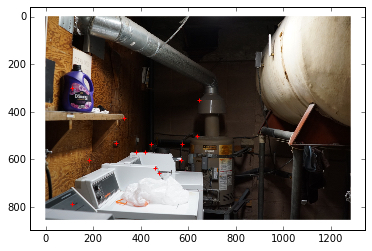

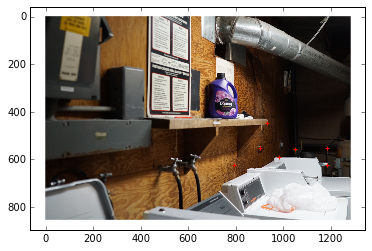

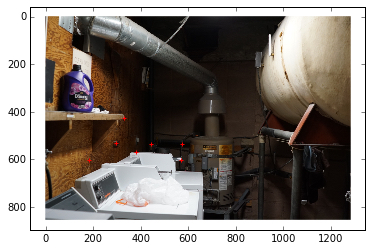

We will demonstrate with the pair of images below.

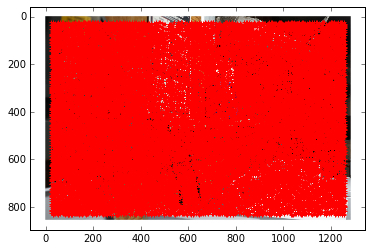

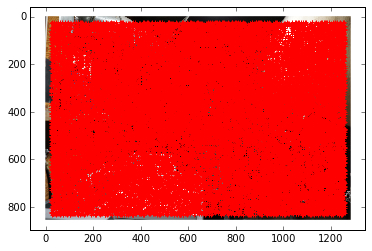

We first use a Harris Detector to generate Harris corners (points of interest). The Harris Detector computes a corner response at every pixel of the image, thresholds that response, and then returns the points that are local maxima.
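Those three stages (response, threshold, local maxima) can be sketched in plain NumPy. This is an illustrative version rather than the detector used for the figures: the response formula det(M) - k * trace(M)^2 with k = 0.05, the Gaussian window width, the relative threshold, and the names `gaussian_blur` and `harris_corners` are all assumptions.

```python
import numpy as np

def gaussian_blur(img, sigma):
    """Separable Gaussian blur built from 1-D convolutions (zero padding)."""
    radius = int(3 * sigma)
    x = np.arange(-radius, radius + 1)
    k = np.exp(-x**2 / (2 * sigma**2))
    k /= k.sum()
    rows = np.apply_along_axis(np.convolve, 1, img, k, mode="same")
    return np.apply_along_axis(np.convolve, 0, rows, k, mode="same")

def harris_corners(img, sigma=1.0, k=0.05, rel_threshold=0.01):
    """Return (N, 2) corner locations as (x, y) plus the response map."""
    iy, ix = np.gradient(img.astype(float))
    # Entries of the second-moment matrix, smoothed over a Gaussian window.
    ixx = gaussian_blur(ix * ix, sigma)
    iyy = gaussian_blur(iy * iy, sigma)
    ixy = gaussian_blur(ix * iy, sigma)
    response = ixx * iyy - ixy**2 - k * (ixx + iyy) ** 2
    # A pixel is a peak if it is at least as large as its 8 neighbours.
    h, w = response.shape
    padded = np.pad(response, 1, mode="constant", constant_values=-np.inf)
    neighbours = np.stack([
        padded[dy:dy + h, dx:dx + w]
        for dy in range(3) for dx in range(3) if (dy, dx) != (1, 1)
    ])
    is_peak = response >= neighbours.max(axis=0)
    # Threshold relative to the strongest response in the image.
    is_peak &= response > rel_threshold * response.max()
    ys, xs = np.nonzero(is_peak)
    return np.column_stack([xs, ys]), response
```

The returned response values at the detected points (`response[ys, xs]`) are the corner strengths that a later suppression step can rank.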

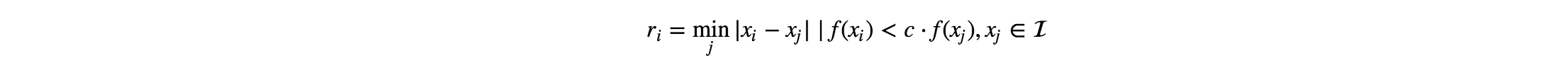

The Harris Detector gives us hundreds of thousands of interest points. To reduce this to a reasonable number, we use Adaptive Non-Maximal Suppression (ANMS). For each interest point, ANMS solves the above minimization to find its suppression radius r_i, the distance to the nearest interest point that is sufficiently stronger, where x_i is an interest point location and I is the set of all interest point locations. We use a value of 0.9 for c, as recommended by the paper. Below, we keep the 500 interest points with the largest values of r_i.
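A direct, brute-force sketch of ANMS follows. The function name `anms` and its signature are hypothetical. It builds the full pairwise distance matrix, so it is only practical after the candidate set has been trimmed (for example by a response threshold) to a few thousand points.

```python
import numpy as np

def anms(points, strengths, num_keep=500, c_robust=0.9):
    """Return indices of the num_keep points with the largest suppression radii.

    points: (N, 2) interest point locations; strengths: (N,) corner responses.
    """
    points = np.asarray(points, dtype=float)
    strengths = np.asarray(strengths, dtype=float)
    # dists[i, j] is the distance between point i and point j.
    diffs = points[:, None, :] - points[None, :, :]
    dists = np.sqrt((diffs**2).sum(axis=2))
    # Point j suppresses point i when f(x_i) < c_robust * f(x_j).
    suppresses = strengths[:, None] < c_robust * strengths[None, :]
    # r_i is the distance to the nearest suppressing point (inf if none).
    radii = np.where(suppresses, dists, np.inf).min(axis=1)
    order = np.argsort(-radii, kind="stable")
    return order[:num_keep], radii
```

Ranking by radius rather than by raw strength spreads the kept points across the image, since a strong corner next to an even stronger one gets a small radius.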

Next, we extract an axis-aligned 8x8 patch for each interest point (ignoring rotation invariance). To do so, we take a larger 40x40 window around the point, apply a Gaussian blur, and subsample it down to 8x8.
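A sketch of the descriptor extraction is given below. The blur width, the choice to blur the whole image once instead of each window, and the names `gaussian_blur` and `extract_descriptors` are assumptions. The optional bias/gain normalization comes from the Brown et al. paper, not from the description above, and can be switched off.

```python
import numpy as np

def gaussian_blur(img, sigma):
    """Separable Gaussian blur built from 1-D convolutions (zero padding)."""
    radius = int(3 * sigma)
    x = np.arange(-radius, radius + 1)
    k = np.exp(-x**2 / (2 * sigma**2))
    k /= k.sum()
    rows = np.apply_along_axis(np.convolve, 1, img, k, mode="same")
    return np.apply_along_axis(np.convolve, 0, rows, k, mode="same")

def extract_descriptors(img, points, window=40, patch=8, sigma=2.0, normalize=True):
    """Return the points that were kept and one patch*patch vector for each."""
    half = window // 2
    step = window // patch  # 40 // 8 = 5: keep every 5th pixel
    # Blurring once up front matches blurring each window, away from its edges.
    blurred = gaussian_blur(img.astype(float), sigma)
    kept, descs = [], []
    for x, y in np.asarray(points, dtype=int):
        if x < half or y < half or x + half > img.shape[1] or y + half > img.shape[0]:
            continue  # skip points whose window would leave the image
        win = blurred[y - half:y + half, x - half:x + half]
        d = win[step // 2::step, step // 2::step].ravel()
        if normalize:
            # Bias/gain normalization (from the paper): zero mean, unit variance.
            d = (d - d.mean()) / (d.std() + 1e-8)
        kept.append((x, y))
        descs.append(d)
    return np.array(kept), np.array(descs)
```

Blurring before subsampling limits aliasing, so small shifts in the interest point location change the descriptor only slightly.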

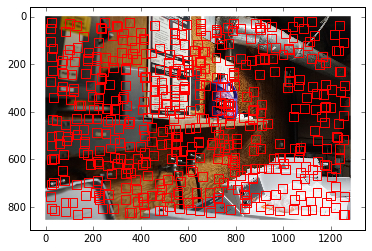

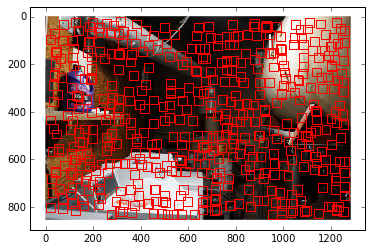

Given the above feature vectors, we find matches between the features of the two images. We define a good match to be one where the ratio of the 1-NN error to the 2-NN error is less than some threshold epsilon, meaning the best match is clearly better than the second-best. Below we used an epsilon of 0.7.
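The ratio test can be sketched as below. The error measure is assumed to be the sum of squared differences between descriptors, and `match_features` is a hypothetical name; the second image needs at least two descriptors for a second nearest neighbor to exist.

```python
import numpy as np

def match_features(desc_a, desc_b, ratio=0.7):
    """Return (M, 2) index pairs (i, j) matching desc_a[i] to desc_b[j]."""
    desc_a = np.asarray(desc_a, dtype=float)
    desc_b = np.asarray(desc_b, dtype=float)
    # ssd[i, j] is the sum of squared differences between desc_a[i] and desc_b[j].
    ssd = ((desc_a[:, None, :] - desc_b[None, :, :]) ** 2).sum(axis=2)
    nearest = np.argsort(ssd, axis=1)[:, :2]
    rows = np.arange(len(desc_a))
    best = ssd[rows, nearest[:, 0]]
    second = ssd[rows, nearest[:, 1]]
    # Keep a match only if the 1-NN error is well below the 2-NN error.
    keep = best < ratio * second
    return np.column_stack([rows[keep], nearest[keep, 0]])
```

A feature whose two best candidates are almost equally good is ambiguous, so the test discards it rather than guess.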

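We then use 4-point RANSAC: we repeatedly fit a homography to 4 randomly chosen matches, count how many matches agree with it, and keep the largest set of agreeing matches (the inliers), which gives a robust homography estimate.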
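A minimal RANSAC loop might look like the following. The iteration count, the inlier tolerance in pixels, the final refit on all inliers, and the function names are assumptions made for this sketch; the homography solver is repeated so the block runs on its own.

```python
import numpy as np

def compute_homography(pts, pts_prime):
    """Least-squares H (3x3, H[2, 2] = 1) such that pts_prime ~ H @ pts."""
    rows, rhs = [], []
    for (x, y), (xp, yp) in zip(pts, pts_prime):
        rows.append([x, y, 1, 0, 0, 0, -x * xp, -y * xp])
        rows.append([0, 0, 0, x, y, 1, -x * yp, -y * yp])
        rhs.extend([xp, yp])
    h, *_ = np.linalg.lstsq(np.array(rows), np.array(rhs), rcond=None)
    return np.append(h, 1.0).reshape(3, 3)

def apply_homography(H, pts):
    """Map (N, 2) points through H, dividing out the homogeneous coordinate."""
    homog = np.hstack([pts, np.ones((len(pts), 1))]) @ H.T
    return homog[:, :2] / homog[:, 2:3]

def ransac_homography(pts, pts_prime, n_iters=1000, inlier_tol=2.0, seed=0):
    """Return (H, inlier_mask) for matched points pts[i] <-> pts_prime[i]."""
    pts = np.asarray(pts, dtype=float)
    pts_prime = np.asarray(pts_prime, dtype=float)
    rng = np.random.default_rng(seed)
    best_inliers = np.zeros(len(pts), dtype=bool)
    for _ in range(n_iters):
        # Fit an exact homography to 4 randomly chosen matches.
        sample = rng.choice(len(pts), size=4, replace=False)
        H = compute_homography(pts[sample], pts_prime[sample])
        # Degenerate samples can yield inf/nan errors, which count as outliers.
        with np.errstate(divide="ignore", invalid="ignore"):
            err = np.linalg.norm(apply_homography(H, pts) - pts_prime, axis=1)
        inliers = err < inlier_tol
        if inliers.sum() > best_inliers.sum():
            best_inliers = inliers
    if best_inliers.sum() < 4:
        raise ValueError("RANSAC found fewer than 4 inliers")
    # Refit on every inlier of the best model for the final estimate.
    return compute_homography(pts[best_inliers], pts_prime[best_inliers]), best_inliers
```

Because each candidate model is built from only 4 matches, a single all-inlier sample is enough to recover the correct homography even when many matches are wrong.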

Now we will revisit the examples from part 1.

[Figure pairs: the three mosaics from Part 1, no blending vs. alpha blending]

Feature matching across pairs of images is a difficult task that requires combining several techniques (we used a Harris Detector, Adaptive Non-Maximal Suppression, feature matching with Lowe thresholding, and RANSAC). For the examples above, our autostitching produced results comparable to or better than hand-stitching.