Animated sprites and characters date back to the earliest days of animation, when there was little interactivity and one would simply watch the animation play across the screen.

While magical at the time, these static frames creating the illusion of life were not at all interactive. To enhance the illusion of life, video games introduced interactivity, giving humans control of these sprites. Typically, one controls these characters with buttons and keys or with the movement of a mouse. However, these interactions require an animator to add more frames to transition from one action to another, since the interactive nature of the game means the animation sequence can change at any point.

Even with these interactions, the player has complete control over what they are doing and how they are moving the sprite, which in turn removes part of the immersion of the illusion of life. After all, life-like interactions are not about calculated movements and actively controlled responses. To further the illusion of life, we can track an interactive feature that happens unconsciously: eye movement.

Your eyes move to where you want to be. Traditionally, in a game, your eyes would target your destination and you would then act on getting there, perhaps by pressing a few keys and waiting a couple of frames. During that time, however, you are disconnected from the immersion of the game. If we instead remove the step where you have to consciously think about what to do to get to where you want to be, the game world may become more interactive.

In this project, we propose a different way of interacting with animated sprites using gaze to move the on-screen character. The work was divided into two parts: (1) creating video textures that will smoothly follow a cursor or user gaze and (2) detecting user gaze to control the animated sprite. We faced numerous challenges in each portion of the project.

To create a smooth video texture from a given set of image frames, we needed to compute a vector between the video's moving feature and the cursor. This was problematic because lighting changes and small motion differences during recording can cause large changes in the detected features.

In order to conduct gaze tracking, we needed to locate the eyes within a frame and find the pupil using a standard RGB webcam. Detection behavior varied greatly with different lighting, users, and interactive behaviors. We tried our best to overcome these challenges.


First, we set up a way to convert videos to frames. Then, we precompute information such as the sum of squared differences between frames and the transition probabilities. At runtime, we loop: we detect the cursor position and choose the next frame based on a cost function.


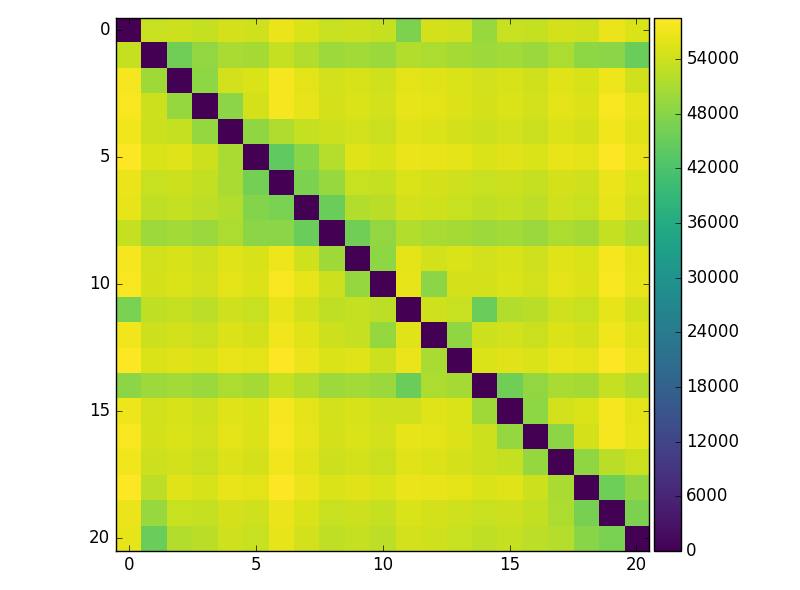

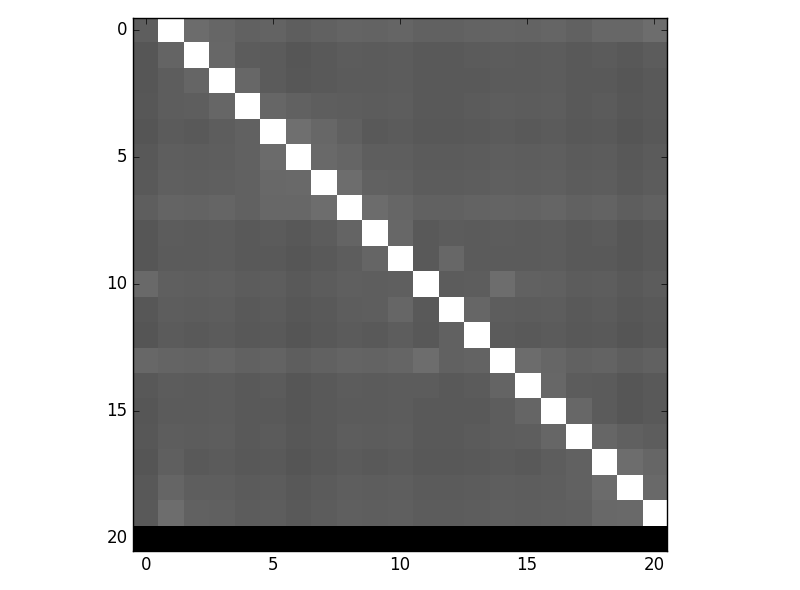

The first step in creating a video-textured sprite was to calculate the image difference matrix $D$, where $D_{i,j}$ is the $L_2$ distance between images $i$ and $j$, i.e. the pixel value difference between them. The transition probability is then $P_{i,j} \propto e^{-D_{i+1,j}/\sigma}$, where $\sigma$ is the average of the nonzero distances. In other words, a jump from frame $i$ to frame $j$ is likely when frame $j$ looks like the frame that would normally follow $i$.
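As a rough illustration of this step, the sketch below computes the distance matrix and the row-normalized transition probabilities with NumPy. The function name `transition_probabilities` and the choice to normalize each row so it sums to 1 are assumptions made for the example, not details taken from the project code.

```python
import numpy as np


def transition_probabilities(frames):
    """Return (dist, probs) for a stack of equally sized frames.

    dist[i, j] is the L2 distance between frames i and j.
    probs[i, j] is the probability of jumping from frame i to frame j,
    proportional to exp(-dist[i + 1, j] / sigma).
    """
    n = len(frames)
    flat = np.asarray(frames, dtype=np.float64).reshape(n, -1)

    # Pairwise L2 distances. This builds an (n, n, pixels) array, which is
    # fine for a short clip of small frames but not for long videos.
    diff = flat[:, None, :] - flat[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=2))

    # sigma: average of the nonzero distances (assumes the frames are not
    # all identical, otherwise there are no nonzero entries).
    sigma = dist[dist > 0].mean()

    # Row i looks at the frame after i, so the last frame has no row.
    probs = np.exp(-dist[1:, :] / sigma)
    probs /= probs.sum(axis=1, keepdims=True)  # assumption: rows sum to 1
    return dist, probs
```

For a clip of `n` frames this yields an `n x n` distance matrix and an `(n - 1) x n` probability matrix, both of which can be computed once and reused at runtime.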


To determine where our animated sprite is in relation to the cursor, we need features within a given image frame. This proves to be a challenge: in many animated sprites, especially those with multiple rotating appendages or those that change form throughout the frames, features may be very hard to detect and match up. Instead of identifying the object from features, we can use the shape generated by taking the difference between two consecutive frames. We calculate the "center of gravity" of this movement shape by taking the squared difference between the 2 frames and generating a grayscale mask for the region of difference. Then, we find the center of these pixels by averaging their positions, weighting each by its pixel value, which is the amount of difference. From these center points, we can determine the direction in which an object is moving within 3 frames. To ensure that the vector calculation runs smoothly at runtime, we precompute this information and store all the center points in a matrix, just as we did with the transition probabilities.
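The weighted center described above might be computed along these lines. This is a minimal sketch: the names `motion_center` and `precompute_centers` are hypothetical, centers are returned as `(x, y)` pixel coordinates, and color channels are summed into a single mask, all of which are assumptions.

```python
import numpy as np


def motion_center(frame_a, frame_b):
    """Return the (x, y) center of the squared difference between two frames.

    Returns None when the frames are identical, because there is no
    difference mass to average over.
    """
    a = np.asarray(frame_a, dtype=np.float64)
    b = np.asarray(frame_b, dtype=np.float64)
    mask = (a - b) ** 2
    if mask.ndim == 3:
        mask = mask.sum(axis=2)  # collapse color channels into one mask

    total = mask.sum()
    if total == 0:
        return None

    # Average the pixel coordinates, weighted by the amount of difference.
    ys, xs = np.indices(mask.shape)
    return float((xs * mask).sum() / total), float((ys * mask).sum() / total)


def precompute_centers(frames):
    """Center of motion for every pair of consecutive frames."""
    return [motion_center(frames[i], frames[i + 1])
            for i in range(len(frames) - 1)]
```

Subtracting two consecutive entries of `precompute_centers(frames)` gives a motion vector that spans three frames.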

Two different cost metrics were used to find the best transition image: (1) minimizing the distance between feature and cursor, and (2) minimizing the angle while accounting for image similarity. Note that the distance metric takes into account only where the user wants the sprite to be, while the angle metric takes into account both where the user wants the sprite to be and the transition probabilities.


Using the center points of these differences, we came up with two ways to generate a cost function. The first, which we will call the distance method, takes into account where you would like to be. This cost function computes how far the center point is from the target point and minimizes that distance. While this function quickly gets to exactly where you would like to be, it tends to sacrifice smooth transitions in order to do so.
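A minimal sketch of the distance method follows, assuming the precomputed centers are available as a list of `(x, y)` tuples, with `None` for frames that show no motion. The function name `pick_frame_by_distance` is hypothetical.

```python
import math


def pick_frame_by_distance(centers, cursor):
    """Return the index of the frame whose center is closest to the cursor.

    Entries of `centers` that are None are skipped. Returns None if no
    frame has a usable center.
    """
    best_index, best_cost = None, math.inf
    for index, center in enumerate(centers):
        if center is None:
            continue
        # Cost is the Euclidean distance from the center to the cursor.
        cost = math.hypot(center[0] - cursor[0], center[1] - cursor[1])
        if cost < best_cost:
            best_index, best_cost = index, cost
    return best_index
```

Because the transition probabilities play no role here, the chosen frame can be visually far from the current one, which matches the loss of smoothness noted above.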


The other method, which we will call the angle method, takes into account only the direction you would like to go and the transition probability. This method includes transitions and results in the sprite moving in the direction you choose to go. However, it may take some time, and the sprite may not reach the target before you have already moved in another direction, in which case it will stop and try to follow your new direction.
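The angle method could be sketched as below. The text does not say how the angle and the transition probability are combined, so the additive cost `angle + weight * (1 - probability)` and the `weight` parameter are purely illustrative assumptions, as are the function names.

```python
import math


def angle_between(v, w):
    """Angle in radians between 2D vectors v and w, in [0, pi]."""
    norm = math.hypot(*v) * math.hypot(*w)
    if norm == 0:
        return math.pi  # no direction information: treat as the worst case
    cosine = (v[0] * w[0] + v[1] * w[1]) / norm
    return math.acos(max(-1.0, min(1.0, cosine)))


def pick_frame_by_angle(position, cursor, motions, probs_row, weight=1.0):
    """Pick the candidate frame that best heads toward the cursor.

    motions[j] is the motion vector of candidate frame j, and probs_row[j]
    is the transition probability from the current frame to frame j.
    The cost formula is an assumption made for this sketch.
    """
    target = (cursor[0] - position[0], cursor[1] - position[1])
    best_index, best_cost = None, math.inf
    for index, (motion, prob) in enumerate(zip(motions, probs_row)):
        cost = angle_between(motion, target) + weight * (1.0 - prob)
        if cost < best_cost:
            best_index, best_cost = index, cost
    return best_index
```

A larger `weight` favors smooth transitions over heading directly toward the cursor.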


Given a webcam feed of the user, we first detect the user's eyes using Viola-Jones eye detection (Haar cascades within OpenCV), which gives us a region of interest. Within this region, we look for circles using the Hough Transform. We take the center of the detected circle as the pupil and map its relative position within the eye region to an absolute position within our image frames, which gives the desired cursor position and simulates a mouse click. It might seem intuitive to transition smoothly from left to right when the user looks from left to right. However, eyes do not move smoothly, and our program aims to mimic the darting behavior that human eyes exhibit. Because eyes tend to dart, it is more important for the sprite to appear where the eyes are looking than for it to move there naturally. In this case, the distance method described previously works better.
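The final mapping step, from a pupil position inside the eye region to a cursor position inside the sprite frame, can be sketched without any detection code. The example assumes the eye box `(x, y, width, height)` and the pupil center have already been found; the function name `gaze_to_cursor` and the `mirror` flag are hypothetical additions for illustration.

```python
def gaze_to_cursor(eye_box, pupil_center, frame_size, mirror=False):
    """Map a pupil position inside an eye box to a point in the sprite frame.

    eye_box:      (x, y, width, height) of the detected eye region.
    pupil_center: (x, y) of the detected circle, in webcam coordinates.
    frame_size:   (width, height) of the sprite frames.
    mirror:       flip horizontally if the webcam image is not mirrored
                  (an assumption; depends on the camera setup).
    """
    ex, ey, ew, eh = eye_box
    px, py = pupil_center

    # Relative position of the pupil within the eye box, clamped to [0, 1].
    u = min(1.0, max(0.0, (px - ex) / ew))
    v = min(1.0, max(0.0, (py - ey) / eh))
    if mirror:
        u = 1.0 - u

    # Scale to absolute pixel coordinates in the sprite frame.
    frame_w, frame_h = frame_size
    return u * (frame_w - 1), v * (frame_h - 1)
```

The returned point can be passed to the distance method as the cursor position.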

We have a 21-frame video of a girl running, drawn entirely by hand. We chose this video for testing because its background does not introduce noise that could affect the squared difference we use to locate the moving object.