CS194 proj1

James Li

Overview

"Images of the Russian Empire: Colorizing the Prokudin-Gorskii photo collection"

We are given a set of images which consist of grayscale slides corresponding to blue, green, and red color filters. The challenge is to slice those slides into 3 images corresponding to RGB color channels, then align them relative to each other.

Pyramid structure

All images were processed using a pyramid. I downscaled the images until the width was 256 or less. (Note that this means even the small .jpg images were fed through one layer of the pyramid!) Then I cropped the images and computed the alignment.

On the base level, I aligned the images by up to 24 pixels vertically and 8 horizontally. I assumed that because the 3 gray images were stacked vertically, the vertical error might be larger than the horizontal.

On all other levels, I shifted the images by up to 1 pixel horizontally and vertically (2 pixels if the image width is ≤ 1024). This is much faster than testing a larger alignment distance, and makes up for my slower(?) scoring function.

- Initially, I recomputed the crop borders on every single level. When I tested all alignments up to 2 pixels off in each direction, the optimal alignment was sometimes 2 pixels off, so shifting by only 1 pixel would have produced bad results.

- Once I edited the code to use consistent cropping among all scale levels, no alignment in the example image set was ever off by more than 1 pixel. As a result, I reduced the maximum shift on large scale levels to only 1 pixel.
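The coarse-to-fine search described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the function names (`downscale2`, `score`, `search`, `pyramid_align`), the 2x2 averaging filter, and the wrap-around shift are all assumptions, and the placeholder score is a plain cross-correlation without the window described later. Only the search radii (24 vertical and 8 horizontal at the base, then 1 or 2 pixels) and the 256-pixel base width come from the text.

```python
import numpy as np

def downscale2(img):
    """Halve the resolution by averaging 2x2 blocks (assumed filter)."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    img = img[:h, :w]
    return (img[0::2, 0::2] + img[1::2, 0::2]
            + img[0::2, 1::2] + img[1::2, 1::2]) / 4.0

def score(img, ref, dy, dx):
    """Placeholder score: mean-subtracted cross-correlation, wrap-around shift."""
    shifted = np.roll(img - img.mean(), (dy, dx), axis=(0, 1))
    return np.mean(shifted * (ref - ref.mean()))

def search(img, ref, center, max_dy, max_dx):
    """Try every shift within the given radius around `center`; keep the best."""
    cy, cx = center
    candidates = [(cy + dy, cx + dx)
                  for dy in range(-max_dy, max_dy + 1)
                  for dx in range(-max_dx, max_dx + 1)]
    return max(candidates, key=lambda s: score(img, ref, s[0], s[1]))

def pyramid_align(img, ref, base_width=256):
    """Return the (dy, dx) shift of `img` that best matches `ref`."""
    if img.shape[1] <= base_width:
        # Base level: wide search, larger vertically than horizontally.
        return search(img, ref, (0, 0), 24, 8)
    coarse = pyramid_align(downscale2(img), downscale2(ref), base_width)
    # Finer levels: refine the doubled coarse estimate by 1 or 2 pixels.
    radius = 2 if img.shape[1] <= 1024 else 1
    return search(img, ref, (2 * coarse[0], 2 * coarse[1]), radius, radius)
```

Because each finer level only tests a handful of shifts around the doubled coarse estimate, nearly all of the search cost sits at the small base level.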

Alignment algorithm

My alignment algorithm is not based directly on either SSD or NCC, but on prior work I did in 1D audio alignment at https://github.com/jimbo1qaz/corrscope.

Every time I test an alignment, I evaluate the score function:

- Subtract the mean from the input image and reference image

- Shift the input image by the alignment amount

- Compute the cross-correlation via

mean(input * reference image * window).

Because I want to mostly ignore the image edges (the borders don't align), I multiply either the input or reference image (both are equivalent) by a window before performing the dot product. The window weighs each pixel by the product of a parabolic function of its x and y positions. As a result, pixels close to any edge of the image contribute less to the score function's output value.

Unlike cropping away the image borders, my window function does not introduce a numeric twiddle factor (which may be an advantage or disadvantage). It also does not depend on cropping the edges away exactly correctly, has no weird edge effects, and has worked very reliably among the example images. The disadvantage is that multiplying by a window is slower than cropping the images.
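As a rough illustration of the score function described in this section, here is a minimal NumPy sketch. The names `parabolic_window` and `score`, the exact parabola (`1 - t**2` on `[-1, 1]`), and the wrap-around shift via `np.roll` are assumptions made for the example; the actual implementation may differ in all of these.

```python
import numpy as np

def parabolic_window(height, width):
    """Separable window: product of a parabola in y and a parabola in x.

    Each parabola is 1 at the centre and 0 at the edges (assumed shape).
    """
    y = np.linspace(-1.0, 1.0, height)
    x = np.linspace(-1.0, 1.0, width)
    return np.outer(1.0 - y**2, 1.0 - x**2)

def score(image, reference, dy, dx, window):
    """Windowed cross-correlation of `image` shifted by (dy, dx) with `reference`."""
    image = image - image.mean()              # subtract the mean from both inputs
    reference = reference - reference.mean()
    # Assumption: the shift wraps around; the window suppresses the wrapped edges.
    shifted = np.roll(image, (dy, dx), axis=(0, 1))
    return np.mean(shifted * reference * window)
```

The best alignment is then the shift with the highest score, for example `max(shifts, key=lambda s: score(img, ref, s[0], s[1], window))` over a list of candidate `(dy, dx)` pairs.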

Bells and whistles

Using edges as a feature

I originally had functionality for horizontal and vertical edge detection via np.abs(np.diff(array, axis = 0 or 1)). However, I removed this code since it slowed my algorithm down by nearly a factor of three. Edge detection was also unnecessary, as my plain cross-correlation algorithm worked on every image I threw at it.
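For reference, the removed edge feature would look something like the sketch below. The function name `edge_features` and the choice to return both directions as a pair are assumptions; only the `np.abs(np.diff(...))` expression comes from the text.

```python
import numpy as np

def edge_features(array):
    """Absolute first differences along each image axis."""
    # Differences between vertically adjacent pixels respond to horizontal edges.
    vertical_change = np.abs(np.diff(array, axis=0))
    # Differences between horizontally adjacent pixels respond to vertical edges.
    horizontal_change = np.abs(np.diff(array, axis=1))
    return vertical_change, horizontal_change
```

Note that each output is one pixel shorter than the input along the differenced axis.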

Cropping

I implemented cropping by processing the low-resolution image only. My assumption was that all images are surrounded by black borders which are not cropped out. Unfortunately, this assumption did not hold for every image in the collection.

I rewrote the code that splits the overall image into 3 parts to overlap the output channels. This ensures that each channel includes black borders and parts of the neighboring channel. This will change how the displacements are computed, whether cropping is enabled or not.
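One way to split the plate into overlapping channels is sketched below. This is an assumption-laden illustration: the name `split_with_overlap`, the `overlap_fraction` parameter and its default, the top-to-bottom blue, green, red order, and the choice to give all three slices the same height are not taken from the original code.

```python
import numpy as np

def split_with_overlap(plate, overlap_fraction=0.05):
    """Split a vertically stacked plate into three equally sized, overlapping slices.

    `overlap_fraction` (hypothetical) is the extra margin added to each side of a
    slice, as a fraction of one third of the plate height.
    """
    height = plate.shape[0]
    third = height // 3
    pad = int(third * overlap_fraction)
    slice_height = third + 2 * pad
    channels = []
    for i in range(3):
        # Space the slice starts evenly so the first starts at row 0
        # and the last ends at the bottom of the plate.
        top = i * (height - slice_height) // 2
        channels.append(plate[top:top + slice_height])
    blue, green, red = channels  # assumed order: blue on top, red at the bottom
    return blue, green, red
```

Keeping the slices the same shape means they can be multiplied element-wise during scoring without further padding.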

For the actual cropping algorithm, I analyzed the top of the low-resolution downscaled image, looking for a boundary which maximizes (average pixel value of row) - (average pixel value of row above). The argmax of that value marks the row where the image becomes lighter than the black border. I did the same thing for the bottom of the image, but finding the argmin instead, and similarly for the left and right borders. Then I shrank the borders by 1 downscaled pixel.
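The border search can be sketched as follows, under several assumptions: the name `find_crop`, the `search_fraction` parameter (how far from each edge to look), and the convention that `bottom` and `right` are exclusive slice ends are all hypothetical choices for this example.

```python
import numpy as np

def find_crop(image, search_fraction=0.2):
    """Return [[top, bottom], [left, right]] crop bounds for a low-resolution image."""
    def bounds(profile):
        n = len(profile)
        k = max(int(n * search_fraction), 2)   # how many steps to examine per side
        step = np.diff(profile)                # step[i] = profile[i + 1] - profile[i]
        # Largest dark-to-light jump near the start: row i + 1 is the first light row.
        start = int(np.argmax(step[:k])) + 1
        # Most negative (light-to-dark) jump near the end: row j + 1 is the first dark row.
        offset = n - 1 - k
        stop = offset + int(np.argmin(step[offset:])) + 1
        # Shrink the crop by one pixel on each side.
        return [start + 1, stop - 1]

    row_means = image.mean(axis=1)   # average pixel value of each row
    col_means = image.mean(axis=0)   # average pixel value of each column
    return [bounds(row_means), bounds(col_means)]
```

For example, a 40x50 black image with a bright rectangle at rows 5 to 33 and columns 7 to 44 yields `[[6, 33], [8, 44]]`, so the crop would be `image[6:33, 8:44]`.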

I do not recompute crop borders at larger scales, but merely rescale the low-resolution coordinates up.

At the end of the process, I shift the crop borders by the same amount as the channels, compute their intersection (where all images are valid), and slice the array to that size before writing to disk.
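The final intersection step might look like the sketch below. The name `intersect_crops` and the sign convention (a channel displaced by `(dy, dx)` has its crop box moved by the same amount) are assumptions; the sample numbers in the usage note are only used to show the arithmetic.

```python
import numpy as np

def intersect_crops(crops, displacements):
    """Shift each channel's crop box by its displacement and intersect them.

    crops: per channel, [[top, bottom], [left, right]]
    displacements: per channel, (dy, dx)
    """
    crops = np.asarray(crops)              # shape (channels, 2, 2)
    shifts = np.asarray(displacements)     # shape (channels, 2)
    moved = crops + shifts[:, :, None]     # dy moves top/bottom, dx moves left/right
    start = moved[:, :, 0].max(axis=0)     # innermost top and left over all channels
    stop = moved[:, :, 1].min(axis=0)      # innermost bottom and right over all channels
    return np.stack([start, stop], axis=1) # [[top, bottom], [left, right]]
```

With crops `[[22, 340], [18, 370]]`, `[[10, 344], [14, 370]]`, `[[14, 334], [14, 374]]` and displacements `(-4, 1)`, `(0, 0)`, `(6, -2)`, this returns `[[20, 336], [19, 370]]`, the region where all three shifted channels are valid.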

Cropping results

The results were acceptable overall. I didn't notice any images where too much was cropped out, but many images have either a blue haze on the bottom (I could crop out a few more pixels to fix this), or nasty colored features along the edges (which would require changing my algorithm).

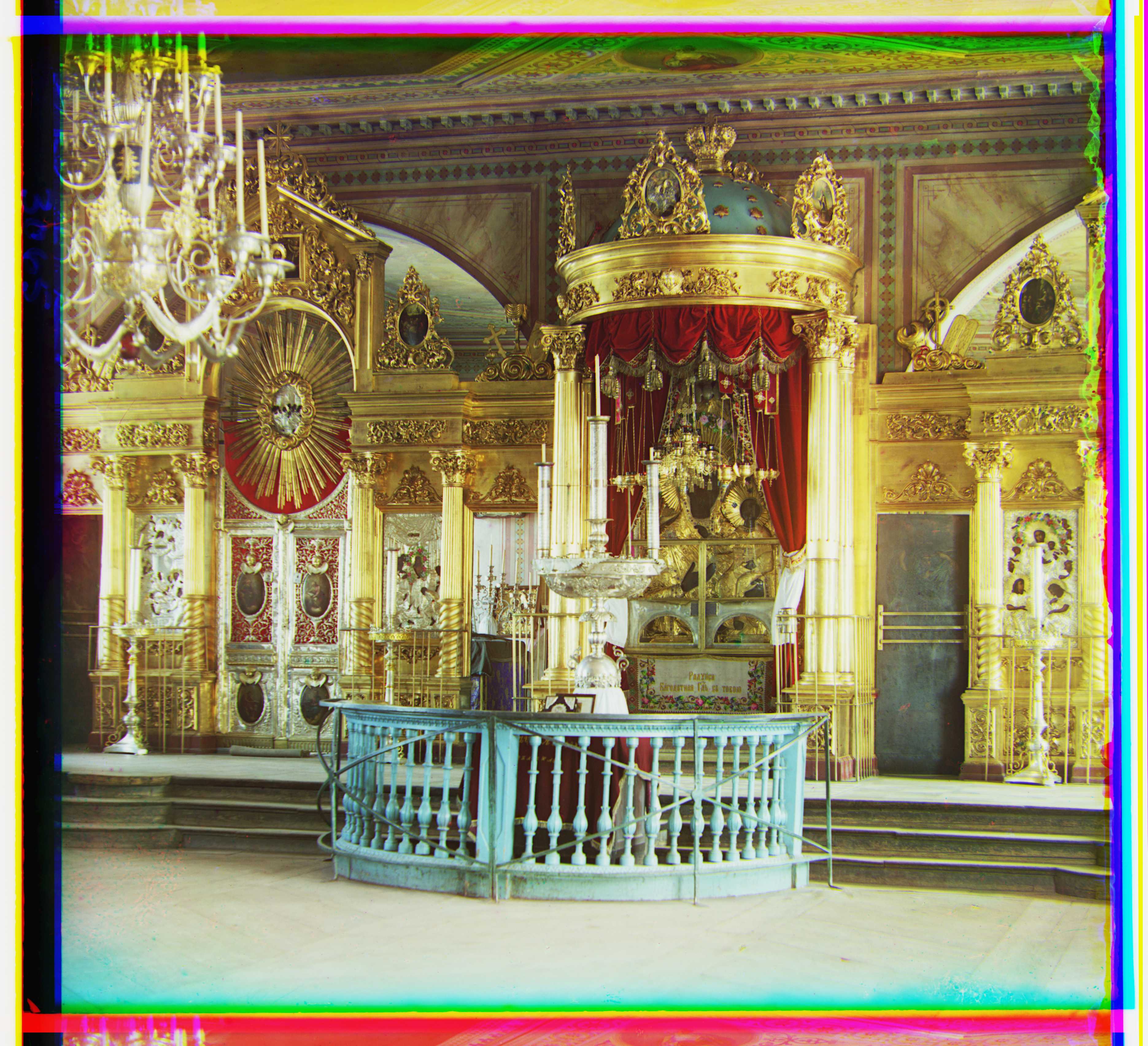

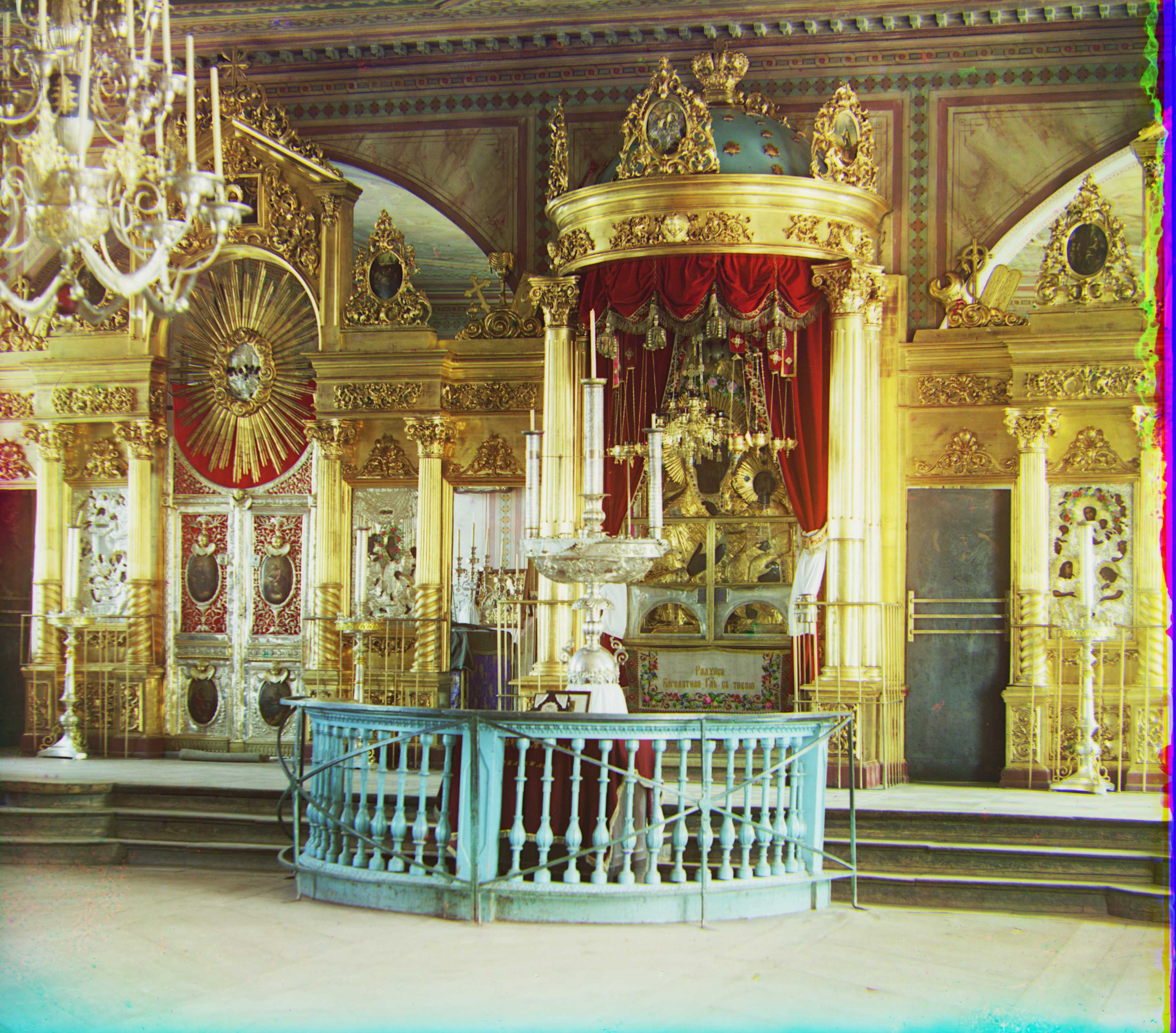

For example, in icon.tif, the bottom has a color tint, and the right side of the image has some brightly colored artifacts that I did not crop out.

I don't know why the bottom of harvesters.tif is missing red. I don't know why my algorithm didn't crop it out.

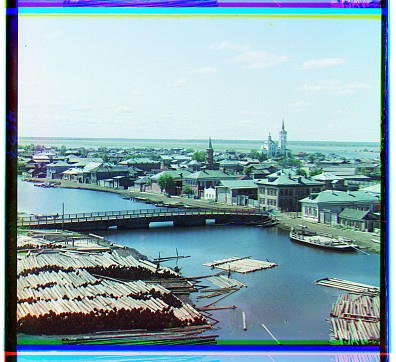

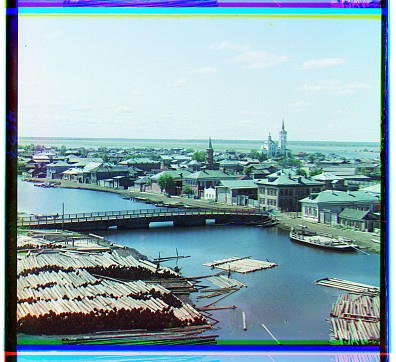

The bridges image cropped very poorly, because the images were not surrounded by a black border as I had assumed. Instead, the images had white gaps between the channels.

Results

I had acceptable to seemingly-perfect results on all images. Here is an overview of the images which look worst in my inspection. By opening my outputs in GIMP and running "channels -> decompose", I noticed that many channels were intrinsically misaligned, and require scaling or rotation to fully align. A few pictures even had moving objects in frame.

- harvesters.tif was difficult to process because there is a bit of rotation between the frames.

- emir.tif had pretty good results. By decomposing the output jpg's color channels in GIMP, I checked that the belt buckle's pattern was exactly aligned among all 3 channels. However, the emir's head moved slightly between the blue and the green/red exposures, and the clothes don't line up perfectly. The door on the right isn't aligned either.

- train.tif isn't aligned well either, but this is because the 3 images are rotated relative to each other.

- three_generations.tif has a bit of human movement and rotation between the 3 images.

| Uncropped images | Cropped images |
| --- | --- |
| Image displacements (y, x): Red: [-4 1], Green (reference): [0 0], Blue: [6 -2] | Image crops [[top, bottom], [left, right]]: Red: [[22 340] [18 370]], Green: [[10 344] [14 370]], Blue: [[14 334] [14 374]] |
| Image displacements (y, x): Red: [-32 17], Green (reference): [0 0], Blue: [40 -24] | Image crops [[top, bottom], [left, right]]: Red: [[208 3168] [192 3552]], Green: [[112 3232] [208 3568]], Blue: [[160 3168] [224 3584]] |
| Image displacements (y, x): Red: [-33 -3], Green (reference): [0 0], Blue: [39 -16] | Image crops [[top, bottom], [left, right]]: Red: [[224 3264] [144 3520]], Green: [[144 3296] [144 3504]], Blue: [[208 3216] [144 3488]] |
| Image displacements (y, x): Red: [-44 5], Green (reference): [0 0], Blue: [51 -17] | Image crops [[top, bottom], [left, right]]: Red: [[224 3296] [224 3632]], Green: [[128 3312] [208 3728]], Blue: [[208 3232] [208 3728]] |
| Image displacements (y, x): Red: [-30 2], Green (reference): [0 0], Blue: [36 -5] | Image crops [[top, bottom], [left, right]]: Red: [[304 3232] [192 3552]], Green: [[224 3344] [176 3616]], Blue: [[128 3280] [176 3616]] |
| Image displacements (y, x): Red: [7 3], Green (reference): [0 0], Blue: [7 -9] | Image crops [[top, bottom], [left, right]]: Red: [[224 3264] [192 3552]], Green: [[176 3344] [192 3552]], Blue: [[272 3296] [192 3552]] |
| Image displacements (y, x): Red: [-5 1], Green (reference): [0 0], Blue: [14 -2] | Image crops [[top, bottom], [left, right]]: Red: [[30 350] [20 378]], Green: [[18 352] [20 378]], Blue: [[16 334] [20 378]] |
| Image displacements (y, x): Red: [-38 10], Green (reference): [0 0], Blue: [44 -26] | Image crops [[top, bottom], [left, right]]: Red: [[288 3280] [208 3568]], Green: [[192 3312] [224 3600]], Blue: [[256 3248] [240 3616]] |
| Image displacements (y, x): Red: [-1 8], Green (reference): [0 0], Blue: [21 -28] | Image crops [[top, bottom], [left, right]]: Red: [[160 3216] [304 3664]], Green: [[112 3264] [304 3664]], Blue: [[192 3200] [288 3664]] |
| Image displacements (y, x): Red: [-31 -2], Green (reference): [0 0], Blue: [36 -13] | Image crops [[top, bottom], [left, right]]: Red: [[304 3264] [144 3504]], Green: [[224 3360] [144 3504]], Blue: [[240 3280] [144 3504]] |
| Image displacements (y, x): Red: [-7 1], Green (reference): [0 0], Blue: [8 -2] | Image crops [[top, bottom], [left, right]]: Red: [[22 346] [18 380]], Green: [[10 344] [20 380]], Blue: [[12 332] [22 382]] |
| Image displacements (y, x): Red: [-43 26], Green (reference): [0 0], Blue: [43 -5] | Image crops [[top, bottom], [left, right]]: Red: [[192 3264] [160 3568]], Green: [[112 3296] [192 3584]], Blue: [[224 3216] [176 3584]] |
| Image displacements (y, x): Red: [-13 10], Green (reference): [0 0], Blue: [21 -12] | Image crops [[top, bottom], [left, right]]: Red: [[160 3232] [208 3616]], Green: [[96 3280] [208 3632]], Blue: [[176 3232] [224 3648]] |
| Image displacements (y, x): Red: [-37 -11], Green (reference): [0 0], Blue: [36 1] | Image crops [[top, bottom], [left, right]]: Red: [[336 3280] [192 3568]], Green: [[240 3360] [176 3552]], Blue: [[224 3296] [160 3520]] |

Custom images

I downloaded 5 images from the Library of Congress, chosen to have a mix of dark and light images, large and small features, and also brightly colored clothing which is difficult for some algorithms to align. After running my algorithm with the same numeric twiddle factors from before, I obtained reasonable results on all images. Here is an overview of issues I noticed:

- The workers image has a strong blue cast and red stains, which were manually edited out of the Library of Congress polished version.

- The bridge has light-colored edge effects which I did not crop out (my crop algorithm was only designed to remove black borders). The polished version crops out the light borders, and edits the bushes out of the image entirely!

- In the picture of women, the blue channel is slightly bigger than the others, so it's impossible to line them up perfectly through translation alone.

| Uncropped images | Cropped images |
| --- | --- |
| Image displacements (y, x): Red: [-33 13], Green (reference): [0 0], Blue: [76 8] | Image crops [[top, bottom], [left, right]]: Red: [[32 3296] [128 3600]], Green: [[32 3072] [160 3536]], Blue: [[176 3264] [144 3520]] |
| Image displacements (y, x): Red: [-8 0], Green (reference): [0 0], Blue: [58 -1] | Image crops [[top, bottom], [left, right]]: Red: [[160 3200] [224 3584]], Green: [[112 3200] [224 3584]], Blue: [[160 3136] [240 3584]] |
| Image displacements (y, x): Red: [-10 -2], Green (reference): [0 0], Blue: [31 -22] | Image crops [[top, bottom], [left, right]]: Red: [[320 3280] [144 3552]], Green: [[240 3408] [128 3536]], Blue: [[256 3344] [128 3536]] |
| Image displacements (y, x): Red: [-72 8], Green (reference): [0 0], Blue: [114 -10] | Image crops [[top, bottom], [left, right]]: Red: [[304 3296] [160 3552]], Green: [[176 3312] [160 3552]], Blue: [[128 3168] [160 3552]] |
| Image displacements (y, x): Red: [-49 16], Green (reference): [0 0], Blue: [58 -30] | Image crops [[top, bottom], [left, right]]: Red: [[176 3200] [176 3552]], Green: [[80 3216] [192 3568]], Blue: [[128 3104] [240 3568]] |
