The goal of this project was to colorize the Prokudin-Gorskii photo collection. Each photo in the collection consists of three black-and-white negatives, taken through a red, a green, and a blue filter respectively. When aligned, these three negatives produce a color image. Assuming an x-and-y translation model (i.e., the images are only shifted up, down, left, and/or right), we implemented several methods that compute a two-dimensional displacement vector to align the three channels.

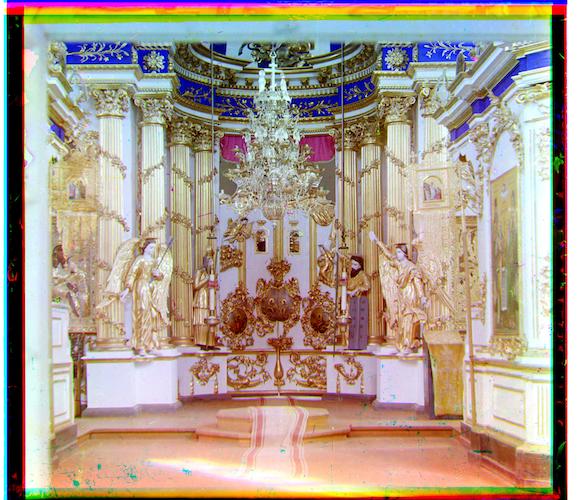

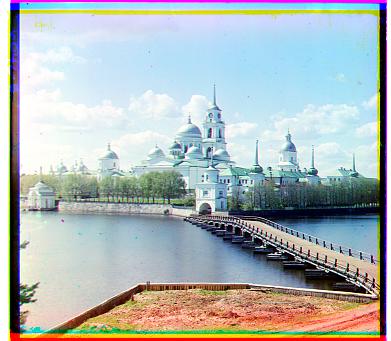

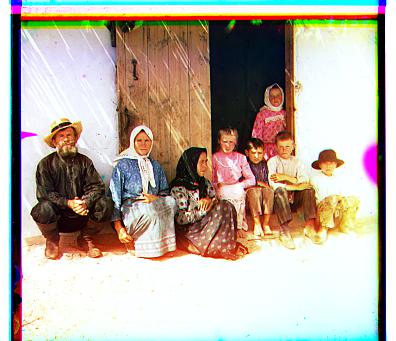

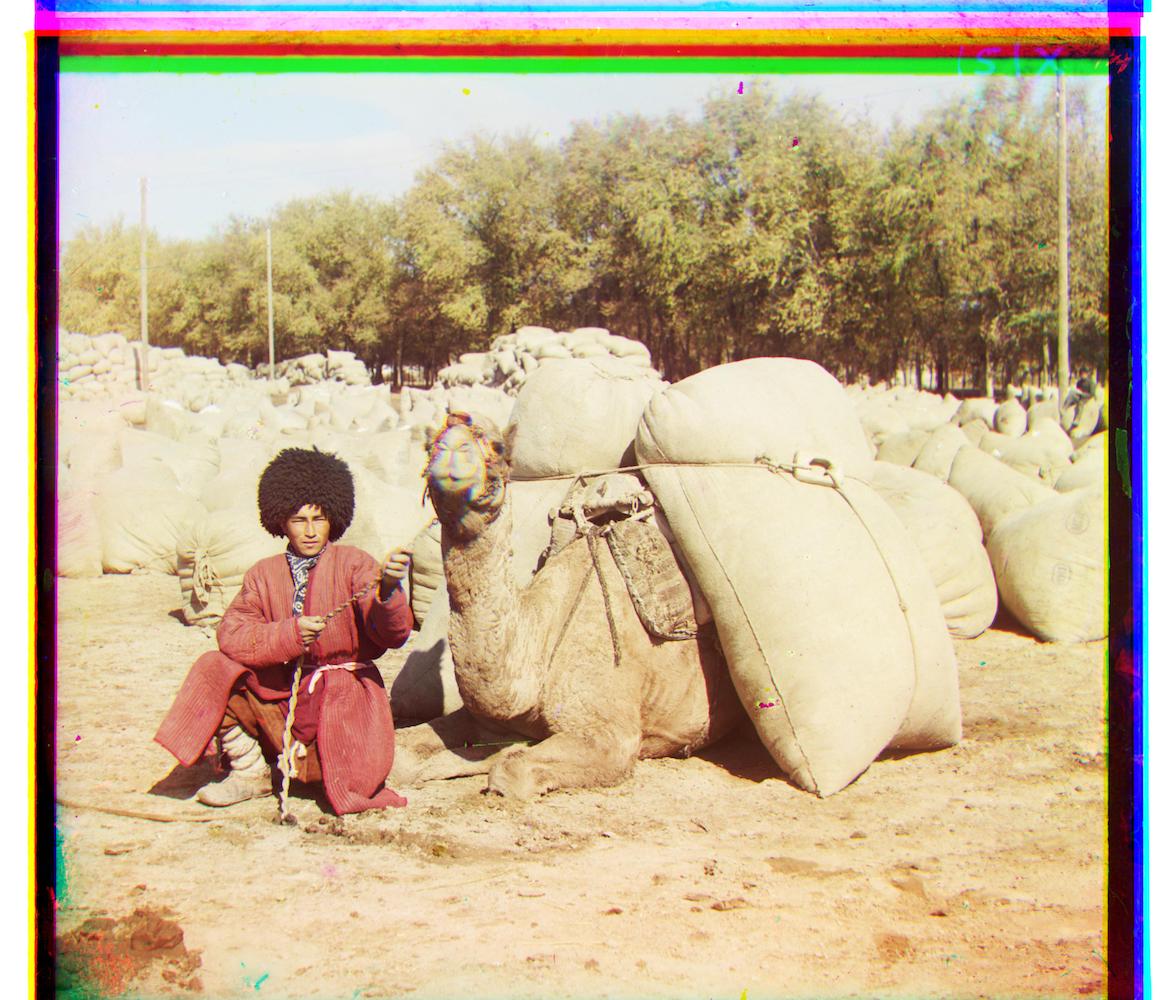

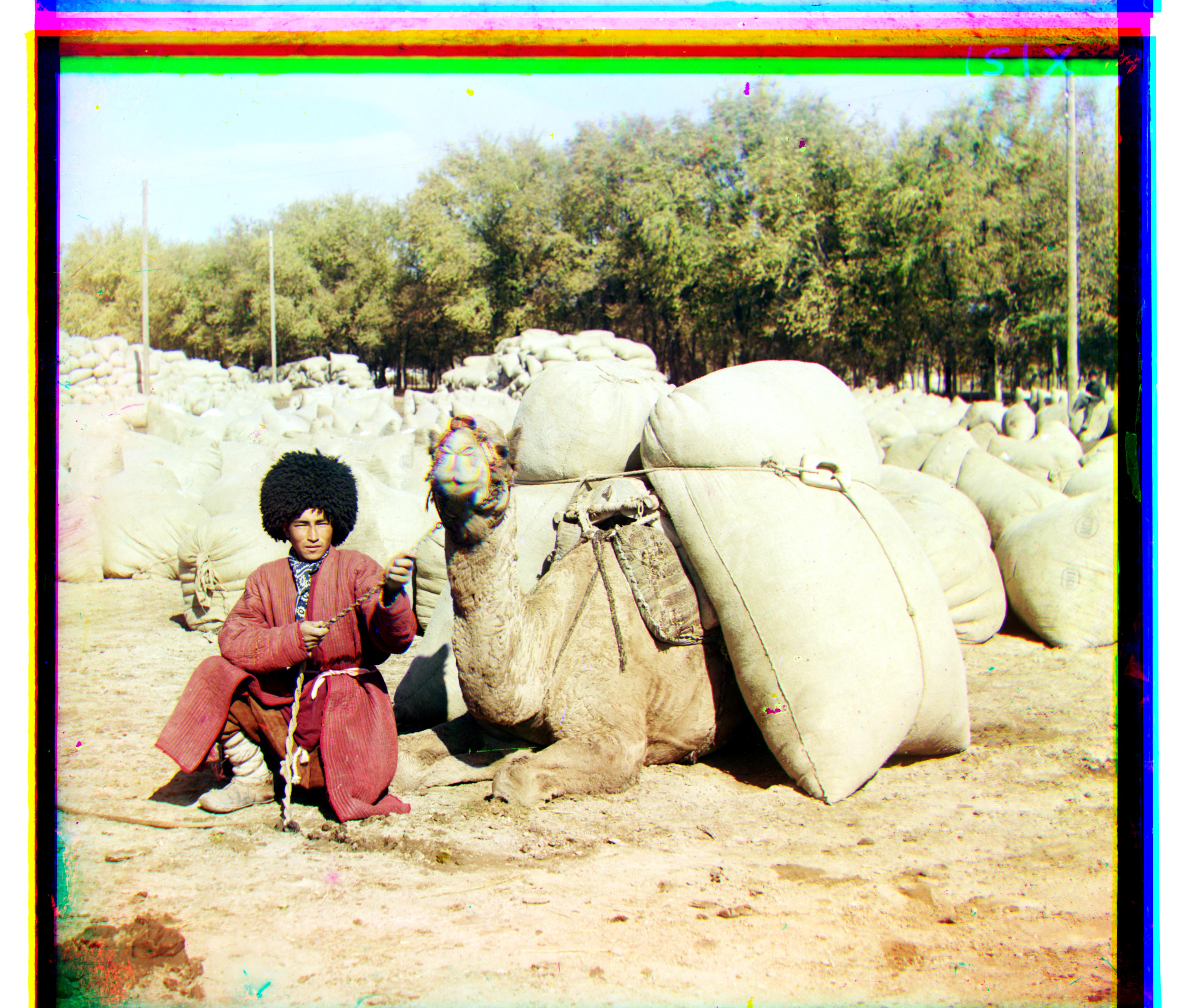

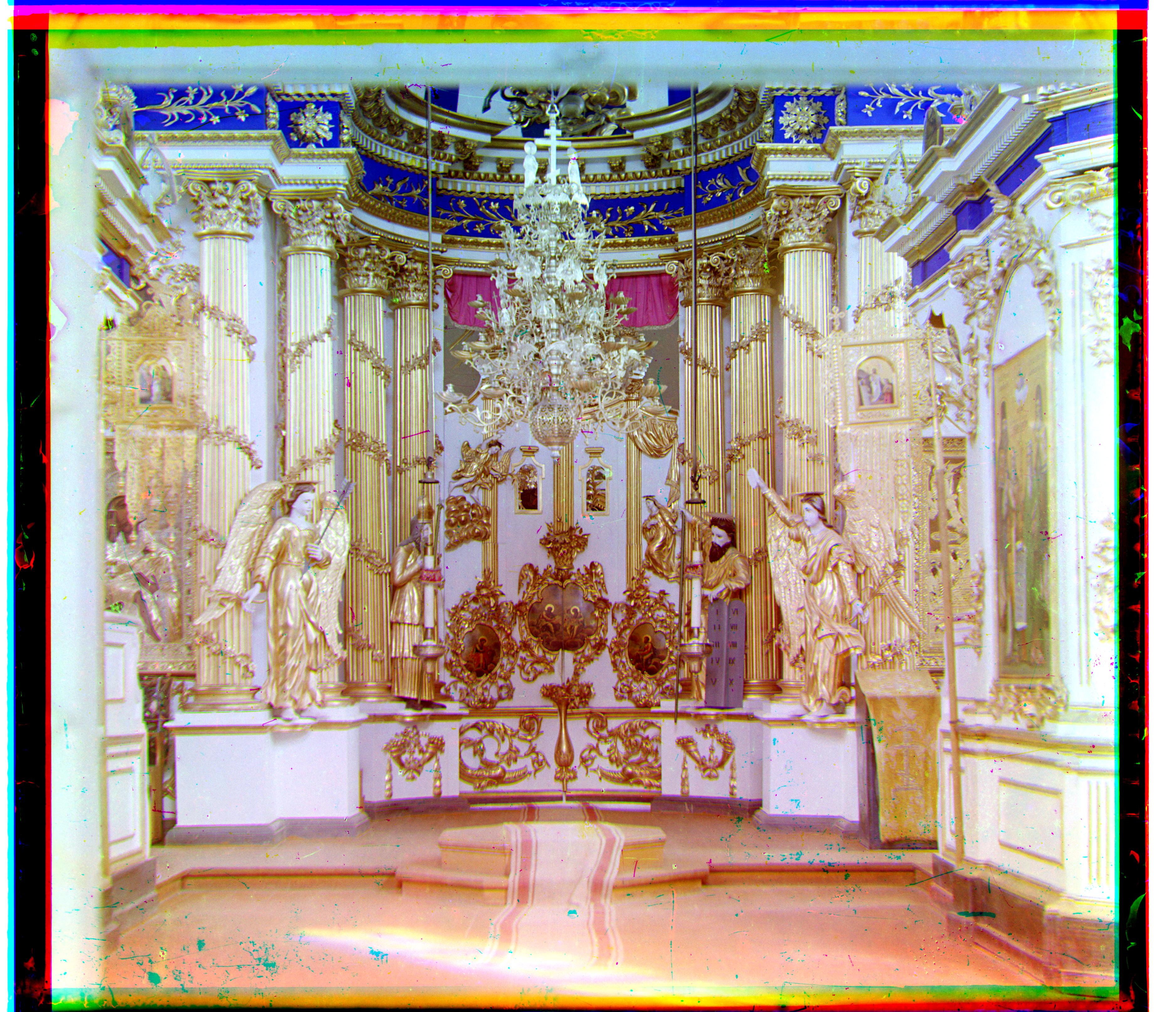

The first approach we implemented was a naive exhaustive search over all possible displacements within a window of 30 pixels in each direction, keeping the alignment with the smallest SSD (sum of squared differences) in pixel value. Since the images had black and white borders, we computed the SSD only on the internal pixels of the image. This worked well for the smaller images:

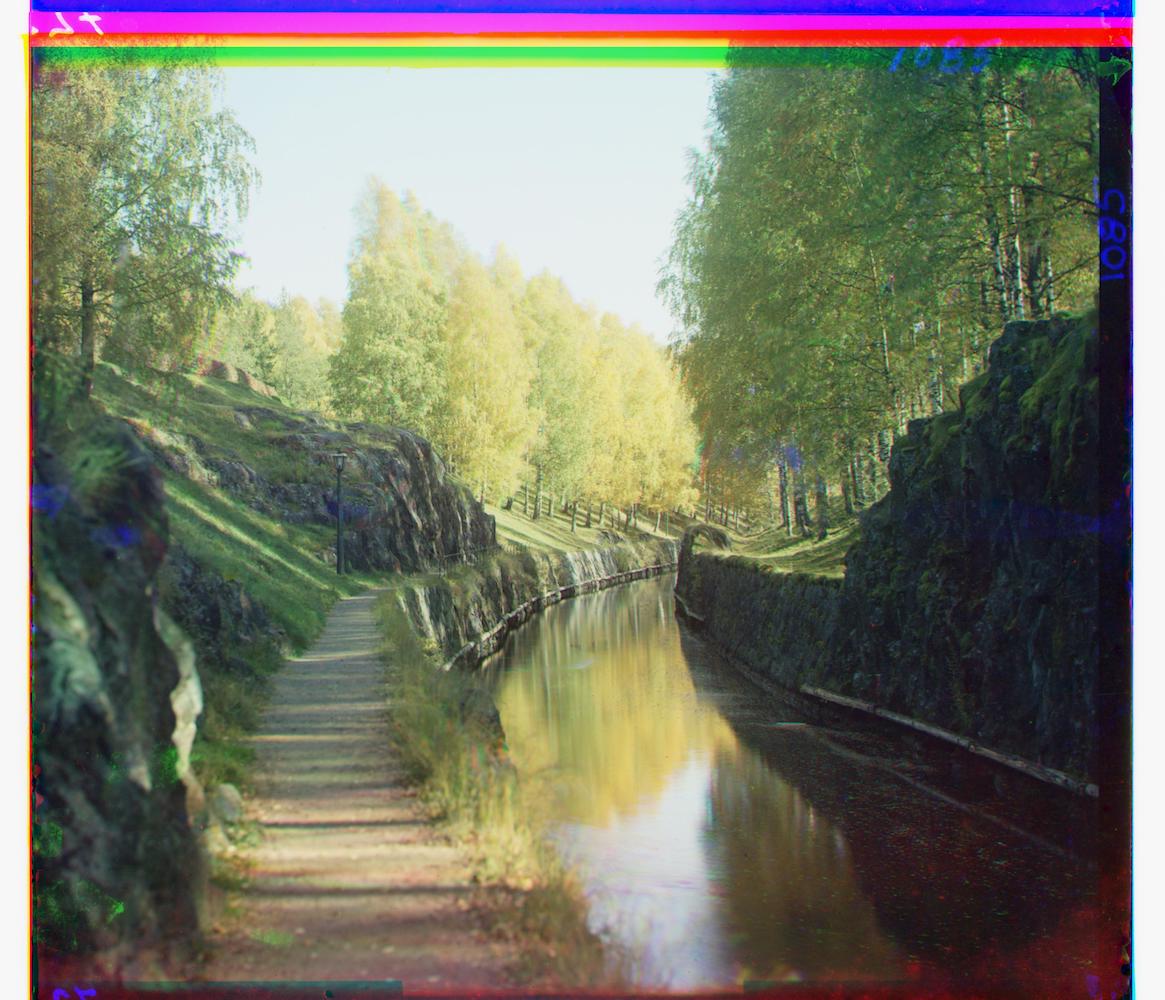

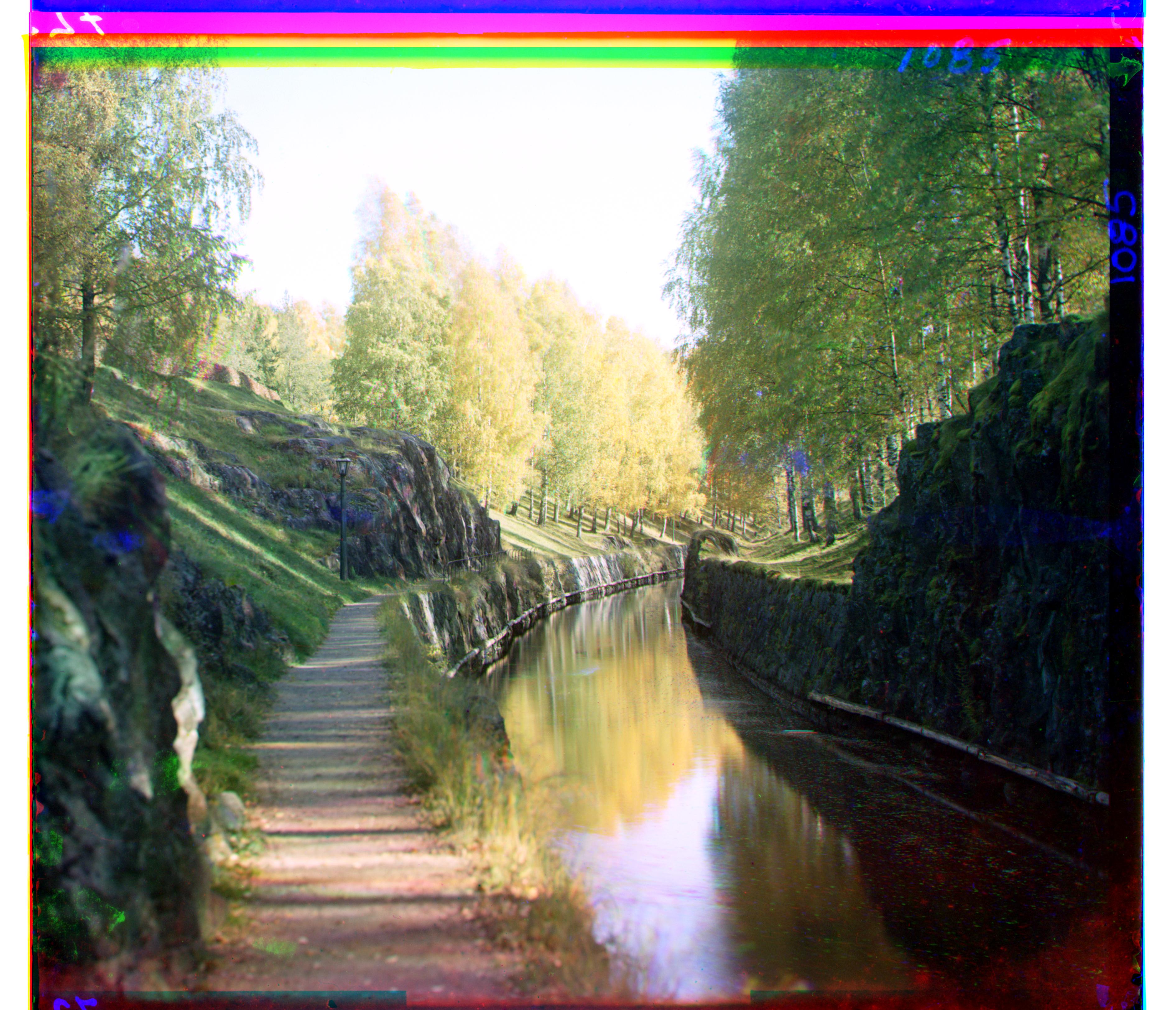
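The exhaustive search described above can be sketched roughly as follows. This is a minimal illustration rather than the exact code we ran: the function names, the `border` crop fraction, and the use of `np.roll` to shift a channel are assumptions made for the example.

```python
import numpy as np

def ssd(a, b):
    """Sum of squared differences between two equally sized arrays."""
    return float(np.sum((a.astype(float) - b.astype(float)) ** 2))

def align_exhaustive(channel, reference, max_shift=30, border=0.1):
    """Return the (dy, dx) shift of `channel` with the lowest SSD against `reference`.

    `border` (an assumed parameter) is the fraction of each side that is
    cropped away so that the plate borders do not influence the score.
    """
    h, w = reference.shape
    by, bx = int(h * border), int(w * border)
    ref_inner = reference[by:h - by, bx:w - bx]
    best_shift, best_score = (0, 0), np.inf
    for dy in range(-max_shift, max_shift + 1):
        for dx in range(-max_shift, max_shift + 1):
            # np.roll wraps pixels around the edges; the wrapped rows and
            # columns stay out of the score only while the cropped margin
            # is at least as wide as the shift.
            shifted = np.roll(channel, (dy, dx), axis=(0, 1))
            score = ssd(shifted[by:h - by, bx:w - bx], ref_inner)
            if score < best_score:
                best_shift, best_score = (dy, dx), score
    return best_shift
```

The cost grows with both the window size and the image area, since each of the `(2 * max_shift + 1) ** 2` candidate shifts requires a full pass over the internal pixels.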

While exhaustive search works well for the smaller images, it takes too long to compute on the larger ones. Thus, for large images, we implemented an image pyramid.
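A coarse-to-fine search of this kind might look like the sketch below. The 2x2 block-average downsampling, the stopping size `min_size`, and the two search radii are assumptions chosen for illustration, not the settings used for the results on this page.

```python
import numpy as np

def best_shift(channel, reference, center, radius, border=0.1):
    """Search shifts within `radius` of `center`; return the (dy, dx) with the lowest SSD."""
    h, w = reference.shape
    by, bx = int(h * border), int(w * border)  # crop the borders before scoring
    ref_inner = reference[by:h - by, bx:w - bx]
    best, best_score = center, np.inf
    for dy in range(center[0] - radius, center[0] + radius + 1):
        for dx in range(center[1] - radius, center[1] + radius + 1):
            shifted = np.roll(channel, (dy, dx), axis=(0, 1))
            score = np.sum((shifted[by:h - by, bx:w - bx] - ref_inner) ** 2)
            if score < best_score:
                best, best_score = (dy, dx), score
    return best

def downsample(image):
    """Halve the resolution by averaging each 2x2 block (an odd last row or column is trimmed)."""
    h, w = image.shape[0] // 2 * 2, image.shape[1] // 2 * 2
    image = image[:h, :w]
    return (image[0::2, 0::2] + image[1::2, 0::2]
            + image[0::2, 1::2] + image[1::2, 1::2]) / 4.0

def align_pyramid(channel, reference, min_size=64, coarse_radius=15, refine_radius=2):
    """Align at half resolution first, then double that estimate and refine it locally."""
    if min(reference.shape) <= min_size:
        # Coarsest level: the image is small enough for a wide exhaustive search.
        return best_shift(channel, reference, (0, 0), coarse_radius)
    dy, dx = align_pyramid(downsample(channel), downsample(reference),
                           min_size, coarse_radius, refine_radius)
    # A shift of (dy, dx) at half resolution corresponds to (2*dy, 2*dx) here.
    return best_shift(channel, reference, (2 * dy, 2 * dx), refine_radius)
```

Because only the coarsest level uses a wide window and every finer level checks a handful of shifts around the doubled estimate, the total work stays close to a few passes over the full-resolution image.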

To align the image of Emir correctly, we used scikit-image to apply a Sobel filter to each channel and detect its edges, then aligned on the edge images rather than on the raw pixel values. This filter approximates the horizontal and vertical gradients by convolving the image with 3x3 kernels. For example, for the image of Emir, we detect the following edges for each channel:

Left: Aligned image from color channel pixel values; Right: Aligned image from edge pixel values
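To make the 3x3 kernels concrete, the following NumPy-only sketch computes a Sobel edge magnitude by hand. It is an illustration, not the code we ran: our implementation called scikit-image (`skimage.filters.sobel` is the library counterpart, which may scale its output differently), and the helper names and edge-replicating padding here are assumptions.

```python
import numpy as np

# Standard Sobel kernels: SOBEL_X responds to horizontal intensity changes
# (vertical edges), SOBEL_Y to vertical changes (horizontal edges).
SOBEL_X = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=float)
SOBEL_Y = SOBEL_X.T

def filter3x3(image, kernel):
    """Correlate `image` with a 3x3 kernel, replicating edge pixels at the border."""
    padded = np.pad(image.astype(float), 1, mode="edge")
    h, w = image.shape
    out = np.zeros((h, w))
    for i in range(3):
        for j in range(3):
            out += kernel[i, j] * padded[i:i + h, j:j + w]
    return out

def sobel_magnitude(image):
    """Gradient magnitude built from the horizontal and vertical Sobel responses."""
    gx = filter3x3(image, SOBEL_X)
    gy = filter3x3(image, SOBEL_Y)
    return np.hypot(gx, gy)
```

The resulting edge map can be passed to the same alignment routine in place of the raw channel, so the SSD compares edge locations instead of brightness values.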

In addition to the alignment methods mentioned above, we also implemented contrast stretching on each color channel to improve the perceived quality of the image. As mentioned on the course website, the safest method is to rescale the image such that the darkest pixel has a value of 0 and the lightest pixel has a value of 1. However, since this produced little visible change in image quality, we instead rescaled the image such that the darkest 1% of pixels became 0 and the lightest 1% of pixels became 1. We considered only internal pixels when calculating the 1st and 99th percentiles of pixel values.
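A percentile-based stretch along these lines could be written as below. This is a sketch under assumptions: the function name, the `border` crop fraction, and the handling of a flat channel are illustrative choices, and pixel values are taken to be floats in the range 0 to 1.

```python
import numpy as np

def stretch_contrast(channel, low_pct=1.0, high_pct=99.0, border=0.1):
    """Rescale so the low percentile maps to 0 and the high percentile maps to 1.

    The percentiles are measured on the internal pixels only, but the
    rescaling is applied to the whole channel.
    """
    h, w = channel.shape
    by, bx = int(h * border), int(w * border)
    inner = channel[by:h - by, bx:w - bx]
    lo, hi = np.percentile(inner, [low_pct, high_pct])
    if hi <= lo:
        # Flat channel: nothing to stretch, return it unchanged.
        return channel.astype(float)
    # Values beyond the two percentiles are clipped to 0 or 1.
    return np.clip((channel - lo) / (hi - lo), 0.0, 1.0)
```

Setting `low_pct=0` and `high_pct=100` recovers the simpler min-max rescaling, measured on the internal pixels.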

We also implemented automatic white balance using a gray-world assumption, in which we assume that the average color of the image is gray. To do so, we calculated the mean value of each color channel to get an average r, g, and b, found the maximum of those averages, and scaled up the other two channels so that the mean values of all the channels were equal. For example, if the green channel had the highest mean value, we would multiply the red channel by g/r and the blue channel by g/b. As with contrast stretching, we considered only internal pixels when calculating the mean value for each channel.
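The gray-world scaling can be sketched as follows. The function name, the `border` crop fraction, and the final clipping to the range 0 to 1 are assumptions added for the example; the sketch also assumes a float image of shape (height, width, 3) in which no channel has a mean of zero.

```python
import numpy as np

def gray_world_balance(rgb, border=0.1):
    """Scale each channel so that all three internal-pixel means match the largest one."""
    h, w, _ = rgb.shape
    by, bx = int(h * border), int(w * border)
    # Mean r, g, b over the internal pixels only.
    means = rgb[by:h - by, bx:w - bx].reshape(-1, 3).mean(axis=0)
    # The channel with the highest mean gets a gain of 1; the others are
    # scaled up, e.g. by g/r and g/b when green has the highest mean.
    gains = means.max() / means
    # Scaling up can push bright pixels past 1, so clip (an assumed choice).
    return np.clip(rgb * gains, 0.0, 1.0)
```

Because the gains are never below 1, this version only brightens channels, which is why some form of clipping or later rescaling is needed.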

From left to right: original example image, image with white balancing, image with contrast stretching, image with both white balancing and contrast stretching

| Image | Green | Blue |
|---|---|---|
| Cathedral | [5, 2] | [12, 3] |
| Monastery | [-3, 2] | [3, 2] |
| Nativity | [3, 1] | [7, 1] |
| Settlers | [7, 0] | [14, -1] |
| Emir | [48, 24] | [106, 40] |
| Harvesters | [60, 16] | [124, 12] |
| Icon | [40, 16] | [88, 22] |
| Lady | [48, 8] | [112, 12] |
| Self Portrait | [78, 28] | [176, 36] |
| Three Generations | [52, 12] | [112, 10] |
| Train | [42, 4] | [86, 32] |
| Turkmen | [56, 20] | [116, 28] |
| Village | [64, 12] | [136, 20] |
| Cathedral (Inside) | [32, -6] | [94, -26] |
| Glass | [20, 16] | [60, 16] |
| River | [40, 4] | [152, 8] |
| Tower | [56, 12] | [106, 24] |