CS 194-26 Project 1: Images of the Russian Empire

Name: Andrew Oeung

Instructional Account: cs194-26-adz

Objective:

This project automatically aligns three color channels to produce an RGB color image. Any preprocessing or postprocessing technique is allowed, as long as it fits within the time constraints. The three color channels are separated into three numpy arrays, and I use NCC, an image pyramid, Sobel edge detection, and automatic white border cropping to achieve this goal.

Single-Scale:

There are two intensity-based metrics that aid image alignment: Sum of Squared Differences (SSD) and Normalized Cross-Correlation (NCC). I shift the red and green channels against the blue channel over a range of +/- 15 pixels and take the shift with the smallest SSD value or the highest NCC value as the "best fit." The SSD is fairly straightforward: it squares the pixel-wise differences between two channels and sums them. The NCC normalizes both channels and then computes the dot product between them. A higher dot product signifies more similar intensities between the two channels.
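The single-scale search described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the function names (`ssd`, `ncc`, `align_single_scale`) are hypothetical, the shift is applied with a circular `np.roll`, and the NCC here subtracts the mean before normalizing, which is one common variant and an assumption on my part.

```python
import numpy as np

def ssd(a, b):
    """Sum of squared differences; lower means more similar."""
    return np.sum((a - b) ** 2)

def ncc(a, b):
    """Normalized cross-correlation; higher means more similar.
    Mean subtraction is an assumption, not stated in the write-up."""
    a = a - a.mean()
    b = b - b.mean()
    # Small epsilon guards against division by zero on flat images.
    return np.sum(a * b) / (np.linalg.norm(a) * np.linalg.norm(b) + 1e-12)

def align_single_scale(channel, reference, radius=15, metric="ncc"):
    """Try every (dy, dx) in [-radius, radius] and return the best shift."""
    best_score, best_shift = -np.inf, (0, 0)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            shifted = np.roll(channel, (dy, dx), axis=(0, 1))
            if metric == "ncc":
                score = ncc(shifted, reference)
            else:
                # Negate SSD so that "higher is better" holds for both metrics.
                score = -ssd(shifted, reference)
            if score > best_score:
                best_score, best_shift = score, (dy, dx)
    return best_shift
```

Under these assumptions, calling `align_single_scale(green, blue)` would return the `(dy, dx)` shift to apply to the green channel with `np.roll` before stacking it with blue.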

Multi-Scale:

The image pyramid is primarily used for the .tif files, which are far larger than the .jpg files. The image pyramid recursively resizes the image and does a naive scan within a certain range on the smaller, resized image. Once the corresponding shift values are returned to a higher level of recursion, I keep track of the cumulative shift offset and update my recursion parameters accordingly. Tinkering with the number of recursion levels also changes the accuracy of the alignment. Right now, my algorithm takes around 50 seconds per .tif file, so making the search any more fine-grained would cause it to exceed the time constraint. Optimizations I could probably have made include switching to an iterative approach or reducing the number of shifts tested.
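A coarse-to-fine recursion of this kind might look like the sketch below. It is only an illustration under stated assumptions: the names (`downsample2`, `align_pyramid`, `_search`), the factor-of-2 block-average downsampling, and the parameters `min_size`, `radius`, and `coarse_radius` are all hypothetical and not taken from the project's code.

```python
import numpy as np

def _ncc(a, b):
    a = a - a.mean()
    b = b - b.mean()
    return np.sum(a * b) / (np.linalg.norm(a) * np.linalg.norm(b) + 1e-12)

def _search(channel, reference, center, radius):
    """Naive scan of shifts within `radius` of `center`; returns the best one."""
    cy, cx = center
    best_score, best_shift = -np.inf, center
    for dy in range(cy - radius, cy + radius + 1):
        for dx in range(cx - radius, cx + radius + 1):
            score = _ncc(np.roll(channel, (dy, dx), axis=(0, 1)), reference)
            if score > best_score:
                best_score, best_shift = score, (dy, dx)
    return best_shift

def downsample2(img):
    """Halve the resolution by averaging 2x2 blocks (assumed resize method)."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    return img[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def align_pyramid(channel, reference, min_size=64, radius=2, coarse_radius=15):
    # Base case: the image is small enough for a wide exhaustive scan.
    if min(channel.shape) <= min_size:
        return _search(channel, reference, (0, 0), coarse_radius)
    # Recurse on the half-resolution images first.
    coarse = align_pyramid(downsample2(channel), downsample2(reference),
                           min_size, radius, coarse_radius)
    # A shift of s pixels at half resolution is about 2*s at this level,
    # so refine only within a small window around the scaled-up estimate.
    center = (2 * coarse[0], 2 * coarse[1])
    return _search(channel, reference, center, radius)
```

The design choice here is that only the coarsest level pays for a wide scan; every finer level tests a small window, which is what keeps the cost manageable on large .tif scans.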

Results:

Here are all of my image results, along with their corresponding offsets and the CPU time measured by %%time.

cathedral G: [5, 2] R: [12, 3] CPU times: user 6.09 s, sys: 1.46 s, total: 7.55 s |

emir G: [49, 24] R: [105, 41] CPU times: user 40.4 s, sys: 10.1 s, total: 50.5 s |

harvesters G: [56, 11] R: [118, 10] CPU times: user 39.3 s, sys: 9.15 s, total: 48.5 s |

icon G: [39, 16] R: [88, 23] CPU times: user 40.5 s, sys: 8.89 s, total: 49.4 s |

lady G: [57, 9] R: [121, 13] CPU times: user 39.4 s, sys: 8.45 s, total: 47.8 s |

monastery G: [-3, 2] R: [3, 2] CPU times: user 4.56 s, sys: 116 ms, total: 4.68 s |

nativity G: [3, 1] R: [8, 0] CPU times: user 6.49 s, sys: 1.64 s, total: 8.13 s |

self_portrait G: [74, 25] R: [175, 37] CPU times: user 41.9 s, sys: 9.68 s, total: 51.6 s |

settlers G: [7, 0] R: [14, -1] CPU times: user 4.31 s, sys: 87.4 ms, total: 4.4 s |

three_generations G: [59, 16] R: [115, 12] CPU times: user 41.6 s, sys: 8.92 s, total: 50.5 s |

train G: [53, 5] R: [84, 28] CPU times: user 38.8 s, sys: 8.17 s, total: 47 s |

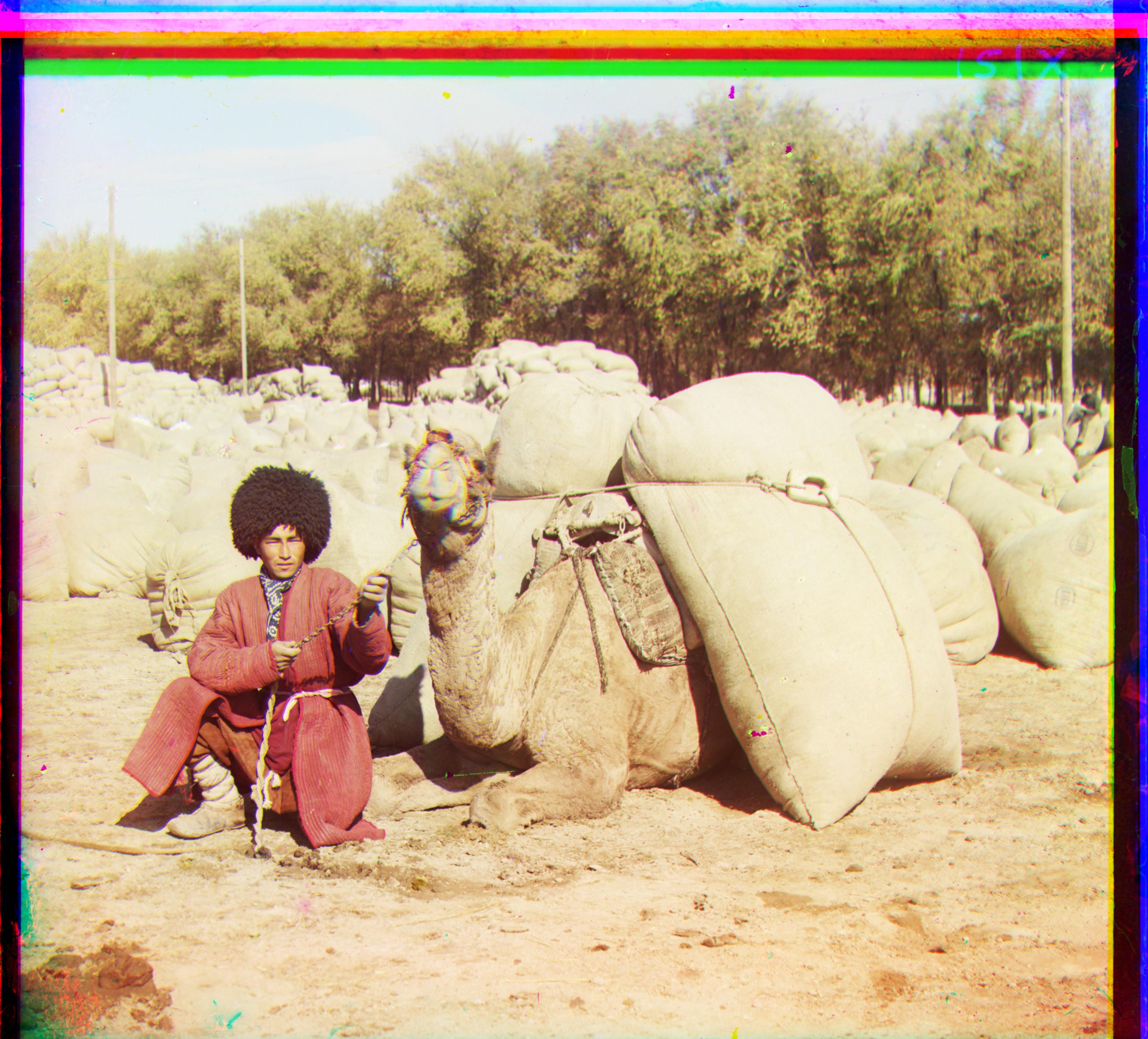

turkmen G: [59, 21] R: [117, 29] CPU times: user 40.8 s, sys: 8.66 s, total: 49.4 s |

village G: [65, 11] R: [138, 22] CPU times: user 43 s, sys: 9.94 s, total: 52.9 s |

Bells and Whistles

Automatic White Border Cropping:

All of the images have white borders on their left and right edges. After aligning the color channels to create the image, I converted it to grayscale via a dot product and summed each column, removing columns at the edges whose sums were above a certain threshold value. Since the leftmost and rightmost groups of columns are primarily white, they have high column sums.
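As a rough sketch of the cropping step, assuming an RGB float image with values in [0, 1]: the function name `crop_white_columns`, the luma weights used for the grayscale dot product, and the threshold of 0.9 are all assumptions for illustration. The column sums are divided by the image height here so that the threshold does not depend on image size.

```python
import numpy as np

def crop_white_columns(rgb, threshold=0.9):
    """Remove near-white columns from the left and right edges of an image."""
    # Grayscale via a dot product with (assumed) Rec. 601 luma weights.
    gray = rgb @ np.array([0.299, 0.587, 0.114])
    # Column sums, normalized by height so that values stay in [0, 1].
    col_score = gray.sum(axis=0) / gray.shape[0]

    width = col_score.shape[0]
    left = 0
    while left < width and col_score[left] > threshold:
        left += 1
    right = width
    while right > left and col_score[right - 1] > threshold:
        right -= 1
    # Only contiguous bright columns at the two edges are removed;
    # bright columns in the interior of the image are left alone.
    return rgb[:, left:right]
```

Scanning inward from each edge and stopping at the first column below the threshold avoids cutting into bright regions, such as sky, in the middle of the photograph.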

before_emir G: [-4, 7] R: [106, 17] CPU times: user 37.9 s, sys: 8.8 s, total: 46.7 s

emir G: [49, 24] R: [105, 41] CPU times: user 40.4 s, sys: 10.1 s, total: 50.5 s
