Project Outline

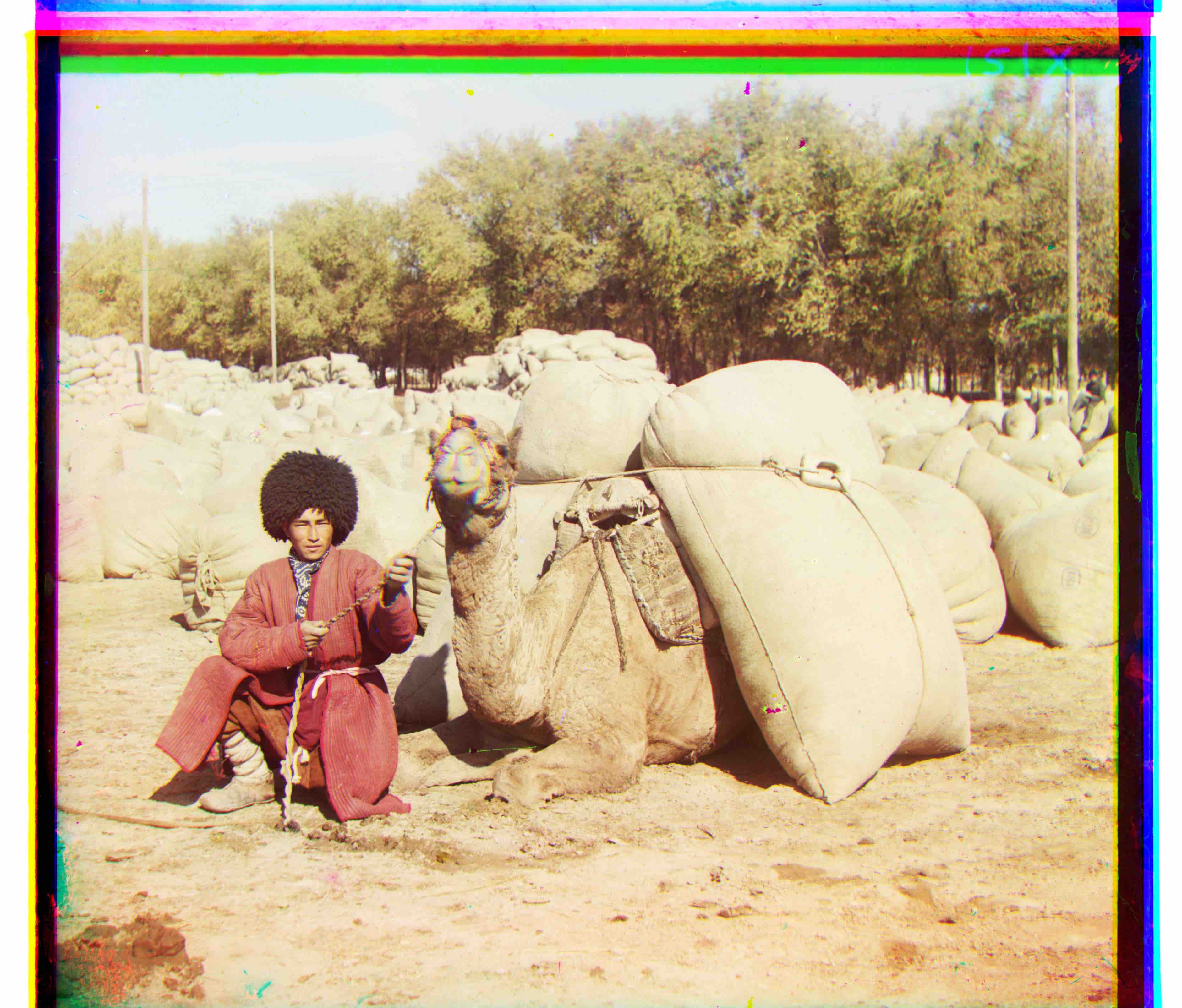

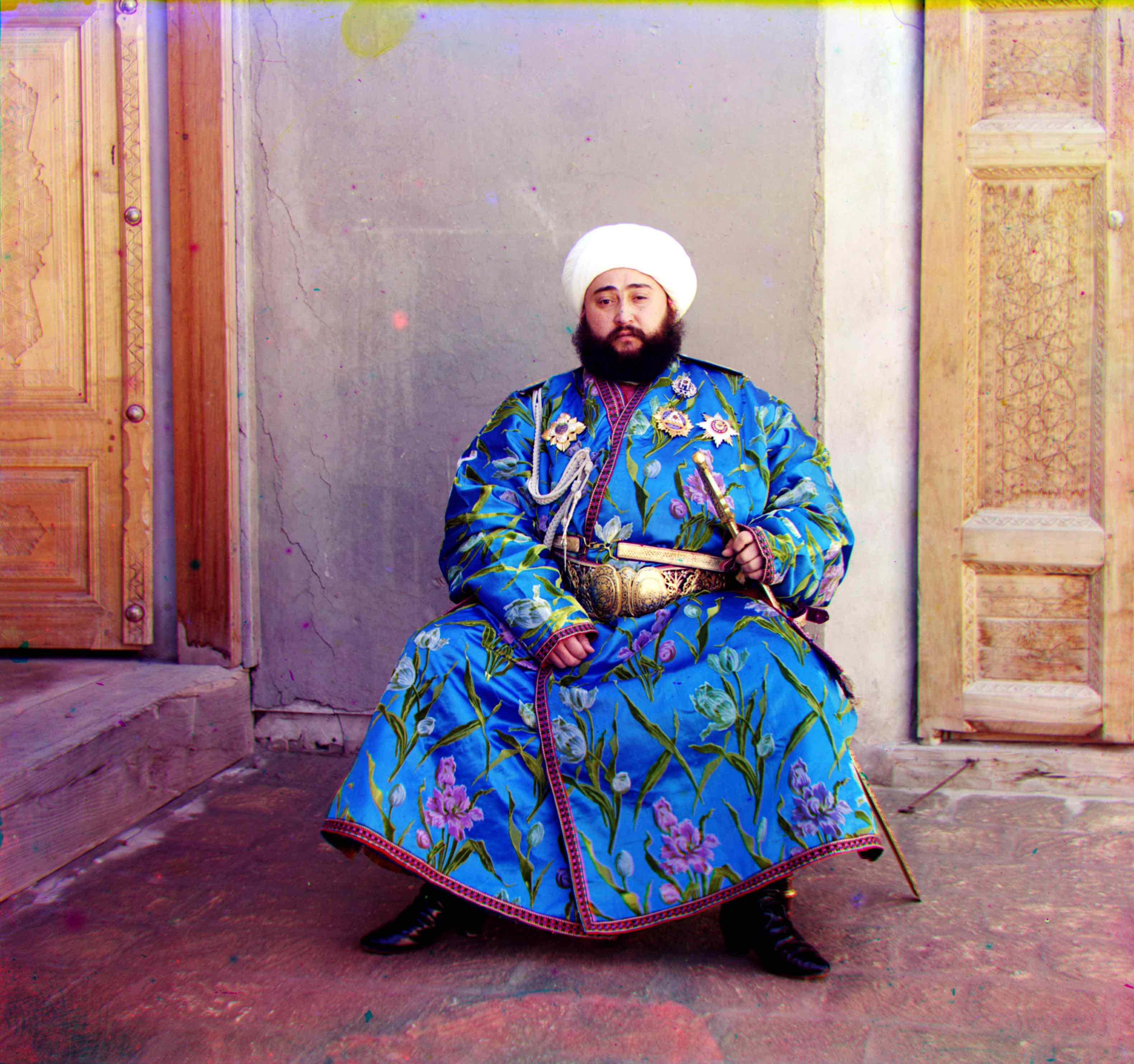

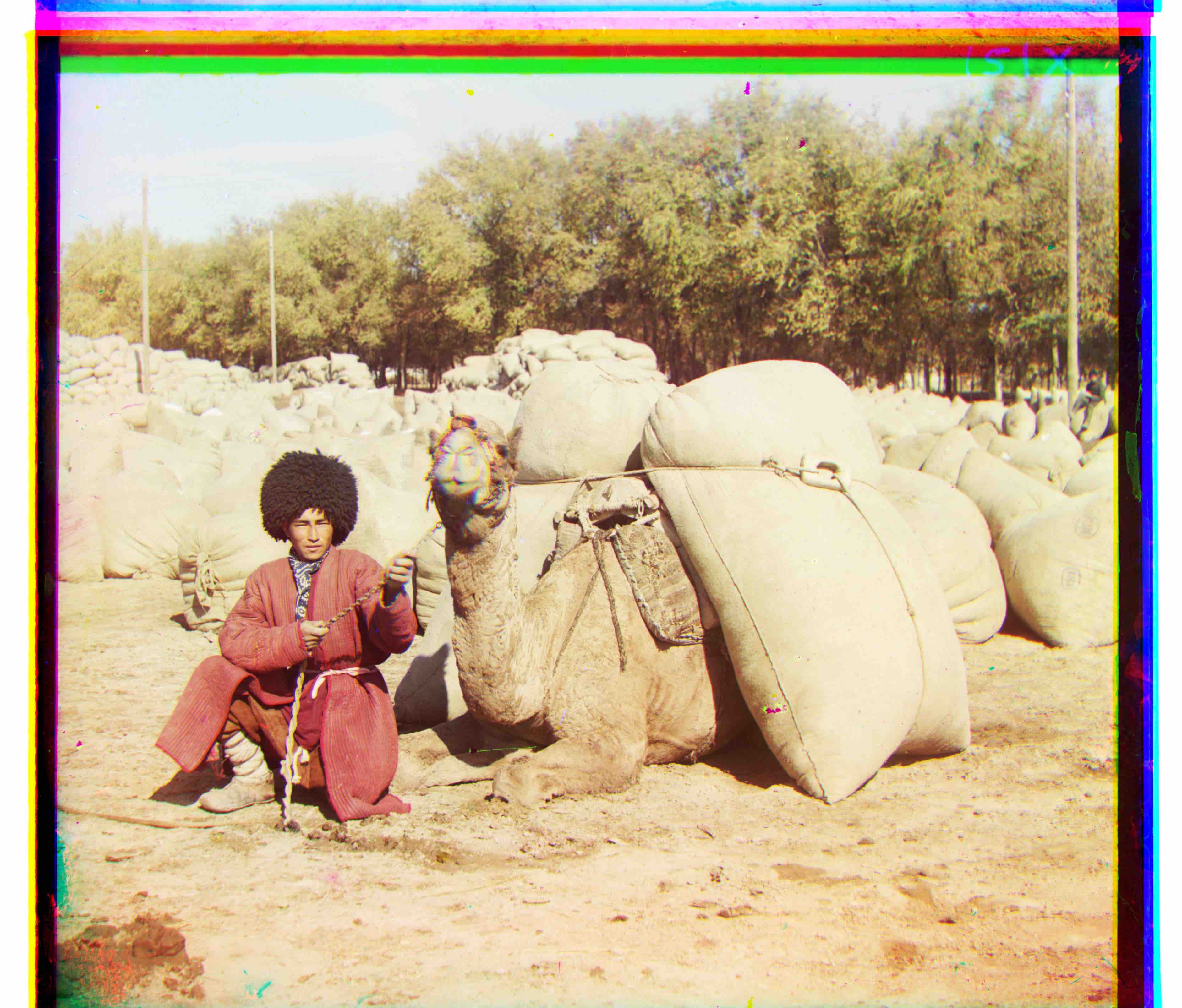

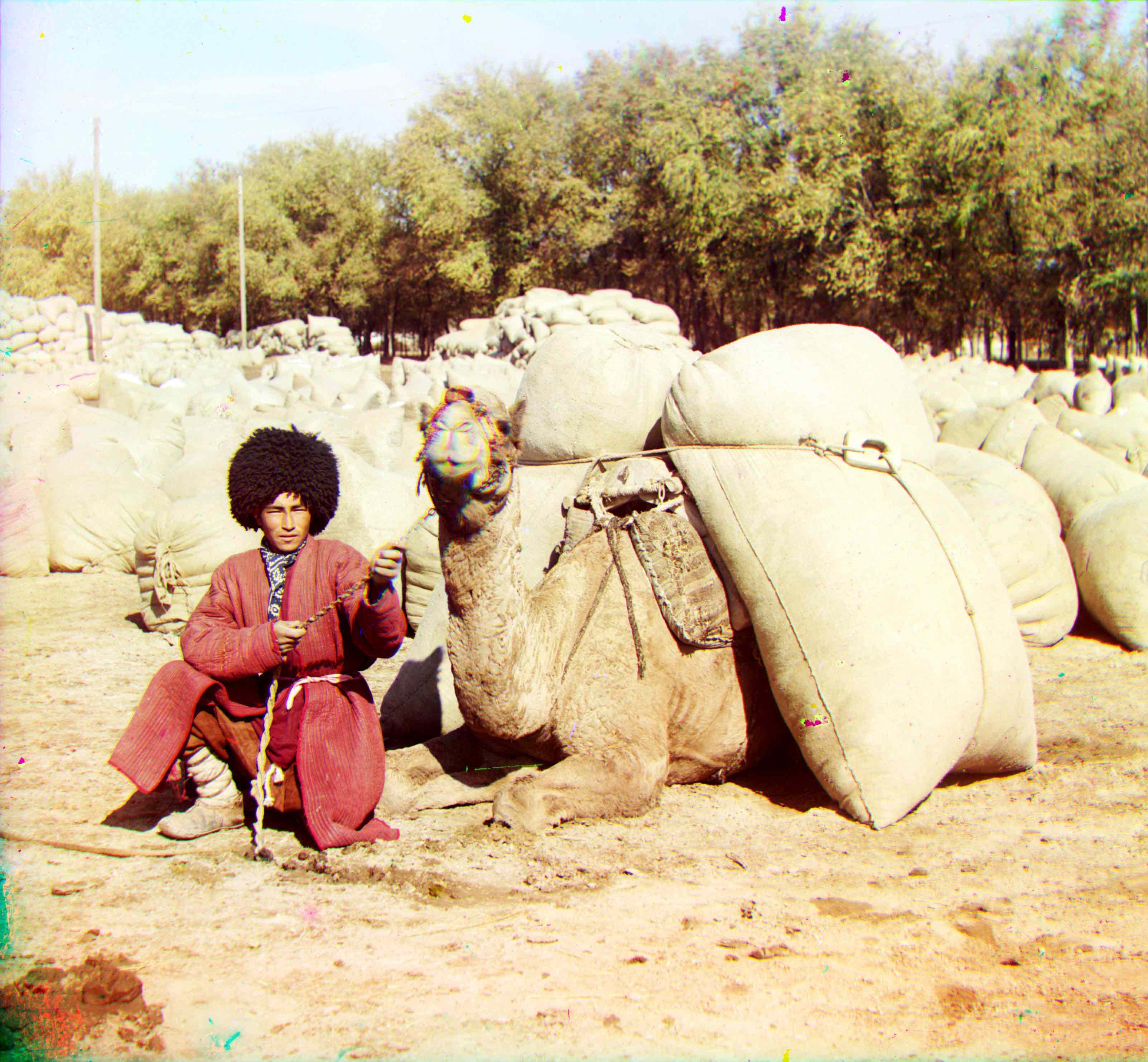

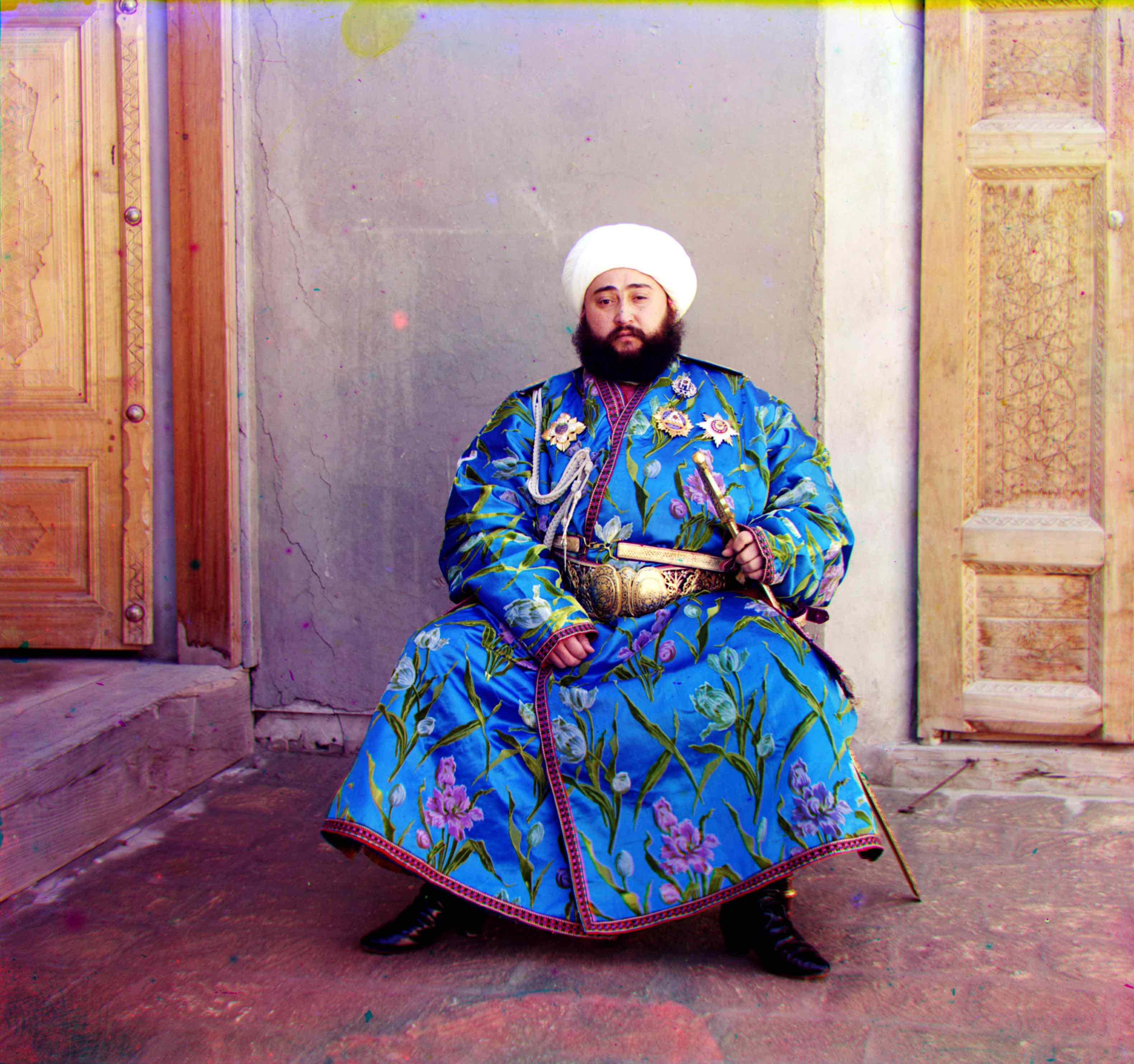

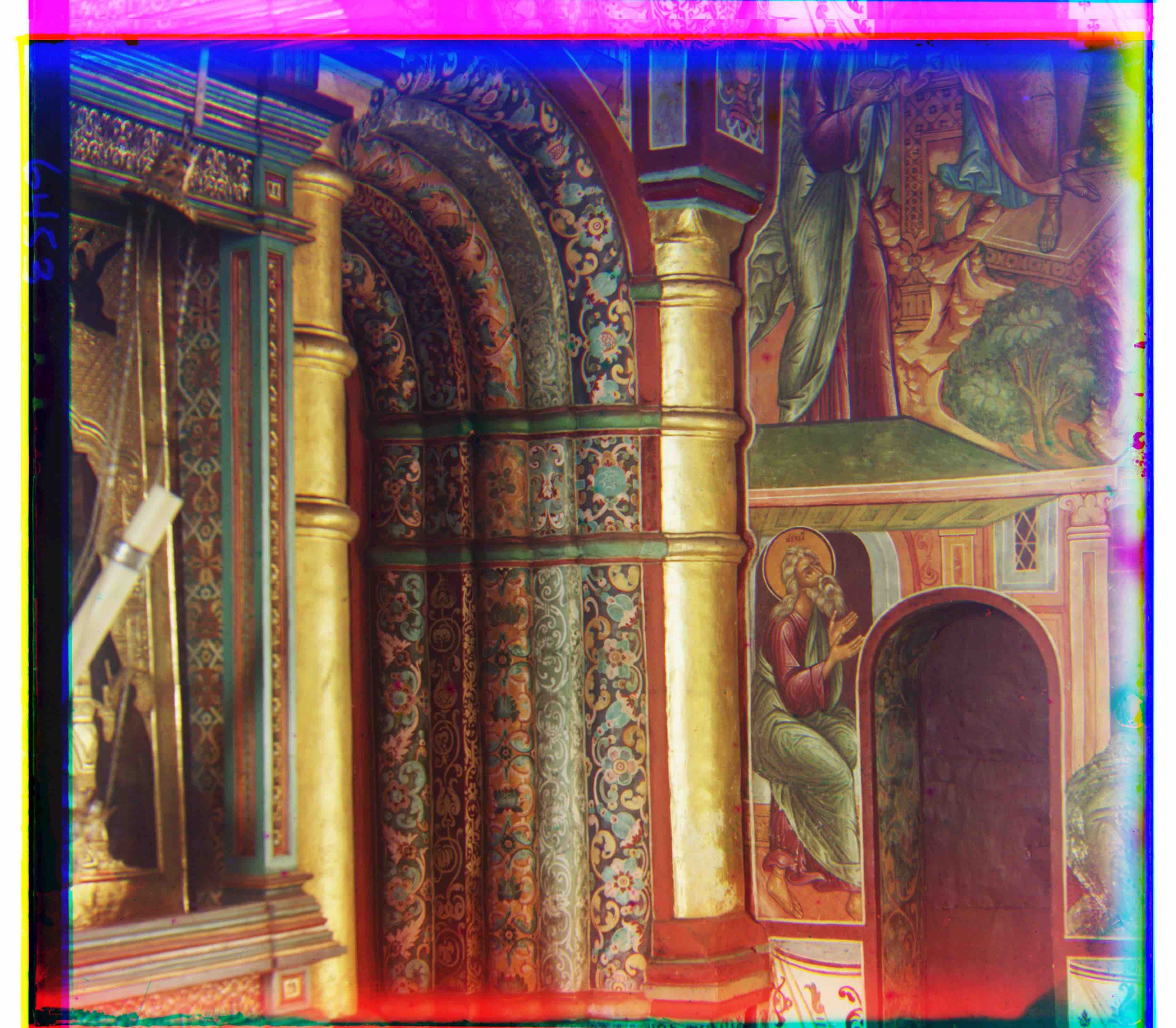

In the early 1900s Sergei Mikhailovich Prokudin-Gorskii travelled the Russian Empire taking color photographs of everything he saw. He did so by recording three exposures of the same scene onto a glass plate using red, green and blue filters.

In this assignment our goal was to take the three filtered exposures and combine them to produce one colored image. To do this we had to align the three exposures.

Basic Approach

The basic approach I used to align the images was:

- Use one of the filtered exposures as the base image: blue.

- Take the remaining filtered exposures, try every possible pixel shift within a search window, and compute a metric of how well each shifted image aligns with the base.

- Take the shift that gives the best metric score for each exposure (lowest SSD or highest NCC) and overlay the shifted exposures as the r, g and b channels to get the final colorized image.
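The steps above can be sketched roughly as follows. This is a minimal illustration rather than the code used for the project: the function names `align_exhaustive` and `colorize`, the default search radius, the use of `np.roll` (which wraps pixels around the border), and the negative-SSD default score are all assumptions made for the example.

```python
import numpy as np

def align_exhaustive(channel, base, radius=15, score=None):
    """Try every (dy, dx) shift in [-radius, radius] and return the best one.

    `score` is any function where a higher value means a better match.
    """
    if score is None:
        # Assumed default: negative SSD, so that "higher is better".
        score = lambda a, b: -np.sum((a - b) ** 2)
    best_shift, best_score = (0, 0), -np.inf
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            # np.roll wraps around the border; a real image would need cropping.
            shifted = np.roll(channel, (dy, dx), axis=(0, 1))
            s = score(shifted, base)
            if s > best_score:
                best_shift, best_score = (dy, dx), s
    return best_shift

def colorize(r, g, b, radius=15):
    """Align r and g to the blue base and stack them as an RGB image."""
    r_aligned = np.roll(r, align_exhaustive(r, b, radius), axis=(0, 1))
    g_aligned = np.roll(g, align_exhaustive(g, b, radius), axis=(0, 1))
    return np.dstack([r_aligned, g_aligned, b])
```

The cost grows with the square of the search radius, which is why this is only practical for small images.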

10 percent of the image borders were cropped prior to computing the metrics. I tried two metrics:

- SSD: the sum of squared differences of the reference and shifted image: sum(sum((image1-image2)^2)).

- NCC: the normalized cross correlation of the reference and shifted image: a dot product of the normalized image values.

I found NCC to work better in finding the optimal alignment for the images.
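For reference, the two metrics and the border crop might look like the sketch below. The helper names (`crop_border`, `ssd`, `ncc`) are hypothetical, and the code assumes that "10 percent" means 10 percent trimmed from each side and that "normalized" means dividing each image by its Euclidean norm; neither detail is spelled out above.

```python
import numpy as np

def crop_border(img, frac=0.10):
    """Drop `frac` of the height/width from each side (assumed interpretation)."""
    h, w = img.shape
    dh, dw = int(h * frac), int(w * frac)
    return img[dh:h - dh, dw:w - dw]

def ssd(a, b):
    """Sum of squared differences: lower means a better match."""
    return float(np.sum((a - b) ** 2))

def ncc(a, b):
    """Dot product of the two images after scaling each to unit norm.

    Higher means a better match; identical images give 1.0.
    """
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    if denom == 0:
        return 0.0  # at least one image is all zeros; no meaningful score
    return float(np.sum(a * b) / denom)
```

Because NCC divides out the overall brightness of each exposure, it is less sensitive than SSD to the channels having different intensity levels, which may be part of why it worked better here.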

This technique works well for smaller images, where the optimal result can be found by scanning over a small window. However, for larger images the search window needed to find the optimal alignment can be quite large, which makes this technique computationally inefficient. To align larger images we use the "Image Pyramid" technique.

Image Pyramid

In this technique we run the exhaustive alignment search at several levels of resolution. We start at the deepest level, where the image is rescaled to be very small: 1/(2^layers) of the original size. Here we do an exhaustive search over a larger window: 40 by 40 pixels in size. We then shift the original picture by the appropriate number of pixels (the shift found at this level, scaled back up to full resolution) and move one layer up. There we rescale the shifted original image to 1/(2^(layers-1)) of the original size and repeat the exhaustive search. The large 40 by 40 pixel search is only needed at the bottommost layer; on higher layers we perform a smaller 4 by 4 pixel search. We continue this process for all layers to align the different filtered exposures. The aligned exposures are then overlaid to get the final colorized image.
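A coarse-to-fine search along these lines could be sketched as below. The names `downscale2`, `search` and `align_pyramid` are made up for the example, 2x2 block averaging stands in for whatever rescaling routine was actually used, SSD is used as the metric for brevity, and running a final pass at full resolution (level 0) is an assumption.

```python
import numpy as np

def downscale2(img):
    """Halve each dimension by averaging 2x2 blocks (stand-in for a rescale)."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    return img[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def search(channel, base, radius):
    """Exhaustive SSD search over shifts in [-radius, radius]."""
    best, best_err = (0, 0), np.inf
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            err = np.sum((np.roll(channel, (dy, dx), axis=(0, 1)) - base) ** 2)
            if err < best_err:
                best, best_err = (dy, dx), err
    return best

def align_pyramid(channel, base, layers=3, coarse_radius=20, fine_radius=2):
    """Return the full-resolution (dy, dx) shift that aligns channel to base."""
    total_dy, total_dx = 0, 0
    for level in range(layers, -1, -1):
        # Apply the shift found so far to the full-resolution channel,
        # then shrink both images by a factor of 2**level.
        small_c = np.roll(channel, (total_dy, total_dx), axis=(0, 1))
        small_b = base
        for _ in range(level):
            small_c, small_b = downscale2(small_c), downscale2(small_b)
        # Wide window only at the coarsest level, narrow refinement above it.
        radius = coarse_radius if level == layers else fine_radius
        dy, dx = search(small_c, small_b, radius)
        # One pixel at this level is 2**level pixels at full resolution.
        total_dy += dy * 2 ** level
        total_dx += dx * 2 ** level
    return total_dy, total_dx
```

A radius of 20 corresponds to the 40 by 40 window and a radius of 2 to the 4 by 4 window described above.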

All example images were aligned fairly well using the image pyramid approach.

Bells and Whistles

Edge Detection

By comparing the edges within each image we can improve the alignment of the images. To use edge detection we apply the same image pyramid technique; however, at each layer, before doing the exhaustive search, we run Sobel edge detection (which convolves a Sobel filter with the image) on the rescaled images. This gives us a black and white image composed only of edges, which we use for the exhaustive search.
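To make the edge step concrete, here is a small NumPy-only sketch of a Sobel gradient-magnitude filter. The function name `sobel_edges` and the edge-replicating padding are assumptions for illustration; a library routine would normally be used instead.

```python
import numpy as np

def sobel_edges(img):
    """Gradient magnitude using the 3x3 Sobel kernels.

    The kernels are applied as a correlation; flipping them for a true
    convolution only changes the sign, which the magnitude discards.
    """
    p = np.pad(img.astype(float), 1, mode="edge")  # replicate border pixels
    # Horizontal gradient, kernel [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]].
    gx = (p[:-2, 2:] + 2 * p[1:-1, 2:] + p[2:, 2:]
          - p[:-2, :-2] - 2 * p[1:-1, :-2] - p[2:, :-2])
    # Vertical gradient, kernel [[-1, -2, -1], [0, 0, 0], [1, 2, 1]].
    gy = (p[2:, :-2] + 2 * p[2:, 1:-1] + p[2:, 2:]
          - p[:-2, :-2] - 2 * p[:-2, 1:-1] - p[:-2, 2:])
    return np.hypot(gx, gy)
```

In the pyramid, the edge maps of the rescaled base and the rescaled shifted exposure would be passed to the exhaustive search in place of the raw pixel values.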

Although Sobel edge detection aligns the images quite well, the resulting differences in alignment across the images are slight.

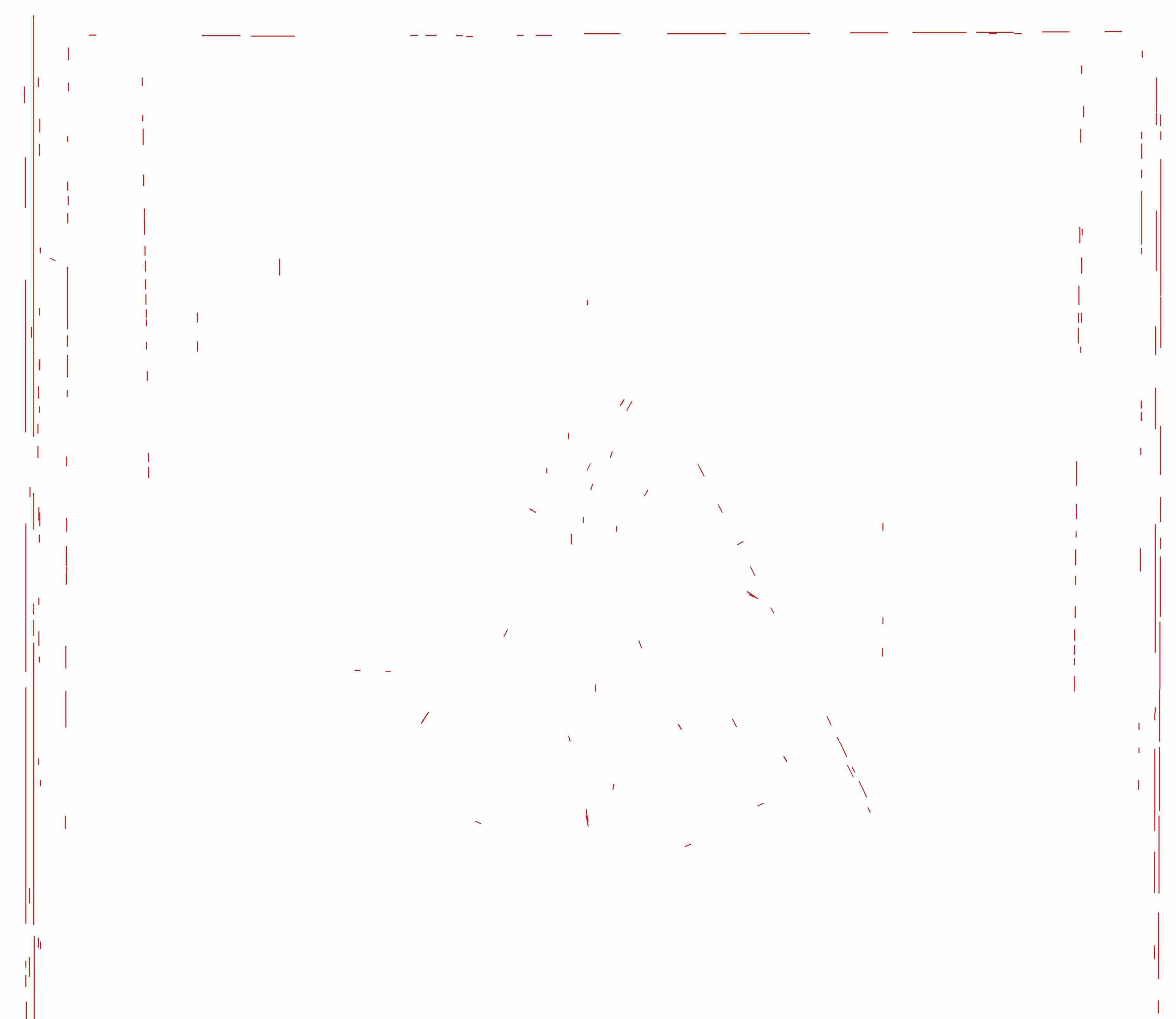

Auto Cropping

To enable auto cropping we use Canny edge detection to find the edges in the image. Then, using the Hough line transform, we get all the vertical and horizontal lines in the image. To find the borders we keep only the lines that lie within a certain percentage of pixels from the edges of the image. Only horizontal lines are considered for the top and bottom borders, and only vertical lines for the right and left borders. The most constraining lines (those that clip off the largest portion of the image) are reported back.

We repeat this process on all three channels of the image and on the final aligned image, and take the most constraining of each border: top, bottom, right and left. These borders are then used to crop the image.
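The border-selection logic can be illustrated without the detection step. The sketch below assumes the Canny and Hough stages have already produced the row positions of horizontal lines and the column positions of vertical lines; the function names `pick_borders` and `combine_borders` and the 10 percent margin are hypothetical.

```python
def pick_borders(horizontal_ys, vertical_xs, height, width, margin=0.10):
    """Pick the most constraining line near each side of one image.

    Only lines within `margin` of a side are candidates. If no line is
    found near a side, that side is left uncropped.
    """
    top = max((y for y in horizontal_ys if y <= margin * height), default=0)
    bottom = min((y for y in horizontal_ys if y >= (1 - margin) * height),
                 default=height)
    left = max((x for x in vertical_xs if x <= margin * width), default=0)
    right = min((x for x in vertical_xs if x >= (1 - margin) * width),
                default=width)
    return top, bottom, left, right

def combine_borders(boxes):
    """Take the most constraining border across several (t, b, l, r) results."""
    tops, bottoms, lefts, rights = zip(*boxes)
    return max(tops), min(bottoms), max(lefts), min(rights)
```

With the combined result `(top, bottom, left, right)`, the crop is the slice `image[top:bottom, left:right]`.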

Auto Contrasting

To increase contrast in the image we rescaled the pixel values by a scaling factor chosen through trial and error and visual inspection. This increased the variance of the pixel colors in the image. We then recentered the image to have the same mean as before and clipped values that were above a certain threshold.
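A sketch of this contrast adjustment is shown below. The factor of 1.5 is only a placeholder since the tuned value is not given here, and the code assumes floating-point pixel values in [0, 1] and clips at both ends of that range, which goes slightly beyond the upper-threshold clipping described above.

```python
import numpy as np

def auto_contrast(img, factor=1.5, low=0.0, high=1.0):
    """Scale pixel values, restore the original mean, then clip."""
    scaled = img * factor                              # spreads values apart
    recentered = scaled - scaled.mean() + img.mean()   # same mean as before
    return np.clip(recentered, low, high)              # keep values in range
```

Scaling by `factor` multiplies the standard deviation by the same amount, so any factor above 1 increases contrast; clipping can shift the mean slightly when many pixels saturate.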

Example Images

Extra Images

Website template inspired by: https://inst.eecs.berkeley.edu/~cs194-26/fa17/upload/files/proj1/cs194-26-aab/website/