The project description stated that the naive approach is to search a [-15, 15] pixel window of displacements by shifting one image while keeping another stationary. For each shifted version of a color channel, the SSD (sum of squared differences) with the stationary channel is calculated, and the shift with the smallest SSD is the one to use. I decided to use the blue channel as the stationary one and move the red and green channels. However, I made a silly mistake. Instead of evaluating every possible (vertical, horizontal) displacement pair, I minimized each direction separately, simply taking the best vertical shift and the best horizontal shift on their own. What made this hard to notice was that it worked for three of the four images I tested.
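A minimal sketch of the exhaustive search, assuming NumPy, single-channel float images of equal size, and `np.roll` for shifting. The function names are hypothetical, and `np.roll` wraps pixels around the border (cropping the borders before scoring is a common refinement not shown here). The two nested loops score every (dy, dx) pair; minimizing each direction on its own, as described above, only looks at shifts along the two axes of the window.

```python
import numpy as np

def ssd(a, b):
    """Sum of squared differences between two equally sized float arrays."""
    return np.sum((a - b) ** 2)

def align_exhaustive(moving, fixed, radius=15):
    """Try every (dy, dx) in [-radius, radius] x [-radius, radius] and return
    the shift that minimizes SSD between the shifted `moving` and `fixed`."""
    best_shift, best_score = (0, 0), np.inf
    # Two nested loops: every vertical shift is paired with every horizontal shift.
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            # np.roll shifts circularly, wrapping pixels around the border.
            shifted = np.roll(moving, (dy, dx), axis=(0, 1))
            score = ssd(shifted, fixed)
            if score < best_score:
                best_shift, best_score = (dy, dx), score
    return best_shift
```

For example, if `red = np.roll(blue, (4, -7), axis=(0, 1))`, then `align_exhaustive(red, blue)` should return `(-4, 7)`, the shift that moves red back onto blue.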

My thought was that I just needed a better way of comparing the images. Rather than using the raw pixel values, I decided to use edge detection based on Gaussian blurring. The spec did not allow high-level outside functions, but we were allowed to use resize. I knew that downscaling an image and then scaling it back up to its original size produces a blurred (roughly Gaussian) version of that image. Using this, I subtracted the downscaled-then-upscaled version of each image from the image itself to get an edge mask of the original.
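A rough NumPy-only sketch of the resize-based edge mask, assuming grayscale float images. Here `downscale` (block averaging) and `upscale` (nearest-neighbour repetition) are hypothetical stand-ins for the allowed resize call, so the blur they produce is a box blur rather than a true Gaussian; the default `factor` of 4 is an arbitrary choice.

```python
import numpy as np

def downscale(img, factor):
    """Block-average downscale (a NumPy-only stand-in for a resize call)."""
    h, w = img.shape
    h, w = h - h % factor, w - w % factor  # crop so the blocks divide evenly
    return img[:h, :w].reshape(h // factor, factor, w // factor, factor).mean(axis=(1, 3))

def upscale(img, factor, shape):
    """Nearest-neighbour upscale back to `shape`, padding any cropped rows/cols with edge values."""
    up = np.repeat(np.repeat(img, factor, axis=0), factor, axis=1)
    pad_h, pad_w = shape[0] - up.shape[0], shape[1] - up.shape[1]
    return np.pad(up, ((0, pad_h), (0, pad_w)), mode="edge")

def edge_mask(img, factor=4):
    """High-pass 'edge' image: the original minus its down-then-up blurred copy."""
    blurred = upscale(downscale(img, factor), factor, img.shape)
    return img - blurred
```

Flat regions come out as zero, while pixels next to a sharp change keep a nonzero response, which is what makes the mask useful for alignment when raw brightness differs between channels.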

This happened to work on all of the images. However, I still hadn't noticed that my displacement search itself was wrong.

Once I moved on to using an image pyramid to calculate the shifts for the larger images, I knew instantly that something was wrong. After trying to perfect my image pyramid algorithm, I realized the problem was more fundamental than that. I looked far and wide and saw that other people described using two nested loops, one for each direction. I only had one loop. Welp, time to start all over.
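A hedged sketch of a recursive image pyramid built around the two nested loops, assuming NumPy, equal-size float channels, 2x2 block averaging as the downscale step, a base-case size of 64 pixels, a ±15 search at the coarsest level, and a ±2 refinement at every finer level. All of these names and parameters are illustrative assumptions, not the original code.

```python
import numpy as np

def ssd(a, b):
    """Sum of squared differences between two equally sized float arrays."""
    return np.sum((a - b) ** 2)

def search(moving, fixed, center, radius):
    """Exhaustive SSD search over a (2*radius+1)^2 window centred on `center`."""
    best, best_score = center, np.inf
    for dy in range(center[0] - radius, center[0] + radius + 1):
        for dx in range(center[1] - radius, center[1] + radius + 1):
            score = ssd(np.roll(moving, (dy, dx), axis=(0, 1)), fixed)
            if score < best_score:
                best, best_score = (dy, dx), score
    return best

def half(img):
    """Downscale by 2 with 2x2 block averaging (stand-in for a resize call)."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    return img[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def align_pyramid(moving, fixed, min_size=64, radius=15, refine=2):
    """Estimate the shift on half-size copies, double it, then refine it
    with a small search at the current resolution."""
    if min(fixed.shape) <= min_size:
        # Coarsest level: full search over the whole window.
        return search(moving, fixed, (0, 0), radius)
    coarse = align_pyramid(half(moving), half(fixed), min_size, radius, refine)
    guess = (2 * coarse[0], 2 * coarse[1])  # one coarse pixel = two fine pixels
    return search(moving, fixed, guess, refine)
```

Because each level doubles the coarse estimate, a shift well outside ±15 at full resolution (for example 36 pixels on a 256-pixel image) is still reachable: it is found as 9 pixels at the 64-pixel level and refined on the way back up.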

I went back to my naïve approach and worked on fixing it. However, something was still wrong: I was getting the wrong numbers. I thought it could be the edge detection, so I scrapped that. Then I realized something blood-boiling: the roll and resize functions I had been using changed the range of the image from 0-1 to 0-255, so my math was inherently wrong. I fixed this and, what do you know, everything worked beautifully.
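One way to guard against a 0-1 versus 0-255 mix-up is to convert every channel to float in [0, 1] before computing SSD. A minimal sketch, assuming NumPy; the helper name `to_unit_float` is hypothetical, and raising an error for out-of-range floats is a design choice rather than something from the original code.

```python
import numpy as np

def to_unit_float(img):
    """Return a float64 copy of `img` with values in [0, 1]."""
    img = np.asarray(img)
    if np.issubdtype(img.dtype, np.integer):
        # Integer images (e.g. uint8 0-255, uint16 0-65535): divide by the dtype's max.
        return img.astype(np.float64) / np.iinfo(img.dtype).max
    out = img.astype(np.float64)
    # Float images are assumed to already be in [0, 1]; fail loudly otherwise.
    if out.min() < 0.0 or out.max() > 1.0:
        raise ValueError("float image is outside [0, 1]; was it rescaled to 0-255?")
    return out
```

Failing loudly is deliberate: if one channel were silently left at 0-255 while the other is at 0-1, the SSD would be dominated by the scale mismatch rather than by how well the channels line up.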

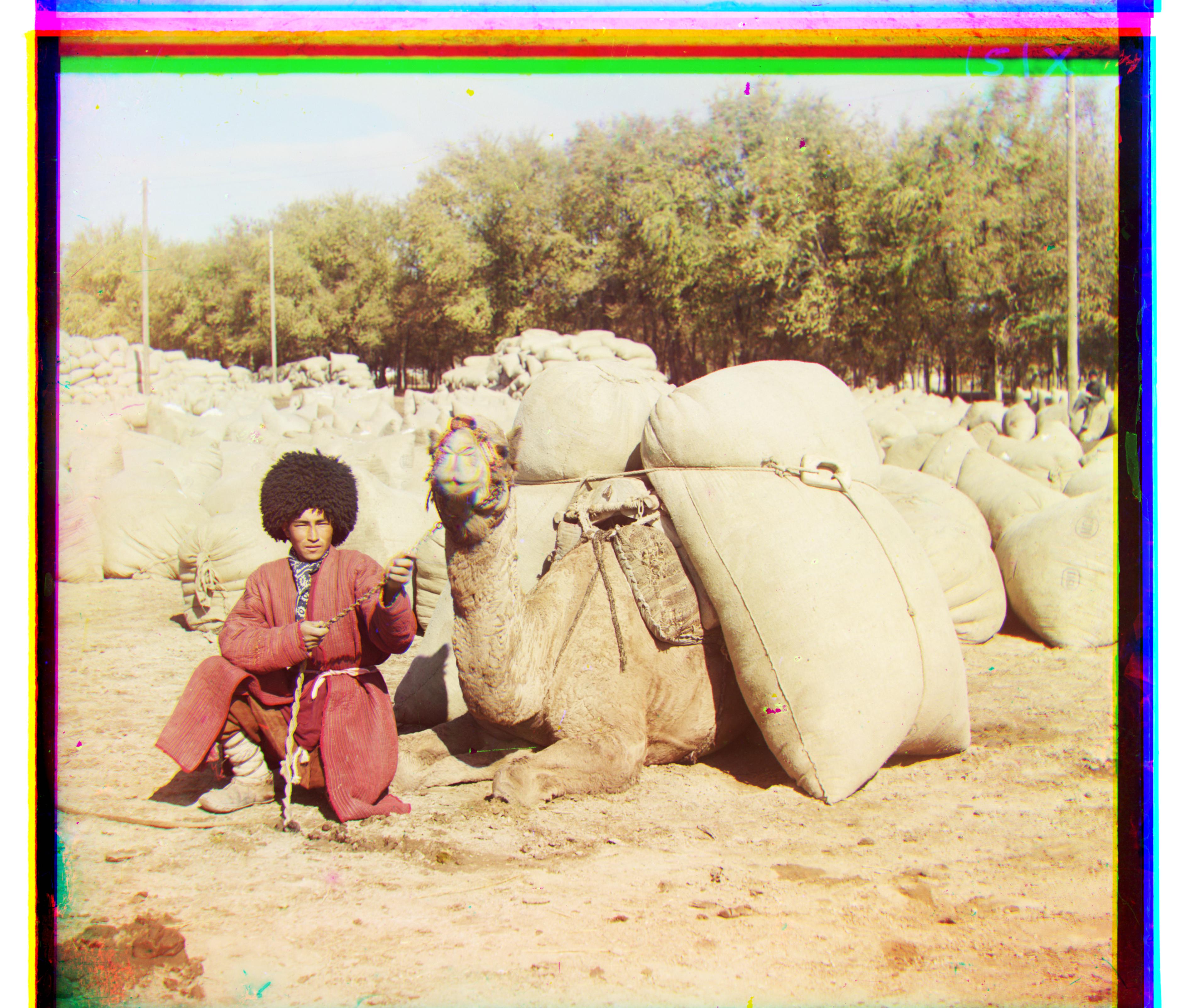

All the sample images I tried worked until I got to the emir image, where the simple SSD metric just didn't work. I heard other people had used a different color channel as their base (stationary) channel, so I tried that, but got worse results. Therefore, I decided to go back to edge detection.

Again something was wrong. I looked at my code for hours trying to figure it out and realized that I had forgotten to change my base color channel back to blue. I changed it back and, presto, it worked beautifully.

In the end, I was able to align all the images using SSD with a recursive image pyramid. For images that did not align this way, I first applied edge detection: I downscaled and then upscaled each image to create a Gaussian-like blur, and subtracted that blur from the original image.

One remaining oddity: I suspect my images might be misaligned by about a single pixel compared to other people's results, but, as the trial and error above shows, I don't have time to track it down at the moment. Even so, the images are still essentially perfectly aligned.

This page uses bettermotherfuckingwebsite as a reference