Part 1

In this part of the project, we first implement hybrid images, which look like one thing up close and something entirely different from far away. This is accomplished by combining the low-pass filtered version of one image with the high-pass filtered version of another image and averaging them. We then implement Gaussian and Laplacian stacks. A Gaussian stack is produced by passing an image through a Gaussian filter repeatedly and recording each step as it gets blurrier. A Laplacian stack takes the difference between successive levels of the Gaussian stack, which divides the image into separate frequency bands. Lastly, we implement multiresolution blending, which uses Laplacian stacks to combine two different images smoothly.
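
As an illustration of the hybrid-image step, here is a minimal NumPy sketch. It assumes two aligned, same-size grayscale float images. The names `gaussian_kernel_1d`, `gaussian_blur`, and `hybrid_image`, and the two cutoff parameters `sigma_low` and `sigma_high`, are hypothetical choices for this example, not the project's actual code.

```python
import numpy as np

def gaussian_kernel_1d(sigma):
    """1-D Gaussian kernel truncated at +/- 3 sigma and normalized to sum to 1."""
    radius = max(1, int(np.ceil(3 * sigma)))
    x = np.arange(-radius, radius + 1, dtype=float)
    k = np.exp(-(x ** 2) / (2 * sigma ** 2))
    return k / k.sum()

def gaussian_blur(img, sigma):
    """Separable Gaussian blur of a 2-D array, using edge padding at the borders."""
    k = gaussian_kernel_1d(sigma)
    r = len(k) // 2
    padded = np.pad(img, r, mode="edge")
    # Convolve every row, then every column; 'valid' mode trims the padding off again.
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)

def hybrid_image(far_img, near_img, sigma_low, sigma_high):
    """Hybrid of two aligned grayscale images of the same shape."""
    low = gaussian_blur(far_img, sigma_low)                # low-pass: dominates from far away
    high = near_img - gaussian_blur(near_img, sigma_high)  # high-pass: dominates up close
    return (low + high) / 2                                # averaged, as described above
```

Larger `sigma_low` removes more detail from the far-away image. Smaller `sigma_high` keeps only the finest edges of the close-up image.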

Part 1.1

Part 1.2

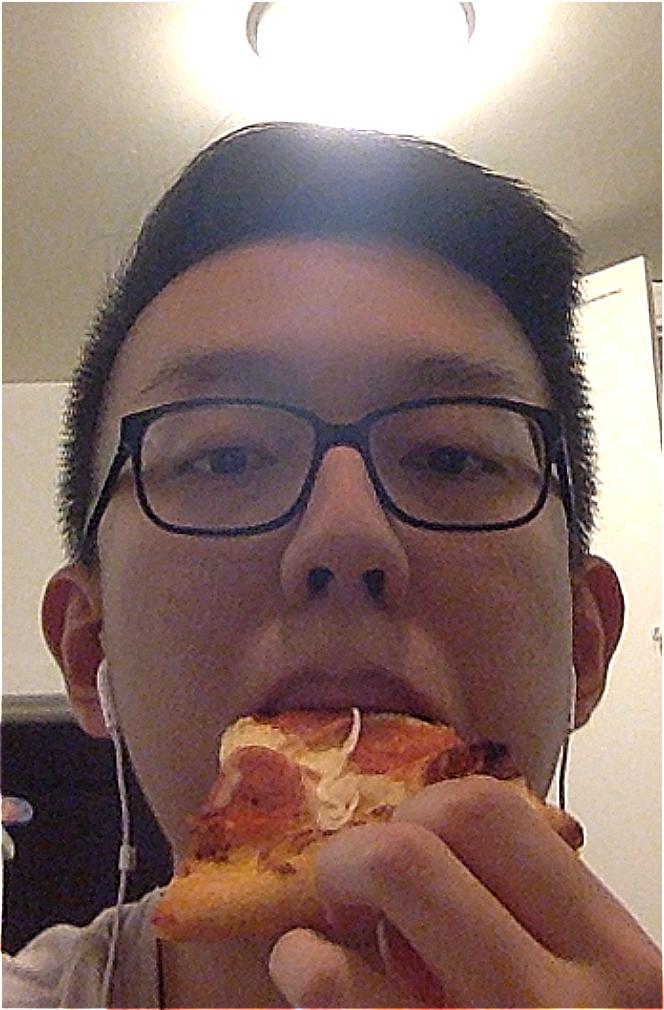

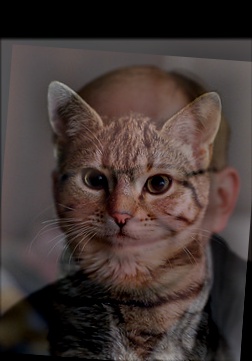

Below is a failed hybrid of a chameleon and former UC Berkeley Chancellor Nicholas Dirks. It likely failed because the chameleon's eyes are much farther apart than Dirks's, and the two images are very different sizes.

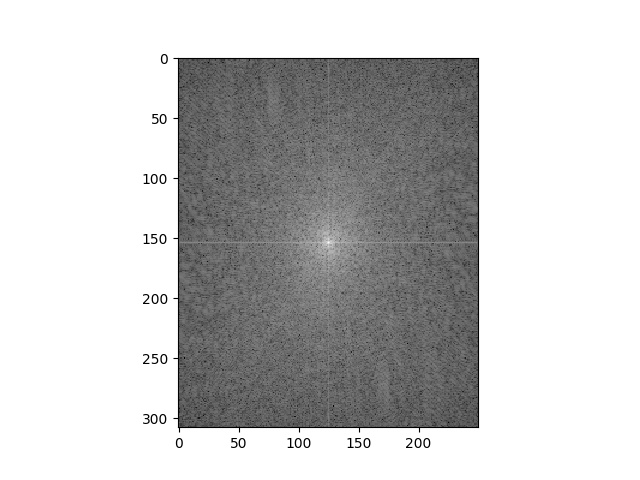

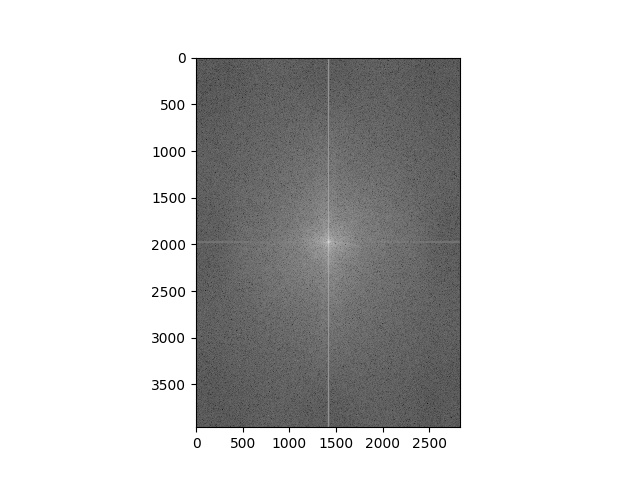

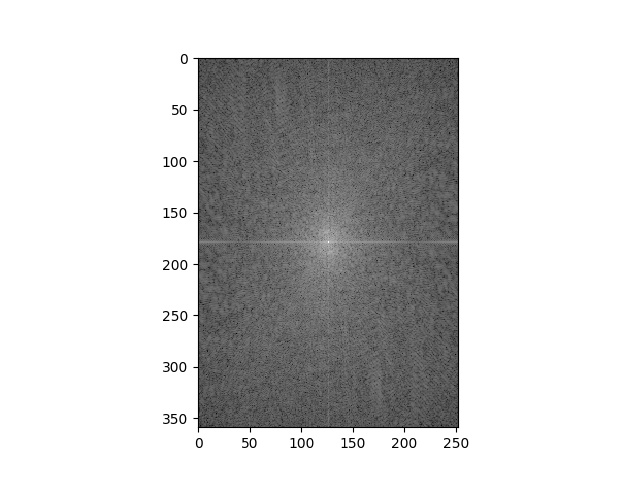

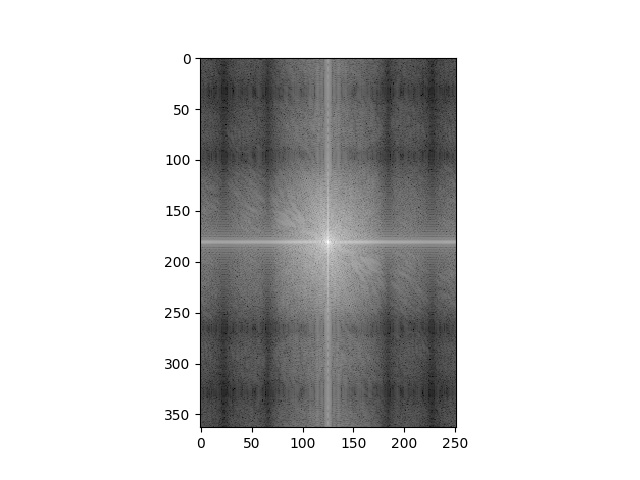

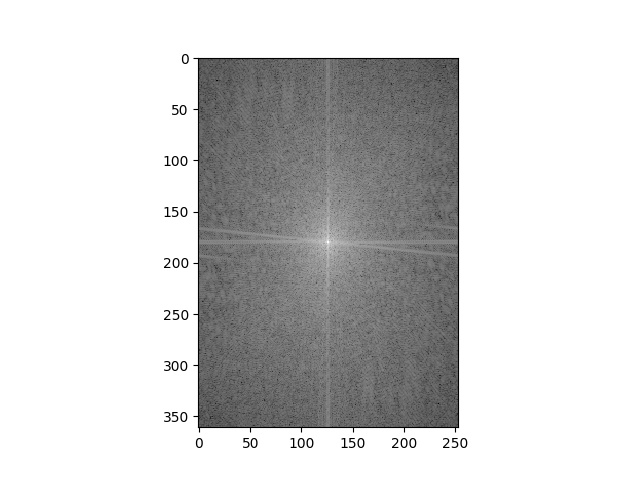

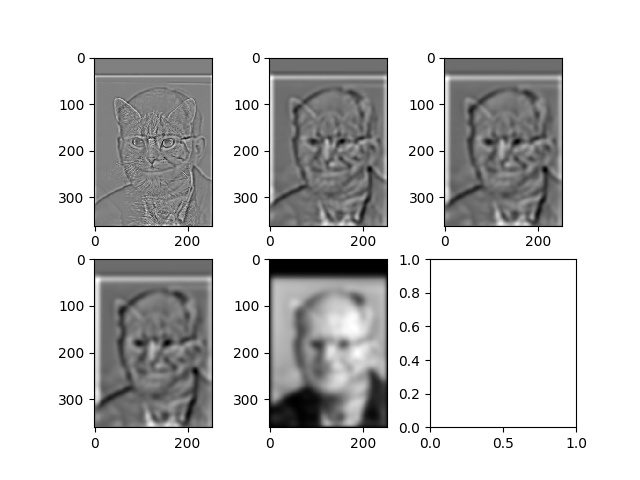

Below is the Fourier analysis for the kitten/Professor Efros hybrid.
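
One common way to produce Fourier-analysis plots like these is to display the log magnitude of each image's centered 2-D FFT. Below is a minimal NumPy sketch that assumes a grayscale float array. The function name `log_magnitude_spectrum` and the small epsilon are illustrative choices.

```python
import numpy as np

def log_magnitude_spectrum(gray):
    """Centered log-magnitude of the 2-D FFT of a grayscale image."""
    # fftshift moves the zero-frequency (DC) term to the center of the array.
    spectrum = np.fft.fftshift(np.fft.fft2(gray))
    # A small epsilon avoids log(0) at frequencies with no energy.
    return np.log(np.abs(spectrum) + 1e-8)
```

Applying this to both inputs, both filtered images, and the hybrid shows that the low-pass image keeps energy near the center while the high-pass image keeps it farther out.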

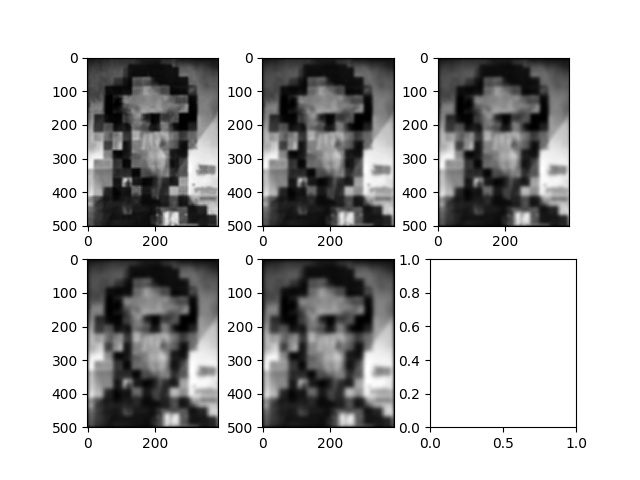

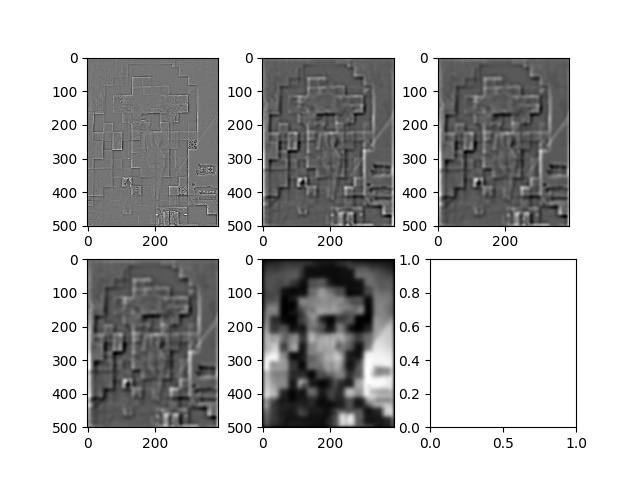

Part 1.3

Part 1.4

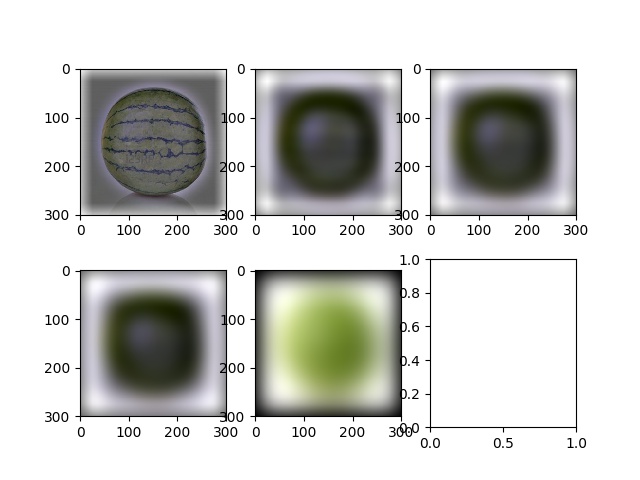

Below is the Laplacian stack (N = 5) for the waterlemon.
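
The Gaussian and Laplacian stacks described at the start of Part 1 can be sketched in NumPy as follows. This sketch makes several assumptions: N counts the levels, the blur uses a fixed `sigma` with no downsampling, and the last Laplacian level keeps the residual blurred image so that the whole stack sums back to the original. The function names are hypothetical.

```python
import numpy as np

def gaussian_blur(img, sigma):
    """Separable Gaussian blur (kernel truncated at 3 sigma, edge padding)."""
    r = max(1, int(np.ceil(3 * sigma)))
    x = np.arange(-r, r + 1, dtype=float)
    k = np.exp(-x ** 2 / (2 * sigma ** 2))
    k /= k.sum()
    p = np.pad(img, r, mode="edge")
    p = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, p)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, p)

def gaussian_stack(img, levels, sigma=2.0):
    """Each level is the previous one blurred again; image size never changes."""
    stack = [img]
    for _ in range(levels - 1):
        stack.append(gaussian_blur(stack[-1], sigma))
    return stack

def laplacian_stack(img, levels, sigma=2.0):
    """Band-pass differences of consecutive Gaussian levels, plus the final low-pass."""
    g = gaussian_stack(img, levels, sigma)
    lap = [g[i] - g[i + 1] for i in range(levels - 1)]
    lap.append(g[-1])  # residual low frequencies, so sum(lap) == img
    return lap
```

Because the differences telescope, adding up all five levels reconstructs the input exactly, which is what makes the stack useful for blending.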

Below is an example of blending with an irregular mask.
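
Here is a minimal NumPy sketch of multiresolution blending that works with irregular masks. It builds a Laplacian stack for each image and a Gaussian stack of the mask, combines each frequency band using its own progressively softer mask, and then sums the bands. The mask can be any float array in [0, 1], so arbitrary shapes need no special handling. The level count, `sigma`, and all function names are illustrative assumptions, not the project's actual settings.

```python
import numpy as np

def gaussian_blur(img, sigma):
    """Separable Gaussian blur (kernel truncated at 3 sigma, edge padding)."""
    r = max(1, int(np.ceil(3 * sigma)))
    x = np.arange(-r, r + 1, dtype=float)
    k = np.exp(-x ** 2 / (2 * sigma ** 2))
    k /= k.sum()
    p = np.pad(img, r, mode="edge")
    p = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, p)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, p)

def gaussian_stack(img, levels, sigma):
    stack = [img]
    for _ in range(levels - 1):
        stack.append(gaussian_blur(stack[-1], sigma))
    return stack

def laplacian_stack(img, levels, sigma):
    g = gaussian_stack(img, levels, sigma)
    return [g[i] - g[i + 1] for i in range(levels - 1)] + [g[-1]]

def multires_blend(a, b, mask, levels=5, sigma=2.0):
    """Blend grayscale image `a` (where mask == 1) with `b` (where mask == 0)."""
    la = laplacian_stack(a, levels, sigma)
    lb = laplacian_stack(b, levels, sigma)
    gm = gaussian_stack(mask.astype(float), levels, sigma)  # softer mask at coarser levels
    # Blend each frequency band with its own mask, then collapse the stack by summing.
    return sum(m * x + (1 - m) * y for m, x, y in zip(gm, la, lb))
```

Low frequencies are blended across a wide, blurry seam, and high frequencies across a sharp one. This is why the transition looks smooth without ghosting.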

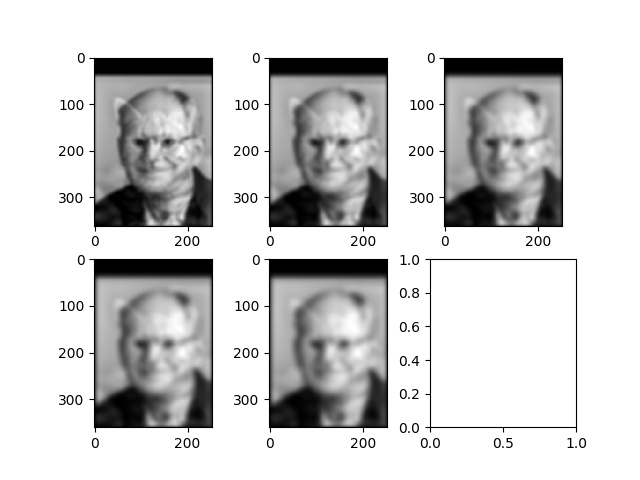

Part 2

In this part of the project, we implement Poisson blending. This lets us implant a source image of our choosing into a target region of our choosing, and the algorithm tries to blend the two images together as naturally as possible. We first create a mask of the region in the source image that we want to transplant, and overlay that mask onto the area of the target image where we want the source to be placed. We then reconstruct the pixels in that target region by minimizing the least squares difference between the gradients of the pixels in the target region and those in the source region. We also minimize the least squares difference between the gradient at the boundary of the region, taken against the fixed target pixels just outside the mask, and the corresponding gradient in the source.
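
A minimal sketch of this least squares setup follows. It assumes a grayscale source that is already aligned with the target, and a non-empty boolean mask of the same shape. Each masked pixel gets one unknown, and each of its four neighbours contributes one gradient equation. For simplicity, the sketch builds a dense matrix and solves it with `np.linalg.lstsq`, which is only practical for tiny images. Real images would need a sparse matrix and a sparse solver such as `scipy.sparse.linalg.lsqr`. The function name `poisson_blend` is hypothetical.

```python
import numpy as np

def poisson_blend(source, target, mask):
    """Least squares Poisson blend of `source` into `target` inside boolean `mask`."""
    h, w = target.shape
    ys, xs = np.nonzero(mask)
    n = len(ys)
    index = -np.ones((h, w), dtype=int)
    index[ys, xs] = np.arange(n)  # unknown id for each masked pixel
    rows, b = [], []
    for k, (y, x) in enumerate(zip(ys, xs)):
        for dy, dx in ((0, 1), (0, -1), (1, 0), (-1, 0)):
            ny, nx = y + dy, x + dx
            if not (0 <= ny < h and 0 <= nx < w):
                continue  # skip neighbours that fall outside the image
            row = np.zeros(n)
            row[k] = 1.0
            grad = source[y, x] - source[ny, nx]  # gradient we want to preserve
            if mask[ny, nx]:
                row[index[ny, nx]] = -1.0  # (v_i - v_j) = source gradient
                b.append(grad)
            else:
                b.append(grad + target[ny, nx])  # (v_i - t_j) = source gradient
            rows.append(row)
    v, *_ = np.linalg.lstsq(np.array(rows), np.array(b), rcond=None)
    out = target.astype(float).copy()
    out[ys, xs] = v  # pixels outside the mask keep their target values
    return out
```

Only gradients are matched, so a uniform brightness offset in the source disappears after blending. This is also why a source whose surroundings differ from the target can leave halos.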

Part 2.1

Part 2.2

Blending Keanu Reeves into a coral reef was my favorite blend. The blending works by minimizing the least squares difference between the gradients in the target region and the source region, as well as the least squares difference between the gradient at the boundary of the target region and the corresponding source gradient. Notice the "shadows" around Keanu in the blended image: these appear because the background Keanu was originally in was lighter than the ocean.
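
Written out, one standard way to express this objective uses notation assumed here rather than taken from the write-up. $S$ is the set of masked pixels, $N_i$ is the four neighbours of pixel $i$, $s$ is the source, $t$ is the target, and $v$ is the new pixel values being solved for:

$$
v = \arg\min_{v} \sum_{i \in S,\ j \in N_i \cap S} \big((v_i - v_j) - (s_i - s_j)\big)^2 \;+\; \sum_{i \in S,\ j \in N_i \setminus S} \big((v_i - t_j) - (s_i - s_j)\big)^2
$$

The first sum keeps gradients inside the region close to the source's gradients. The second ties the boundary pixels to the fixed target values just outside the mask. Because the second sum pulls the boundary toward the target, a light original background can leave a lighter halo once the result is placed in a darker scene.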

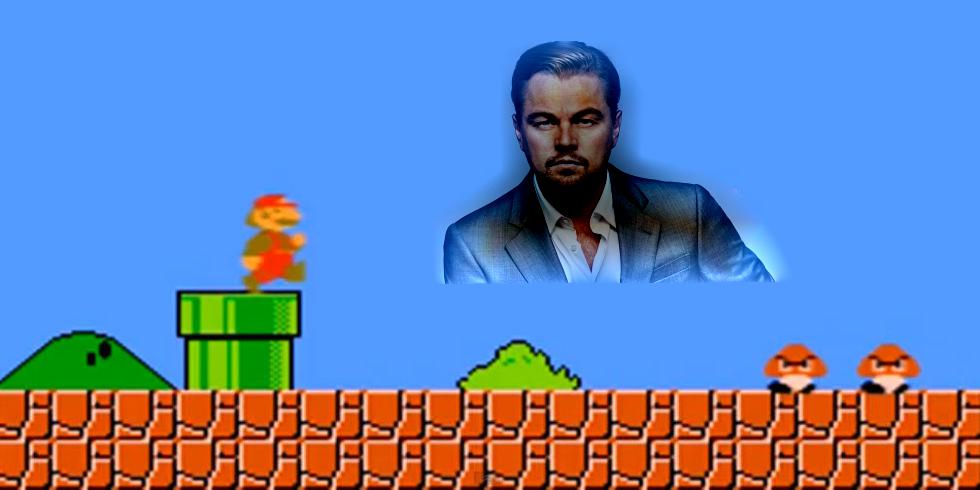

Leonardo DiCaprio blended into a Mario game.

Below is a failed blend. The area around the cropped Oski is neither a single color nor smooth, so blending it into a smooth background like the grass on a golf course is likely to be difficult.

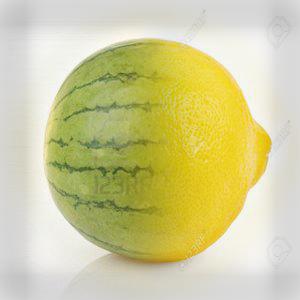

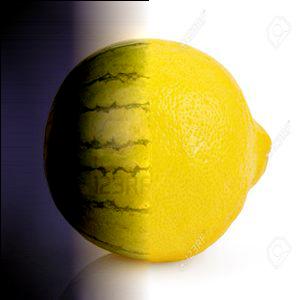

Below we blend the watermelon and lemon using Poisson blending, and find that the result is not nearly as good as the result from multiresolution blending. There are likely a couple of reasons for this. First, the mask is very large compared to the size of the image (it covers half of both the source and target images). Second, Poisson blending works best when "fitting" a source into a target background, whereas here we simply want to join the watermelon and lemon at their midpoints to form one continuous object.
