CS194-26 Project 3

Zhanshi Wang

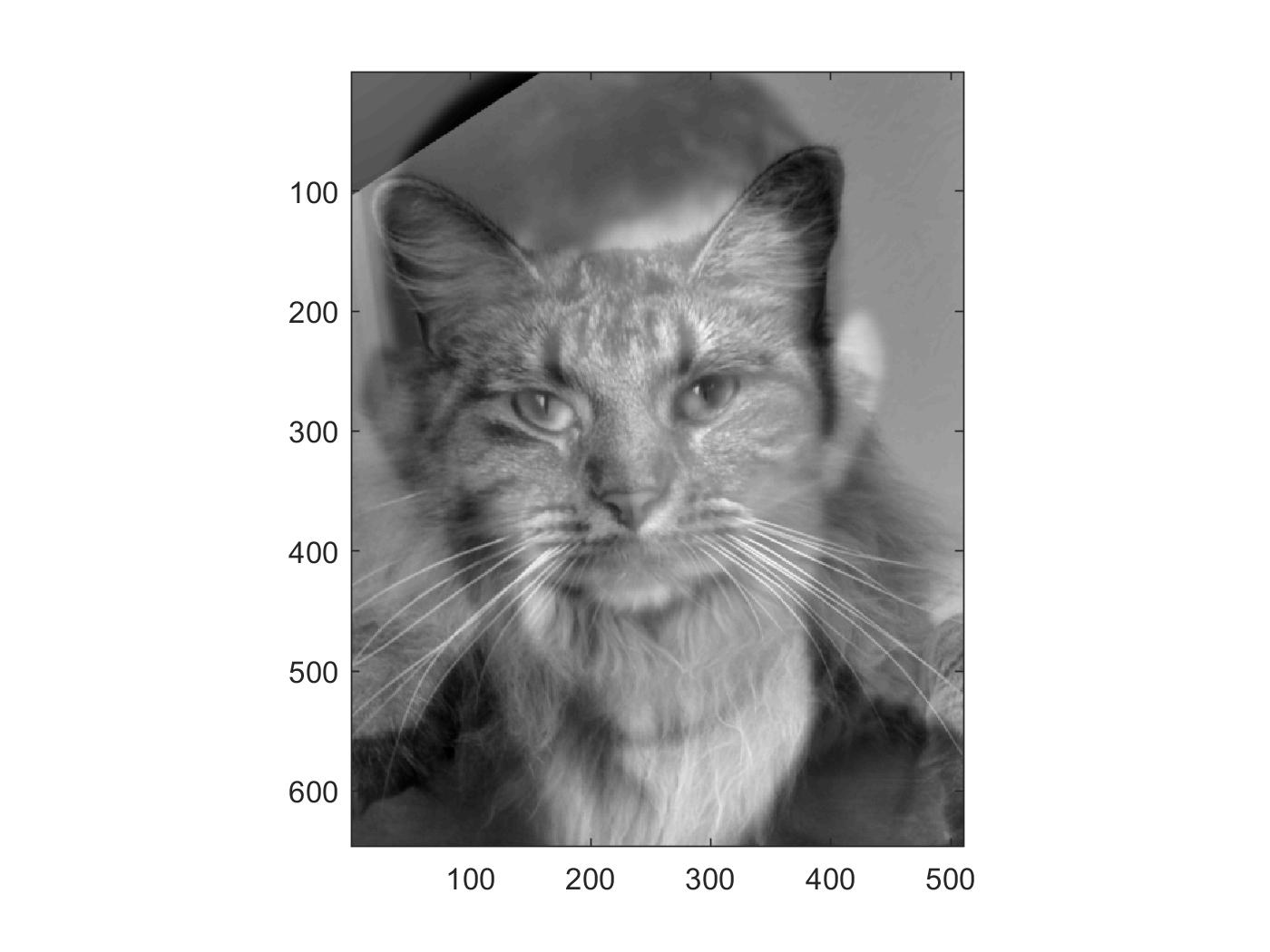

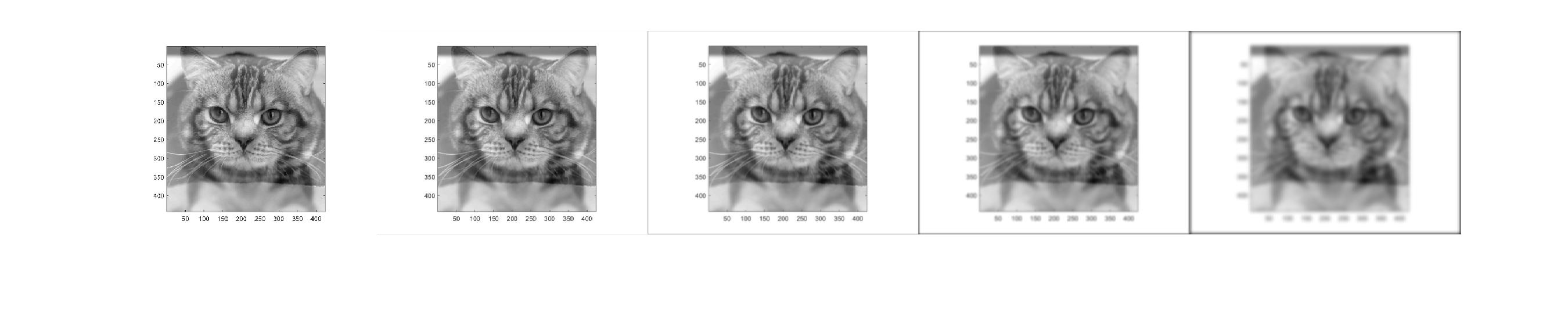

Part 1.1: Warmup

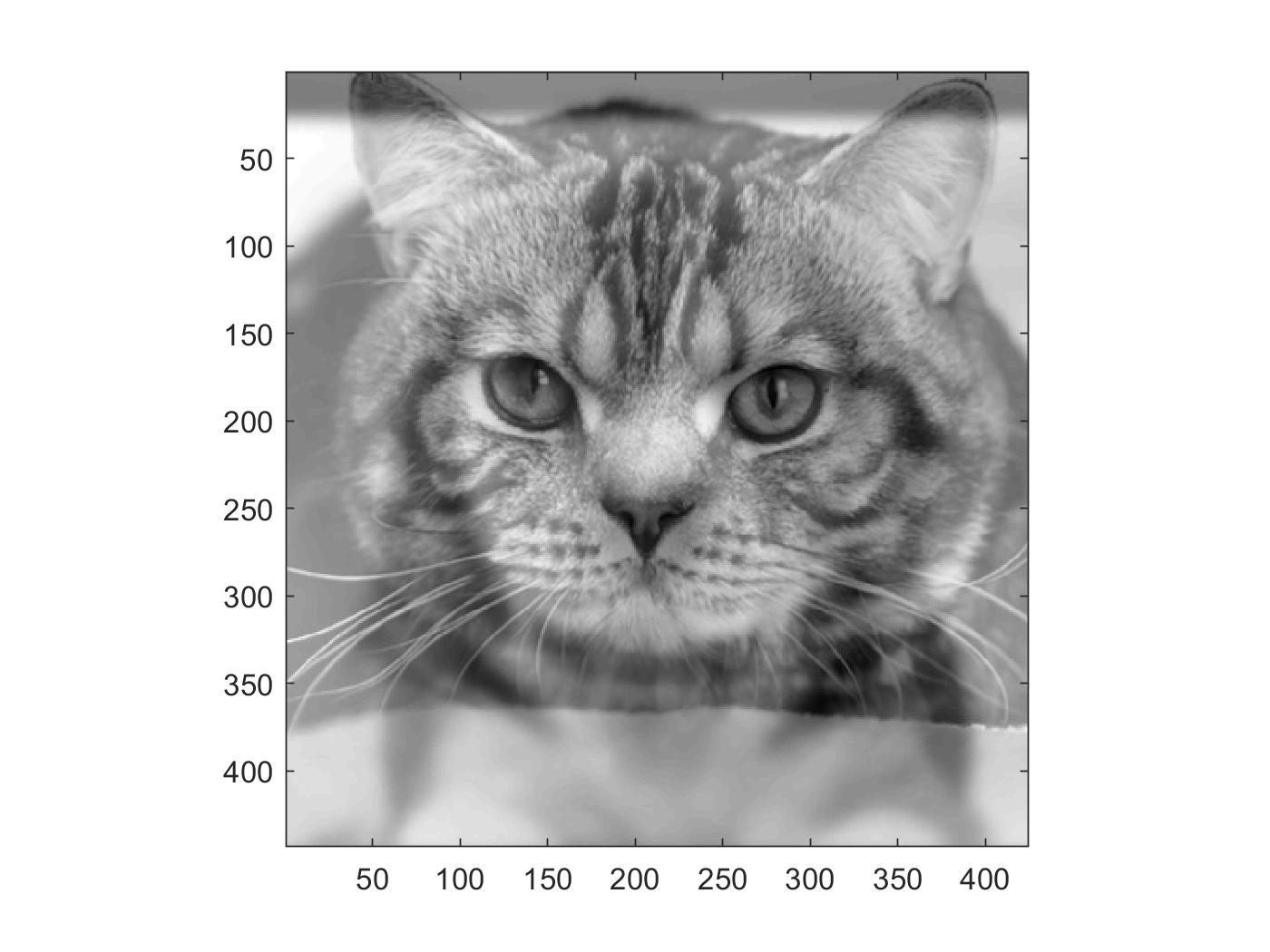

Using the unsharp masking technique, we "sharpened" these cat and sheep images!
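Unsharp masking blurs the image, takes the difference between the original and the blurred copy as the "detail", and adds a scaled copy of that detail back. Here is a minimal sketch in Python with NumPy, assuming a grayscale float image in [0, 1]. The names `gaussian_blur` and `unsharp_mask` and the values of `sigma` and `alpha` are hypothetical, not the ones used for the results shown here.

```python
import numpy as np

def gaussian_blur(img, sigma):
    """Separable Gaussian blur of a 2D array, using edge padding."""
    radius = int(3 * sigma + 0.5)
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-x**2 / (2.0 * sigma**2))
    kernel /= kernel.sum()
    padded = np.pad(img.astype(float), radius, mode="edge")
    # Filter along rows first, then along columns.
    rows = np.apply_along_axis(np.convolve, 1, padded, kernel, mode="valid")
    return np.apply_along_axis(np.convolve, 0, rows, kernel, mode="valid")

def unsharp_mask(img, sigma=2.0, alpha=1.0):
    """Sharpen by adding back the high-frequency detail (assumed parameters)."""
    blurred = gaussian_blur(img, sigma)
    detail = img - blurred                 # what the blur removed
    return np.clip(img + alpha * detail, 0.0, 1.0)
```

A larger `alpha` gives a stronger effect, at the cost of visible halos around edges.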

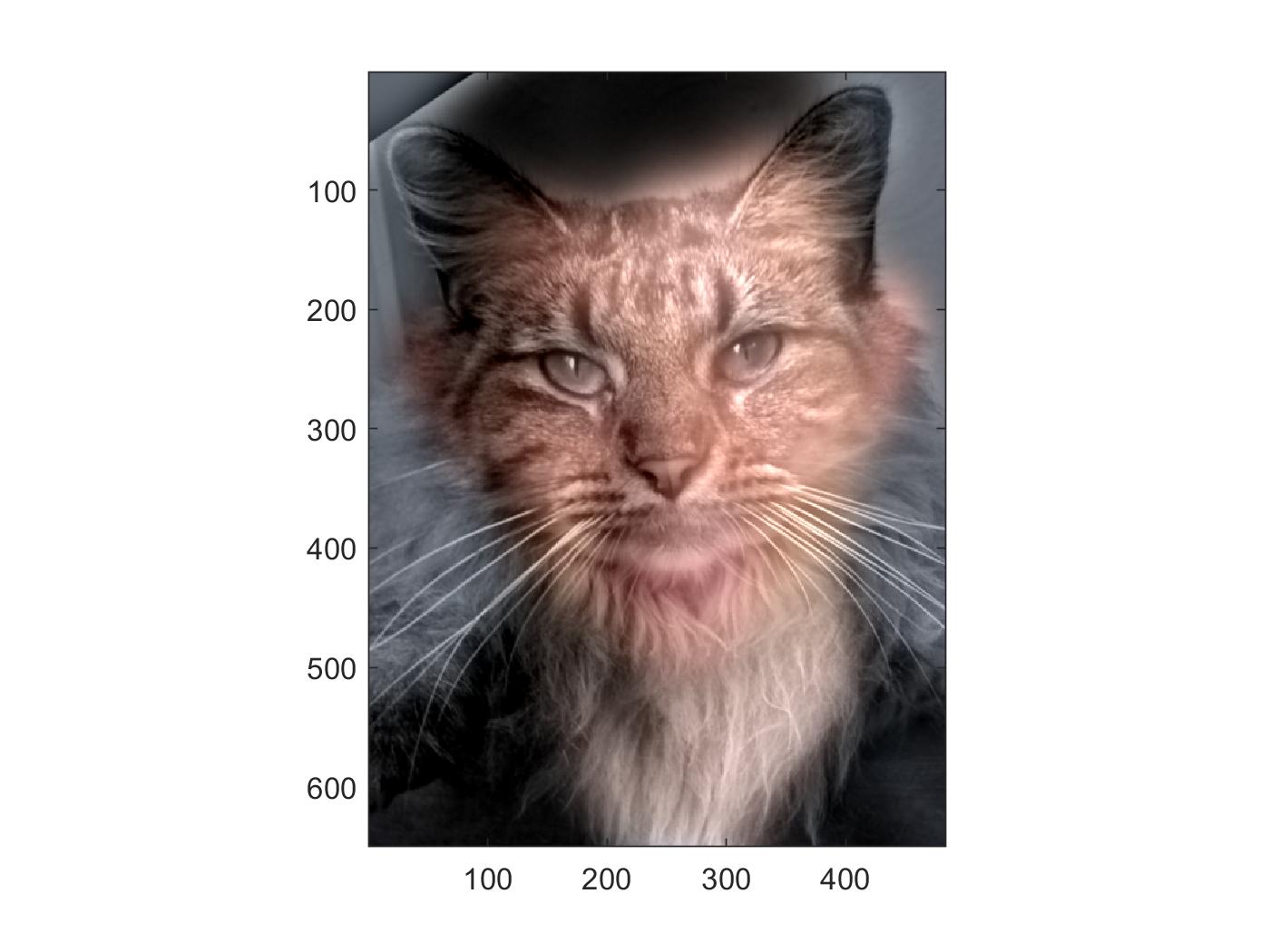

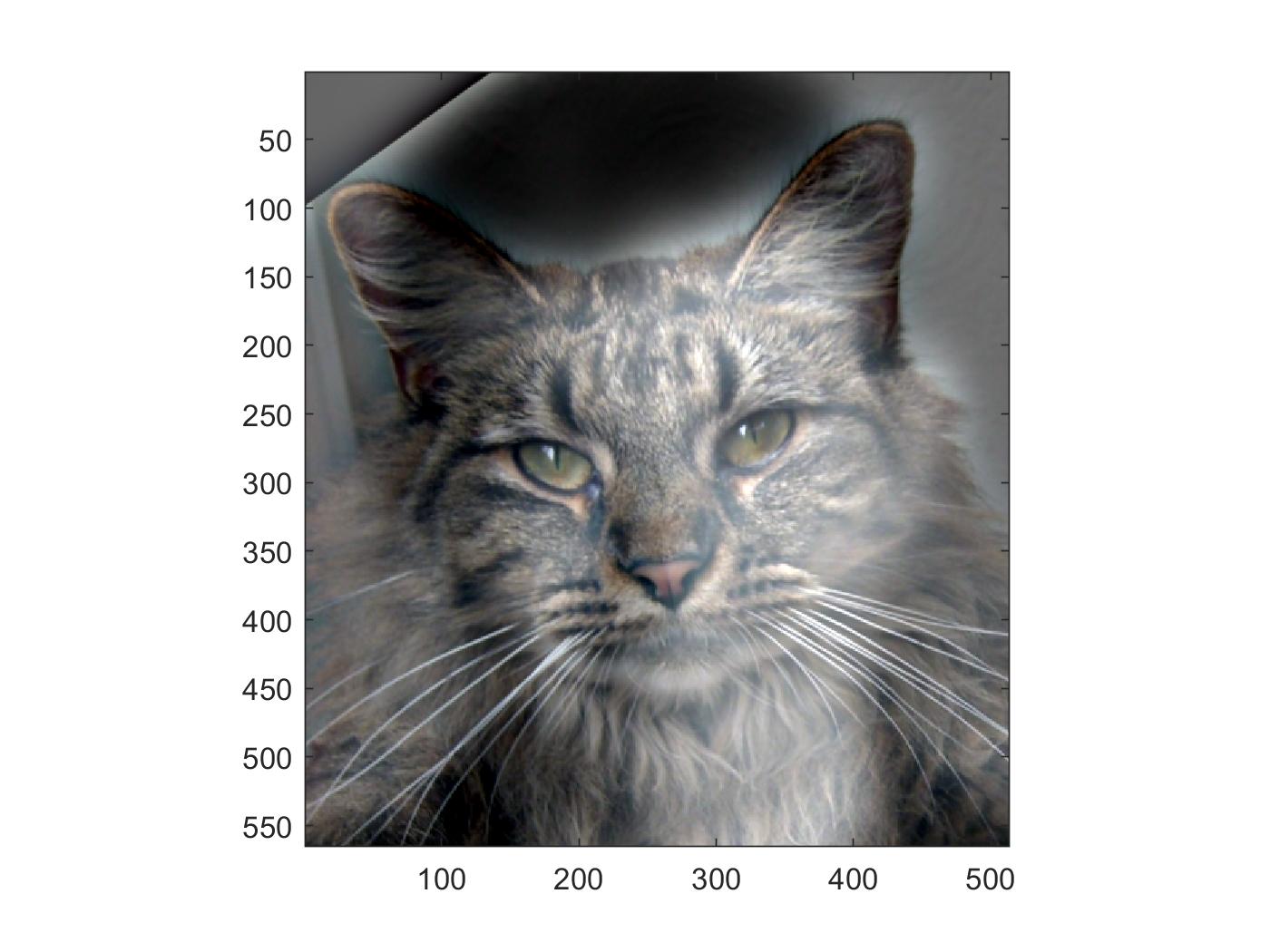

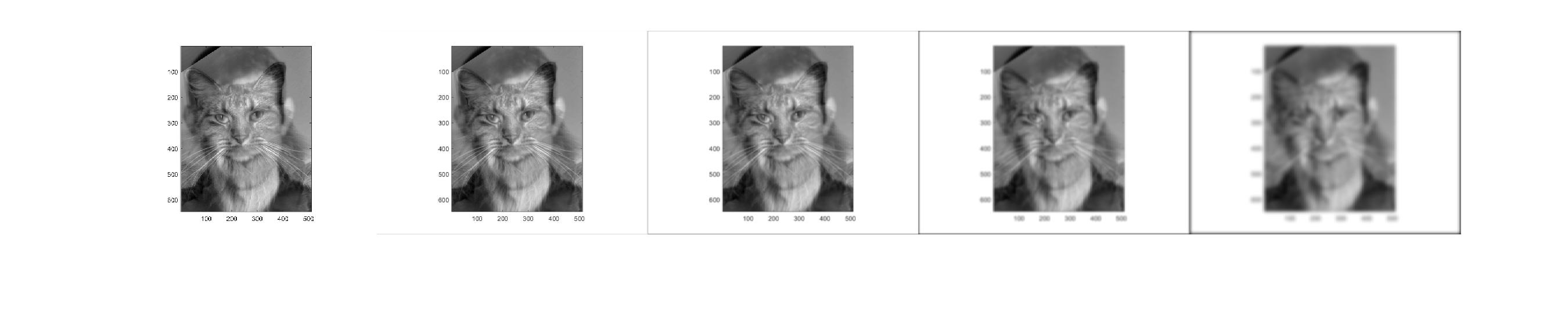

Part 1.2: Hybrid Images

Based on the approach described in the SIGGRAPH 2006 paper by Oliva, Torralba, and Schyns, we created these hybrid images! Their interpretation changes as a function of viewing distance.
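The core idea is to add the low frequencies of one image to the high frequencies of another. Here is a minimal sketch in Python with NumPy, assuming two aligned grayscale float images in [0, 1] of the same size. The names `gaussian_blur` and `hybrid_image` and both cutoff values are hypothetical and would need tuning per image pair.

```python
import numpy as np

def gaussian_blur(img, sigma):
    """Separable Gaussian blur of a 2D array, using edge padding."""
    radius = int(3 * sigma + 0.5)
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-x**2 / (2.0 * sigma**2))
    kernel /= kernel.sum()
    padded = np.pad(img.astype(float), radius, mode="edge")
    rows = np.apply_along_axis(np.convolve, 1, padded, kernel, mode="valid")
    return np.apply_along_axis(np.convolve, 0, rows, kernel, mode="valid")

def hybrid_image(far_img, near_img, sigma_low=6.0, sigma_high=3.0):
    """Combine the image seen from far away with the one seen up close."""
    low = gaussian_blur(far_img, sigma_low)                  # low-pass
    high = near_img - gaussian_blur(near_img, sigma_high)    # high-pass
    return np.clip(low + high, 0.0, 1.0)
```

The high-pass image is simply the original minus its blurred copy, so it has roughly zero mean and rides on top of the low-pass image.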

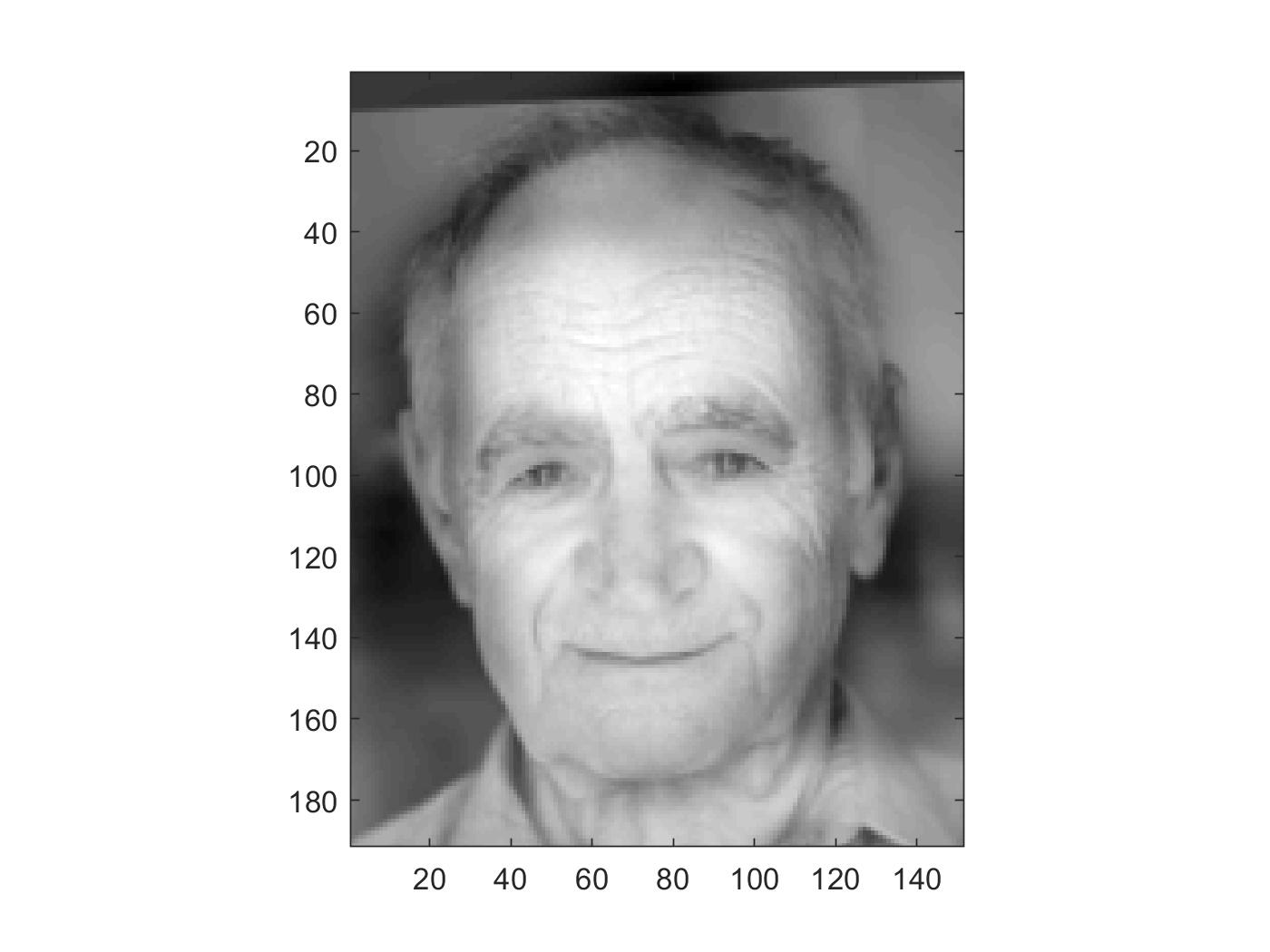

First, let's take a look at Derek and Nutmeg.

The effect seems stronger when color is added to both the low- and high-frequency images. (Left: color added to Derek.)

If the images are too similar, as when both are human faces, we can barely notice the low-frequency one. (Failure)

When the two images are less similar, the effect is easy to see.

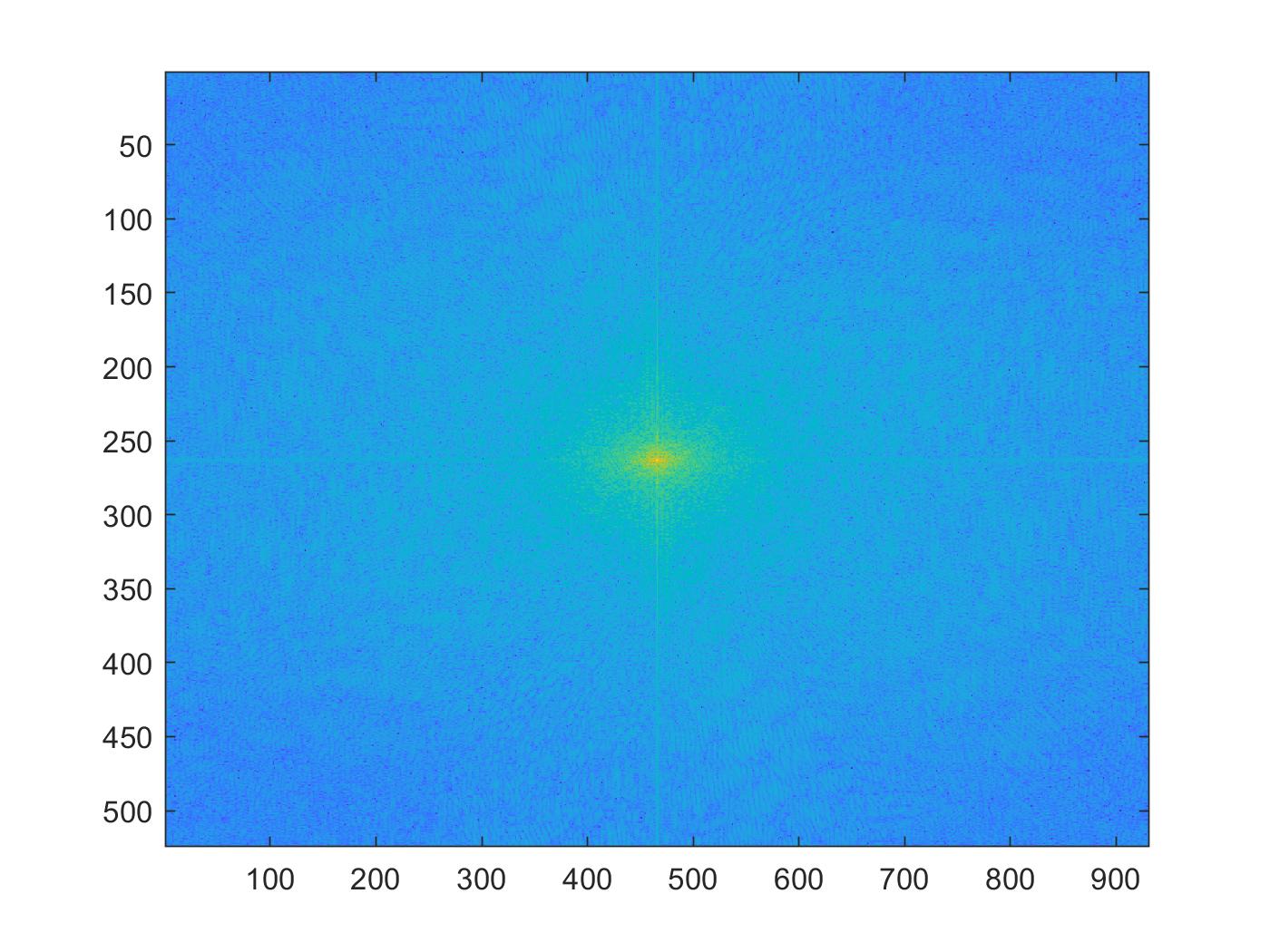

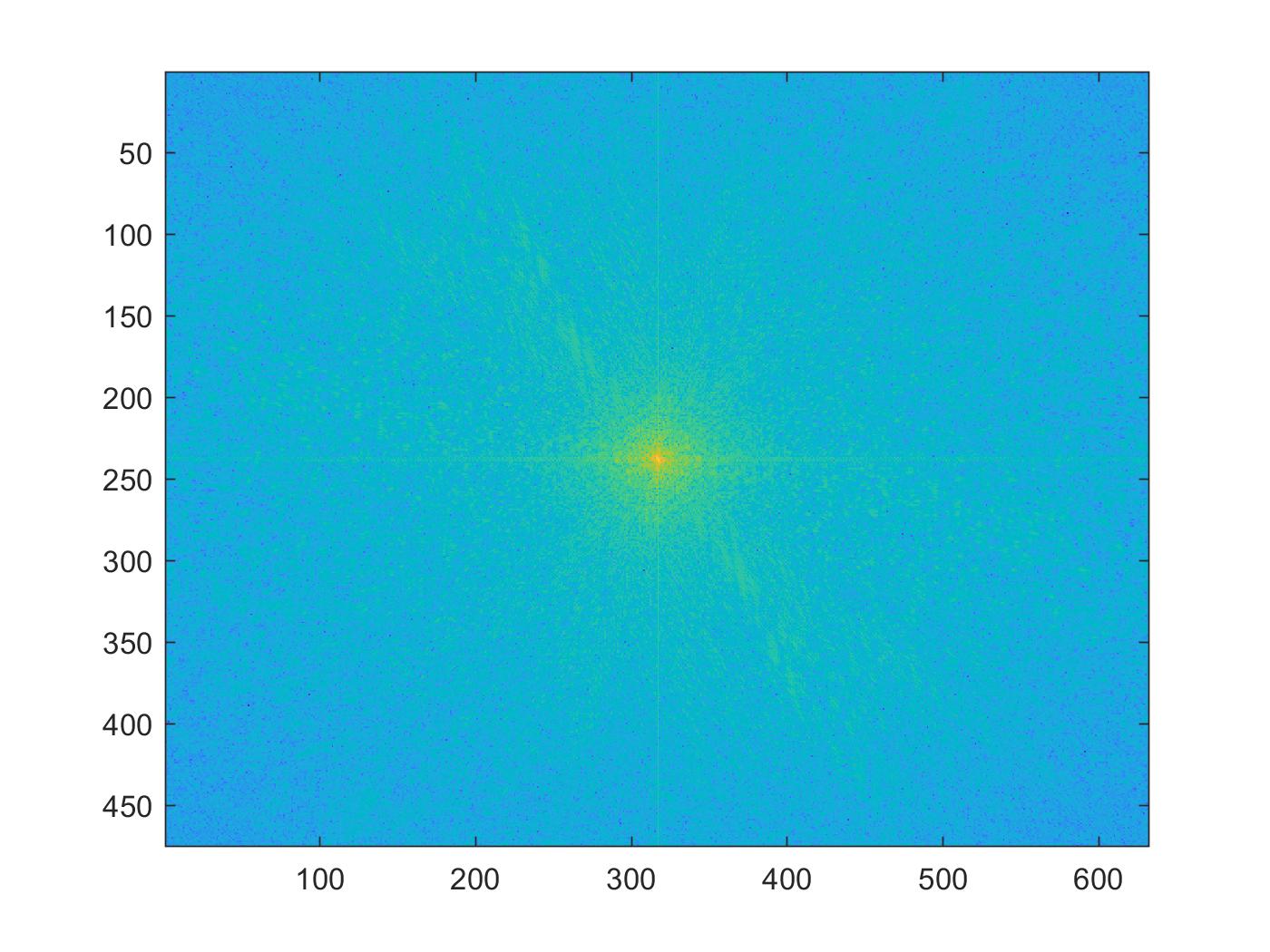

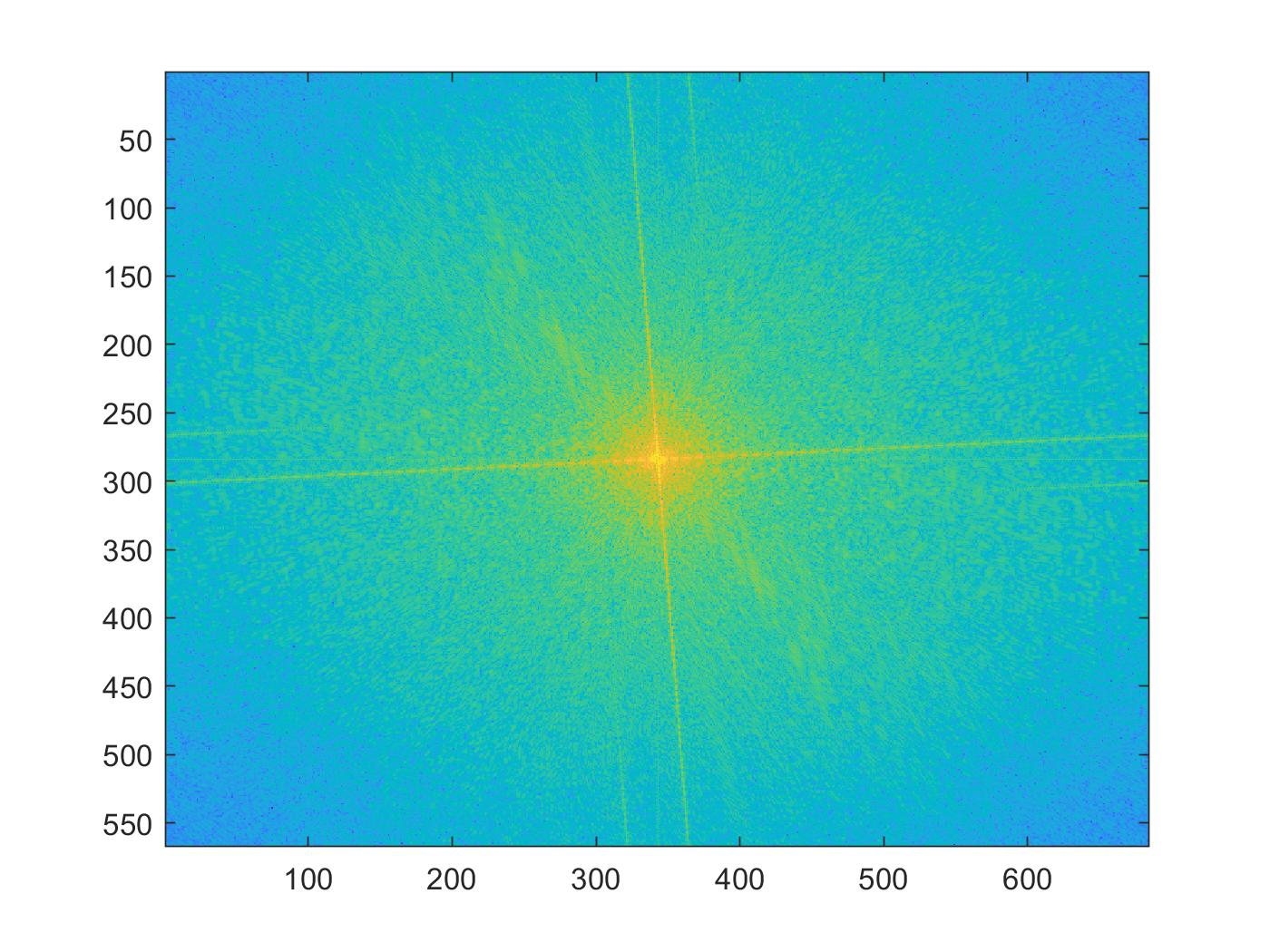

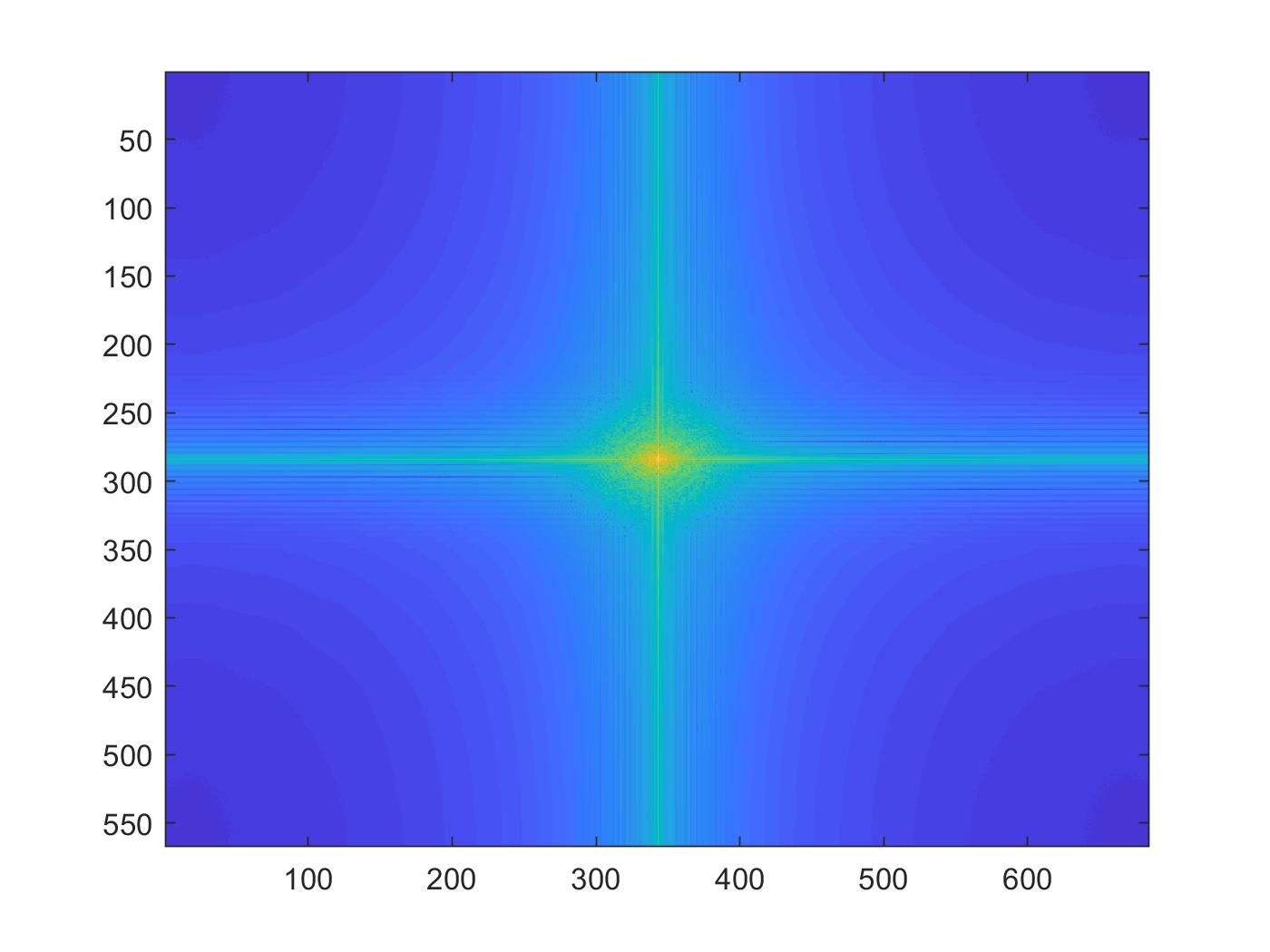

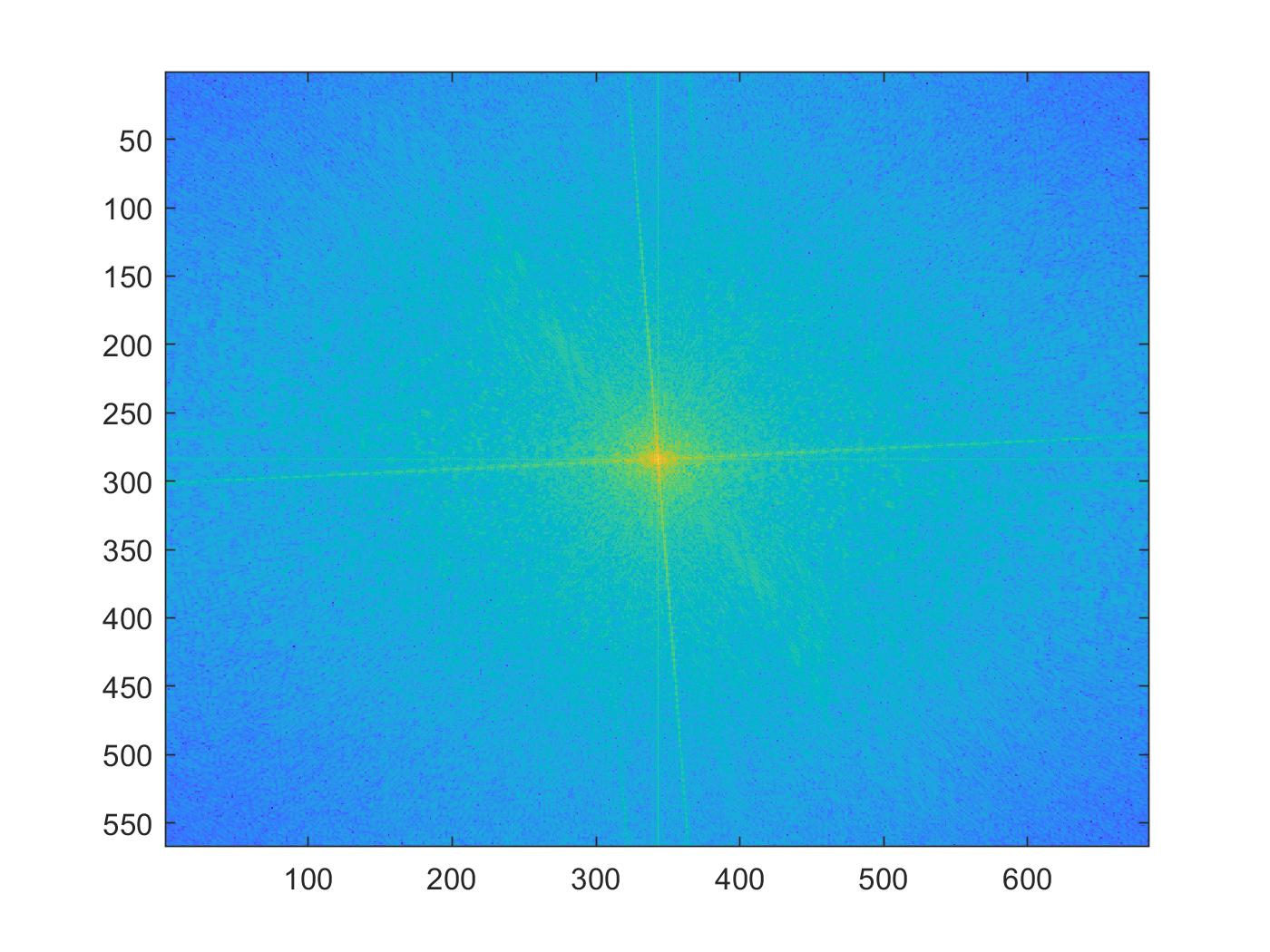

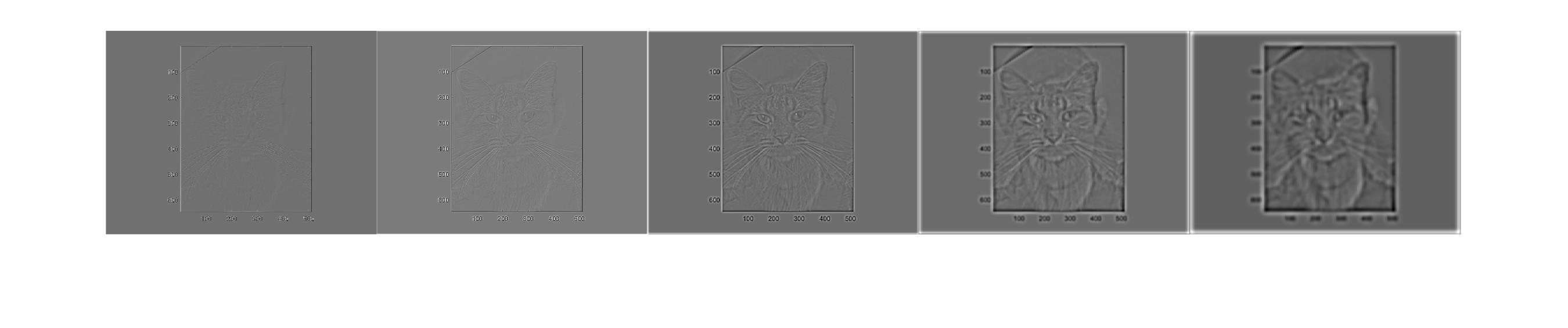

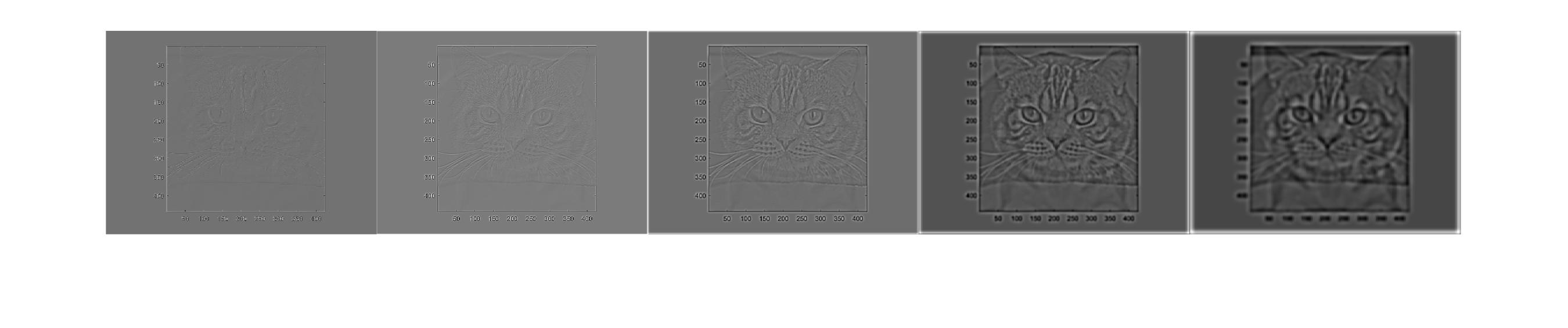

Frequency analysis:

Original images

High pass

Low pass

Hybrid

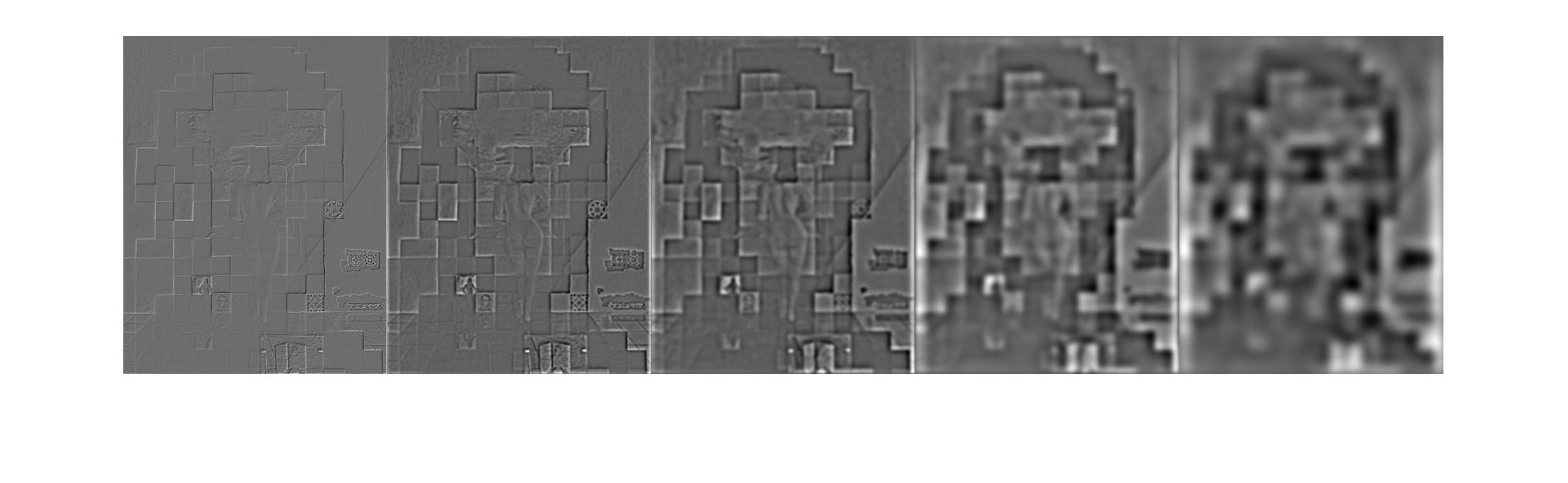

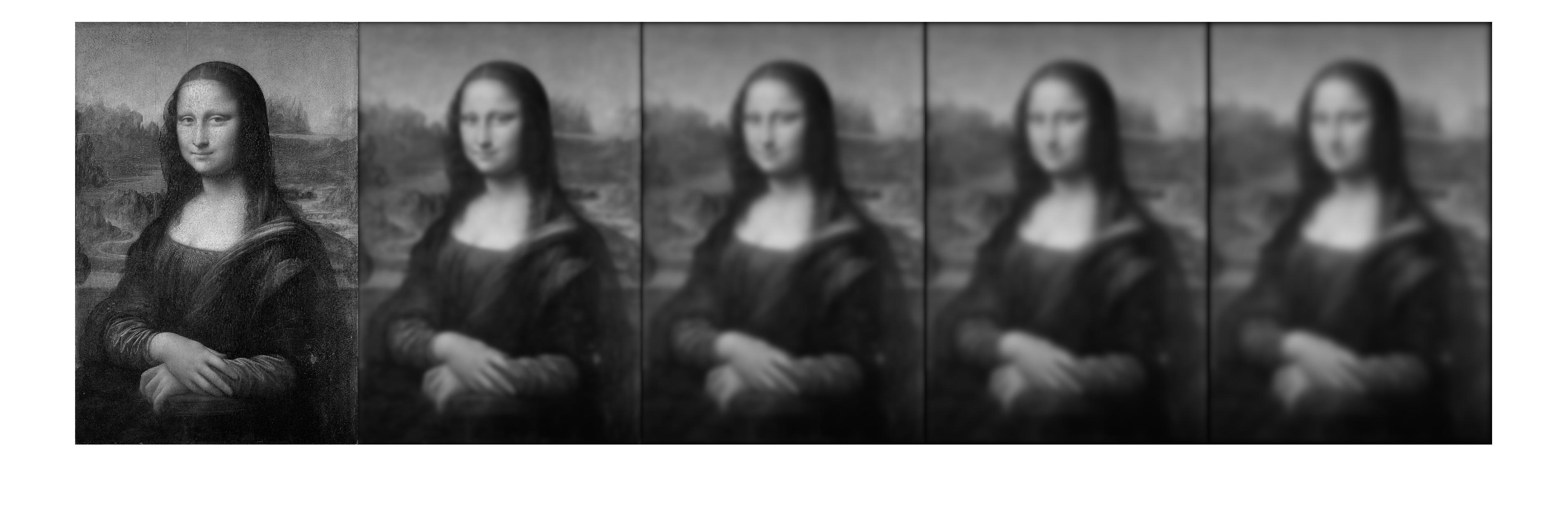

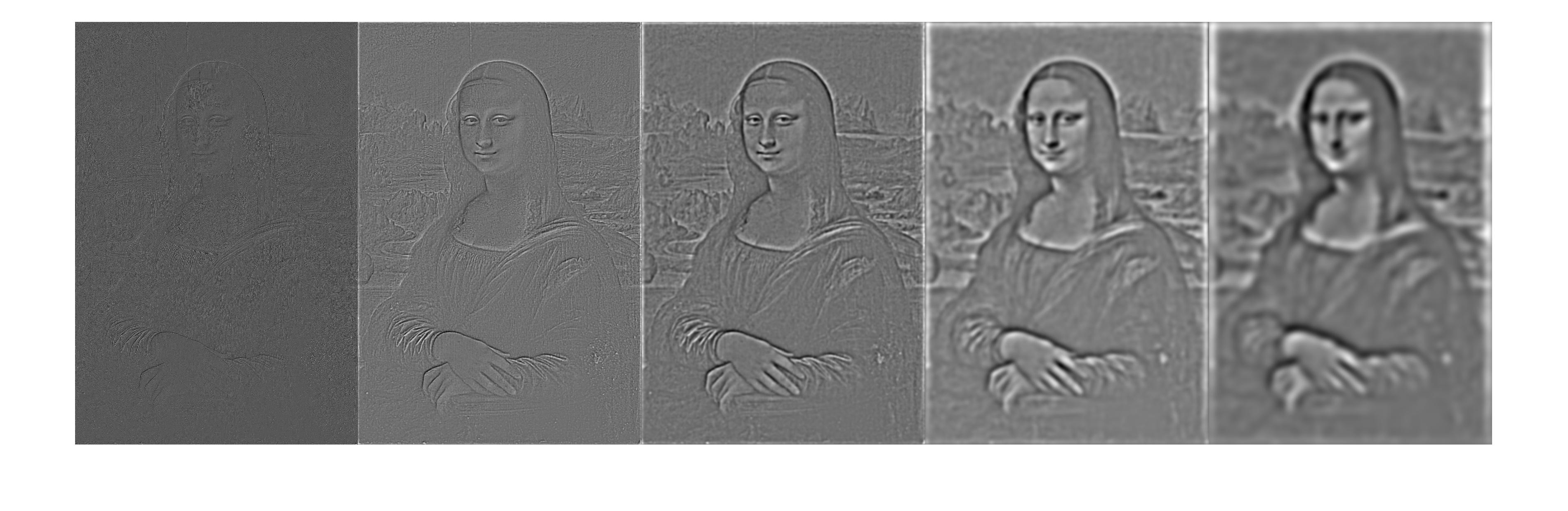
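For a frequency analysis like this, a common choice is to display the log magnitude of the 2D Fourier transform. Here is a minimal sketch in Python with NumPy, assuming a grayscale input and assuming this is the kind of plot intended. The name `log_magnitude_spectrum` is hypothetical, and the small epsilon is only there to avoid taking log(0).

```python
import numpy as np

def log_magnitude_spectrum(gray):
    """Log magnitude of the 2D FFT, with the zero frequency moved to the center."""
    spectrum = np.fft.fftshift(np.fft.fft2(gray))
    return np.log(np.abs(spectrum) + 1e-8)
```

In such a plot, a low-pass image keeps its energy near the center, while a high-pass image loses most of the energy there.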

Part 1.3: Gaussian and Laplacian Stacks

We built a Gaussian and a Laplacian stack. The Gaussian stack applies the same Gaussian filter at each level. Each layer of the Laplacian stack is the difference between two consecutive Gaussian layers. This makes it easy to see the structure of an image at multiple scales!
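Here is a minimal sketch of the two stacks in Python with NumPy, assuming a grayscale float image and the usual definition in which each Laplacian layer is the difference between two neighboring Gaussian layers. The function names, `levels`, and `sigma` are hypothetical choices.

```python
import numpy as np

def gaussian_blur(img, sigma):
    """Separable Gaussian blur of a 2D array, using edge padding."""
    radius = int(3 * sigma + 0.5)
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-x**2 / (2.0 * sigma**2))
    kernel /= kernel.sum()
    padded = np.pad(img.astype(float), radius, mode="edge")
    rows = np.apply_along_axis(np.convolve, 1, padded, kernel, mode="valid")
    return np.apply_along_axis(np.convolve, 0, rows, kernel, mode="valid")

def gaussian_stack(img, levels=5, sigma=2.0):
    """Return levels + 1 images: the original, then repeated blurs (no downsampling)."""
    stack = [img.astype(float)]
    for _ in range(levels):
        stack.append(gaussian_blur(stack[-1], sigma))
    return stack

def laplacian_stack(g_stack):
    """Band-pass layers, followed by the blurriest Gaussian layer as a residual."""
    bands = [g_stack[i] - g_stack[i + 1] for i in range(len(g_stack) - 1)]
    return bands + [g_stack[-1]]
```

Because the residual layer is kept, summing all layers of the Laplacian stack gives back the original image.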

Mona Lisa looks old in the high frequencies :(

Now we use the result from Part 1.2.

We can tell the cat and the dog apart!

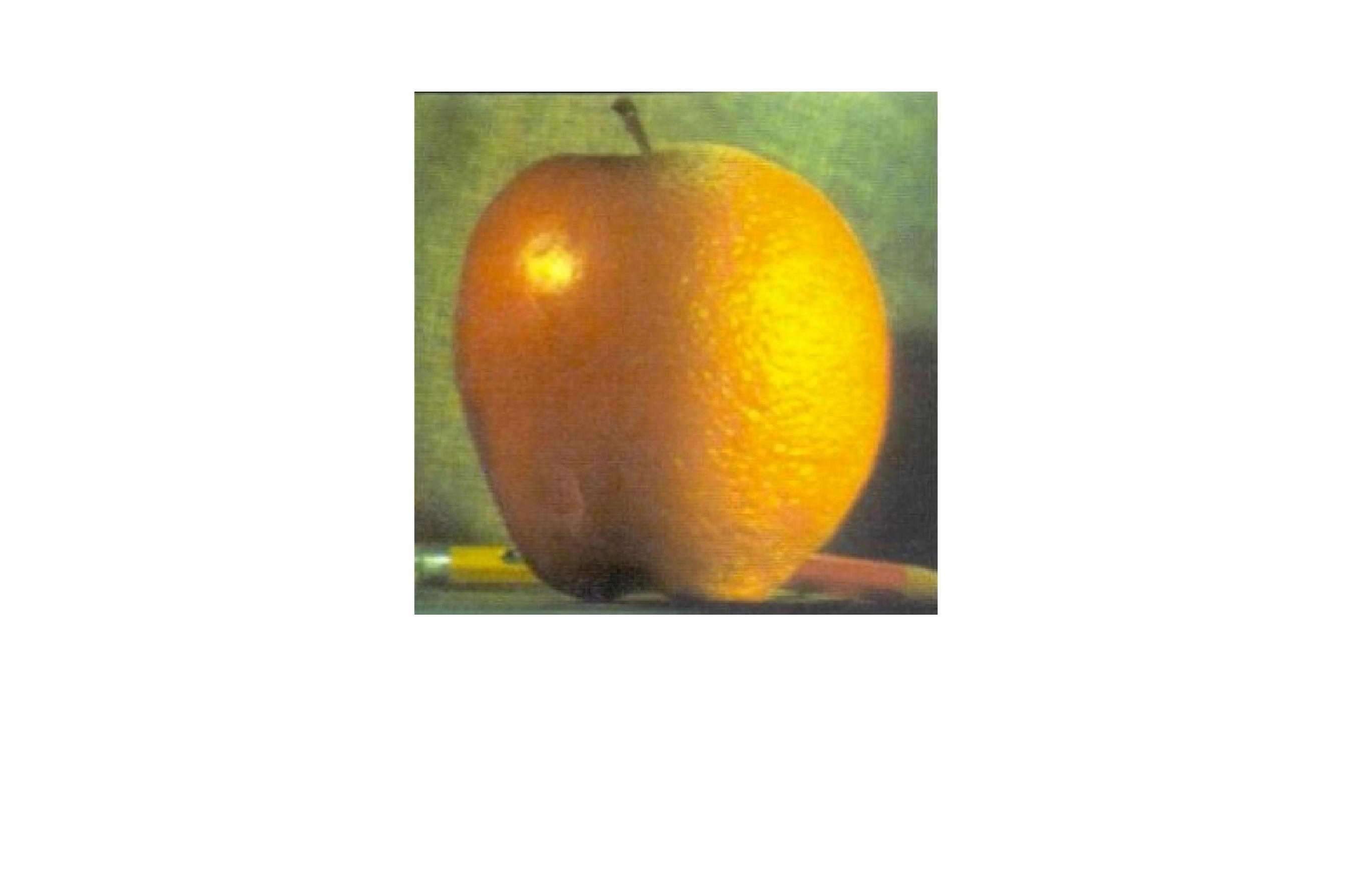

Part 1.4: Multiresolution Blending (a.k.a. the oraple!)

To apply multiresolution blending, we first build Laplacian stacks for both images. Then we build a mask, feathered in the transition area, that selects which part of each image to keep. We then build the Gaussian stack of the mask and combine it with the Laplacian stacks using the method described in the paper by Burt and Adelson. Finally, we collapse the combined stack. Note that, apart from summing up the Laplacian layers, we need to add one additional layer: the last layer of the Gaussian stacks of the original images. We also used color to enhance the result.
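The steps above can be sketched in Python with NumPy as follows, assuming two grayscale float images of the same size and a mask in [0, 1] that is 1 where the first image should show. The function names, `levels`, and `sigma` are hypothetical, and a color version would simply run this once per channel.

```python
import numpy as np

def gaussian_blur(img, sigma):
    """Separable Gaussian blur of a 2D array, using edge padding."""
    radius = int(3 * sigma + 0.5)
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-x**2 / (2.0 * sigma**2))
    kernel /= kernel.sum()
    padded = np.pad(img.astype(float), radius, mode="edge")
    rows = np.apply_along_axis(np.convolve, 1, padded, kernel, mode="valid")
    return np.apply_along_axis(np.convolve, 0, rows, kernel, mode="valid")

def gaussian_stack(img, levels, sigma):
    """Return levels + 1 images: the original, then repeated blurs."""
    stack = [img.astype(float)]
    for _ in range(levels):
        stack.append(gaussian_blur(stack[-1], sigma))
    return stack

def multires_blend(img_a, img_b, mask, levels=5, sigma=2.0):
    """Blend each frequency band with a mask blurred to the matching level."""
    ga = gaussian_stack(img_a, levels, sigma)
    gb = gaussian_stack(img_b, levels, sigma)
    gm = gaussian_stack(mask, levels, sigma)
    out = np.zeros(img_a.shape, dtype=float)
    for i in range(levels):
        band_a = ga[i] - ga[i + 1]          # Laplacian layer of image A
        band_b = gb[i] - gb[i + 1]          # Laplacian layer of image B
        out += gm[i] * band_a + (1.0 - gm[i]) * band_b
    # The extra layer: the blurriest Gaussian layers, blended the same way.
    out += gm[levels] * ga[levels] + (1.0 - gm[levels]) * gb[levels]
    return out
```

Blurring the mask more at coarser levels is what makes low frequencies transition slowly and fine detail transition sharply across the seam.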

Let's first take a look at an oraple we made!

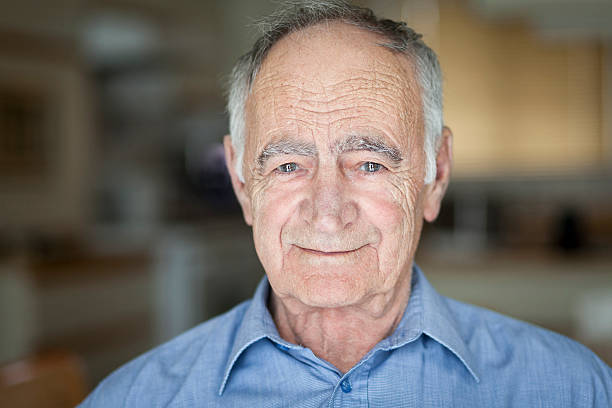

Now let's meet Mr. Furface and Mr. Facebelly ... ┌(。Д。)┐

(Also, sorry to Jim and to some random face from a Google image search.)

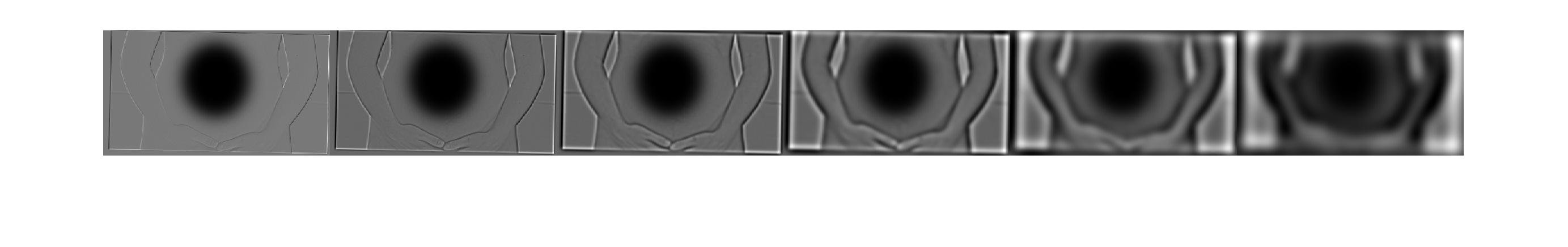

To see the process, let's have a look at the Laplacian stacks of both images used for the Facebelly, as well as the two masked original images. (Only one color channel is shown here.)

Part 2.1: Toy Problem

Now we finally get to Part 2. For this part, we want to use Poisson blending to blend part of one image into another image. To do this, we solve a least-squares optimization problem that preserves the gradients of the source region as much as possible without changing the pixels of the original image outside that region.

For 2.1, the MATLAB code is submitted separately.
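Here is a minimal sketch of this least-squares setup in Python with NumPy. It is not the submitted MATLAB code. It assumes grayscale float images of the same size that are already aligned, a boolean mask that does not touch the image border, and a region small enough for a dense solver. The name `poisson_blend` is hypothetical.

```python
import numpy as np

def poisson_blend(source, target, mask):
    """Solve for new pixel values inside the mask that match the source gradients."""
    mask = mask.astype(bool)
    ys, xs = np.nonzero(mask)
    n = len(ys)
    index = -np.ones(target.shape, dtype=int)
    index[ys, xs] = np.arange(n)            # unknown number for each masked pixel
    rows, rhs = [], []
    for k in range(n):
        y, x = ys[k], xs[k]
        for dy, dx in ((-1, 0), (1, 0), (0, -1), (0, 1)):
            ny, nx = y + dy, x + dx
            grad = source[y, x] - source[ny, nx]
            row = np.zeros(n)
            row[k] = 1.0
            if mask[ny, nx]:
                # Both pixels unknown: v_i - v_j = s_i - s_j
                row[index[ny, nx]] = -1.0
                rhs.append(grad)
            else:
                # Neighbor is a fixed target pixel: v_i = t_j + (s_i - s_j)
                rhs.append(grad + target[ny, nx])
            rows.append(row)
    v, *_ = np.linalg.lstsq(np.array(rows), np.array(rhs), rcond=None)
    out = target.astype(float).copy()
    out[ys, xs] = v                         # pixels outside the mask stay untouched
    return out
```

For real image sizes a sparse matrix and a sparse solver would be needed, since the dense system here grows quadratically with the number of masked pixels.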

Part 2.2: Poisson Blending

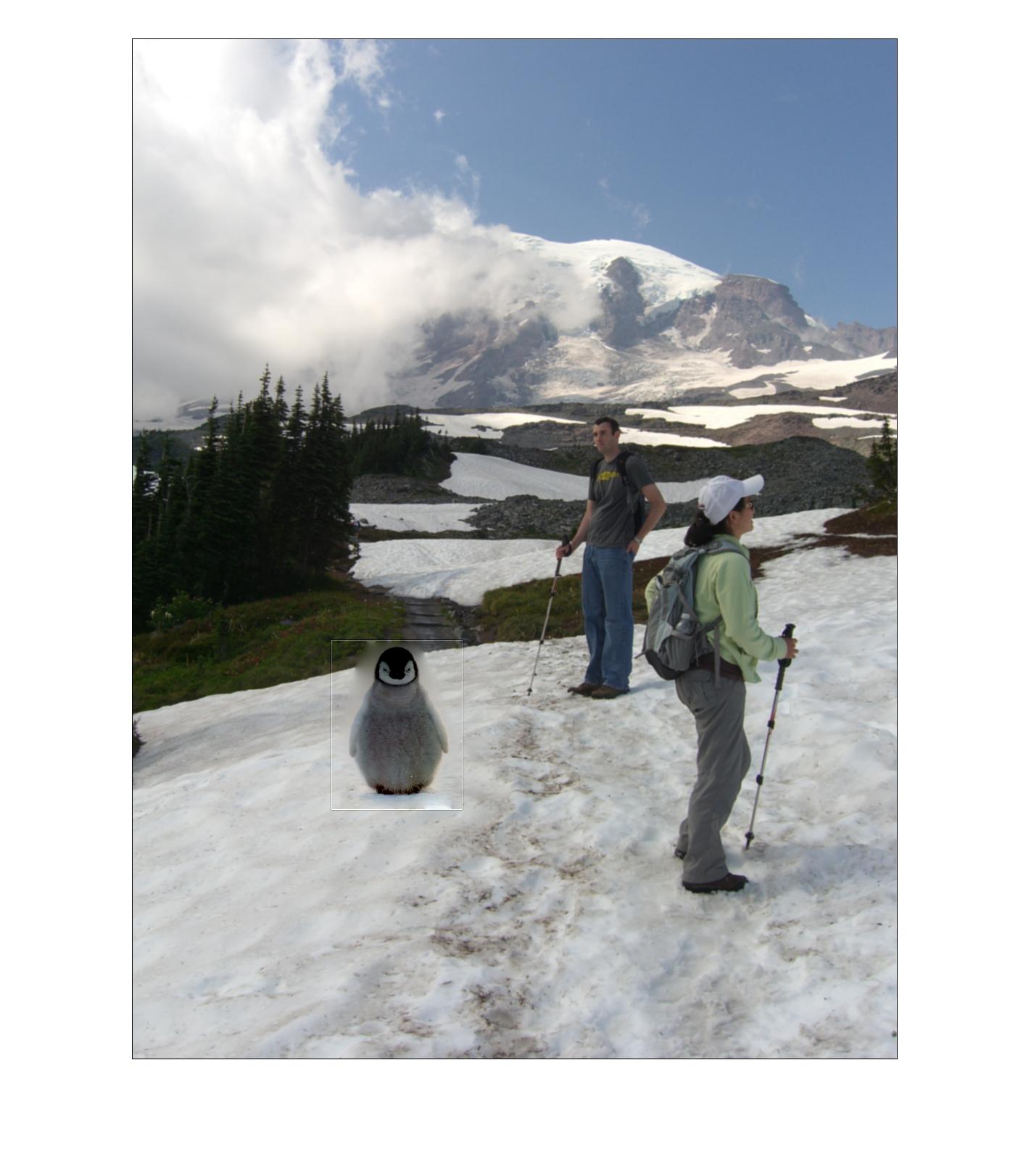

We solve the optimization problem to do the blending. First, we move the penguin around.

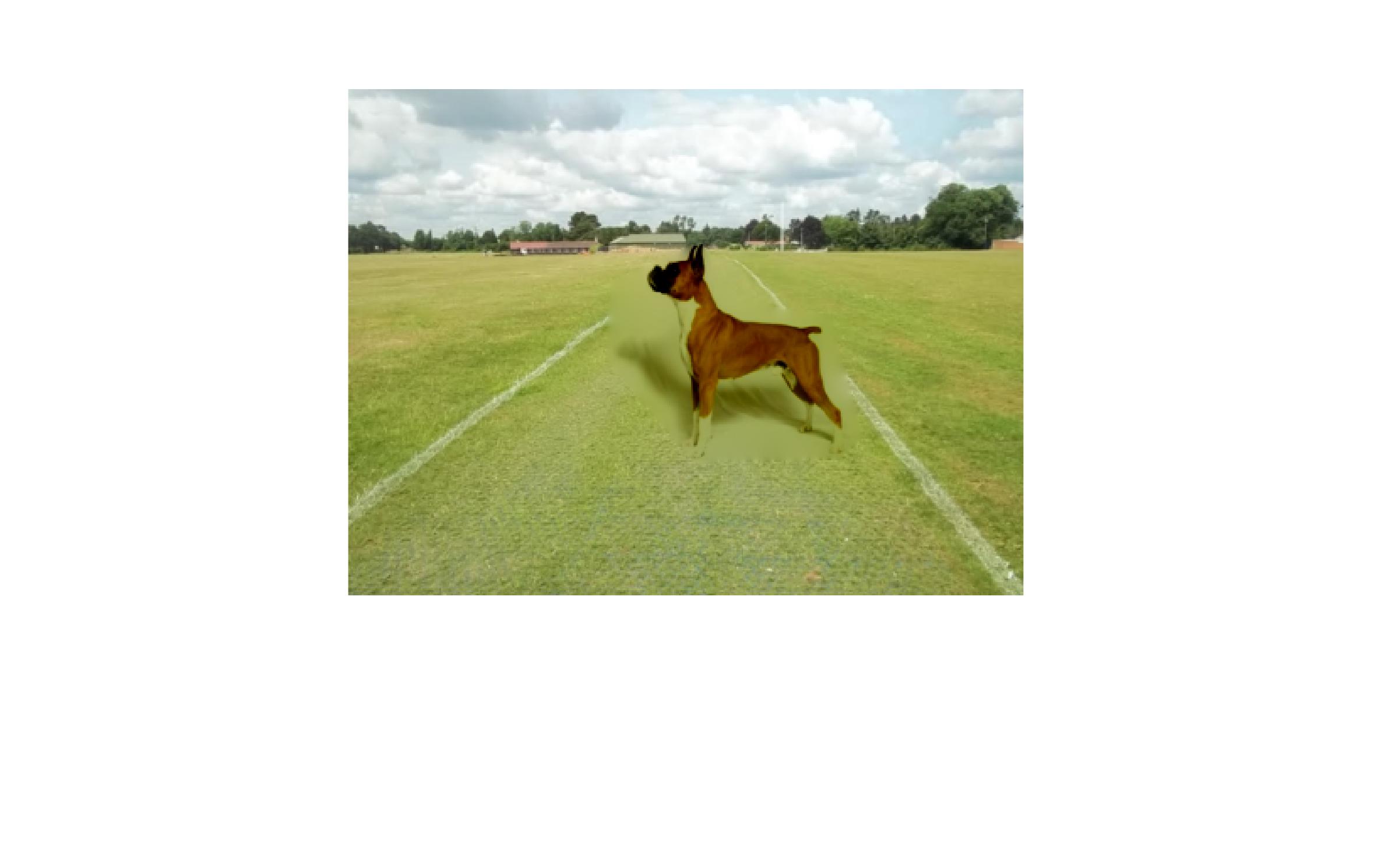

Now let's try to put a dog in some random place.

We see the result is not ideal. Apart from the dog's color changing to green... because the background of the dog is pure white, the missing ground texture is obvious. And then we dance in the fire.