CS 194-26 Project 3

Fun with Frequencies and Gradients!

Borong Zhang cs194-26-agb

Part 1: Frequency Domain

Part 1.1: Warmup

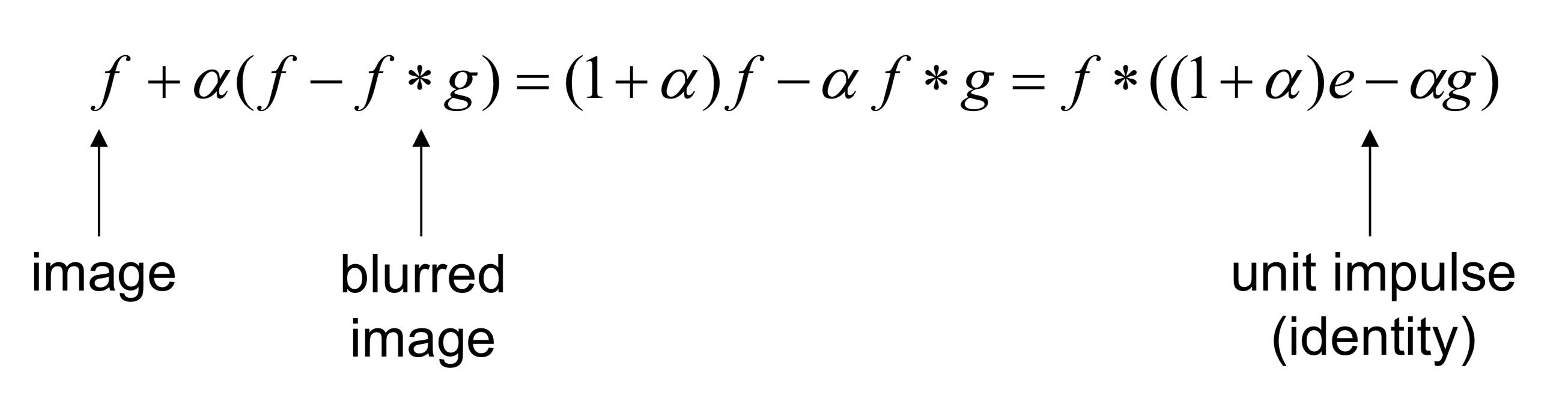

As a warmup, we sharpen an image. First, we blur the image with a Gaussian filter. Subtracting the blurred image from the original then isolates the image's details (its high frequencies). Finally, adding those details back to the original, scaled by a factor greater than 1, produces a sharpening effect. The corresponding formula is shown below.

PS: g in the formula is a Gaussian filter with parameter sigma
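The sharpening steps above can be sketched in a few lines of NumPy. This is only a minimal sketch, assuming the common unsharp-mask form `sharpened = f + alpha * (f - f * g)` and a grayscale image with values in [0, 1]; the helper names `gaussian_kernel`, `blur`, and `sharpen` are made up for illustration and are not the project's actual code.

```python
import numpy as np

def gaussian_kernel(sigma):
    # 1D Gaussian truncated at about 3 sigma and normalized to sum to 1.
    radius = int(3 * sigma)
    x = np.arange(-radius, radius + 1)
    k = np.exp(-x**2 / (2 * sigma**2))
    return k / k.sum()

def blur(img, sigma):
    # Separable Gaussian blur of a 2D array; edges are padded by replication.
    k = gaussian_kernel(sigma)
    r = len(k) // 2
    padded = np.pad(img, r, mode="edge")
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)

def sharpen(img, sigma=5.0, alpha=2.0):
    # Details = original minus blurred; add them back scaled by alpha.
    detail = img - blur(img, sigma)
    return np.clip(img + alpha * detail, 0.0, 1.0)
```

Calling `sharpen(img, sigma=5, alpha=2)` uses the parameters listed for the examples below; a larger `alpha` exaggerates edges more, and the final clip keeps the result in the displayable range.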

Examples: Campanile and Raccoons, alpha = 2 and sigma = 5

Blurry Campanile

Sharpened Campanile

Blurry Raccoons

Sharpened Raccoons

Part 1.2: Hybrid Images

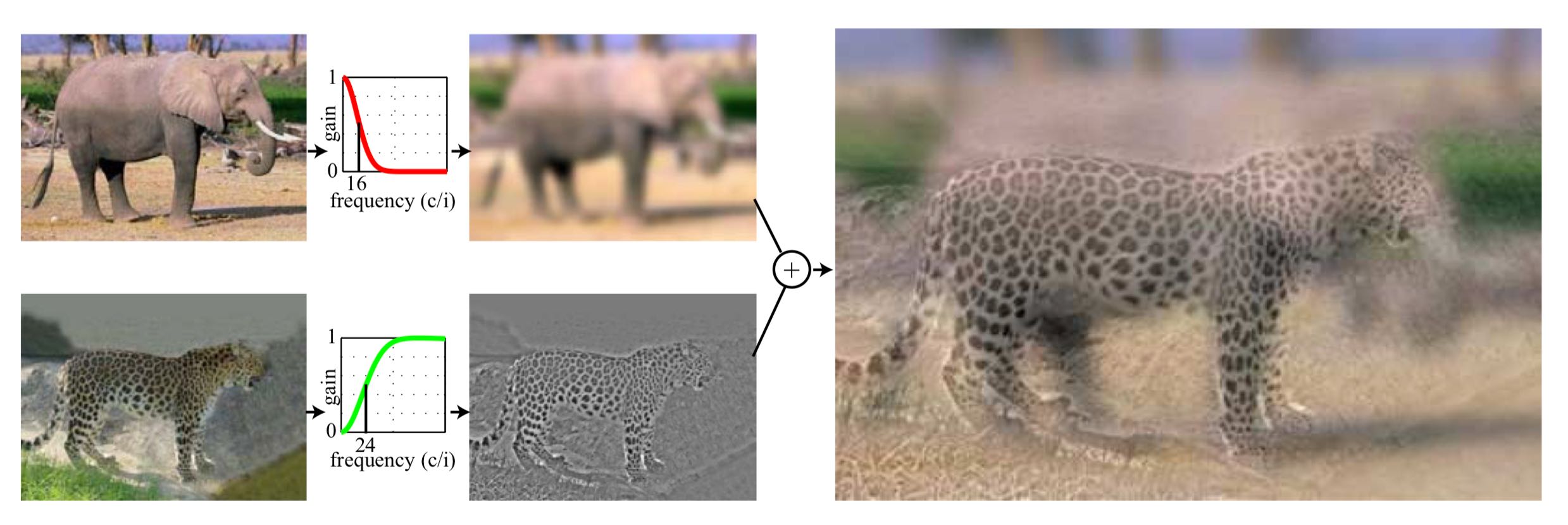

As described in the SIGGRAPH 2006 paper by Oliva, Torralba, and Schyns, a hybrid image is created by combining the high frequencies of one image with the low frequencies of another. The idea is that high frequencies tend to dominate perception when they are visible, but at a distance only the low-frequency (smooth) part of the signal can be seen. Here is a nice illustration of this method by Oliva, Torralba, and Schyns:

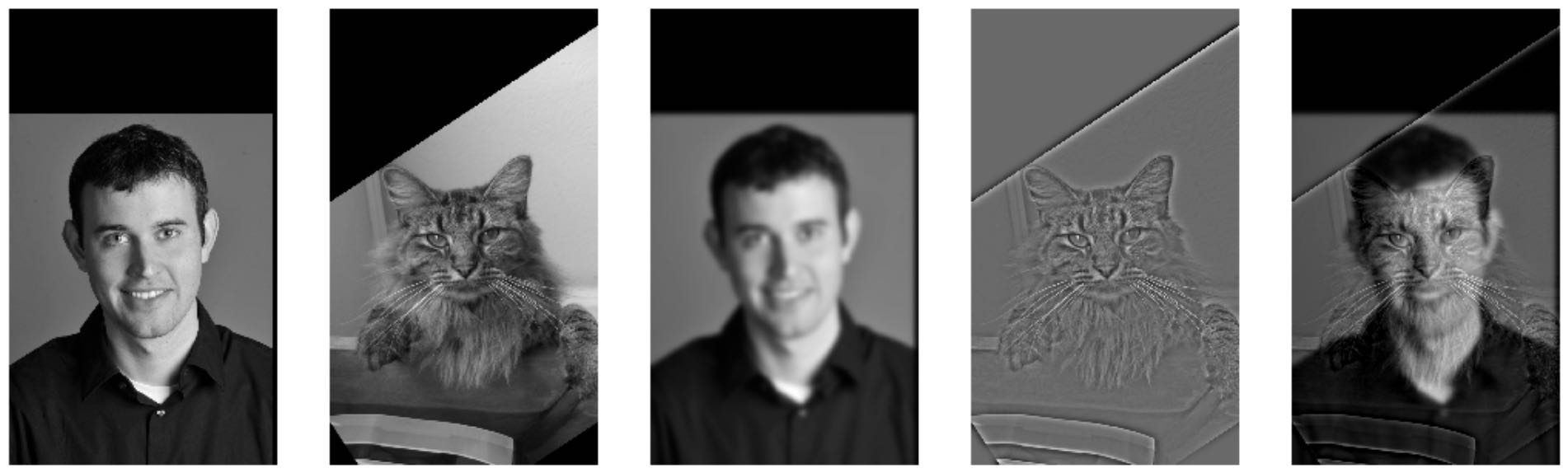
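As a rough sketch of the idea, a hybrid can be formed by adding a low-pass version of one image to a high-pass version of another. The code below assumes two aligned grayscale images of the same size with values in [0, 1]; the names `blur` and `hybrid` are hypothetical, and the high-pass filter is taken to be "image minus its Gaussian blur", which may differ from the filter actually used in this project.

```python
import numpy as np

def gaussian_kernel(sigma):
    # 1D Gaussian truncated at about 3 sigma and normalized to sum to 1.
    radius = int(3 * sigma)
    x = np.arange(-radius, radius + 1)
    k = np.exp(-x**2 / (2 * sigma**2))
    return k / k.sum()

def blur(img, sigma):
    # Separable Gaussian blur of a 2D array; edges are padded by replication.
    k = gaussian_kernel(sigma)
    r = len(k) // 2
    padded = np.pad(img, r, mode="edge")
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)

def hybrid(far_img, near_img, sigma_low, sigma_high):
    # far_img contributes its low frequencies (what is seen from a distance).
    low = blur(far_img, sigma_low)
    # near_img contributes its high frequencies (what is seen up close).
    high = near_img - blur(near_img, sigma_high)
    return np.clip(low + high, 0.0, 1.0)
```

With the first example's settings this would be called as `hybrid(derek, nutmeg, sigma_low=7, sigma_high=11)`, where `derek` and `nutmeg` stand for the two aligned input arrays.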

Examples: Derek and his former cat Nutmeg, sigma_low = 7 and sigma_high = 11

Panels: Derek; Nutmeg; Blurred Derek; Nutmeg's details; Hybrid

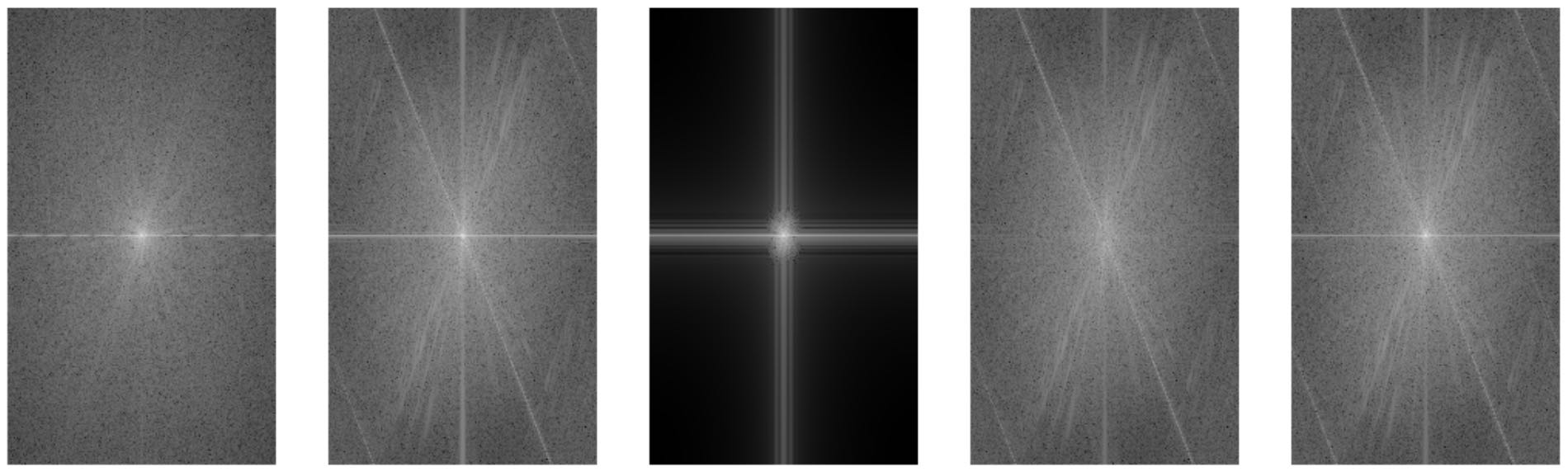

The Fourier Analysis of images above

Results: the larger image shows details of Nutmeg and the smaller image shows Derek's contour

Additional Examples 1 (failure): Tiger and Lion, sigma_high = 3 and sigma_low = 6

This example fails because the background of the tiger image is not very clean.

Additional Examples 2 (success): Tiger and Lion, sigma_high = 3 and sigma_low = 6

We tackle the problem above by cleaning the background of the tiger image with a mask, which gives:

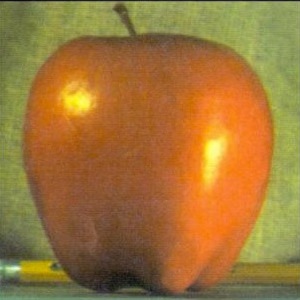

Additional Examples 3 (failure): Apple and Orange, sigma_high = 2 and sigma_low = 10

This example fails because the apple and the orange look too similar.

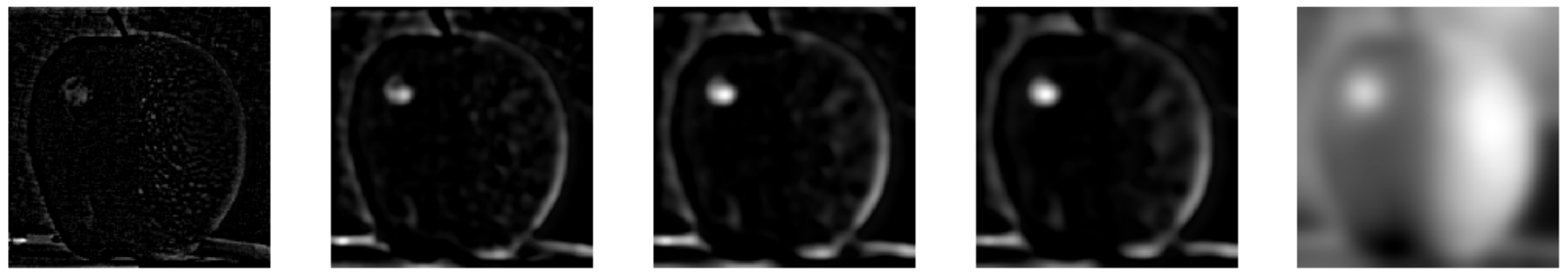

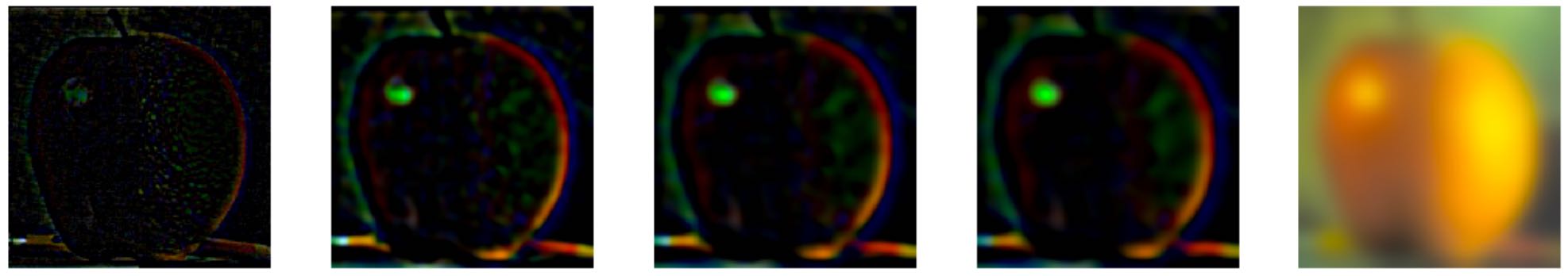

Bells & Whistles (Part 1.2): Using color to enhance the effect, and finding which combination gives the best result.

Panels: Using color for low; Using color for high; Using color for both

Using color for the low-pass image works best. Color on the high-pass image only adds barely noticeable detail, while color on the low-pass image is not so overwhelming that it affects the details of the high-pass image.
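The "color for low only" variant can be sketched as follows: the low-pass image is filtered per channel, while the high-pass image is first converted to grayscale. This is a hedged illustration, assuming aligned RGB arrays of shape (H, W, 3) with values in [0, 1]; the function names are hypothetical and the grayscale conversion is a plain channel mean, chosen only for simplicity.

```python
import numpy as np

def gaussian_kernel(sigma):
    # 1D Gaussian truncated at about 3 sigma and normalized to sum to 1.
    radius = int(3 * sigma)
    x = np.arange(-radius, radius + 1)
    k = np.exp(-x**2 / (2 * sigma**2))
    return k / k.sum()

def blur(img, sigma):
    # Separable Gaussian blur of a 2D array; edges are padded by replication.
    k = gaussian_kernel(sigma)
    r = len(k) // 2
    padded = np.pad(img, r, mode="edge")
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)

def hybrid_color_low(far_rgb, near_rgb, sigma_low, sigma_high):
    # Low frequencies keep their color: blur each RGB channel separately.
    low = np.stack([blur(far_rgb[..., c], sigma_low) for c in range(3)], axis=-1)
    # High frequencies are grayscale: average the channels, then high-pass.
    near_gray = near_rgb.mean(axis=-1)
    high = near_gray - blur(near_gray, sigma_high)
    # The same gray detail is added to all three channels.
    return np.clip(low + high[..., None], 0.0, 1.0)
```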

Part 1.3: Gaussian and Laplacian Stacks

In a Gaussian stack, each subsequent image is a Gaussian-blurred (weighted-average) version of the previous one, kept at the original resolution.

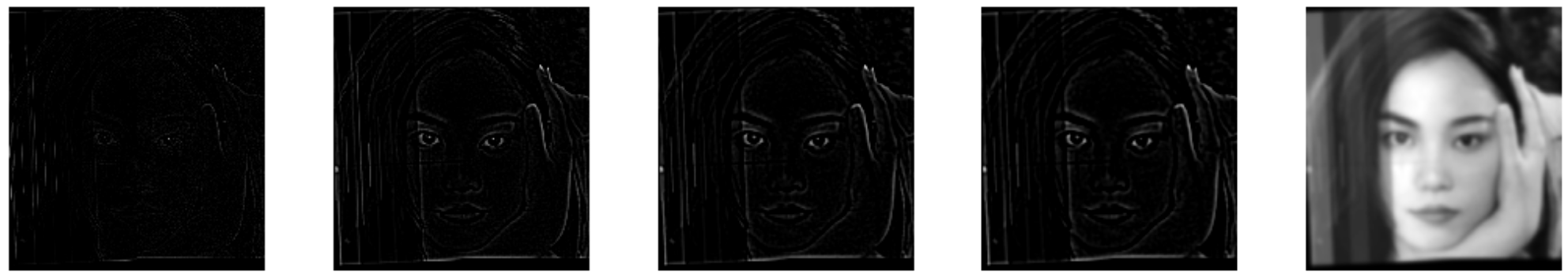

In a Laplacian stack, each image is the difference between two consecutive levels of the Gaussian stack, and the last image is the same as the last image of the corresponding Gaussian stack.
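The two stack definitions can be sketched as below. This is a minimal illustration for a 2D grayscale array, assuming that every level is produced by blurring the previous level with the same sigma (the report does not state how sigma changes per level); `gaussian_stack` and `laplacian_stack` are hypothetical names.

```python
import numpy as np

def gaussian_kernel(sigma):
    # 1D Gaussian truncated at about 3 sigma and normalized to sum to 1.
    radius = int(3 * sigma)
    x = np.arange(-radius, radius + 1)
    k = np.exp(-x**2 / (2 * sigma**2))
    return k / k.sum()

def blur(img, sigma):
    # Separable Gaussian blur of a 2D array; edges are padded by replication.
    k = gaussian_kernel(sigma)
    r = len(k) // 2
    padded = np.pad(img, r, mode="edge")
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)

def gaussian_stack(img, sigma, levels):
    # Level 0 is the input; each later level blurs the previous one.
    # No downsampling, so every level has the input's shape.
    stack = [img]
    for _ in range(levels - 1):
        stack.append(blur(stack[-1], sigma))
    return stack

def laplacian_stack(img, sigma, levels):
    # Differences of consecutive Gaussian levels, plus the last Gaussian level.
    g = gaussian_stack(img, sigma, levels)
    lap = [g[i] - g[i + 1] for i in range(levels - 1)]
    lap.append(g[-1])
    return lap
```

Because the differences telescope, summing all levels of the Laplacian stack gives back the original image, which is a handy sanity check.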

Gaussian Stacks

Laplacian Stacks

Example 1 (interesting image):

Example 2 (hybrid image: the tiger-lion hybrid from Part 1.2):

Gaussian Stacks

Laplacian Stacks

Part 1.4: Multiresolution Blending (a.k.a. the oraple!)

In this part, we blend two images seamlessly using multiresolution blending, as described in the 1983 paper by Burt and Adelson.

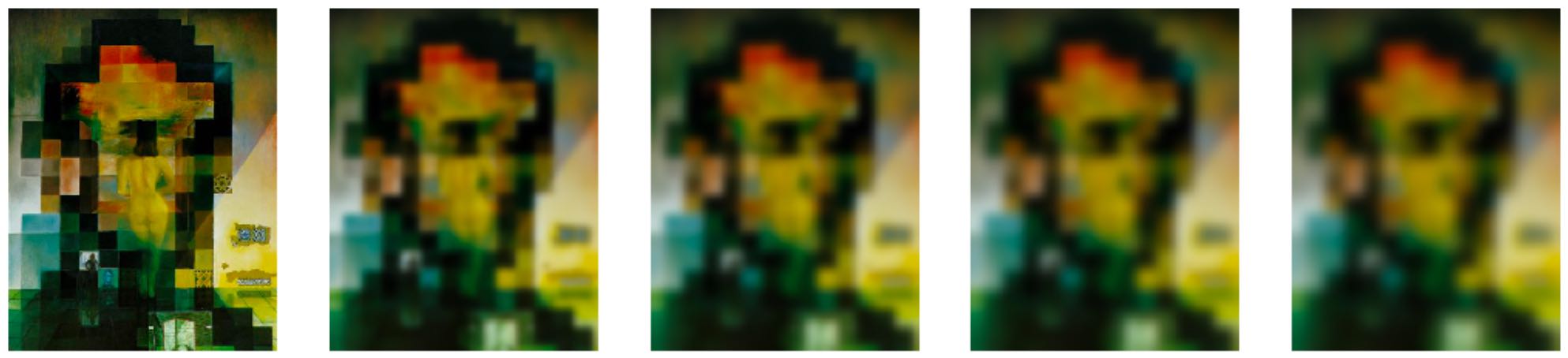
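A compact sketch of stack-based multiresolution blending is given below: each Laplacian level of the two images is mixed with a progressively blurrier copy of the mask, and the mixed levels are summed. It assumes aligned grayscale images and a mask in [0, 1] of the same shape, with the same blur applied at every level; all function names are hypothetical, and the defaults simply mirror the parameters quoted for the first example.

```python
import numpy as np

def gaussian_kernel(sigma):
    # 1D Gaussian truncated at about 3 sigma and normalized to sum to 1.
    radius = int(3 * sigma)
    x = np.arange(-radius, radius + 1)
    k = np.exp(-x**2 / (2 * sigma**2))
    return k / k.sum()

def blur(img, sigma):
    # Separable Gaussian blur of a 2D array; edges are padded by replication.
    k = gaussian_kernel(sigma)
    r = len(k) // 2
    padded = np.pad(img, r, mode="edge")
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)

def gaussian_stack(img, sigma, levels):
    # Repeatedly blurred copies of img, all at full resolution.
    stack = [img]
    for _ in range(levels - 1):
        stack.append(blur(stack[-1], sigma))
    return stack

def laplacian_stack(img, sigma, levels):
    # Band-pass levels plus the final low-pass residual.
    g = gaussian_stack(img, sigma, levels)
    return [g[i] - g[i + 1] for i in range(levels - 1)] + [g[-1]]

def multires_blend(a, b, mask, sigma=5.0, levels=5, sigma_mask=15.0):
    # mask == 1 selects image a, mask == 0 selects image b.
    la = laplacian_stack(a, sigma, levels)
    lb = laplacian_stack(b, sigma, levels)
    gm = gaussian_stack(mask, sigma_mask, levels)
    # Fine detail is mixed with a sharp mask, coarse structure with a soft one.
    out = sum(m * x + (1.0 - m) * y for m, x, y in zip(gm, la, lb))
    return np.clip(out, 0.0, 1.0)
```

For a color result, one option is to run the same function on each RGB channel and stack the outputs.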

Example 1 (Oraple): sigma = 5, N = 5 (parameters for the Laplacian stacks), sigma(mask) = 15 (parameter for the Gaussian stack applied to the mask)

Laplacian stacks during multiresolution blending

Gray Oraple

Bells & Whistles (Part 1.4): Using color to enhance the effect.

Colored Laplacian stacks during multiresolution blending

Colored Oraple

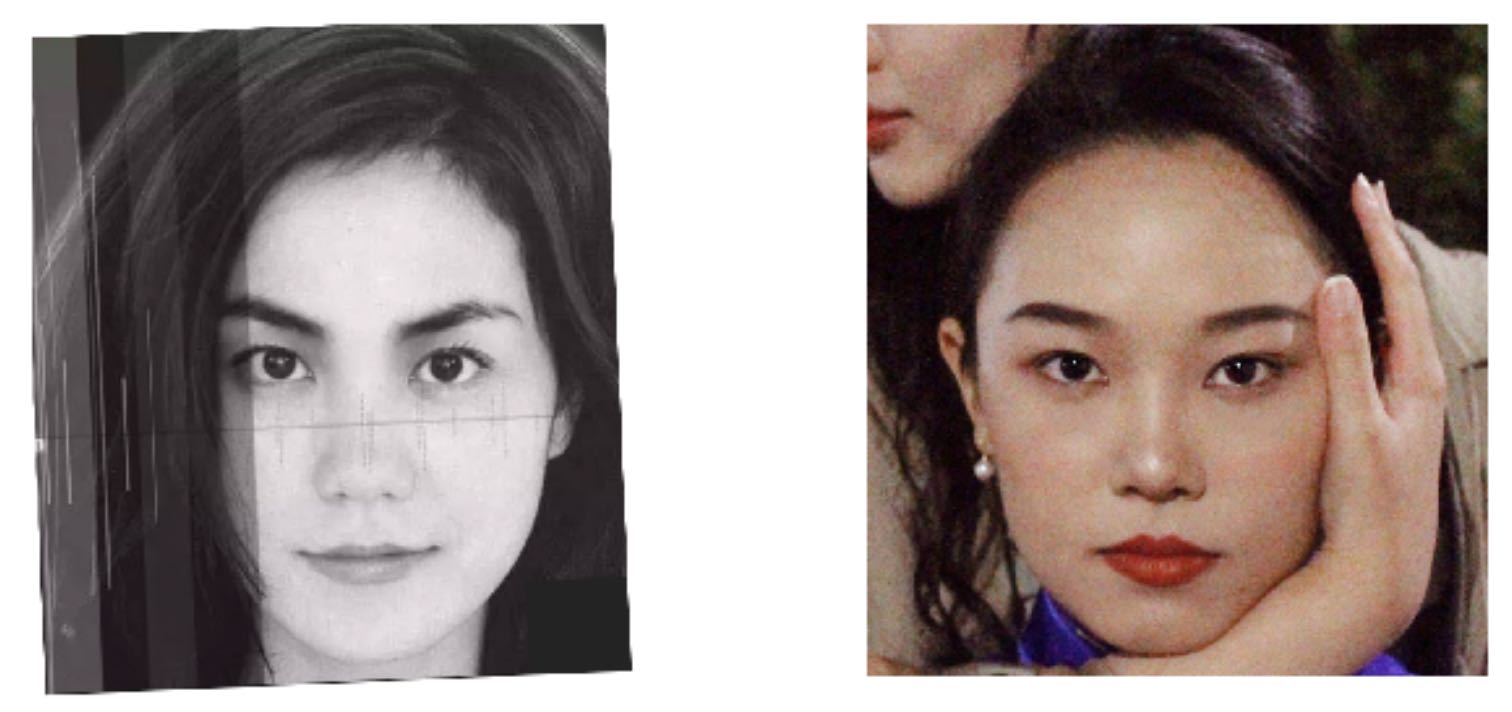

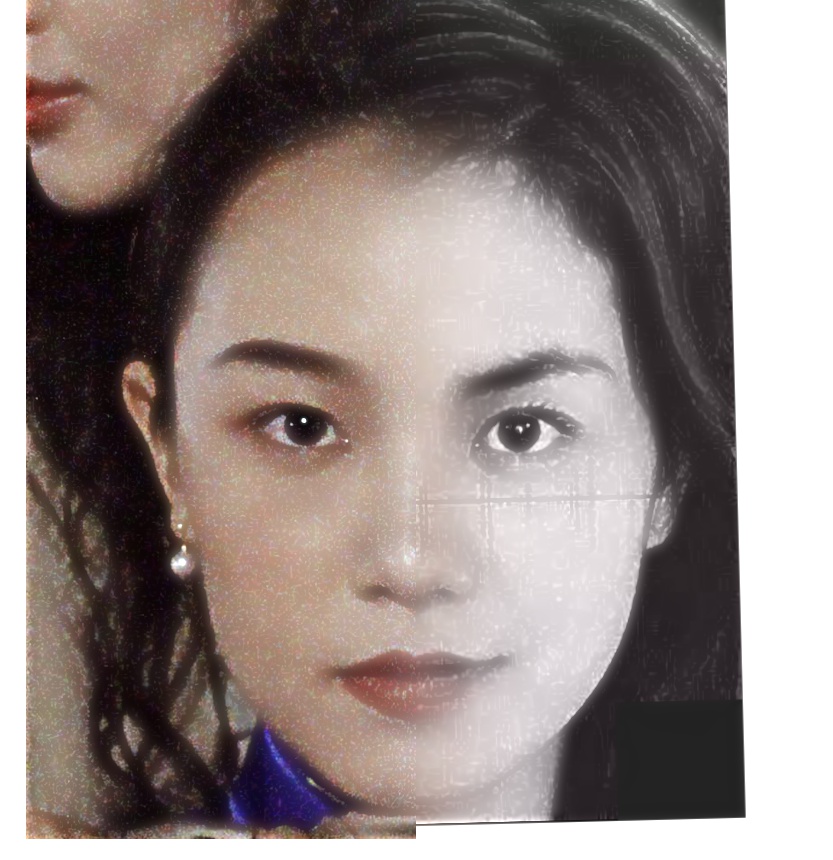

Additional Examples 1 (Xixi and Faye): sigma = 3, N = 5, sigma(mask) = 15

Faye Wong Xixi S????

Faye S???? Xixi Wong

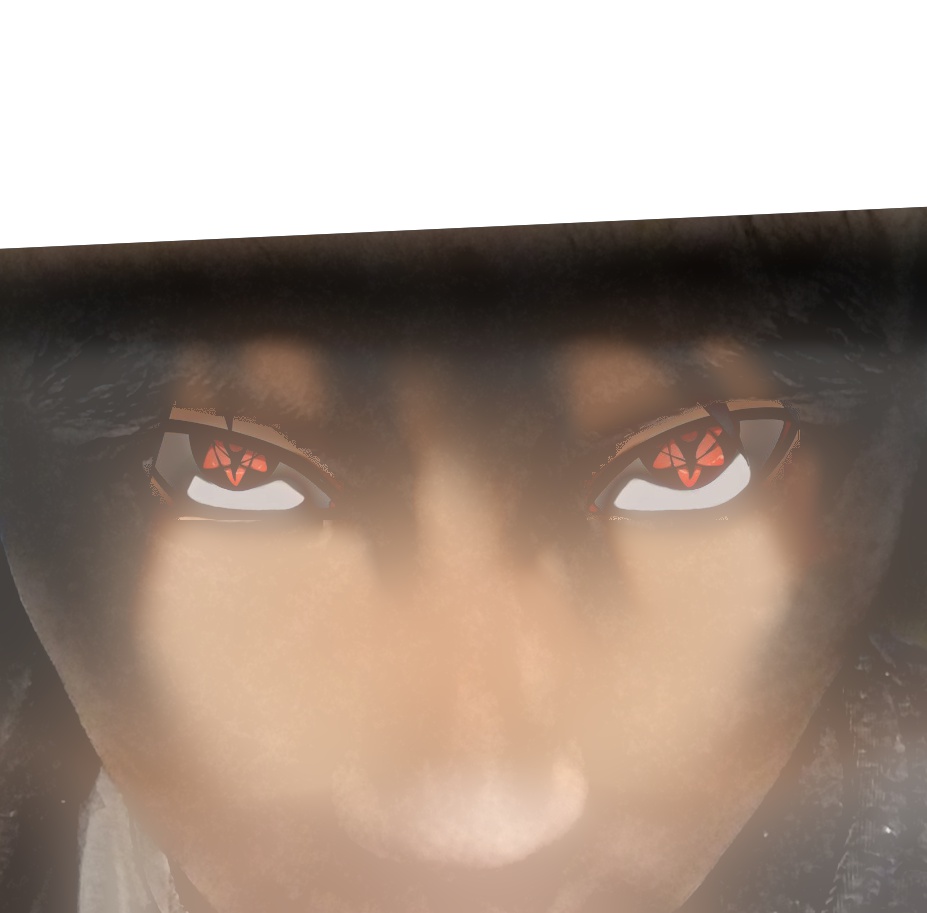

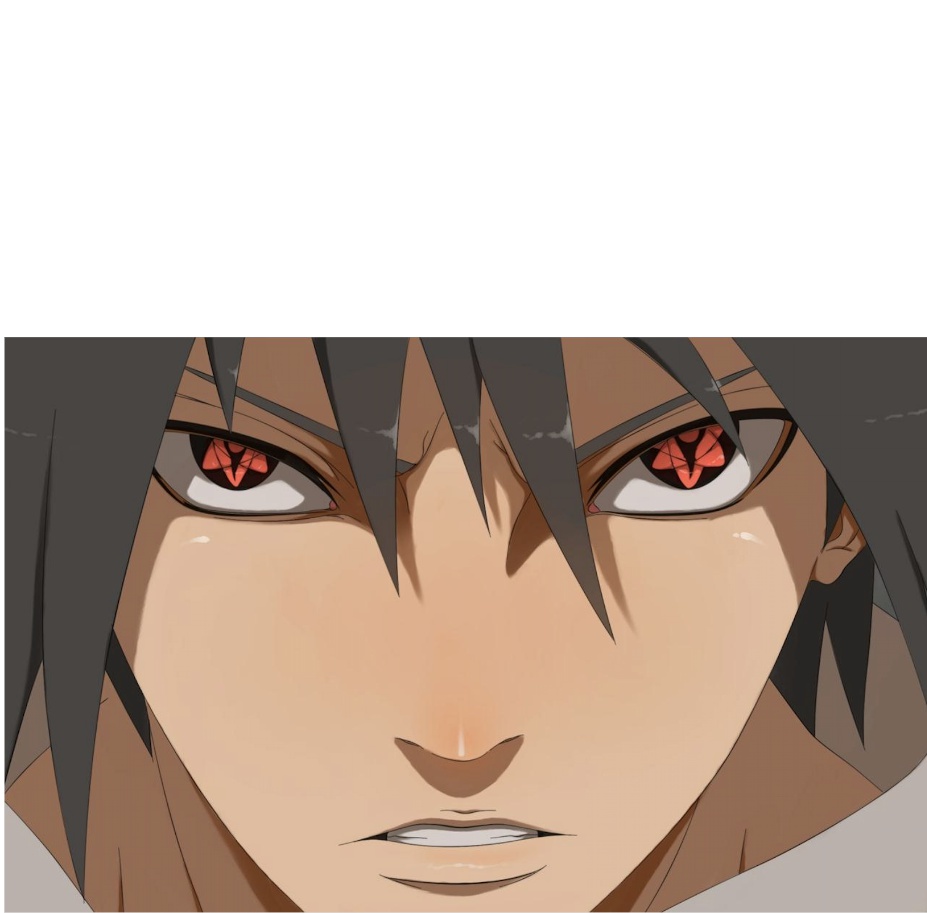

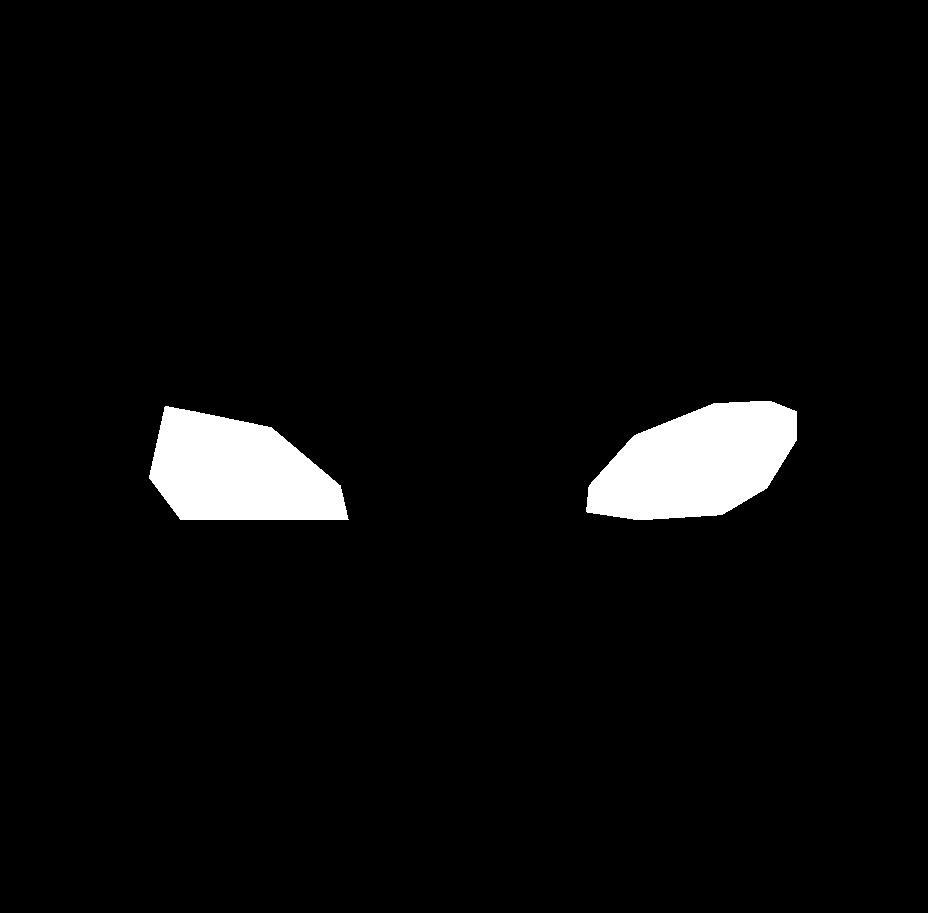

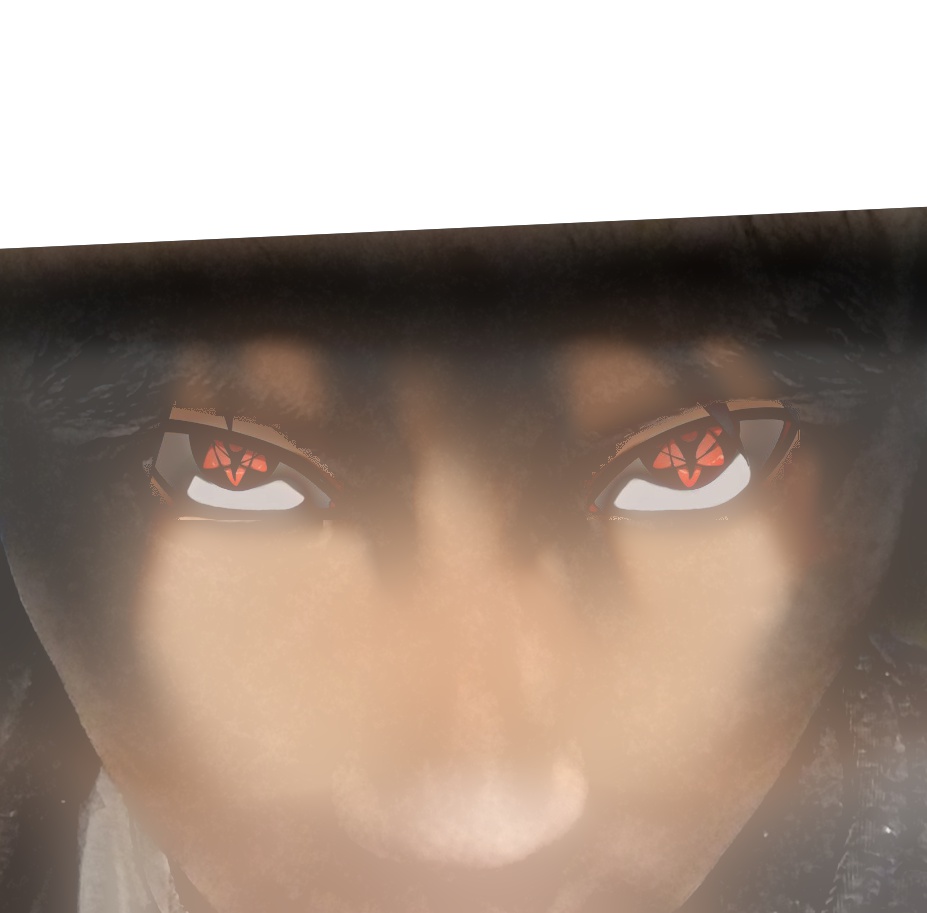

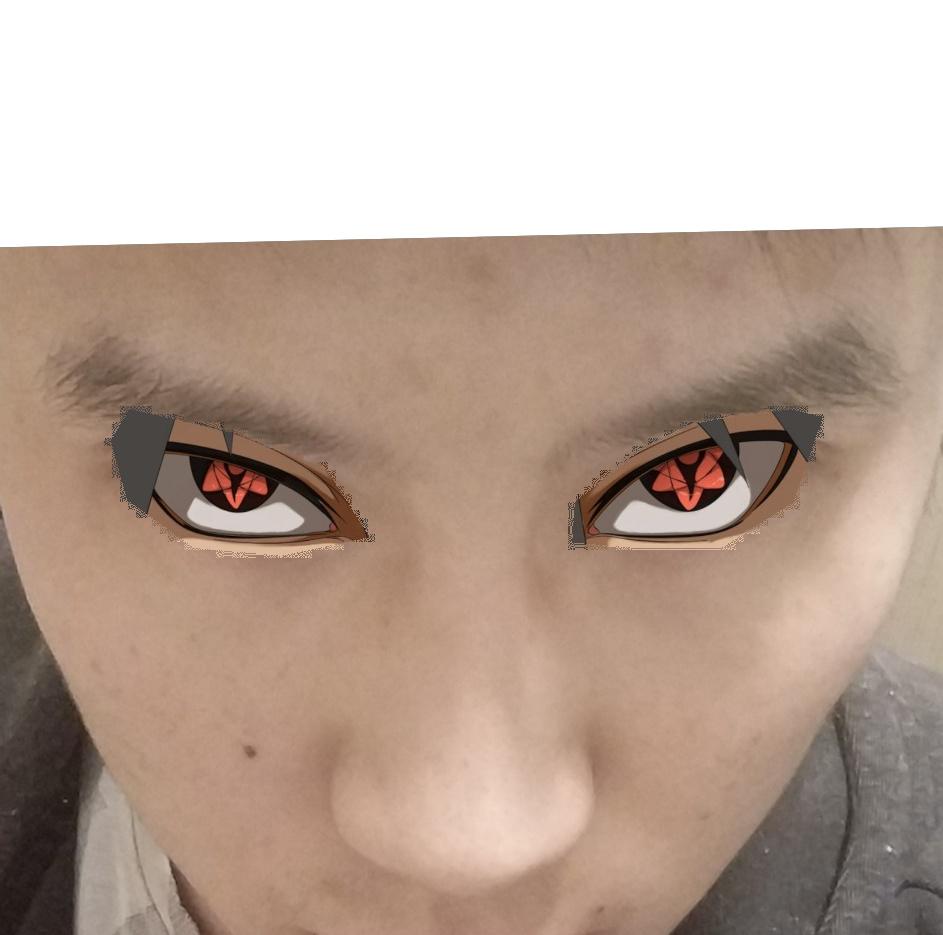

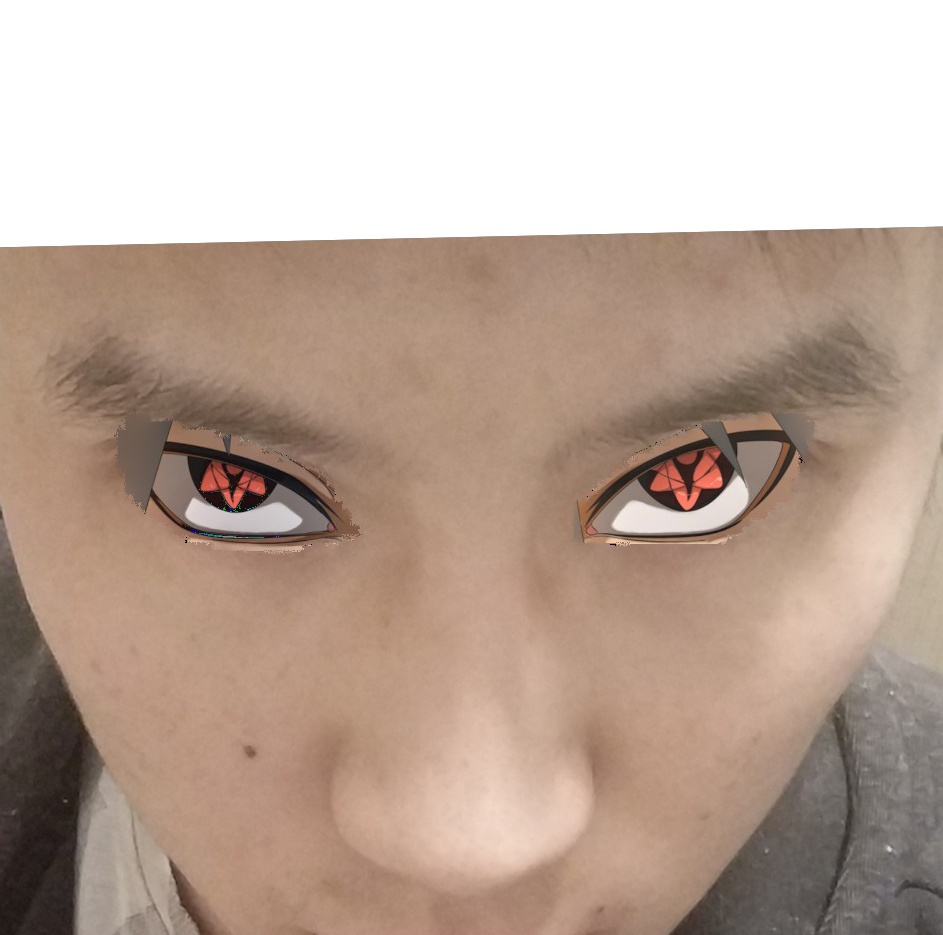

Additional Examples 2 (Mangekyou Sharingan and me; irregular mask): sigma = 15, N = 15, sigma (mask) = 30

Panels: Sasuke; mask; me

😂

Part 2: Gradient Domain Fusion

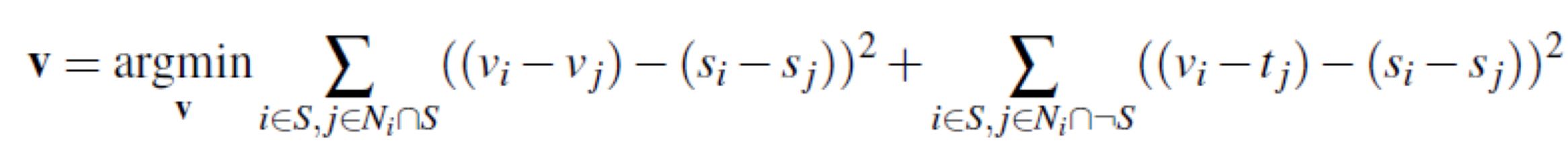

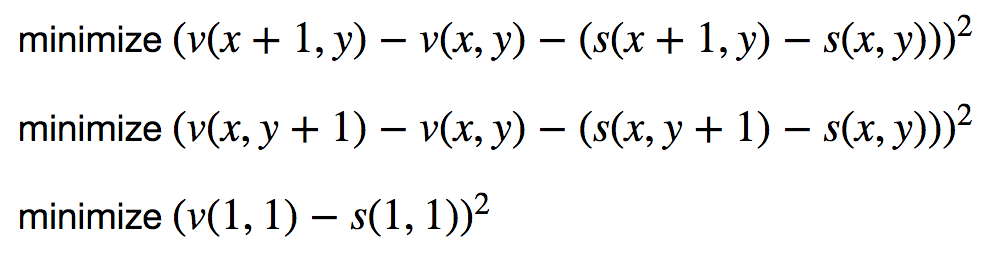

In Part 1, our only concern when blending images was making the result seamless. Unlike the Part 1 implementation, which worked on Laplacian stacks, this part explores the gradient domain. To make pixels around the blending boundary match in color while preserving the features of both images, we only need to solve an optimization problem with blending constraints (Poisson blending).

Part 2.0: A brief description
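For reference, the optimization problem behind Poisson blending is usually written as the least-squares objective below. This is the standard textbook form, given here as an assumption about what this section describes; the symbols are chosen for illustration: $s$ is the source image, $t$ the target image, $S$ the masked source region, $N_i$ the four neighbors of pixel $i$, and $v$ the unknown pixel values inside $S$.

$$
\mathbf{v} = \arg\min_{\mathbf{v}} \sum_{i \in S,\ j \in N_i \cap S} \big((v_i - v_j) - (s_i - s_j)\big)^2 \;+\; \sum_{i \in S,\ j \in N_i,\ j \notin S} \big((v_i - t_j) - (s_i - s_j)\big)^2
$$

The first sum asks the new pixels to reproduce the source gradients inside the region. The second sum ties the region to the target along its boundary, which is what makes the colors match at the seam.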


Part 2.1: A Toy Problem

In this part, we reconstruct an image using only the pixel in the top-left corner of the original image and all of its gradients along the x-axis and y-axis.

The problem is equivalent to solving:
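One way to pose the toy problem is as a linear least-squares system with one equation per x-gradient, one per y-gradient, and one extra equation pinning the top-left pixel. The sketch below assumes a small grayscale image and builds a dense matrix for clarity; a real image would need a sparse matrix and a sparse solver. The function name `reconstruct` is hypothetical.

```python
import numpy as np

def reconstruct(img):
    h, w = img.shape
    idx = np.arange(h * w).reshape(h, w)      # pixel (y, x) -> unknown index
    n_eq = h * (w - 1) + (h - 1) * w + 1
    A = np.zeros((n_eq, h * w))
    b = np.zeros(n_eq)
    e = 0
    # x-gradients: v(y, x+1) - v(y, x) = s(y, x+1) - s(y, x)
    for y in range(h):
        for x in range(w - 1):
            A[e, idx[y, x + 1]] = 1.0
            A[e, idx[y, x]] = -1.0
            b[e] = img[y, x + 1] - img[y, x]
            e += 1
    # y-gradients: v(y+1, x) - v(y, x) = s(y+1, x) - s(y, x)
    for y in range(h - 1):
        for x in range(w):
            A[e, idx[y + 1, x]] = 1.0
            A[e, idx[y, x]] = -1.0
            b[e] = img[y + 1, x] - img[y, x]
            e += 1
    # Pin the top-left pixel so the overall brightness is determined.
    A[e, idx[0, 0]] = 1.0
    b[e] = img[0, 0]
    v, *_ = np.linalg.lstsq(A, b, rcond=None)
    return v.reshape(h, w)
```

Without the last equation the gradients only determine the image up to an additive constant, which is why the single corner pixel is needed.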

Result:

Panels: Original image; Reconstructed image

Part 2.2: Poisson Blending

Examples:

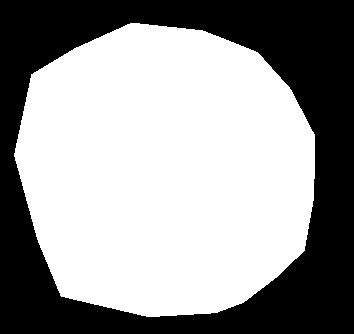

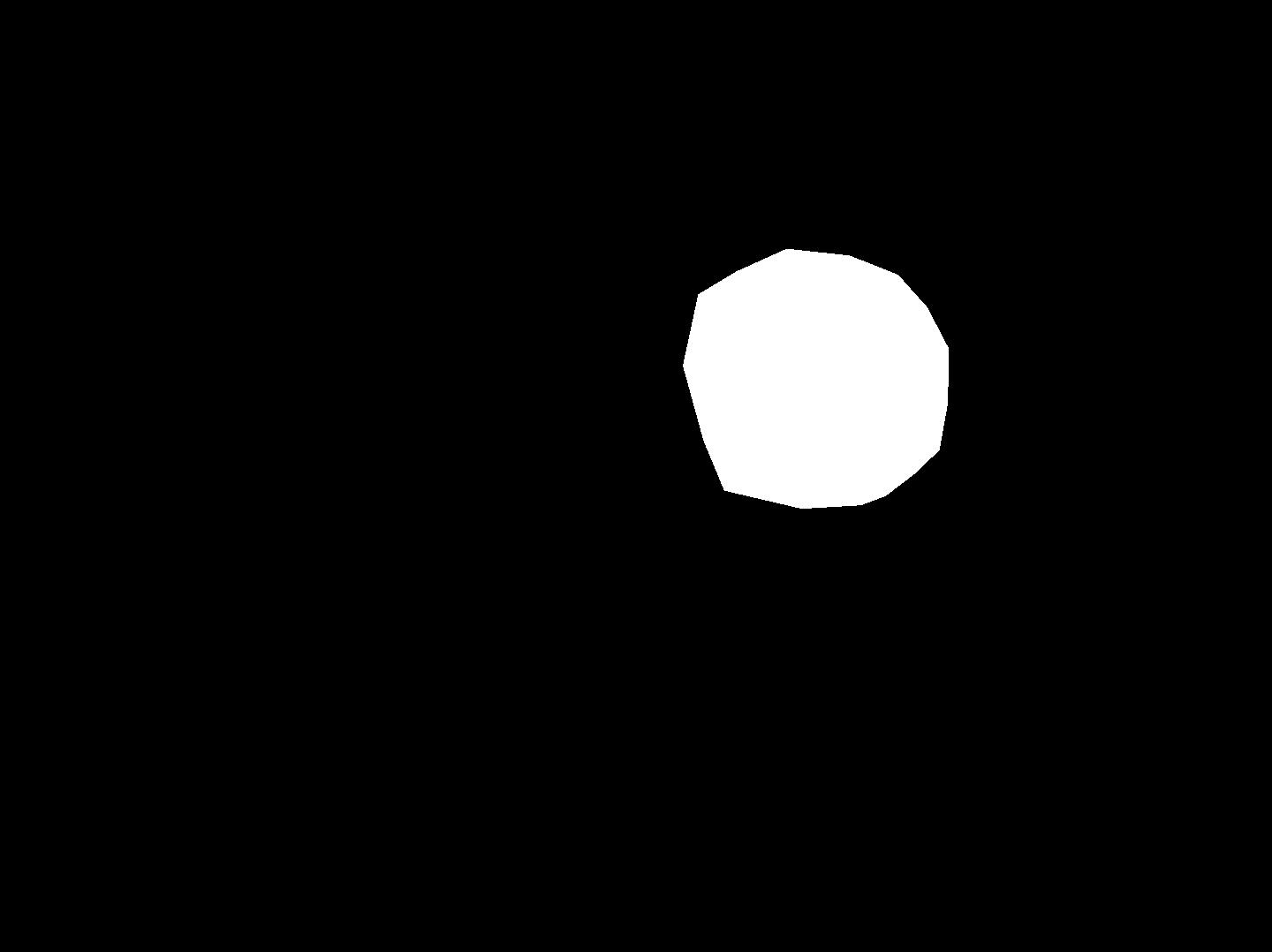

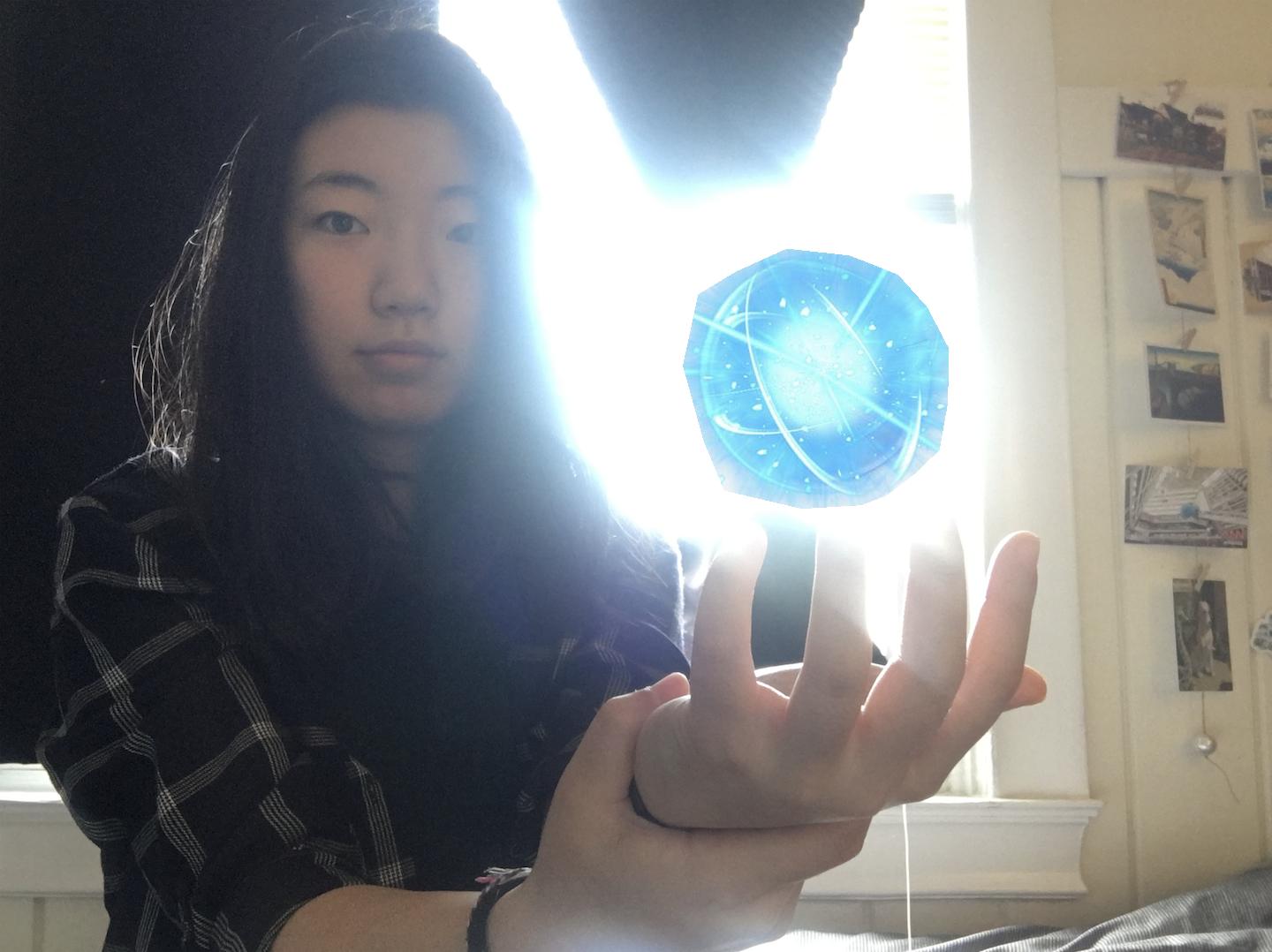

Panels: Source Image (Rasengan); Source Mask; Coco (Target Image); Target Mask

Panels: Direct Copy Image; Poisson Blended Image (Coco Rasengan)

Explanation of the Examples:

As described in Part 2.0, I first set up a system of linear equations that solves the optimization problem. At first, I made vt represent an image of the same size as the Target Image because that is easier to implement. However, it took about 20 minutes to run, so I later modified getMask.m to also return the location where the source image is copied. Using these additional values, we can set up equations to solve for vs, which represents an image of the same size as the Source Image, and then blend the image represented by vs into the Target Image by copying pixels. It only takes 20 seconds to run now!

Also, when I directly copied the pixels of the image represented by vs onto the background, a large black seam appeared in between. I don't know exactly what caused this, but I worked around it by adding an if statement that only copies pixels with values greater than 0.03.
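To illustrate why shrinking the set of unknowns helps, here is a small sketch that solves only for the pixels inside the mask and copies the result into the target. It is a variant of the idea above, not the project's code (which solves for an image the size of the Source Image): it assumes grayscale source and target arrays that are already aligned and the same size, a boolean mask that does not touch the image border, and a dense solve that is only practical for tiny regions. The name `poisson_blend` is hypothetical.

```python
import numpy as np

def poisson_blend(source, target, mask):
    ys, xs = np.nonzero(mask)
    n = len(ys)
    # Map each masked pixel to the index of its unknown.
    index = -np.ones(mask.shape, dtype=int)
    index[ys, xs] = np.arange(n)
    A = np.zeros((4 * n, n))
    b = np.zeros(4 * n)
    e = 0
    for k, (y, x) in enumerate(zip(ys, xs)):
        for dy, dx in ((0, 1), (0, -1), (1, 0), (-1, 0)):
            ny, nx = y + dy, x + dx
            grad = source[y, x] - source[ny, nx]
            A[e, k] = 1.0
            if mask[ny, nx]:
                # Neighbor is also unknown: v_i - v_j = s_i - s_j
                A[e, index[ny, nx]] = -1.0
                b[e] = grad
            else:
                # Neighbor is a fixed target pixel: v_i = (s_i - s_j) + t_j
                b[e] = grad + target[ny, nx]
            e += 1
    v, *_ = np.linalg.lstsq(A, b, rcond=None)
    out = target.copy()
    out[ys, xs] = v          # copy only the solved pixels into the target
    return out
```

Since only masked pixels are written back, pixels outside the mask never reach the output in this formulation, so no intensity threshold is needed when copying.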

Additional Examples (success):

Panels: Source Image; Source Mask; Target Image; Target Mask

Panels: Direct Copy Image; Poisson Blended Image

Additional Examples (failures):

While I was trying to add more penguins to the snow, the bottom-left penguin ended up with a weird face, as you can see. This is because there is a large color contrast across the penguin's boundary.

The same thing happens to the Rasengan below : (

Panels: Source Image (Rasengan); Source Mask; Coco (Target Image); Target Mask

Panels: Direct Copy Image; Poisson Blended Image (Coco Rasengan)

Part 2.2.1: Comparison of Blending Techniques

Panels: Source Image (Sasuke); Target Image (me); Source Mask

Panels: Laplacian pyramid blending; Direct Copy; Poisson Image Blending

Laplacian pyramid blending works better for the images above because the source image and the target image don't have the same kind of color and texture.

In conclusion, if the source image and the target image are drastically different, Laplacian pyramid blending works better because we want the blended image to match not only in color but also in texture and shape. However, if the source image and the target image are compatible, like a penguin and snow, then Poisson image blending works better because it preserves more details of both images.

Thanks for reading!