For this project, we practiced using face morphing techniques to morph one face into another, compute the mean face for a group of people, and create caricatures by extrapolating from a mean face.

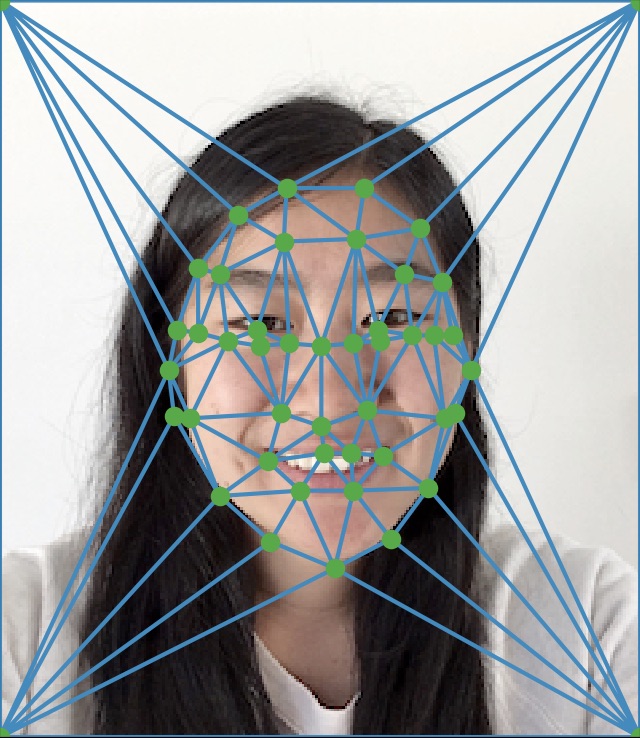

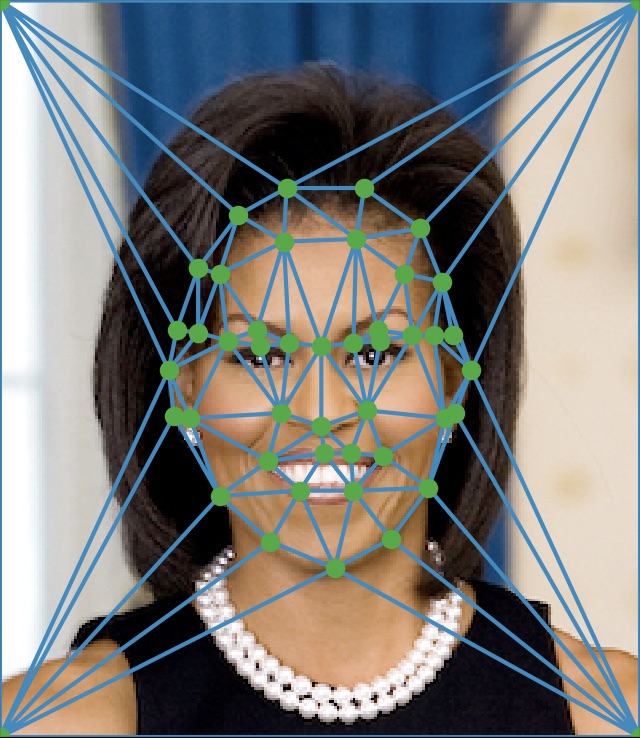

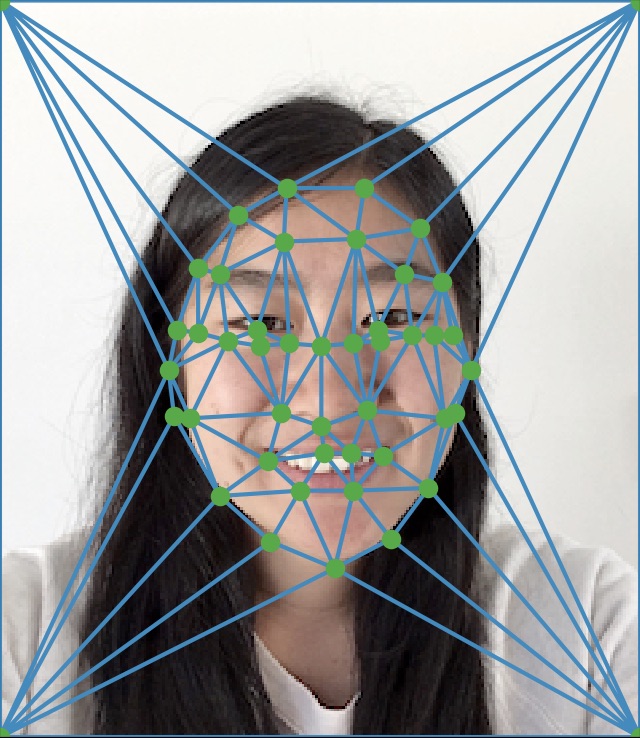

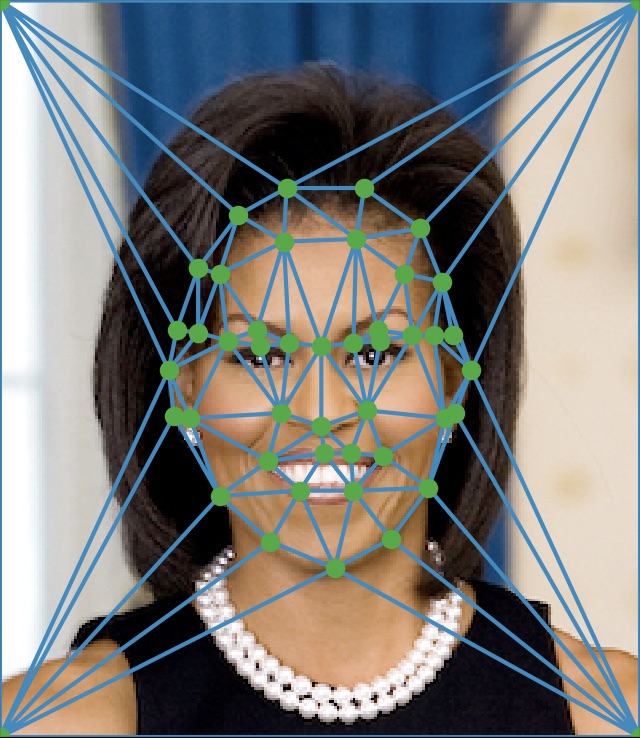

First, I annotated 43 points that corresponded to the same features on both images. Then, I averaged the two images' corresponding points to get the corresponding points for a midpoint image. Next, I ran Delaunay triangulation on the midpoint image's points. For each triangle, I computed an affine transformation going from the target (midpoint) triangle back to the matching triangle in the source image. To fill in the final image, I mapped each pixel's coordinates into the source image using the affine matrix of the triangle containing that pixel, then used interp2d to extract the interpolated pixel value at the mapped location. Doing this for each color channel and combining the channels gave my final result.
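The per-triangle affine step can be sketched as below. This is a minimal illustration under stated assumptions, not the exact project code: the function name `compute_affine` and the example triangles are hypothetical, and the matrix is solved for in homogeneous coordinates with NumPy.

```python
import numpy as np

def compute_affine(tri_from, tri_to):
    """Return the 3x3 affine matrix A such that A @ [x, y, 1] maps each
    vertex of tri_from onto the matching vertex of tri_to.

    tri_from, tri_to: three (x, y) vertices each (hypothetical inputs).
    """
    # Stack the vertices as homogeneous columns, giving two 3x3 matrices.
    src = np.vstack([np.asarray(tri_from, dtype=float).T, np.ones(3)])
    dst = np.vstack([np.asarray(tri_to, dtype=float).T, np.ones(3)])
    # A @ src = dst, so A = dst @ inv(src). The triangle must not be degenerate.
    return dst @ np.linalg.inv(src)

# Inverse warp: map a pixel of the midpoint triangle back into the source image.
tri_mid = [(0, 0), (1, 0), (0, 1)]   # triangle in the midpoint shape
tri_src = [(2, 3), (4, 3), (2, 6)]   # matching triangle in the source image
A = compute_affine(tri_mid, tri_src)
src_xy = (A @ np.array([0.5, 0.5, 1.0]))[:2]  # where to sample the source image
```

Computing the matrix from the midpoint triangle to the source triangle (rather than the other way around) means every output pixel gets exactly one sample location, which avoids holes in the warped image.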

The morph sequence involved basically the same steps as part 1, except this time with a warp factor and a dissolve factor. At each step t, I computed the target shape's points as (1 - t) * pts_im1 + t * pts_im2, where pts_im1 and pts_im2 are the two images' corresponding points. I then computed each output pixel value as (1 - t) * pixel_val_im1(x,y) + t * pixel_val_im2(x,y).
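A small sketch of the two blends at a single step follows. The names `interpolate_points` and `cross_dissolve` are hypothetical, and the images passed to `cross_dissolve` are assumed to have already been warped to the intermediate shape.

```python
import numpy as np

def interpolate_points(pts1, pts2, warp_frac):
    """Blend two (N, 2) arrays of corresponding points into the target shape."""
    pts1 = np.asarray(pts1, dtype=float)
    pts2 = np.asarray(pts2, dtype=float)
    return (1.0 - warp_frac) * pts1 + warp_frac * pts2

def cross_dissolve(warped1, warped2, dissolve_frac):
    """Blend the pixel values of two images of identical shape."""
    warped1 = np.asarray(warped1, dtype=float)
    warped2 = np.asarray(warped2, dtype=float)
    return (1.0 - dissolve_frac) * warped1 + dissolve_frac * warped2

# One frame at t = 0.25, using t for both the warp and the dissolve.
t = 0.25
target_pts = interpolate_points([[0, 0], [10, 20]], [[4, 8], [10, 40]], t)
frame = cross_dissolve(np.zeros((2, 2, 3)), np.full((2, 2, 3), 100.0), t)
```

Keeping the warp and dissolve fractions as separate arguments makes it possible to change shape and color at different rates, although using the same t for both is the simplest choice.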

I used the Danes database of 37 images to calculate my mean face (http://www2.imm.dtu.dk/~aam/datasets/datasets.html). The idea was to first find the mean of the key points across all images, which gives the mean face shape. Then, I warped each image to that mean shape. Finally, I overlaid all of the warped faces by weighting each image's pixel values by 1/37, which amounts to averaging them. This gave the final result.
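The two averaging steps can be sketched as follows. The names `mean_shape` and `average_faces` are hypothetical, and the warp to the mean shape is assumed to have been applied to every image before `average_faces` is called.

```python
import numpy as np

def mean_shape(all_points):
    """Average a (num_images, num_points, 2) stack of key points over images."""
    return np.mean(np.asarray(all_points, dtype=float), axis=0)

def average_faces(warped_images):
    """Average images of identical shape, weighting each one by 1 / N."""
    n = len(warped_images)
    mean_img = np.zeros_like(np.asarray(warped_images[0], dtype=float))
    for img in warped_images:
        mean_img += np.asarray(img, dtype=float) / n
    return mean_img

# Toy data: two shapes with two points each, and three constant images.
shape = mean_shape([[[0, 0], [2, 2]], [[2, 4], [4, 6]]])
face = average_faces([np.full((2, 2, 3), v) for v in (0.0, 30.0, 60.0)])
```

Accumulating in floating point avoids overflow and rounding problems that would come from summing 8-bit pixel values directly.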

To create the caricature, I first computed the difference between my correspondence points and the mean image's correspondence points. Then, I added this difference to my correspondence points to get new, exaggerated correspondence points. Finally, I warped my image to the shape defined by these new correspondence points.
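The point extrapolation can be sketched as below. The function name `caricature_points` and the scale parameter `alpha` are assumptions added for illustration; `alpha = 1.0` corresponds to adding the difference once, as described above.

```python
import numpy as np

def caricature_points(my_pts, mean_pts, alpha=1.0):
    """Push my points further away from the mean shape.

    alpha is a hypothetical exaggeration strength: 0 leaves the points
    unchanged, and 1 adds the full difference from the mean once.
    """
    my_pts = np.asarray(my_pts, dtype=float)
    mean_pts = np.asarray(mean_pts, dtype=float)
    return my_pts + alpha * (my_pts - mean_pts)

# A point sitting 2 px right of and 2 px above the mean moves 2 px further each way.
new_pts = caricature_points([[10, 10]], [[8, 12]])
```

The image is then warped to `new_pts` with the same triangulation-based warp used for the other parts.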