Overview

In this project, we use simple image transformations, such as shifting and averaging, to generate complex effects such as depth refocusing and aperture adjustment. To do this, we take advantage of images captured on a 17 x 17 grid of camera positions in a plane orthogonal to the optical axis.

1: Depth Refocusing

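For images captured on an evenly spaced grid orthogonal to the optical axis, objects that are far away do not vary significantly in position across cameras, whereas closer objects vary more in position. We can take advantage of this for depth refocusing by shifting each image by its offset from the center camera, scaled by a constant alpha. That is, we shift the image from the camera at grid position $(u, v)$ by $\alpha \cdot (u - u_c, v - v_c)$, where $(u_c, v_c)$ is the position of the center camera (the sign depends on the image axis convention).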

By averaging these shifted images, we get an image in which the regions that end up aligned are in focus and the rest is blurry. Thus, by adjusting alpha, we can refocus at different depths: a smaller alpha focuses on the back of the scene and a larger alpha focuses on the front.
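The shift-and-average step described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not the code used to produce the results: the function name `refocus`, the `(row, col)` position layout, the use of the mean position as the grid center, the sign of the shift, and the rounding to whole pixels with `np.roll` are all choices made here for brevity.

```python
import numpy as np

def refocus(images, positions, alpha):
    """Shift each image by alpha times its camera offset, then average.

    images:    array of shape (N, H, W) or (N, H, W, C)
    positions: array of shape (N, 2) with each camera's (row, col) position
    alpha:     scale factor that selects the depth brought into focus
    """
    images = np.asarray(images, dtype=np.float64)
    positions = np.asarray(positions, dtype=np.float64)
    # Assumption: the grid center is the mean of all camera positions.
    center = positions.mean(axis=0)
    result = np.zeros_like(images[0])
    for img, pos in zip(images, positions):
        # Assumption: whole-pixel shifts with wrap-around at the borders.
        dy, dx = np.rint(alpha * (pos - center)).astype(int)
        result += np.roll(img, shift=(dy, dx), axis=(0, 1))
    return result / len(images)
```

Calling `refocus(images, positions, 0.0)` simply averages the unshifted images. Because `np.roll` wraps pixels around the border and only handles integer shifts, an interpolating shift such as `scipy.ndimage.shift` would likely give smoother results for fractional offsets.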

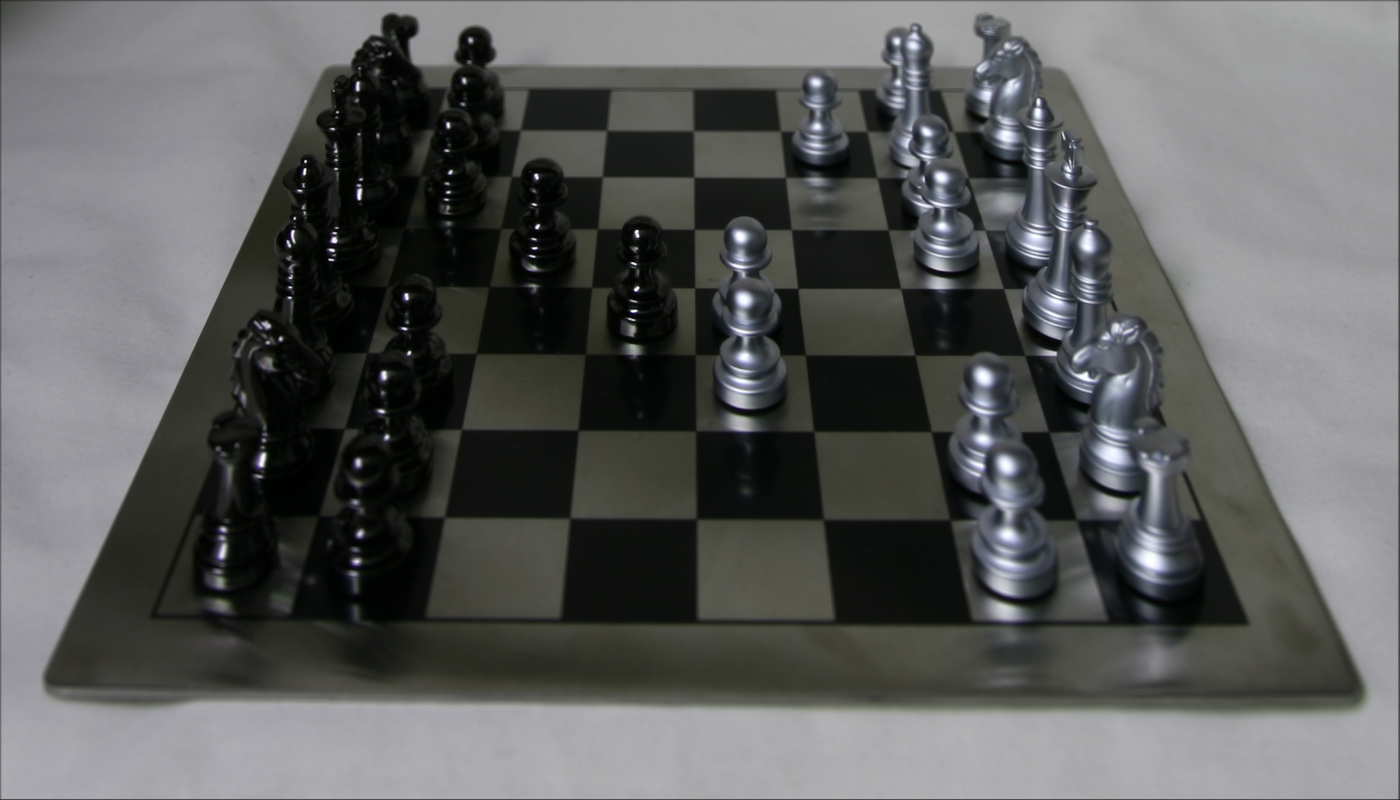

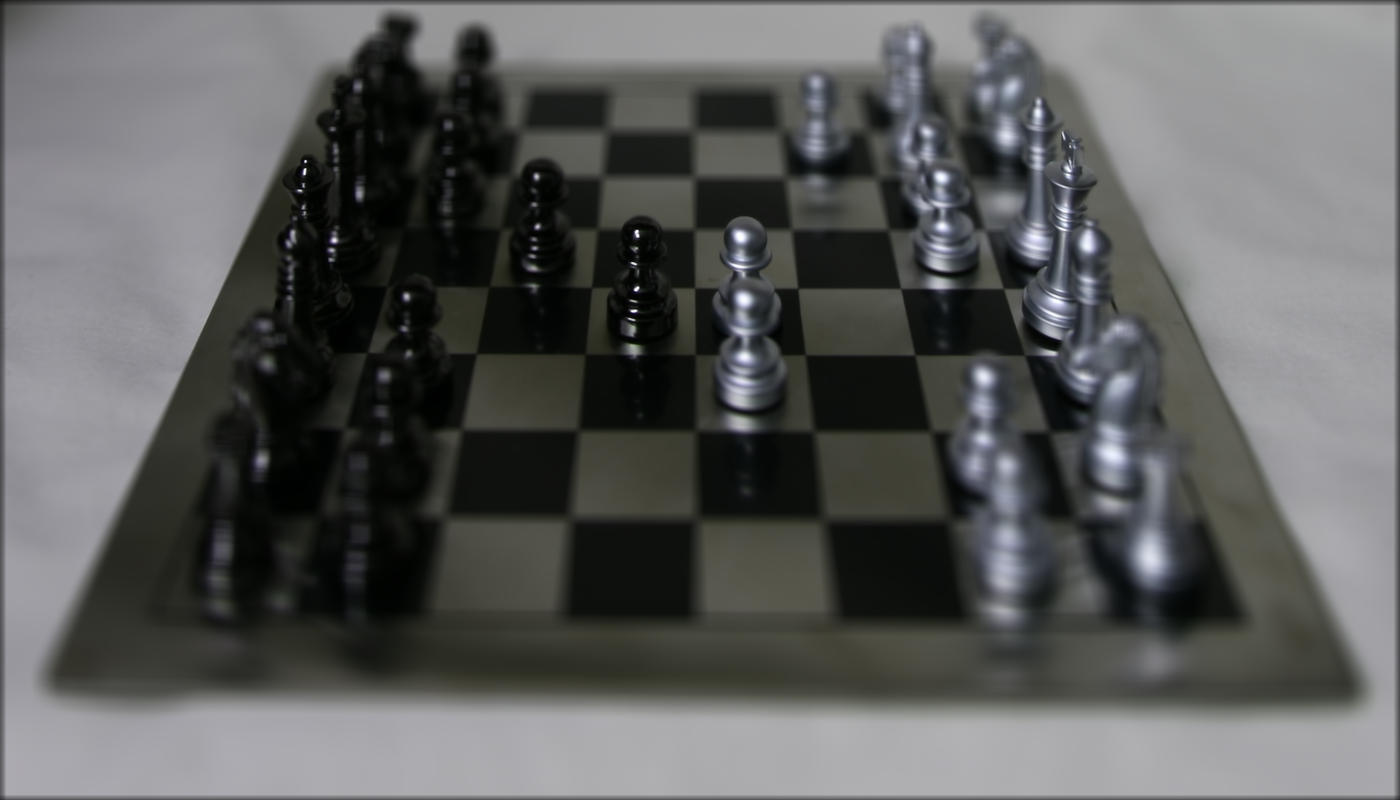

Refocus with alpha = 0.00

Refocus with alpha = 0.02

Refocus with alpha = 0.05

Refocus Animation from alpha = -0.3 to 0.6 at increments of 0.05

2: Aperture Adjustment

We can simulate adjusting the aperture of a camera by exploiting the same difference in variation between far objects and near objects as in the previous part. By choosing a radius around the center camera (i.e. at radius 1 we only include images from cameras 1 away from the center camera), we can generate images that mimic the effect of a larger or smaller aperture. As images from cameras farther from the center are included in the shifted and averaged image, the aperture blur increases; as they are excluded, it decreases. For the images generated below, we used a constant alpha value of 0.2, which focuses the camera on roughly the middle of the image.
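As a rough sketch of the radius selection described above, the following NumPy function averages only the cameras within a given radius of the center. Several details are assumptions made for illustration: the name `aperture_average`, the square (Chebyshev) window, including the center camera itself, the mean position as the center, and whole-pixel shifts via `np.roll`.

```python
import numpy as np

def aperture_average(images, positions, alpha, radius):
    """Average the shifted images from cameras within `radius` of the center.

    images:    array of shape (N, H, W) or (N, H, W, C)
    positions: array of shape (N, 2) with each camera's (row, col) position
    alpha:     scale factor that selects the depth brought into focus
    radius:    maximum grid distance from the center camera to include
    """
    images = np.asarray(images, dtype=np.float64)
    positions = np.asarray(positions, dtype=np.float64)
    # Assumption: the grid center is the mean of all camera positions.
    offsets = positions - positions.mean(axis=0)
    # Assumption: a square window; Euclidean distance would give a round one.
    keep = np.abs(offsets).max(axis=1) <= radius
    result = np.zeros_like(images[0])
    for img, off in zip(images[keep], offsets[keep]):
        # Whole-pixel shift with wrap-around, as a simplification.
        dy, dx = np.rint(alpha * off).astype(int)
        result += np.roll(img, shift=(dy, dx), axis=(0, 1))
    return result / keep.sum()
```

With a small radius only a few nearly identical views are averaged, so most of the scene stays sharp; a larger radius mixes in views with more parallax, which blurs everything away from the focal depth.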

Radius 1

Radius 5

Radius 8

Aperture Adjustment Animation from radius = 1 to 8 at increments of 1

3: Summary

From this project, I learned a lot about lightfield cameras, in particular how depth can be captured in two dimensions by an array of cameras and recovered with post-processing techniques. This project also demonstrated how simple techniques, such as averaging and shifting, can be very powerful given the appropriate data.