Overview

In this project, our goal is to produce mosaics generated from multiple images. To achieve this, we must set up correspondence points between images, define and apply projective warps, and composite the warped images together.

Shooting Photos

In order to generate mosaics, we must first take several photos. One way to do this is to take pictures from the same location while rotating the camera to capture overlapping fields of view. We captured the following three sets of images.

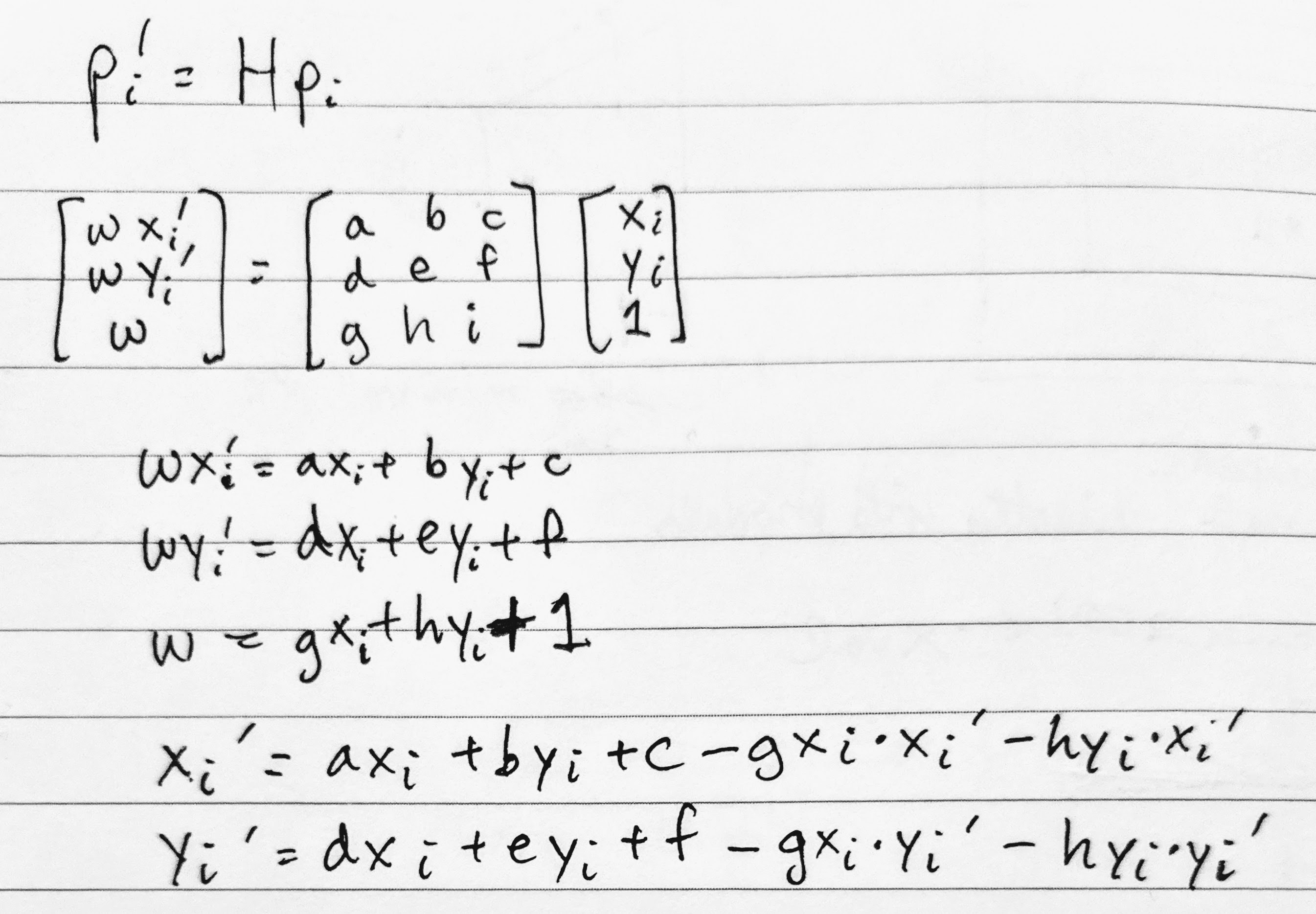

Recovering Homographies

Before we can warp one image to align with another, we must first determine the parameters defining the transformation between the two images. That is, we must find the parameters of H in p' = H * p, where H is 3x3 with 8 degrees of freedom (the ninth entry is a scaling factor, which we set to 1).

We can find these parameters by solving a least-squares problem generated from n point correspondences between a pair of images. At minimum, we need n = 4 pairs of points. With exactly four pairs, however, the system is fully determined, so any error in the clicked points goes straight into the result and gives a noisy/unstable homography. Thus, my image pairs typically use 8 correspondence pairs, defined manually using matplotlib's ginput.

The following is the derivation of the least-squares setup. We begin with the relationship between the ith point (p_i) and its transformed counterpart (p_i'). Performing the matrix multiplication, we get the three equations in the middle block. Substituting w = g * x_i + h * y_i + 1 into the other two equations, we get the last two equations, which we can use in our setup for every pair of points. That is, for each pair we add x_i' and y_i' to b and the coefficients on the right-hand side as two rows of A.
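Written out, the setup for the ith correspondence is shown below. This is reconstructed from the definitions above. It assumes H's entries are labeled a through h row by row with the bottom-right entry fixed at 1, which is consistent with w = g * x_i + h * y_i + 1.

$$
\begin{bmatrix} w x_i' \\ w y_i' \\ w \end{bmatrix}
=
\begin{bmatrix} a & b & c \\ d & e & f \\ g & h & 1 \end{bmatrix}
\begin{bmatrix} x_i \\ y_i \\ 1 \end{bmatrix}
\quad\Longrightarrow\quad
\begin{aligned}
w x_i' &= a x_i + b y_i + c \\
w y_i' &= d x_i + e y_i + f \\
w &= g x_i + h y_i + 1
\end{aligned}
$$

$$
\begin{aligned}
x_i' &= a x_i + b y_i + c - g x_i x_i' - h y_i x_i' \\
y_i' &= d x_i + e y_i + f - g x_i y_i' - h y_i y_i'
\end{aligned}
$$

$$
\begin{bmatrix}
x_i & y_i & 1 & 0 & 0 & 0 & -x_i x_i' & -y_i x_i' \\
0 & 0 & 0 & x_i & y_i & 1 & -x_i y_i' & -y_i y_i'
\end{bmatrix}
\begin{bmatrix} a & b & c & d & e & f & g & h \end{bmatrix}^{\top}
=
\begin{bmatrix} x_i' \\ y_i' \end{bmatrix}
$$

Stacking these two rows for all n pairs gives A (2n x 8) and b (2n x 1).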

Note that the h in A * h = b is H flattened from 3x3 into an 8x1 vector of the 8 unknown entries. The ninth entry, fixed at 1, is merely the scaling factor and so is not an unknown.
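Below is a minimal NumPy sketch of this least-squares setup. It assumes the correspondences are (n, 2) arrays of (x, y) points and that the unknowns are ordered row by row (a through h, as in the derivation). The name `compute_homography` is hypothetical, not necessarily what the original project used.

```python
import numpy as np

def compute_homography(pts_src, pts_dst):
    """Estimate H (3x3, with H[2, 2] = 1) such that pts_dst ~ H @ pts_src.

    pts_src, pts_dst: (n, 2) arrays of (x, y) correspondences, n >= 4.
    """
    pts_src = np.asarray(pts_src, dtype=np.float64)
    pts_dst = np.asarray(pts_dst, dtype=np.float64)
    n = pts_src.shape[0]
    if n < 4 or pts_dst.shape[0] != n:
        raise ValueError("need at least 4 matching point pairs")

    A = np.zeros((2 * n, 8))
    b = np.zeros(2 * n)
    for i, ((x, y), (xp, yp)) in enumerate(zip(pts_src, pts_dst)):
        # x' = a x + b y + c - g x x' - h y x'
        A[2 * i] = [x, y, 1, 0, 0, 0, -x * xp, -y * xp]
        # y' = d x + e y + f - g x y' - h y y'
        A[2 * i + 1] = [0, 0, 0, x, y, 1, -x * yp, -y * yp]
        b[2 * i] = xp
        b[2 * i + 1] = yp

    # Least-squares solution for the 8 unknowns (exact when n == 4 and A is invertible).
    h, *_ = np.linalg.lstsq(A, b, rcond=None)
    # Re-append the fixed scale entry and reshape back to 3x3.
    return np.append(h, 1.0).reshape(3, 3)
```

With more than four pairs, `lstsq` returns the fit that minimizes the squared residuals, which is what smooths out small clicking errors in hand-picked points.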

Warping Images and Rectification

Now that we know the parameters of the homography, we have a transformation matrix that we can apply to every point of an image in order to warp it. First, we forward warp the corners of the original image to determine the bounding box of the warped image. We then iterate over every pixel in this bounding box and inverse warp it (apply the inverse of H) back into the original image to sample its color. If a pixel in the warped image's bounding box maps to a location inside the original image, we copy the original image's color at that location into this pixel.
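A minimal NumPy sketch of this forward-corners / inverse-sampling procedure follows. It makes three assumptions:

- H maps (x, y, 1) column vectors from the source image into the output frame, matching the p' = H * p convention above.
- Sampling is nearest-neighbor (rounding). The original project may interpolate differently.
- The function name `warp_image` and its return values are hypothetical.

```python
import numpy as np

def warp_image(im, H):
    """Warp `im` by the 3x3 homography `H` via inverse warping.

    Returns (warped, mask, offset): the warped image, a boolean mask of pixels that
    received a color, and (x_min, y_min), the position of the warped image's
    top-left pixel in the destination frame.
    """
    h, w = im.shape[:2]

    # 1. Forward-warp the four corners (homogeneous coordinates) to get the bounding box.
    corners = np.array([[0, w - 1, w - 1, 0],
                        [0, 0, h - 1, h - 1],
                        [1, 1, 1, 1]], dtype=np.float64)
    wc = H @ corners
    wc = wc[:2] / wc[2]
    x_min, y_min = np.floor(wc.min(axis=1)).astype(int)
    x_max, y_max = np.ceil(wc.max(axis=1)).astype(int)

    # 2. Enumerate every pixel of the bounding box.
    xs, ys = np.meshgrid(np.arange(x_min, x_max + 1), np.arange(y_min, y_max + 1))
    out_pts = np.stack([xs.ravel(), ys.ravel(), np.ones(xs.size)])

    # 3. Inverse-warp into the source image; nearest-neighbor sampling by rounding.
    src = np.linalg.inv(H) @ out_pts
    src_x = np.rint(src[0] / src[2]).astype(int)
    src_y = np.rint(src[1] / src[2]).astype(int)

    # 4. Copy colors only for pixels that land inside the source image.
    valid = (src_x >= 0) & (src_x < w) & (src_y >= 0) & (src_y < h)
    out_r = (ys.ravel() - y_min)[valid]
    out_c = (xs.ravel() - x_min)[valid]
    warped = np.zeros(xs.shape + im.shape[2:], dtype=im.dtype)
    mask = np.zeros(xs.shape, dtype=bool)
    warped[out_r, out_c] = im[src_y[valid], src_x[valid]]
    mask[out_r, out_c] = True
    return warped, mask, (x_min, y_min)
```

Bilinear interpolation would give smoother edges than rounding, at the cost of an extra dependency. For example, `scipy.ndimage.map_coordinates` with `order=1` does this.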

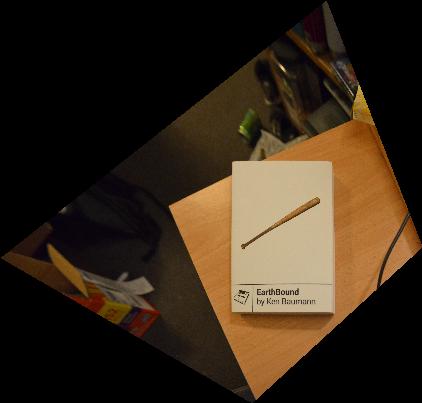

We can test our image warping by using it to rectify images. We can rectify an image simply by selecting the four corners of an object that is rectangular in the real world and defining correspondences that map them to a rectangle's four corners. Below are the results of rectifying a book and a trackpad:
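For rectification, the four clicked corners yield exactly eight equations for the eight unknowns. The system can therefore be solved directly instead of by least squares.

This minimal sketch makes three assumptions:

- Corners are clicked in the order top-left, top-right, bottom-right, bottom-left.
- The target rectangle's width and height are chosen by hand, for example from the object's known aspect ratio.
- The function name `rectify_homography` is hypothetical.

```python
import numpy as np

def rectify_homography(quad, width, height):
    """Exact homography mapping 4 clicked corners onto an axis-aligned rectangle.

    quad: (4, 2) corners ordered top-left, top-right, bottom-right, bottom-left.
    width, height: size of the target rectangle (chosen by hand).
    """
    quad = np.asarray(quad, dtype=np.float64)
    rect = np.array([[0, 0], [width, 0], [width, height], [0, height]], dtype=np.float64)

    A = np.zeros((8, 8))
    b = np.zeros(8)
    for i, ((x, y), (xp, yp)) in enumerate(zip(quad, rect)):
        A[2 * i] = [x, y, 1, 0, 0, 0, -x * xp, -y * xp]
        A[2 * i + 1] = [0, 0, 0, x, y, 1, -x * yp, -y * yp]
        b[2 * i], b[2 * i + 1] = xp, yp

    # Exactly four correspondences give a square 8x8 system; solve it directly.
    # (Fails if three of the clicked corners are collinear.)
    h = np.linalg.solve(A, b)
    return np.append(h, 1.0).reshape(3, 3)
```

The resulting H can then be passed to the same inverse-warping routine used for mosaics.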

Blending Images into Mosaics

Once we are able to warp images from one projection to another (i.e., from the perspective of one image to the perspective of another via their correspondence points), we are able to create our mosaics. All that remains is some form of blending to reduce the obvious seam that would result from simply overlaying one image on top of the other. We achieve this with simple alpha blending: we mask both images and then composite them into a final image.
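A minimal sketch of mask-weighted alpha blending follows. It assumes both warped images have already been placed on a shared canvas (for example, using the offsets from the warp step) and that each comes with a mask of the same height and width. With binary masks, overlapping pixels become a 50/50 average. The name `alpha_blend` is hypothetical.

```python
import numpy as np

def alpha_blend(im1, mask1, im2, mask2):
    """Composite two images that share a canvas.

    im1, im2: arrays of shape (H, W, C); mask1, mask2: weights in [0, 1] of shape (H, W).
    Each output pixel is the mask-weighted average of the images covering it.
    """
    w1 = mask1.astype(np.float64)[..., None]
    w2 = mask2.astype(np.float64)[..., None]
    total = w1 + w2
    # Where neither image covers the canvas, divide by 1 instead of 0; those pixels stay 0.
    safe_total = np.where(total > 0, total, 1.0)
    return (w1 * im1 + w2 * im2) / safe_total
```

Feathered masks hide the seam better than a hard 50/50 split. These are weights that fall off toward each image's border, which `scipy.ndimage.distance_transform_edt` is one way to compute, and they can be passed in place of the binary ones.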

What I've Learned

One thing I've learned (or perhaps the word is "internalized") is just how much our brain deceives us. Things that we perceive as rectangular usually appear in an image as trapezoids or other general quadrilaterals; rarely are they truly rectilinear. It's just that our brain, over time, has learned to interpret these shapes as "rectangles." However, when we perform mosaicking, we cannot take this for granted. We have to actually warp images so that these shapes become truly rectangular to produce a satisfying result.

Another thing I've learned is that plenty of the concepts we've used in previous projects keep returning. For example, alpha blending from "Fun with Frequencies" and image warping from "Face Morphing" have shown up again, which demonstrates that there are indeed fundamental, reusable concepts within computational photography and computer vision.

Acknowledgements

Credit to Rohan Narayan for helping me with aligning images for blending.

Credit to Tianrui Guo for spotting an issue with inconsistent data types for my numpy arrays.