Part 1.1: Recover Homography

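I wrote a function that does this (see my code). It recovers the homography from four or more point correspondences between two images by solving a least-squares system.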
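For reference, here is a minimal sketch of what such a function can look like. It assumes NumPy and points given as (x, y) pairs. It fixes the bottom-right entry of H to 1 and writes two linear equations per correspondence for the remaining eight unknowns. `compute_homography` and `apply_homography` are illustrative names and are not taken from the project code.

```python
import numpy as np

def compute_homography(src, dst):
    """Least-squares homography H (3x3) with H @ [x, y, 1] ~ [x', y', 1].

    src, dst: (N, 2) arrays of (x, y) points, N >= 4.
    Fixes h33 = 1 and solves for the other 8 entries with least squares.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    A, b = [], []
    for (x, y), (xp, yp) in zip(src, dst):
        # x' = (h11 x + h12 y + h13) / (h31 x + h32 y + 1), rearranged to be linear in h
        A.append([x, y, 1, 0, 0, 0, -x * xp, -y * xp])
        A.append([0, 0, 0, x, y, 1, -x * yp, -y * yp])
        b.extend([xp, yp])
    h, *_ = np.linalg.lstsq(np.array(A), np.array(b), rcond=None)
    return np.append(h, 1.0).reshape(3, 3)

def apply_homography(H, pts):
    """Map (N, 2) points through H, dividing by the homogeneous w."""
    pts = np.asarray(pts, dtype=float)
    homog = np.column_stack([pts, np.ones(len(pts))]) @ H.T
    return homog[:, :2] / homog[:, 2:3]
```

With exactly four points the system is square. With more points, least squares averages out some of the error from clicking correspondences by hand.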

Part 1.2: Image Warp

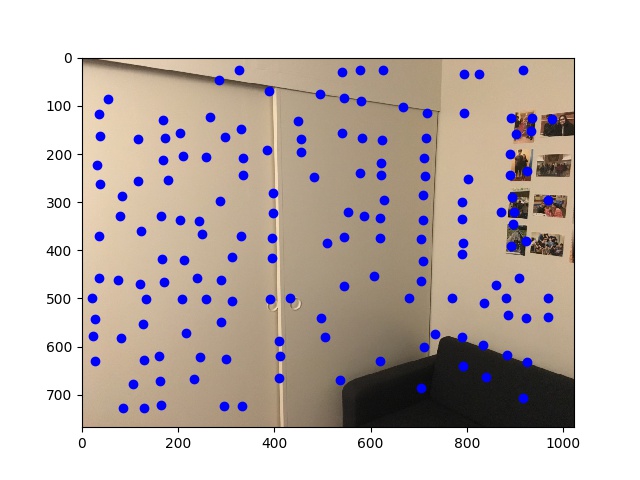

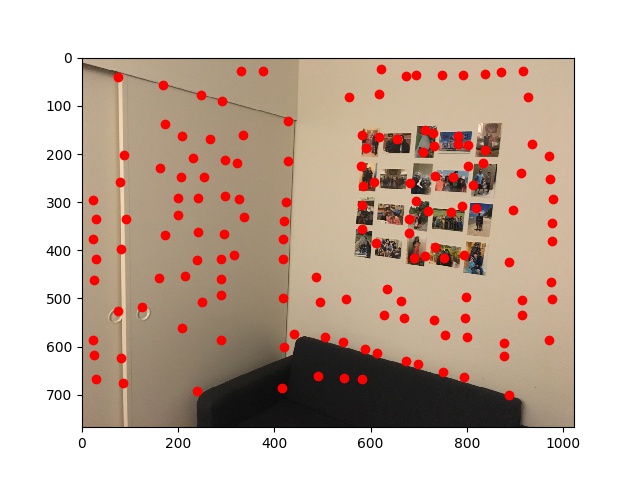

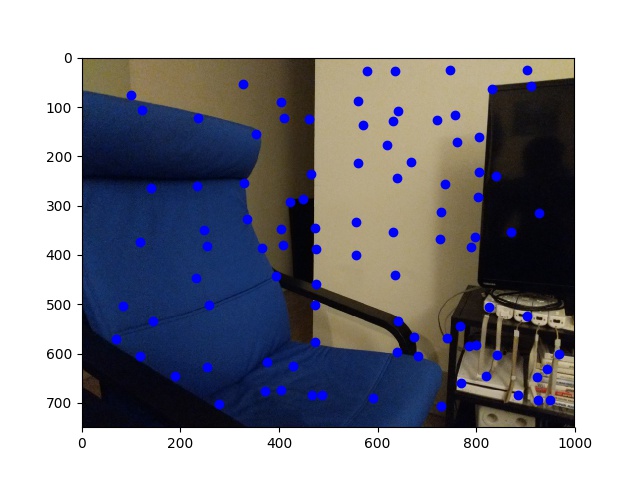

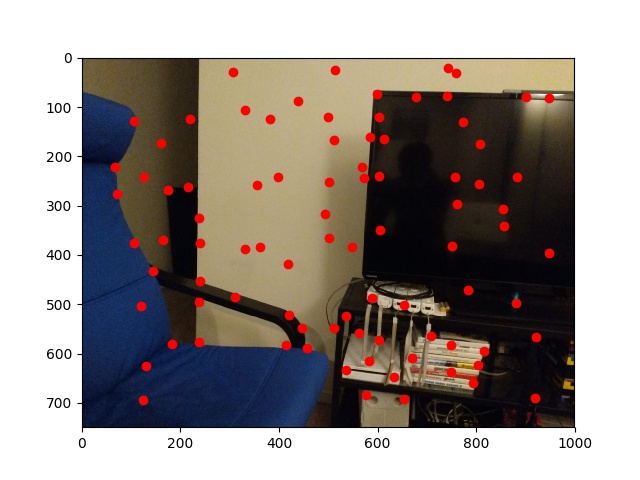

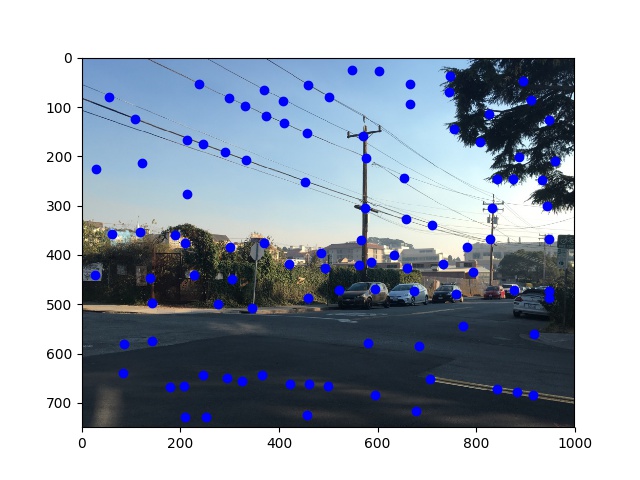

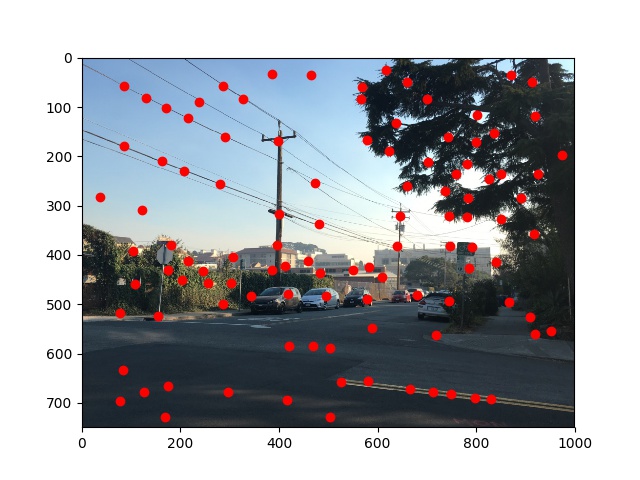

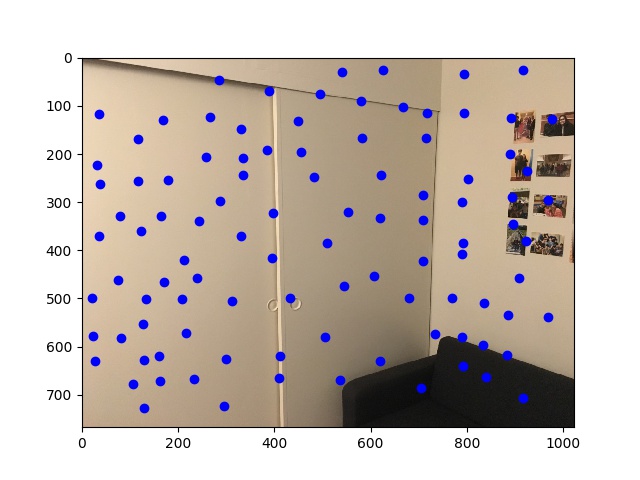

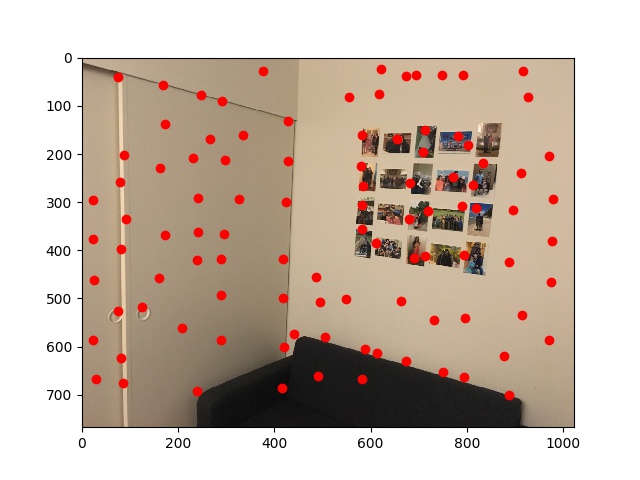

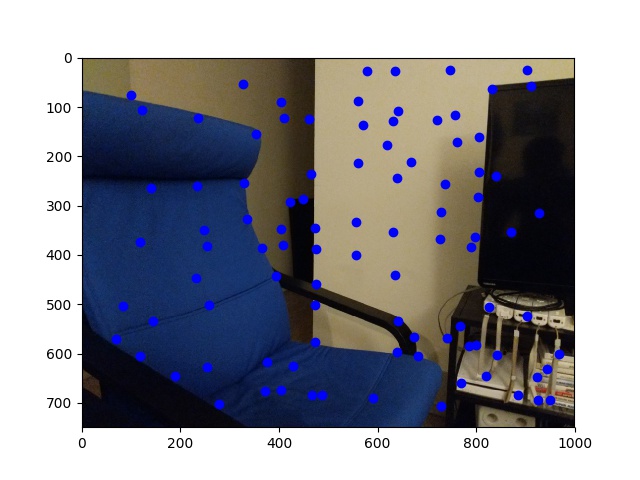

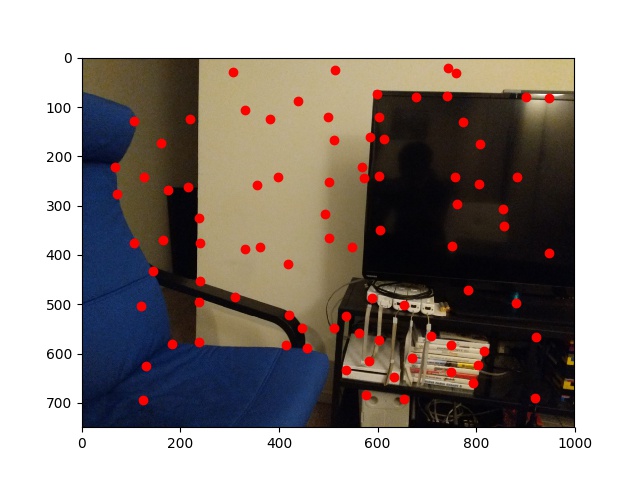

Here are a couple of examples of images warped to new coordinates. Warping means applying a homography to an image so that points selected in it line up with corresponding points selected in another image. Below are two images, and one of them warped onto the other.

Look at that beauty. Doing this required recovering the homography (by choosing 4+ point correspondences in each image and running a least-squares solver) and then doing some fancy looping over the images.
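One common way to do the "fancy looping" is inverse warping. For every output pixel, apply H^-1 to find where it came from in the source image, then sample there. The sketch below is vectorized with NumPy instead of looping and uses nearest-neighbor sampling. It assumes H maps source (x, y) to output (x, y), with x as the column index. `inverse_warp` is a hypothetical name, and this is one reasonable approach, not necessarily the exact one used here.

```python
import numpy as np

def inverse_warp(img, H, out_shape):
    """Warp img by homography H into an output of shape out_shape (rows, cols).

    H maps source (x, y) to output (x, y), where x is the column and y the row.
    Each output pixel is pulled back through H^-1 and takes the nearest source
    pixel; output pixels that land outside the source stay 0.
    """
    rows, cols = out_shape[:2]
    ys, xs = np.mgrid[0:rows, 0:cols]  # output pixel grid
    pts = np.stack([xs.ravel(), ys.ravel(), np.ones(xs.size)])  # (3, rows*cols), (x, y, 1) order
    src = np.linalg.inv(H) @ pts
    sx = np.round(src[0] / src[2]).astype(int)  # nearest-neighbor sampling
    sy = np.round(src[1] / src[2]).astype(int)
    valid = (sx >= 0) & (sx < img.shape[1]) & (sy >= 0) & (sy < img.shape[0])
    out = np.zeros((rows * cols,) + img.shape[2:], dtype=img.dtype)
    out[valid] = img[sy[valid], sx[valid]]
    return out.reshape((rows, cols) + img.shape[2:])
```

Pulling each output pixel back to the source avoids the holes you get by pushing source pixels forward. Replacing the rounding with bilinear interpolation gives smoother results.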

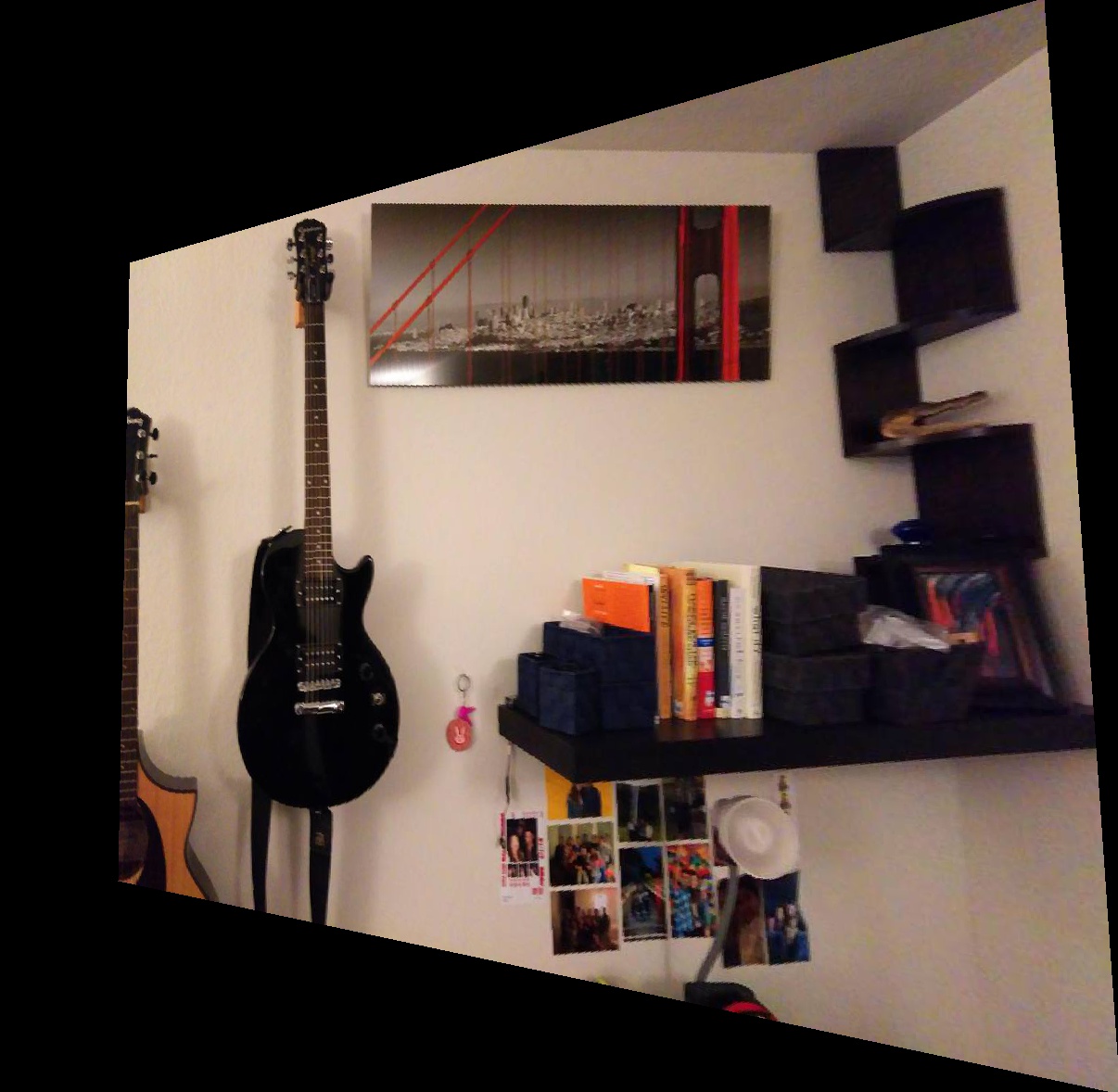

This is my room

This is my TV

Part 1.3: Image Rectification

Instead of warping one image onto another, here the goal is to warp an image so that an arbitrary quadrilateral in it becomes a rectangle. Here are a couple of examples.

Again, this required recovering a homography, this time from the four corners of the quadrilateral to the corners of the target rectangle, and then warping the image with it.
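For rectification, the target points are the corners of a rectangle you pick, so only the quadrilateral has to be clicked. The following minimal sketch makes three assumptions. It uses NumPy. The corners are given as (x, y) in top-left, top-right, bottom-right, bottom-left order. The rectangle size is chosen by hand. `rectify_homography` is a hypothetical name. With exactly four correspondences the 8x8 system is square, so it can be solved directly instead of with least squares.

```python
import numpy as np

def rectify_homography(quad, width, height):
    """Homography sending the 4 corners of `quad` to a width x height rectangle.

    quad: (4, 2) array of (x, y) corners ordered top-left, top-right,
    bottom-right, bottom-left. h33 is fixed to 1.
    """
    rect = np.array([[0, 0], [width - 1, 0], [width - 1, height - 1], [0, height - 1]],
                    dtype=float)
    A, b = [], []
    for (x, y), (xp, yp) in zip(np.asarray(quad, dtype=float), rect):
        A.append([x, y, 1, 0, 0, 0, -x * xp, -y * xp])
        A.append([0, 0, 0, x, y, 1, -x * yp, -y * yp])
        b.extend([xp, yp])
    h = np.linalg.solve(np.array(A), np.array(b))  # exact solve: 8 equations, 8 unknowns
    return np.append(h, 1.0).reshape(3, 3)
```

The resulting H can then be used in an inverse warp with an output shape of (height, width).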

Part 1.4: Image Mosaic

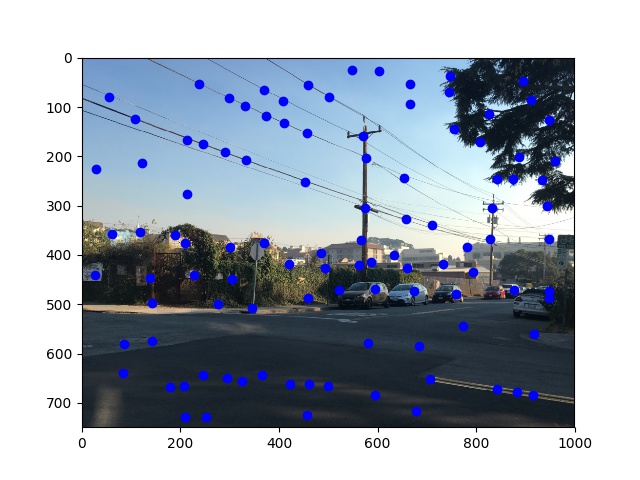

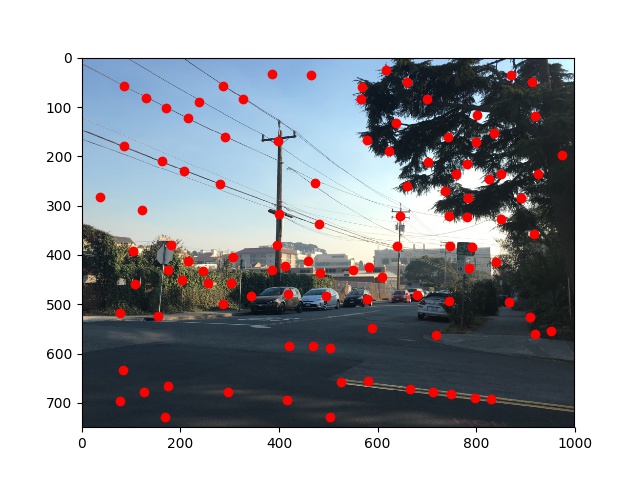

Here are 3 examples of images stitched together into a mosaic.

As with warping, this required recovering the homography between the images (by choosing 4+ point correspondences in each image and running a least-squares solver) and then warping one image into the other's frame so the two could be combined.
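Unlike a single warp, a mosaic also needs an output canvas big enough to hold both images. Below is a sketch of one way to size it. It assumes NumPy and assumes H maps image-1 (x, y) coordinates into image 2's frame. `mosaic_canvas` is a hypothetical helper. The idea is to project image 1's corners through H and take the bounding box of those points together with image 2's corners. Then build a translation T that shifts everything to non-negative coordinates.

```python
import numpy as np

def mosaic_canvas(shape1, shape2, H):
    """Canvas size and offset for stitching image 1 (warped by H) onto image 2.

    H maps image-1 (x, y) coordinates into image-2 coordinates. Returns
    (T, (rows, cols)), where T translates everything to non-negative
    coordinates: warp image 1 with T @ H and image 2 with T.
    """
    def corners(shape):
        h, w = shape[:2]
        return np.array([[0, 0], [w - 1, 0], [w - 1, h - 1], [0, h - 1]], dtype=float)

    c1 = np.column_stack([corners(shape1), np.ones(4)]) @ H.T
    c1 = c1[:, :2] / c1[:, 2:3]  # image-1 corners in image-2 coordinates
    all_corners = np.vstack([c1, corners(shape2)])
    xmin, ymin = np.floor(all_corners.min(axis=0))
    xmax, ymax = np.ceil(all_corners.max(axis=0))
    T = np.array([[1, 0, -xmin], [0, 1, -ymin], [0, 0, 1]], dtype=float)
    return T, (int(ymax - ymin) + 1, int(xmax - xmin) + 1)
```

Warp image 1 with T @ H and image 2 with T, both onto a canvas of the returned size. That puts both images in the same frame. How to combine pixels where they overlap is a separate choice.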

This is my room

This is my TV

This project was actually pretty cool, if a little painful. Learning how to get the homography to work properly was an interesting part. In particular, I had a bug for a while that really taught me the difference between projective and affine transforms. Specifically, I knew that with a projective transform, an input point [x, y, 1] comes out as [x'w, y'w, w], and you divide by w to get (x', y'). With an affine transform, the bottom row of the matrix is [0, 0, 1], so w stays 1 no matter what order the coordinates come in. That made it feel safe to swap the order and do something like [y, x, 1] -> [y'w, x'w, w]. This is NOT the case with a projective transform :D There, w depends on both x and y, so swapping them gives the wrong w, and dividing by it scrambles the result. You have to ensure that you input points in the same order the homography was computed with.......
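Here is a small NumPy demo of this pitfall, with hypothetical helper names. Suppose H was computed for (x, y) points but your data is in (row, col) = (y, x) order. Reusing H directly then gives a different w and the wrong point. Keeping the order consistent is one fix. Another is to conjugate H by the coordinate-swap matrix P. This works because P H P [y, x, 1]^T = P H [x, y, 1]^T = P [x'w, y'w, w]^T = [y'w, x'w, w]^T.

```python
import numpy as np

# P swaps the first two homogeneous coordinates: [x, y, w] <-> [y, x, w].
P = np.array([[0, 1, 0], [1, 0, 0], [0, 0, 1]], dtype=float)

def apply_h(H, p):
    """Apply H to a 2-D point p and divide by the homogeneous w."""
    v = H @ np.array([p[0], p[1], 1.0])
    return v[:2] / v[2]

def to_row_col(H_xy):
    """Turn a homography built for (x, y) points into one for (y, x) points."""
    return P @ H_xy @ P  # P is its own inverse, so this is P H P^-1

H = np.array([[1, 0.2, 5], [0.1, 1, 3], [0.002, 0.001, 1]])
x_new, y_new = apply_h(H, (40, 10))        # correct order: (x, y)
wrong = apply_h(H, (10, 40))               # swapped input, same H: w is 1.06 instead of 1.09
right = apply_h(to_row_col(H), (10, 40))   # swapped input, conjugated H
print(np.allclose(right, [y_new, x_new]))  # True
print(np.allclose(wrong, [y_new, x_new]))  # False
```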