CS194-26: Project 1

Susan Lin

Objective

The goal of this assignment is to take the digitized Prokudin-Gorskii glass plate images and produce color images. Using image processing techniques, I generated each color image by aligning and then combining the three color channel images (red, green, and blue). It was quite interesting to learn about and work through this!

Approach

Low Resolution

For lower-resolution images, it was feasible to exhaustively search over a window of possible displacements, score each one, and then select the displacement that gave the best metric.

I started by cropping a flat 10% off the borders of the images so that outliers and unimportant edge regions would not skew the metric.

Then I aligned R to B and G to B, trying every displacement in [-20, 20] pixels along each axis and choosing the best one by either

- minimizing the Sum of Squared Differences [SSD or L2] (which adds up the squared differences between the two input images), or

- maximizing the Normalized Cross Correlation [NCC] (which takes the dot product of the normalized input images)
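In symbols, writing $B$ for the (cropped) blue reference, $I$ for the channel being aligned, and $I_{u,v}$ for $I$ shifted by $u$ rows and $v$ columns, the two scores and the search over the [-20, 20] window look roughly like this. These are the standard definitions; NCC is shown without mean subtraction, matching the "dot product of the normalized images" description above.

```latex
\begin{aligned}
\mathrm{SSD}(I_{u,v}, B) &= \sum_{x,y} \bigl(I_{u,v}(x,y) - B(x,y)\bigr)^2 = \lVert I_{u,v} - B \rVert_2^2 \\
\mathrm{NCC}(I_{u,v}, B) &= \frac{I_{u,v}}{\lVert I_{u,v} \rVert_2} \cdot \frac{B}{\lVert B \rVert_2} \\
(\hat u, \hat v) &= \operatorname*{arg\,min}_{-20 \le u, v \le 20} \mathrm{SSD}(I_{u,v}, B)
\quad\text{or}\quad
\operatorname*{arg\,max}_{-20 \le u, v \le 20} \mathrm{NCC}(I_{u,v}, B)
\end{aligned}
```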

Finally, I returned the x and y offset that scored best under the chosen metric.
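A minimal NumPy sketch of this low-resolution search follows. It is not the original code: it assumes the channels are 2-D arrays, uses `np.roll` (a circular shift) to displace a channel, and the names `crop`, `ssd`, `ncc`, and `align_exhaustive` are hypothetical. The 10% crop is applied after shifting, so most of the wrapped-around edge pixels are excluded from the score.

```python
import numpy as np


def crop(img: np.ndarray, fraction: float = 0.10) -> np.ndarray:
    """Drop the outer `fraction` of each edge so borders do not skew the score."""
    dy, dx = int(img.shape[0] * fraction), int(img.shape[1] * fraction)
    return img[dy:img.shape[0] - dy, dx:img.shape[1] - dx]


def ssd(a: np.ndarray, b: np.ndarray) -> float:
    """Sum of squared differences; lower means a better match."""
    return float(np.sum((a - b) ** 2))


def ncc(a: np.ndarray, b: np.ndarray) -> float:
    """Dot product of the two images after scaling each to unit length; higher is better."""
    a, b = a.ravel(), b.ravel()
    return float(np.dot(a / np.linalg.norm(a), b / np.linalg.norm(b)))


def align_exhaustive(channel, reference, window=20, metric="ssd"):
    """Try every (dy, dx) in [-window, window]^2 and return the best-scoring shift."""
    channel = channel.astype(float)
    ref = crop(reference.astype(float))
    best_offset, best_score = (0, 0), -np.inf
    for dy in range(-window, window + 1):
        for dx in range(-window, window + 1):
            shifted = crop(np.roll(channel, (dy, dx), axis=(0, 1)))
            # Negate SSD so that "higher is better" holds for both metrics.
            score = -ssd(shifted, ref) if metric == "ssd" else ncc(shifted, ref)
            if score > best_score:
                best_offset, best_score = (dy, dx), score
    return best_offset
```

For example, `align_exhaustive(red, blue)` would return the `(dy, dx)` shift to apply to `red` so that it lines up with `blue`.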

High Resolution

For higher-resolution images, the naive exhaustive search took too long, so I used image pyramids to speed up alignment. By aligning smaller, downscaled versions of the images first, one can narrow down where to search at full resolution, reducing how many times the SSD or NCC has to be computed.
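As a rough illustration of the savings (the numbers here are hypothetical, not the parameters I actually used): suppose a full-resolution search would need a window of $\pm R$ pixels on an image with $N$ pixels, while a pyramid of $L$ levels searches only $\pm r$ at each level, where level $k$ holds about $N/4^k$ pixels.

```latex
\begin{aligned}
\text{naive:}\quad & (2R+1)^2 \text{ offsets, each scored over } N \text{ pixels} \\
\text{pyramid:}\quad & \sum_{k=0}^{L-1} (2r+1)^2 \,\frac{N}{4^k} \;<\; \tfrac{4}{3}\,(2r+1)^2 N \text{ pixel operations} \\[4pt]
R = 150:\quad & 301^2 = 90{,}601 \text{ offsets} \;\Rightarrow\; 90{,}601\,N \\
L = 7,\ r = 2:\quad & 7 \cdot 5^2 = 175 \text{ offsets} \;\Rightarrow\; < \tfrac{4}{3} \cdot 25\,N \approx 33\,N \\
\text{reach:}\quad & r\,(2^L - 1) = 2 \cdot 127 = 254 \;\ge\; 150
\end{aligned}
```

The last line checks that the small per-level windows still add up to a large enough total search range once each level's offset is doubled on the way back up.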

I wrote a recursive solution that repeatedly scales the images down by a factor of 2 until they are around 64 by 64 pixels. From there I worked my way back up, testing only a small interval of offsets at each level around the estimate carried up from the coarser level. At each level, the function calls the naive SSD/L2 search over that small interval to refine the offset for that level.
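A minimal recursive sketch of this idea, under assumptions that are not from my actual code: a 2x2 block average stands in for a library rescale call, the coarsest level (at most 64 pixels on its short side) gets a wider ±16 search, and every finer level searches ±2 pixels around twice the coarser level's offset. All function names are hypothetical.

```python
import numpy as np


def downscale2(img: np.ndarray) -> np.ndarray:
    """Halve the resolution by averaging 2x2 blocks (an odd last row/column is dropped)."""
    h, w = (img.shape[0] // 2) * 2, (img.shape[1] // 2) * 2
    return img[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))


def crop(img: np.ndarray, fraction: float = 0.10) -> np.ndarray:
    """Ignore the outer `fraction` of each edge when scoring."""
    dy, dx = int(img.shape[0] * fraction), int(img.shape[1] * fraction)
    return img[dy:img.shape[0] - dy, dx:img.shape[1] - dx]


def ssd_search(channel, reference, center, window):
    """Naive SSD search over a +/-window box of (dy, dx) shifts around `center`."""
    ref = crop(reference)
    best, best_score = center, np.inf
    for dy in range(center[0] - window, center[0] + window + 1):
        for dx in range(center[1] - window, center[1] + window + 1):
            shifted = crop(np.roll(channel, (dy, dx), axis=(0, 1)))
            score = np.sum((shifted - ref) ** 2)
            if score < best_score:
                best, best_score = (dy, dx), score
    return best


def pyramid_align(channel, reference, min_size=64, window=2, base_window=16):
    """Recursively align `channel` to `reference`; returns the (dy, dx) shift."""
    channel = channel.astype(float)
    reference = reference.astype(float)
    if min(channel.shape) <= min_size:
        # Coarsest level: the image is small, so a wider search is still cheap.
        return ssd_search(channel, reference, (0, 0), base_window)
    # Solve the half-size problem first, then double its answer as the starting guess.
    dy, dx = pyramid_align(downscale2(channel), downscale2(reference),
                           min_size, window, base_window)
    return ssd_search(channel, reference, (2 * dy, 2 * dx), window)
```

Doubling the coarse offset is what ties the levels together: a shift of d pixels at half resolution corresponds to roughly 2d pixels at full resolution, and the small window absorbs the rounding error from the downscaling.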

Problems that Arose

I somehow ran into a lot of small issues with pyramid alignment, so it took me a few days to fully debug it. One issue was that I was originally using resize instead of rescale, which did not downsize the image properly, and it took me a while to track that down. I also ran into a lot of issues with the parameters (the factor to scale by, the number of levels, and the window to scan at each level). I spent some time adjusting the bounds to work out what was wrong, and I think the problem was a combination of either scaling too little with bounds that were too small, or scaling too much with bounds that were too large (which pushed some of my offsets to spurious negative values). While adjusting those parameters I also ran into runtime issues, but after retuning them I got the alignment to run much faster!

My algorithm works on nearly all of the images, though it had minor trouble with emir.tif, likely because of contrast differences between that plate's color channels.

Results

(Note: I flipped my x and y coordinates, so each offset below is listed as [y, x], i.e. [vertical, horizontal].)
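To make the [y, x] order concrete, here is a small sketch, assuming each reported offset is the shift applied to that channel with `np.roll` (rows first, then columns) before the three channels are stacked; `stack_rgb` is a hypothetical helper, not part of my code.

```python
import numpy as np


def stack_rgb(r, g, b, r_offset, g_offset):
    """Shift R and G by their [y, x] offsets (rows, then columns) and stack as RGB."""
    r_aligned = np.roll(r, tuple(r_offset), axis=(0, 1))
    g_aligned = np.roll(g, tuple(g_offset), axis=(0, 1))
    return np.dstack([r_aligned, g_aligned, b])
```

Under that assumption, `stack_rgb(r, g, b, [12, 3], [5, 2])` for Cathedral would move red 12 rows down and 3 columns right, and green 5 rows down and 2 columns right.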

Example Images (Low Resolution)

Cathedral

Red Offset with respect to Blue [12, 3]

Green Offset with respect to Blue [5, 2]


Monastery

Red Offset with respect to Blue [3, 2]

Green Offset with respect to Blue [-3, 2]


Tobolsk

Red Offset with respect to Blue [6, 3]

Green Offset with respect to Blue [3, 3]


Example Images (High Resolution)

Castle

Red Offset with respect to Blue [98, 4]

Green Offset with respect to Blue [34, 3]


Emir

Red Offset with respect to Blue [75, 42]

Green Offset with respect to Blue [49, 24]


Harvesters

Red Offset with respect to Blue [123, 13]

Green Offset with respect to Blue [59, 16]


Icon

Red Offset with respect to Blue [89, 23]

Green Offset with respect to Blue [41, 17]


Lady

Red Offset with respect to Blue [112, 12]

Green Offset with respect to Blue [50, 5]


Melons

Red Offset with respect to Blue [178, 13]

Green Offset with respect to Blue [81, 10]


Onion Church

Red Offset with respect to Blue [108, 36]

Green Offset with respect to Blue [51, 26]


Self Portrait

Red Offset with respect to Blue [176, 37]

Green Offset with respect to Blue [78, 29]


Three Generations

Red Offset with respect to Blue [112, 11]

Green Offset with respect to Blue [53, 14]


Train

Red Offset with respect to Blue [87, 32]

Green Offset with respect to Blue [42, 6]


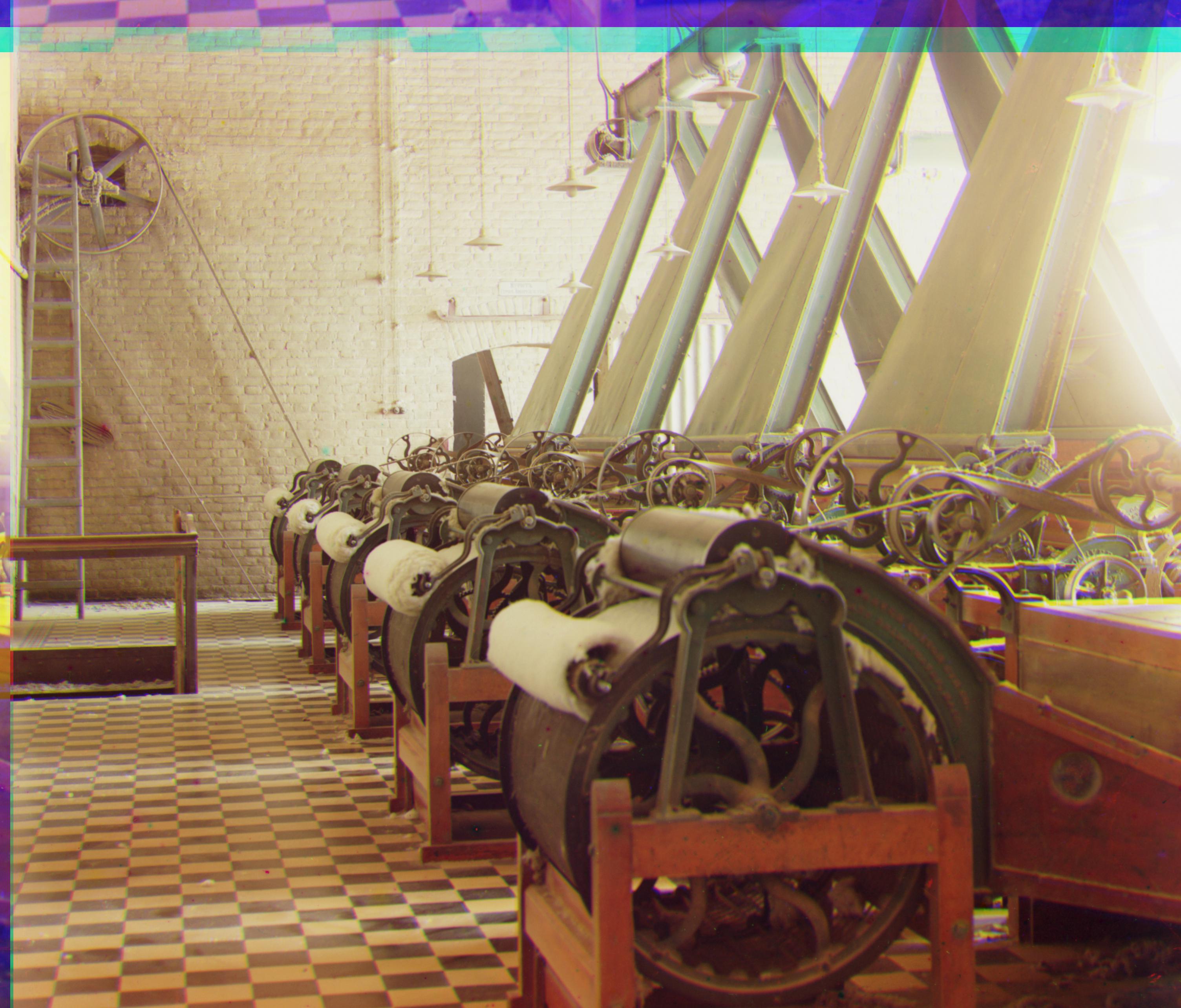

Workshop

Red Offset with respect to Blue [105, -12]

Green Offset with respect to Blue [52, 0]


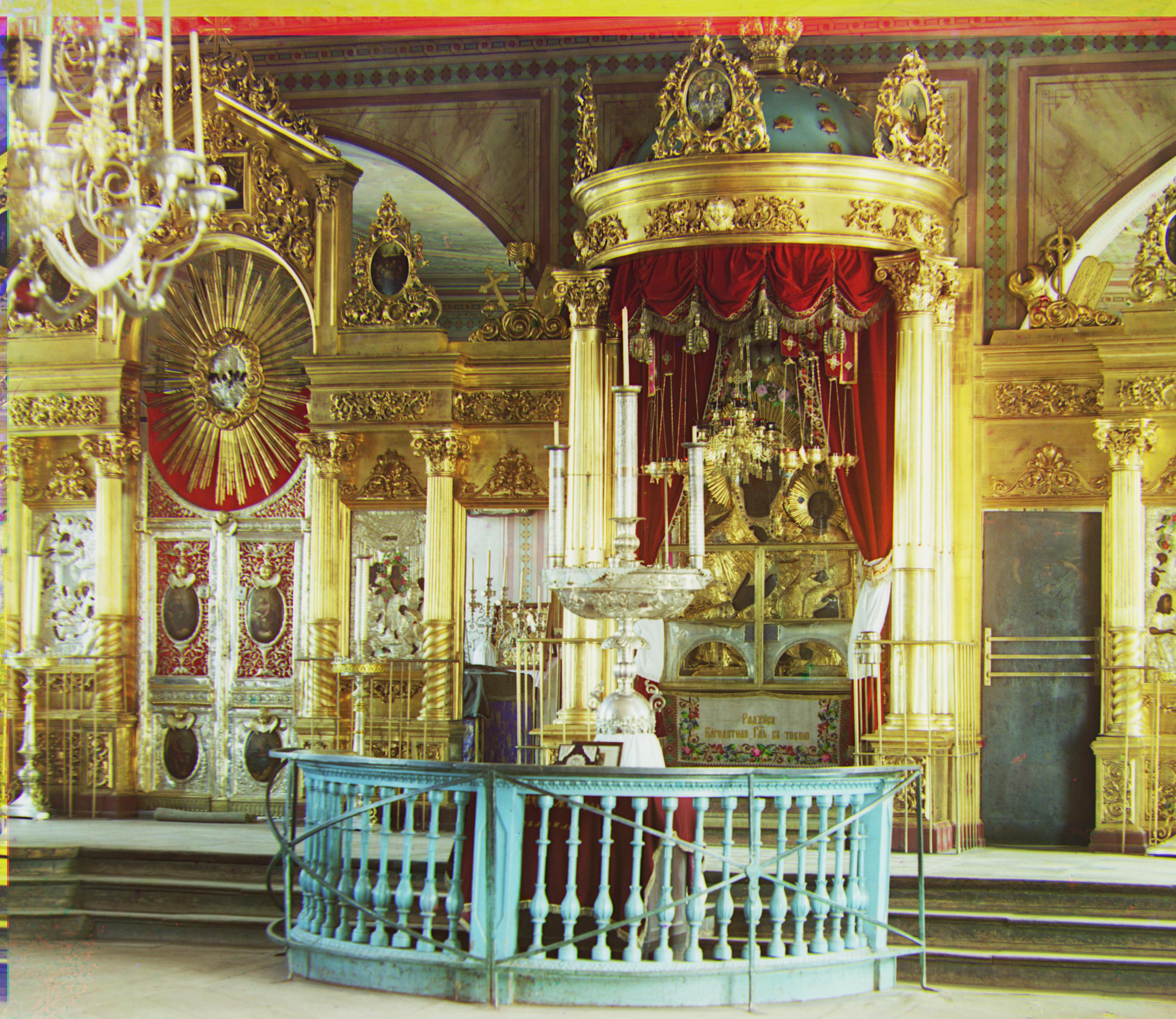

Images of My Choosing

Red Offset with respect to Blue [152, 24]

Green Offset with respect to Blue [72, 18]


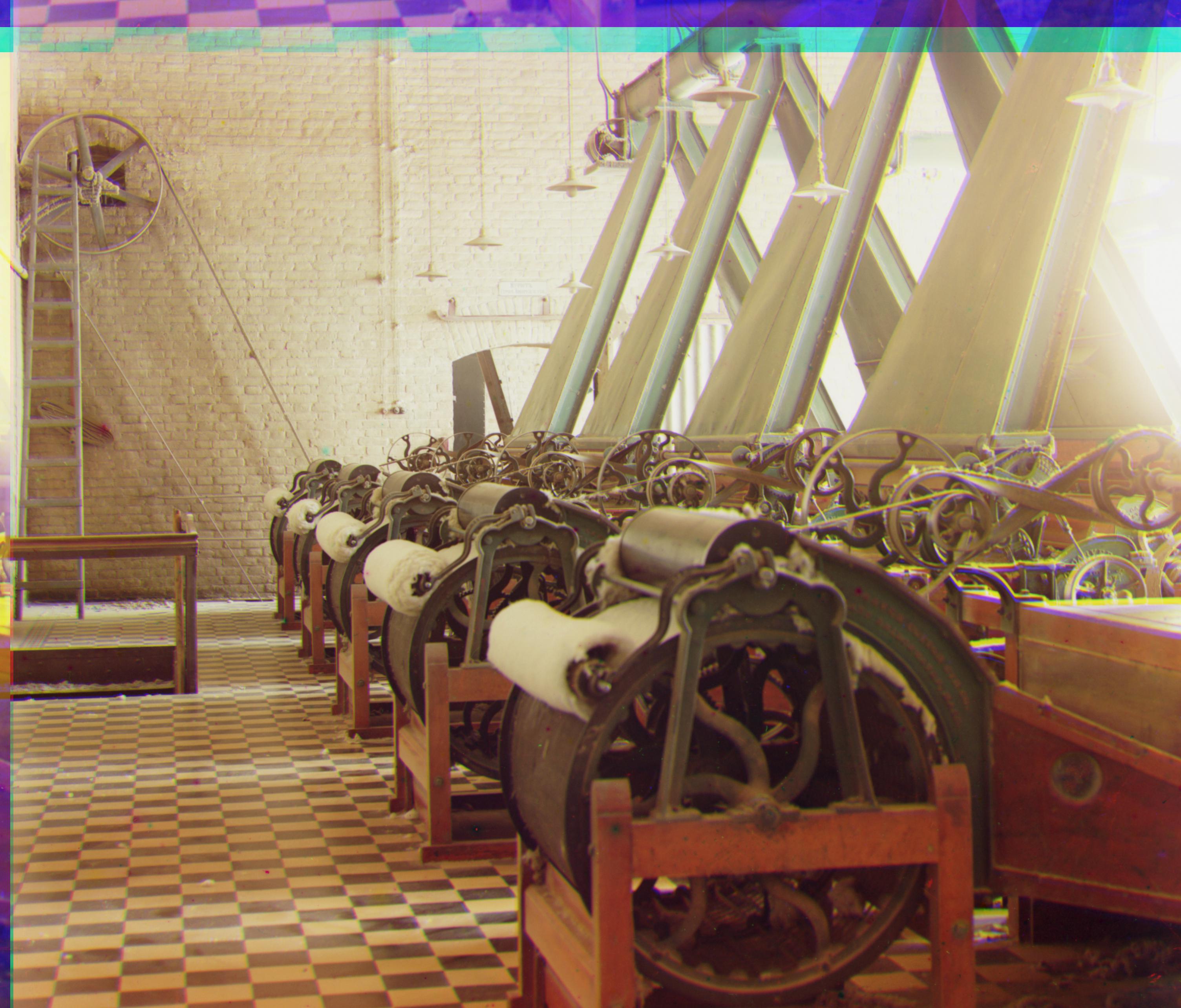

Red Offset with respect to Blue [133, 34]

Green Offset with respect to Blue [69, 26]


Red Offset with respect to Blue [126, 24]

Green Offset with respect to Blue [57, 12]


Red Offset with respect to Blue [97, -3]

Green Offset with respect to Blue [12, -1]
