Project 01: Colorizing the Prokudin-Gorskii photo collection

Vanessa Lin

Overview

The Prokudin-Gorskii collection contains photos of various people, architecture, historical sites, industry, and scenic views. In this project, each scene is provided as a black and white triple-frame image holding three exposures, taken through a red, a green, and a blue filter. To reconstruct these photos in their original color, I aligned the three filter exposures and stacked the aligned exposures together to obtain the color image, for each of the images in the collection.

Alignment

To find the correct alignment (x, y) for each of the colored plates, I used normalized cross correlation (NCC), which is a dot product between two normalized, centered vectors, as suggested by the project spec. With this method, I chose the displacement that maximized the NCC over the range of candidate displacements. Initially, I used the sum of squared differences (SSD), which sums the squared difference at each pixel, and chose the displacement that gave the smallest SSD. However, SSD was not very robust: the images still appeared slightly misaligned and blurry.
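The two metrics described above can be sketched as follows. This is a minimal illustration rather than the project's actual code: the function names `ssd` and `ncc` are hypothetical, and "centered" is assumed here to mean subtracting each image's mean before normalizing.

```python
import numpy as np

def ssd(a, b):
    """Sum of squared differences: lower means more similar."""
    diff = a.astype(np.float64) - b.astype(np.float64)
    return float(np.sum(diff ** 2))

def ncc(a, b):
    """Normalized cross correlation: higher means more similar."""
    # Flatten to vectors and subtract the mean (assumed meaning of "centered").
    a = a.astype(np.float64).ravel()
    b = b.astype(np.float64).ravel()
    a = a - a.mean()
    b = b - b.mean()
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    if denom == 0:
        return 0.0  # a constant image carries no alignment information
    return float(np.dot(a, b) / denom)
```

Because NCC divides out the mean and the norm, it is unaffected by a uniform change in brightness or contrast between two plates, whereas SSD is not.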

Algorithms

Low Resolution Images

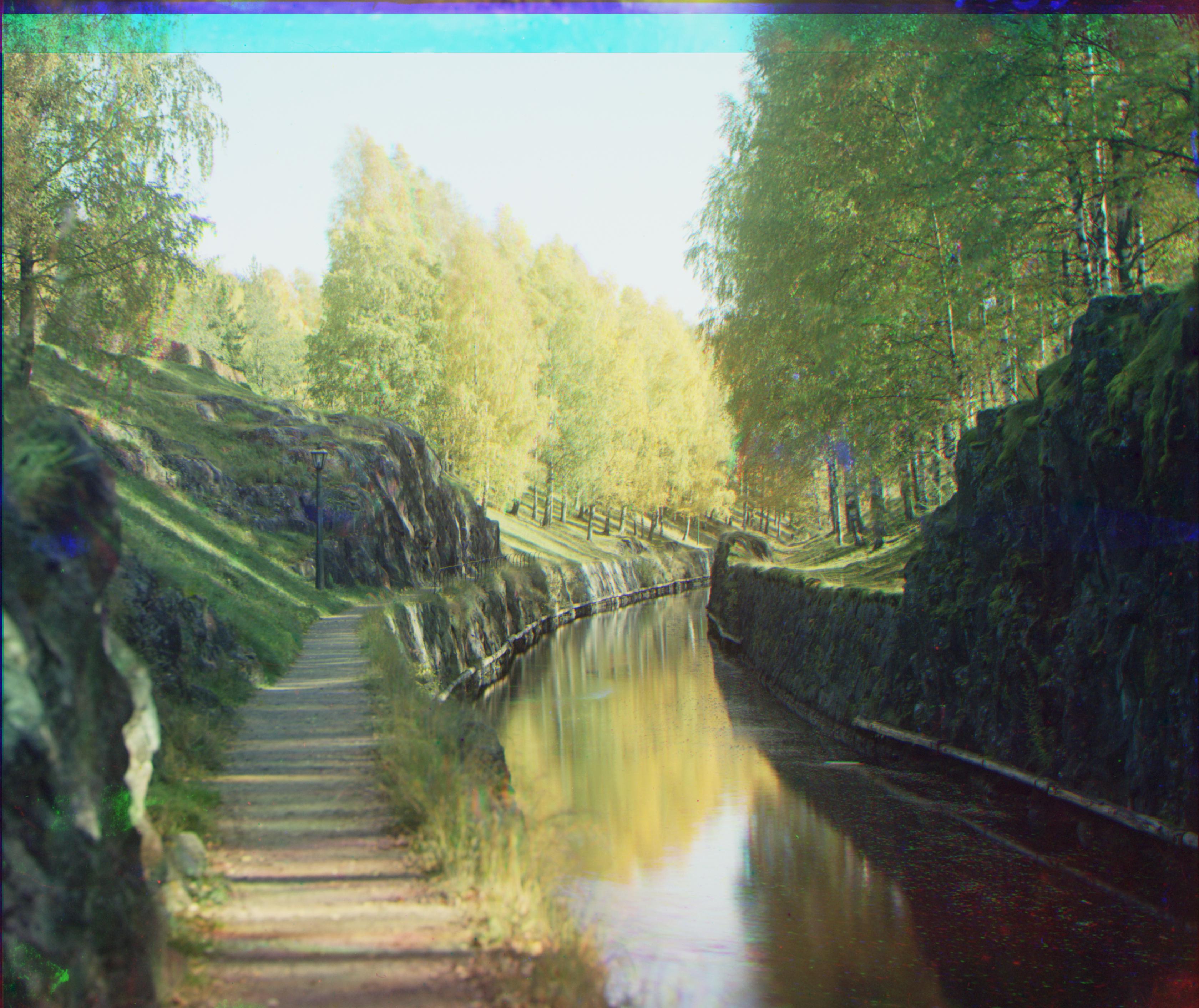

For these images, after separating each image into its three color filters, I cropped off a certain number of pixels from all four sides to remove the black edges. The crops for the smaller images are specified below in captions. If the black edges were not cropped off, the naive alignment with normalized cross correlation would still leave the image slightly blurry. Using the blue filter as the base, I aligned the green and red filters to the blue filter through the alignment process described above. I used np.roll to shift the image by each candidate displacement, searching a range of (-30, 30) pixels in both the horizontal and vertical directions. After retrieving the best displacement for both the green and red filters, I shifted the green and red filters by their respective displacements and finally stacked the filters together in red, green, blue order to produce the colored image.
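The exhaustive search described above might look roughly like this. It is a sketch under assumptions, not the original implementation: `ncc`, `align_naive`, and `colorize` are hypothetical names, displacements are stored as (row shift, column shift), and the inputs are assumed to be already cropped, same-sized 2D arrays.

```python
import numpy as np

def ncc(a, b):
    """Normalized cross correlation of two same-sized images."""
    a = a.astype(np.float64).ravel()
    b = b.astype(np.float64).ravel()
    a, b = a - a.mean(), b - b.mean()
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(np.dot(a, b) / denom) if denom else 0.0

def align_naive(channel, base, radius=30):
    """Try every shift in [-radius, radius]^2 and keep the best NCC."""
    best_score, best_shift = -np.inf, (0, 0)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            shifted = np.roll(channel, (dy, dx), axis=(0, 1))
            score = ncc(shifted, base)
            if score > best_score:
                best_score, best_shift = score, (dy, dx)
    return best_shift

def colorize(b, g, r, radius=30):
    """Align green and red to blue, then stack in R, G, B order."""
    g_aligned = np.roll(g, align_naive(g, b, radius), axis=(0, 1))
    r_aligned = np.roll(r, align_naive(r, b, radius), axis=(0, 1))
    return np.dstack([r_aligned, g_aligned, b])
```

Note that np.roll wraps pixels around to the opposite side of the image, which is one more reason the black borders need to be cropped before scoring.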

High Resolution Images

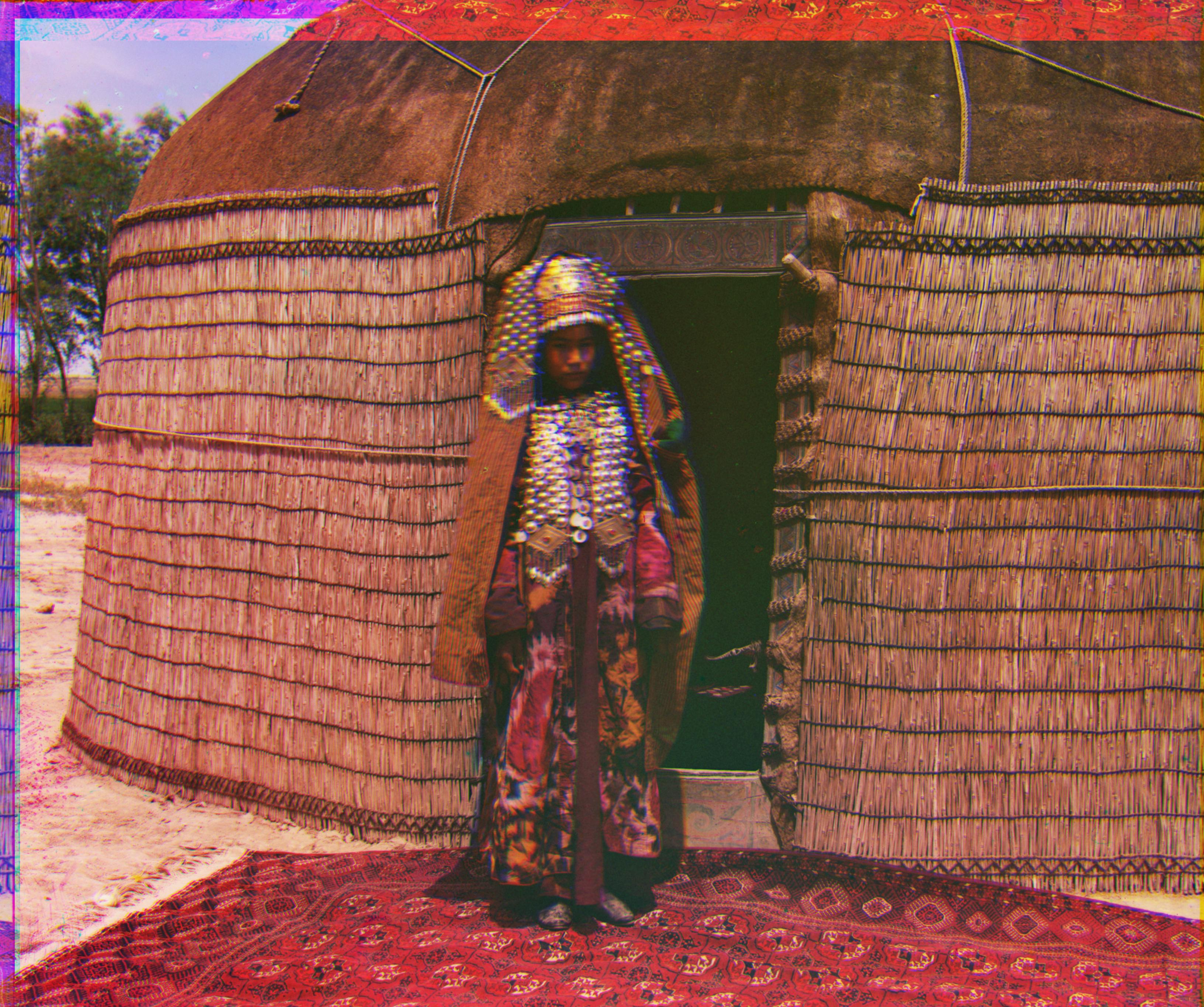

Because the high resolution images are so large, the naive alignment method used for the low resolution images is too slow for them. To improve runtime, I implemented an image pyramid that searches for the best alignment from the blurriest/coarsest image to the finest image. As with the low resolution images, I again cropped off a pre-set percentage of pixels from all four sides of the images to remove the black edges. The crops for the high resolution images are specified below in captions.

For the image pyramid algorithm, I defined a maximum number of levels for the pyramid, a factor alpha for scaling, and a displacement for each level. First, I saved copies of the original filters, so that at the end I could align those original filters and stack them to produce the high resolution colorized images. Then, I scaled the blue, green, and red filters down to the coarsest level, which I defined as the point where the image's height was less than 250 pixels. While scaling down, at each level I kept track, in a list of multiplying factors, of the factor needed to return that image to the original size. With the filters at the blurriest level and a much smaller size than before, I scanned a range of (-20, 20) pixels to find the best displacement and updated the optimal displacement for the green and red filters according to the multiplying factor. Afterwards, I shifted the filters by the best displacement for that level and rescaled them to be finer to move to the next level. For all the finer levels after that, I scanned a range of (-5, 5) pixels and repeated the same process until I reached the original size. With the optimal displacements calculated, I shifted the original green and red filters by those displacements and stacked the colored filters together to produce the colorized image. The parameters used for the image pyramid were 8 as the maximum number of levels, a factor alpha of 0.5 for scaling, and a displacement of 5 for each level.
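A coarse-to-fine search in this spirit could be sketched as below. This is a simplified variant under stated assumptions, not the code used for the results: all function names are hypothetical, 2x2 block averaging stands in for whatever rescaling routine was actually used (it corresponds to alpha = 0.5), and instead of shifting and rescaling the filters at each level, it doubles the running displacement and re-centers a small search window around it.

```python
import numpy as np

def ncc(a, b):
    """Normalized cross correlation of two same-sized images."""
    a = a.astype(np.float64).ravel()
    b = b.astype(np.float64).ravel()
    a, b = a - a.mean(), b - b.mean()
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(np.dot(a, b) / denom) if denom else 0.0

def search(channel, base, center, radius):
    """Exhaustive NCC search in a +/- radius window around `center`."""
    cy, cx = center
    best_score, best_shift = -np.inf, center
    for dy in range(cy - radius, cy + radius + 1):
        for dx in range(cx - radius, cx + radius + 1):
            score = ncc(np.roll(channel, (dy, dx), axis=(0, 1)), base)
            if score > best_score:
                best_score, best_shift = score, (dy, dx)
    return best_shift

def downscale_half(img):
    """Halve each dimension by averaging 2x2 blocks (assumed alpha = 0.5)."""
    h, w = img.shape
    h2, w2 = h - h % 2, w - w % 2
    return img[:h2, :w2].reshape(h2 // 2, 2, w2 // 2, 2).mean(axis=(1, 3))

def align_pyramid(channel, base, min_height=250, coarse_radius=20,
                  fine_radius=5, max_levels=8):
    """Estimate the (row, col) shift that aligns `channel` to `base`."""
    levels = [(channel.astype(np.float64), base.astype(np.float64))]
    # Scale down until the image is shorter than min_height pixels.
    while levels[-1][1].shape[0] >= min_height and len(levels) < max_levels:
        c, b = levels[-1]
        levels.append((downscale_half(c), downscale_half(b)))
    # Wide search at the coarsest level, narrow refinement at finer ones.
    c, b = levels[-1]
    shift = search(c, b, (0, 0), coarse_radius)
    for c, b in reversed(levels[:-1]):
        shift = search(c, b, (2 * shift[0], 2 * shift[1]), fine_radius)
    return shift
```

The returned displacement is in full resolution pixels, so it can be applied directly to the saved original filter with np.roll before stacking.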

Problems

Overall, the naive alignment algorithm and the image pyramid performed pretty well on most of the images. The exception was emir.tif, which was misaligned due to considerable differences in brightness between the filters. Initially, I had a problem with blurriness with the naive alignment method of normalized cross correlation, but cropping the black edges helped fix that problem. Another problem was aligning photos that involved people: in harvesters.tif and lady.tif, there was some movement between the separate color filter shots, so there are some misalignments of the girl near the left side of harvesters.tif and slight blurriness around the lady's face in lady.tif. Also, in images that had some sort of movement, like wind, there would be a small noticeable patch of one filter's color in the colorized image, like the man's coat in three_generations.tif.

Bells and Whistles

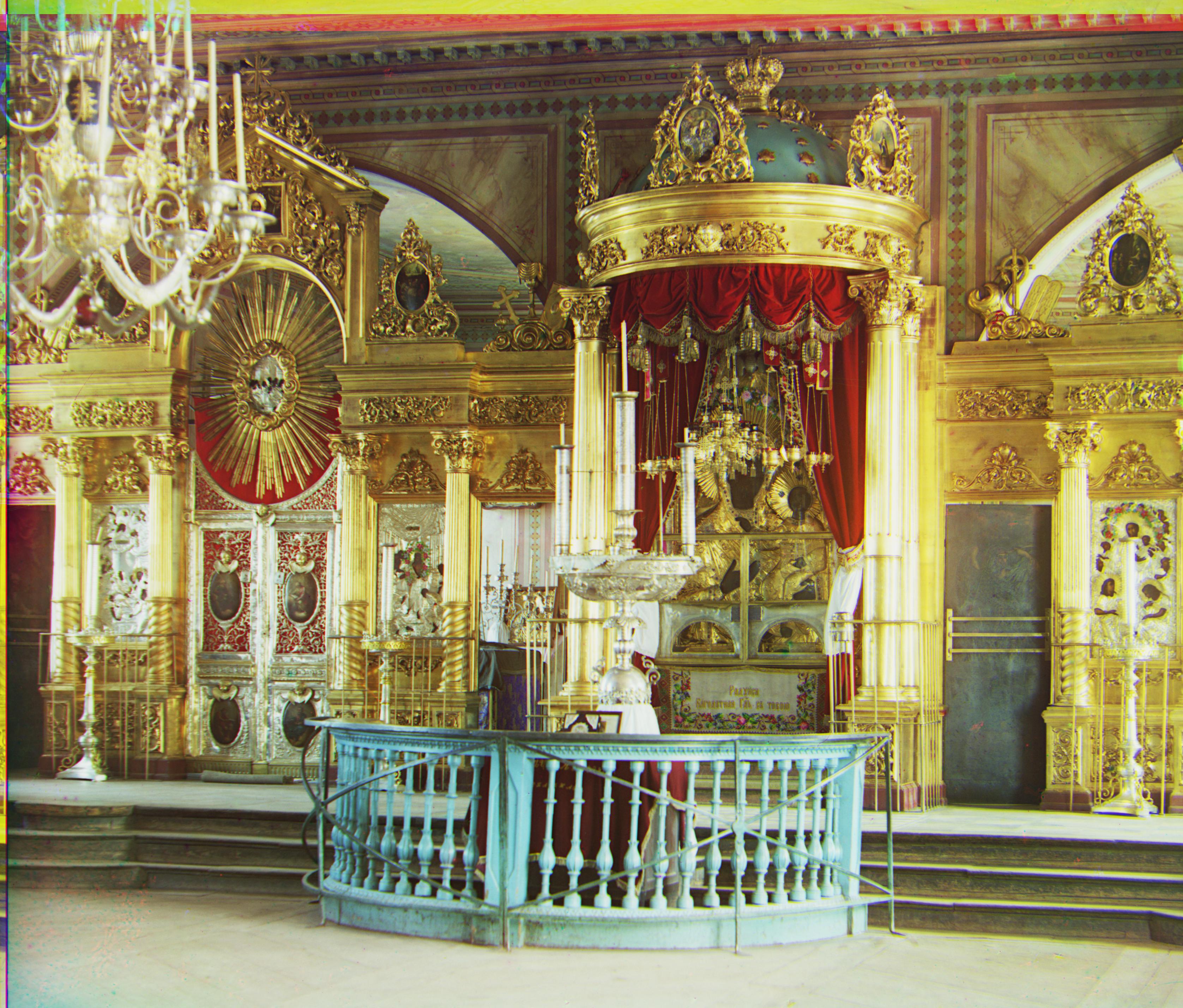

Edge Detection

To fix the alignment problem in emir.tif, I used the Canny edge detector from skimage with a sigma of 1.5 on the blue, green, and red filters. Using normalized cross correlation and my image pyramid, but comparing and aligning the Canny-filtered versions of the filters instead, emir.tif was cleanly aligned. This worked a lot better than comparing the raw pixel values, as the filters were being aligned based on the outlines of the clothing and his silhouette. Similarly, for lady.tif, the Canny filter was able to align exactly on the face of the lady, whereas before there was some haziness around her face.
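As a rough illustration of aligning on edge maps, the sketch below runs `skimage.feature.canny` with sigma 1.5 on both filters and scores candidate shifts by NCC on the resulting binary edge images. It is an assumption-laden simplification: `ncc` and `align_on_edges` are hypothetical names, and a single-level exhaustive search is used here in place of the image pyramid to keep the example short.

```python
import numpy as np
from skimage.feature import canny

def ncc(a, b):
    """Normalized cross correlation of two same-sized images."""
    a = a.astype(np.float64).ravel()
    b = b.astype(np.float64).ravel()
    a, b = a - a.mean(), b - b.mean()
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(np.dot(a, b) / denom) if denom else 0.0

def align_on_edges(channel, base, radius=5, sigma=1.5):
    """Find the (row, col) shift that best aligns the two edge maps."""
    # canny returns a boolean edge map; convert to float for scoring.
    edges_c = canny(channel, sigma=sigma).astype(np.float64)
    edges_b = canny(base, sigma=sigma).astype(np.float64)
    best_score, best_shift = -np.inf, (0, 0)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            score = ncc(np.roll(edges_c, (dy, dx), axis=(0, 1)), edges_b)
            if score > best_score:
                best_score, best_shift = score, (dy, dx)
    return best_shift
```

The displacement found on the edge maps is then applied to the original (non-edge) filter before stacking, so the edge maps only guide the alignment and never appear in the final image.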

Original Colored vs Bells and Whistles

Additional Photos