To align the images, I used an image pyramid, starting at the lowest resolution (a 2x2 grid) and refining the alignment at each step up in resolution. The error function I used was the squared Euclidean distance between the two matrices, computed over only the middle 4/5ths of the pixels.
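As a rough illustration of the coarse-to-fine idea, here is a minimal sketch, not the actual project code. The function names, the 2x2 block-average downscaling, the per-level search radius, the `min_size` cutoff, and the reading of "middle 4/5ths" as trimming 10% from each side are all assumptions made for the example.

```python
import numpy as np

def ssd_center(a, b):
    """Sum of squared differences over the central region.

    Assumption: "middle 4/5ths" is read as trimming 10% from each side.
    """
    h, w = a.shape
    dh, dw = h // 10, w // 10
    diff = a[dh:h - dh, dw:w - dw] - b[dh:h - dh, dw:w - dw]
    return float(np.sum(diff * diff))

def downscale2(img):
    """Halve the resolution by averaging 2x2 blocks (odd edges are cropped)."""
    h, w = img.shape
    h2, w2 = h - h % 2, w - w % 2
    img = img[:h2, :w2]
    return img.reshape(h2 // 2, 2, w2 // 2, 2).mean(axis=(1, 3))

def pyramid_align(moving, ref, min_size=16, radius=2):
    """Return the (dy, dx) roll that best aligns `moving` to `ref`."""
    if min(ref.shape) > min_size:
        # Solve the problem at half resolution first, then double the estimate.
        dy, dx = pyramid_align(downscale2(moving), downscale2(ref), min_size, radius)
        dy, dx = 2 * dy, 2 * dx
    else:
        dy, dx = 0, 0
    best_err, best = None, (dy, dx)
    # Refine the estimate with a small local search at this level.
    for ddy in range(-radius, radius + 1):
        for ddx in range(-radius, radius + 1):
            cand = (dy + ddy, dx + ddx)
            err = ssd_center(np.roll(moving, cand, axis=(0, 1)), ref)
            if best_err is None or err < best_err:
                best_err, best = err, cand
    return best
```

Because each level only searches a few pixels around the doubled estimate from the level below, the total number of comparisons stays small even for large displacements.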

At the start, instead of actually resizing images, I thought it would be faster to just look at a subset of pixels. For example, to find the best displacement at 1/4x the original resolution, I'd compare every 4th pixel when computing the error and scale the displacement vector by 4 for that level. This was indeed fast because no extra resizing was necessary, but the results were very hit-or-miss: for some images it worked great, and for others it did not work at all. Looking back, this might work pretty well if I smoothed the image first to remove some noise, but in the end the resizing was nearly as fast, so it didn't matter.
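The subsampling idea can be sketched with strided slicing. This is a hypothetical reconstruction, assuming `np.roll` is used for shifting; the function names and the search radius are made up for the example.

```python
import numpy as np

def subsampled_error(a, b, shift, step):
    """SSD using only every `step`-th pixel, with `a` rolled by `shift`."""
    a_small = a[::step, ::step]  # strided views, no resizing or copying
    b_small = b[::step, ::step]
    diff = np.roll(a_small, shift, axis=(0, 1)) - b_small
    return float(np.sum(diff * diff))

def best_subsampled_shift(a, b, step=4, radius=3):
    """Search shifts on the subsampled grid, then scale back to full resolution."""
    best_err, best = None, (0, 0)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            err = subsampled_error(a, b, (dy, dx), step)
            if best_err is None or err < best_err:
                best_err, best = err, (dy, dx)
    # One pixel on the subsampled grid corresponds to `step` original pixels.
    return (best[0] * step, best[1] * step)
```

Taking every 4th pixel without any low-pass filtering keeps all of the pixel-level noise and can alias fine detail, which may be one reason the results were inconsistent.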

Initially, to calculate the error I was looping over the matrix's indices and comparing the two matrices element by element. This turned out to be pretty slow, and it was getting tough to change the loops into maps or parallelize them. In the end, I realized that the squared distance between two matrices A and B is just np.sum(np.power(A - B, 2)), and replacing the error function with that dramatically sped up the program. It went from taking around 3 minutes for small images to less than 5 seconds, with the largest images taking less than 20 seconds. Running the script on all the provided images took around 2 minutes total, which is much faster than the original implementation.
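For comparison, here is a small sketch of the two approaches side by side. The function names are hypothetical, and the cast to float is an added assumption to avoid wraparound when the inputs are unsigned integer images.

```python
import numpy as np

def ssd_loop(a, b):
    """Squared Euclidean distance computed with explicit Python loops (slow)."""
    total = 0.0
    for i in range(a.shape[0]):
        for j in range(a.shape[1]):
            d = float(a[i, j]) - float(b[i, j])
            total += d * d
    return total

def ssd_vectorized(a, b):
    """Same quantity in one NumPy expression: the sum of squared differences."""
    # Cast first so that uint8 inputs do not wrap around on subtraction.
    diff = a.astype(np.float64) - b.astype(np.float64)
    return float(np.sum(np.power(diff, 2)))
```

Both functions return the same value; the vectorized version pushes the per-pixel work into NumPy's compiled loops instead of the Python interpreter.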

I was not able to get edge comparison to work. The simple way I did it was to find the derivative of the image at each point, store it in that pixel, and then use the same error function on the result. This did not end up working for a couple of reasons. First, the noise in the images caused the edges to misalign, though this could've been fixed with some smoothing beforehand. Additionally, it was super slow, with the large images taking over 2 minutes to edge-ify. I tried to fix this by replacing my own naive for loop with numpy's convolve, but numpy does not seem to have a 2d convolve implementation, so that did not end up working. Afterwards, I saw that scimage has a 2d convolve, so I could've implemented Canny edge detection with some success, but in the end my images were aligning pretty well without it.
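One way the per-pixel derivative could be computed without a Python loop is `np.gradient`, which takes finite differences along each axis. This is only a sketch of that alternative, not what the project did, and `gradient_magnitude` is a made-up name.

```python
import numpy as np

def gradient_magnitude(img):
    """Per-pixel gradient magnitude from finite differences along each axis."""
    # For a 2D array, np.gradient returns one derivative array per axis.
    gy, gx = np.gradient(img.astype(np.float64))
    return np.hypot(gx, gy)

# Tiny example: a vertical step edge between columns 3 and 4.
step_img = np.zeros((8, 8))
step_img[:, 4:] = 1.0
edges = gradient_magnitude(step_img)  # nonzero only in the columns next to the step
```

The resulting edge image can be passed to the same error function as the raw channels. Smoothing the image first would probably be needed to keep noise from dominating the gradients. For general 2D kernels, `scipy.signal.convolve2d` is another option.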

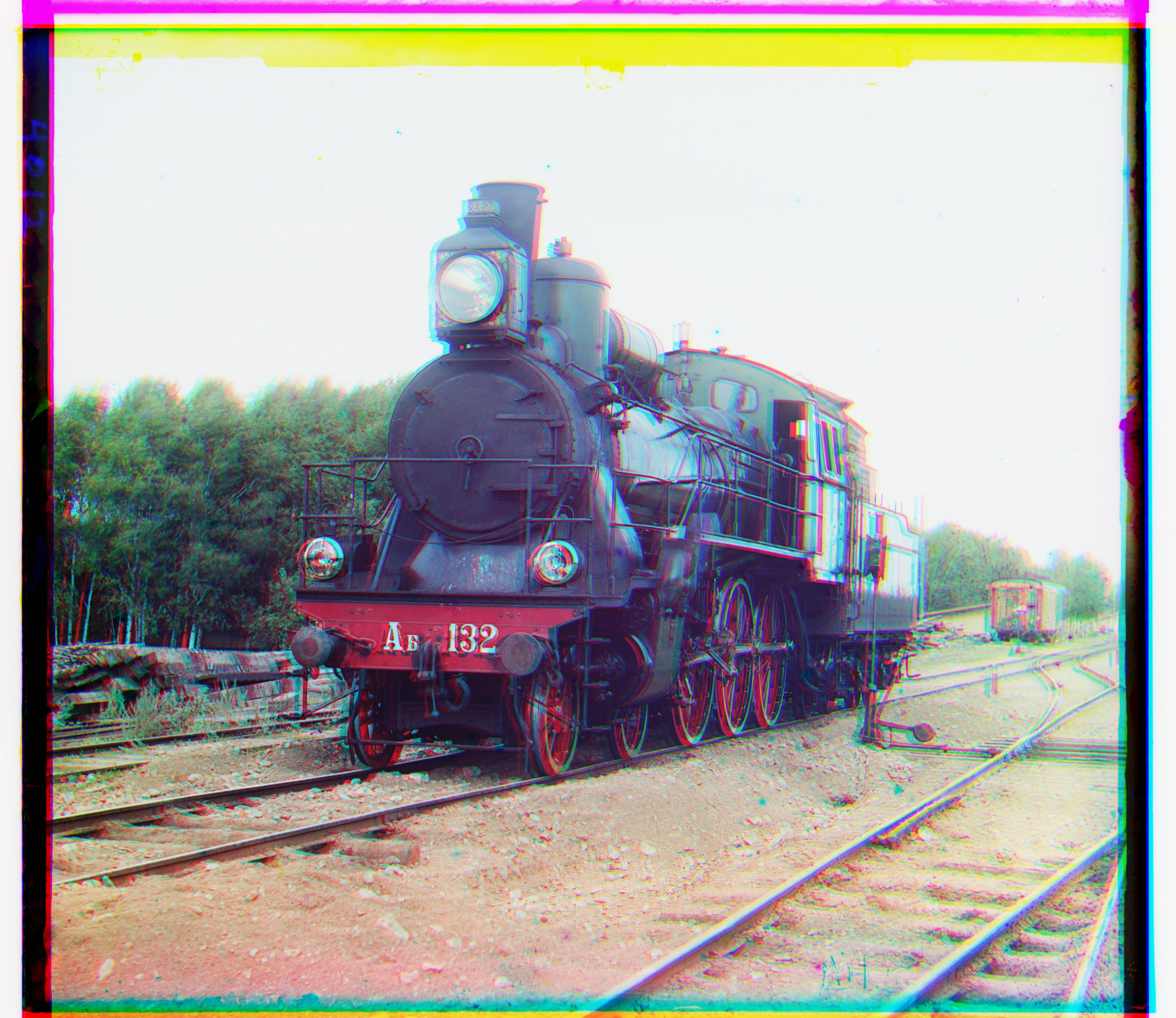

The image alignment was fast and accurate. The images it worked best on had a large amount of color, where those colors weren't primary tones (like red or green) but combinations of colors (like gold or purple). The images it worked poorly on were either monochromatic (generally with one layer misaligned) or had a lot of primary colors (and these would be fuzzy overall).

Image Z_aqueduct g: (3, 0) r: (14, -1)

Image Z_rafts g: (3, -1) r: (6, -2)

Image Z_sunset g: (4, -1) r: (-7, -3)

Image cathedral g: (5, -1) r: (11, -1)

Image monastery g: (-3, 1) r: (3, 2)

Image tobolsk g: (3, 2) r: (6, 3)

Image castle g: [35, 4] r: [98, 5]

Image emir g: [49, 21] r: [98, 17]

Image harvesters g: [59, 12] r: [124, 9]

Image icon g: [41, 16] r: [90, 22]

Image lady g: [57, 3757] r: [118, 3745]

Image melons g: [82, 5] r: [180, 9]

Image onion_church g: [52, 23] r: [108, 35]

Image self_portrait g: [76, 3809] r: [172, 3808]

Image three_generations g: [52, 7] r: [111, 8]

Image train g: [41, 3740] r: [90, 3]

Image workshop g: [54, 3738] r: [104, 3727]