# CS194-26 Project 1: Images of the Russian Empire (colorizing the Prokudin-Gorskii photo collection)

By Yihang Ye

Overview

In the early 20th century, before practical color photography was available, Prokudin-Gorskii had the idea of photographing each scene through filters of different colors so that, some day in the future, color pictures could be reconstructed from the filtered images. Thanks to his effort, a collection of thousands of photos taken through red, green, and blue filters has been preserved.

This sets the goal of this project: working from digitized copies of his photo collection, reconstruct color images from the filtered exposures taken at the time.

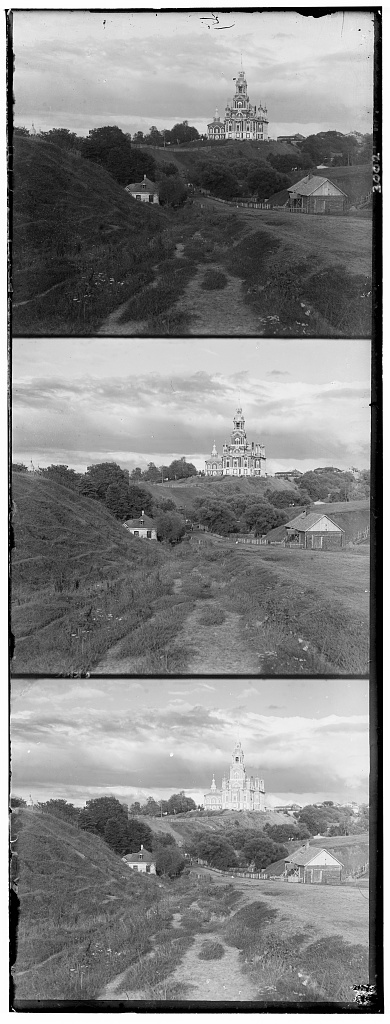

Original images with no colors (cathedral.jpg)

My Approach

The basic idea for reconstructing a color image is simple: align and stack the three images of the same scene taken through different filters, so that together they fill in the missing color information. However, the specific approach differs slightly depending on the resolution of the photos.
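
As a rough illustration of the "align and stack" step, here is a minimal NumPy sketch. It assumes the scan stores the three exposures stacked vertically in B, G, R order with equal heights, and that shifting is done circularly; the function names `split_plate`, `shift`, and `stack_rgb` are hypothetical and not taken from the project code.

```python
import numpy as np


def split_plate(plate):
    """Split a vertically stacked scan into (b, g, r) channels.

    Assumption: exposures are stacked top to bottom as B, G, R with equal
    height; any leftover rows at the bottom are dropped.
    """
    height = plate.shape[0] // 3
    b = plate[:height]
    g = plate[height:2 * height]
    r = plate[2 * height:3 * height]
    return b, g, r


def shift(channel, dy, dx):
    """Circularly shift a channel by dy rows (vertical) and dx columns (horizontal)."""
    return np.roll(channel, (dy, dx), axis=(0, 1))


def stack_rgb(r, g, b, r_offset=(0, 0), g_offset=(0, 0)):
    """Shift R and G onto the fixed B channel and stack them into an H x W x 3 image."""
    return np.dstack([shift(r, *r_offset), shift(g, *g_offset), b])
```

With offsets in the [R-vertical, R-horizontal, G-vertical, G-horizontal] format used in this write-up, a call would look like `stack_rgb(r, g, b, r_offset=(12, 3), g_offset=(5, 2))`.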

Low-Reso: Single-Scale Alignment

For low-resolution pictures (around 300*300), a naive sliding algorithm is used to find the best alignment. We simply search exhaustively through all combinations of shifts of the R-image (the image taken under the red filter) and the G-image over a fixed B-image.

The sliding interval was set to 10%~15% of the image's height and width, since we don't want more than 20% of the image to be clipped: shifting blurs the edges of the final image. By minimizing the sum of squared differences (SSD) between each shifted image and one of the original images, which is kept fixed to preserve most features, a set of offsets is found for the G-image and the R-image. Normalized cross-correlation (NCC) gives similar results. Here are the results of using the found offsets to reconstruct colorized images (Offsets Format: [R-vertical, R-horizontal, G-vertical, G-horizontal]):

cathedral.jpg

offset: [12, 3, 5, 2]

monastery.jpg

offset: [3, 2, -3, 2]

tobolsk.jpg

offset: [6, 3, 3, 2]
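
The single-scale search described in this section can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the names `ssd`, `ncc`, `interior`, and `align_single_scale` are hypothetical, the search window is given in pixels (`radius`) rather than as a percentage of the image size, and the choice to score only the interior of the image (`margin_frac`) is an assumption.

```python
import numpy as np


def ssd(a, b):
    """Sum of squared differences; lower means a better match."""
    diff = a.astype(np.float64) - b.astype(np.float64)
    return float(np.sum(diff * diff))


def ncc(a, b):
    """Normalized cross-correlation; higher means a better match."""
    a = a.astype(np.float64)
    b = b.astype(np.float64)
    a = a - a.mean()
    b = b - b.mean()
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(np.sum(a * b) / denom) if denom > 0 else 0.0


def interior(img, margin_frac):
    """Drop a border so wrapped-around pixels and plate edges are not scored."""
    h, w = img.shape
    my, mx = int(h * margin_frac), int(w * margin_frac)
    return img[my:h - my, mx:w - mx]


def align_single_scale(channel, reference, radius=15, margin_frac=0.1):
    """Try every (dy, dx) in a square window and return the one minimizing SSD."""
    ref_core = interior(reference, margin_frac)
    best_score, best_shift = np.inf, (0, 0)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            shifted = np.roll(channel, (dy, dx), axis=(0, 1))
            score = ssd(interior(shifted, margin_frac), ref_core)
            if score < best_score:
                best_score, best_shift = score, (dy, dx)
    return best_shift
```

Calling `align_single_scale(r, b)` and `align_single_scale(g, b)` would give the two (vertical, horizontal) pairs that make up one offset list above. Swapping `ssd` for `ncc` only requires maximizing instead of minimizing the score.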

High-Reso: Multi-Scale Alignment

For high-resolution images (around 4k * 4k), the naive solution does not work well, because searching all combinations of shifts becomes too computationally expensive. To tackle this, a recursive pyramid algorithm is implemented: shrink the image recursively and find approximate best offsets in the low-resolution version.
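
A coarse-to-fine search of this kind might look like the sketch below. It is only an approximation of the idea under stated assumptions: each level halves the resolution by averaging 2x2 blocks, the coarsest level is searched in a fixed window, and every finer level doubles the estimate and refines it densely within a few pixels. The project's own search is controlled by a "number of intervals" argument instead; the names `downsample2`, `search`, and `align_pyramid` and all default values here are hypothetical.

```python
import numpy as np


def downsample2(img):
    """Halve the resolution by averaging 2x2 blocks (odd last row/column dropped)."""
    h, w = img.shape
    h2, w2 = h - h % 2, w - w % 2
    img = img[:h2, :w2].astype(np.float64)
    return img.reshape(h2 // 2, 2, w2 // 2, 2).mean(axis=(1, 3))


def score(channel, reference, dy, dx, margin_frac=0.1):
    """SSD between the shifted channel and the reference, on the image interior."""
    h, w = reference.shape
    my, mx = int(h * margin_frac), int(w * margin_frac)
    shifted = np.roll(channel, (dy, dx), axis=(0, 1))
    diff = (shifted[my:h - my, mx:w - mx].astype(np.float64)
            - reference[my:h - my, mx:w - mx].astype(np.float64))
    return float(np.sum(diff * diff))


def search(channel, reference, center, radius):
    """Best (dy, dx) within a square window centered on a previous estimate."""
    cy, cx = center
    candidates = [(dy, dx)
                  for dy in range(cy - radius, cy + radius + 1)
                  for dx in range(cx - radius, cx + radius + 1)]
    return min(candidates, key=lambda s: score(channel, reference, *s))


def align_pyramid(channel, reference, min_size=64, coarse_radius=8, refine_radius=2):
    """Recursively align at half resolution, then refine around the doubled estimate."""
    if min(reference.shape) <= min_size:
        return search(channel, reference, (0, 0), coarse_radius)
    dy, dx = align_pyramid(downsample2(channel), downsample2(reference),
                           min_size, coarse_radius, refine_radius)
    return search(channel, reference, (2 * dy, 2 * dx), refine_radius)
```

The design point is that the expensive wide search only ever runs on the smallest image, while each full-size level evaluates just `(2 * refine_radius + 1) ** 2` candidate shifts.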

In this algorithm, the new search space at each level is a block centered on the previously found offsets, and the distance between sample points is determined by an argument (the number of intervals). Starting from an approximation of the ideal offsets found in the low-resolution version, then rescaling it and searching the points around it, the best offsets can be found much more efficiently. Here are the results of applying multi-scale search to high-resolution images (original image size: ~70MB; Offsets Format: [R-vertical, R-horizontal, G-vertical, G-horizontal]):

self_portrait.jpg

offset: [177, 39, 78, 31]

melons.jpg

offset: [175, 4, 79, 4]

castle.jpg

offset: [95, 9, 31, 9]

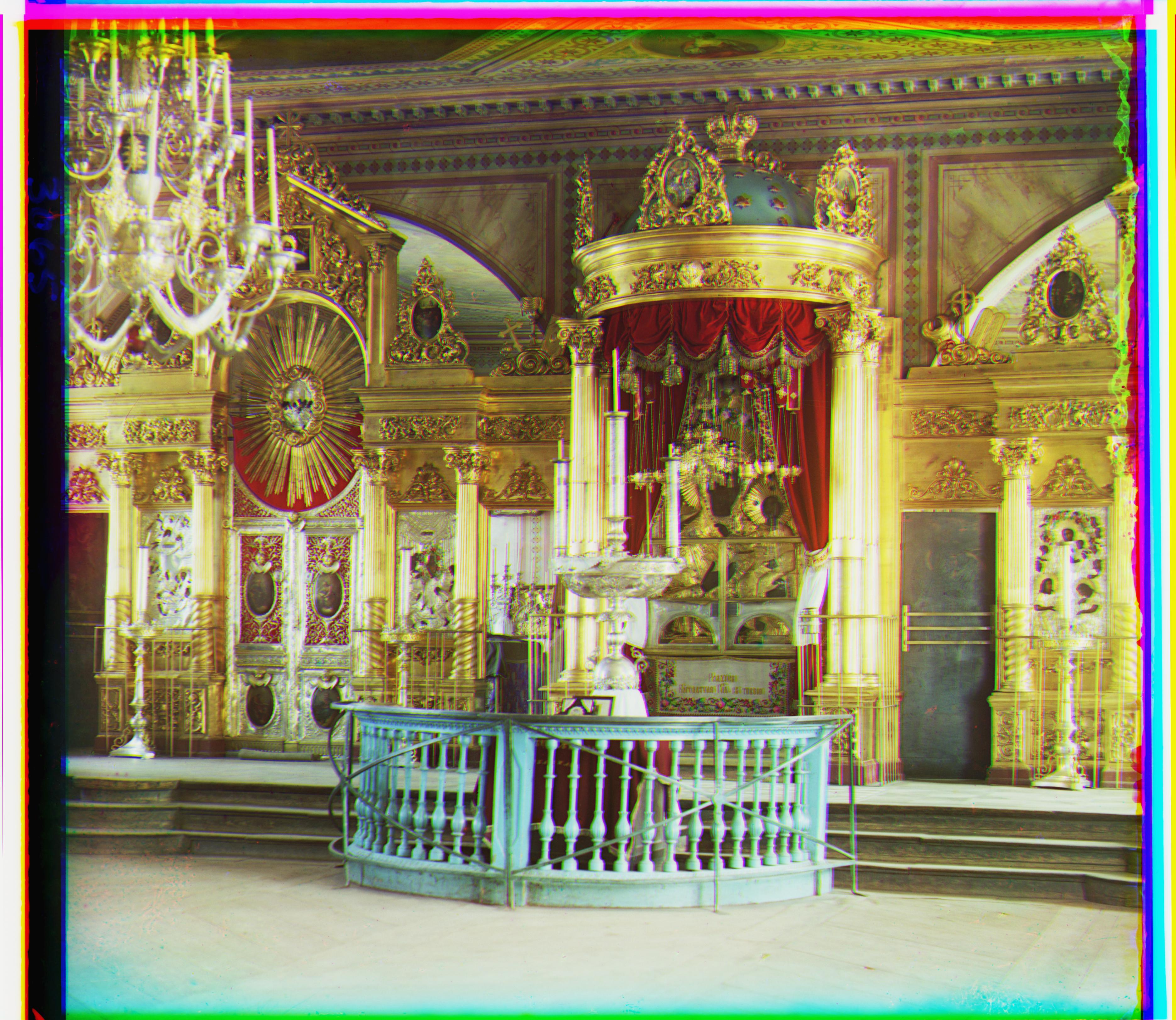

onion_church.jpg

offset: [111, 33, 55, 25]

icon.jpg

offset: [94, 19, 46, 9]

train.jpg

offset: [90, 31, 40, 7]

lady.jpg

offset: [112, 4, 48, 4]

workshop.jpg

offset: [104, -10, 56, -3]

three_generations

offset: [112, 8, 48, 8]

emir.jpg

offset: [112, 72, 48, 26]

harvesters.jpg

offset: [128, 12, 64, 12]

Additional Examples

(Offsets Format: [R-vertical, R-horizontal, G-vertical, G-horizontal])

ukraine.jpg

offset: [120, 6, 24, 6]

wooden_church.jpg

offset: [39, 39, 7, 15]

school.jpg

offset: [64, 4, 24, 16]

Challenges

One of the provided images, emir.tif, and one of the additional example images consist of three exposures with very different brightness. This made the resulting images much blurrier than the other colorized high-resolution images. To address the problem, a finer sample distance was used for these images so that the search is more thorough. The resulting image did become clearer for the additional example; however, the result for 'emir' was still not as good as for the other pictures. One potential reason is that the additional example contains more reference objects that help the metric determine the optimal shift (such as the wooden fence next to the woman), whereas 'emir' has only one major figure located in the center of the image. This might explain the substantial difference in the reconstructed results. As a potential solution, a more informative metric might help cope with the brightness issue in 'emir', where the reference objects are less informative than in other images.
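
One candidate for such a "more informative metric", which was not tried in this project, is to compare edge maps instead of raw intensities, since edges tend to stay in the same place even when a region is bright in one channel and dark in another. The sketch below is an assumption-laden illustration: `edge_map` and `align_on_edges` are hypothetical names, gradient magnitude is just one possible edge feature, and NCC is used as the score.

```python
import numpy as np


def edge_map(img):
    """Gradient magnitude; unchanged if a constant is added or the image is inverted."""
    gy, gx = np.gradient(img.astype(np.float64))
    return np.hypot(gx, gy)


def ncc(a, b):
    """Normalized cross-correlation; higher means a better match."""
    a = a - a.mean()
    b = b - b.mean()
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(np.sum(a * b) / denom) if denom > 0 else 0.0


def align_on_edges(channel, reference, radius=15, margin_frac=0.15):
    """Exhaustive search that scores shifts on edge maps rather than pixel values."""
    ch_edges, ref_edges = edge_map(channel), edge_map(reference)
    h, w = ref_edges.shape
    my, mx = int(h * margin_frac), int(w * margin_frac)
    ref_core = ref_edges[my:h - my, mx:w - mx]
    best_score, best_shift = -np.inf, (0, 0)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            shifted = np.roll(ch_edges, (dy, dx), axis=(0, 1))
            s = ncc(shifted[my:h - my, mx:w - mx], ref_core)
            if s > best_score:
                best_score, best_shift = s, (dy, dx)
    return best_shift
```

Whether this would actually improve 'emir' is untested here; it is only meant to show what swapping the metric could look like.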

Bells & Whistles

Auto-Cropping

As mentioned in the previous part, shifting and re-stacking the images inevitably leaves blurred bands at the edges of the result, harming the overall image quality. To address this, an auto-cropping mechanism is implemented. It removes the sections around the harsh edges so that the core content of the image is better presented. Here are the results of auto-cropping performed on several images:

Before:

After:

Before:

After:
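
The write-up does not spell out how the crop box is chosen, so the following is only one simple possibility, not necessarily what was implemented: assuming the channels were shifted circularly, drop every row and column that any channel wrapped into. The names `crop_box_from_offsets` and `auto_crop` are hypothetical, and this sketch does not attempt to detect the dark plate borders themselves.

```python
import numpy as np


def crop_box_from_offsets(shape, offsets):
    """Return (top, bottom, left, right) of the region no channel wrapped into.

    `offsets` is a list of (vertical, horizontal) shifts, one per moved channel.
    A positive vertical shift corrupts rows at the top, a negative one rows at
    the bottom; the same holds for columns with horizontal shifts.
    """
    h, w = shape[:2]
    dys = [dy for dy, _ in offsets] + [0]  # the fixed channel has shift 0
    dxs = [dx for _, dx in offsets] + [0]
    top, bottom = max(dys), h + min(dys)
    left, right = max(dxs), w + min(dxs)
    return top, bottom, left, right


def auto_crop(image, offsets):
    """Crop an H x W x 3 image to the area covered by all three channels."""
    top, bottom, left, right = crop_box_from_offsets(image.shape, offsets)
    return image[top:bottom, left:right]
```

For example, with the monastery offsets [3, 2, -3, 2], `auto_crop(image, [(3, 2), (-3, 2)])` would trim 3 rows from the top, 3 rows from the bottom, and 2 columns from the left.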
