The gradient magnitude tells us how quickly intensity is changing in the direction of the gradient. This allows us to define which edges are most significant in the image.
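As a minimal sketch of the idea, the gradient magnitude can be computed from the two partial derivatives and then thresholded to keep only the strongest edges. Here `np.gradient` stands in for whichever finite-difference filters were actually used, and the `threshold` parameter is a hypothetical value that would have to be tuned per image.

```python
import numpy as np

def gradient_magnitude(img):
    """Per-pixel gradient magnitude of a 2D grayscale image."""
    img = np.asarray(img, dtype=float)
    # np.gradient returns derivatives along axis 0 (rows, y) then axis 1 (columns, x).
    gy, gx = np.gradient(img)
    return np.hypot(gx, gy)

def edge_map(img, threshold):
    """Binary edge map: True where the gradient magnitude exceeds the threshold."""
    # `threshold` is an assumed, hand-tuned value, not one taken from the project.
    return gradient_magnitude(img) > threshold
```

A lower threshold keeps more (and noisier) edges, while a higher one keeps only the sharpest intensity changes.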

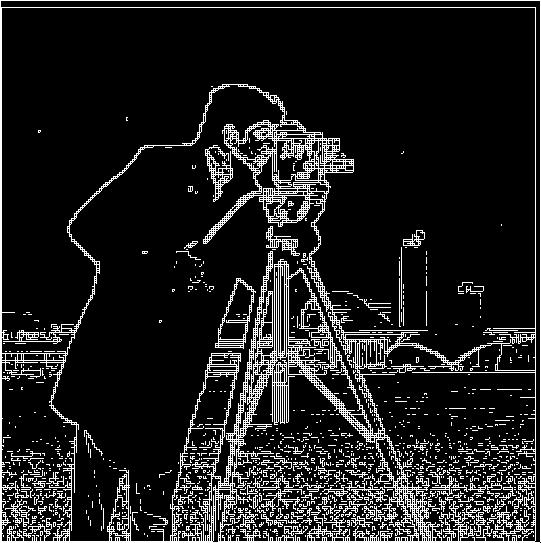

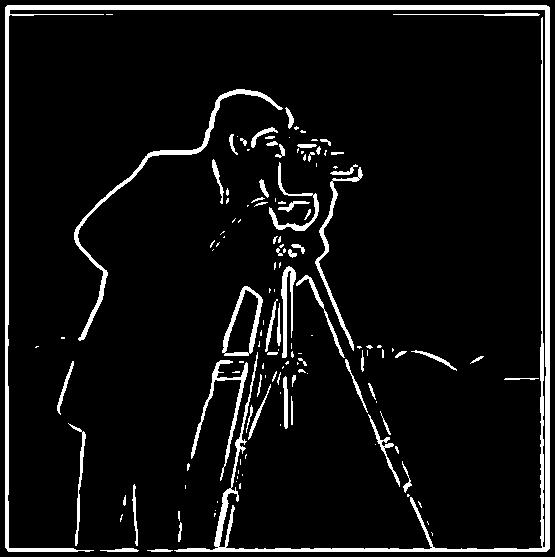

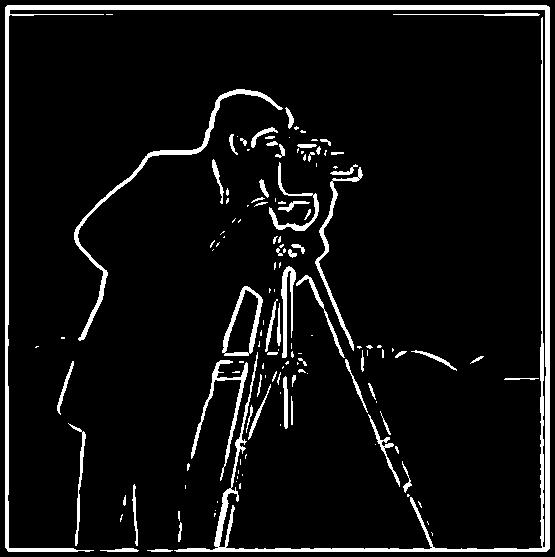

The left image shows the Gaussian-filtered result on the cameraman photo, obtained by first convolving the image with a Gaussian. The right image shows the derivative of Gaussian (DoG) filter result, obtained with a single convolution. Compared to part 1.1, these edge images are blurrier, since they are affected by the smoothing operator. Despite the different processes, the two images (Gaussian and DoG filters) match.
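The two procedures match because convolution is associative: blurring and then differentiating is the same as convolving once with a pre-combined filter. The sketch below checks this numerically with a small NumPy-only convolution. The kernel size, sigma, the `[1, -1]` difference filter, and the random test image are illustrative assumptions, not the values used for the figures.

```python
import numpy as np

def conv2d_full(image, kernel):
    """Full 2D convolution (zero padding), written with NumPy only."""
    h, w = image.shape
    kh, kw = kernel.shape
    out = np.zeros((h + kh - 1, w + kw - 1))
    for i in range(kh):
        for j in range(kw):
            # Each kernel tap adds a shifted, scaled copy of the image.
            out[i:i + h, j:j + w] += kernel[i, j] * image
    return out

def gaussian_kernel2d(size, sigma):
    """Normalized 2D Gaussian built as the outer product of a 1D Gaussian."""
    x = np.arange(size) - (size - 1) / 2.0
    g = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    g /= g.sum()
    return np.outer(g, g)

dx = np.array([[1.0, -1.0]])                   # finite difference in x (assumed filter)
gauss = gaussian_kernel2d(size=7, sigma=1.0)   # illustrative size and sigma
dog_x = conv2d_full(gauss, dx)                 # derivative of Gaussian filter

rng = np.random.default_rng(0)
img = rng.random((32, 32))                     # stand-in for the cameraman photo

two_step = conv2d_full(conv2d_full(img, gauss), dx)  # blur, then differentiate
one_step = conv2d_full(img, dog_x)                   # single DoG convolution
print(np.allclose(two_step, one_step))
```

With "same"-size outputs and non-zero boundary handling the two results can differ slightly near the image borders, so exact agreement is only expected for the full convolution used here.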

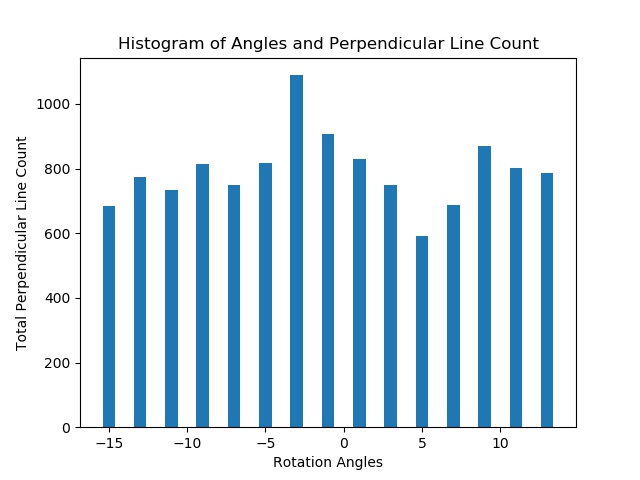

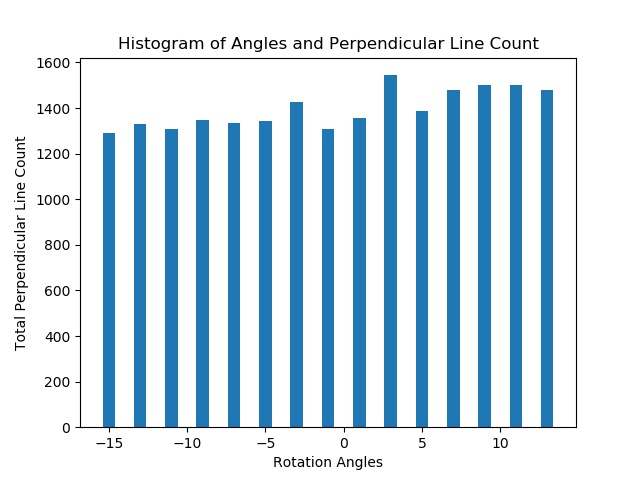

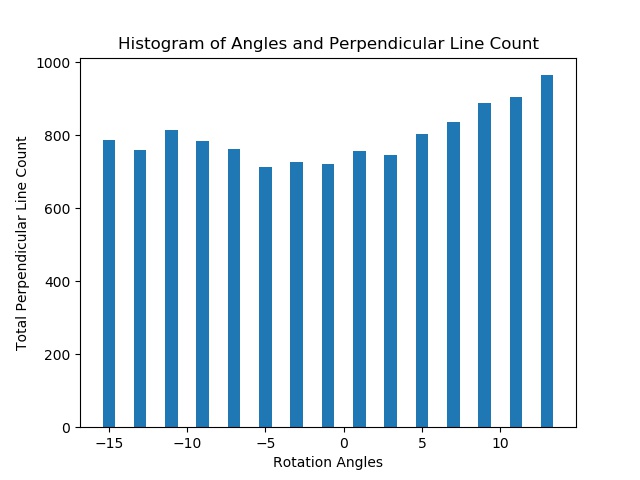

Here, I looped through possible rotations and, for each one, used the gradient angles to count the number of horizontal and vertical lines detected. I then took the rotation with the highest count of such perpendicular lines and applied it to the original image to get an automatically straightened result.
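A rough sketch of that search is below. The function names, the angle tolerance `tol_deg`, and the relative magnitude cutoff `rel_thresh` are all hypothetical choices made for illustration. The `rotate` argument is any caller-supplied function that rotates an image by an angle in degrees.

```python
import numpy as np

def straightness_score(img, tol_deg=2.0, rel_thresh=0.1):
    """Count strong-gradient pixels whose gradient angle is near a multiple of 90 degrees."""
    gy, gx = np.gradient(np.asarray(img, dtype=float))
    mag = np.hypot(gx, gy)
    # Ignore weak gradients so flat regions do not vote (assumed cutoff).
    strong = mag > rel_thresh * mag.max()
    angles = np.degrees(np.arctan2(gy[strong], gx[strong]))
    # Signed offset from the nearest multiple of 90 degrees, in [-45, 45).
    offset = np.abs((angles + 45.0) % 90.0 - 45.0)
    return int(np.count_nonzero(offset <= tol_deg))

def best_rotation(img, candidate_angles, rotate):
    """Return the candidate angle whose rotated image has the highest score."""
    scores = [straightness_score(rotate(img, a)) for a in candidate_angles]
    return candidate_angles[int(np.argmax(scores))]
```

One possible choice for `rotate` is `scipy.ndimage.rotate` with `reshape=False`. Rotating introduces blank corners, so it is probably worth scoring only a central crop of each rotated image.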

Chosen Angle: ~ -3 degrees

Chosen Angle: ~3 degrees

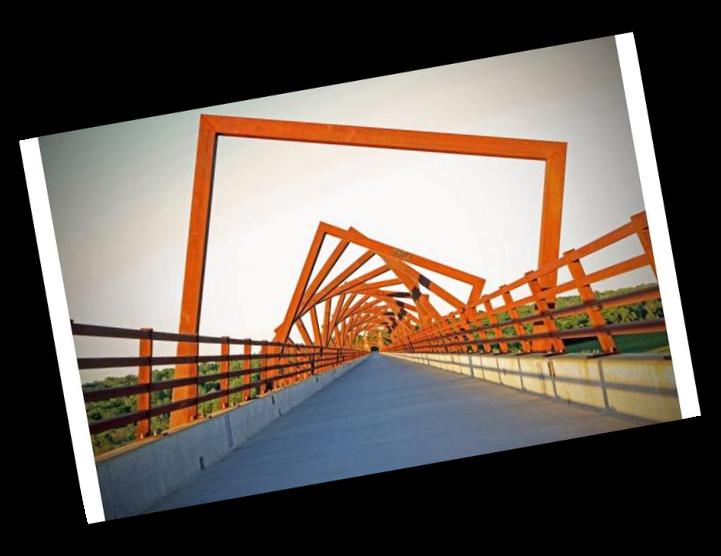

This picture failed because the program detected many perpendicular lines that do not correspond to the correct alignment of the image.

Chosen Angle: ~15 degrees

I subtracted a low-pass filtered (blurred) copy from the original image, which leaves only the higher frequencies and gives a sharper-looking image that emphasizes the strongest edges.

Progression: original, low frequencies, high frequencies = original - low frequencies
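The progression above can be sketched as follows. The separable Gaussian blur, the 3-sigma kernel radius, the edge padding, and the default `sigma` are assumptions made for illustration, since the exact filter settings are not stated.

```python
import numpy as np

def gaussian_kernel1d(sigma):
    """Normalized 1D Gaussian truncated at about 3 sigma (assumed radius)."""
    radius = int(3 * sigma + 0.5)
    x = np.arange(-radius, radius + 1)
    k = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return k / k.sum()

def gaussian_blur(img, sigma):
    """Separable Gaussian blur of a 2D image with edge-replicated borders."""
    k = gaussian_kernel1d(sigma)
    r = len(k) // 2
    padded = np.pad(np.asarray(img, dtype=float), r, mode="edge")

    def conv(v):
        return np.convolve(v, k, mode="valid")

    rows = np.apply_along_axis(conv, 1, padded)   # blur along x
    return np.apply_along_axis(conv, 0, rows)     # blur along y

def split_frequencies(img, sigma=2.0):
    """Return (low, high), where high = original - low."""
    low = gaussian_blur(img, sigma)
    high = np.asarray(img, dtype=float) - low
    return low, high
```

A common variant (not necessarily what was done here) adds a scaled copy of `high` back onto the original, as in `img + alpha * high`, to control how strong the sharpening is.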

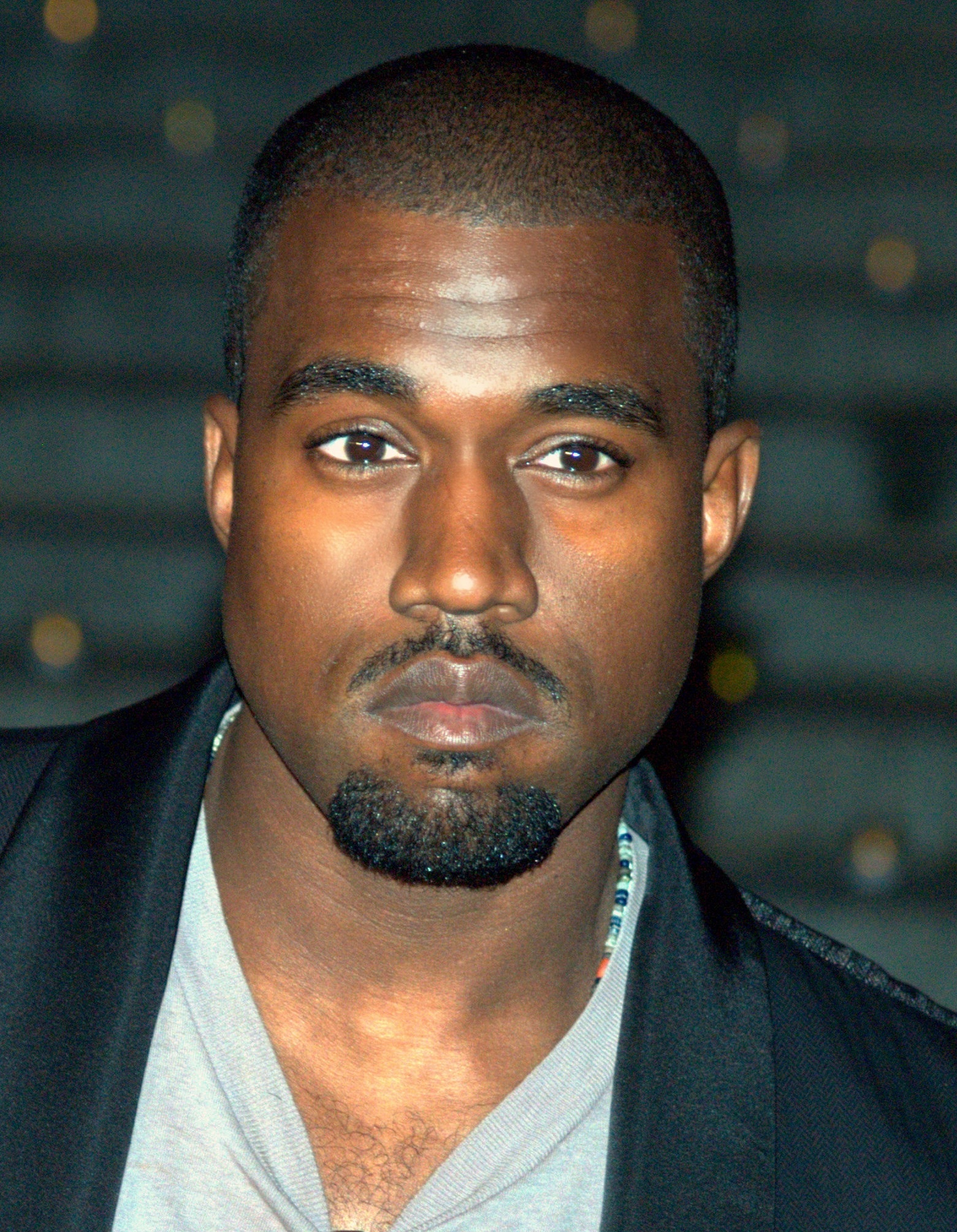

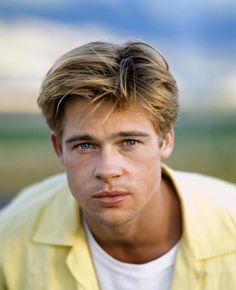

By blending the high-frequency portion of one image (which tends to dominate perception up close) with the low-frequency portion of another (which can still be seen from a distance), we get a hybrid image that leads to different interpretations at different viewing distances.
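A minimal sketch of this combination is below. For brevity it applies the Gaussian low-pass in the frequency domain with the FFT, which is a substitute technique and not necessarily how the images here were filtered. The function names and both sigma values are hypothetical, and the two inputs are assumed to be aligned grayscale images of the same shape.

```python
import numpy as np

def lowpass_fft(img, sigma):
    """Gaussian low-pass applied in the frequency domain (circular boundary)."""
    img = np.asarray(img, dtype=float)
    fy = np.fft.fftfreq(img.shape[0])[:, None]   # vertical frequency, cycles per pixel
    fx = np.fft.fftfreq(img.shape[1])[None, :]   # horizontal frequency
    # Fourier transform of a spatial Gaussian with standard deviation sigma.
    transfer = np.exp(-2.0 * (np.pi * sigma) ** 2 * (fx ** 2 + fy ** 2))
    return np.real(np.fft.ifft2(np.fft.fft2(img) * transfer))

def hybrid_image(im_high, im_low, sigma_high, sigma_low):
    """Sum of the high frequencies of im_high and the low frequencies of im_low."""
    im_high = np.asarray(im_high, dtype=float)
    high = im_high - lowpass_fft(im_high, sigma_high)   # keep fine detail only
    low = lowpass_fft(im_low, sigma_low)                # keep coarse structure only
    return low + high
```

The two cutoffs are usually tuned separately by eye: a larger `sigma_low` makes the distant interpretation blurrier, and a smaller `sigma_high` leaves more mid-frequency detail in the close-up interpretation.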

These are the frequency-domain representations of the input images (Taylor Swift and Kanye) and of the output hybrid image.
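Frequency-domain views like these are typically produced as the log magnitude of the centered 2D Fourier transform. A small sketch is below. The function name and the `eps` offset that avoids taking the log of zero are assumptions.

```python
import numpy as np

def log_spectrum(img, eps=1e-8):
    """Log magnitude of the 2D FFT with the zero frequency shifted to the center."""
    spectrum = np.fft.fftshift(np.fft.fft2(np.asarray(img, dtype=float)))
    return np.log(np.abs(spectrum) + eps)
```

In such a plot, a low-pass filtered image keeps energy only near the center, while a high-pass filtered image loses energy at the center.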

High Freq Image, Low Freq Image, High Freq Image (filtered), Low Freq Image (filtered), Hybrid

The blend below "failed" because the photos were taken at different angles and the two face shapes are quite different. (Even though the eyes line up, the chins do not.)

The images are convolved with Gaussian filters whose sigma doubles at every level, resulting in increasingly coarse images. The Laplacian stack holds the difference between each pair of consecutive levels of the Gaussian stack. I started with sigma = 1 and a kernel size of 45.
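A sketch of both stacks follows, using sigma = 1 and size = 45 as starting values. Several details are assumptions: level 0 is taken to be the unblurred image, every later level blurs the original (not the previous level), the number of levels is arbitrary, and the last Gaussian level is appended to the Laplacian stack so that the levels sum back to the original.

```python
import numpy as np

def gaussian_blur(img, sigma, size=45):
    """Separable Gaussian blur with a fixed kernel size and edge-replicated borders."""
    radius = size // 2
    x = np.arange(-radius, radius + 1)
    k = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    k /= k.sum()
    padded = np.pad(np.asarray(img, dtype=float), radius, mode="edge")

    def conv(v):
        return np.convolve(v, k, mode="valid")

    return np.apply_along_axis(conv, 0, np.apply_along_axis(conv, 1, padded))

def gaussian_stack(img, levels=5, sigma0=1.0, size=45):
    """Same-size images: the original, then blurs with sigma0, 2*sigma0, 4*sigma0, ..."""
    stack = [np.asarray(img, dtype=float)]
    for i in range(levels - 1):
        stack.append(gaussian_blur(img, sigma0 * 2 ** i, size))
    return stack

def laplacian_stack(gstack):
    """Differences of consecutive Gaussian levels, plus the coarsest level as a residual."""
    bands = [gstack[i] - gstack[i + 1] for i in range(len(gstack) - 1)]
    return bands + [gstack[-1]]
```

Unlike a pyramid, a stack never downsamples, so every level has the same shape as the input and the levels can be added or masked directly.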

Gaussian

Laplacian

I built a Laplacian stack for each of the two images. Then, I applied the Gaussian filter to the mask. (The Gaussian filter determines which nodes at each pyramid level lie within the mask, and it "softens" the edges of the mask through an effective low-pass filter.) Finally, I combined the images and the mask level by level with the following equation: result = sum(mask[i] * la[i] + (1 - mask[i]) * lb[i]), where la and lb are the Laplacian stacks of the two images and mask is the blurred mask at each level. This results in the mask being applied smoothly to the combination of both images.
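The equation translates almost directly into code. The sketch below takes the three stacks as given. The name `mask_stack` is hypothetical, and it assumes the mask values lie in [0, 1] and that all levels have the same shape.

```python
import numpy as np

def blend_stacks(la, lb, mask_stack):
    """result = sum over levels of mask[i] * la[i] + (1 - mask[i]) * lb[i]."""
    result = np.zeros_like(np.asarray(la[0], dtype=float))
    for a, b, m in zip(la, lb, mask_stack):
        # Where the mask is 1 the first image shows through, where it is 0 the second does.
        result += m * a + (1.0 - m) * b
    return result
```

Because coarse levels are combined with a heavily blurred mask and fine levels with a sharper one, low frequencies transition gradually across the seam while fine detail switches over more abruptly.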