Project 2

by Danji Liu

Sep 24

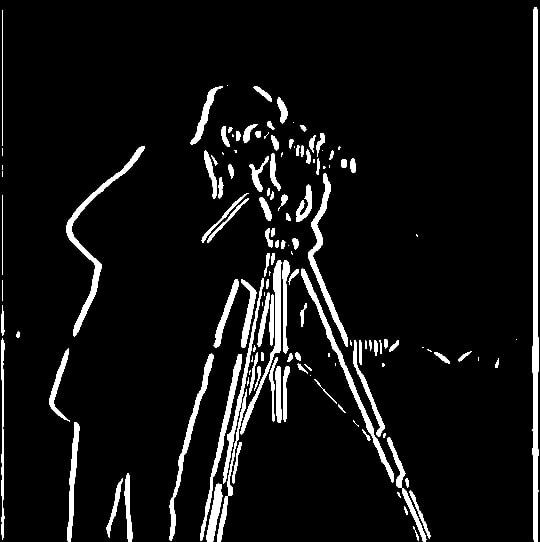

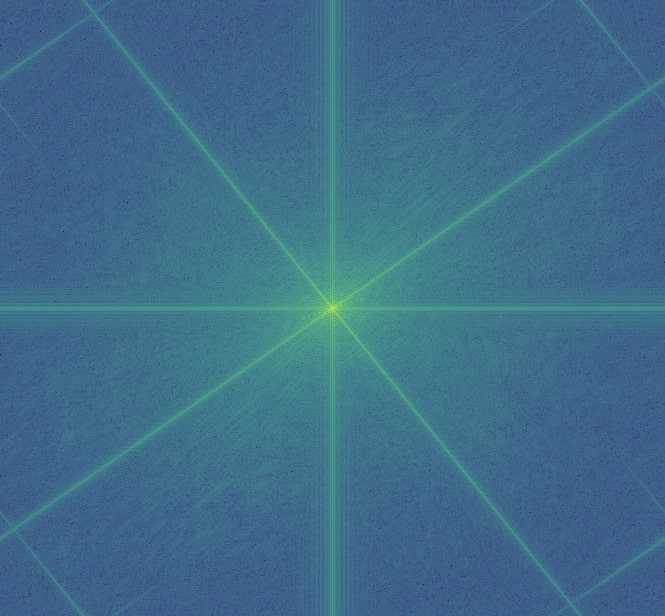

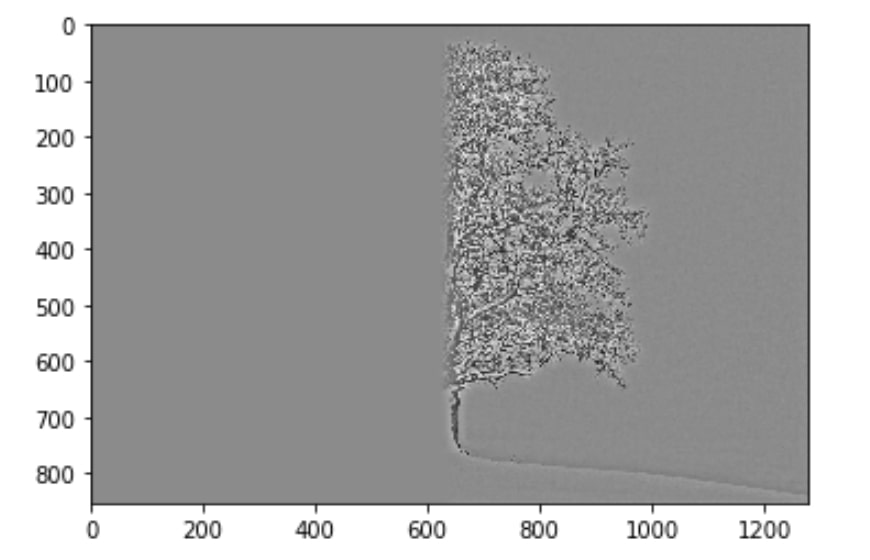

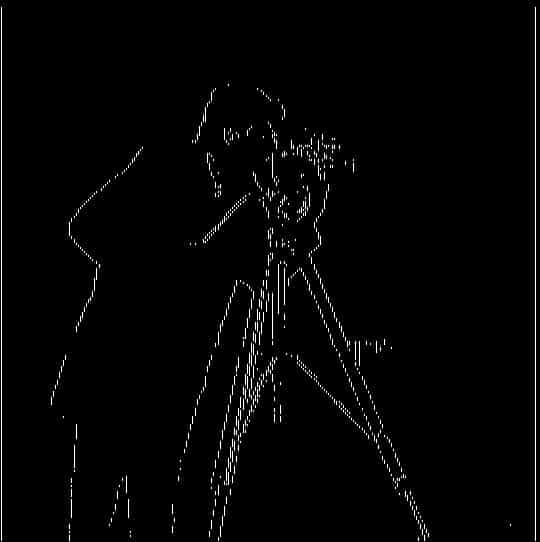

Part 1.1 gradient magnitude computation

I first turned [1, -1] into a 1 by 2 matrix for Dx and a 2 by 1 matrix for Dy. I then used convolve2d to convolve an image channel with each derivative matrix, which gives the partial derivatives with respect to x and y. Next, I computed the gradient magnitude (the edge strength) as the square root of the sum of the squared partial derivatives, sqrt((df/dx)^2 + (df/dy)^2). Finally, I experimented with the threshold and set it to 0.31 for Part 1.1. Every gradient-magnitude value below the threshold is mapped to 0, and every other value is mapped to 1.
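A minimal sketch of these steps, assuming a single grayscale channel stored as a float array in [0, 1] and `scipy.signal.convolve2d` with `mode="same"` and symmetric boundary handling (the boundary rule is my assumption; it is not stated above). The helper names `gradient_magnitude` and `binarize_edges` are hypothetical.

```python
import numpy as np
from scipy.signal import convolve2d

# Finite difference operators: Dx is a 1x2 row (differences along x, i.e. columns),
# Dy is a 2x1 column (differences along y, i.e. rows).
D_X = np.array([[1.0, -1.0]])
D_Y = np.array([[1.0], [-1.0]])

def gradient_magnitude(channel):
    """Return (df/dx, df/dy, |grad f|) for one 2-D image channel."""
    dfdx = convolve2d(channel, D_X, mode="same", boundary="symm")
    dfdy = convolve2d(channel, D_Y, mode="same", boundary="symm")
    mag = np.sqrt(dfdx ** 2 + dfdy ** 2)
    return dfdx, dfdy, mag

def binarize_edges(mag, threshold=0.31):
    """Map magnitudes below the threshold to 0 and everything else to 1."""
    return (mag >= threshold).astype(np.float64)

# Usage on a synthetic vertical step edge.
img = np.zeros((8, 8))
img[:, 4:] = 1.0
_, _, mag = gradient_magnitude(img)
edges = binarize_edges(mag)
```

On the step image each row has exactly one column where neighboring pixels differ, so each row of `edges` contains a single 1.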

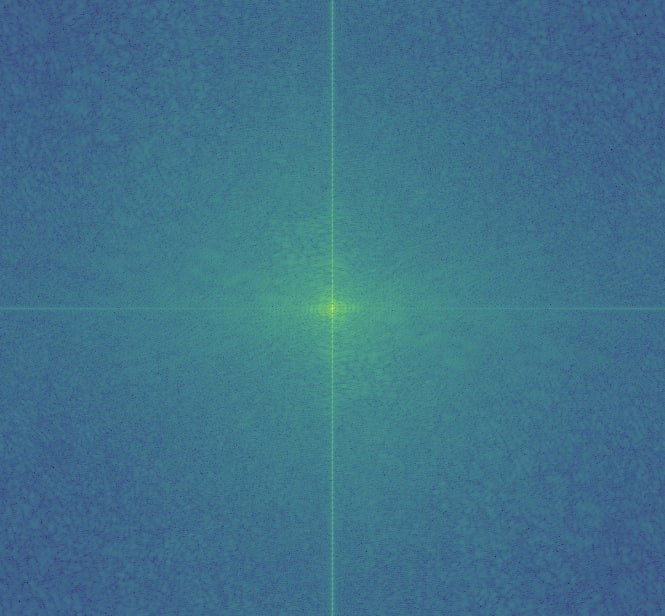

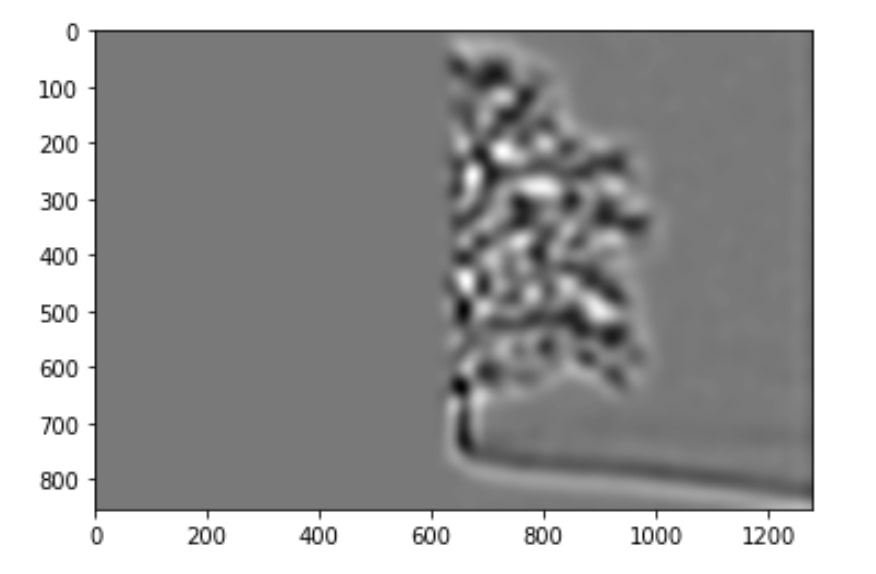

Part 1.2 What differences do you see?

Compared with Part 1.1, the edges are more prominent and the noise is significantly reduced in the final image. This is because I applied a Gaussian filter to the original image first. The Gaussian acts as a low-pass filter and attenuates the high-frequency content of the image, so random noise no longer shows up in the final edge image.

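I tried two approaches: taking derivatives of the Gaussian-filtered image, and building derivative of Gaussian (DoG) filters by convolving the Gaussian with Dx and Dy and then applying them to the original image. The second approach is faster than the first. Both show the same results, because convolution is associative: (f * G) * Dx = f * (G * Dx).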
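A hedged sketch that checks the equivalence numerically. It builds a normalized 2-D Gaussian by hand as an outer product; the helper `gaussian_kernel` and the size 9 / sigma 1.5 values are hypothetical, not the ones from my runs. In `"full"` mode the two approaches agree to floating-point precision; with `mode="same"` plus a boundary rule they can differ slightly near the image border.

```python
import numpy as np
from scipy.signal import convolve2d

def gaussian_kernel(size, sigma):
    """2-D Gaussian as the outer product of a normalized 1-D Gaussian."""
    ax = np.arange(size) - (size - 1) / 2.0
    g1d = np.exp(-(ax ** 2) / (2.0 * sigma ** 2))
    g1d /= g1d.sum()
    return np.outer(g1d, g1d)

D_X = np.array([[1.0, -1.0]])
D_Y = np.array([[1.0], [-1.0]])

G = gaussian_kernel(9, 1.5)  # hypothetical size and sigma

# Derivative of Gaussian filters: fold the derivative into the Gaussian once.
DoG_X = convolve2d(G, D_X)  # 'full' mode, shape (9, 10)
DoG_Y = convolve2d(G, D_Y)  # 'full' mode, shape (10, 9)

rng = np.random.default_rng(0)
img = rng.random((32, 32))

# Approach 1: blur first, then differentiate.
dx_two_step = convolve2d(convolve2d(img, G), D_X)
# Approach 2: a single convolution with the DoG filter.
dx_one_step = convolve2d(img, DoG_X)
```

Because the DoG filters can be precomputed once, approach 2 needs only one convolution per direction on each image.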

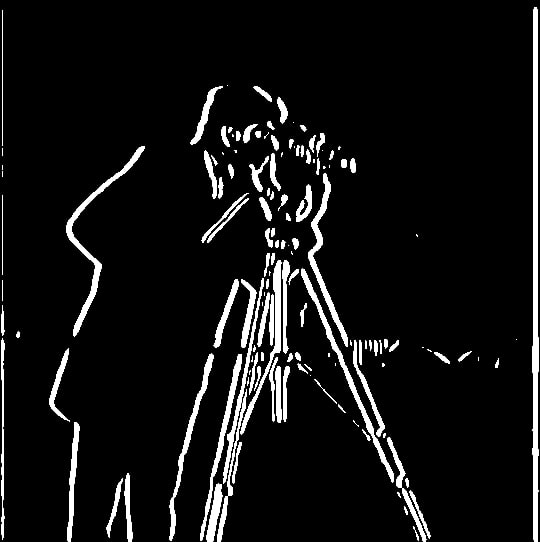

Part 1.3 Straighten the image

Results

facade.jpg

original vs straightened

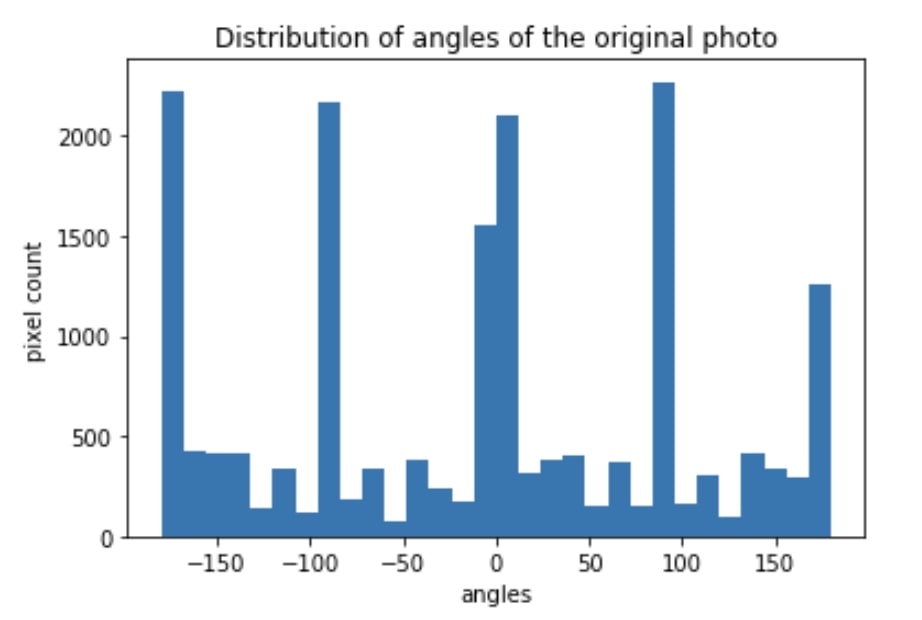

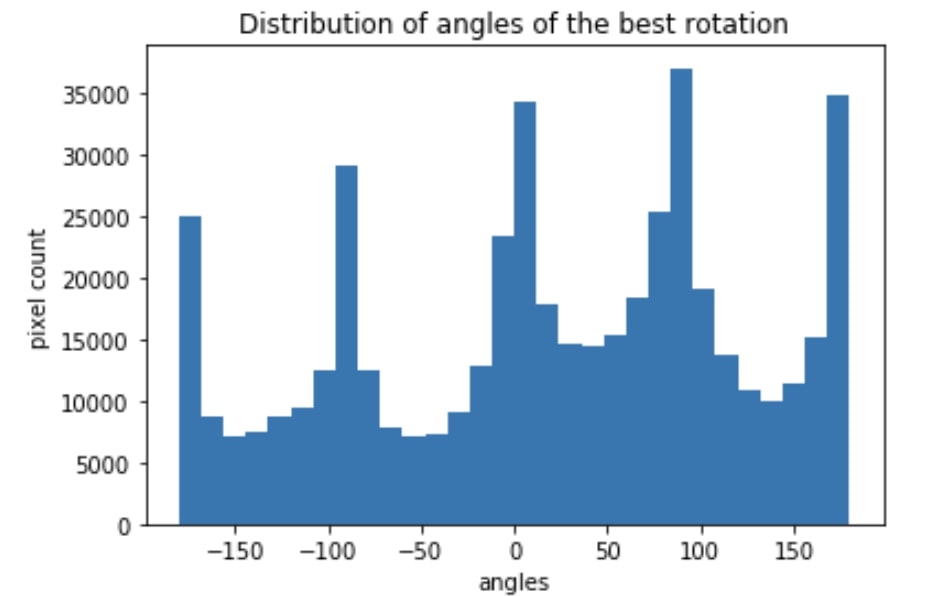

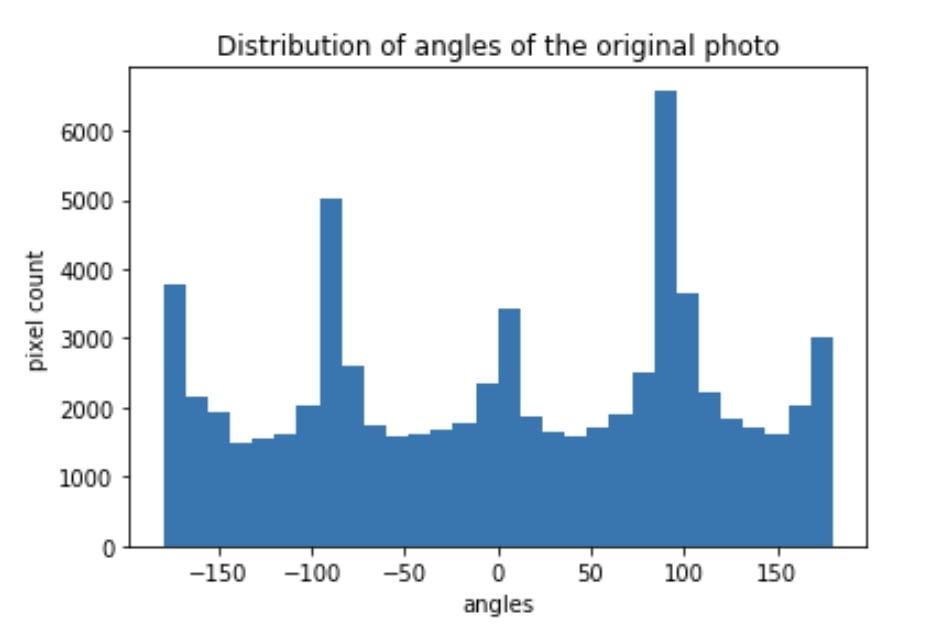

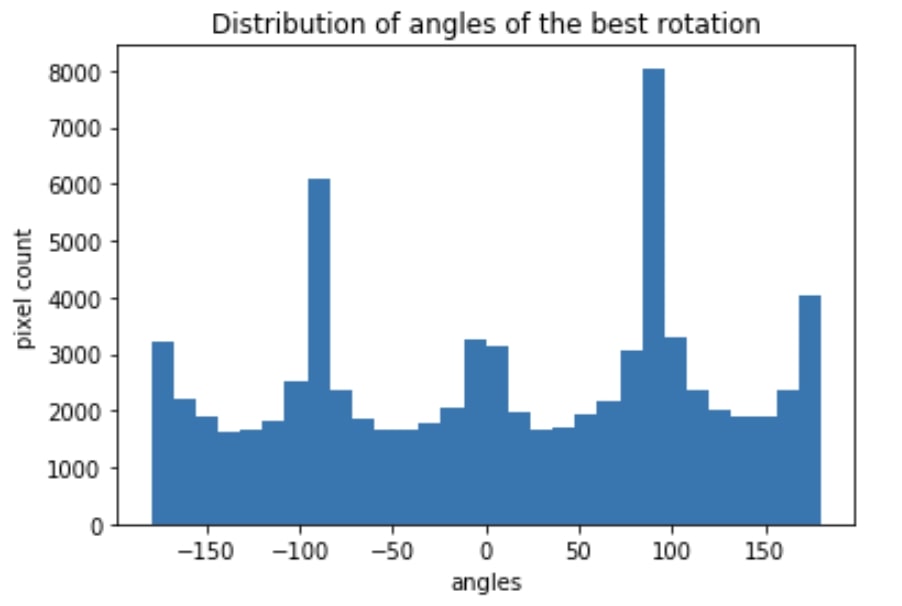

orientation histogram distribution

building.jpg

original vs straightened

orientation histogram distribution

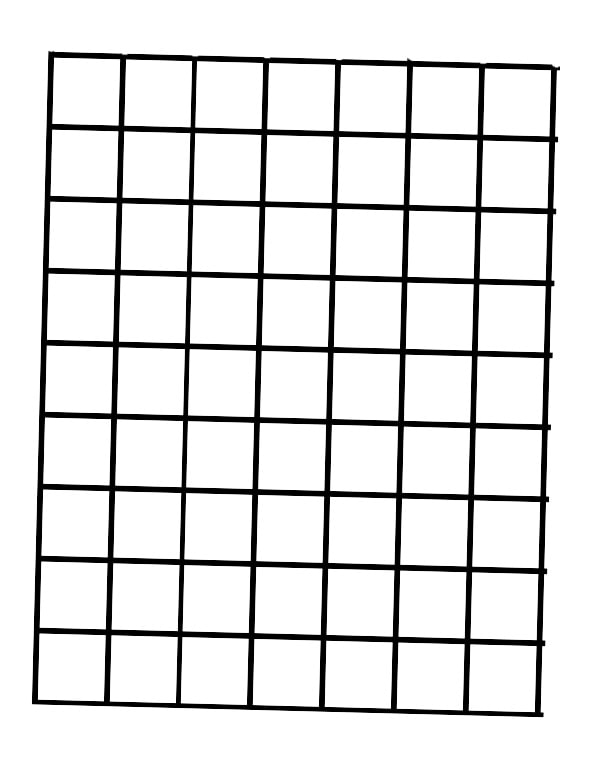

dog.jpg - failure case

original vs straightened

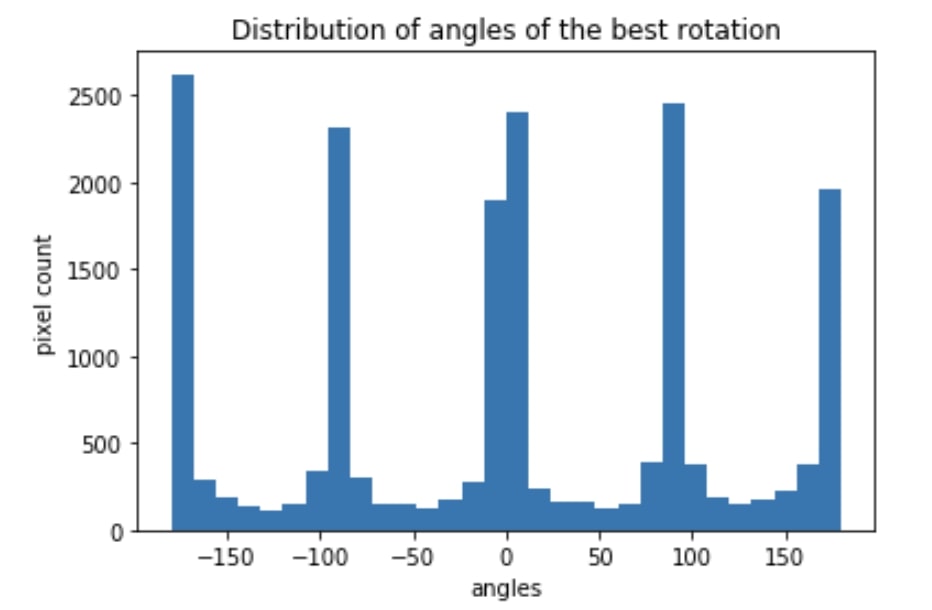

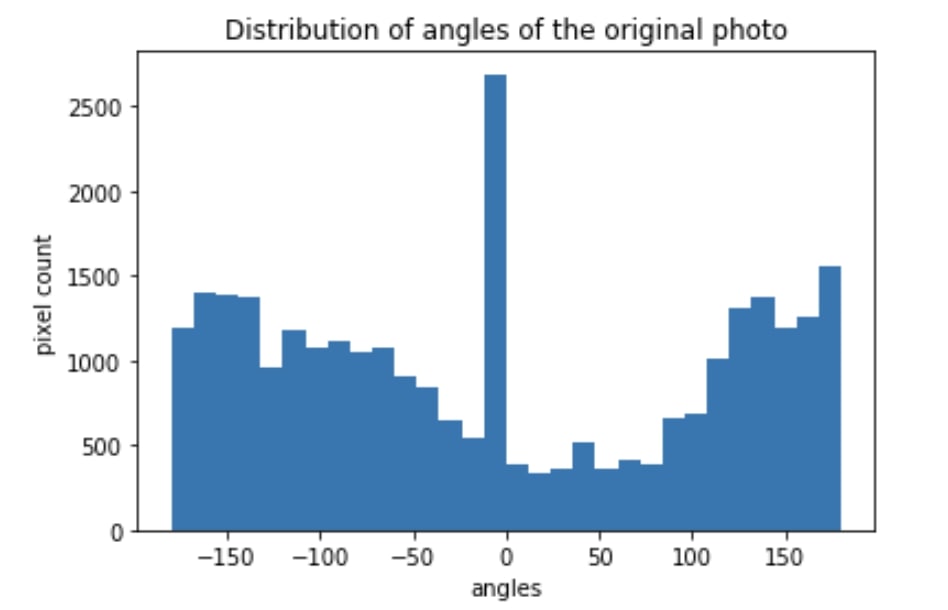

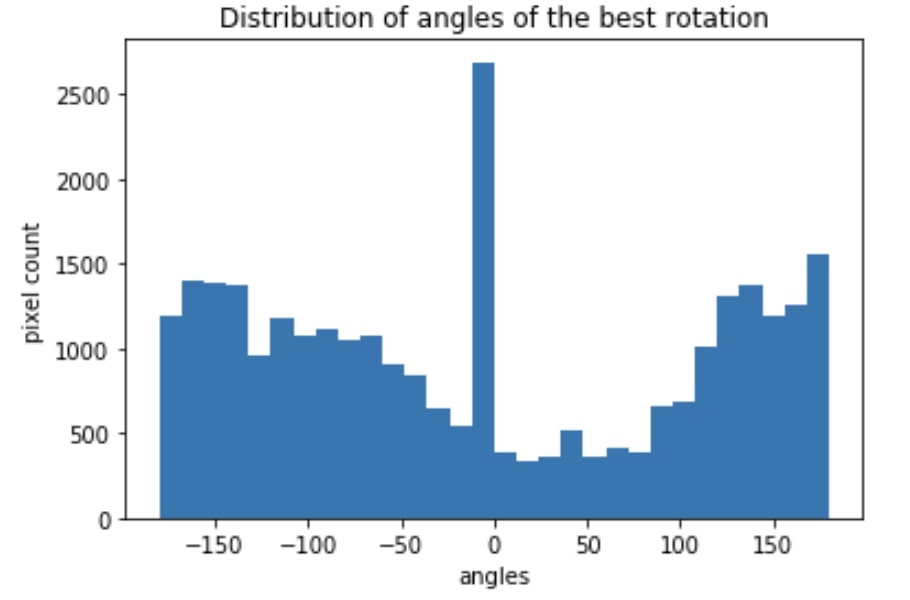

orientation histogram distribution

The problem is that much of the background is plain white sky, whose intensity is nearly uniform. This region carries little edge information, and it heavily distorts the orientation calculation.
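The writeup shows only the results for straightening, so here is a hedged sketch of one common way to score a candidate rotation with gradient orientations. It assumes a grayscale float image in [0, 1]; `straightness_score`, `best_rotation`, `mag_thresh`, `tol_deg`, and `crop_frac` are hypothetical names and values, not necessarily what I used. Derivatives come from `np.gradient` and rotation from `scipy.ndimage.rotate`. The magnitude threshold decides which pixels vote, which is where a large uniform region like the sky in dog.jpg enters the calculation.

```python
import numpy as np
from scipy import ndimage

def straightness_score(gray, mag_thresh=0.1, tol_deg=2.0):
    """Fraction of strong-gradient pixels whose orientation lies within
    tol_deg of horizontal or vertical (0, +-90, +-180 degrees)."""
    dy, dx = np.gradient(gray)  # derivatives along rows (y) and columns (x)
    mag = np.hypot(dx, dy)
    strong = mag > mag_thresh  # weak gradients (flat regions) do not vote
    if not np.any(strong):
        return 0.0
    angles = np.degrees(np.arctan2(dy[strong], dx[strong]))
    # Distance from the nearest multiple of 90 degrees, in [0, 45].
    dev = np.abs((angles + 45.0) % 90.0 - 45.0)
    return float(np.mean(dev <= tol_deg))

def best_rotation(gray, candidates, crop_frac=0.5):
    """Rotate by each candidate angle and keep the highest-scoring one.
    Only a central crop is scored, so the blank corners introduced by
    rotation do not add spurious edges."""
    h, w = gray.shape
    ch, cw = int(h * crop_frac / 2), int(w * crop_frac / 2)
    scores = []
    for angle in candidates:
        rotated = ndimage.rotate(gray, angle, reshape=False, order=1)
        center = rotated[h // 2 - ch : h // 2 + ch, w // 2 - cw : w // 2 + cw]
        scores.append(straightness_score(center))
    return candidates[int(np.argmax(scores))], scores
```

For example, `best_rotation(gray, list(range(-10, 11)))` tries every whole-degree rotation from -10 to 10 and returns the best angle together with all scores, which can be plotted as an orientation histogram comparison.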

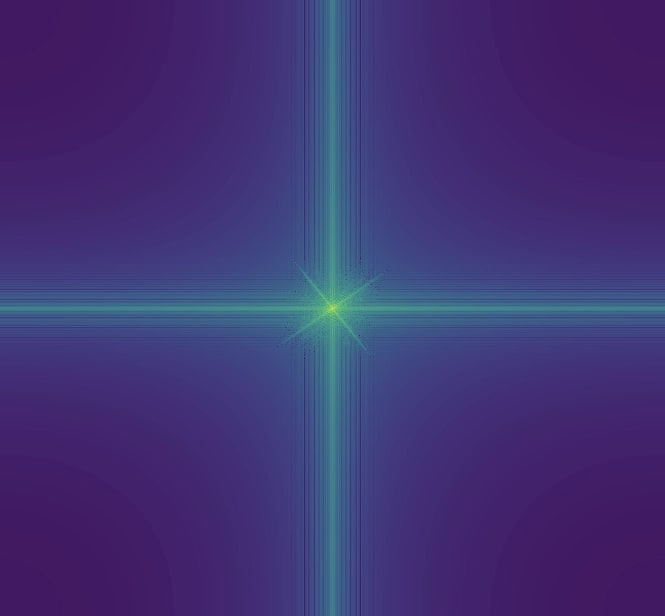

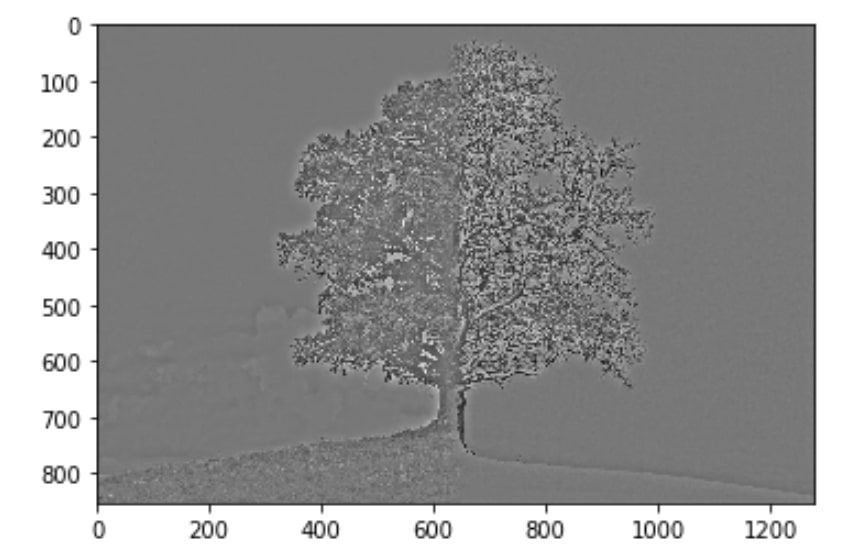

Part 2.1 Unsharp Masking

The given image: taj.jpg

Blur the image and then sharpen it

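The result shows that details are lost when the image is blurred. Although I then sharpened the blurred image, the result did not recover the information in the original picture: blurring removes high frequencies, and unsharp masking can only amplify the high frequencies that are still present.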
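For reference, a hedged sketch of unsharp masking on one float channel: add back a scaled copy of the high frequencies, f + alpha * (f - G * f). The same operation can be folded into a single filter, (1 + alpha) * e - alpha * G, where e is the unit impulse. The names `unsharp_mask` and `unsharp_kernel` and the defaults alpha=1.0, size=9, sigma=2.0 are hypothetical, not the values used for taj.jpg.

```python
import numpy as np
from scipy.signal import convolve2d

def gaussian_kernel(size, sigma):
    """2-D Gaussian as the outer product of a normalized 1-D Gaussian."""
    ax = np.arange(size) - (size - 1) / 2.0
    g1d = np.exp(-(ax ** 2) / (2.0 * sigma ** 2))
    g1d /= g1d.sum()
    return np.outer(g1d, g1d)

def unsharp_mask(channel, alpha=1.0, size=9, sigma=2.0):
    """Sharpen one channel: f + alpha * (f - G*f)."""
    blurred = convolve2d(channel, gaussian_kernel(size, sigma),
                         mode="same", boundary="symm")
    high_freq = channel - blurred
    return channel + alpha * high_freq

def unsharp_kernel(alpha=1.0, size=9, sigma=2.0):
    """Equivalent single filter: (1 + alpha) * impulse - alpha * G (size must be odd)."""
    impulse = np.zeros((size, size))
    impulse[size // 2, size // 2] = 1.0
    return (1.0 + alpha) * impulse - alpha * gaussian_kernel(size, sigma)
```

The kernel sums to 1, so flat regions are unchanged, while edges overshoot on both sides; clip to [0, 1] before saving.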

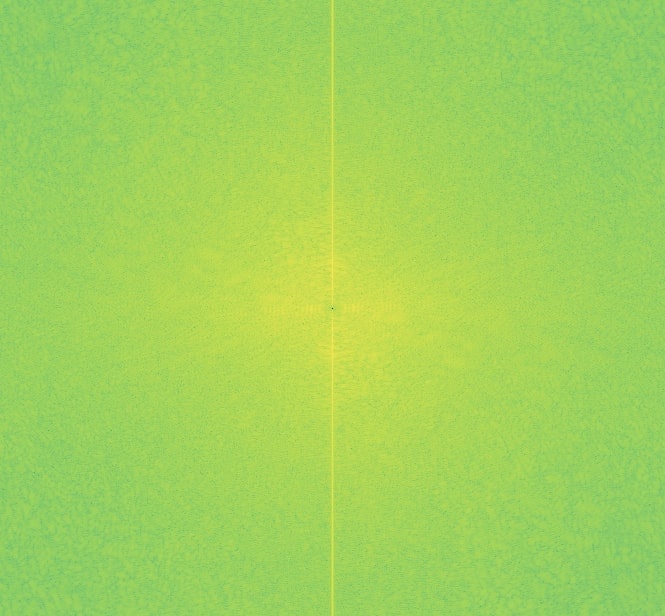

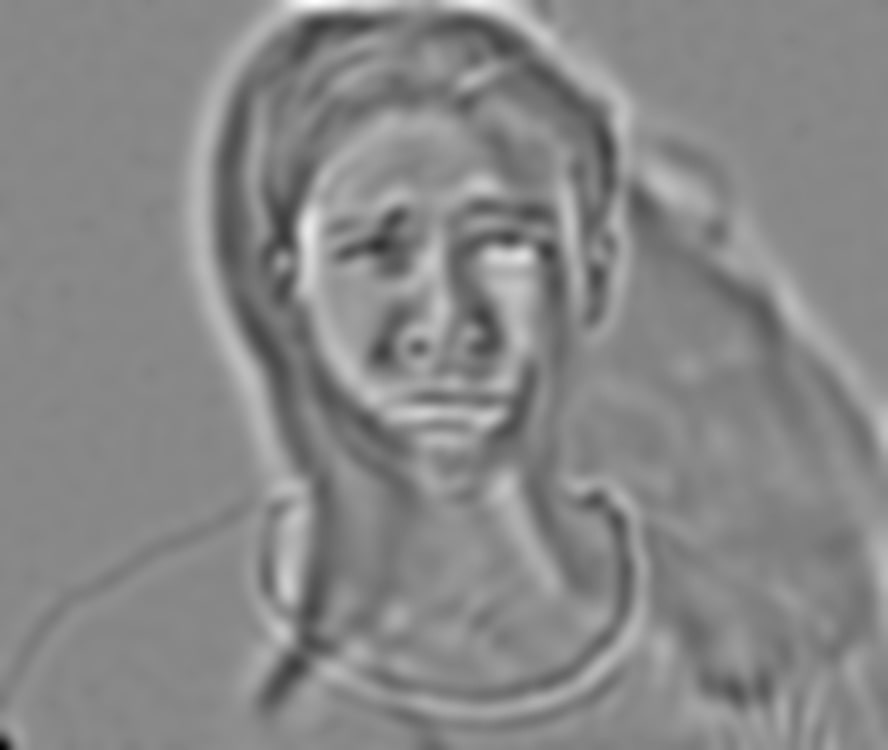

Part 2.2 Hybrid

The parameters for the Gaussian filters are:

low-pass filter: size: 61, sigma: 10

high-pass filter: size: 51, sigma: 1
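A hedged sketch of how these parameters combine, assuming both inputs are already aligned, the same shape, and processed one channel at a time as float arrays. I assume the high-pass result is formed as the image minus its Gaussian blur (size 51, sigma 1); `low_pass` and `hybrid` are hypothetical names.

```python
import numpy as np
from scipy.signal import convolve2d

def gaussian_kernel(size, sigma):
    """2-D Gaussian as the outer product of a normalized 1-D Gaussian."""
    ax = np.arange(size) - (size - 1) / 2.0
    g1d = np.exp(-(ax ** 2) / (2.0 * sigma ** 2))
    g1d /= g1d.sum()
    return np.outer(g1d, g1d)

def low_pass(channel, size, sigma):
    """Blur one channel with a Gaussian of the given size and sigma."""
    return convolve2d(channel, gaussian_kernel(size, sigma),
                      mode="same", boundary="symm")

def hybrid(im_low, im_high, low=(61, 10.0), high=(51, 1.0)):
    """Low frequencies of im_low plus high frequencies of im_high."""
    low_part = low_pass(im_low, *low)
    high_part = im_high - low_pass(im_high, *high)
    return low_part + high_part
```

The sum can fall slightly outside [0, 1], so something like `np.clip(hybrid(a, b), 0, 1)` is needed before saving.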

original

hybrid image

my example - original

Hybrid - experiments on color

Using full color for the high-pass image and slightly desaturated color for the low-pass image works best.
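One simple way to get "slightly desaturated" color is to blend each pixel toward its grayscale value. This is a hedged sketch, not necessarily how my images were desaturated: `desaturate` is a hypothetical helper, and the BT.601 luma weights (0.299, 0.587, 0.114) are an assumed choice of grayscale conversion.

```python
import numpy as np

def desaturate(rgb, amount):
    """Blend an H x W x 3 float image toward its grayscale version.
    amount=0 keeps full color; amount=1 gives pure gray."""
    gray = rgb @ np.array([0.299, 0.587, 0.114])  # luma per pixel, shape H x W
    gray3 = np.repeat(gray[..., None], 3, axis=-1)
    return (1.0 - amount) * rgb + amount * gray3
```

The desaturated low-pass input and the full-color high-pass input would then be filtered channel by channel.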

all colors vs. high pass with little colors vs. low pass with little colors

another successful example:

originals

hybrid

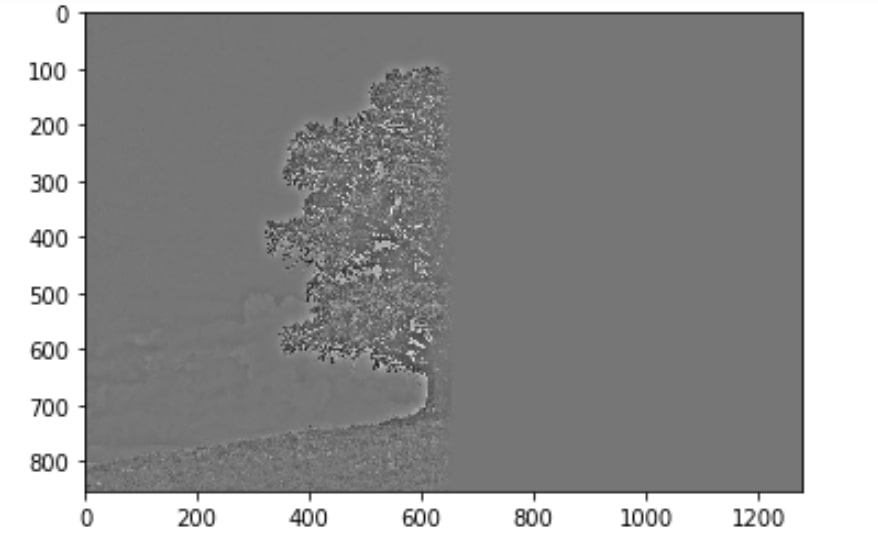

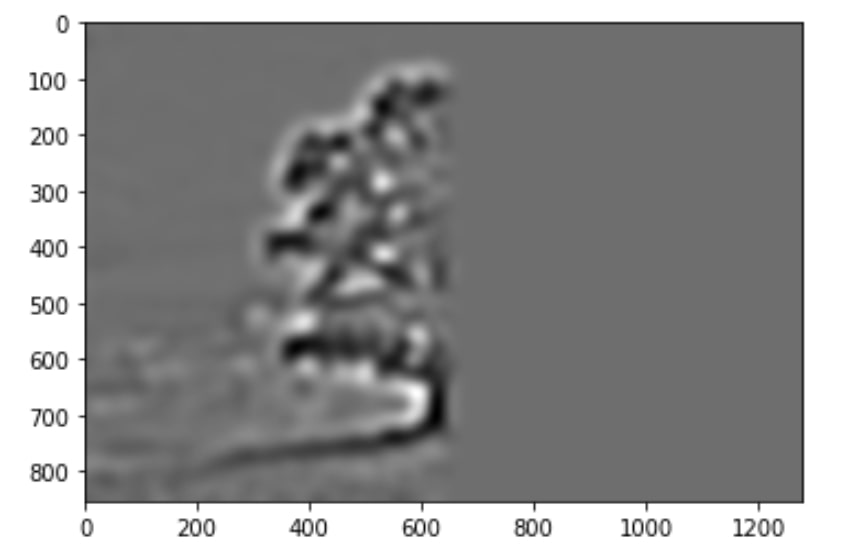

One failed example:

original photos

hybrid

It didn't work as well because the texture of the summer tree covers all the details of the winter tree. The texture of the grass also blends in awkwardly with the image.

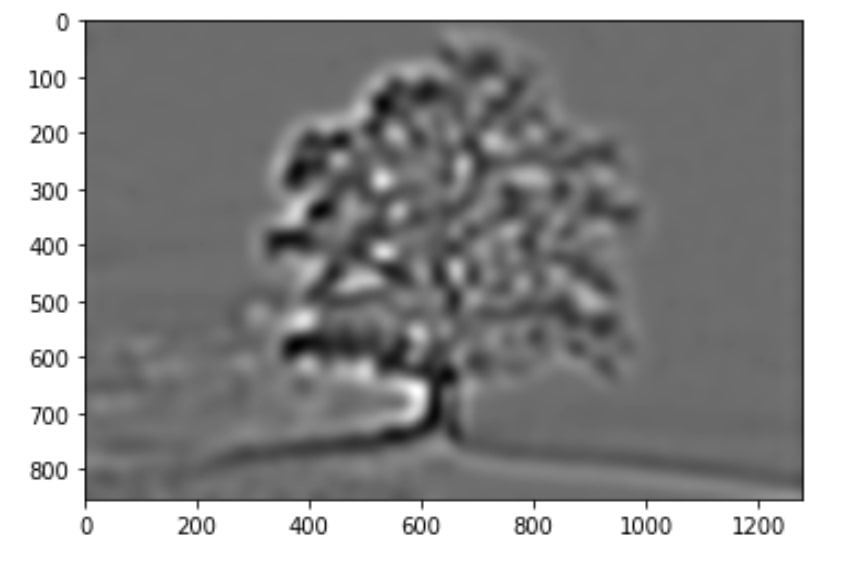

Part 2.3 Stacks

Mona Lisa
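A hedged sketch of Gaussian and Laplacian stacks for one float channel. Unlike pyramids, stacks never downsample, so every level keeps the input size. The names and the defaults (5 levels, kernel size 25, sigma 2) are hypothetical. Each Laplacian level is the difference of two consecutive Gaussian levels, and the last Gaussian level is kept as the residual so the stack sums back to the input.

```python
import numpy as np
from scipy.signal import convolve2d

def gaussian_kernel(size, sigma):
    """2-D Gaussian as the outer product of a normalized 1-D Gaussian."""
    ax = np.arange(size) - (size - 1) / 2.0
    g1d = np.exp(-(ax ** 2) / (2.0 * sigma ** 2))
    g1d /= g1d.sum()
    return np.outer(g1d, g1d)

def gaussian_stack(channel, levels=5, size=25, sigma=2.0):
    """Repeatedly blur without downsampling; level 0 is the input itself."""
    G = gaussian_kernel(size, sigma)
    stack = [channel]
    for _ in range(levels - 1):
        stack.append(convolve2d(stack[-1], G, mode="same", boundary="symm"))
    return stack

def laplacian_stack(channel, levels=5, size=25, sigma=2.0):
    """Band-pass levels g[i] - g[i+1], plus the last Gaussian level as residual."""
    g = gaussian_stack(channel, levels, size, sigma)
    return [g[i] - g[i + 1] for i in range(levels - 1)] + [g[-1]]
```

For display, the band-pass levels contain negative values and need to be rescaled (for example, min-max normalized) to [0, 1].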

Part 2.4 Multiresolution Blending
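A hedged sketch of blending with stacks, for one float channel: at every level, the Gaussian-blurred mask weights the two Laplacian levels, and the weighted levels are summed. I assume the mask is a float array in [0, 1] (a straight half mask or an irregular one) and that it is blurred with the same Gaussian as the images; `multires_blend` and its defaults are hypothetical.

```python
import numpy as np
from scipy.signal import convolve2d

def gaussian_kernel(size, sigma):
    """2-D Gaussian as the outer product of a normalized 1-D Gaussian."""
    ax = np.arange(size) - (size - 1) / 2.0
    g1d = np.exp(-(ax ** 2) / (2.0 * sigma ** 2))
    g1d /= g1d.sum()
    return np.outer(g1d, g1d)

def _gaussian_stack(x, levels, G):
    """Repeatedly blur x without downsampling."""
    stack = [x]
    for _ in range(levels - 1):
        stack.append(convolve2d(stack[-1], G, mode="same", boundary="symm"))
    return stack

def multires_blend(im_a, im_b, mask, levels=5, size=25, sigma=2.0):
    """Blend im_a (where mask is 1) with im_b (where mask is 0), level by level."""
    G = gaussian_kernel(size, sigma)
    ga, gb, gm = (_gaussian_stack(x, levels, G) for x in (im_a, im_b, mask))
    # Coarsest level: blend the residual Gaussian levels.
    out = gm[-1] * ga[-1] + (1.0 - gm[-1]) * gb[-1]
    for i in range(levels - 1):
        la = ga[i] - ga[i + 1]  # Laplacian level of im_a
        lb = gb[i] - gb[i + 1]  # Laplacian level of im_b
        out += gm[i] * la + (1.0 - gm[i]) * lb
    return out
```

For color images, the function is applied per channel with the same mask.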

original images

blended image

stacks

original photos

blended

irregular mask

original photos

After calling align_image

final result with some cropping