Project 3 - Face Morphing

By Myles Domingo

Overview

In this project, we explore how we can define corresponding features across multiple images, and use them to align and create unique combinations. We use triangulation and affine transformations to create face morphing sequences, find the average face of a population, and even create face filters.

Defining Correspondences

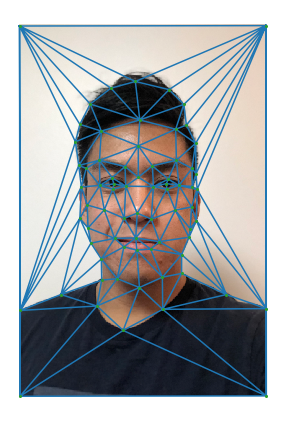

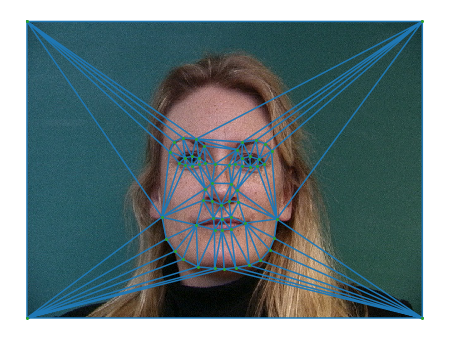

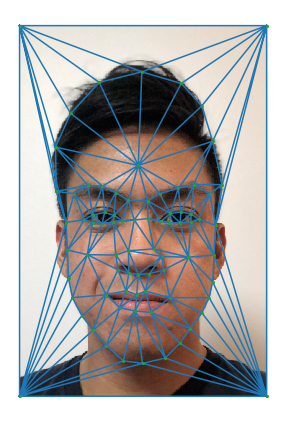

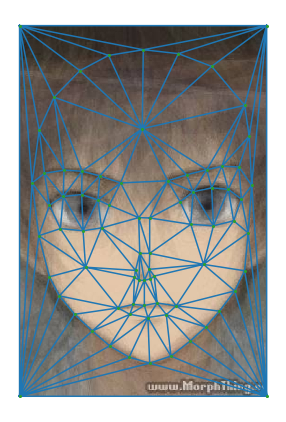

The first part of creating the face morphing sequences is to define a set of points for our triangulation mesh. I planned out which points to select in an external tool, then captured facial features (eyes, ears, mouth, nose, etc.) as points by clicking on each image via ginput().

For this mesh, I used 65 points to define facial features. I averaged the coordinates of points across both images, and calculated a Delaunay triangulation that would give me a map of connected vertices for our triangulation mesh.
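As a minimal sketch of the averaging and triangulation step, assuming the clicked points are stored as two (N, 2) arrays in matching order and that SciPy's `Delaunay` is used for the mesh (the function name `mean_shape_triangulation` is hypothetical):

```python
import numpy as np
from scipy.spatial import Delaunay

def mean_shape_triangulation(pts_a, pts_b):
    """Average two (N, 2) point sets and triangulate the averaged points."""
    pts_a = np.asarray(pts_a, dtype=float)
    pts_b = np.asarray(pts_b, dtype=float)
    mean_pts = (pts_a + pts_b) / 2.0
    # Each row of simplices holds three indices into mean_pts (one triangle).
    tri = Delaunay(mean_pts)
    return mean_pts, tri.simplices
```

Because the triangles are stored as point indices, the same index triples can be reused on either face's own points, so both images share one mesh topology.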

Triangulation of myself, 65 points

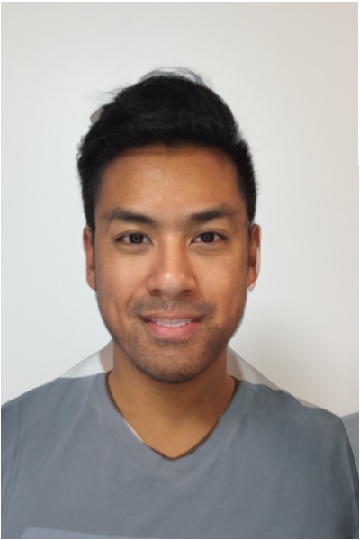

Triangulation of stranger, 65 points

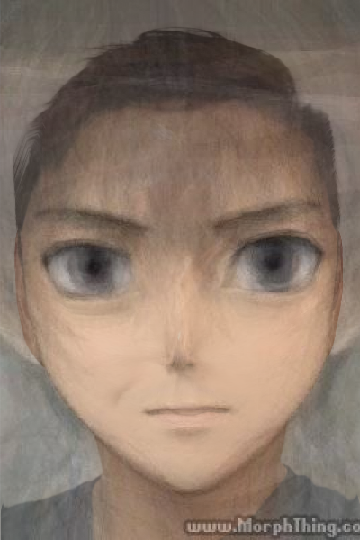

Computing the Mid-Way Face

Since the face shapes in our two input images are very different, we cannot simply cross-dissolve the two images; we first have to warp both faces into a common shape. To do this, we take the triangulation of the averaged points, which represents our mean face shape.

For each triangle in our mean face shape, we calculate an affine transformation matrix A that inverse-warps it onto the corresponding triangle in each source image. We then interpolate color values from each source image by calling SciPy's RectBivariateSpline on each color channel (R, G, B). Lastly, with colors sampled from both images at every pixel of the mean shape, we average the two, effectively creating a cross-dissolve that is aligned to our mean face shape.
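Here is a rough sketch of the two building blocks of the inverse warp: solving for the affine matrix of one triangle pair, and sampling a color channel at non-integer positions. The helper names `compute_affine` and `sample_channel` are hypothetical, and the linear spline order (`kx=1, ky=1`) is an assumption, since the spline settings used in the project are not stated.

```python
import numpy as np
from scipy.interpolate import RectBivariateSpline

def compute_affine(tri_from, tri_to):
    """Return the 3x3 matrix A with A @ [x, y, 1] mapping tri_from onto tri_to."""
    # Columns are the triangle vertices in homogeneous coordinates.
    src = np.vstack([np.asarray(tri_from, dtype=float).T, np.ones(3)])
    dst = np.vstack([np.asarray(tri_to, dtype=float).T, np.ones(3)])
    return dst @ np.linalg.inv(src)

def sample_channel(channel, xs, ys):
    """Sample one 2-D color channel at float (x, y) = (column, row) positions."""
    rows = np.arange(channel.shape[0])
    cols = np.arange(channel.shape[1])
    # Assumption: order-1 splines, i.e. bilinear interpolation.
    spline = RectBivariateSpline(rows, cols, channel, kx=1, ky=1)
    return spline.ev(ys, xs)
```

For an inverse warp, `tri_from` is the triangle in the mean shape and `tri_to` is the matching triangle in the source image: every pixel inside the mean-shape triangle is pushed through A to find where to read its color from.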

I decided to take someone who could feasibly look like me. It’s actually frightening how alike we look. This is what it looks like —

Average Face, 65 points

The Morph Sequence

To generate the morph sequence, we perform a similar process: we align both faces to an intermediate shape and cross-dissolve the interpolated colors. Instead of a plain average, we use two weights, a and b = 1 - a, with a in [0, 1], and apply them to the color values of each image. We create 50 frames this way, starting with one face and transitioning to the other by slowly increasing a.
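The weighting scheme can be sketched as follows. This assumes the same weight a drives both the intermediate shape and the color blend, and that a increases linearly across the 50 frames; both are assumptions, and the function names are hypothetical.

```python
import numpy as np

def morph_schedule(pts_a, pts_b, n_frames=50):
    """Yield (a, intermediate_shape) for each frame, with a going from 0 to 1."""
    pts_a = np.asarray(pts_a, dtype=float)
    pts_b = np.asarray(pts_b, dtype=float)
    for a in np.linspace(0.0, 1.0, n_frames):
        b = 1.0 - a
        yield a, b * pts_a + a * pts_b

def cross_dissolve(warped_a, warped_b, a):
    """Blend two images that were already warped to the same shape."""
    return (1.0 - a) * warped_a + a * warped_b
```

At a = 0 the frame is entirely the first face, at a = 1 entirely the second, and a = 0.5 reproduces the mid-way face.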

I had some trouble speeding up my code; what helped a lot was parallelizing the computation of each frame, which reduced the overall runtime of rendering the animation. Lastly, to put this all together, I used an external tool, ezgif, to assemble the frames into a final morph. Here’s what that looks like:

The Mean Face of the Population

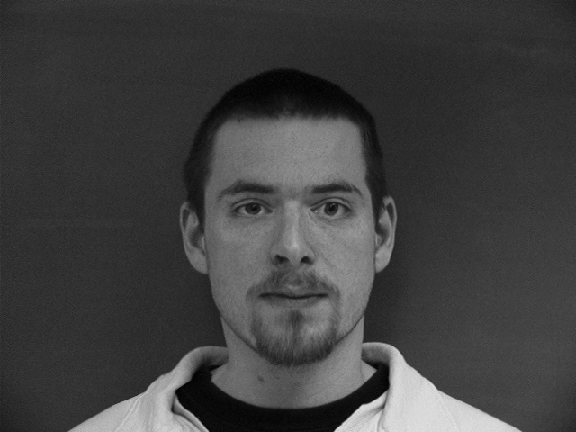

I used the Danes dataset as my population because it had annotated points that were relatively simple to parse. I used a subset of the population: the images with neutral facial expressions.

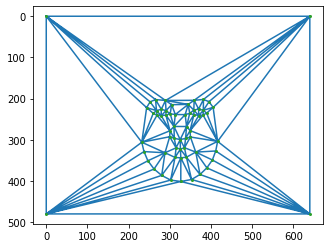

Average Face Triangulation

Triangulation Applied

First, I compute the average shape of the entire population by reading all the corresponding points and taking the mean, similar to the process in part 1. To morph each image into the average shape, I take its corresponding points and calculate an affine transformation matrix for each triangle in the mesh. Here are some examples.

By cross-dissolving all the images in the population warped to the average shape, we are able to find the true average face of the population. I did a separate calculation for males and females.
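A minimal sketch of the two averaging steps, assuming the annotated points are stacked into one array of shape (num_faces, num_points, 2) and that every image has already been warped to the average shape (the function names are hypothetical):

```python
import numpy as np

def mean_shape(all_points):
    """Average (num_faces, num_points, 2) landmarks into one (num_points, 2) shape."""
    return np.mean(np.asarray(all_points, dtype=float), axis=0)

def mean_face(warped_images):
    """Equal-weight cross-dissolve of images already warped to the mean shape."""
    return np.mean(np.stack(warped_images).astype(float), axis=0)
```

Running both functions on only the male or only the female subset gives the separate averages.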

Average Danes Female

Average Danes Male

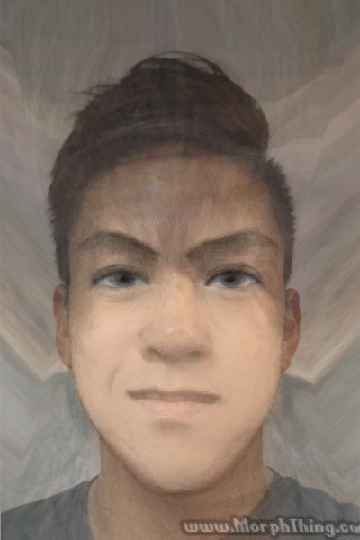

I also took myself and morphed myself into the average face of the Danes population, and vice versa. I redefined my triangulation using the same image of myself at different dimensions and with a different set of points than in part 1.

Caricatures

I can extrapolate from the mean Dane shape by applying an increasingly large weight, which lets me create caricatures of my face. I created 8 iterations with weights spread across [0.25, 2].
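One common way to write this extrapolation is to scale the difference between my shape and the mean shape. The sketch below assumes that formulation and assumes the 8 weights are evenly spaced; neither is confirmed above, and the names are hypothetical.

```python
import numpy as np

# Assumption: 8 evenly spaced weights covering [0.25, 2].
CARICATURE_WEIGHTS = np.linspace(0.25, 2.0, 8)

def caricature_shape(my_pts, mean_pts, alpha):
    """Move away from (alpha > 1) or toward (alpha < 1) the mean shape."""
    my_pts = np.asarray(my_pts, dtype=float)
    mean_pts = np.asarray(mean_pts, dtype=float)
    return mean_pts + alpha * (my_pts - mean_pts)
```

With alpha = 1 the result is my own shape; weights above 1 exaggerate whatever makes my face differ from the mean, and weights below 1 pull it toward the mean.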

Extra: Bells and Whistles, Anime Filter

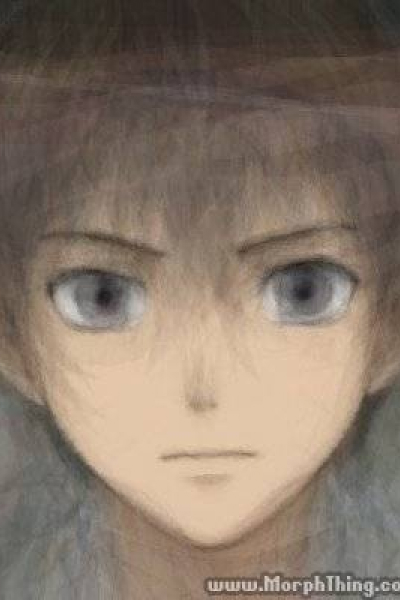

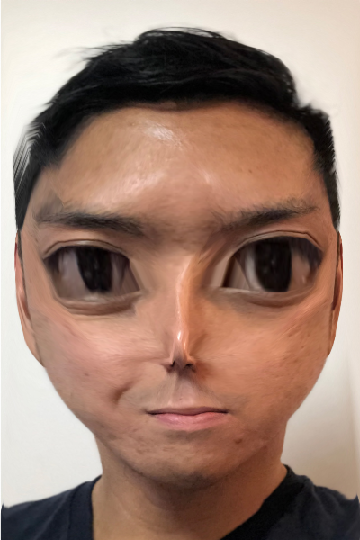

I wanted to create an anime filter that would turn myself into an anime character. Here are some inspirational examples.

First, I found an image of an average male anime character from the early 2000s, and created a triangulation mesh.

I tried morphing my face to have the shape of an anime male, and then cross dissolving it. It looked horrific, and was the opposite of what I was going for.

I then tried the other way around, morphing the anime face into my face shape. It worked fairly well and was not an abomination; however, I still looked like myself, with some colors changed.

I realized what was missing were the “distinguishable” facial features of an anime male: big eyes, small nose, small mouth. I still wanted to keep my face shape, but have these features portrayed on me. To do this, I sliced the set of points on the anime male's face into separate regions. I created a weighted triangulation that would give greater emphasis to the features I wanted. This produced a face mesh that combined different features, retaining my face shape while having an anime face. When cross-dissolving, I now looked like this:

Note: Look how the eyes are different!

Very cool, one step closer to escaping reality
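The region-weighted mesh described above could be sketched like this, assuming each region is a list of point indices with its own blend weight between my shape (0) and the anime shape (1). The region names, indices, and weights shown in the test-style usage are made up for illustration.

```python
import numpy as np

def blend_shapes_by_region(my_pts, anime_pts, regions, region_weights, default=0.0):
    """Blend two (N, 2) shapes with a per-region weight toward the anime shape."""
    my_pts = np.asarray(my_pts, dtype=float)
    anime_pts = np.asarray(anime_pts, dtype=float)
    # Points outside every region keep the default weight (my own shape at 0.0).
    w = np.full(len(my_pts), float(default))
    for name, idx in regions.items():
        w[idx] = region_weights.get(name, default)
    return (1.0 - w)[:, None] * my_pts + w[:, None] * anime_pts
```

For example, calling it with `regions={"eyes": [0, 1]}` and `region_weights={"eyes": 1.0}` would take the eye points entirely from the anime face while leaving the outline points on my own shape.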

I also added some sharpening by repurposing some code from project 2, creating sharper, more anime-like edges around my face to give better contrast. Overall, I’m really happy with the results!

Sharpened Face
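The project 2 code is not shown here, so as a stand-in, this is a minimal unsharp-mask sketch. It assumes a float RGB image in [0, 1] and uses SciPy's `gaussian_filter`; the `sigma` and `amount` defaults are arbitrary placeholders, not the values used for the result above.

```python
import numpy as np
from scipy.ndimage import gaussian_filter

def unsharp_mask(image, sigma=2.0, amount=1.0):
    """Sharpen an (H, W, 3) float image in [0, 1] by boosting its high frequencies."""
    image = np.asarray(image, dtype=float)
    # Blur only along the two spatial axes, not across color channels.
    blurred = gaussian_filter(image, sigma=(sigma, sigma, 0))
    detail = image - blurred
    return np.clip(image + amount * detail, 0.0, 1.0)
```

Flat regions are left unchanged, while edges get an overshoot on both sides, which is what produces the crisper outlines.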