CS194-26 Project 5: Image Warping and Mosaics

Christine Zhu

Overview

In this project, I implement the components needed to warp one image into the perspective of another and combine the two, forming a 'panoramic image' of sorts.

Part 1: Shoot and Digitize Pictures

To start out, we need two photographs from the same point of view in different directions. The two photographs must have overlapping fields of view. I have chosen two photographs outside a mall in Boston, displayed below:

Part 2: Recover Homographies

In the next step, we must compute our homography matrix H, which will allow us to perspective-warp one image into the perspective of the second image. To do this, we construct the system of equations A*h = y, where h contains the 8 unknowns of the homography matrix and A contains combinations of the x, y coordinates of the correspondence points in both images, and solve it with least squares. Below we can see our correspondence points in the two images and the resulting warp of the first image into the second image's perspective. I have downsized the original images to reduce computation time.

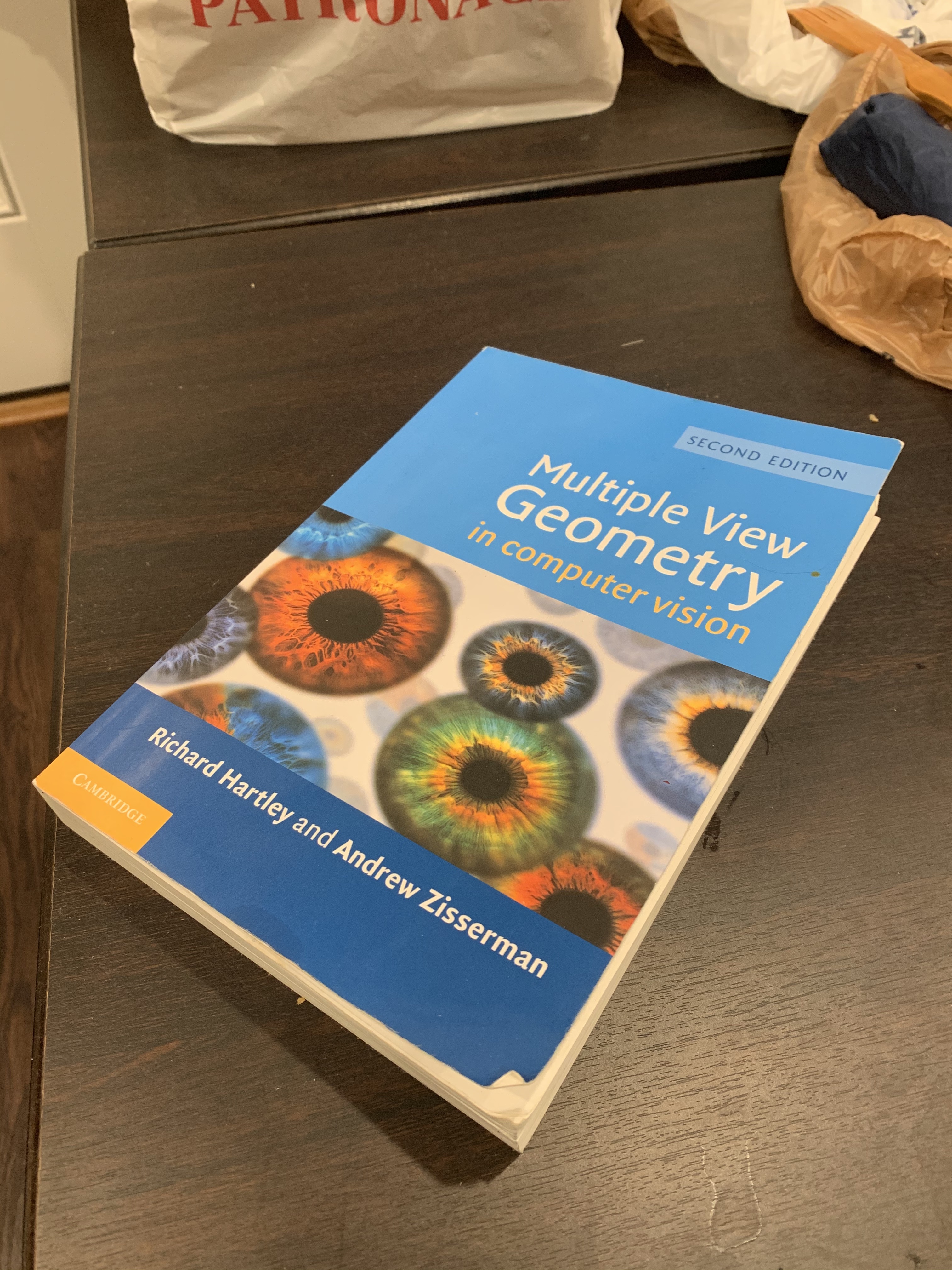
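The least-squares setup described above can be sketched as follows. This is a minimal illustration, assuming H is normalized so that its bottom-right entry is 1 (which leaves the 8 unknowns in h). The function names `compute_homography` and `apply_homography` are hypothetical and not taken from the project code.

```python
import numpy as np

def compute_homography(src, dst):
    """Estimate H (3x3, H[2, 2] = 1) such that dst ~ H @ src, via least squares.

    src, dst: arrays of shape (n, 2) with n >= 4 corresponding (x, y) points.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    n = len(src)
    A = np.zeros((2 * n, 8))
    y = np.zeros(2 * n)
    for i, ((x, yy), (u, v)) in enumerate(zip(src, dst)):
        # u = (h1*x + h2*y + h3) / (h7*x + h8*y + 1), rearranged to be linear in h
        A[2 * i] = [x, yy, 1, 0, 0, 0, -u * x, -u * yy]
        # v = (h4*x + h5*y + h6) / (h7*x + h8*y + 1)
        A[2 * i + 1] = [0, 0, 0, x, yy, 1, -v * x, -v * yy]
        y[2 * i] = u
        y[2 * i + 1] = v
    h, *_ = np.linalg.lstsq(A, y, rcond=None)
    return np.append(h, 1.0).reshape(3, 3)

def apply_homography(H, pts):
    """Map (n, 2) points through H and divide out the homogeneous coordinate."""
    pts = np.asarray(pts, dtype=float)
    pts_h = np.hstack([pts, np.ones((len(pts), 1))])
    out = pts_h @ H.T
    return out[:, :2] / out[:, 2:3]
```

With exactly 4 correspondences the system is square and the fit is exact; with more points, least squares averages out small errors in the manually clicked coordinates.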

Here are some more examples of image rectification, with a textbook and a laptop. Again, the warped images have been downsized, so they may seem slightly blurry. Because the new points were selected manually, they may not form a perfect rectangle; however, the general principle of warping the original image into a top- or front-facing view is maintained.
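One common way to apply a homography to an image is inverse warping: for every output pixel, map back through the inverse of H and look up the source pixel. The sketch below assumes nearest-neighbour sampling and a caller-chosen output size. The write-up does not say which sampling scheme was used, so treat `warp_image` as a hypothetical illustration rather than the project's implementation.

```python
import numpy as np

def warp_image(img, H, out_shape):
    """Inverse-warp img with H, where H maps source (x, y) to output (x, y).

    out_shape: (height, width) of the output canvas.
    Pixels that map outside the source image are left as zeros.
    """
    h_out, w_out = out_shape
    ys, xs = np.mgrid[0:h_out, 0:w_out]
    # Homogeneous coordinates of every output pixel, shape (3, h_out * w_out)
    dst = np.stack([xs.ravel(), ys.ravel(), np.ones(xs.size)]).astype(float)
    src = np.linalg.inv(H) @ dst
    # Guard against division by (near) zero for points at infinity
    w = np.where(np.abs(src[2]) < 1e-12, 1e-12, src[2])
    sx = np.rint(src[0] / w).astype(int)
    sy = np.rint(src[1] / w).astype(int)
    valid = (sx >= 0) & (sx < img.shape[1]) & (sy >= 0) & (sy < img.shape[0])
    out = np.zeros((h_out * w_out,) + img.shape[2:], dtype=img.dtype)
    out[valid] = img[sy[valid], sx[valid]]
    return out.reshape((h_out, w_out) + img.shape[2:])
```

Looping over output pixels rather than source pixels avoids holes in the result, since every output pixel receives exactly one value.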

Part 3: Image Mosaics

Now that we have ensured our homography is working correctly, we will attempt to stitch images together into mosaics. An example using the first Boston images is shown below, along with 2 more mosaics. The previous downsizing still applies.

Part B: Automatic Feature Detection

In this next part, we will attempt to replicate our results from Part A, but with automatically detected correspondence points, using Harris corner detection, MOPS (Multi-Scale Oriented Patches), and RANSAC.

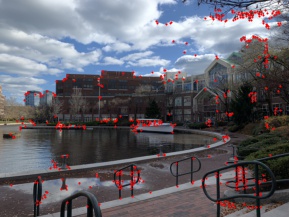

Corner Detection

First, we use Harris corner detection to locate all likely corner / feature candidates. An example on our first Boston mosaic image is shown:
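For reference, the Harris corner response itself can be sketched in a few lines of NumPy. This is a simplified illustration, not the detector used in the project: it assumes a box window in place of the usual Gaussian weighting, and the names `box_sum` and `harris_response` and the value k = 0.05 are assumptions.

```python
import numpy as np

def box_sum(img, radius=1):
    """Sum each pixel's (2*radius+1)^2 neighbourhood, using edge padding."""
    padded = np.pad(img, radius, mode="edge")
    h, w = img.shape
    out = np.zeros((h, w), dtype=float)
    size = 2 * radius + 1
    for dy in range(size):
        for dx in range(size):
            out += padded[dy:dy + h, dx:dx + w]
    return out

def harris_response(gray, radius=1, k=0.05):
    """Harris response R = det(M) - k * trace(M)^2 for a grayscale image."""
    iy, ix = np.gradient(gray.astype(float))
    # Entries of the second-moment matrix M, accumulated over a local window
    sxx = box_sum(ix * ix, radius)
    syy = box_sum(iy * iy, radius)
    sxy = box_sum(ix * iy, radius)
    det = sxx * syy - sxy ** 2
    trace = sxx + syy
    return det - k * trace ** 2
```

Corners give a large positive response, straight edges a negative one, and flat regions a response near zero, so candidate points are typically taken as local maxima of R above some threshold.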

ANMS

Next, we implement adaptive non-maximal suppression (ANMS) and take the top n points ranked by suppression radius r_i (defined in “Multi-Image Matching using Multi-Scale Oriented Patches” by Brown et al.). Here are examples of the top 500 and top 200 points. Notice that we maintain a well-spread distribution of corner points, so they are not all clustered in one area of the image.

anms 500 points

anms 200 points

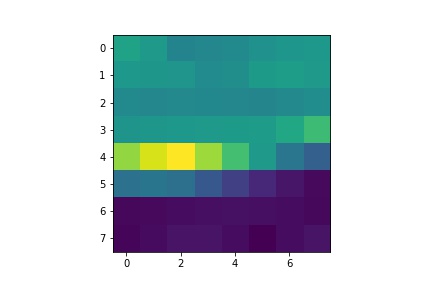

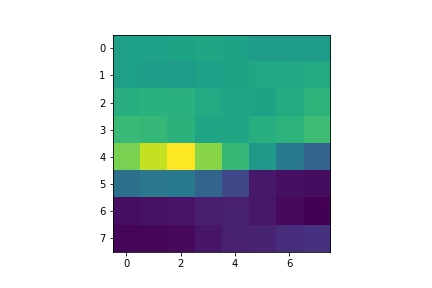
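The ANMS ranking described above can be sketched as follows. For each point, the suppression radius is the distance to the nearest point that is sufficiently stronger, and the points with the largest radii are kept. The robustness constant 0.9 follows Brown et al.; the function name `anms` and the brute-force O(n²) distance matrix are assumptions made for brevity.

```python
import numpy as np

def anms(coords, strengths, n_keep, c_robust=0.9):
    """Adaptive non-maximal suppression.

    coords: (n, 2) corner coordinates; strengths: (n,) corner responses.
    Returns (indices of the n_keep points with the largest radii, all radii).
    """
    coords = np.asarray(coords, dtype=float)
    strengths = np.asarray(strengths, dtype=float)
    # Pairwise squared distances, shape (n, n)
    diff = coords[:, None, :] - coords[None, :, :]
    d2 = (diff ** 2).sum(axis=-1)
    # Point j suppresses point i when strengths[i] < c_robust * strengths[j]
    suppresses = strengths[:, None] < c_robust * strengths[None, :]
    d2 = np.where(suppresses, d2, np.inf)
    # r_i = distance to the nearest suppressing point (inf if there is none)
    radii = np.sqrt(d2.min(axis=1))
    order = np.argsort(-radii)
    return order[:n_keep], radii
```

Because a point in a crowded region has a stronger neighbour close by, its radius is small and it is dropped early, which is what produces the even spatial spread.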

Feature Extraction and Matching

Once we have our top N candidate points for each image, we find the best matches between the points and keep only the matched pairs whose ratio e1/e2 falls below a certain threshold (where e1 is the best match error and e2 is the second-best match error). Our features are extracted as 40x40 patches around the candidate points and then sampled / downsized to 8x8 patches. We can look at an example feature pair below:

im1 feature

im2 feature
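The extraction and matching steps described above can be sketched as follows. This is a minimal illustration under several assumptions: the 40x40 patch is reduced to 8x8 by averaging 5x5 blocks, descriptors are normalized to zero mean and unit variance (as in Brown et al.), errors are squared Euclidean distances, and the ratio threshold 0.6 is a placeholder. The names `extract_descriptor` and `match_features` are hypothetical.

```python
import numpy as np

def extract_descriptor(gray, row, col, patch=40, out=8):
    """Return a flattened out*out descriptor from a patch centred near (row, col).

    The caller must ensure the point is at least patch // 2 pixels from the border.
    """
    half = patch // 2
    window = gray[row - half:row + half, col - half:col + half].astype(float)
    step = patch // out
    # Average each step x step block to downsize 40x40 -> 8x8
    small = window.reshape(out, step, out, step).mean(axis=(1, 3))
    # Bias/gain normalisation (assumption, following Brown et al.)
    small = (small - small.mean()) / (small.std() + 1e-8)
    return small.ravel()

def match_features(desc1, desc2, ratio=0.6):
    """Match rows of desc1 to rows of desc2 using the e1/e2 ratio test.

    desc2 must contain at least two descriptors.
    Returns a list of (index_in_desc1, index_in_desc2) pairs.
    """
    desc1 = np.asarray(desc1, dtype=float)
    desc2 = np.asarray(desc2, dtype=float)
    d = ((desc1[:, None, :] - desc2[None, :, :]) ** 2).sum(axis=-1)
    matches = []
    for i in range(len(d)):
        order = np.argsort(d[i])
        best, second = order[0], order[1]
        e1, e2 = d[i, best], d[i, second]
        # Keep the pair only when the best match is clearly better than the runner-up
        if e1 / (e2 + 1e-12) < ratio:
            matches.append((i, int(best)))
    return matches
```

A low ratio means the best candidate stands out from the alternatives; ambiguous points, whose two closest candidates are almost equally good, are discarded.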

RANSAC and homography

Finally, we use RANSAC and least squares to compute a homography estimate. We run N iterations and select the homography / set of points / fit that agrees with the most points (following the principle that all right points are right in the same way, while all wrong points are wrong in their own unique way). Below, we can see the points that remain after RANSAC outlier removal:

post ransac processing, im1

post ransac processing, im2
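The RANSAC loop described above can be sketched as follows. Each iteration fits a homography to 4 random matches, counts how many matches it reprojects within a pixel tolerance, and the largest inlier set is refit with least squares at the end. The iteration count, the 2-pixel tolerance, the fixed random seed, and all function names here are assumptions rather than the project's actual settings.

```python
import numpy as np

def fit_homography(src, dst):
    """Least-squares homography (H[2, 2] = 1) mapping src (n, 2) to dst (n, 2)."""
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        rows.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        rhs.extend([u, v])
    h, *_ = np.linalg.lstsq(np.array(rows, float), np.array(rhs, float), rcond=None)
    return np.append(h, 1.0).reshape(3, 3)

def project(H, pts):
    """Apply H to (n, 2) points and return the (n, 2) dehomogenized result."""
    pts_h = np.hstack([pts, np.ones((len(pts), 1))])
    out = pts_h @ H.T
    with np.errstate(divide="ignore", invalid="ignore"):
        return out[:, :2] / out[:, 2:3]

def ransac_homography(src, dst, n_iters=1000, tol=2.0, seed=0):
    """Return (H, inlier_mask) for matched points src -> dst."""
    rng = np.random.default_rng(seed)
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    best_inliers = np.zeros(len(src), dtype=bool)
    for _ in range(n_iters):
        sample = rng.choice(len(src), size=4, replace=False)
        H = fit_homography(src[sample], dst[sample])
        err = np.linalg.norm(project(H, src) - dst, axis=1)
        inliers = err < tol  # NaN errors compare False and count as outliers
        if inliers.sum() > best_inliers.sum():
            best_inliers = inliers
    if best_inliers.sum() < 4:
        raise ValueError("RANSAC found fewer than 4 consistent matches")
    # Final least-squares fit on every inlier of the best model
    return fit_homography(src[best_inliers], dst[best_inliers]), best_inliers
```

The tolerance trades off strictness against noise: too small a value rejects good matches that are slightly off, while too large a value lets wrong matches into the final fit.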

Image Mosaics

At last we can make our mosaics! Here are the previous manually stitched mosaics next to their automatically stitched counterparts:

manual boston

automatic boston

manual kitchen

automatic kitchen

manual living room

automatic living room

Lessons Learned

Overall, this project was a lot of fun to make. After stitching together my first Boston mosaic, I didn't realize that I would have to adjust the parameters for the other images, and they turned out horribly at first. It took a while to realize that I was adding duplicate matches and that I needed to adjust the thresholds for each image. After that, my results for the other mosaics turned out a lot better.