
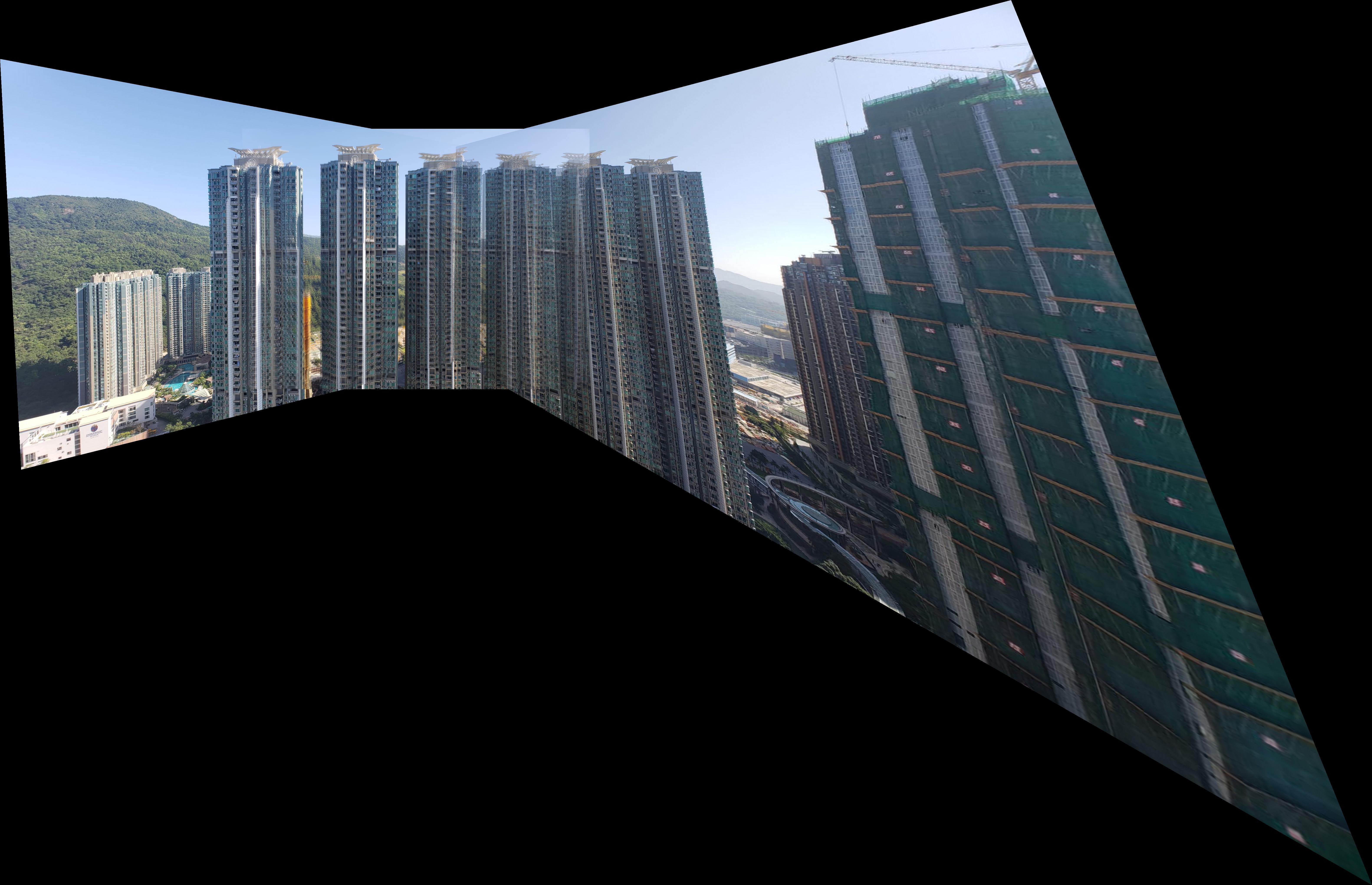

The goal of this part is to blend two images of the same scene together.

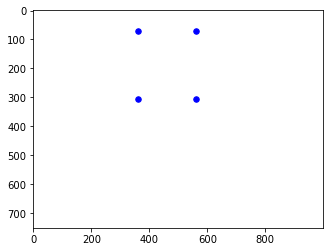

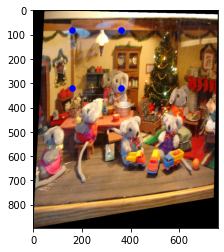

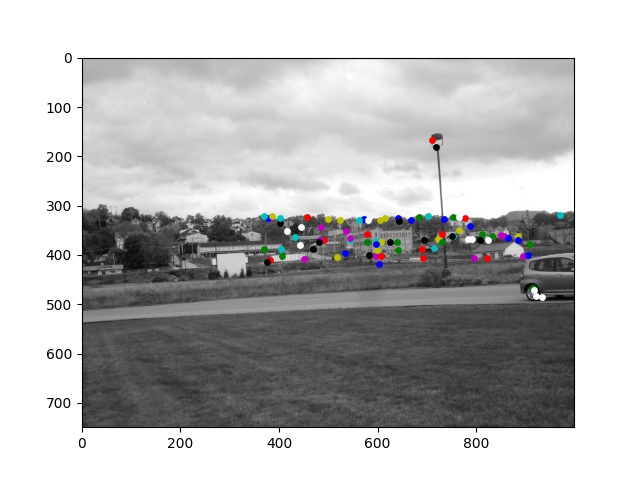

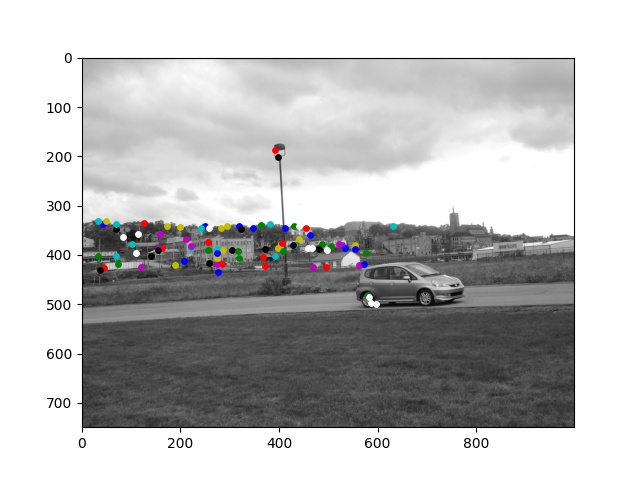

In this first part, we pick correspondences by hand and then use those points to compute homography matrices. Since a perspective transformation has 8 degrees of freedom and each correspondence gives two equations, we need at least 4 correspondences to compute the homography matrix. Here are the input images and the points I picked.

My input image size is 780x1000 and the points are

[[360, 70], [360, 306], [560, 306], [560, 70]]
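The homography step described above can be sketched as a small least-squares solve. This is only a minimal sketch, not necessarily the exact code I used: the function names `compute_homography` and `apply_homography` are made up for illustration, and it assumes the bottom-right entry of the matrix is fixed to 1, which leaves the 8 unknowns mentioned above.

```python
import numpy as np

def compute_homography(src_pts, dst_pts):
    """Estimate a 3x3 H such that dst ~ H @ src, from N >= 4 (x, y) pairs."""
    src = np.asarray(src_pts, dtype=float)
    dst = np.asarray(dst_pts, dtype=float)
    if src.shape != dst.shape or src.shape[0] < 4:
        raise ValueError("need at least 4 matching (x, y) pairs")
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        # Each correspondence gives two linear equations in the 8 unknowns
        # (assumption: h33 is fixed to 1).
        rows.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        rows.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        rhs.extend([u, v])
    # Least squares also handles the over-determined case (more than 4 pairs).
    h, *_ = np.linalg.lstsq(np.array(rows), np.array(rhs), rcond=None)
    return np.append(h, 1.0).reshape(3, 3)

def apply_homography(H, pts):
    """Map (x, y) points through H, dividing by the homogeneous coordinate."""
    pts = np.asarray(pts, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))]) @ H.T
    return homog[:, :2] / homog[:, 2:3]

# Hypothetical hand-picked corners in the input image (made up for this example),
# mapped onto the rectangle listed above.
src = [[340, 90], [350, 300], [570, 320], [555, 60]]
dst = [[360, 70], [360, 306], [560, 306], [560, 70]]
H = compute_homography(src, dst)
```

With exactly 4 correspondences the system has 8 equations and 8 unknowns, so the fit passes through every point. With more points, the least-squares solution averages out small clicking errors.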

Using the matrices,

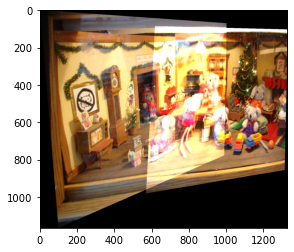

I performed forward warping on the input images. I first tried inverse warping, but parts of the image were always cut off and I would have needed to scale each edge by trial and error. I ended up using `cv2.inpaint` with the mask shown below.

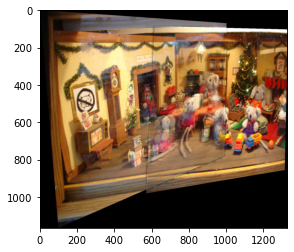

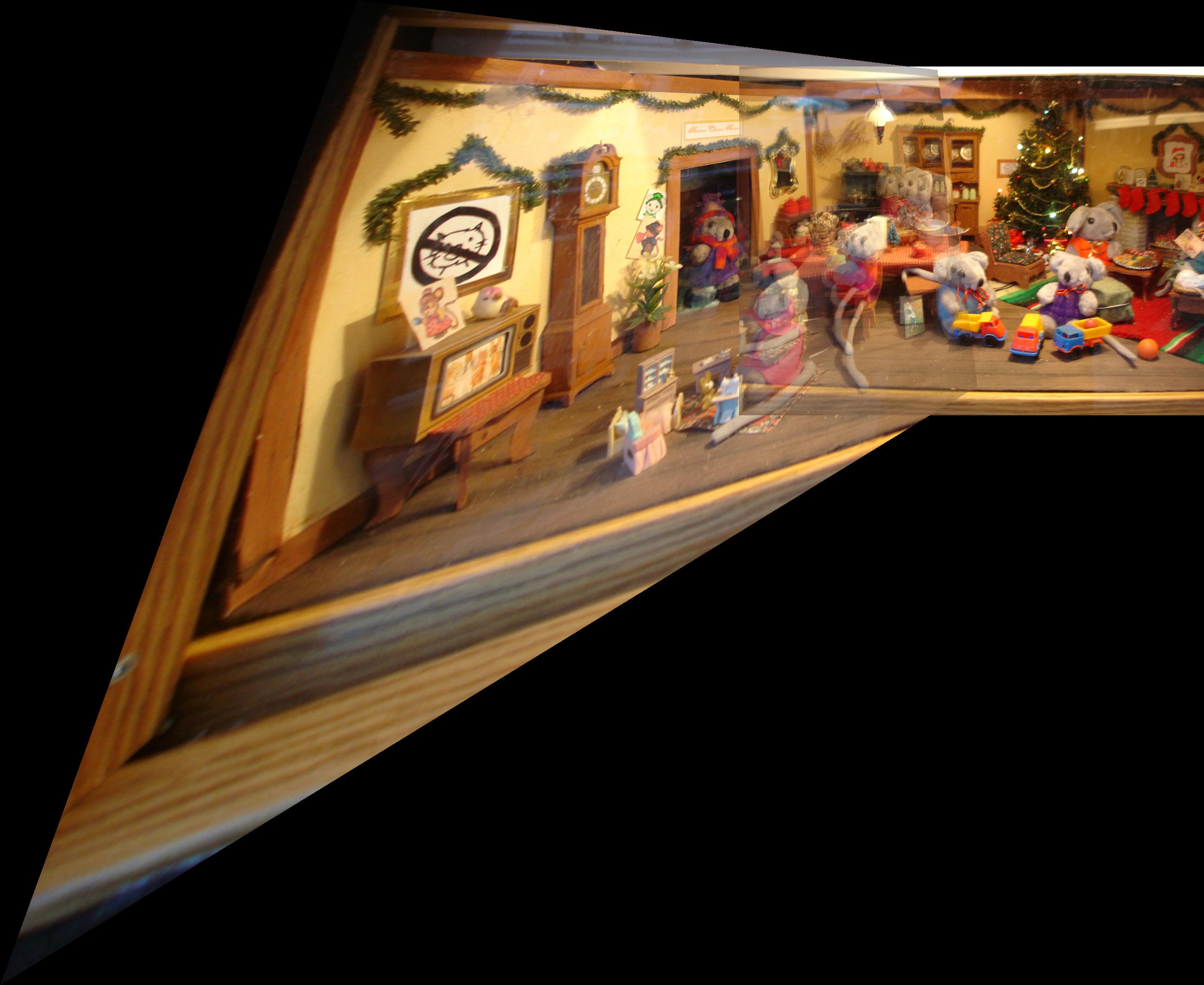
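Forward warping pushes every source pixel to its new location, so some output pixels never get a value. Here is a rough sketch of that idea in plain NumPy that also records those empty pixels in a mask. The function name `forward_warp` and the nearest-pixel rounding are my assumptions for illustration, not necessarily how the images above were produced.

```python
import numpy as np

def forward_warp(img, H, out_shape):
    """Push every source pixel through H.

    Returns the warped image and a uint8 mask that is 255 wherever no source
    pixel landed (the holes) and 0 elsewhere.
    """
    h, w = img.shape[:2]
    out_h, out_w = out_shape
    ys, xs = np.mgrid[0:h, 0:w]
    src = np.stack([xs.ravel(), ys.ravel(), np.ones(h * w)])
    dst = H @ src
    # Round each warped coordinate to the nearest output pixel.
    u = np.rint(dst[0] / dst[2]).astype(int)
    v = np.rint(dst[1] / dst[2]).astype(int)
    inside = (u >= 0) & (u < out_w) & (v >= 0) & (v < out_h)

    out = np.zeros((out_h, out_w) + img.shape[2:], dtype=img.dtype)
    filled = np.zeros((out_h, out_w), dtype=bool)
    flat = img.reshape((h * w,) + img.shape[2:])
    out[v[inside], u[inside]] = flat[inside]
    filled[v[inside], u[inside]] = True

    hole_mask = (~filled).astype(np.uint8) * 255
    return out, hole_mask
```

A mask like this (8-bit, single channel, non-zero where pixels are missing) is the kind of input `cv2.inpaint(warped, hole_mask, 3, cv2.INPAINT_TELEA)` takes. The radius of 3 and the Telea flag are just example values. In practice the mask should probably be limited to the footprint of the warped image so the empty border is not inpainted too.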

To create a mosaic, I first aligned the images by padding them to a common canvas size. Then I blended the two images with alpha blending.
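The padding and blending steps can be sketched like this. It is a simplified illustration under my own assumptions: the helper names `pad_to_canvas` and `alpha_blend` are hypothetical, the offsets are given by hand, and a single constant `alpha` (strictly between 0 and 1) is used in the overlap.

```python
import numpy as np

def pad_to_canvas(img, top, left, canvas_shape):
    """Place img on a zero canvas at (top, left) and return it with a coverage mask."""
    canvas_h, canvas_w = canvas_shape
    h, w = img.shape[:2]
    canvas = np.zeros((canvas_h, canvas_w) + img.shape[2:], dtype=float)
    mask = np.zeros((canvas_h, canvas_w), dtype=float)
    canvas[top:top + h, left:left + w] = img
    mask[top:top + h, left:left + w] = 1.0
    return canvas, mask

def alpha_blend(img_a, mask_a, img_b, mask_b, alpha=0.5):
    """Weighted average where both images overlap; elsewhere keep whichever exists."""
    w_a = alpha * mask_a
    w_b = (1.0 - alpha) * mask_b
    total = w_a + w_b
    total = np.where(total > 0, total, 1.0)  # avoid dividing by zero off-image
    if img_a.ndim == 3:
        # Broadcast the per-pixel weights over the colour channels.
        w_a, w_b, total = w_a[..., None], w_b[..., None], total[..., None]
    return (w_a * img_a + w_b * img_b) / total

# Two tiny grayscale "images" that overlap in one column of a 2x5 canvas.
a, mask_a = pad_to_canvas(np.full((2, 3), 10.0), 0, 0, (2, 5))
b, mask_b = pad_to_canvas(np.full((2, 3), 20.0), 0, 2, (2, 5))
mosaic = alpha_blend(a, mask_a, b, mask_b)
```

Dividing by the total weight means pixels covered by only one image keep their original value instead of being darkened by the blend.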

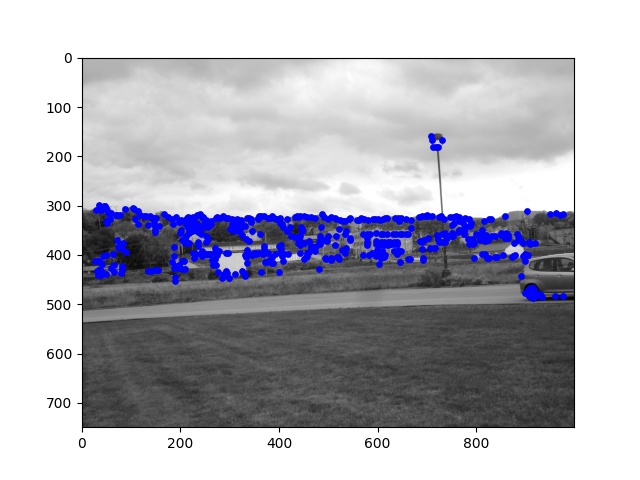

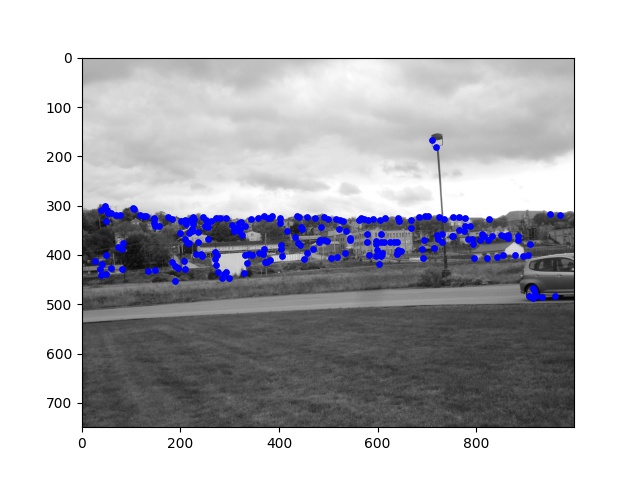

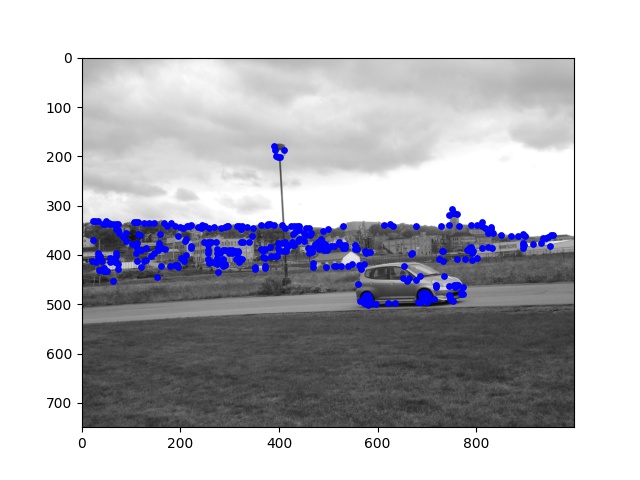

In the real world, manually selecting correspondences is a pain! So in this part, we implement automatic feature matching following the paper "Multi-Image Matching using Multi-Scale Oriented Patches". With the matched features, we can simply apply our algorithm from part 1 again to do image stitching.

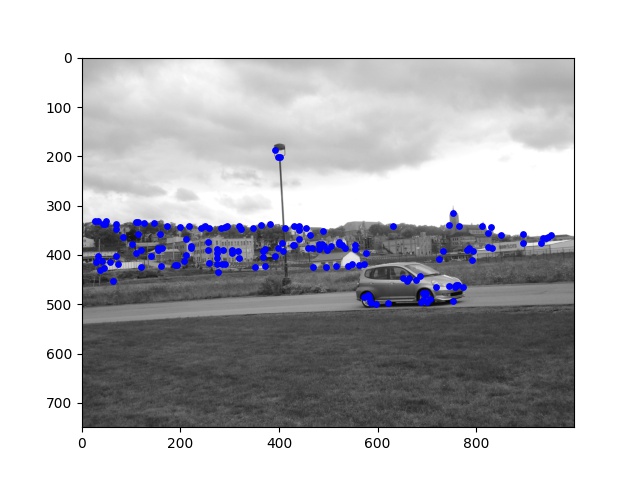

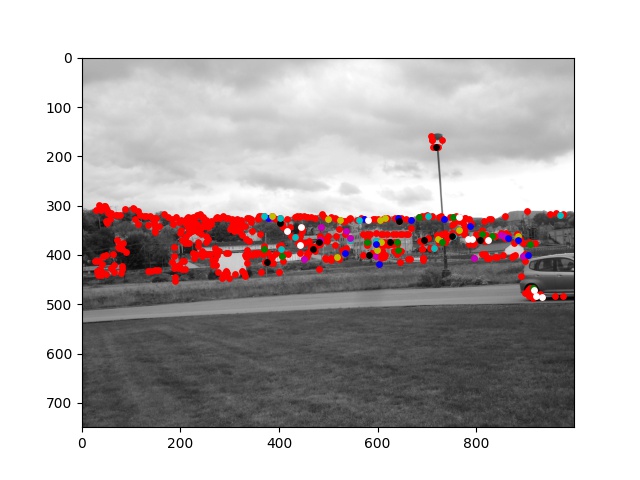

In this project, we use the Harris Detector to find keypoints. Since the Harris Detector returns a lot of points, we lower the computation cost of matching by doing adaptive non-maximal suppression (ANMS). The idea is to keep points that are evenly spread out across the input images while still choosing points that have sufficiently high Harris corner strength.
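Here is a compact sketch of ANMS as I understand it from the paper: each corner gets a suppression radius, which is the distance to the nearest corner that is sufficiently stronger, and we keep the corners with the largest radii. The function name `anms`, the default `num_keep`, and the brute-force pairwise distance matrix are choices made for this example. The robustness constant 0.9 is the value suggested in the paper.

```python
import numpy as np

def anms(coords, strengths, num_keep=500, c_robust=0.9):
    """Return indices of the kept corners (largest suppression radius first) and all radii."""
    coords = np.asarray(coords, dtype=float)
    strengths = np.asarray(strengths, dtype=float)

    # Pairwise squared distances between all corners (O(N^2) memory).
    diff = coords[:, None, :] - coords[None, :, :]
    dist2 = np.sum(diff ** 2, axis=-1)

    # Corner j suppresses corner i when f_i < c_robust * f_j.
    suppresses = strengths[:, None] < c_robust * strengths[None, :]
    dist2 = np.where(suppresses, dist2, np.inf)

    # Suppression radius: distance to the nearest suppressing corner
    # (infinite for corners that nothing suppresses).
    radii = np.sqrt(dist2.min(axis=1))

    order = np.argsort(-radii, kind="stable")[:num_keep]
    return order, radii

# Three corners on a line: a strong one, a weaker neighbour, and a weak far one.
coords = [[0, 0], [1, 0], [10, 0]]
strengths = [10.0, 5.0, 4.0]
keep, radii = anms(coords, strengths, num_keep=2)
```

In this toy case the far-away weak corner is kept over the stronger corner right next to the global maximum, which is exactly the "evenly spread out" behaviour described above.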

For every keypoint from the last part, we use an 8x8 patch to describe it. First we extract a 40x40 window around the keypoint's coordinate. Then we select every 5th pixel, resulting in an 8x8 grid. To improve the matching results (step 3), I also applied a Gaussian blur to the 40x40 patch before subsampling.

This is the Gaussian filter used.

Here are some of the patches extracted.

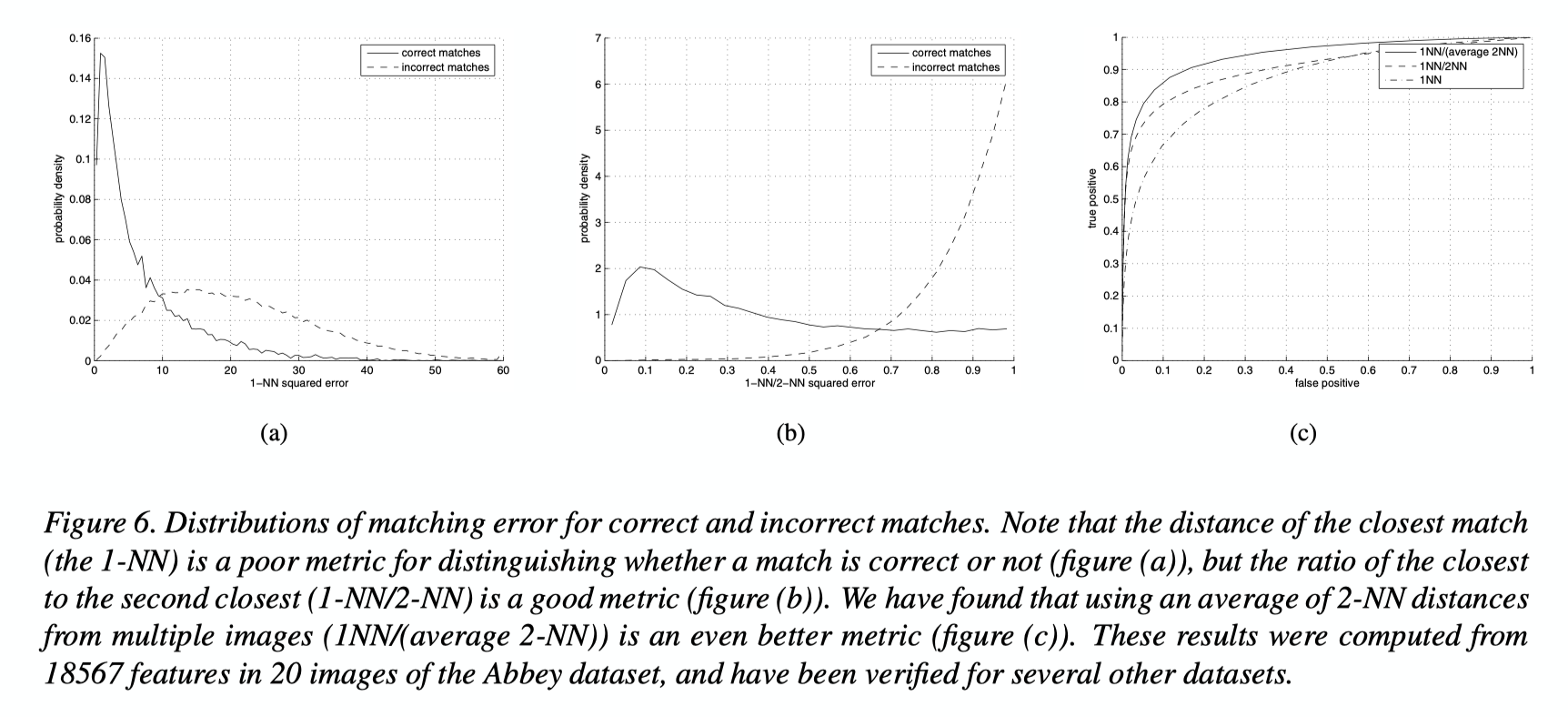
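The patch extraction described above (40x40 window, blur, keep every 5th pixel) can be sketched as follows. The function names, the `sigma=2.0` value, and the edge padding are assumptions for illustration. The blur is written with plain NumPy so the example stays self-contained.

```python
import numpy as np

def gaussian_kernel_1d(sigma, radius):
    """Normalized 1D Gaussian kernel of length 2 * radius + 1."""
    x = np.arange(-radius, radius + 1, dtype=float)
    k = np.exp(-(x ** 2) / (2 * sigma ** 2))
    return k / k.sum()

def blur(img, sigma=2.0):
    """Separable Gaussian blur of a grayscale image, with edge padding."""
    radius = int(3 * sigma)
    k = gaussian_kernel_1d(sigma, radius)
    padded = np.pad(img.astype(float), radius, mode="edge")
    # Filter along rows, then along columns; "valid" trims the padding again.
    rows = np.apply_along_axis(lambda r: np.convolve(r, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, k, mode="valid"), 0, rows)

def extract_descriptor(gray, row, col, window=40, step=5, sigma=2.0):
    """Blur the window around (row, col) and subsample it to an 8x8 grid."""
    half = window // 2
    if (row < half or col < half
            or row + half > gray.shape[0] or col + half > gray.shape[1]):
        return None  # the window would fall off the image
    patch = gray[row - half:row + half, col - half:col + half]
    return blur(patch, sigma)[::step, ::step]

# Usage on a synthetic grayscale image.
gray = np.arange(100 * 100, dtype=float).reshape(100, 100)
descriptor = extract_descriptor(gray, 50, 50)  # 8x8 array
```

Blurring before taking every 5th pixel reduces aliasing, so a keypoint that is off by a pixel or two still produces a similar descriptor.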

Given two sets of patches, we want to find the best way to match them. As mentioned in the paper, the best metric is 1-NN / 2-NN (the ratio of the closest distance to the second closest distance).
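A minimal sketch of the 1-NN / 2-NN ratio test is below. The function name `match_descriptors` and the threshold of 0.6 are placeholders I picked for the example, not values taken from the paper or from my final run. It assumes the second set has at least two descriptors.

```python
import numpy as np

def match_descriptors(desc_a, desc_b, ratio_thresh=0.6):
    """Return (index_in_a, index_in_b) pairs that pass the 1-NN / 2-NN ratio test."""
    a = np.asarray(desc_a, dtype=float).reshape(len(desc_a), -1)
    b = np.asarray(desc_b, dtype=float).reshape(len(desc_b), -1)

    # Euclidean distance between every descriptor in A and every descriptor in B.
    dist = np.sqrt(((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1))

    # Indices of the two nearest neighbours in B for each descriptor in A.
    nearest = np.argsort(dist, axis=1)[:, :2]
    idx = np.arange(len(a))
    d_first = dist[idx, nearest[:, 0]]
    d_second = dist[idx, nearest[:, 1]]

    # A match is trusted only if the best candidate is clearly better than the runner-up.
    ratio = d_first / np.maximum(d_second, 1e-12)
    keep = ratio < ratio_thresh
    return np.stack([idx[keep], nearest[keep, 0]], axis=1)
```

The intuition is that a correct match should be much closer than the second-best candidate, while a wrong match usually has several candidates at roughly the same distance.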

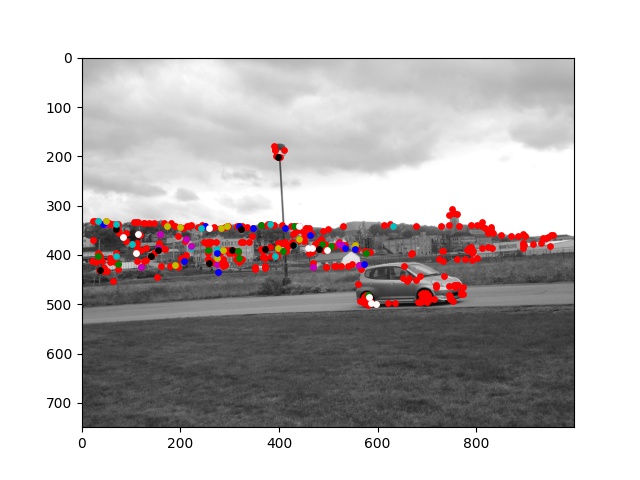

We can see that RANSAC helps finalize good matches by rejecting outliers. Using epsilon = 3 pixels, more than 60% of the matches were rejected.
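The RANSAC loop can be sketched roughly like this, with epsilon = 3 pixels as the inlier threshold. Everything else (the function names, 1000 iterations, the fixed random seed, and fixing the last entry of H to 1) is an assumption for this example rather than a description of my exact code.

```python
import numpy as np

def fit_homography(src, dst):
    """Least-squares homography from N >= 4 pairs (assumes h33 = 1)."""
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        rows.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        rhs.extend([u, v])
    h, *_ = np.linalg.lstsq(np.array(rows, float), np.array(rhs, float), rcond=None)
    return np.append(h, 1.0).reshape(3, 3)

def project(H, pts):
    """Map (x, y) points through H."""
    p = np.hstack([pts, np.ones((len(pts), 1))]) @ H.T
    return p[:, :2] / p[:, 2:3]

def ransac_homography(src, dst, eps=3.0, iters=1000, seed=0):
    """Return (H, inlier_mask) for the largest consensus set found."""
    src, dst = np.asarray(src, float), np.asarray(dst, float)
    rng = np.random.default_rng(seed)
    best = np.zeros(len(src), dtype=bool)
    for _ in range(iters):
        # Fit an exact homography to 4 random matches.
        sample = rng.choice(len(src), size=4, replace=False)
        H = fit_homography(src[sample], dst[sample])
        # Degenerate samples can produce inf/nan errors; those never count as inliers.
        with np.errstate(divide="ignore", invalid="ignore"):
            err = np.linalg.norm(project(H, src) - dst, axis=1)
            inliers = err < eps
        if inliers.sum() > best.sum():
            best = inliers
    if best.sum() < 4:
        raise ValueError("RANSAC did not find at least 4 consistent matches")
    # Refit on all inliers of the best model for the final estimate.
    return fit_homography(src[best], dst[best]), best
```

The returned mask is what separates the accepted matches from the rejected ones, and the final homography is refit on all inliers instead of only the 4 sampled points.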

This project allowed me to learn the new skill of reading research papers. At first I was really confused about what was going on, but as I implemented more of the code and re-read the paragraphs over and over, something magically clicked!

ANMS took me a really long time to understand and I reimplemented it 5 times!

Still, my favourite thing from this project is creating the mosaic of my and my friend's plushies. The mosaic will become my wallpaper now. Haha!