Lightfield Camera

1 Introduction

In this project, I implemented a lightfield camera. In their paper, written in 2005 at Stanford, Ren Ng and his colleagues wanted to see if depth of field and aperture could be adjusted in post-processing, which would normally be almost impossible. Specifically, this could allow refocusing blurry images, as well as making every subject in an image sharply visible. I used the data they provided in the Stanford Light Field Archive and demonstrated the computations necessary to achieve these effects using simple algorithms.

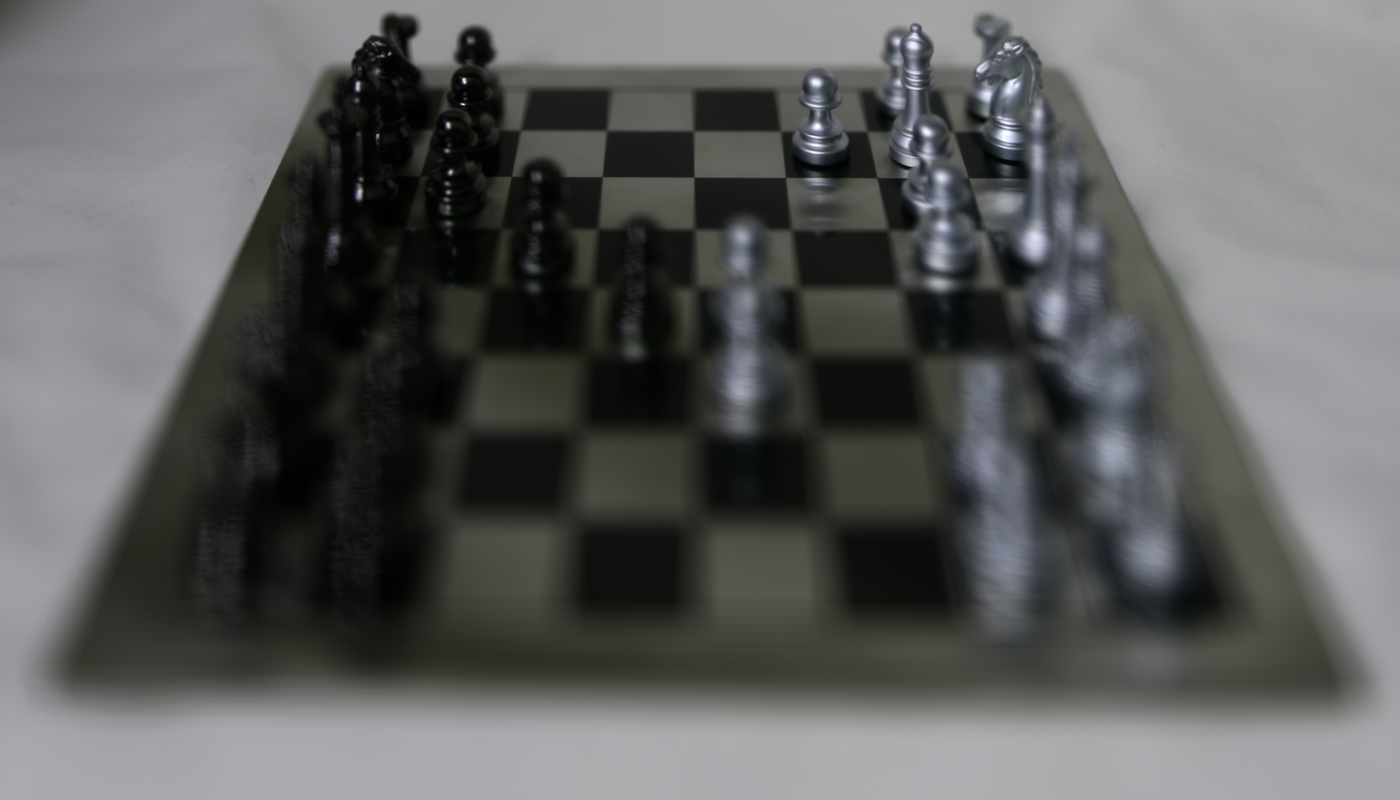

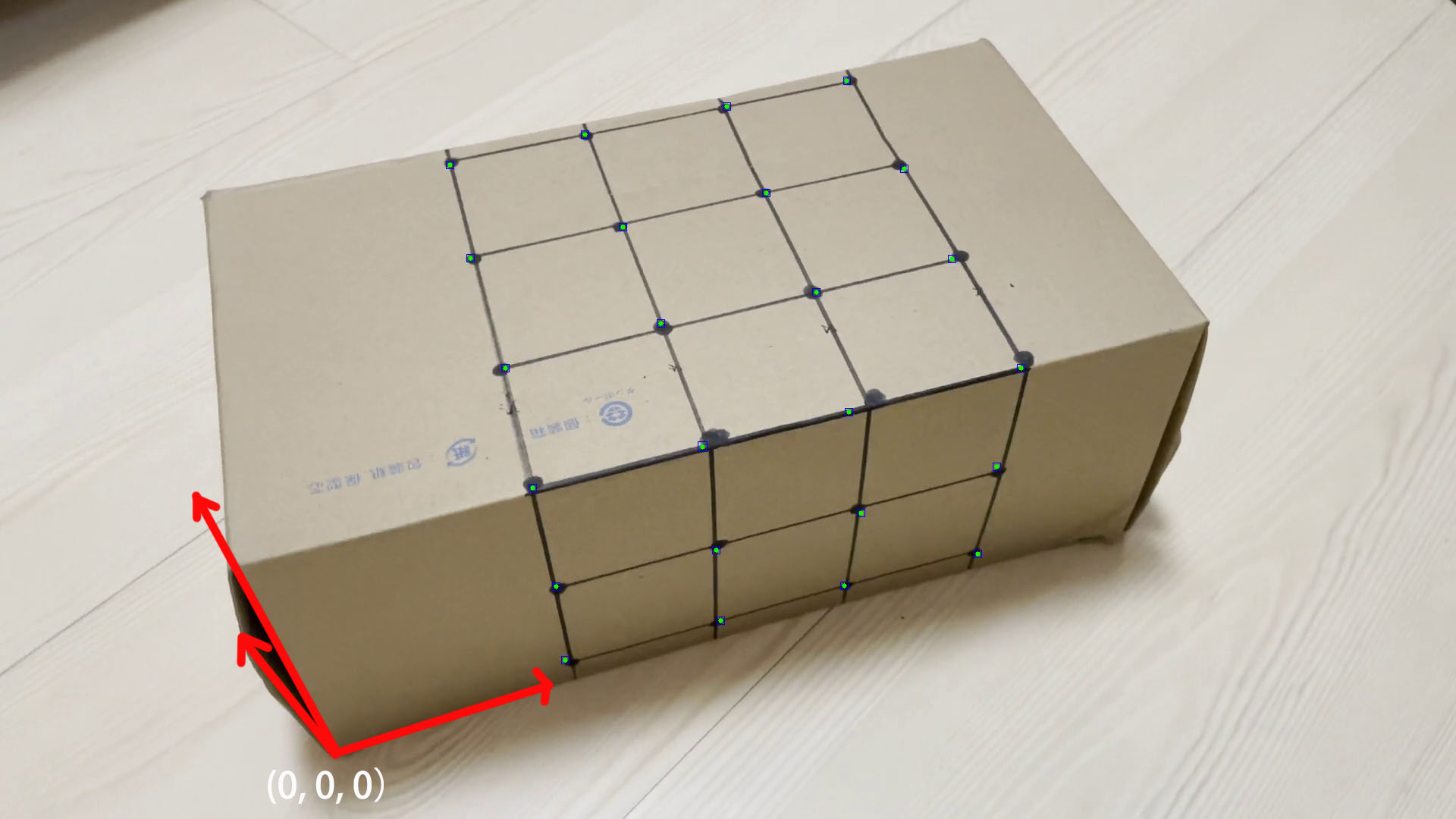

Below are some results of depth focusing.

2 Depth Focusing

Objects farther away in a scene appear to move much less than foreground objects when a person varies their position. This principle is one of the main reasons why it is easy for us to tell that an object is farther away from us when we move. If we average all the images in the dataset together, we end up with an image in which faraway objects are sharp and nearby ones are blurry. To get around this and sharpen foreground objects, we pick a center image as the reference and then shift the images that surround it (whose grid offsets range over [-8, 8], given a 17 by 17 array) by the amount we would like to adjust the depth focus by, before averaging. Here I used a range of [-3, 1] with a step of 1. It took around 5 minutes to generate the images.
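The shift-and-average idea described above can be sketched as follows. This is a minimal illustration, not the code used for the results: the function name `refocus`, the parameter `alpha`, and the array layout `(rows, cols, H, W)` are all assumptions. It also assumes each view is shifted by `alpha` times its grid offset from the center view, rounds shifts to whole pixels, and uses `np.roll`, which wraps pixels around the image border. The sign of the shift depends on how the dataset orders its views.

```python
import numpy as np

def refocus(images, alpha, center=8):
    """Shift each sub-aperture view toward the center view and average.

    images: array of shape (rows, cols, H, W) or (rows, cols, H, W, C)
            (hypothetical layout; e.g. rows = cols = 17)
    alpha:  depth-focus amount; each view is shifted by alpha * offset
    center: grid index of the center view (8 for a 17 by 17 grid)
    """
    rows, cols = images.shape[:2]
    acc = np.zeros(images.shape[2:], dtype=np.float64)
    for r in range(rows):
        for c in range(cols):
            # Offset of this view from the center, in [-8, 8] for 17 by 17.
            dy = int(round(alpha * (r - center)))
            dx = int(round(alpha * (c - center)))
            # Integer shift along the two spatial axes (wraps at borders).
            acc += np.roll(images[r, c], shift=(dy, dx), axis=(0, 1))
    return acc / (rows * cols)
```

With `alpha = 0` this reduces to the plain average of all views. Sweeping `alpha` over `range(-3, 2)` would cover the [-3, 1] range with a step of 1 mentioned above, producing one refocused image per value.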