Neural Style Transfer and Image Quilting

Allan Yu

Neural Style Transfer

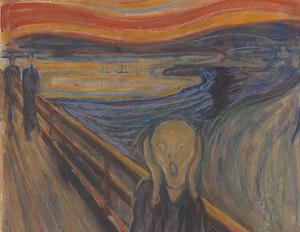

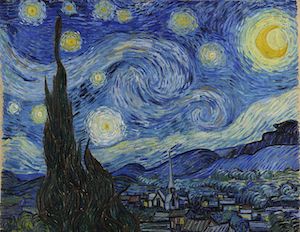

We start with a content image and a style image and, by optimizing through a neural network, output an image that keeps the content of the content image but takes on the style of the style image.

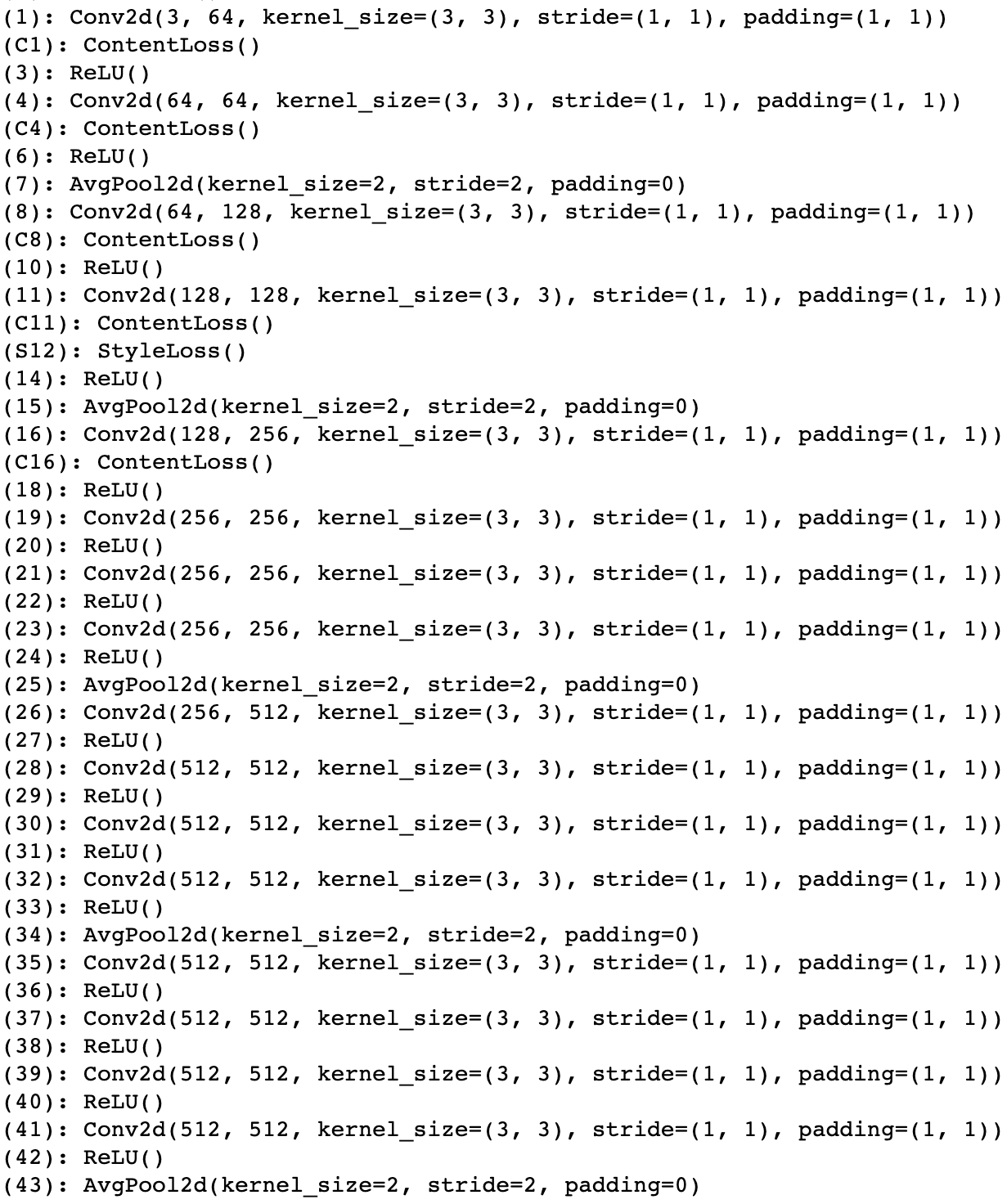

Network

The following image shows my model. Just like the paper, I use a pretrained VGG19, adding content loss layers after the first five convolutional layers and a style loss layer after the fourth content loss layer. I also replace the max-pooling layers with average pooling; as the paper reports, I found that this produced better output images. I initialize the input image to the content image, so the content loss starts at 0. I use the LBFGS optimizer, which I found to work better than Adam. In terms of hyperparameters, I found that in the general case, weighting style 100,000,000 (1e8) times more heavily than content worked well. (This may be a bit biased by personal preference, though!) I also normalize the images before processing.
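The two loss terms and their weighting can be sketched as follows. This is a minimal illustration in plain Python rather than my actual PyTorch code: it handles a single layer, represents a feature map as a list of channels (each a flat list of activations), and divides the Gram matrix by channels times positions, which is an assumed normalization. The function names are hypothetical.

```python
def gram_matrix(features):
    """Channel-by-channel correlations of one layer's feature map.

    features: list of C channels, each a flat list of N activations.
    Dividing by C * N is an assumed normalization, not taken from the writeup.
    """
    c = len(features)
    n = len(features[0])
    return [
        [sum(a * b for a, b in zip(fi, fj)) / (c * n) for fj in features]
        for fi in features
    ]


def mse(xs, ys):
    """Mean squared error between two equally shaped lists of lists."""
    flat_x = [v for row in xs for v in row]
    flat_y = [v for row in ys for v in row]
    return sum((x - y) ** 2 for x, y in zip(flat_x, flat_y)) / len(flat_x)


def content_loss(generated, content):
    # Compares activations directly, so spatial layout is preserved.
    return mse(generated, content)


def style_loss(generated, style):
    # Compares Gram matrices, which discard spatial layout.
    return mse(gram_matrix(generated), gram_matrix(style))


def total_loss(generated, content, style, content_weight=1.0, style_weight=1e8):
    # The 1e8 ratio mirrors the style-to-content weighting mentioned above.
    return (content_weight * content_loss(generated, content)
            + style_weight * style_loss(generated, style))
```

Calling `total_loss(content, content, style)` shows why starting from the content image gives a content loss of 0: the first term vanishes and only the weighted style term remains.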

Results

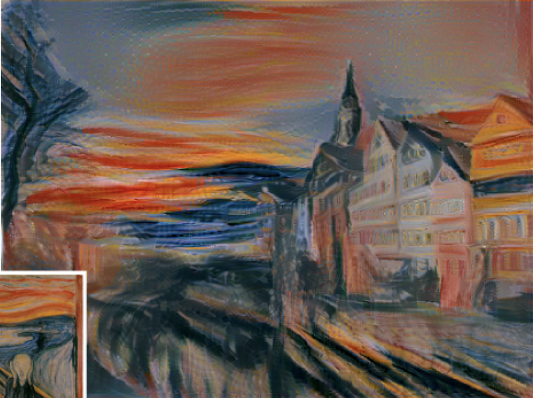

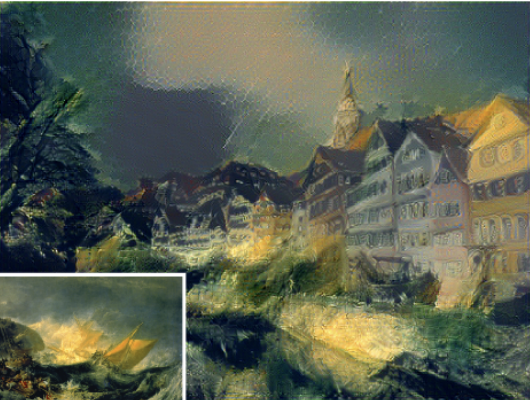

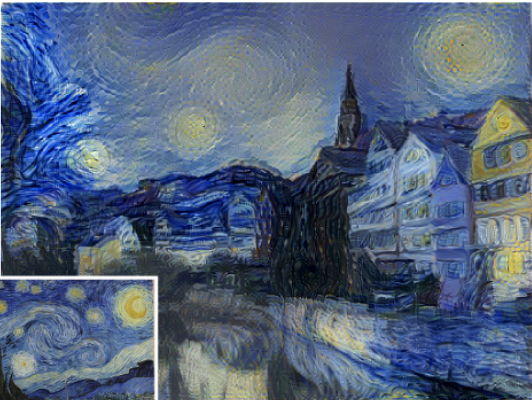

Here are the results of my network run on three styles for Neckarfront. Compared to the paper, my images are a bit lighter in general. I definitely weight content more heavily than the paper does (this can be clearly seen in the Starry Night blend: my output has clear buildings in its center, while the paper's does not). If I were to weight style more, my results would look more similar to the paper's.

Figure labels: Neckarfront, Styles, My Results, Paper Results (For Comparison)

More Results

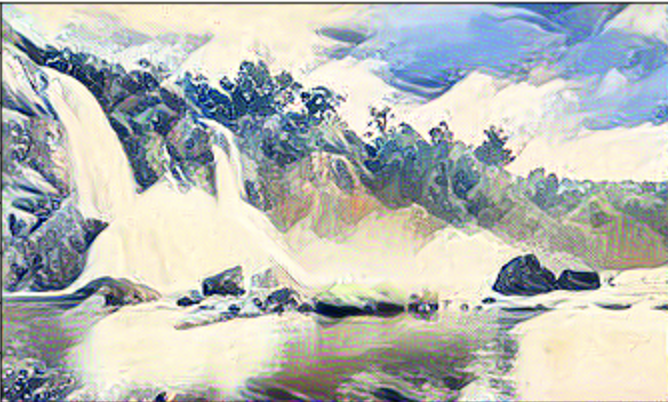

Here are more results on other content and style images. The last example is a failure case, and I believe the failure occurs for a number of reasons. First, the style image is not well defined: its right side is a bit too plain. The content image is a bit plain as well, not nearly as noisy as Neckarfront. Furthermore, the horizontal trees in the style image clash with the vertical towers of the content image. I believe these factors made the model less effective.

Figure labels (repeated for each of the three examples): Content, Style, Result

Image Quilting

Here, I randomly sample patches from a texture image to fill an output image of a given size. Here is an example.

Original Image

Sampled
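The random sampling step can be sketched as below. This is a hedged illustration, not my original code: it assumes a square output, treats the texture as a 2D list of pixel values, and crops patches that run past the output edge. The function name `quilt_random` and its parameters are hypothetical.

```python
import random


def quilt_random(texture, out_size, patch_size, rng=None):
    """Fill an out_size x out_size image with randomly chosen texture patches.

    texture: 2D list of pixel values, at least patch_size in each dimension.
    rng: optional random.Random instance, for reproducible output.
    """
    rng = rng or random.Random()
    height, width = len(texture), len(texture[0])
    out = [[None] * out_size for _ in range(out_size)]

    # Walk the output in a grid of patch-sized cells.
    for top in range(0, out_size, patch_size):
        for left in range(0, out_size, patch_size):
            # Pick a random top-left corner so the patch fits inside the texture.
            r = rng.randrange(height - patch_size + 1)
            c = rng.randrange(width - patch_size + 1)
            # Copy the patch, cropping at the output's right and bottom edges.
            for dy in range(min(patch_size, out_size - top)):
                for dx in range(min(patch_size, out_size - left)):
                    out[top + dy][left + dx] = texture[r + dy][c + dx]
    return out
```

Because each patch is chosen independently of its neighbors, seams between patches are generally visible in the output.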