CS 194: Project 1

Theodora Worledge

Alignment Part 1

I aligned the R and G color channels to the B channel by performing an exhaustive search over horizontal and vertical displacements in [-15, 15]. The search identified the offsets that minimized the L2 loss between R and B, and between G and B.

- Before performing the exhaustive search, I cropped a margin of 1/18th of the image's dimensions from every side of each R, G, and B image. This was necessary because the borders contained data unrelated to the image I was trying to align.

- My variant of L2 loss is the mean squared difference computed only over pixel pairs in which neither pixel is exactly 0. This modification was necessary because translating an image by horizontal and vertical offsets introduces black (zero-valued) pixels along its borders.

- After identifying the optimal alignment, I applied it to the original, uncropped images.

- This alignment scheme took under 7 seconds for each of these jpeg images.
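The procedure above can be sketched in Python with NumPy. It covers border cropping, a translation that exposes zero-valued pixels, the zero-excluding L2 variant, and a ±15 exhaustive search. This is a minimal sketch under assumptions, not the code that produced these results:

- All function names are hypothetical.
- Channels are assumed to be 2-D arrays of equal shape.
- B is the fixed reference.
- Offsets are returned as (dx, dy).
- The 1/18 margin is taken as 1/18 of each image dimension.

```python
import numpy as np


def crop_border(channel: np.ndarray, denom: int = 18) -> np.ndarray:
    """Drop a margin of 1/denom of the height and width from every side.
    (Assumed reading of "1/18th of the border".)"""
    h, w = channel.shape
    my, mx = h // denom, w // denom
    return channel[my:h - my, mx:w - mx]


def shift_zero_fill(img: np.ndarray, dx: int, dy: int) -> np.ndarray:
    """Translate img by dx columns (right if positive) and dy rows (down if
    positive). Unlike np.roll, uncovered pixels become 0 instead of wrapping,
    which produces the black borders the loss has to ignore."""
    h, w = img.shape
    out = np.zeros_like(img)
    # Overlapping region in source and destination; max(0, ...) keeps the
    # slices empty (instead of negative) when the shift exceeds the image size.
    src_y = slice(max(0, -dy), max(0, min(h, h - dy)))
    dst_y = slice(max(0, dy), max(0, min(h, h + dy)))
    src_x = slice(max(0, -dx), max(0, min(w, w - dx)))
    dst_x = slice(max(0, dx), max(0, min(w, w + dx)))
    out[dst_y, dst_x] = img[src_y, src_x]
    return out


def masked_l2(a: np.ndarray, b: np.ndarray) -> float:
    """Mean squared difference over pixel pairs where neither value is exactly 0."""
    mask = (a != 0) & (b != 0)
    if not mask.any():
        return np.inf
    diff = a[mask].astype(np.float64) - b[mask].astype(np.float64)
    return float(np.mean(diff ** 2))


def exhaustive_align(moving: np.ndarray, reference: np.ndarray, radius: int = 15):
    """Try every (dx, dy) in [-radius, radius]^2 and keep the one with the
    lowest masked_l2 between the shifted moving channel and the reference."""
    best, best_loss = (0, 0), np.inf
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            loss = masked_l2(shift_zero_fill(moving, dx, dy), reference)
            if loss < best_loss:
                best, best_loss = (dx, dy), loss
    return best


# Hypothetical usage, with r, g, b as same-shape float channels:
#   r_off = exhaustive_align(crop_border(r), crop_border(b))
#   g_off = exhaustive_align(crop_border(g), crop_border(b))
#   r_aligned = shift_zero_fill(r, *r_off)  # offset applied to the uncropped channel
```

Searching the cropped channels but applying the offset to the uncropped ones mirrors the step described above. Only the offset is carried over, not the crop.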

R: (3, 12); G: (2, 5)

R: (2, 3); G: (2, -3)

R: (3, 6); G: (3, 3)

Alignment Part 2

I implemented the recursive image pyramid scheme to identify the offsets that minimized the same variant of L2 loss between R and B, and between G and B. I also used the same pre-processing step of cropping out the borders as above.

- The jpeg images required 3 layers of recursion and the tif images required either 6 or 7 layers of recursion to run quickly and output the correct alignment.

- The recursive search was much faster than the exhaustive search. It aligned each jpeg image in under a second and each tif image in under 50 seconds, except for two images that took just under 68 seconds.
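A coarse-to-fine version of the search can be sketched as below. This is a hedged sketch, not the implementation behind the timings above. It repeats the zero-filling shift and the zero-excluding loss so that it runs on its own. The following choices are all assumptions:

- downsampling by 2x2 box averaging;
- a ±15 exhaustive search at the coarsest level;
- a ±2 refinement window at every finer level.

The function names are hypothetical. `levels` roughly corresponds to the number of recursion layers mentioned above.

```python
import numpy as np


def shift_zero_fill(img, dx, dy):
    """Translate by dx columns right and dy rows down; uncovered pixels become 0."""
    h, w = img.shape
    out = np.zeros_like(img)
    src_y = slice(max(0, -dy), max(0, min(h, h - dy)))
    dst_y = slice(max(0, dy), max(0, min(h, h + dy)))
    src_x = slice(max(0, -dx), max(0, min(w, w - dx)))
    dst_x = slice(max(0, dx), max(0, min(w, w + dx)))
    out[dst_y, dst_x] = img[src_y, src_x]
    return out


def masked_l2(a, b):
    """Mean squared difference over pixel pairs where neither value is exactly 0."""
    mask = (a != 0) & (b != 0)
    if not mask.any():
        return np.inf
    diff = a[mask].astype(np.float64) - b[mask].astype(np.float64)
    return float(np.mean(diff ** 2))


def downsample2(img):
    """Halve the resolution by averaging non-overlapping 2x2 blocks
    (an odd trailing row or column is dropped). Assumed downsampling method."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    x = img[:h, :w].astype(np.float64)
    return 0.25 * (x[0::2, 0::2] + x[1::2, 0::2] + x[0::2, 1::2] + x[1::2, 1::2])


def local_search(moving, reference, center, radius):
    """Best (dx, dy) within +/-radius of center under masked_l2."""
    cx, cy = center
    best, best_loss = center, np.inf
    for dy in range(cy - radius, cy + radius + 1):
        for dx in range(cx - radius, cx + radius + 1):
            loss = masked_l2(shift_zero_fill(moving, dx, dy), reference)
            if loss < best_loss:
                best, best_loss = (dx, dy), loss
    return best


def pyramid_align(moving, reference, levels, base_radius=15, refine_radius=2):
    """Recursive coarse-to-fine alignment of `moving` onto `reference`."""
    if levels == 0:
        # Coarsest level: exhaustive search, as in Part 1.
        return local_search(moving, reference, (0, 0), base_radius)
    # Estimate the offset at half resolution first.
    cdx, cdy = pyramid_align(downsample2(moving), downsample2(reference),
                             levels - 1, base_radius, refine_radius)
    # A shift of d pixels at half resolution is about 2d pixels here,
    # so only a small window around the doubled estimate is searched.
    return local_search(moving, reference, (2 * cdx, 2 * cdy), refine_radius)
```

In this sketch each finer level checks only (2·2+1)² = 25 candidate offsets around the doubled estimate, instead of the 31² = 961 offsets of a ±15 exhaustive search. This is where the speedup comes from.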

R: (3, 12); G: (2, 5)

R: (2, 3); G: (2, -3)

R: (3, 6); G: (3, 3)

R: (-4, 59); G: (4, 25)

R: (13, 124); G: (16, 60)

R: (23, 89); G: (17, 41)

R: (11, 117); G: (8, 56)

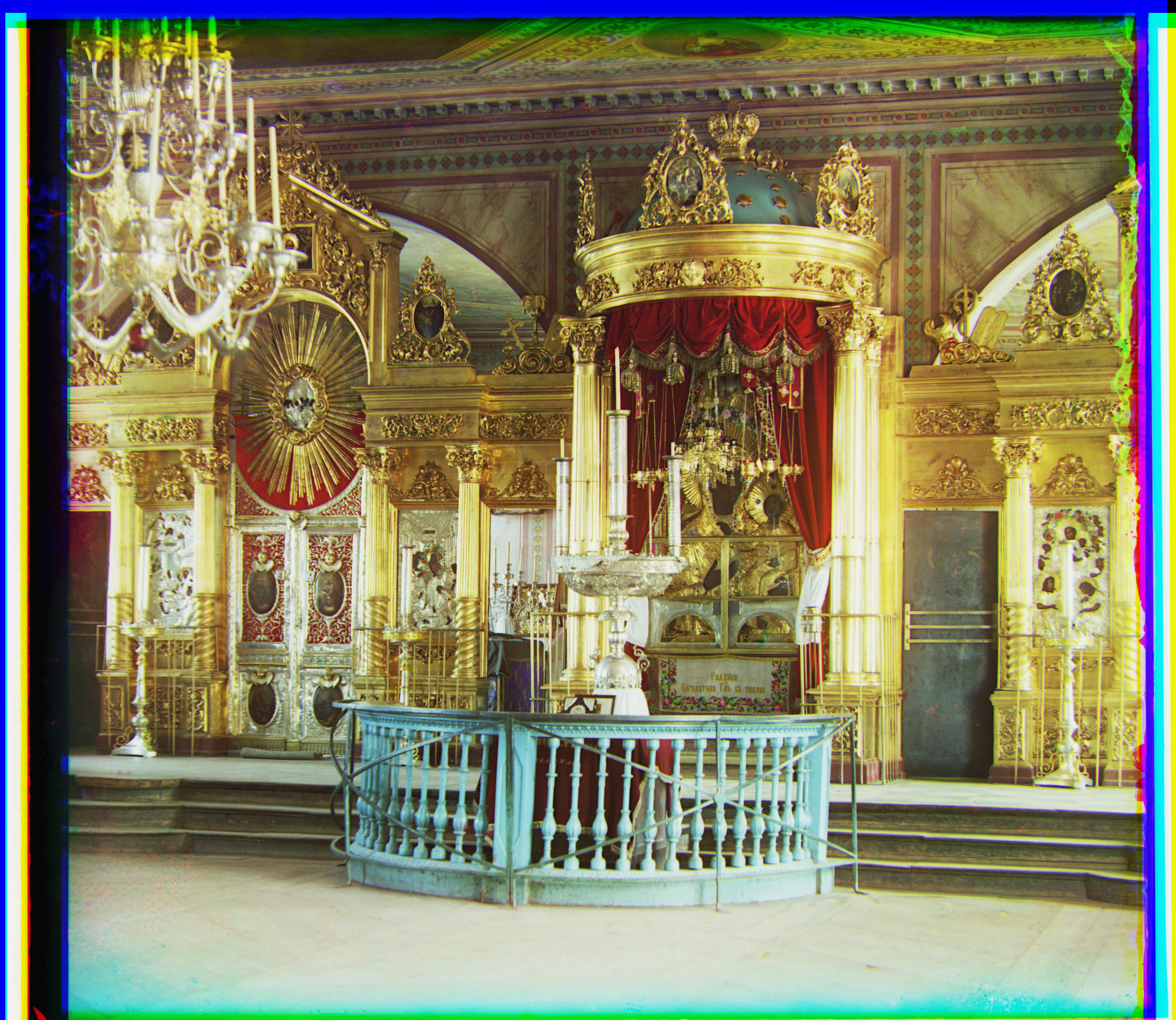

R: (13, 178); G: (10, 82)

R: (36, 108); G: (26, 52)

R: (36, 176); G: (29, 79)

R: (11, 112); G: (14, 54)

R: (32, 87); G: (6, 42)

R: (-12, 104); G: (0, 53)

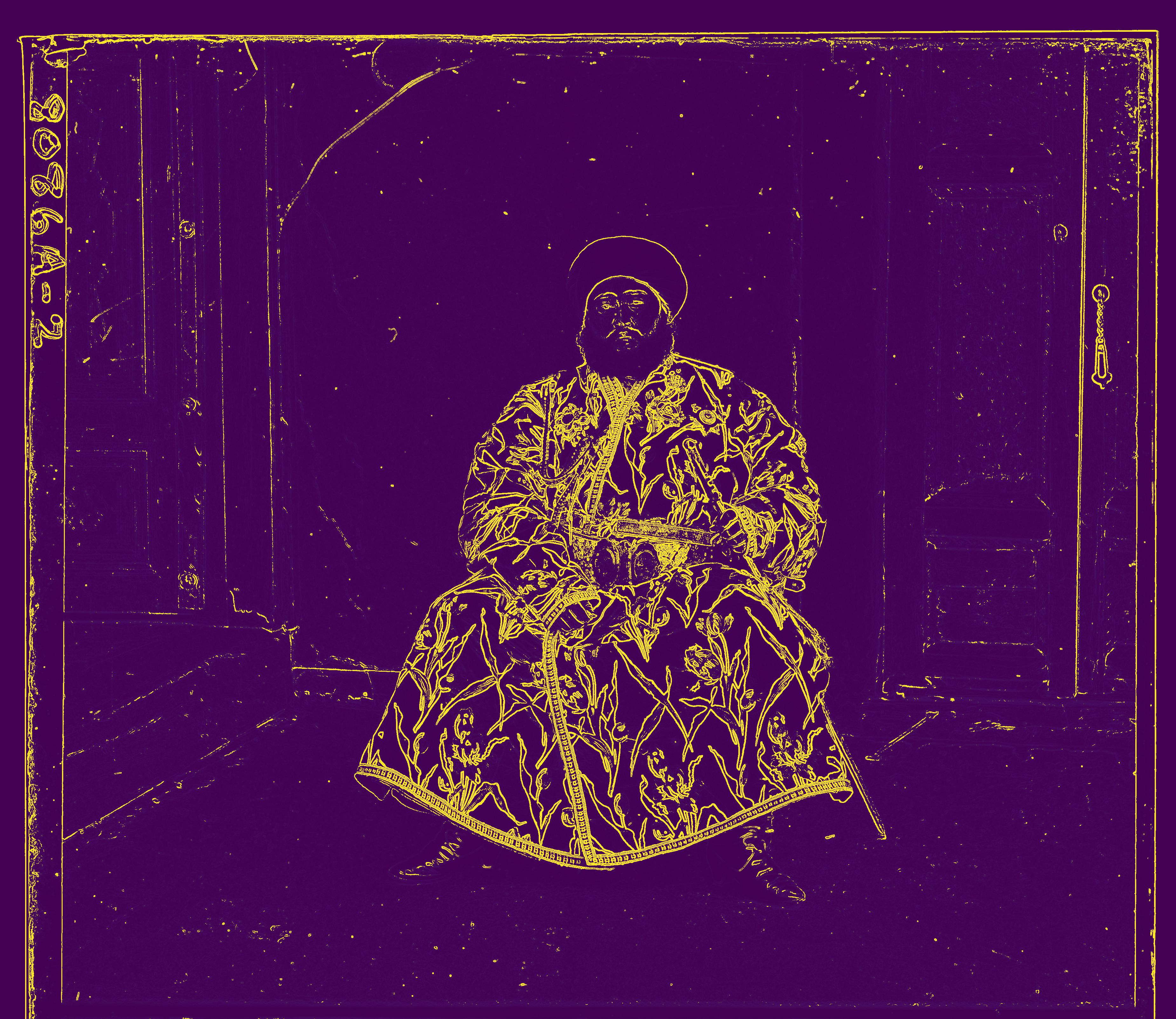

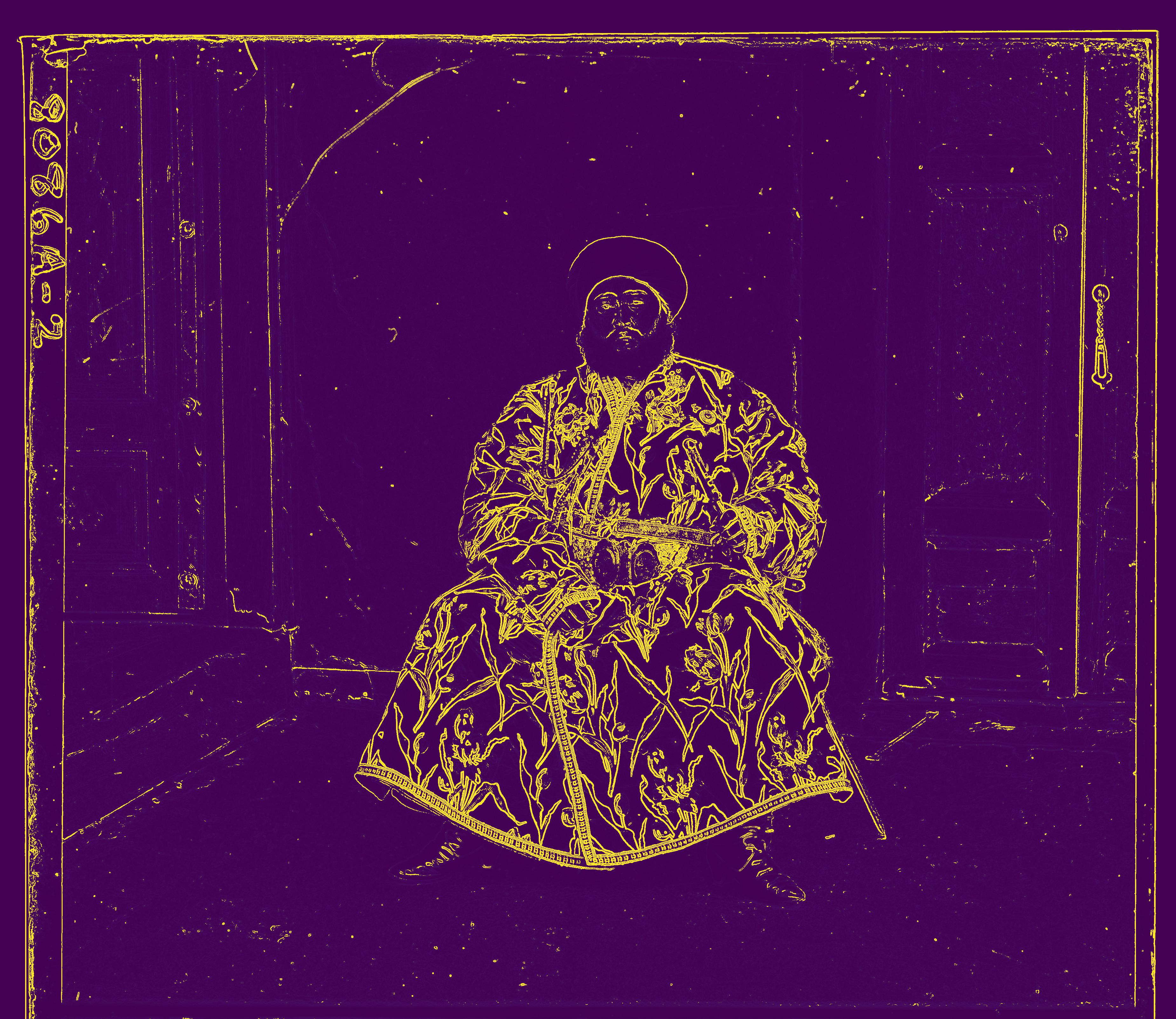

R: (-299, 83); G: (24, 49)

B&W: Implementing and Using Edge Detection

The color channels of the Emir of Bukhara image differ in brightness, so L2 loss is not an accurate measure of alignment, which is why the previous alignment scheme fails. Instead of feeding the original R, G, and B images into the pyramid alignment algorithm, I pre-processed each color channel by applying an edge detection filter. More specifically, I used two 3x3 filters: one to identify horizontal edges and the other to identify vertical edges.

Filter for horizontal edges:

Filter for vertical edges:

At each location in the image, both filters were applied and their responses were added, then scaled. Values greater than a threshold were set to 1. This produced the following images for the R, G, and B channels:

Using these as inputs to pyramid alignment yields the (after) image on the left, a big improvement over the (before) image on the right :)

R: (40, 107); G: (23, 49)

R: (-299, 83); G: (24, 49)
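The edge-map preprocessing described above can be sketched as follows. The two 3x3 kernels are not given in the text, so the Sobel kernels here are stand-ins. The following are also assumptions, not the writeup's exact choices:

- taking absolute values of the two responses before adding them;
- scaling by the maximum response;
- the 0.5 threshold.

The function names are hypothetical.

```python
import numpy as np

# ASSUMPTION: Sobel kernels stand in for the writeup's unlisted 3x3 filters.
SOBEL_H = np.array([[-1, -2, -1],
                    [ 0,  0,  0],
                    [ 1,  2,  1]], dtype=np.float64)  # responds to horizontal edges
SOBEL_V = SOBEL_H.T                                   # responds to vertical edges


def filter3x3(img, kernel):
    """Correlate img with a 3x3 kernel (no kernel flip), padding by edge
    replication so the output has the same shape as the input."""
    padded = np.pad(img.astype(np.float64), 1, mode="edge")
    h, w = img.shape
    out = np.zeros((h, w), dtype=np.float64)
    for i in range(3):
        for j in range(3):
            out += kernel[i, j] * padded[i:i + h, j:j + w]
    return out


def edge_map(channel, threshold=0.5):
    """Add the absolute horizontal- and vertical-edge responses, scale the
    result to [0, 1] by its maximum, then set values above threshold to 1."""
    resp = np.abs(filter3x3(channel, SOBEL_H)) + np.abs(filter3x3(channel, SOBEL_V))
    peak = resp.max()
    if peak > 0:
        resp = resp / peak
    resp[resp > threshold] = 1.0
    return resp
```

Flat regions come out as exact zeros in this sketch. Feeding these maps to the zero-excluding L2 variant would therefore discard most pixel pairs. One assumed alternative is to compare the edge maps with a plain mean squared difference over the overlapping region.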

Additional Photos

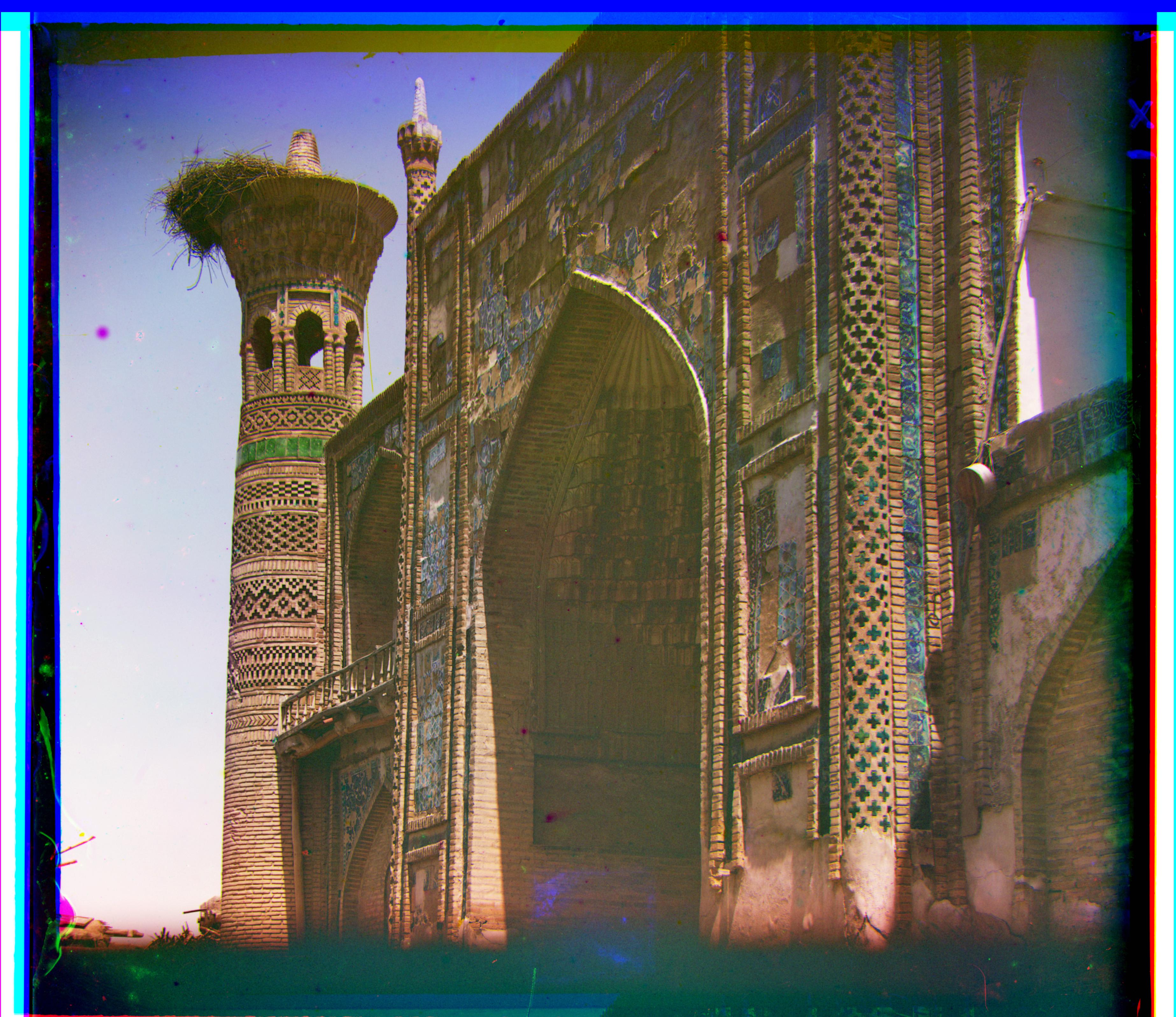

R: (286, 86); G: (26, 14)

R: (13, 60); G: (8, 8)

R: (-7, 97); G: (3, 38)

The leftmost image can be aligned correctly using the same edge detection preprocessing step used for the Emir of Bukhara image.

R: (51, 84); G: (27, 14)