In the early 20th century, Sergei Mikhailovich Prokudin-Gorskii photographed each scene three times on a glass plate: once through a blue filter, once through a green filter, and once through a red filter. Today, this lets us recreate the colored world Prokudin-Gorskii was able to see. This project takes one of the images that Prokudin-Gorskii took, aligns the three color channels in it, and merges them into a single color photo. I created an exhaustive search implementation for jpg images and a pyramid search implementation for tif images.

Both my original exhaustive search and pyramid search implementations were extremely simple—I didn't even use cropping at first.

The first thing I had to consider was what image matching metric to use:

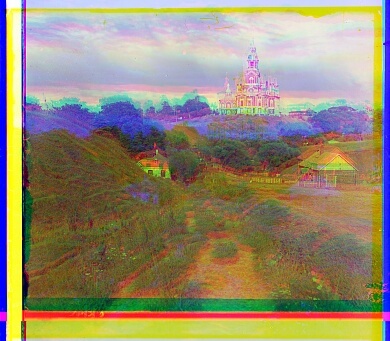

SSD was significantly better than NCC, so I ended up using SSD for the rest of my images. On the left is cathedral.jpg aligned with SSD (green alignment=(1, -1), red alignment=(7, -1)), and on the right is cathedral.jpg aligned with NCC (green alignment=(-20, 14), red alignment=(-20, 13)).
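To make the comparison concrete, here is a minimal sketch of the two metrics and an exhaustive search over a window. The function names, the `(row_min, row_max, col_min, col_max)` reading of the window, and the use of `np.roll` for shifting are my assumptions for illustration, not necessarily how the original code was written.

```python
import numpy as np

def ssd(a, b):
    """Sum of squared differences: lower means more similar."""
    a = a.astype(np.float64)
    b = b.astype(np.float64)
    return float(np.sum((a - b) ** 2))

def ncc(a, b):
    """Normalized cross-correlation: higher means more similar."""
    a = a.astype(np.float64) - a.mean()
    b = b.astype(np.float64) - b.mean()
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(np.sum(a * b) / denom) if denom > 0 else 0.0

def exhaustive_align(channel, reference, window=(-20, 20, -20, 20)):
    """Try every (row, col) shift in the window and keep the lowest-SSD one.

    Assumption: the window is (row_min, row_max, col_min, col_max), inclusive.
    """
    r_min, r_max, c_min, c_max = window
    best_shift, best_score = (0, 0), float("inf")
    for dr in range(r_min, r_max + 1):
        for dc in range(c_min, c_max + 1):
            # np.roll wraps pixels around the border instead of padding.
            shifted = np.roll(channel, (dr, dc), axis=(0, 1))
            score = ssd(shifted, reference)
            if score < best_score:
                best_shift, best_score = (dr, dc), score
    return best_shift
```

To search with NCC instead, the comparison would flip to keep the highest score rather than the lowest.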

Exhaustive search and pyramid search worked pretty well. At first, I tried using the same alignment window in pyramid search as I did in exhaustive search. This was good, but aligning took as much as two minutes per picture, and I didn't really want to tune parameters at that speed. I realized that a shift found at a coarse level is scaled up at every finer level, so the same alignment window covers exponentially more of the full-resolution image as the pyramid gets deeper, and there wasn't as much of a need for an alignment window of $$(-20, 20, -20, 20)$$. When I changed the alignment window to $$(-5, 5, -5, 5)$$, only Emir.tif was affected negatively. I ultimately decided to use an alignment window of $$(-10, 10, -10, 10)$$. Below is Emir.tif with an alignment window of $$(-5, 5, -5, 5)$$ (green alignment=(-3, 7), red alignment=(108, -789)).
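The coarse-to-fine idea can be sketched roughly as follows. This is only an illustration under my own assumptions: a factor-of-2 pyramid built by 2x2 averaging, the same search radius at every level, and hypothetical names (`search`, `downsample`, `pyramid_align`, `min_size`).

```python
import numpy as np

def ssd(a, b):
    return float(np.sum((a.astype(np.float64) - b.astype(np.float64)) ** 2))

def search(channel, reference, center, radius):
    """Exhaustive SSD search over shifts within `radius` of `center`."""
    best, best_score = center, float("inf")
    for dr in range(center[0] - radius, center[0] + radius + 1):
        for dc in range(center[1] - radius, center[1] + radius + 1):
            score = ssd(np.roll(channel, (dr, dc), axis=(0, 1)), reference)
            if score < best_score:
                best, best_score = (dr, dc), score
    return best

def downsample(img):
    """Halve resolution by averaging 2x2 blocks (an odd trailing row/col is dropped)."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    img = img[:h, :w].astype(np.float64)
    return (img[0::2, 0::2] + img[1::2, 0::2] + img[0::2, 1::2] + img[1::2, 1::2]) / 4.0

def pyramid_align(channel, reference, radius=10, min_size=64):
    """Solve at half resolution first, double that shift, then refine around it."""
    if min(channel.shape) <= min_size:
        return search(channel, reference, (0, 0), radius)
    coarse = pyramid_align(downsample(channel), downsample(reference), radius, min_size)
    center = (2 * coarse[0], 2 * coarse[1])
    return search(channel, reference, center, radius)
```

With a radius of 10 and two halvings, the coarsest level alone already reaches shifts of about 40 pixels at full resolution, which is why a smaller window goes much further here than it does in a single-level search.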

Exhaustive search and pyramid search worked ok on my images: in fact, some images were already pretty well aligned without any additional changes to the searching algorithm.

However, I wanted all of the images to look good, so before running a search algorithm to find the best alignment of the images, I cropped them. Since the jpg images are vastly different sizes from the tif images, I wrote a function to crop images based on percentage before aligning.

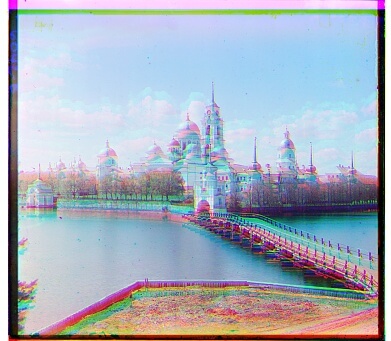

This was great for images like monastery.jpg, but it didn't completely fix all of the misalignment issues in the other images. On the left is monastery.jpg aligned without cropping (green alignment=(-6, 0), red alignment=(9, 1)), and on the right is monastery.jpg aligned after cropping (green alignment=(-3, 2), red alignment=(3, 1)).
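A percentage-based crop like the one described above could look something like this. The name `crop_fraction` and the default of 10% per side are placeholders I picked for the sketch; the write-up doesn't state the actual percentage.

```python
import numpy as np

def crop_fraction(img, fraction=0.1):
    """Remove `fraction` of the height and width from each side of the image."""
    if not 0 <= fraction < 0.5:
        raise ValueError("fraction must be in [0, 0.5)")
    h, w = img.shape[:2]
    dh, dw = int(h * fraction), int(w * fraction)
    return img[dh:h - dh, dw:w - dw]
```

Because the crop is relative to the image size, the same call works for both the small jpg images and the much larger tif scans.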

Some images like lady.tif looked a bit blurry. Since I got a "low-contrast image" warning when I tried to save lady.tif, I figured that it looked blurry because it was so low contrast. To handle this, I used the Sobel edge detection filter on the image and ran the alignment algorithm on the image's edges.
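For reference, here is a small hand-rolled version of a Sobel gradient magnitude in plain NumPy. It's a sketch of the technique only: I'm assuming replicated borders and no normalization, and a library filter may scale its output differently.

```python
import numpy as np

def sobel_magnitude(img):
    """Gradient magnitude from the 3x3 Sobel kernels, with edge-replicated borders."""
    p = np.pad(img.astype(np.float64), 1, mode="edge")
    # Horizontal gradient: right column minus left column, weighted 1-2-1 vertically.
    gx = (p[:-2, 2:] + 2 * p[1:-1, 2:] + p[2:, 2:]
          - p[:-2, :-2] - 2 * p[1:-1, :-2] - p[2:, :-2])
    # Vertical gradient: bottom row minus top row, weighted 1-2-1 horizontally.
    gy = (p[2:, :-2] + 2 * p[2:, 1:-1] + p[2:, 2:]
          - p[:-2, :-2] - 2 * p[:-2, 1:-1] - p[:-2, 2:])
    return np.hypot(gx, gy)
```

The alignment search would then compare `sobel_magnitude(channel)` against `sobel_magnitude(reference)` instead of the raw pixel values, and apply the resulting shift to the original channel.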

Finally, I wanted my photos to look wonderful. More importantly, I was trying to figure out how to deal with the "low-contrast image" warning while saving my images. I added contrast stretching by stretching the cumulative histograms of the images so that they would look more eye-catching. However, despite my efforts, I was not able to align lady.tif, probably because its contrast was so low. Below are the before-and-after results of using edge detection and adding contrast stretching. The borders of the second picture are cropped out since they affected the results of contrast stretching.
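Stretching via the cumulative histogram is essentially histogram equalization, so here is a minimal sketch of that for one channel. I'm assuming float intensities in [0, 1] and 256 bins; `equalize` is a hypothetical name, not the function from the project.

```python
import numpy as np

def equalize(channel, bins=256):
    """Map intensities in [0, 1] through the normalized cumulative histogram."""
    hist, bin_edges = np.histogram(channel, bins=bins, range=(0.0, 1.0))
    cdf = np.cumsum(hist).astype(np.float64)
    cdf /= cdf[-1]  # normalize so the brightest occupied bin maps to 1.0
    centers = (bin_edges[:-1] + bin_edges[1:]) / 2
    # Look up each pixel's new value by interpolating along the CDF.
    return np.interp(channel.ravel(), centers, cdf).reshape(channel.shape)
```

Dark borders add a lot of near-black pixels to the histogram, which skews the mapping for the rest of the picture; that's consistent with cropping the borders before stretching.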

green alignment=(58,-22); red alignment=(123,-9)

blue alignment=(-58,9); red alignment=(64,-12)

Professor Efros told us that removing green from a picture makes it look blurrier, which suggests that humans find green more important. Some backstory: back in high school, I loved neuroscience, and I once talked to a professor who studied the retina. She told me that the human eye was specifically designed for Earth: if we ended up on another planet, our eyes wouldn't work as well (fun fact: the retina predicts movement of objects like a ball flying towards you). Maybe green is just more prevalent on Earth.

So I tried using the green channel as the alignment reference instead of blue. Emir.tif actually looked slightly worse, but some of the final images like harvesters.jpg, using edge detection and contrast stretching, looked slightly better, so I ended up using the green-aligned images.
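Switching the reference channel mostly changes which channels get shifted. As a rough sketch, assuming the blue and red shifts have already been found by one of the searches (all names here are hypothetical):

```python
import numpy as np

def compose_green_reference(b, g, r, blue_shift, red_shift):
    """Shift blue and red onto the fixed green channel and stack them as RGB."""
    b_aligned = np.roll(b, blue_shift, axis=(0, 1))
    r_aligned = np.roll(r, red_shift, axis=(0, 1))
    return np.dstack([r_aligned, g, b_aligned])
```

For example, `compose_green_reference(b, g, r, (-6, 0), (7, 0))` would move blue up by 6 rows and red down by 7 rows while leaving green untouched.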

Below is the blue-aligned image of Emir, without using edge detection and adding contrast. green alignment=(16,16); red alignment=(106,8)

Below is the green-aligned image of Emir, without using edge detection and adding contrast. This is clearly worse than the blue-aligned image above. blue alignment=(-16,-8); red alignment=(104,9)

Below is the blue-aligned image of the harvesters, using edge detection and adding contrast. green alignment=(60,-13); red alignment=(138,-6)

Below is the green-aligned image of the harvesters, using edge detection and adding contrast. There is more detail in the picture than in the blue-aligned version. blue alignment=(-60,6); red alignment=(65,-4)

See the final images here.

blue alignment=(-6,0); red alignment=(7,0)

blue alignment=(-6,0); red alignment=(5,0)

blue alignment=(-8,-1); red alignment=(9,1)