Stephen Yang's CS194 Project 1

Approaches

Part 1

The exhaustive search method was the first one I implemented. It searches over a window of possible displacements, which defaults to [-15, 15] pixels, scores each displacement with one of my image-matching metrics (either Sum of Squared Differences or Normalized Cross-Correlation), and takes the displacement with the best score. For Emir of Bukhara, however, I tried another technique, which is discussed later.
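A minimal NumPy sketch of this exhaustive search follows, assuming each channel is a 2D float array and that shifts are applied with `np.roll` (which wraps pixels around the edges). The names `ssd`, `ncc`, and `exhaustive_align` are hypothetical illustrations, not the project's actual code. The [-15, 15] default window comes from the text above.

```python
import numpy as np

def ssd(a, b):
    """Sum of Squared Differences: lower means a better match."""
    return np.sum((a - b) ** 2)

def ncc(a, b):
    """Normalized Cross-Correlation: higher means a better match (at most 1)."""
    a0 = a - a.mean()
    b0 = b - b.mean()
    return np.sum(a0 * b0) / (np.linalg.norm(a0) * np.linalg.norm(b0) + 1e-12)

def exhaustive_align(moving, reference, window=15, metric="ssd"):
    """Try every (dy, dx) in [-window, window]^2 and keep the best-scoring shift."""
    best_score, best_shift = -np.inf, (0, 0)
    for dy in range(-window, window + 1):
        for dx in range(-window, window + 1):
            # np.roll wraps pixels around; cropping borders limits the effect.
            shifted = np.roll(moving, (dy, dx), axis=(0, 1))
            # Negate SSD so that "higher is better" holds for both metrics.
            if metric == "ssd":
                score = -ssd(shifted, reference)
            else:
                score = ncc(shifted, reference)
            if score > best_score:
                best_score, best_shift = score, (dy, dx)
    return best_shift
```

For example, `exhaustive_align(green, blue, metric="ncc")` would return the `(dy, dx)` that, when applied to the green channel, best matches the blue one.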

Part 2

The pyramid search method builds on the exhaustive one and offers a better run time. Simply put, it first scales the images down; once I know the displacement between the lower-resolution versions, I scale that estimate up and search only a small window around it at the next higher resolution. This way I never search a massive window at high resolution, which saves time and computation.
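The coarse-to-fine idea can be sketched as a short recursion. Several assumptions apply: each level is downscaled by block-averaging with an integer `factor` (defaulted to 4 as my reading of the base-4 choice below), the coarsest level gets the full [-15, 15] search, and each finer level re-searches only ±`factor` pixels around the scaled-up estimate. `ssd_align`, `downscale`, `pyramid_align`, and `min_size` are hypothetical names and parameters, not the project's own.

```python
import numpy as np

def ssd_align(moving, reference, window):
    """Exhaustive SSD search over [-window, window] in both axes."""
    best, best_shift = np.inf, (0, 0)
    for dy in range(-window, window + 1):
        for dx in range(-window, window + 1):
            shifted = np.roll(moving, (dy, dx), axis=(0, 1))
            score = np.sum((shifted - reference) ** 2)
            if score < best:
                best, best_shift = score, (dy, dx)
    return best_shift

def downscale(img, factor):
    """Shrink by an integer factor by averaging factor x factor blocks."""
    h = img.shape[0] - img.shape[0] % factor
    w = img.shape[1] - img.shape[1] % factor
    blocks = img[:h, :w].reshape(h // factor, factor, w // factor, factor)
    return blocks.mean(axis=(1, 3))

def pyramid_align(moving, reference, factor=4, min_size=64, window=15):
    # Coarsest level: stop once another downscale would drop below min_size.
    if min(moving.shape) // factor < min_size:
        return ssd_align(moving, reference, window)
    # Estimate the shift on the downscaled pair, then scale it back up.
    cy, cx = pyramid_align(downscale(moving, factor), downscale(reference, factor),
                           factor, min_size, window)
    dy, dx = cy * factor, cx * factor
    # Refine with a small search around the scaled-up estimate.
    shifted = np.roll(moving, (dy, dx), axis=(0, 1))
    ry, rx = ssd_align(shifted, reference, window=factor)
    return dy + ry, dx + rx
```

The refinement window of ±`factor` is a design choice: a one-pixel error at the coarser level becomes roughly `factor` pixels at the finer one, so that is the smallest window that can still correct it.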

Note that the base of the logarithmic scale was set to 4 empirically: while I was experimenting, a number of pictures were either misaligned or running too slowly because of the excessive number of resizing iterations. The problem was solved by
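To see why a larger base cuts down the resizing iterations, here is a small count of pyramid levels. It assumes the base is the per-level downscale factor and that downscaling stops before a side drops below a hypothetical `min_size` of 64 pixels; the 3200-pixel side is only an illustrative size.

```python
def pyramid_levels(size, factor, min_size=64):
    """Count how many times `size` can be divided by `factor` while staying >= min_size."""
    levels = 0
    while size // factor >= min_size:
        size //= factor
        levels += 1
    return levels

print(pyramid_levels(3200, 2))  # 5
print(pyramid_levels(3200, 4))  # 2
```

Each level means one more resize plus one more refinement search, so base 4 does fewer passes than base 2, at the cost of a wider refinement window per level.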

Bells & Whistles (Extra Credit)

Edge Detection

I implemented edge detection myself, as a better feature for matching displacements, by applying a simple convolution to the input image. Edge detection suits this task because a good alignment naturally lines up all the edges in an image, rather than its lightness and darkness.
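The write-up does not say which kernel the convolution used, so the sketch below assumes Sobel kernels and computes the gradient magnitude with a hand-rolled "valid" convolution in plain NumPy. `convolve2d_valid` and `edge_map` are hypothetical names.

```python
import numpy as np

# Sobel kernels (an assumption; the original kernel is not specified).
SOBEL_X = np.array([[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]], dtype=float)
SOBEL_Y = SOBEL_X.T

def convolve2d_valid(img, kernel):
    """Plain 2D convolution over the 'valid' region (no padding)."""
    kh, kw = kernel.shape
    flipped = kernel[::-1, ::-1]  # true convolution flips the kernel
    h, w = img.shape
    out = np.zeros((h - kh + 1, w - kw + 1))
    # Accumulate one shifted, weighted copy of the image per kernel tap.
    for i in range(kh):
        for j in range(kw):
            out += flipped[i, j] * img[i:i + h - kh + 1, j:j + w - kw + 1]
    return out

def edge_map(img):
    """Gradient magnitude: large on edges, near zero in flat regions."""
    gx = convolve2d_valid(img, SOBEL_X)
    gy = convolve2d_valid(img, SOBEL_Y)
    return np.hypot(gx, gy)
```

The edge maps of two channels (e.g. `edge_map(red)` and `edge_map(blue)`) can then be fed to the same displacement search in place of the raw pixel values.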

White Balance

I referred to a blog post on white balance (see the references at the bottom of the page) and implemented my algorithm in a similar style, normalizing each color channel by its value at a specific percentile. I empirically chose the 95th percentile, as 100 would be too bright and percentiles below 90 would be too dark. Results are shown and contrasted in the next section.
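A minimal sketch of percentile-based white balancing, assuming an H x W x 3 float image: each channel is divided by its own 95th-percentile value and the result is clipped to [0, 1]. The function name `white_balance` is hypothetical, and this is not necessarily the exact formula from the referenced blog post.

```python
import numpy as np

def white_balance(img, percentile=95):
    """Scale each channel so its `percentile` value maps to 1.0, then clip."""
    img = img.astype(float)
    # One reference value per color channel, taken over all pixels.
    ref = np.percentile(img, percentile, axis=(0, 1))
    ref = np.maximum(ref, 1e-8)  # avoid dividing by zero on an empty channel
    return np.clip(img / ref, 0.0, 1.0)
```

Because each channel gets its own scale factor, a color cast (one channel systematically dimmer than the others) is evened out.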

Results

PART 1 & 2

Below are the results using SSD / NCC with 10% cropping, which keeps the noisy plate borders from throwing off the alignment. The displacements are nearly identical across the two metrics, so each image is presented only once here; please refer to the folder for pictures produced with both the SSD and NCC losses. Note that ALL photos align well EXCEPT Emir of Bukhara, whose color channels do not actually share the same brightness values. That picture does align with edge detection, as described above, and the corresponding result is presented in the next section.
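The border cropping used during scoring can be sketched as follows. It assumes that "10% cropping" means trimming 10% of the height and width from every side, and that only the score is computed on the cropped interior while the shift is still applied to the full channel. `crop_border` and `cropped_ssd` are hypothetical names.

```python
import numpy as np

def crop_border(img, frac=0.1):
    """Drop `frac` of the height and width from every side."""
    h, w = img.shape[:2]
    dh, dw = int(h * frac), int(w * frac)
    return img[dh:h - dh, dw:w - dw]

def cropped_ssd(a, b, frac=0.1):
    """SSD computed only on the interior, so noisy plate borders are ignored."""
    return np.sum((crop_border(a, frac) - crop_border(b, frac)) ** 2)
```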

Displacement: 5, 2 and 12, 3 respectively for aligning Green and Red to Blue pieces.

Displacement: -3, 2 and 3, 2 respectively for aligning Green and Red to Blue pieces.

Displacement: 3, 3 and 6, 3 respectively for aligning Green and Red to Blue pieces.

Displacement: 25, 4 and 58, -4 respectively for aligning Green and Red to Blue pieces.

Displacement: 59, 16 and 123, 13 respectively for aligning Green and Red to Blue pieces.

Displacement: 41, 17 and 89, 23 respectively for aligning Green and Red to Blue pieces.

Displacement: 51, 9 and 112, 12 respectively for aligning Green and Red to Blue pieces.

Displacement: 51, 27 and 108, 36 respectively for aligning Green and Red to Blue pieces.

Displacement: 33, -11 and 140, -27 respectively for aligning Green and Red to Blue pieces.

Displacement: 78, 29 and 176, 37 respectively for aligning Green and Red to Blue pieces.

Displacement: 53, 14 and 111, 11 respectively for aligning Green and Red to Blue pieces.

Displacement: 42, 5 and 87, 32 respectively for aligning Green and Red to Blue pieces.

Displacement: 49, 24 and 92, -217 respectively for aligning Green and Red to Blue pieces.

Displacement: 81, 10 and 178, 13 respectively for aligning Green and Red to Blue pieces.

Custom Pictures:

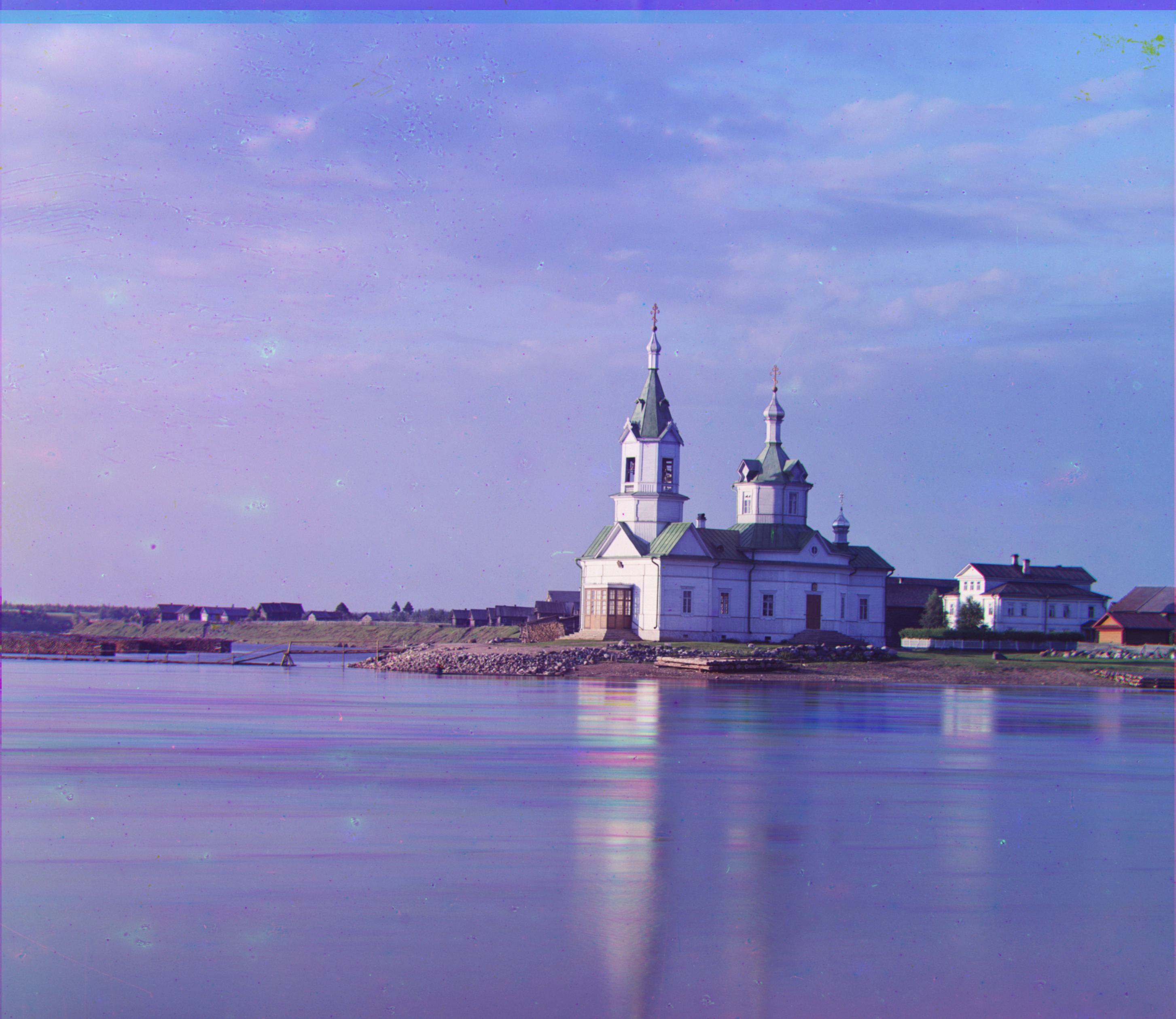

Displacement: 1, 2 and 5, 3 respectively for aligning Green and Red to Blue pieces.

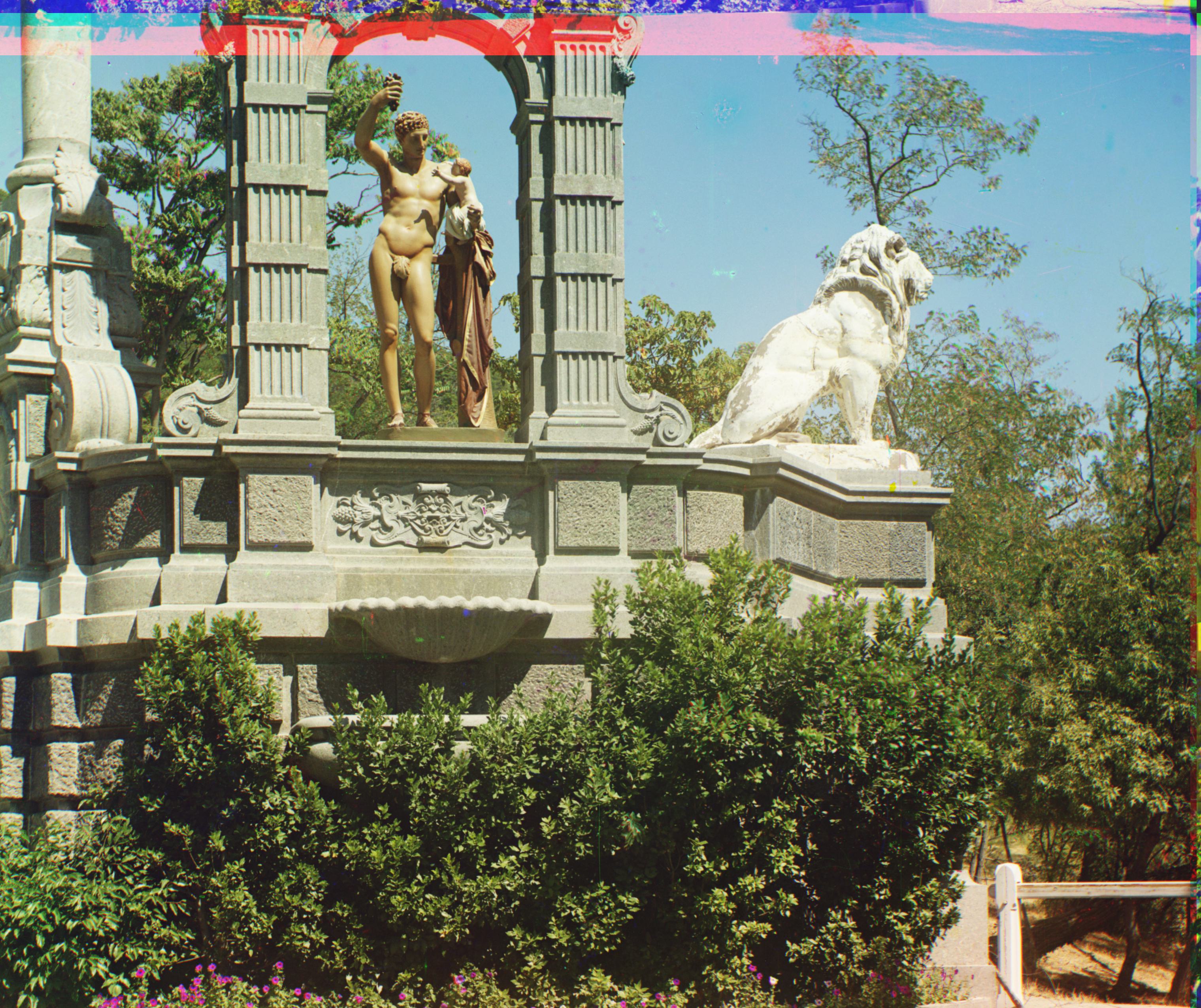

Displacement: 5, -1 and 10, -3 respectively for aligning Green and Red to Blue pieces.

Bells & Whistles (Extra Credit):

Edge Detection for Feature Extraction:

Displacement: 49, 23 and 107, 40 respectively for aligning Green and Red to Blue pieces. This result uses edge detection (part of the extra credit) on Emir of Bukhara and shows a significant improvement over the same image in the previous section.

White Balance

The top image is white balanced by normalizing each color channel with respect to its 95th percentile value; the bottom one is the original, before any white balancing.

References: https://jmanansala.medium.com/image-processing-with-python-color-correction-using-white-balancing-6c6c749886de