CS 194-26: Intro to Computer Vision and Computational Photography, Fall 2021

Project 1: Images of the Russian Empire: Colorizing the Prokudin-Gorskii Photo Collection

Hamza Mohammed

Project Overview

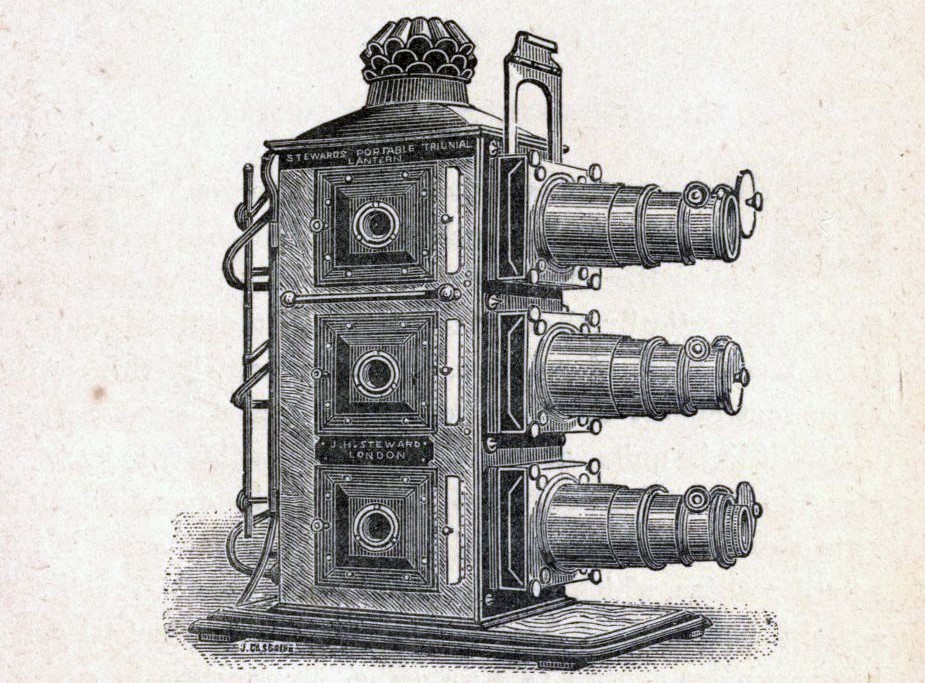

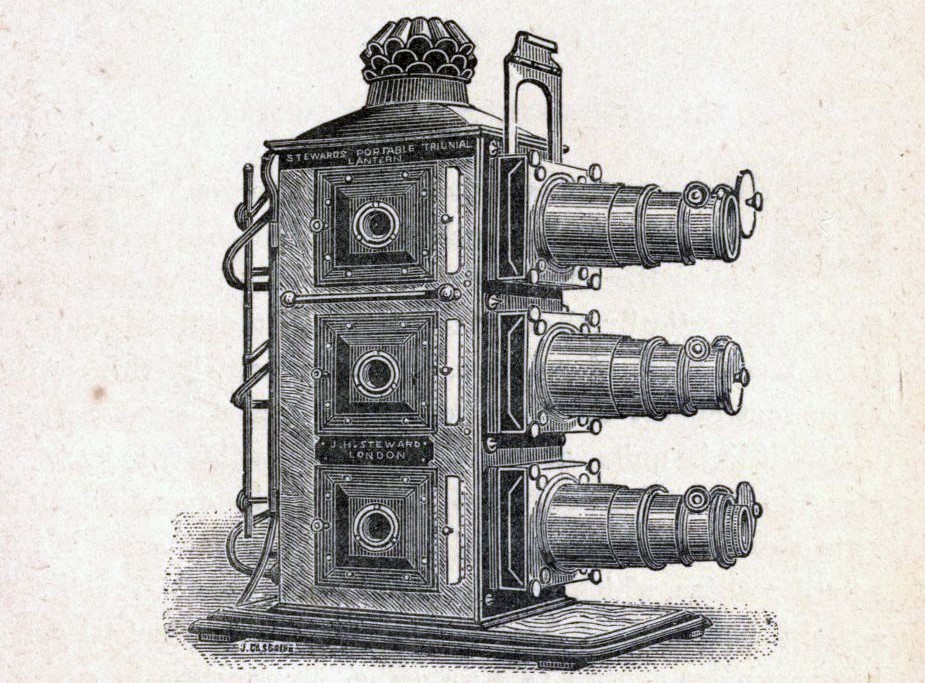

In 1907, the chemist and photographer Sergei Mikhailovich

Prokudin-Gorskii, travelled around the Russian Empire, taking photos of

various landmarks and people he visited using a unique camera apparatus

(Figure 1). The camera was actually 3 cameras stacked on top of each

other, with a color filter. From top to bottom, blue, green, and red

filters. Therefore, each exposure, done on a glass plate, was actually a

stack of 3 exposures, one with a blue filter, the other a green filter,

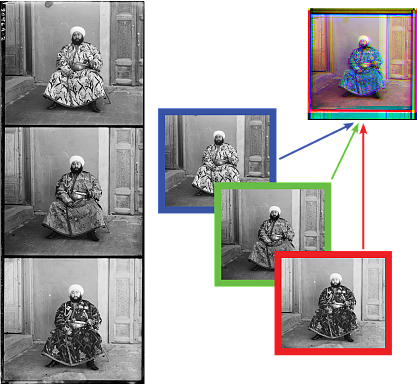

and the final, a red filter. In this manner, stacking the 3 individual

color channel exposures with proper alignment would output a resultant

colorized image of the scene photographed (Figure 2).

Unfortunately, Prokudin-Gorskii did not have the technology or means to do this accurately. Now, more than a century later, technology has caught up, and Prokudin-Gorskii's glass-plate negatives have been preserved and digitized by the Library of Congress. This project aims to develop fast, automated algorithms that reconstruct the colorized images from the digitized copies of Prokudin-Gorskii's original glass-plate negatives as accurately and photorealistically as possible.

Figure 1: Camera used by Sergei Mikhailovich Prokudin-Gorskii to create the glass-plate negatives. Source: Library of Congress

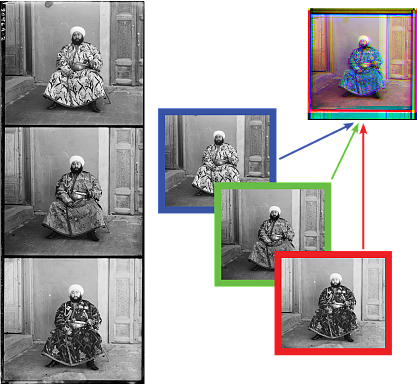

Figure 2: Example glass-plate negative and reconstruction of the colorized RGB image with blue, green, and red filters applied. Source: https://sechtl-vosecek.ucw.cz/en/expozice5.html

Approaches

Exhaustive Search

The most naïve approach is an exhaustive search. Here we shift two of the color channels (ch2 and ch3) to match the reference channel (ch1). For each channel to be aligned, we shift it in the x and y directions by every integer offset in [-15, 15] and use a similarity metric (explained later) to determine which shifted copy is most similar to the reference channel. We then output the corresponding x and y values as the offset for that color channel. Running this on both channels, shifting each by its offset, and stacking the three channels on top of each other gives the colorized image for the glass-plate negative.
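The search described above can be sketched roughly as follows. This is a minimal illustration rather than the project's actual code: the function name `exhaustive_align` is hypothetical, the score is a plain sum of squared differences used as a placeholder metric, and the cropping step is omitted for brevity.

```python
import numpy as np

def exhaustive_align(channel, reference, window=15):
    """Return the (dx, dy) circular shift of `channel` that best matches `reference`.

    Every integer offset in [-window, window] is tried in both x and y.
    The score here is a sum of squared differences (lower is better),
    chosen only as a placeholder metric for this sketch.
    """
    best_offset, best_score = (0, 0), np.inf
    for dy in range(-window, window + 1):
        for dx in range(-window, window + 1):
            # np.roll shifts circularly: axis 0 is y (rows), axis 1 is x (columns).
            shifted = np.roll(channel, (dy, dx), axis=(0, 1))
            score = np.sum((shifted - reference) ** 2)
            if score < best_score:
                best_offset, best_score = (dx, dy), score
    return best_offset
```

Once both offsets are known, each channel can be shifted with `np.roll` and the three channels combined with `np.dstack` in red, green, blue order to form the color image.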

For the similarity metric, both the Sum of Squared Differences (SSD) and Normalized Cross-Correlation (NCC) were used. SSD is the squared L2 (Frobenius) norm of the shifted image minus the reference image, and the best offset minimizes it. NCC is the dot product of the normalized vector of the shifted image with the normalized vector of the reference image, and the best offset maximizes it. Although both metrics yielded identical offsets on the smaller images, NCC ended up being the metric used for the larger images, as its offsets yielded slightly fewer artifacts and less blurring (Figures 3 and 4).
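As an illustration of the two metrics, the following sketch follows the definitions given above. The function names `ssd` and `ncc` are chosen here for convenience, and the NCC version assumes neither image is all zeros.

```python
import numpy as np

def ssd(a, b):
    """Sum of squared differences: lower values mean more similar images."""
    diff = a.astype(np.float64) - b.astype(np.float64)
    return float(np.sum(diff * diff))

def ncc(a, b):
    """Normalized cross-correlation: dot product of the two images after
    flattening each to a vector and scaling it to unit length.
    Higher values mean more similar images; assumes non-zero images."""
    a = a.astype(np.float64).ravel()
    b = b.astype(np.float64).ravel()
    return float(np.dot(a / np.linalg.norm(a), b / np.linalg.norm(b)))
```

Note the opposite directions: a search using `ssd` keeps the offset with the smallest score, while a search using `ncc` keeps the offset with the largest. Because NCC normalizes each image, it is unaffected by a uniform brightness scaling between channels, whereas SSD is not.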

Figure 3: Church image aligned using SSD as the similarity metric. Note the blurriness of the image.

Figure 4: Church image aligned using NCC as the similarity metric. Note the significantly reduced artifacts and blurring.

Cropping

Note that by aligning, we simply mean circularly shifting the pixels of the color channel (a greyscale image matrix holding, for each pixel, the brightness recorded through that channel's filter). Because the shift is circular, pixels pushed off one edge wrap around to the opposite edge, so the border pixels would greatly affect the value of the similarity metric once the image is shifted. As a result, before the similarity metric is calculated for a shifted image, the images are cropped to include only the interior 70%: 15% of the width and height is cropped from each side.
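A cropping helper along these lines could look like the sketch below. The name `crop_interior` and the `border` parameter are hypothetical, and the rounding of the border width is an assumption of this sketch.

```python
import numpy as np

def crop_interior(img, border=0.15):
    """Keep the central part of the image, trimming `border` (a fraction of
    the height and width) from each side. The default of 0.15 leaves the
    interior 70% in each dimension."""
    h, w = img.shape[:2]
    dy, dx = int(round(h * border)), int(round(w * border))
    return img[dy:h - dy, dx:w - dx]
```

In an alignment loop, the crop would be applied to both the shifted channel and the reference channel just before scoring, so that wrapped-around border pixels never enter the metric.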

Reference Color Channel

It was quickly noted that the choice of reference channel made a significant difference to the photorealism and level of artifacts in the aligned images. By default, the blue channel was used, but upon testing, the green channel produced the best results. This was shown most clearly with the Emir photo (Figures 5 and 6). As mentioned in lecture, this may be attributed to our eyes being more sensitive to green wavelengths than to red or blue (Figure 7), or to green wavelengths lying between the red and blue wavelengths of visible light, so that green is spectrally close to both red and blue. As a result, aligning to the green reference channel produces the fewest artifacts and the most photorealistic results.

Figure 7: The retina cone sensitivity curves for blue, green, and red wavelengths. Source: The Physics Classroom

Figure 5: Image of Emir aligned using the blue color channel as reference.

Figure 6: Image of Emir aligned using the green color channel as reference. Note the significantly reduced artifacts.

Results for Example Images (Small)

All images below used green as the reference channel and NCC as the similarity metric.

Blue Channel Offset: (-2, -5)
Red Channel Offset: (1, 7)

Blue Channel Offset: (-2, 3)
Red Channel Offset: (1, 6)

Blue Channel Offset: (-3, -3)
Red Channel Offset: (1, 4)

Pyramid Search

Exhaustive search worked well for the smaller .jpg digitized images, but on the larger .tif images, whose dimensions are on the order of 10^3 pixels, this naïve search is incredibly slow. To reduce the runtime, the image pyramid technique was implemented. This technique constructs a pyramid of images in which, at each successive level, the image is downsampled by a factor (default: 0.5) until its height is less than 100 pixels. Note that the sk.transform.rescale function is used for downsampling so that anti-aliasing is taken into account.

At this highest level, the image is small enough that exhaustive search runs in reasonable time. Then, as we progress down the image pyramid, we run the exhaustive search on just a localized portion of each higher-resolution image, centered on the offsets found at the previous, more downsampled level. Note that we first multiply these offsets by the inverse of the downsampling factor (default: 1/0.5 = 2) to account for the change in resolution.

In this manner, searching the downsampled images first localizes the search on the higher-resolution images. Compared to the exhaustive search method, this reduces the total number of shifts evaluated while still obtaining the ideal offsets for the original high-resolution image.
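The coarse-to-fine procedure can be sketched recursively as shown below. This is an illustration under several assumptions, not the project's code: all function names are hypothetical, downsampling is done by averaging 2x2 blocks as a dependency-free stand-in for sk.transform.rescale, the score is a sum of squared differences, and cropping is omitted.

```python
import numpy as np

def downsample2(img):
    """Halve the resolution by averaging 2x2 blocks (a simple stand-in for
    a rescale with anti-aliasing). A trailing odd row or column is dropped."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    return img[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def local_search(channel, reference, center, window):
    """Try every offset within `window` of `center` = (dx, dy) and return
    the one with the lowest sum of squared differences."""
    cx, cy = center
    best, best_score = center, np.inf
    for dy in range(cy - window, cy + window + 1):
        for dx in range(cx - window, cx + window + 1):
            shifted = np.roll(channel, (dy, dx), axis=(0, 1))
            score = np.sum((shifted - reference) ** 2)
            if score < best_score:
                best, best_score = (dx, dy), score
    return best

def pyramid_align(channel, reference, window=5, min_height=100):
    """Coarse-to-fine alignment: recurse on half-resolution copies until the
    height is below `min_height`, then refine the doubled estimate at each
    finer level with a small local search."""
    if channel.shape[0] < min_height:
        return local_search(channel, reference, (0, 0), window)
    cx, cy = pyramid_align(downsample2(channel), downsample2(reference),
                           window, min_height)
    # Offsets found at half resolution correspond to twice as many pixels here.
    return local_search(channel, reference, (2 * cx, 2 * cy), window)
```

Each level evaluates only (2 * 5 + 1)^2 = 121 shifts, so the cost is dominated by the few full-resolution shifts rather than by a large search window.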

Consequently, the window searched at each level was reduced to [-5, 5] for both x and y. Despite the reduced window size, offsets found at level n of the image pyramid are magnified by 2^n by the time they reach the original resolution, so the total range of offsets covered by the image pyramid search still exceeds the range of the previous naïve exhaustive search.
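To make the range argument concrete, the short sketch below counts the pyramid levels for a given image height and sums the per-level windows after magnification. The function names and the 3200-pixel height are hypothetical, chosen only for illustration, and the height is assumed to be truncated to an integer at each downsampling step.

```python
def pyramid_levels(height, factor=0.5, min_height=100):
    """Number of downsampling steps needed before the height is below min_height."""
    levels = 0
    while height >= min_height:
        height = int(height * factor)
        levels += 1
    return levels

def max_reachable_offset(levels, window=5, scale=2):
    """Largest offset (in full-resolution pixels) the pyramid search can reach:
    a shift of `window` found at level n counts for window * scale**n pixels
    at the original resolution, and the levels' contributions add up."""
    return sum(window * scale ** n for n in range(levels + 1))

levels = pyramid_levels(3200)           # 3200 -> 1600 -> ... -> 50
reach = max_reachable_offset(levels)    # 5 * (2**(levels + 1) - 1)
print(levels, reach)
```

For this hypothetical 3200-pixel-high image the search needs 6 downsampling steps and can reach offsets of up to 635 pixels in each direction, compared with 15 pixels for the single-scale exhaustive search.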

Results for Example Images

All images below used green as the reference channel, NCC as the similarity metric, a 70% crop, and a downsampling factor of 0.5 (for large images).

Blue Channel Offset: (-4, -25)
Red Channel Offset: (-8, 33)

Blue Channel Offset: (-24, -48)
Red Channel Offset: (17, 57)

Blue Channel Offset: (-17, -58)
Red Channel Offset: (-2, 64)

Blue Channel Offset: (-17, -39)
Red Channel Offset: (5, 49)

Blue Channel Offset: (-8, -52)
Red Channel Offset: (4, 60)

Blue Channel Offset: (-9, -80)
Red Channel Offset: (4, 96)

Blue Channel Offset: (-27, -50)
Red Channel Offset: (10, 58)

Blue Channel Offset: (-28, -77)
Red Channel Offset: (8, 97)

Blue Channel Offset: (-13, -49)
Red Channel Offset: (-1, 59)

Blue Channel Offset: (-5, -41)
Red Channel Offset: (27, 44)

Blue Channel Offset: (1, -52)
Red Channel Offset: (-11, 52)

Blue Channel Offset: (-3, -3)
Red Channel Offset: (1, 4)

Blue Channel Offset: (-2, -5)
Red Channel Offset: (1, 7)

Blue Channel Offset: (-2, 3)
Red Channel Offset: (1, 6)

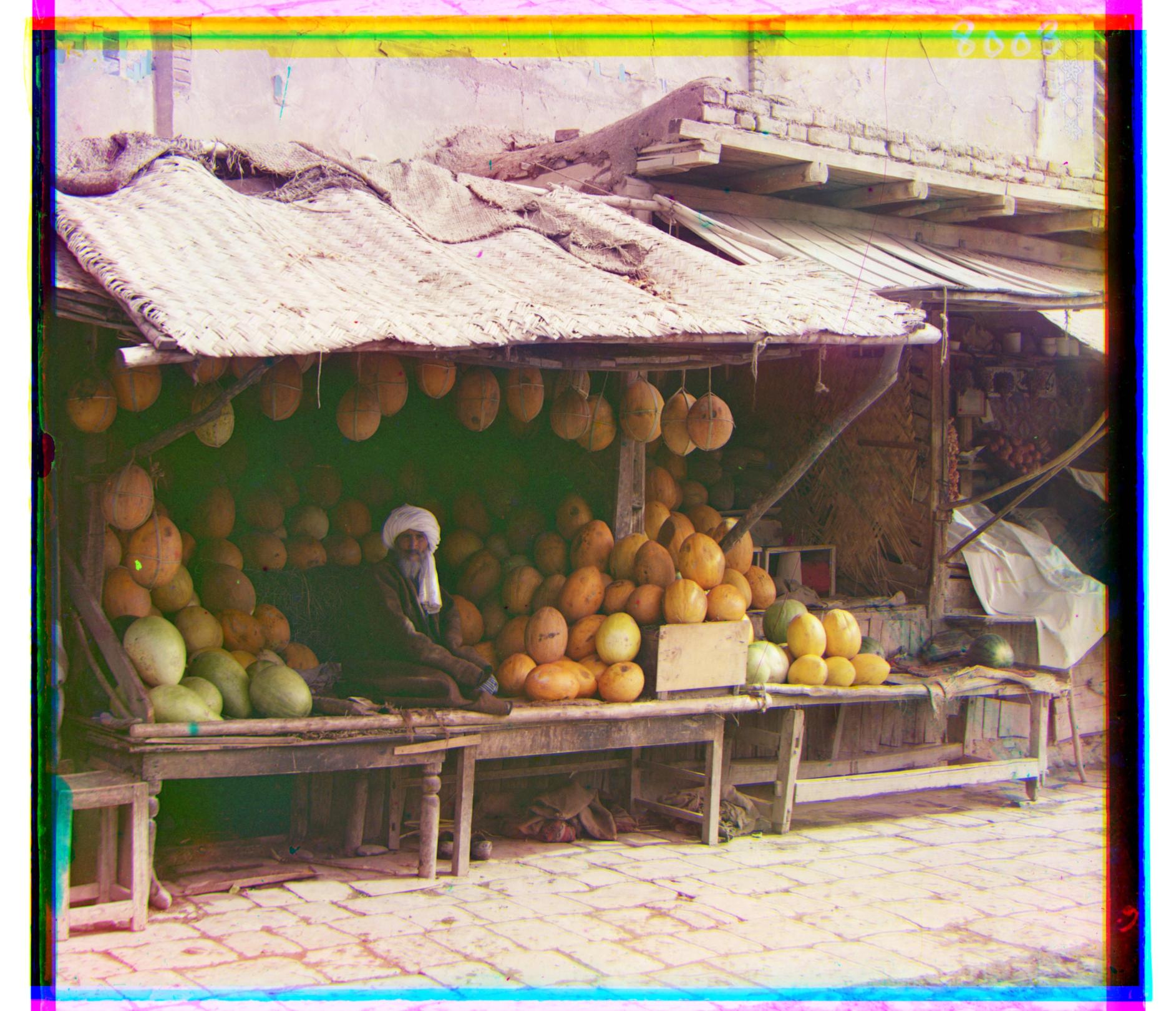

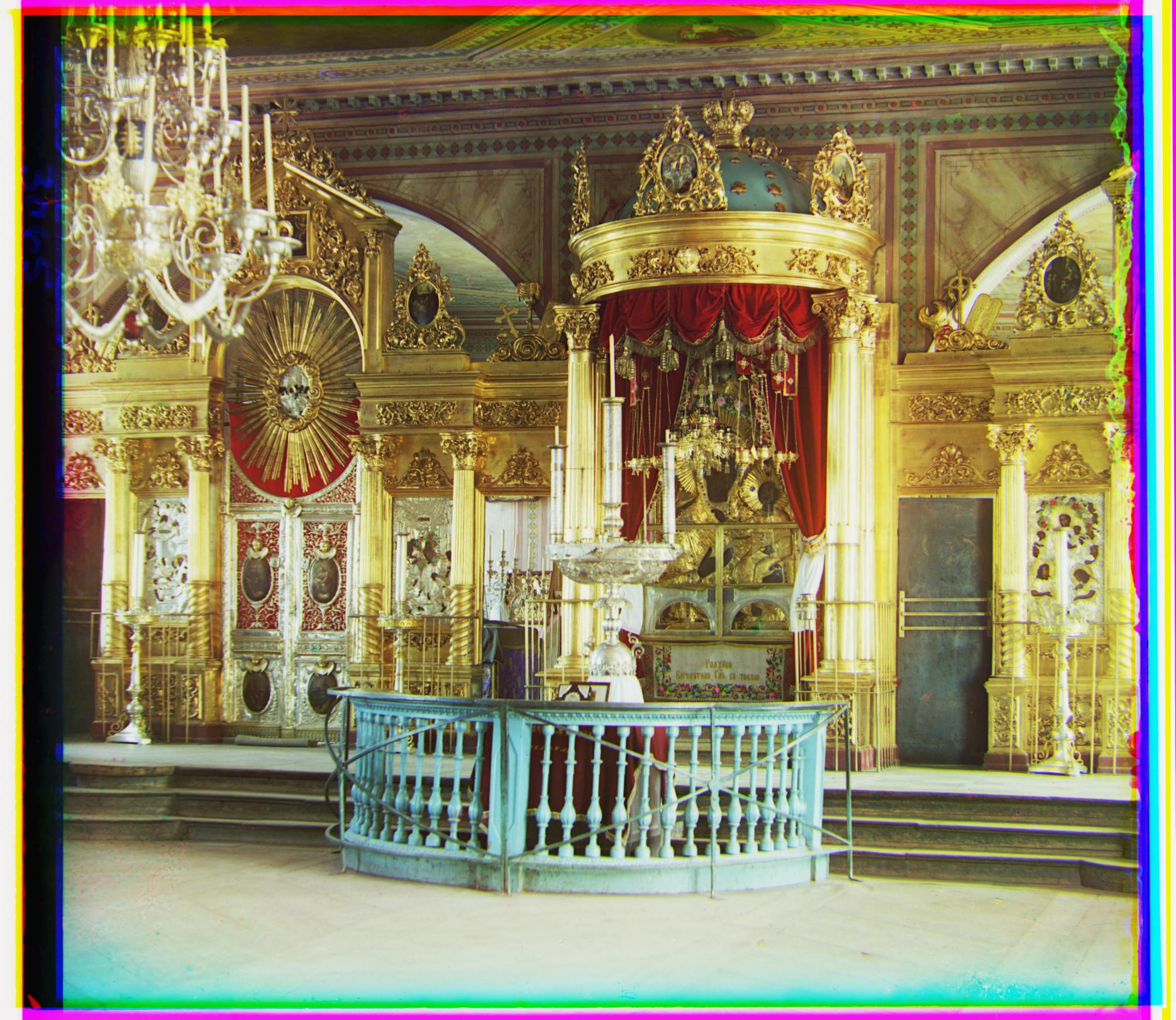

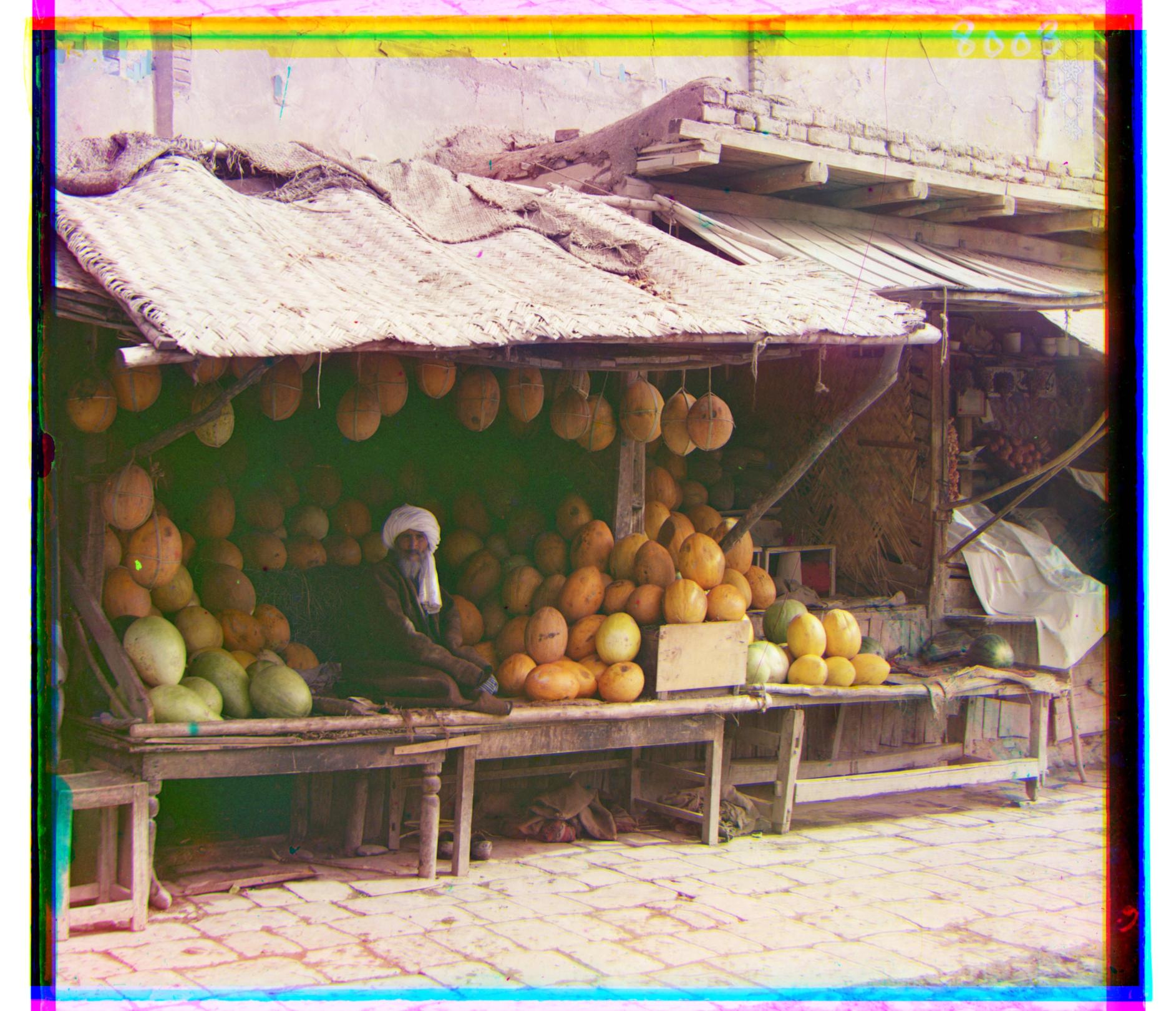

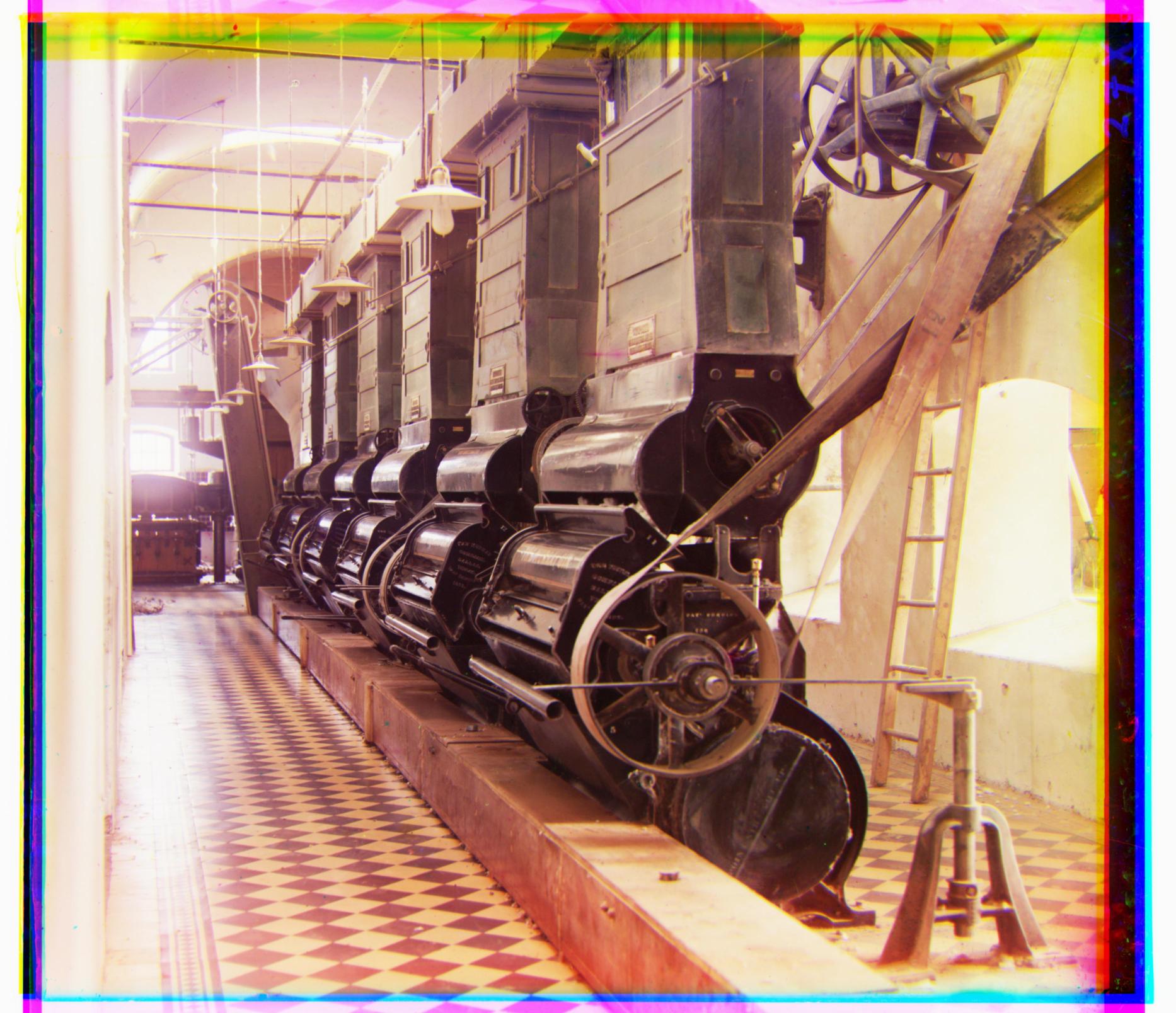

Results for Some Additional Images from Prokudin-Gorskii's Photo Collection

All images below used green as the reference channel, NCC as the similarity metric, a 70% crop, and a downsampling factor of 0.5.

|

Church Entrance

Blue Channel Offset: (-20, -64)

Red Channel Offset: (10, 72)

|

Cathedral Red

Blue Channel Offset: (-21, 100)

Red Channel Offset: (19, 41)

|

|

Cathedral White

Blue Channel Offset: (-27, -56)

Red Channel Offset: (4, 68)

|

Floodgate Supervisor - colorshifts in water

Blue Channel Offset: (22, -48)

Red Channel Offset: (-32, 56)

|

|

Man in Red Clothes - bad white balance

Blue Channel Offset: (-19, -67)

Red Channel Offset: (17, 76)

|

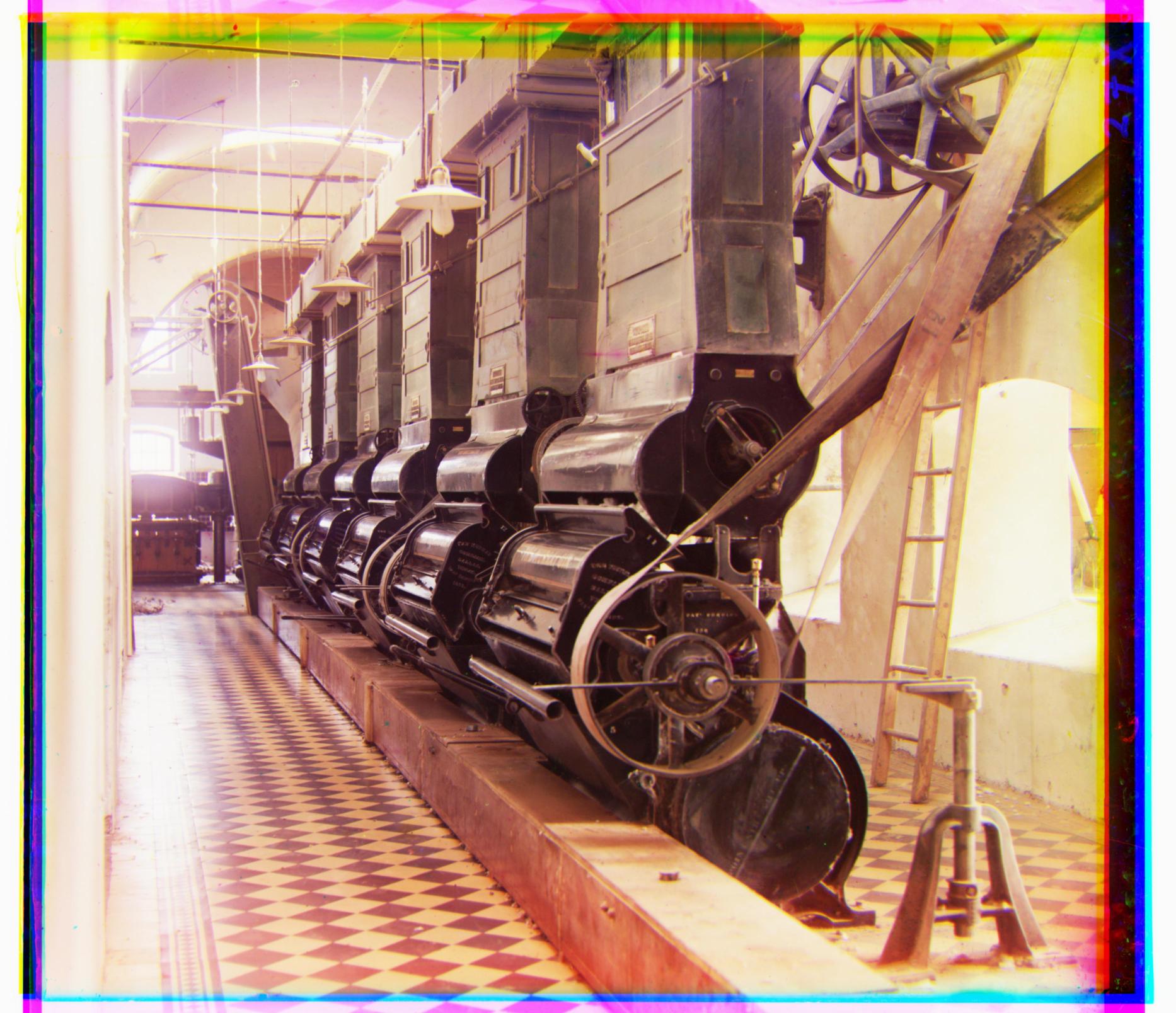

Cotton Mill

Blue Channel Offset: (-28, -61)

Red Channel Offset: (15, 71)

|

|

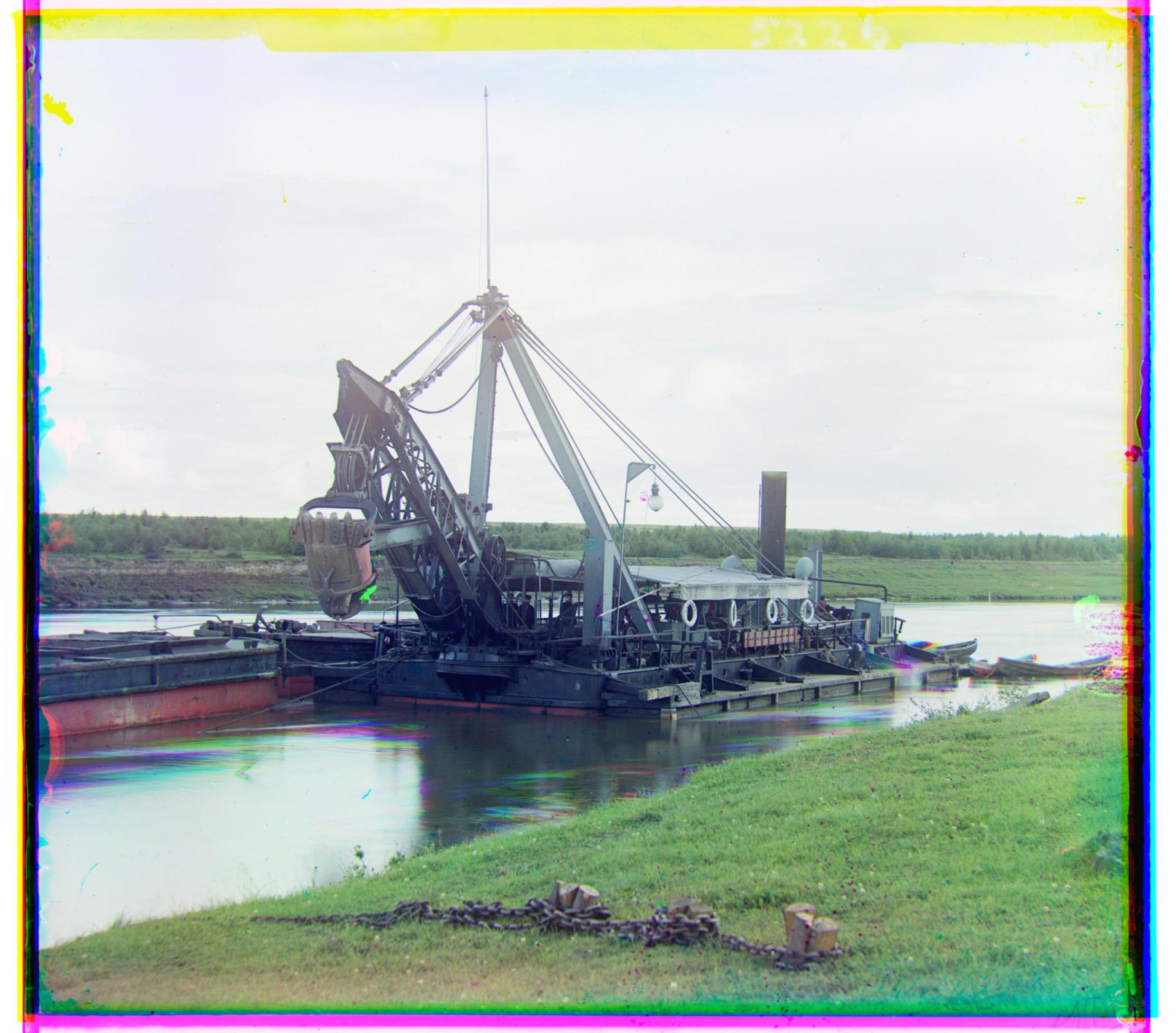

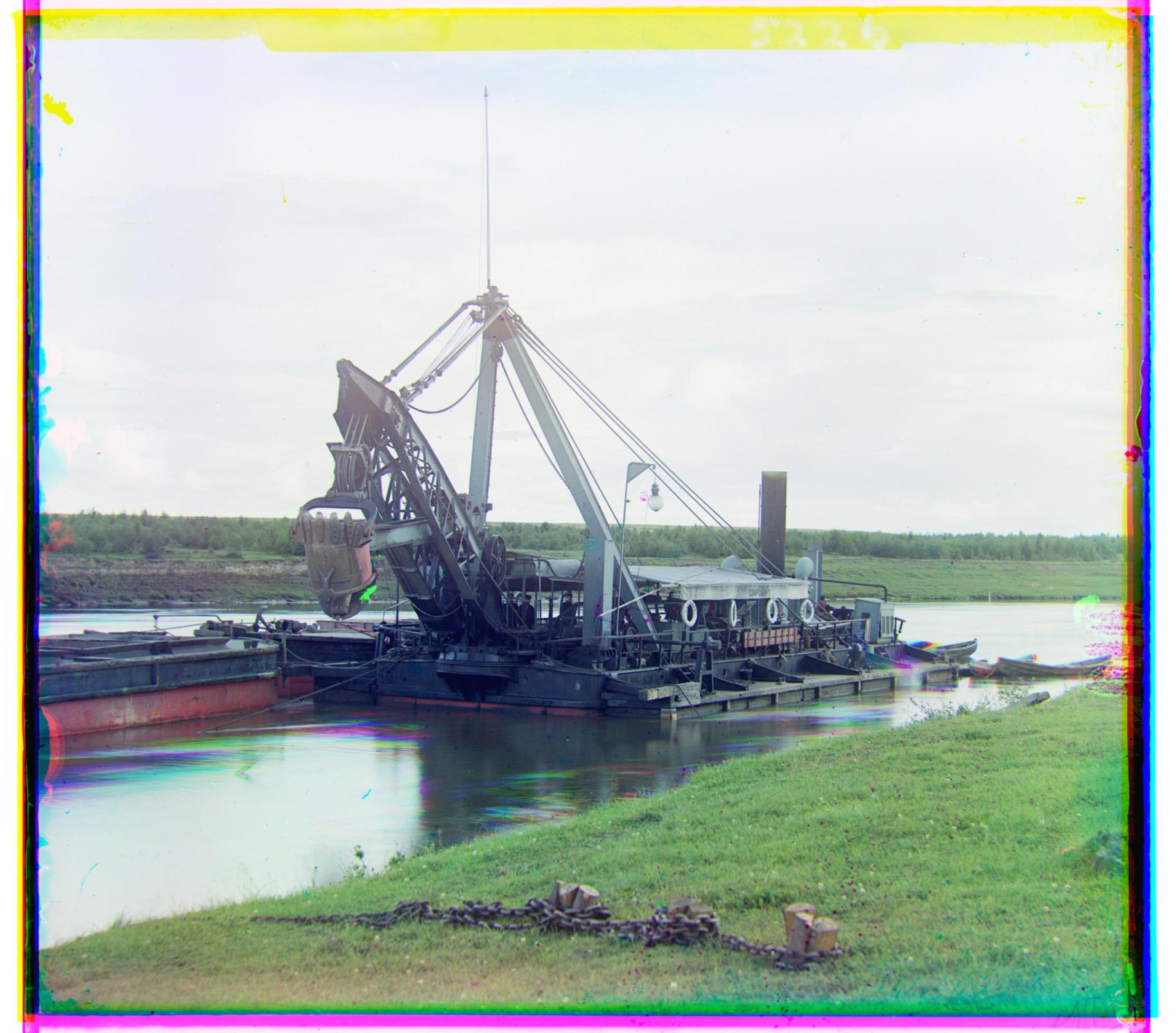

Excavator

Blue Channel Offset: (-17, -34)

Red Channel Offset: (9, 48)

|

Firemen Squad

Blue Channel Offset: (-34, -3)

Red Channel Offset: (31, 28)

|

|

Pink Flowers

Blue Channel Offset: (6, -48)

Red Channel Offset: (-18, 47)

|

Mosque

Blue Channel Offset: (3, -55)

Red Channel Offset: (2, 69)

|

|

Ocean Sunset - looks like a polaroid

Blue Channel Offset: (-12, -52)

Red Channel Offset: (32, 53)

|

Windmill

Blue Channel Offset: (-15, -63)

Red Channel Offset: (18, 77)

|