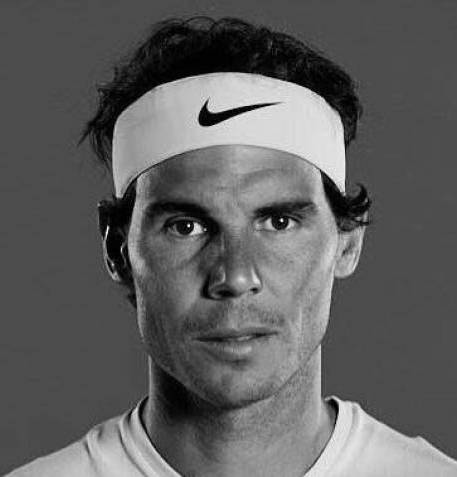

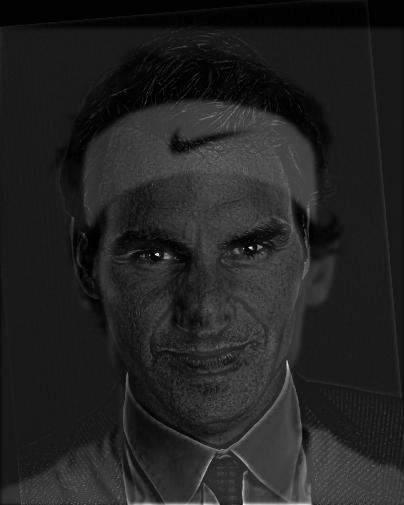

Part 1: Fun with Filters

Part 1.1. Finite Difference Operator

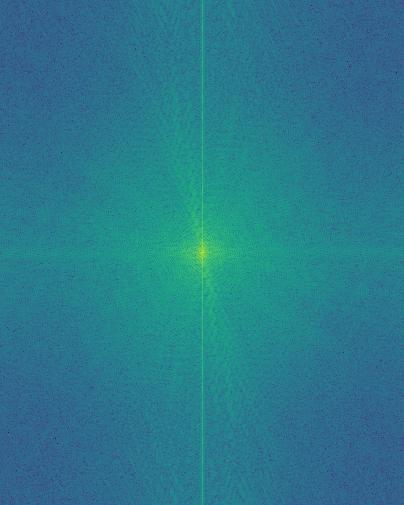

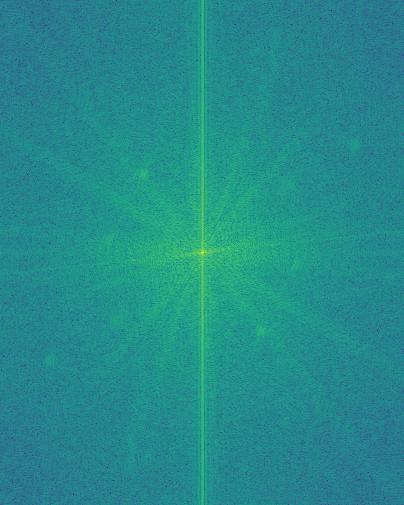

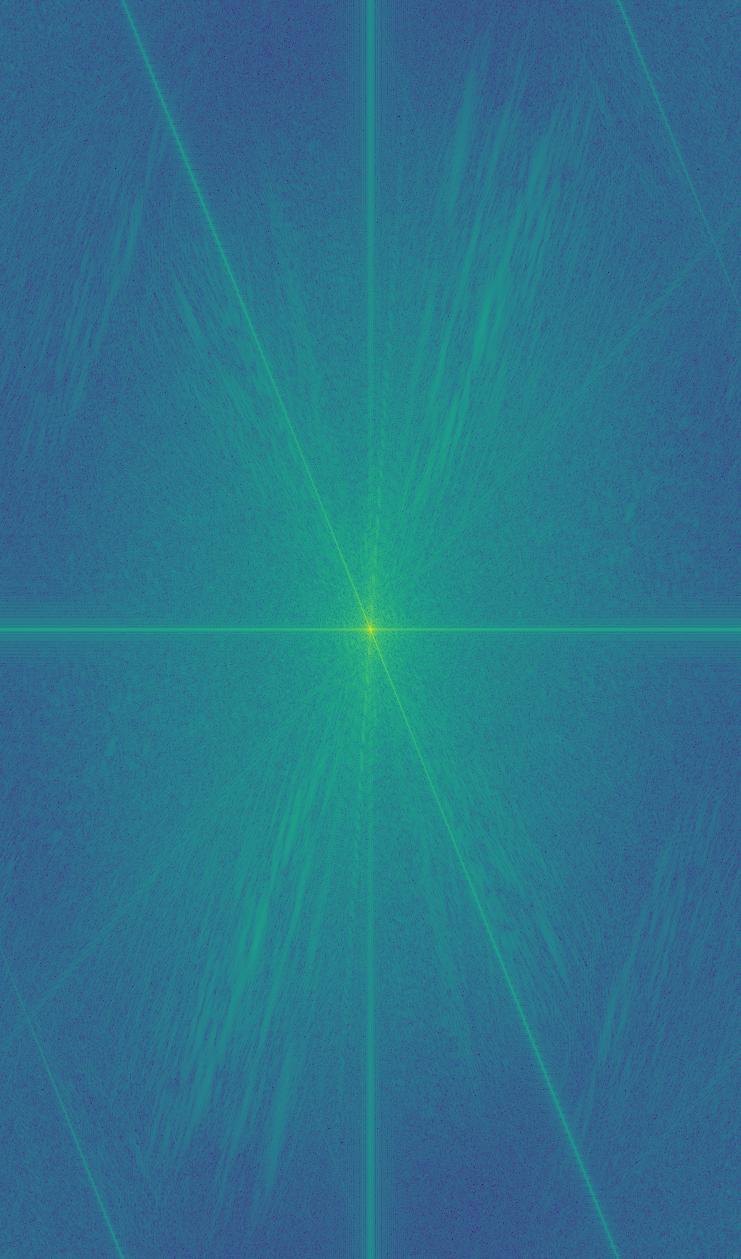

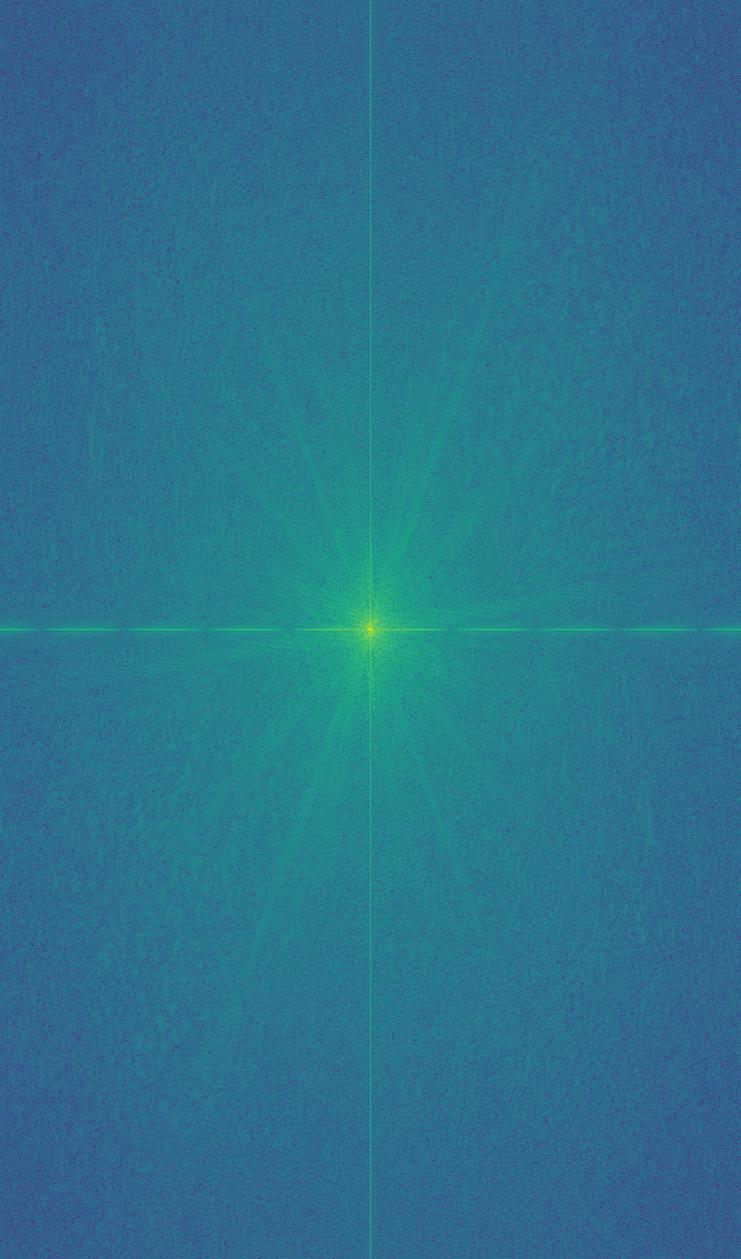

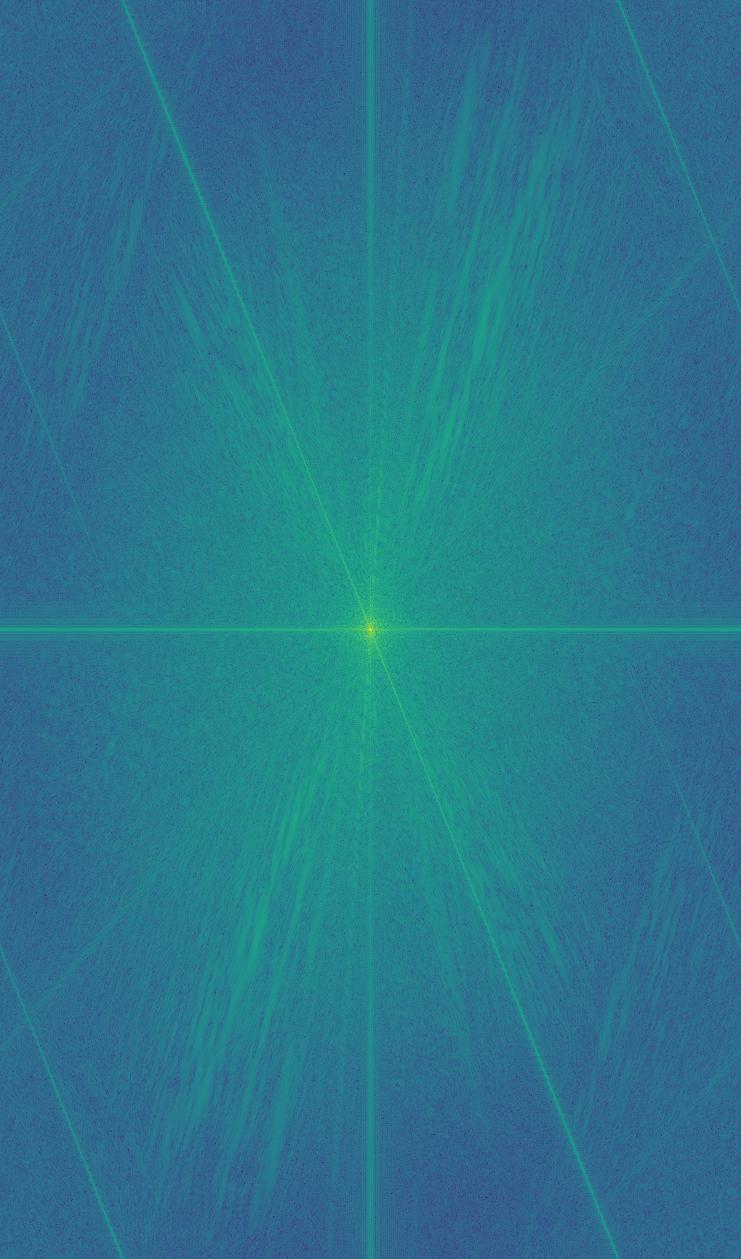

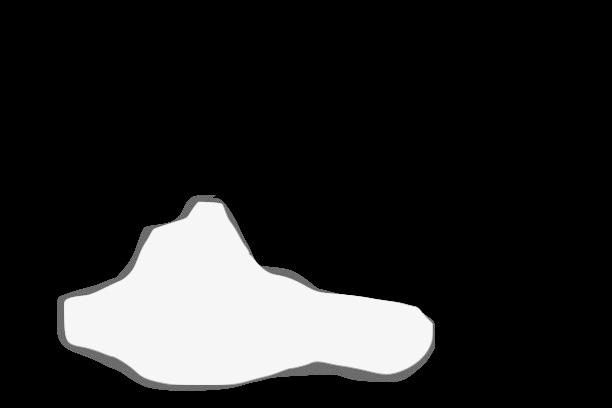

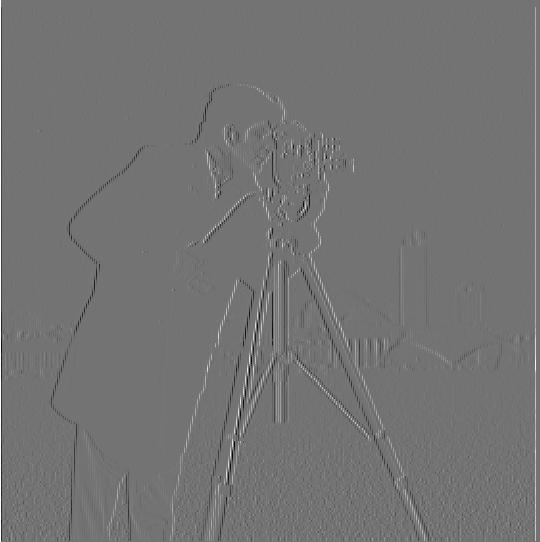

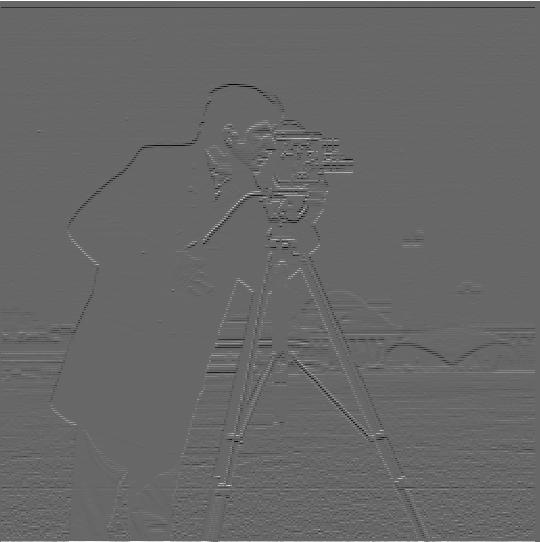

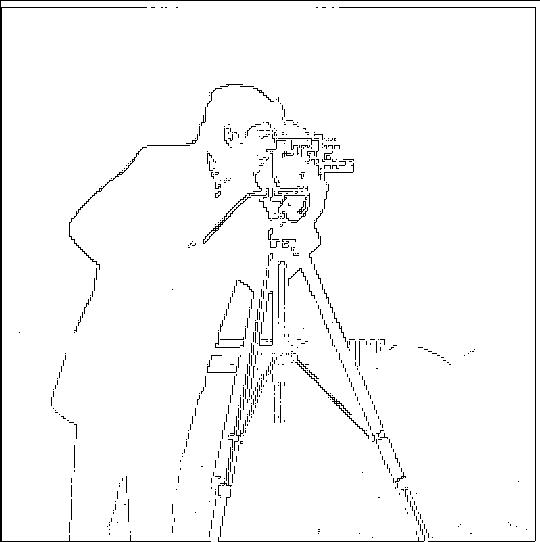

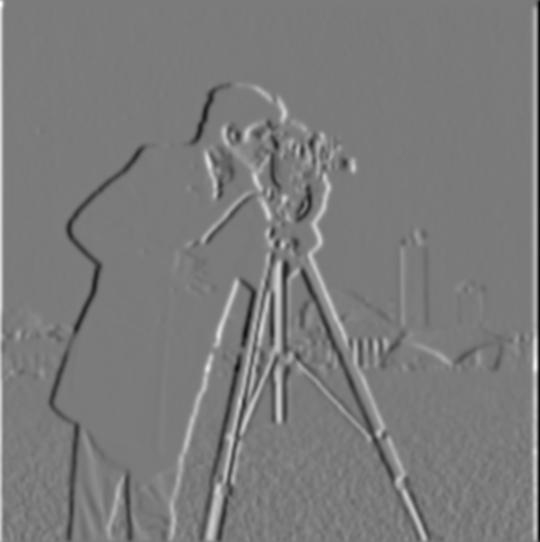

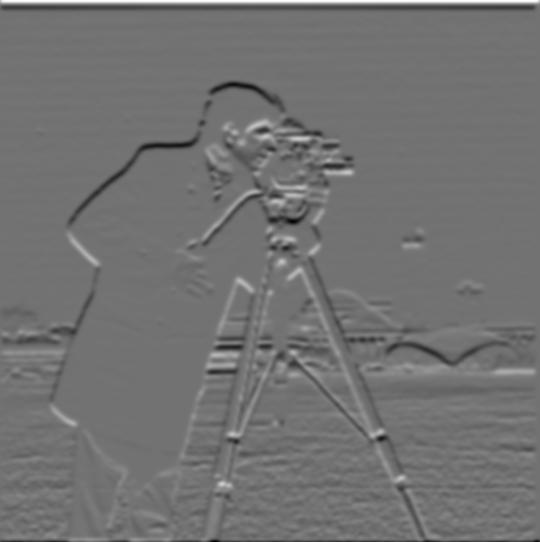

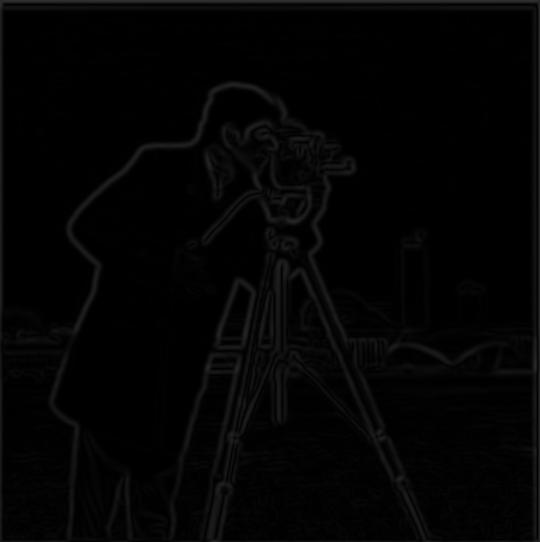

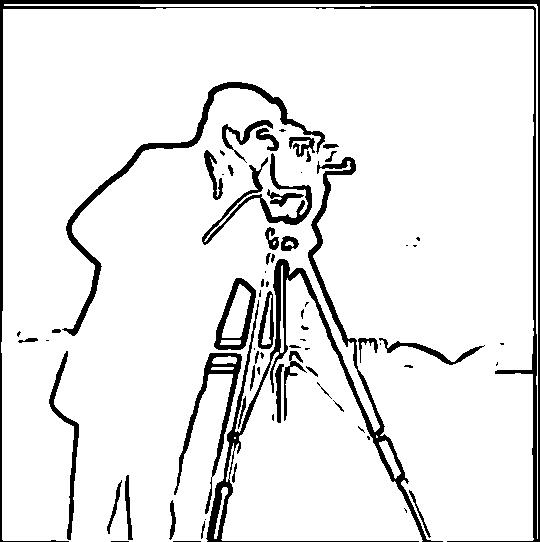

Here we convolve the image with two finite difference operators to find edges. The operators are $D_x = \begin{bmatrix}1 & -1\end{bmatrix}$ and $D_y = \begin{bmatrix}1 \\ -1\end{bmatrix}$. We then compute the gradient magnitude at each pixel, $\|\nabla \text{im}\|_{ij} = \sqrt{(D_x * \text{im})_{ij}^2 + (D_y * \text{im})_{ij}^2}$. Finally, we binarize the gradient magnitudes with a hand-picked threshold (0.3 here): pixels whose magnitude exceeds the threshold are marked as edges.
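The finite difference pipeline can be sketched in plain NumPy as follows. This is a minimal illustration, not the original project code: it assumes a grayscale float image scaled to $[0, 1]$ (so that a threshold like 0.3 is meaningful), and the helper names `conv2d_valid` and `finite_difference_edges` are hypothetical. A library routine such as `scipy.signal.convolve2d` could replace the hand-written convolution.

```python
import numpy as np

# Finite difference operators as defined in the text: D_x is 1x2, D_y is 2x1.
D_X = np.array([[1.0, -1.0]])
D_Y = np.array([[1.0], [-1.0]])


def conv2d_valid(image, kernel):
    """True 2D convolution (kernel flipped) in 'valid' mode:
    output is kept only where the kernel fits entirely inside the image."""
    k = kernel[::-1, ::-1]
    kh, kw = k.shape
    oh, ow = image.shape[0] - kh + 1, image.shape[1] - kw + 1
    out = np.zeros((oh, ow))
    for di in range(kh):
        for dj in range(kw):
            out += k[di, dj] * image[di:di + oh, dj:dj + ow]
    return out


def finite_difference_edges(image, threshold=0.3):
    """Return (dx, dy, gradient magnitude, binary edge map) for a grayscale image."""
    dx = conv2d_valid(image, D_X)  # shape (H, W-1)
    dy = conv2d_valid(image, D_Y)  # shape (H-1, W)
    # Crop both to the shared (H-1, W-1) region so they combine pixel by pixel.
    dx_c, dy_c = dx[:-1, :], dy[:, :-1]
    magnitude = np.sqrt(dx_c ** 2 + dy_c ** 2)
    edges = magnitude > threshold
    return dx, dy, magnitude, edges


if __name__ == "__main__":
    # Toy image: a vertical step edge between columns 2 and 3.
    im = np.zeros((5, 6))
    im[:, 3:] = 1.0
    _, _, mag, edges = finite_difference_edges(im, threshold=0.3)
    print(edges.astype(int))  # only column 2 is marked as an edge
```

Using "valid" mode avoids spurious edges along the image border caused by zero padding, at the cost of losing one row and one column.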

|

|

|

|

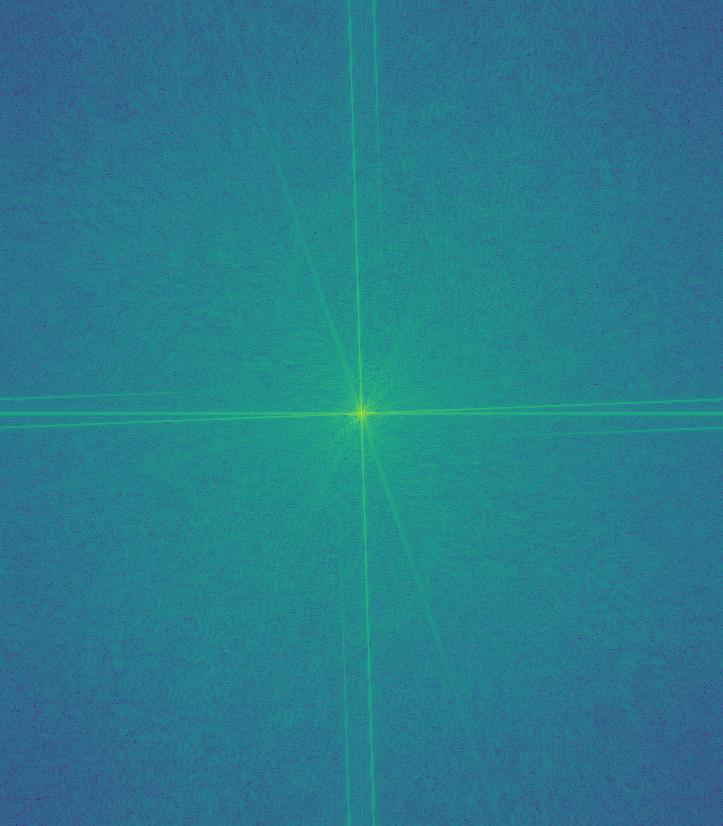

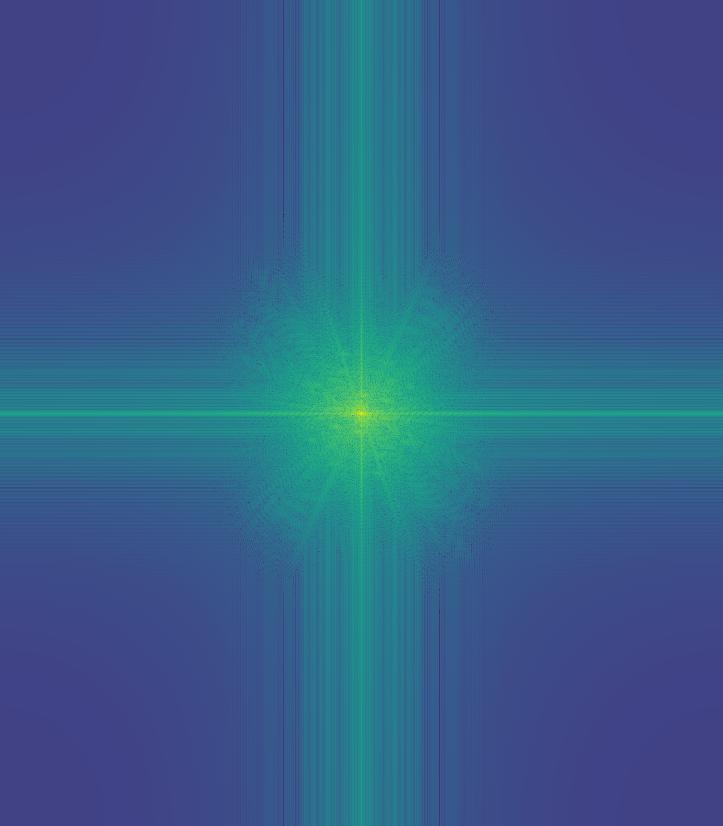

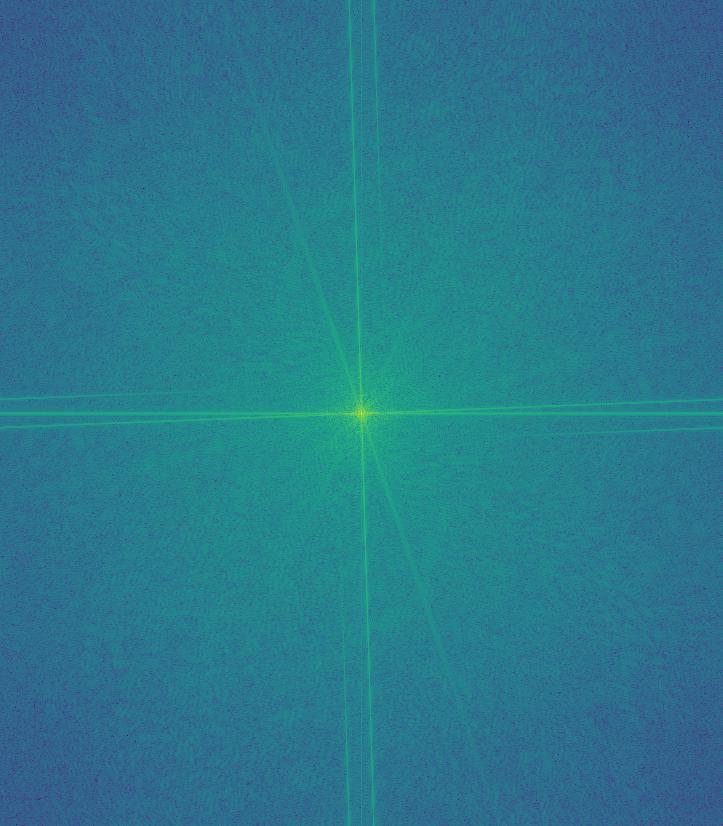

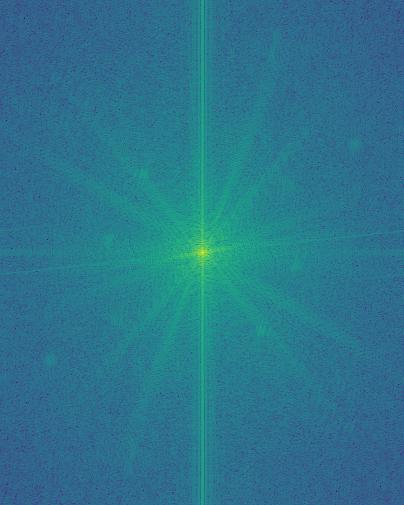

| Image convolved with $D_x$ | Image convolved with $D_y$ | Image gradient magnitudes | Image edges (0.3 threshold) |

|---|

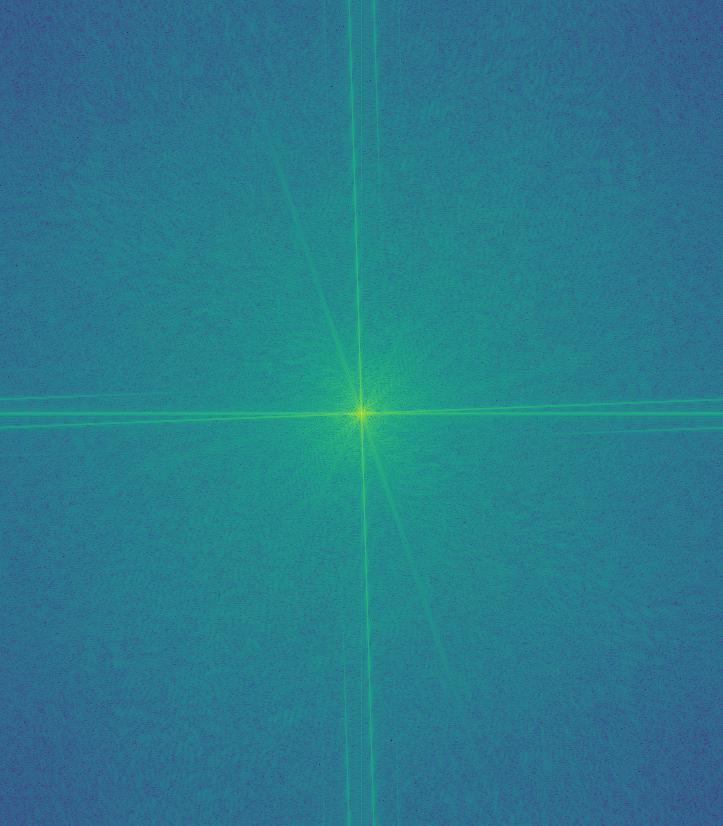

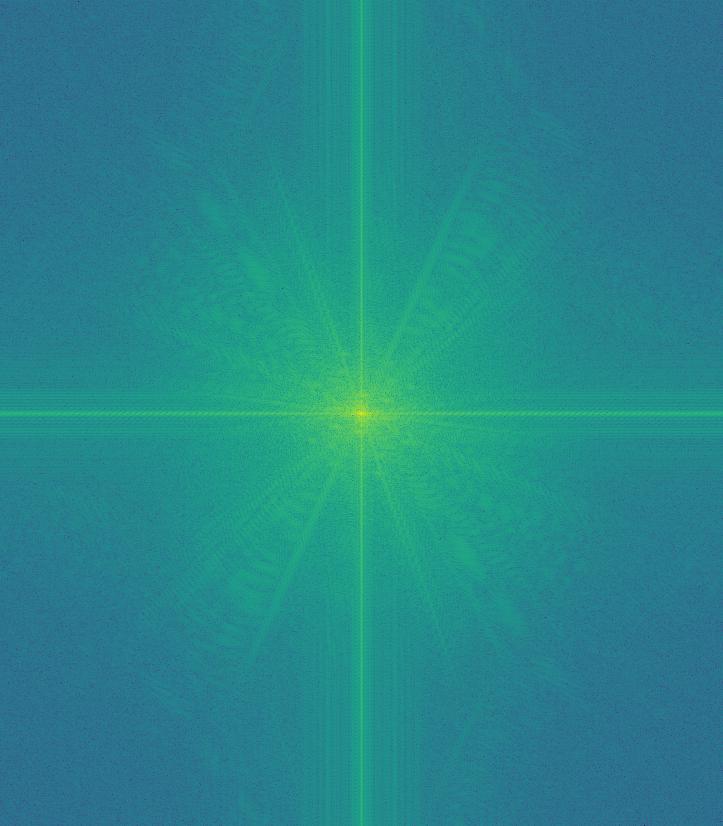

Part 1.2. Derivative of Gaussian (DoG) Filter

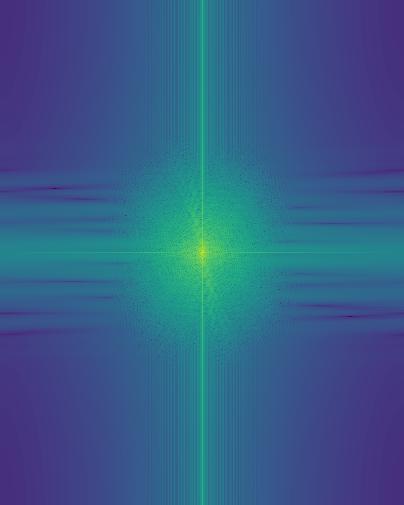

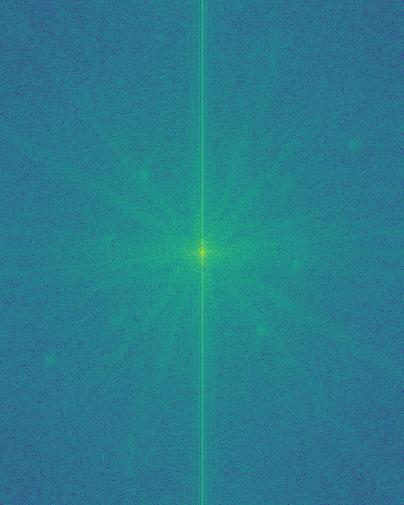

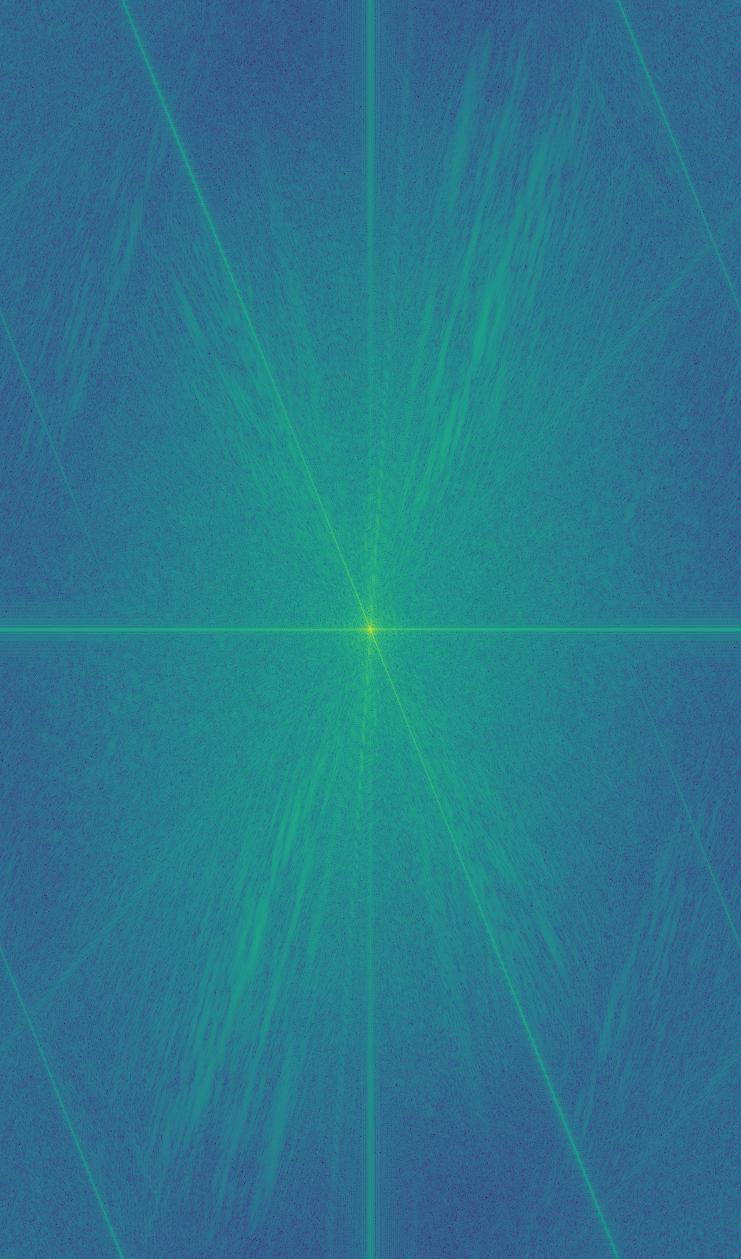

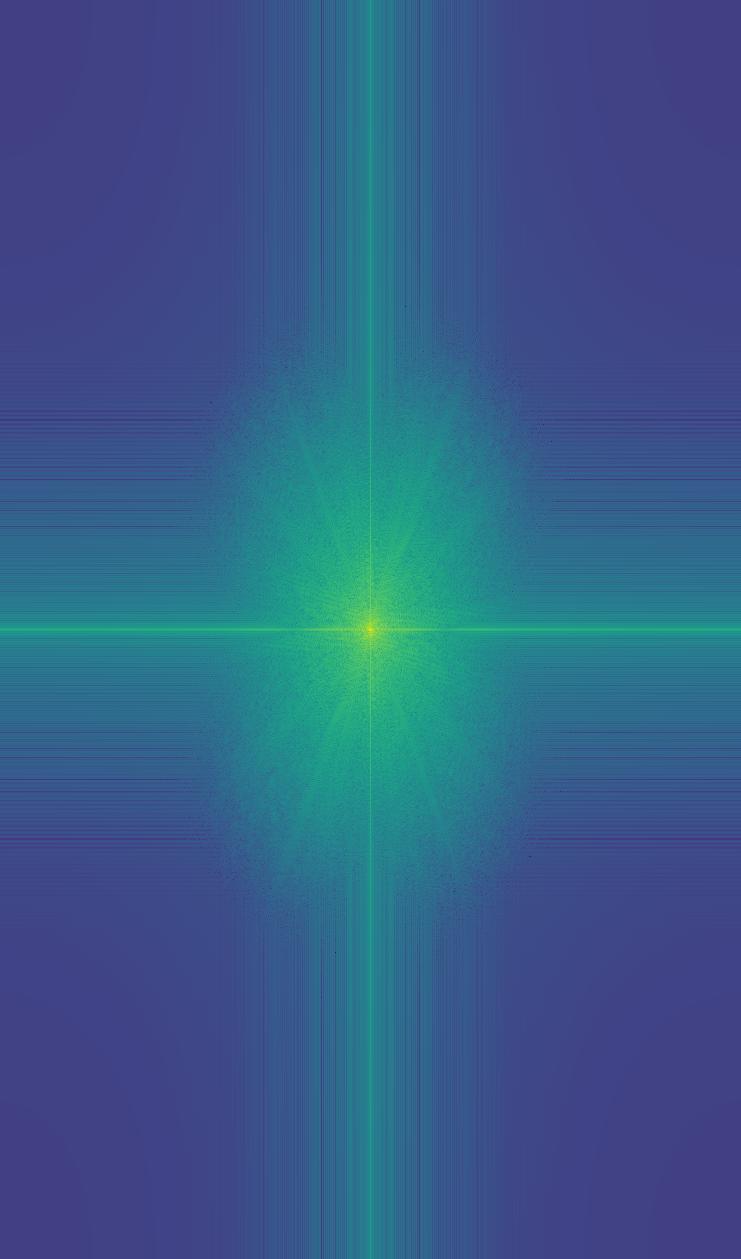

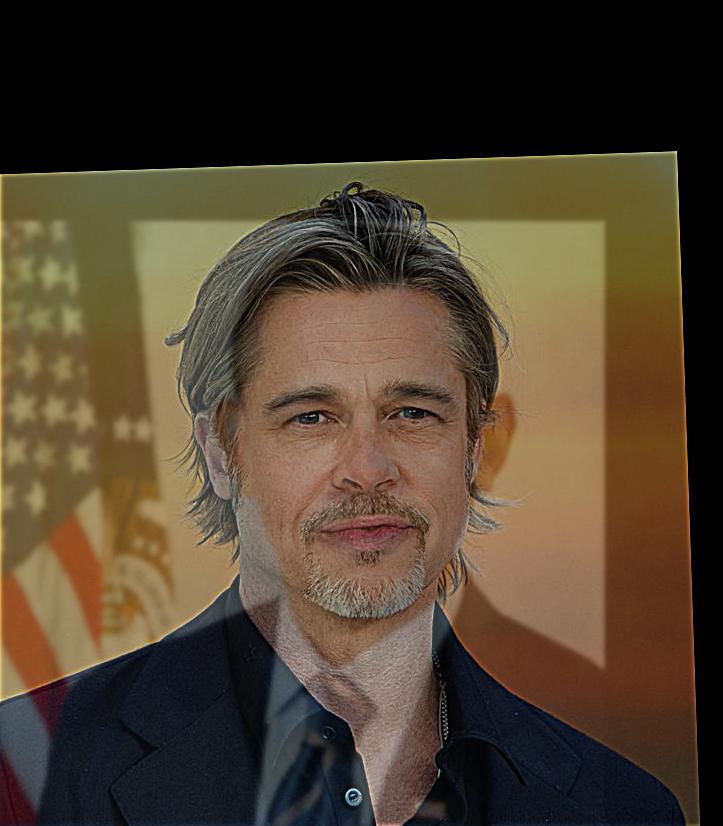

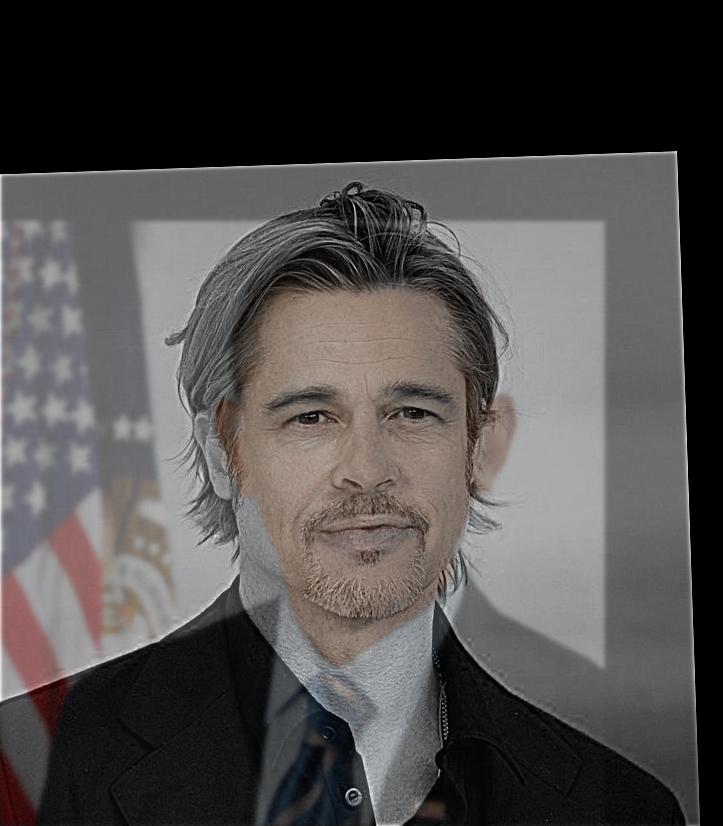

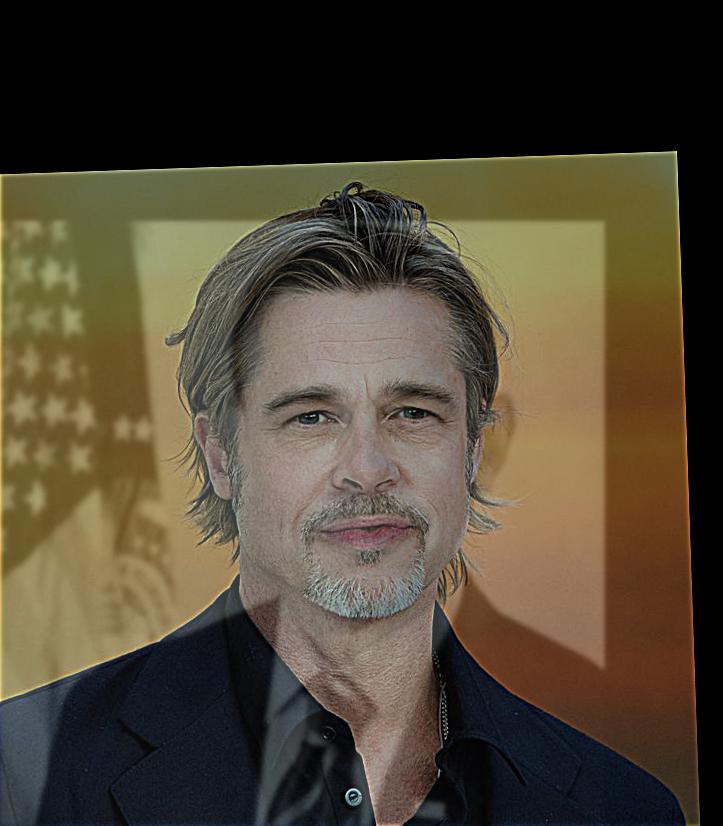

Here we repeat the same process, except that we first smooth the image with a Gaussian filter $GF$. Because convolution is associative, $(\text{im} * GF) * D_x = \text{im} * (GF * D_x)$, so instead of performing two separate convolutions per direction, we can convolve the image once with each derivative of Gaussian filter, $(GF * D_x)$ and $(GF * D_y)$.
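The sketch below builds the two DoG filters and checks numerically that one pass with $(GF * D_x)$ matches blurring and then differentiating. It is an illustration under assumptions: the Gaussian size (7) and $\sigma$ (1.0) are placeholder values, since the text does not state the ones used, and `gaussian_kernel`, `conv2d_full`, and `conv2d_valid` are hypothetical helpers written in plain NumPy.

```python
import numpy as np

D_X = np.array([[1.0, -1.0]])
D_Y = np.array([[1.0], [-1.0]])


def conv2d_valid(image, kernel):
    """True 2D convolution (kernel flipped), 'valid' mode."""
    k = kernel[::-1, ::-1]
    kh, kw = k.shape
    oh, ow = image.shape[0] - kh + 1, image.shape[1] - kw + 1
    out = np.zeros((oh, ow))
    for di in range(kh):
        for dj in range(kw):
            out += k[di, dj] * image[di:di + oh, dj:dj + ow]
    return out


def conv2d_full(image, kernel):
    """True 2D convolution, 'full' mode: zero-pad so every partial overlap is kept."""
    kh, kw = kernel.shape
    padded = np.pad(image, ((kh - 1, kh - 1), (kw - 1, kw - 1)))
    return conv2d_valid(padded, kernel)


def gaussian_kernel(size, sigma):
    """Normalized 2D Gaussian, built as the outer product of a 1D Gaussian."""
    x = np.arange(size) - (size - 1) / 2.0
    g = np.exp(-x ** 2 / (2.0 * sigma ** 2))
    g /= g.sum()
    return np.outer(g, g)


# Derivative of Gaussian filters: smoothing and differentiation in one kernel.
G = gaussian_kernel(size=7, sigma=1.0)  # assumed size and sigma
DOG_X = conv2d_full(G, D_X)  # shape (7, 8)
DOG_Y = conv2d_full(G, D_Y)  # shape (8, 7)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    im = rng.random((32, 32))
    two_pass = conv2d_valid(conv2d_valid(im, G), D_X)  # blur, then differentiate
    one_pass = conv2d_valid(im, DOG_X)                 # single DoG convolution
    print(np.allclose(two_pass, one_pass))  # True
```

Precomputing the DoG filters means the image is convolved once per direction; the resulting $dx$ and $dy$ are then combined and thresholded exactly as in Part 1.1.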

| Image convolved with $(GF * D_x)$ | Image convolved with $(GF * D_y)$ | Image gradient magnitudes | Image edges (0.05 threshold) |
|---|---|---|---|