COMPSCI 194-26: Project 2

Fun with Filters and Frequencies

Varun Saran

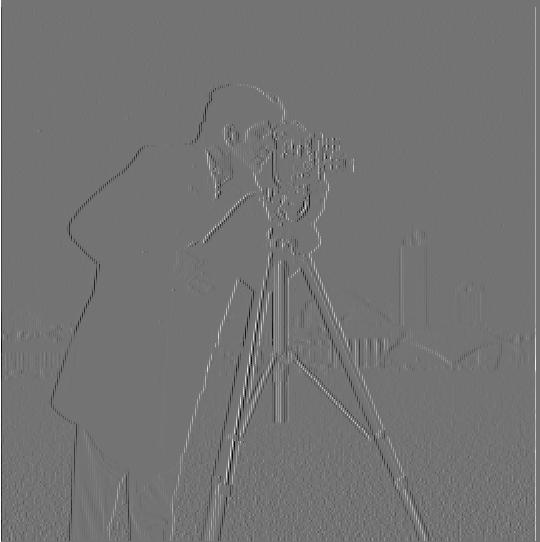

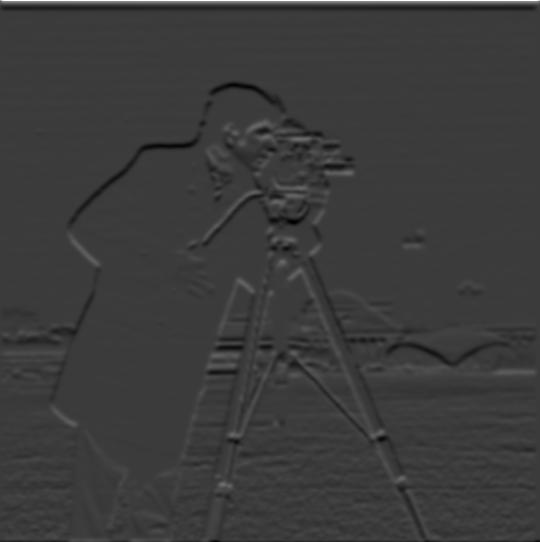

Part 1.1: Finite Difference Operator

Using derivatives to find edges. The gradient magnitude image is created by first taking the partial derivatives of the image with respect to x and y separately, using Dx = [1, -1] and Dy = [[1], [-1]]. These are used as filters and convolved with the image. The resulting 2 images are the first 2 images displayed. Then, to create the gradient magnitude image, the 2 gradients are combined as grad_image = sqrt(grad_x^2 + grad_y^2). Finally, the image is binarized to suppress noise and emphasize the edges: a threshold is chosen qualitatively, and all values below it are set to 0 and all values above it to 1 (or 255, depending on the format of the image).

In this section, we don't blur the image before taking the gradient.
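The steps above can be sketched in NumPy as follows. This is a minimal sketch, not the project's actual code: `convolve2d_same`, `gradient_magnitude`, and `binarize` are hypothetical names, the toy image and the threshold of 0.5 are made up for illustration, and the small zero-padded convolution helper stands in for a library routine such as `scipy.signal.convolve2d`.

```python
import numpy as np

def convolve2d_same(image, kernel):
    """Zero-padded 2D convolution cropped to the input size (hypothetical helper)."""
    H, W = image.shape
    kh, kw = kernel.shape
    full = np.zeros((H + kh - 1, W + kw - 1))
    # Shift-and-add form of convolution: each kernel tap adds a shifted copy.
    for i in range(kh):
        for j in range(kw):
            full[i:i + H, j:j + W] += kernel[i, j] * image
    top, left = (kh - 1) // 2, (kw - 1) // 2
    return full[top:top + H, left:left + W]

def gradient_magnitude(image):
    Dx = np.array([[1.0, -1.0]])    # finite difference in x
    Dy = np.array([[1.0], [-1.0]])  # finite difference in y
    grad_x = convolve2d_same(image, Dx)
    grad_y = convolve2d_same(image, Dy)
    return np.sqrt(grad_x ** 2 + grad_y ** 2)

def binarize(grad, threshold):
    # 1 where the gradient exceeds the threshold, 0 elsewhere.
    return (grad > threshold).astype(np.uint8)

# Toy image (assumption): a bright square on a dark background.
image = np.zeros((8, 8))
image[2:6, 2:6] = 1.0
edges = binarize(gradient_magnitude(image), threshold=0.5)
```

On this toy input, `edges` is nonzero only along the outline of the square; the flat interior and the background stay at 0.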

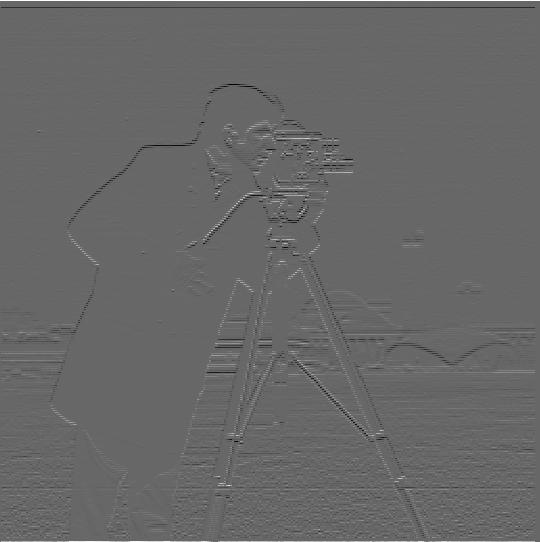

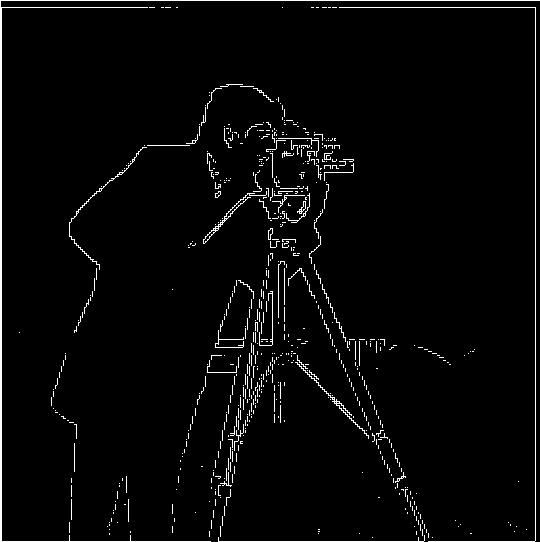

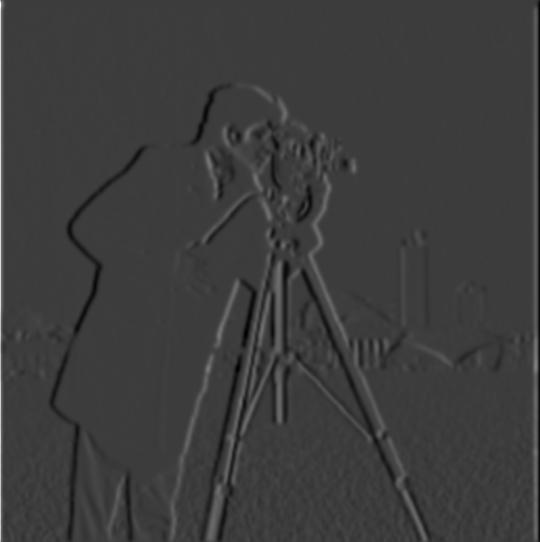

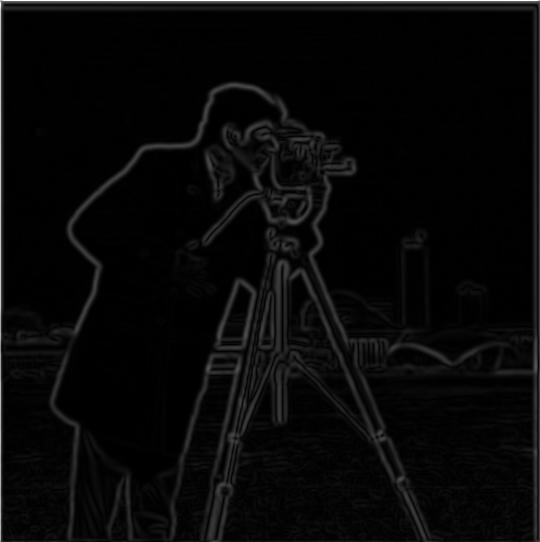

Part 1.2: Derivative of Gaussian (DoG) Filter

Using derivatives to find edges. In this section, we do blur the image before taking the gradient.

Q1. What differences do you see?

The image is a lot clearer, and the gradient image is a lot smoother. There are no more rapid zigzags along curves (as seen in Part 1.1; this may require zooming in). Instead, the edges actually look like curves.

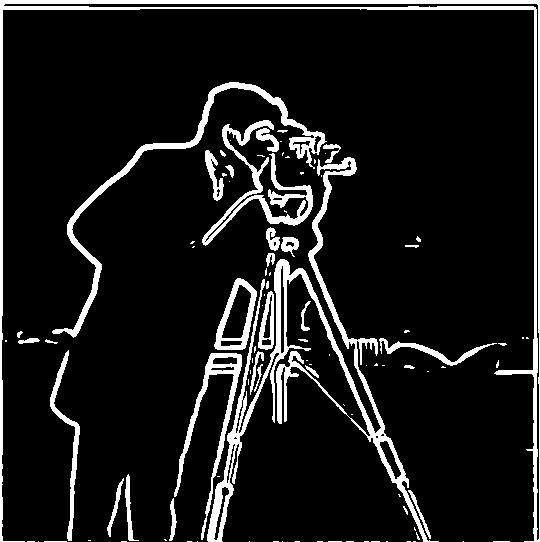

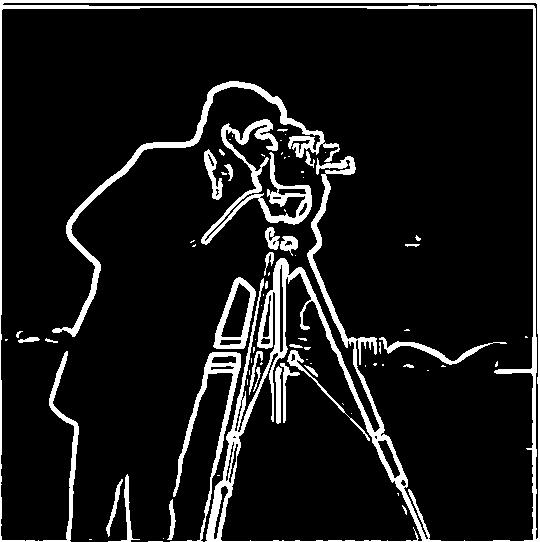

Now, we use a single convolution to blur the image and take the derivative, using a derivative of Gaussian filter.

Yes, the result is the same. No information is lost, and because convolution is associative and commutative, the order doesn't matter: blurring and then differentiating is equivalent to convolving once with the derivative of the Gaussian. This just speeds things up because we aren't convolving the big image twice.
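As a rough check of that equivalence, the sketch below compares the two-step and one-step results on a random array. It is an illustration under stated assumptions: the helper names, the 7x7 kernel size, and sigma = 1.0 are hypothetical, and full zero-padded convolution is used so the two results match up to floating-point error. With "same"-size output and other border handling, small differences near the image borders are possible.

```python
import numpy as np

def convolve2d_full(a, b):
    """Full zero-padded 2D convolution (hypothetical helper)."""
    H, W = a.shape
    kh, kw = b.shape
    out = np.zeros((H + kh - 1, W + kw - 1))
    for i in range(kh):
        for j in range(kw):
            out[i:i + H, j:j + W] += b[i, j] * a
    return out

def gaussian_kernel(size, sigma):
    """Normalized 2D Gaussian built as an outer product of 1D Gaussians."""
    ax = np.arange(size) - (size - 1) / 2.0
    g1d = np.exp(-ax ** 2 / (2 * sigma ** 2))
    g2d = np.outer(g1d, g1d)
    return g2d / g2d.sum()

rng = np.random.default_rng(0)
image = rng.random((16, 16))          # stand-in for a grayscale image
G = gaussian_kernel(size=7, sigma=1.0)
Dx = np.array([[1.0, -1.0]])

# Two convolutions on the image: blur, then differentiate.
two_step = convolve2d_full(convolve2d_full(image, G), Dx)

# One convolution on the image: build the small DoG filter first.
dog_x = convolve2d_full(G, Dx)
one_step = convolve2d_full(image, dog_x)

max_diff = np.abs(two_step - one_step).max()
```

The DoG filter is built once from two small kernels, so the only convolution that touches the full image is the last one.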

Sharpening Images

Here, we low-pass filter the image and subtract the result from the original image to get a high-pass-filtered image (an image with only high frequencies, i.e. edges). We can add this high-frequency image to the original image to sharpen it, and we can scale the high-frequency image by a scalar alpha to increase its effect: sharpened_image = original_image + alpha * (original_image - blurred_image)
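A minimal sketch of this formula, assuming a grayscale float image with values in [0, 1]. The names `gaussian_blur` and `sharpen`, the default sigma, the edge-replicated borders, and the final clipping are assumptions made for illustration, not details taken from the project.

```python
import numpy as np

def gaussian_blur(image, sigma):
    """Separable Gaussian blur with edge-replicated borders (hypothetical helper)."""
    size = 2 * int(3 * sigma) + 1
    ax = np.arange(size) - size // 2
    g = np.exp(-ax ** 2 / (2 * sigma ** 2))
    g /= g.sum()
    padded = np.pad(image, size // 2, mode="edge")
    # Blur along rows, then along columns; "valid" undoes the padding.
    rows = np.apply_along_axis(lambda r: np.convolve(r, g, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, g, mode="valid"), 0, rows)

def sharpen(image, alpha, sigma=1.0):
    blurred = gaussian_blur(image, sigma)
    high_freq = image - blurred                 # what the blur removed
    sharpened = image + alpha * high_freq
    return np.clip(sharpened, 0.0, 1.0)         # assumes a [0, 1] intensity range

# Toy input (assumption): a soft vertical step from 0.25 to 0.75.
step = np.full((8, 8), 0.25)
step[:, 4:] = 0.75
sharp = sharpen(step, alpha=2.0)
```

On the step image, the pixels on either side of the edge are pushed further apart, which is the overshoot that makes edges look crisper. Flat regions are left unchanged because the high-frequency image is zero there.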

Sharpening Images using a single convolution filter

Now, instead of 2 convolutions, we combine the filters first and then do a single convolution with the image. This should give the same result and take much less time.
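One way to build such a combined filter is to expand the sharpening formula: image + alpha * (image - image * G) equals image convolved with (1 + alpha) * delta - alpha * G, where delta is the unit impulse. The sketch below checks this numerically. The helper names, the 5x5 kernel size, sigma = 1.0, and alpha = 2.0 are hypothetical choices for the example.

```python
import numpy as np

def convolve2d_same(image, kernel):
    """Zero-padded 2D convolution, same output size (odd-sized kernels assumed)."""
    H, W = image.shape
    kh, kw = kernel.shape
    full = np.zeros((H + kh - 1, W + kw - 1))
    for i in range(kh):
        for j in range(kw):
            full[i:i + H, j:j + W] += kernel[i, j] * image
    top, left = (kh - 1) // 2, (kw - 1) // 2
    return full[top:top + H, left:left + W]

def gaussian_kernel(size, sigma):
    ax = np.arange(size) - (size - 1) / 2.0
    g1d = np.exp(-ax ** 2 / (2 * sigma ** 2))
    g2d = np.outer(g1d, g1d)
    return g2d / g2d.sum()

def sharpen_kernel(size, sigma, alpha):
    """Single filter: (1 + alpha) * unit impulse - alpha * Gaussian."""
    G = gaussian_kernel(size, sigma)
    delta = np.zeros_like(G)
    delta[size // 2, size // 2] = 1.0
    return (1 + alpha) * delta - alpha * G

rng = np.random.default_rng(0)
image = rng.random((12, 12))   # stand-in for a grayscale image
alpha = 2.0

# Blur, subtract, scale, add.
blurred = convolve2d_same(image, gaussian_kernel(5, 1.0))
two_step = image + alpha * (image - blurred)

# Single convolution with the combined filter.
kernel = sharpen_kernel(5, 1.0, alpha)
one_step = convolve2d_same(image, kernel)
```

The combined kernel sums to 1, so overall brightness is preserved, and `one_step` agrees with `two_step` up to floating-point error because convolution is linear.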

Sharpening Images

Now, I use my own image of a bird. The original image is very sharp. I first low-passed it to get a blurry image, and then used the blurry image to find the high frequency edges to manually sharpen the (blurred) image. As alpha increases, the images get more and more sharp, until it gets overdone.

alpha = 3 or 4 seems to give the best sharpened image, and the one closest (though understandably not nearly as good) to the original super sharp image.

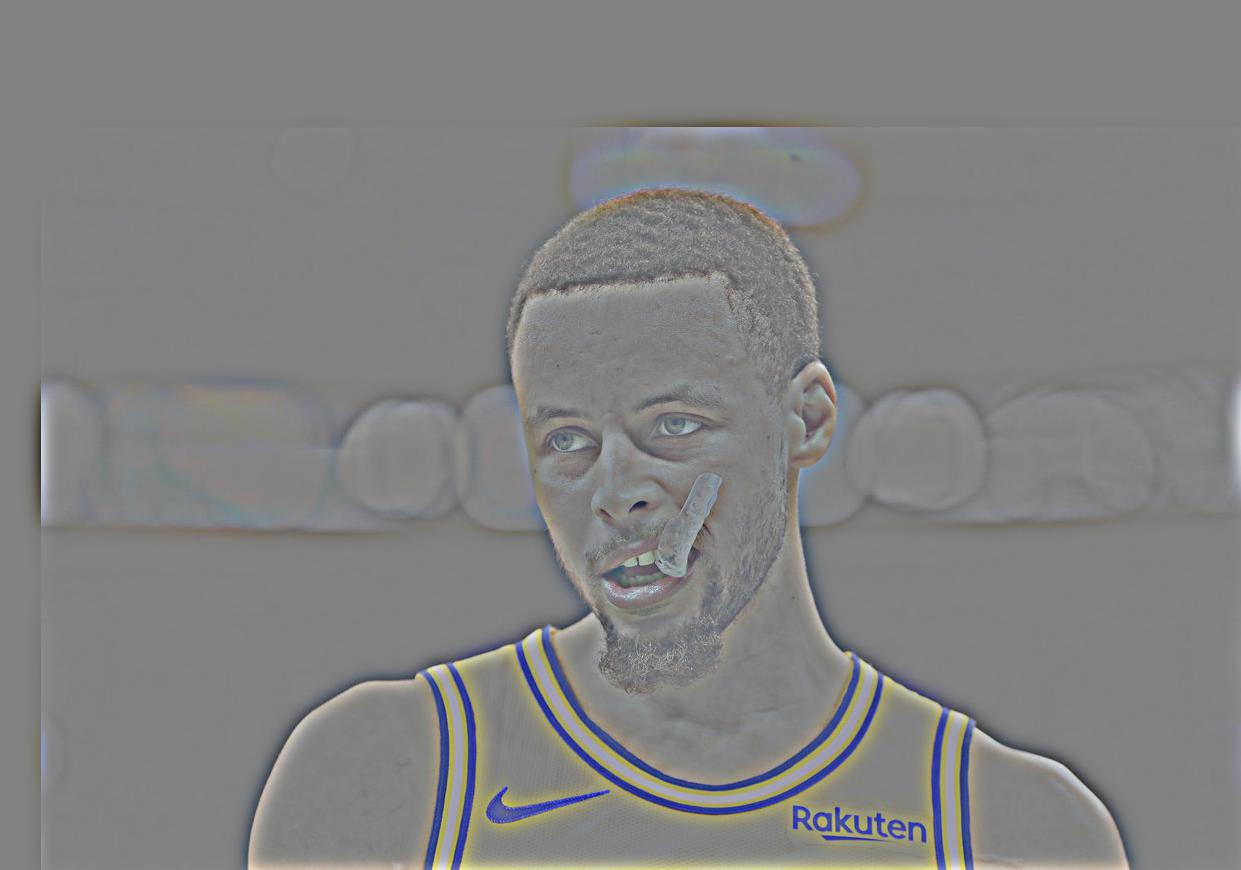

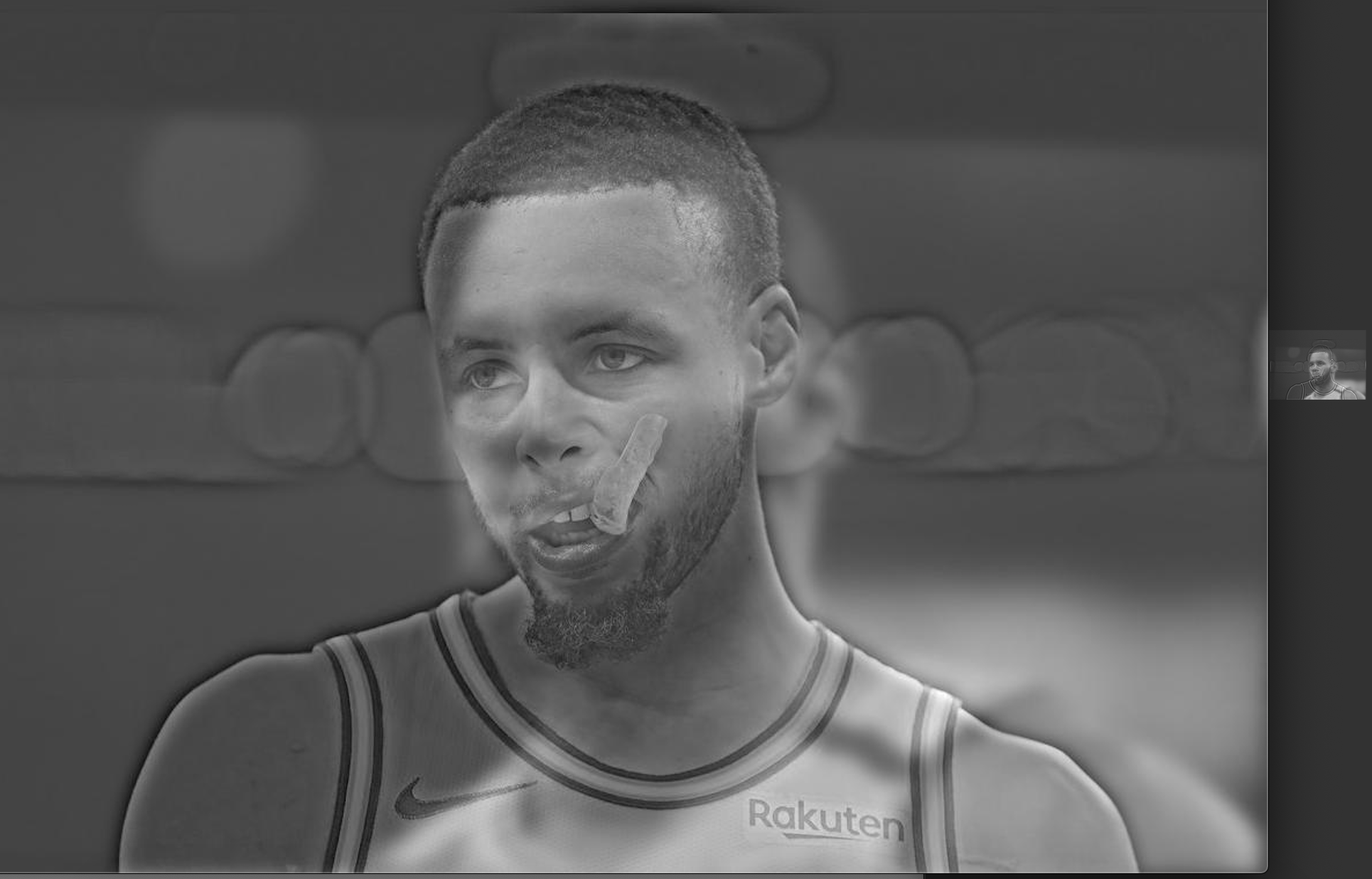

Hybrid Steph / Lebron

I averaged the low-pass and high-pass results of the 2 images to create the hybrid images.
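The averaging step can be sketched as below. This is a minimal illustration, assuming aligned grayscale float images of equal size. The function names and the two sigma values are hypothetical; the cutoffs actually used for each pair are not stated here.

```python
import numpy as np

def gaussian_blur(image, sigma):
    """Separable Gaussian blur with edge-replicated borders (hypothetical helper)."""
    size = 2 * int(3 * sigma) + 1
    ax = np.arange(size) - size // 2
    g = np.exp(-ax ** 2 / (2 * sigma ** 2))
    g /= g.sum()
    padded = np.pad(image, size // 2, mode="edge")
    rows = np.apply_along_axis(lambda r: np.convolve(r, g, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, g, mode="valid"), 0, rows)

def hybrid_image(low_src, high_src, sigma_low=2.0, sigma_high=1.0):
    # Low frequencies of one image: what survives a Gaussian blur.
    low = gaussian_blur(low_src, sigma_low)
    # High frequencies of the other: the image minus its blurred version.
    high = high_src - gaussian_blur(high_src, sigma_high)
    # Average the two results, as described above.
    return 0.5 * (low + high)

rng = np.random.default_rng(0)
im_far = rng.random((10, 10))    # stand-in for the image seen from far away
im_near = rng.random((10, 10))   # stand-in for the image seen up close
hybrid = hybrid_image(im_far, im_near)
```

Swapping the two arguments swaps which image dominates up close and which dominates from a distance.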

Hybrid Hemoa

Hybrid Chris Hemsworth and Jason Momoa

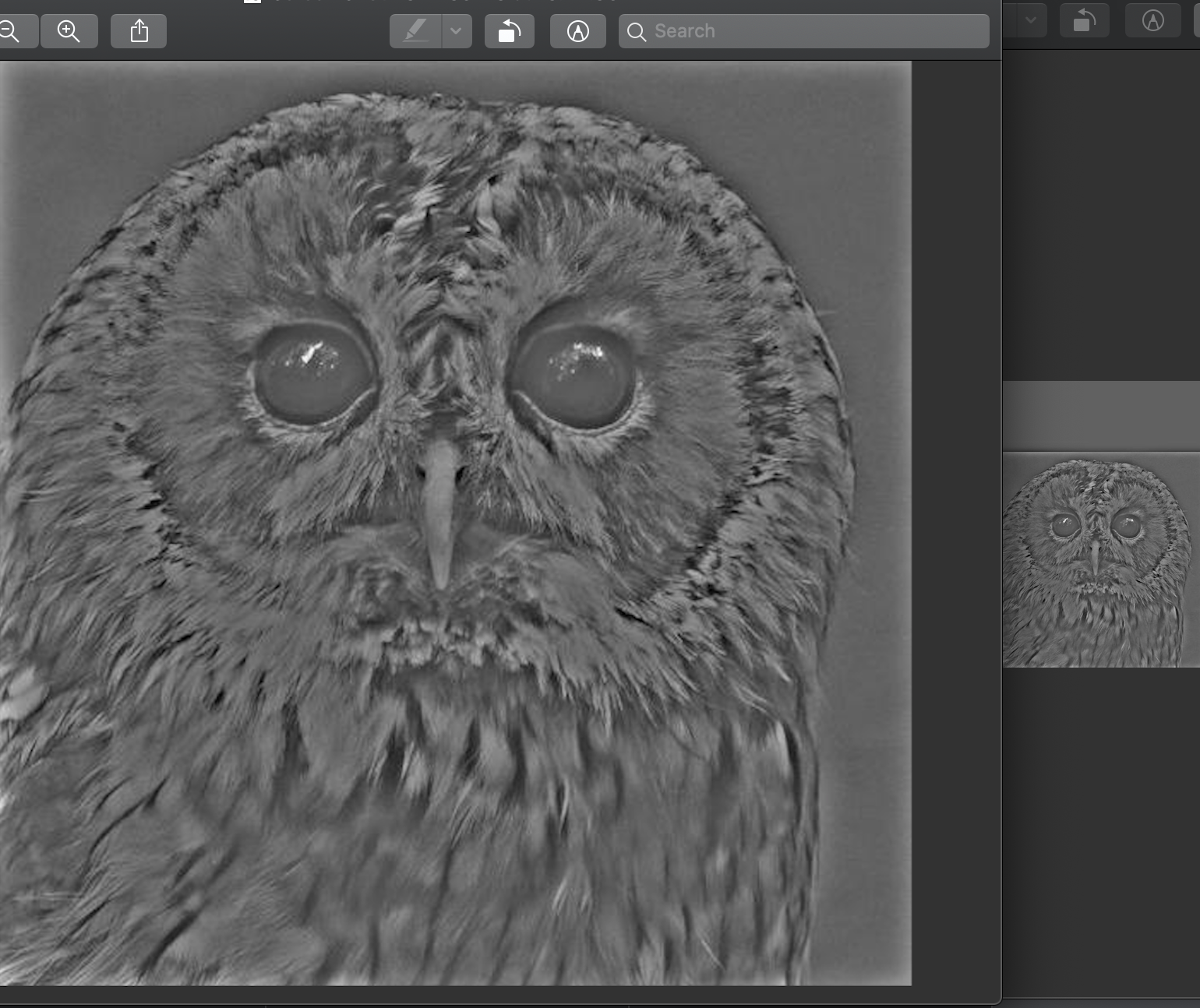

Hybrid Dowl

Hybrid Dog and Owl. Another good example of a hybrid image.

Hybrid Dowl

Hybrid Owl and Dog. In this case, the owl and dog were switched, so the one that was low-pass-filtered is now high-pass-filtered and vice versa. This is a bad example.

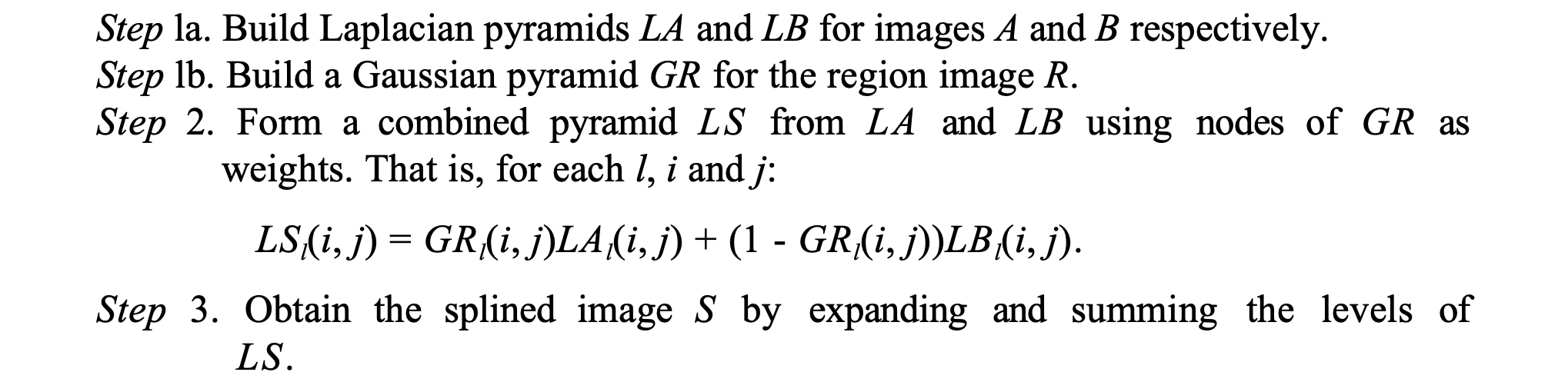

Multi-resolution blending

The following algorithm was used to create the Laplacian pyramid and blend 2 images:

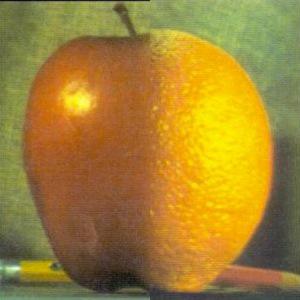
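For readers who cannot see the figure, here is a generic sketch of Laplacian blending. It is not a transcription of the algorithm in the figure: it assumes Gaussian and Laplacian stacks (no downsampling), grayscale float images, and a float mask that is 1 where the first image should show. All function names, sigma = 2.0, and the toy inputs are hypothetical; six levels are used only to match levels 0 through 5 shown in the results.

```python
import numpy as np

def gaussian_blur(image, sigma):
    """Separable Gaussian blur with edge-replicated borders (hypothetical helper)."""
    size = 2 * int(3 * sigma) + 1
    ax = np.arange(size) - size // 2
    g = np.exp(-ax ** 2 / (2 * sigma ** 2))
    g /= g.sum()
    padded = np.pad(image, size // 2, mode="edge")
    rows = np.apply_along_axis(lambda r: np.convolve(r, g, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, g, mode="valid"), 0, rows)

def gaussian_stack(image, levels, sigma):
    # Level 0 is the input; each later level is a blur of the previous one.
    stack = [image]
    for _ in range(levels - 1):
        stack.append(gaussian_blur(stack[-1], sigma))
    return stack

def laplacian_stack(image, levels, sigma):
    g = gaussian_stack(image, levels, sigma)
    # Band-pass levels are differences of consecutive Gaussian levels.
    lap = [g[i] - g[i + 1] for i in range(levels - 1)]
    lap.append(g[-1])  # the last level keeps the low-frequency residual
    return lap

def blend(im_a, im_b, mask, levels=6, sigma=2.0):
    la = laplacian_stack(im_a, levels, sigma)
    lb = laplacian_stack(im_b, levels, sigma)
    gm = gaussian_stack(mask, levels, sigma)
    # Blend each band with a progressively smoother mask, then sum the bands.
    bands = [m * a + (1 - m) * b for a, b, m in zip(la, lb, gm)]
    return sum(bands)

# Toy inputs (assumption): a white image, a black image, and a left-half mask.
im_a = np.ones((16, 32))
im_b = np.zeros((16, 32))
mask = np.zeros((16, 32))
mask[:, :16] = 1.0
out = blend(im_a, im_b, mask)
```

Because the Laplacian levels telescope, summing a stack recovers the original image, and blending an image with itself returns it unchanged. On the toy inputs, the result fades smoothly from white on the left to black on the right instead of showing a hard seam.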

And here are the results for the oraple = orange + apple

Highest frequency (level 0)

Middle frequency (level 3)

Lowest frequency (level 5)

Final multi-resolution blended image

Multi-resolution blending of hat on campanile

Initial Images

Highest frequency (level 0)

Middle frequency (level 3)

Lowest frequency (level 5)

Final multi-resolution blended image

Multi-resolution blending of soccer player kicking sleeping man

Initial Images

Highest frequency (level 0)

Middle frequency (level 3)

Lowest frequency (level 5)

Final multi-resolution blended image