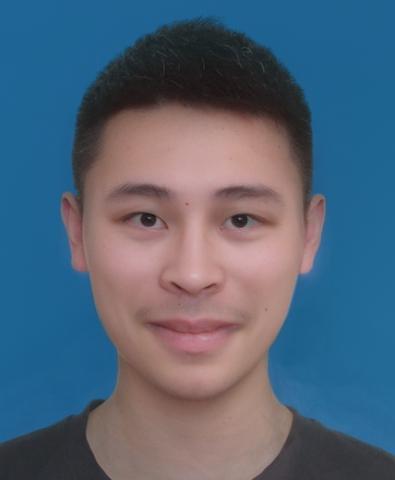

In this project, I smoothly morph my face into Daniel Wu's face and present the result as a GIF. I also compute the mean male face of the Dane dataset and morph my face to it. Then I use that mean face to create caricatures of myself.

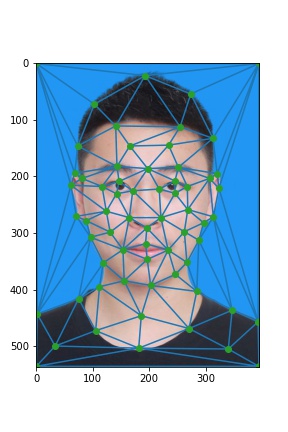

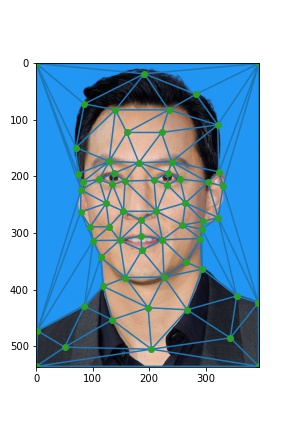

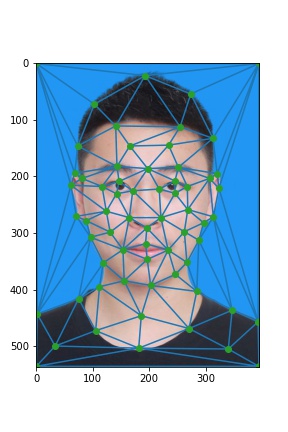

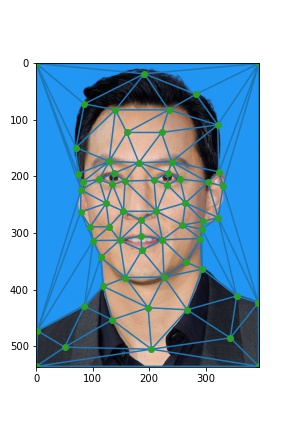

I use plt.ginput to select the key points, making sure the points on the source and target images are clicked in the same order. Then I average each pair of corresponding points, (s_i + t_i) / 2, and compute a triangulation on those mean points. Below are the triangulations based on the mean points and the key points.
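A minimal sketch of this step, assuming SciPy's `scipy.spatial.Delaunay` for the triangulation (the writeup doesn't name the triangulation method) and `(N, 2)` arrays of `(x, y)` clicks. The function name `mean_triangulation` is hypothetical:

```python
import numpy as np
from scipy.spatial import Delaunay


def mean_triangulation(src_pts, dst_pts):
    """Average corresponding key points and triangulate the averaged shape.

    src_pts, dst_pts: (N, 2) arrays of (x, y) points clicked in the same order.
    Returns the mean points and an (M, 3) array of vertex indices, one row per triangle.
    """
    src_pts = np.asarray(src_pts, dtype=float)
    dst_pts = np.asarray(dst_pts, dtype=float)
    if src_pts.shape != dst_pts.shape:
        raise ValueError("source and target need the same number of key points")
    mean_pts = (src_pts + dst_pts) / 2.0
    # Delaunay (an assumed choice) triangulates the mean shape. The simplices are
    # indices into the point list, so the same triangles apply to src_pts and dst_pts.
    triangles = Delaunay(mean_pts).simplices
    return mean_pts, triangles


# Collecting points interactively (click in the same order on both images,
# press Enter to finish; timeout=0 disables the timeout):
#   plt.imshow(src_img); src_pts = np.array(plt.ginput(n=-1, timeout=0))
```

Because the triangles are stored as vertex indices, one triangulation of the mean shape is shared by both faces, which is what makes triangle-to-triangle correspondence possible.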

Now I want to do two transformations: (1) warp the triangles of my face to the corresponding triangles of an intermediate shape whose points are interpolated by the warp fraction (call it the midway face), and (2) warp the triangles of Daniel's face to the same triangles of the midway face. For each pair of corresponding triangles I need the affine transformation matrix between them. The 3 pairs of corresponding vertices give a linear system of six equations in the six unknown affine parameters, which I solve to get the matrix.

Then I need to map all the pixels inside each triangle to the midway face using the computed matrix. I do the mapping in reverse (inverse mapping, from each midway-face pixel back to the input image) so that there are no gaps between the pixels of the midway face. Inverse mapping generally lands on non-integer positions in the input image, so I use interpolation (RectBivariateSpline) to determine the color of each midway-face pixel. After obtaining two warped images (one from me and one from Daniel), I cross-dissolve them to get the final midway face. See the result below with dissolve_frac = 0.5.
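Below is a minimal sketch of the affine solve and the inverse-mapping warp, assuming NumPy arrays and `(x, y)` point order. The function names are hypothetical; the barycentric inside-triangle test and the bilinear spline (`kx=ky=1`; SciPy's default is cubic) are my own choices, not necessarily what the project used:

```python
import numpy as np
from scipy.interpolate import RectBivariateSpline


def compute_affine(src_tri, dst_tri):
    """Return the 3x3 matrix A such that A @ [x, y, 1] maps src_tri onto dst_tri."""
    src_tri = np.asarray(src_tri, dtype=float)
    dst_tri = np.asarray(dst_tri, dtype=float)
    # Each vertex pair gives two equations in the six unknowns a..f:
    #   x' = a*x + b*y + c,   y' = d*x + e*y + f
    M = np.zeros((6, 6))
    rhs = np.zeros(6)
    for i, ((x, y), (xp, yp)) in enumerate(zip(src_tri, dst_tri)):
        M[2 * i] = [x, y, 1, 0, 0, 0]
        M[2 * i + 1] = [0, 0, 0, x, y, 1]
        rhs[2 * i], rhs[2 * i + 1] = xp, yp
    a, b, c, d, e, f = np.linalg.solve(M, rhs)
    return np.array([[a, b, c], [d, e, f], [0.0, 0.0, 1.0]])


def inside_triangle(pts, tri, eps=1e-9):
    """Barycentric test; eps keeps pixels on shared edges so no seams appear."""
    a, b, c = tri
    v0, v1, v2 = b - a, c - a, pts - a
    denom = v0[0] * v1[1] - v1[0] * v0[1]
    u = (v2[:, 0] * v1[1] - v1[0] * v2[:, 1]) / denom
    v = (v0[0] * v2[:, 1] - v2[:, 0] * v0[1]) / denom
    return (u >= -eps) & (v >= -eps) & (u + v <= 1 + eps)


def warp_to_shape(img, src_pts, dst_pts, triangles):
    """Warp img from the src_pts geometry to the dst_pts geometry by inverse mapping."""
    if img.ndim == 3:  # color image: warp each channel independently
        return np.dstack([warp_to_shape(img[..., k], src_pts, dst_pts, triangles)
                          for k in range(img.shape[2])])
    src_pts = np.asarray(src_pts, dtype=float)
    dst_pts = np.asarray(dst_pts, dtype=float)
    h, w = img.shape
    # Spline over the input image: first axis is row (y), second is column (x).
    spline = RectBivariateSpline(np.arange(h), np.arange(w), img.astype(float), kx=1, ky=1)
    ys, xs = np.mgrid[0:h, 0:w]
    grid = np.column_stack([xs.ravel(), ys.ravel()])  # every output pixel as (x, y)
    out = np.zeros((h, w))
    for tri in triangles:
        # Inverse mapping: affine from the output (dst) triangle back to the input (src) one.
        A_inv = compute_affine(dst_pts[tri], src_pts[tri])
        pix = grid[inside_triangle(grid, dst_pts[tri])]
        src_xy = np.column_stack([pix, np.ones(len(pix))]) @ A_inv.T
        # Clip so the spline is only evaluated inside the input image.
        sx = np.clip(src_xy[:, 0], 0, w - 1)
        sy = np.clip(src_xy[:, 1], 0, h - 1)
        out[pix[:, 1], pix[:, 0]] = spline.ev(sy, sx)
    return out
```

Testing every pixel against every triangle keeps the sketch short but is slow on large images; restricting the test to each triangle's bounding box is the usual speed-up.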

I'm much more handsome, aren't I?

As in the steps above, for each frame I first warp the source and target images into an intermediate shape whose key points are a weighted average of the two sets of key points. Then I cross-dissolve the two warped images to get the frame. I use warp_frac = linspace(0.0, 1.0, 45) and dissolve_frac = warp_frac ** 2, which gives 45 frames, each shown for 1/30 second. Below is the morphing GIF!
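A minimal sketch of the frame loop, assuming a piecewise-affine warp function with the hypothetical signature `warp(img, from_pts, to_pts, triangles)` is passed in; `morph_schedule`, `intermediate_points`, and `morph_frames` are also hypothetical names:

```python
import numpy as np


def morph_schedule(n_frames=45):
    """warp_frac goes linearly from 0 to 1; dissolve_frac = warp_frac ** 2 lags behind it."""
    warp_fracs = np.linspace(0.0, 1.0, n_frames)
    return warp_fracs, warp_fracs ** 2


def intermediate_points(src_pts, dst_pts, warp_frac):
    """Weighted average of the two key-point sets."""
    src_pts = np.asarray(src_pts, dtype=float)
    dst_pts = np.asarray(dst_pts, dtype=float)
    return (1.0 - warp_frac) * src_pts + warp_frac * dst_pts


def morph_frames(src_img, dst_img, src_pts, dst_pts, triangles, warp, n_frames=45):
    """Build every frame of the morph with the (hypothetical) warp callable."""
    frames = []
    warp_fracs, dissolve_fracs = morph_schedule(n_frames)
    for wf, df in zip(warp_fracs, dissolve_fracs):
        mid_pts = intermediate_points(src_pts, dst_pts, wf)
        src_warped = warp(src_img, src_pts, mid_pts, triangles)
        dst_warped = warp(dst_img, dst_pts, mid_pts, triangles)
        # Cross-dissolve the two images, which now share the same geometry.
        frames.append((1.0 - df) * src_warped + df * dst_warped)
    return frames


FRAME_DURATION_S = 1 / 30  # each frame is displayed for 1/30 second
```

With dissolve_frac = warp_frac ** 2, the colors stay closer to the source than the shape does early in the sequence (at the halfway frame the shape is 50% along but the color only 25%), then catch up at the end.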

I choose the Dane dataset and parse its annotation files to get the key points of every image in which a male subject shows a full frontal face with a neutral expression. I calculate the mean face shape by averaging these key points over all the images. Then I morph every face into that mean face shape. Below are some examples.
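A minimal sketch of the parsing and averaging, under several assumptions: that each `.asf` file lists the point count after its `#` comment lines, then one line per point of the form `<path#> <type> <x rel.> <y rel.> ...` with coordinates relative to a 640x480 image, and that file names follow `<person>-<pose><sex>.asf` with pose `1` meaning frontal neutral and `m` meaning male. Check these against the actual files; the function names are hypothetical:

```python
import numpy as np
from pathlib import Path


def parse_asf(text, width=640, height=480):
    """Parse one .asf annotation into an (N, 2) array of pixel (x, y) points.

    Assumed layout: '#' comment lines, then the point count, then one line per point:
    <path#> <type> <x rel.> <y rel.> <point#> <connects from> <connects to>
    """
    lines = [ln.strip() for ln in text.splitlines()]
    lines = [ln for ln in lines if ln and not ln.startswith("#")]
    n_points = int(lines[0])
    pts = []
    for ln in lines[1:1 + n_points]:
        fields = ln.split()
        # Relative coordinates are scaled to pixels with the assumed image size.
        pts.append((float(fields[2]) * width, float(fields[3]) * height))
    return np.array(pts)


def load_shapes(folder, pattern="*-1m.asf"):
    """Load every annotation matching pattern (default: assumed male, frontal neutral)."""
    return [parse_asf(p.read_text()) for p in sorted(Path(folder).glob(pattern))]


def mean_shape(shapes):
    """Average the key points over all images (every shape must have the same N)."""
    return np.mean(np.stack(shapes), axis=0)
```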

Then I compute the average face of the male population by averaging the color values of all those warped images. Below is the average face. Looks handsome!
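Summing the images is where a silent bug can creep in with 8-bit images: NumPy `uint8` addition wraps around modulo 256. A minimal sketch that accumulates in floating point, assuming the warped images are NumPy arrays of equal shape (`average_face` is a hypothetical name):

```python
import numpy as np


def average_face(warped_images):
    """Pixel-wise mean of images that were all warped to the same mean shape."""
    # Convert to float before summing: adding uint8 arrays wraps around past 255.
    stack = np.stack([np.asarray(im, dtype=np.float64) for im in warped_images])
    return stack.mean(axis=0)
```

The result is a float array on the original 0 to 255 scale; for display, either divide by 255 or convert back with `np.clip(avg, 0, 255).astype(np.uint8)`.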

Finally, I warp my face into the average face's geometry and the average face into my face's geometry. To do that, I resize my photo and the average face to the same dimensions and select a new sequence of key points on my face in the same order as the dataset's key points. Then the morphing happens! Below is the result:

Better photo alignment would improve the result, but I think it is already good.

Now I create caricatures by extrapolating my key points relative to the population mean from the last step, using alpha = 2, alpha = 3, and alpha = -2. See the results below.
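The writeup doesn't state its exact extrapolation formula. One common parameterization, assumed here, moves along the difference between my key points and the mean key points, so that alpha = 1 reproduces my own shape. The function name is hypothetical:

```python
import numpy as np


def caricature_points(my_pts, mean_pts, alpha):
    """Extrapolate along the difference between my shape and the mean shape.

    alpha = 0 gives the mean shape, alpha = 1 my own shape, alpha > 1 exaggerates
    what distinguishes me from the mean, and alpha < 0 pushes past the mean
    in the opposite direction.
    """
    my_pts = np.asarray(my_pts, dtype=float)
    mean_pts = np.asarray(mean_pts, dtype=float)
    return mean_pts + alpha * (my_pts - mean_pts)


# Each caricature then warps my image from my_pts to the extrapolated points,
# e.g. with a hypothetical piecewise-affine warp(img, from_pts, to_pts, triangles):
#   for alpha in (2, 3, -2):
#       target = caricature_points(my_pts, mean_pts, alpha)
#       caricature = warp(my_img, my_pts, target, triangles)
```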

I look very serious in my picture, so I want to use the smiling male subjects of the Dane dataset to morph my face. Below are the images where I morph just the shape, just the appearance, and both. To morph the shape, I compute the mean key points of the smiling sample and warp my face to that mean shape. To morph the appearance, I simply cross-dissolve my face with the mean smiling face.
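A minimal sketch of the three variants, assuming a hypothetical piecewise-affine `warp(img, from_pts, to_pts, triangles)` callable and a mean smiling face that is already in the mean-shape geometry. The `dissolve_frac = 0.5` default is an illustrative choice, not a value from the writeup, and combining the two steps for "both" (warp first, then dissolve) is my assumption:

```python
import numpy as np


def smile_variants(my_img, my_pts, mean_img, mean_pts, triangles, warp, dissolve_frac=0.5):
    """Return (shape_only, appearance_only, both) images."""
    my_img = np.asarray(my_img, dtype=float)
    mean_img = np.asarray(mean_img, dtype=float)
    # Shape only: move my pixels onto the mean smiling key points.
    shape_only = warp(my_img, my_pts, mean_pts, triangles)
    # Appearance only: cross-dissolve the colors, leaving my geometry alone.
    appearance_only = (1 - dissolve_frac) * my_img + dissolve_frac * mean_img
    # Both: warp first so the two faces line up, then cross-dissolve.
    both = (1 - dissolve_frac) * shape_only + dissolve_frac * mean_img
    return shape_only, appearance_only, both
```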

Now I am happy!

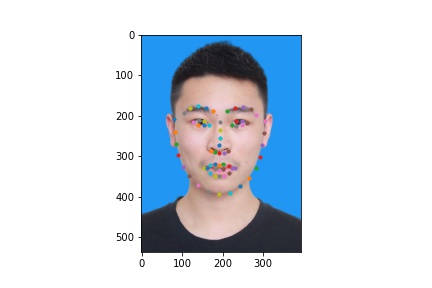

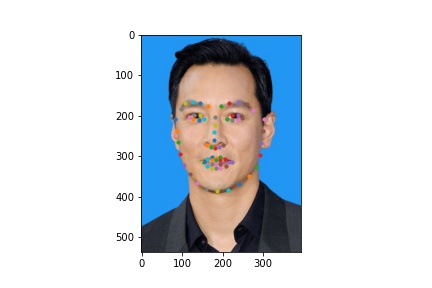

What makes me even happier is automatic morphing. All we really need is automatic key-point selection, which dlib provides: I use its pretrained shape_predictor_68_face_landmarks model. Tada! Here are the selected key points, and I don't need to click them myself anymore!
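A minimal sketch of landmark detection with dlib's Python API, assuming the model file `shape_predictor_68_face_landmarks.dat` has been downloaded separately (dlib distributes it apart from the library) and that the image is a `uint8` RGB or grayscale NumPy array. The helper names are hypothetical, and adding the image corners is an optional extra of mine so the background gets triangulated too:

```python
import numpy as np


def shape_to_array(shape):
    """Convert a dlib full_object_detection into an (N, 2) float array of (x, y)."""
    return np.array([(shape.part(i).x, shape.part(i).y) for i in range(shape.num_parts)],
                    dtype=float)


def add_corner_points(pts, width, height):
    """Optional (assumption): append the image corners so the background is warped too."""
    corners = np.array([[0, 0], [width - 1, 0], [0, height - 1], [width - 1, height - 1]],
                       dtype=float)
    return np.vstack([pts, corners])


def detect_landmarks(img, predictor_path="shape_predictor_68_face_landmarks.dat"):
    """Return the 68 landmarks of the first face dlib finds in img."""
    import dlib  # imported here so the helpers above work without dlib installed

    detector = dlib.get_frontal_face_detector()
    predictor = dlib.shape_predictor(predictor_path)
    faces = detector(img, 1)  # upsample once to help find smaller faces
    if len(faces) == 0:
        raise ValueError("dlib found no face in the image")
    return shape_to_array(predictor(img, faces[0]))
```

Because the 68 points always come back in the same order, the landmarks from two photos correspond automatically and can go straight into the triangulation and warp steps.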

I also create a 60-frame GIF of this morph.

Image of Daniel Wu from https://warnerbros.fandom.com/wiki/Daniel_Wu

Dane dataset from https://web.archive.org/web/20210305094647/http://www2.imm.dtu.dk/~aam/datasets/datasets.html