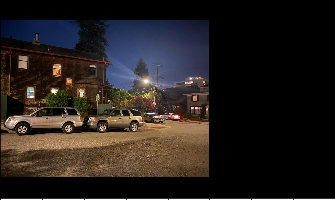

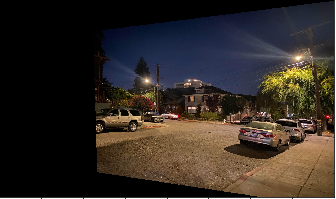

For this step, I photographed murals and streets around Berkeley and defined 10 corresponding points between each pair of images showing the same scene from different perspectives.

To recover the homographies, we used the keypoints shown above. A homography has 8 degrees of freedom and each correspondence contributes two equations, so 10 correspondences give an overdetermined system. We solved it with least squares to estimate the homography between the two images loaded in the previous section.

This yielded a 3x3 matrix that maps points in one image to the corresponding points in the other, which we could then use to warp images for rectification and for constructing mosaics.
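As a rough illustration of the least-squares step, here is a minimal sketch, not necessarily the exact code used in this project. It assumes the bottom-right entry of H is fixed to 1, and the function name `compute_homography` is hypothetical.

```python
import numpy as np

def compute_homography(src_pts, dst_pts):
    """Estimate a 3x3 H with dst ~ H @ src, assuming H[2, 2] = 1."""
    src = np.asarray(src_pts, dtype=float)
    dst = np.asarray(dst_pts, dtype=float)
    n = src.shape[0]
    A = np.zeros((2 * n, 8))
    b = np.zeros(2 * n)
    for i, ((x, y), (u, v)) in enumerate(zip(src, dst)):
        # Each correspondence (x, y) -> (u, v) contributes two linear equations.
        A[2 * i] = [x, y, 1, 0, 0, 0, -u * x, -u * y]
        A[2 * i + 1] = [0, 0, 0, x, y, 1, -v * x, -v * y]
        b[2 * i] = u
        b[2 * i + 1] = v
    # With more than 4 correspondences, lstsq returns the least-squares solution.
    h, *_ = np.linalg.lstsq(A, b, rcond=None)
    return np.append(h, 1.0).reshape(3, 3)
```

With 10 point pairs, `A` has 20 rows and 8 columns, so noise in individual clicks is averaged out rather than fit exactly.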

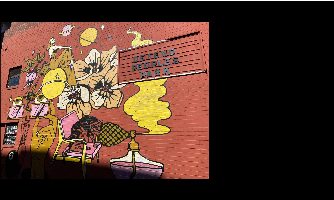

To warp the images, we used the homography matrix H we calculated to map pixel coordinates to the locations in the original image to sample from. I then used the `cv2.remap` function to sample the original image at those locations. (I also experimented with `RectBivariateSpline`, which gave reasonable results but ran into issues at the borders of images.)
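The sampling step can be sketched as follows. This is an assumption-laden illustration: it treats H as mapping source coordinates to output coordinates (so the inverse of H is applied to the output grid), and it uses a NumPy nearest-neighbour sampler in place of `cv2.remap` to stay self-contained. The names `inverse_warp_maps` and `sample_nearest` are hypothetical.

```python
import numpy as np

def inverse_warp_maps(H, out_shape):
    """For every output pixel, compute the source coordinates to sample.

    Assumes H maps source -> output and that no output pixel lands
    on the line at infinity (third coordinate equal to zero).
    """
    h_out, w_out = out_shape
    xs, ys = np.meshgrid(np.arange(w_out), np.arange(h_out))
    pts = np.stack([xs.ravel(), ys.ravel(), np.ones(xs.size)])  # 3 x N
    src = np.linalg.inv(H) @ pts
    src = src[:2] / src[2]  # back from homogeneous coordinates
    map_x = src[0].reshape(h_out, w_out).astype(np.float32)
    map_y = src[1].reshape(h_out, w_out).astype(np.float32)
    return map_x, map_y

def sample_nearest(image, map_x, map_y):
    """Nearest-neighbour sampling; pixels that map outside the source stay 0."""
    h, w = image.shape[:2]
    xi = np.rint(map_x).astype(int)
    yi = np.rint(map_y).astype(int)
    valid = (xi >= 0) & (xi < w) & (yi >= 0) & (yi < h)
    out = np.zeros(map_x.shape + image.shape[2:], dtype=image.dtype)
    out[valid] = image[yi[valid], xi[valid]]
    return out
```

With OpenCV available, the same float32 maps could instead be passed to `cv2.remap(image, map_x, map_y, cv2.INTER_LINEAR)` for interpolated sampling.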

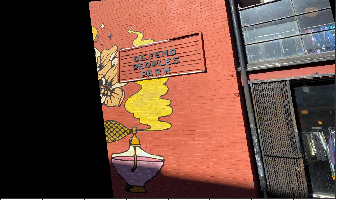

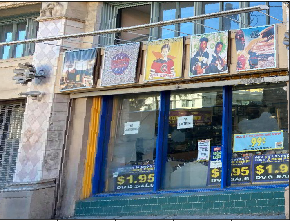

Another interesting application is rectifying an image so that a planar surface appears fronto-parallel. We did this to validate the warping, computing the homography from keypoints on the plane that correspond to the four corners of the original image.
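For rectification, four correspondences determine the homography exactly, so the system can be solved directly. The sketch below assumes the plane's corners are given in top-left, top-right, bottom-right, bottom-left order and that H[2, 2] = 1. The function name `rectifying_homography` and the corner coordinates in the usage note are made up for illustration.

```python
import numpy as np

def rectifying_homography(plane_corners, width, height):
    """H sending four plane corners (TL, TR, BR, BL) to the corners
    of a width x height image, assuming H[2, 2] = 1."""
    targets = [(0, 0), (width - 1, 0), (width - 1, height - 1), (0, height - 1)]
    A, b = [], []
    for (x, y), (u, v) in zip(plane_corners, targets):
        A.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        A.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b += [u, v]
    # 8 equations, 8 unknowns: an exact solve instead of least squares.
    h = np.linalg.solve(np.array(A, dtype=float), np.array(b, dtype=float))
    return np.append(h, 1.0).reshape(3, 3)
```

For example, `rectifying_homography([(120, 80), (400, 60), (420, 300), (100, 330)], 300, 200)` would give a matrix that stretches that quadrilateral to fill a 300 x 200 frame.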

Finally, we blended the warped images together into a mosaic using weighted averaging. I computed a mask of the region where two images in the mosaic overlap and set the mask value to 0.5 there, so that the two images contribute equally. The mask value would have to be updated for each additional image that overlaps the same region.
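One way to avoid updating the mask by hand is to divide by the number of images covering each pixel. This gives 0.5 where two images overlap and 1/3 where three do. The sketch below is a generalization under that assumption, not the exact blending code used here. It assumes all images are already warped onto a common canvas, with boolean coverage masks, and `blend_average` is a hypothetical name.

```python
import numpy as np

def blend_average(images, masks):
    """Average the images at each pixel over the images that cover it."""
    acc = np.zeros(images[0].shape, dtype=float)
    count = np.zeros(images[0].shape[:2], dtype=float)
    for img, mask in zip(images, masks):
        m = mask.astype(float)
        # Broadcast the 2D mask over colour channels when present.
        acc += img * (m[..., None] if img.ndim == 3 else m)
        count += m
    # Weight is 1 / (number of covering images); uncovered pixels stay 0.
    weights = np.divide(1.0, count, out=np.zeros_like(count), where=count > 0)
    return acc * (weights[..., None] if acc.ndim == 3 else weights)
```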

I learned how we can warp and rectify images to change perspective easily, as well as how to stitch together images by computing homographies, which is pretty cool.