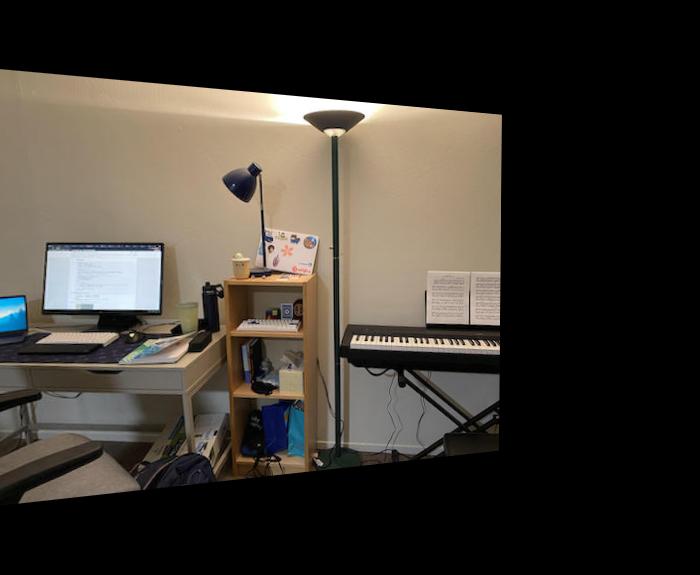

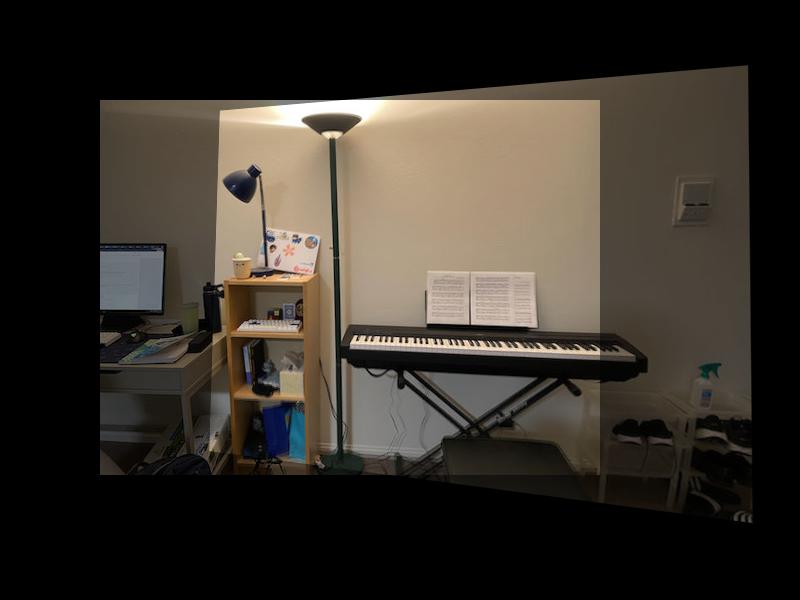

Displayed below are the photos that will be warped and blended together.

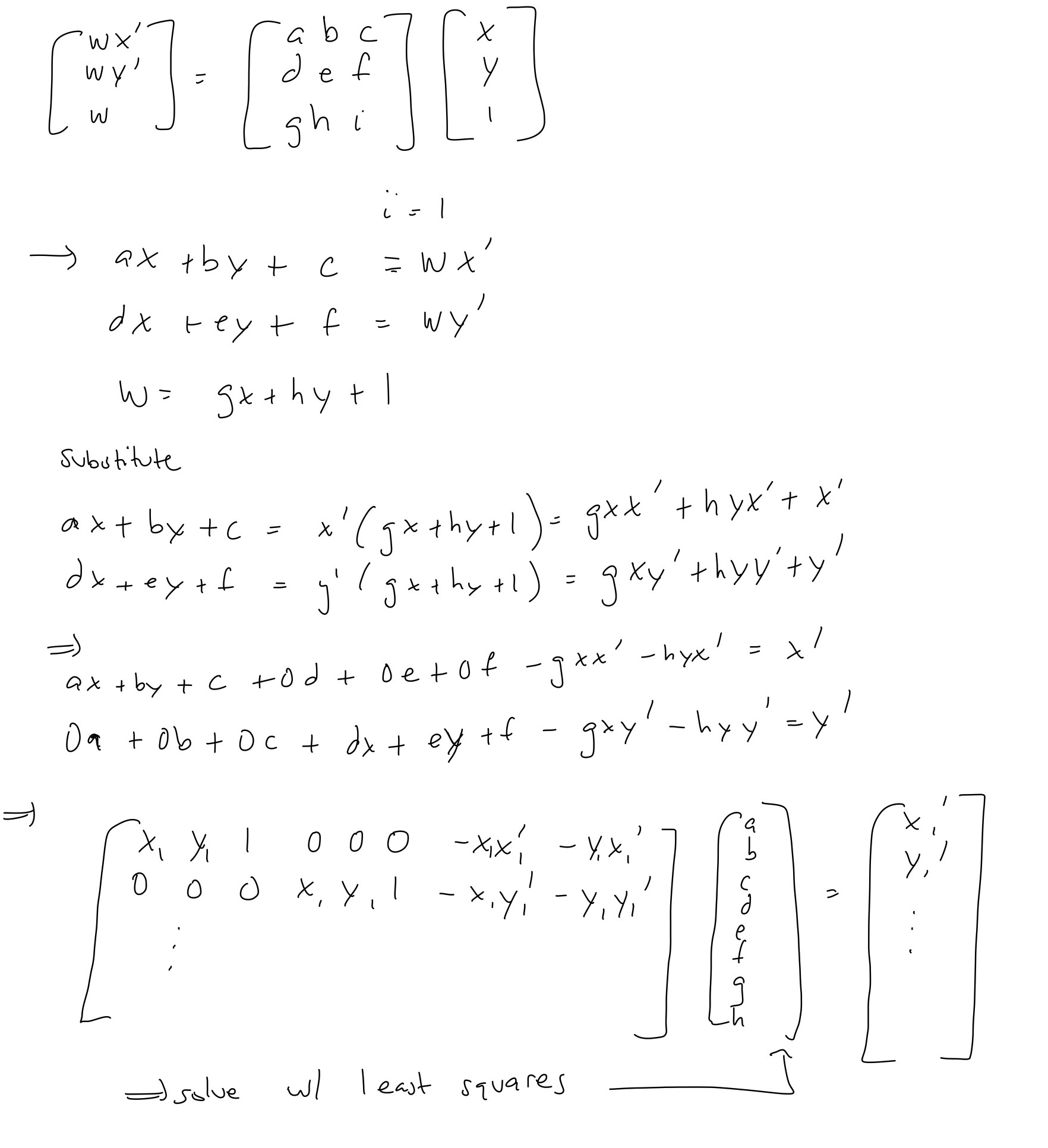

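In this part, we want to find a homography H that maps points in the source image to their corresponding points in the target image, so that we can warp the source image's perspective to match the target. A homography has 8 degrees of freedom (it is only defined up to scale), and each point correspondence contributes two linear equations, so at least four correspondences are needed. To find H, we set up a system of equations; with more than four correspondences this system is overconstrained, and we solve it with least squares. I show the setup below. For each pair of images, I selected 10 correspondence points at corners, which gives 20 equations for the 8 unknowns.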
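Below is a minimal NumPy sketch of that least-squares setup. It assumes the common parameterization that fixes the bottom-right entry of H to 1; the write-up does not say which normalization was used, and `compute_homography` is a hypothetical name. Each correspondence (x, y) → (x', y') contributes the two rows described in the comments, so 10 points give a 20 × 8 system.

```python
import numpy as np

def compute_homography(src_pts, dst_pts):
    """Estimate a 3x3 homography H mapping src_pts -> dst_pts.

    src_pts, dst_pts: (N, 2) arrays of (x, y) points with N >= 4.
    Assumes h33 = 1 and solves the resulting 2N x 8 system with least squares.
    """
    src = np.asarray(src_pts, dtype=float)
    dst = np.asarray(dst_pts, dtype=float)
    A, b = [], []
    for (x, y), (xp, yp) in zip(src, dst):
        # x' = (h11 x + h12 y + h13) / (h31 x + h32 y + 1), rearranged to be linear in h
        A.append([x, y, 1, 0, 0, 0, -x * xp, -y * xp])
        b.append(xp)
        # y' = (h21 x + h22 y + h23) / (h31 x + h32 y + 1), rearranged the same way
        A.append([0, 0, 0, x, y, 1, -x * yp, -y * yp])
        b.append(yp)
    h, *_ = np.linalg.lstsq(np.array(A), np.array(b), rcond=None)
    # Append the fixed h33 = 1 and reshape the 9 entries into a 3x3 matrix
    return np.append(h, 1.0).reshape(3, 3)
```

When pixel coordinates are large, normalizing the points (centering and scaling them) before solving can improve the numerical conditioning of the system.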

After recovering the homography, I warp the image with inverse warping and linear interpolation: for each pixel in the target image, we apply the inverse of H to find the corresponding point in the source image and interpolate the pixel value there, similar to project 3.
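The inverse-warping step can be sketched in NumPy as follows, assuming H maps source coordinates to target coordinates and using bilinear interpolation (linear interpolation in both x and y). `inverse_warp`, `bilinear_sample`, and the fixed `out_shape` canvas are hypothetical; a full mosaic pipeline would typically size the canvas by warping the source image's corners first.

```python
import numpy as np

def bilinear_sample(img, xs, ys):
    """Sample img (H, W) or (H, W, C) at float coordinates; out-of-bounds pixels become 0."""
    h, w = img.shape[:2]
    valid = (xs >= 0) & (xs <= w - 1) & (ys >= 0) & (ys <= h - 1)
    # Integer corners of the surrounding 2x2 pixel neighborhood (clipped to the image)
    x0 = np.clip(np.floor(xs).astype(int), 0, w - 1)
    y0 = np.clip(np.floor(ys).astype(int), 0, h - 1)
    x1 = np.clip(x0 + 1, 0, w - 1)
    y1 = np.clip(y0 + 1, 0, h - 1)
    wx, wy = xs - x0, ys - y0  # fractional offsets inside the neighborhood
    if img.ndim == 3:  # broadcast weights over color channels
        wx, wy, valid = wx[..., None], wy[..., None], valid[..., None]
    top = (1 - wx) * img[y0, x0] + wx * img[y0, x1]
    bot = (1 - wx) * img[y1, x0] + wx * img[y1, x1]
    return np.where(valid, (1 - wy) * top + wy * bot, 0.0)

def inverse_warp(img, H, out_shape):
    """Warp img by H (source -> target) onto a canvas of out_shape = (rows, cols)."""
    rows, cols = out_shape
    yy, xx = np.mgrid[0:rows, 0:cols]
    # Homogeneous coordinates of every target pixel, shape 3 x N
    target = np.stack([xx.ravel(), yy.ravel(), np.ones(xx.size)])
    src = np.linalg.inv(H) @ target  # map target pixels back into the source image
    xs = (src[0] / src[2]).reshape(rows, cols)
    ys = (src[1] / src[2]).reshape(rows, cols)
    return bilinear_sample(img, xs, ys)
```

Sampling the source for every target pixel, rather than pushing source pixels forward, guarantees that the output has no holes.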

In this part, I test the homography and warping functions by rectifying square and rectangular planar surfaces so that they become fronto-parallel. We do this by selecting the corners of the plane in the image and defining artificial corresponding points that form a fronto-parallel rectangle.
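Here is a hedged sketch of how the artificial target points might be constructed. It assumes the clicked corners are ordered top-left, top-right, bottom-right, bottom-left, and it sizes the rectangle by averaging opposite edge lengths. That sizing is only a heuristic, since perspective foreshortening distorts edge lengths; for a known square you would set the width and height equal instead. With exactly four correspondences, the 8 × 8 system can be solved exactly. `fronto_parallel_targets` and `homography_from_4` are hypothetical names.

```python
import numpy as np

def fronto_parallel_targets(corners):
    """Artificial rectangle corners (TL, TR, BR, BL) sized from the clicked corners.

    Heuristic assumption: width/height are the averages of opposite edge lengths.
    """
    tl, tr, br, bl = np.asarray(corners, dtype=float)
    width = (np.linalg.norm(tr - tl) + np.linalg.norm(br - bl)) / 2
    height = (np.linalg.norm(bl - tl) + np.linalg.norm(br - tr)) / 2
    return np.array([[0, 0], [width, 0], [width, height], [0, height]])

def homography_from_4(src, dst):
    """Exact homography (h33 = 1) from exactly four (x, y) correspondences."""
    A, b = [], []
    for (x, y), (xp, yp) in zip(src, dst):
        A.append([x, y, 1, 0, 0, 0, -x * xp, -y * xp])
        b.append(xp)
        A.append([0, 0, 0, x, y, 1, -x * yp, -y * yp])
        b.append(yp)
    h = np.linalg.solve(np.array(A, dtype=float), np.array(b, dtype=float))
    return np.append(h, 1.0).reshape(3, 3)
```

Warping the image with the resulting H then makes the selected plane appear as if viewed head-on.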

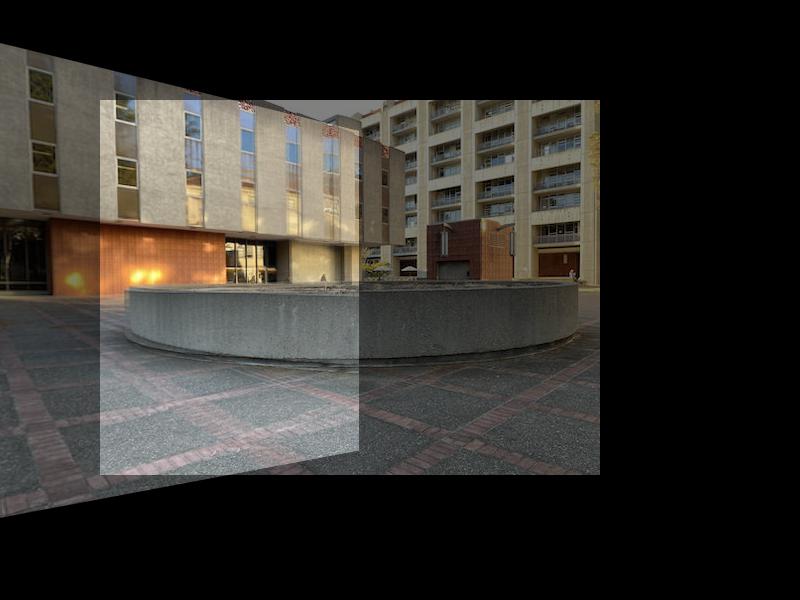

In this part, we blend the warped images together to create the final mosaic.
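The write-up does not say which blending method was used. As one possible approach, here is a simple linear feathering (weighted averaging) sketch. It assumes two images already warped onto a shared canvas, each with a boolean mask of valid pixels, and assumes `img1` lies to the left of `img2`. `feather_blend` is a hypothetical name. In the overlap, the weight of `img1` ramps linearly from 1 at the overlap's left edge down to 0 at its right edge.

```python
import numpy as np

def feather_blend(img1, mask1, img2, mask2):
    """Blend two canvas-aligned images with a horizontal linear ramp across their overlap.

    img1, img2: (H, W) or (H, W, C) float arrays; mask1, mask2: (H, W) bool arrays.
    Assumes img1 is the left image and img2 the right image.
    """
    out = np.zeros_like(img1, dtype=float)
    overlap = mask1 & mask2
    only1, only2 = mask1 & ~mask2, mask2 & ~mask1
    # Pixels covered by a single image are copied unchanged
    out[only1] = img1[only1]
    out[only2] = img2[only2]
    if overlap.any():
        cols = np.nonzero(overlap.any(axis=0))[0]
        left, right = cols.min(), cols.max()
        x = np.arange(img1.shape[1])
        # alpha = 1 at the overlap's left edge, 0 at its right edge
        alpha = np.clip((right - x) / max(right - left, 1), 0.0, 1.0)
        a = np.broadcast_to(alpha, overlap.shape)[overlap]
        if img1.ndim == 3:
            a = a[:, None]  # broadcast over color channels
        out[overlap] = a * img1[overlap] + (1 - a) * img2[overlap]
    return out
```

Feathering hides exposure differences along the seam but can leave ghosting where the alignment is imperfect; multiresolution (Laplacian pyramid) blending is a common alternative.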

It was very cool to walk through the actual computation, mathematics, and process behind how panoramic photos can be made by warping perspectives and stitching images together. I learned that good point correspondences are important, and that creating a clean mosaic image is difficult and requires a lot of extra image processing! It was also very cool to see how much information could be recovered by warping the perspective of the image!