CS 194-26 Project 4 Part A - Image Warping and Mosaicing

Jerry Zhang

Overview

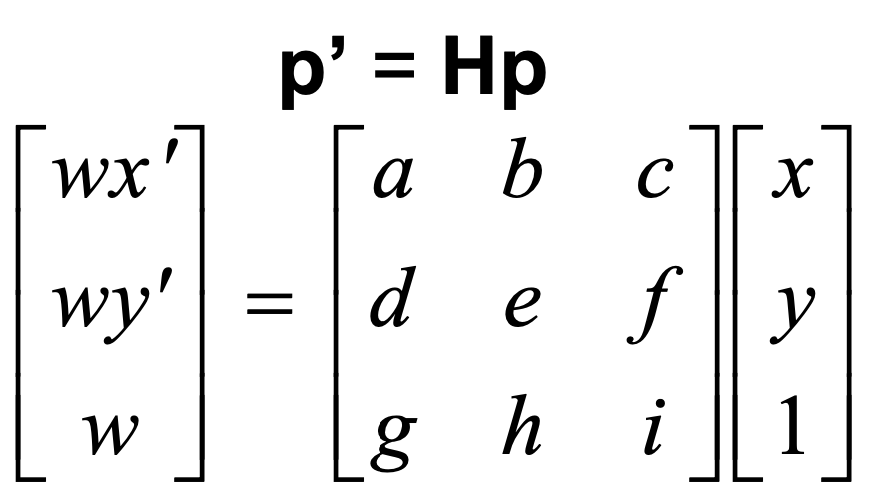

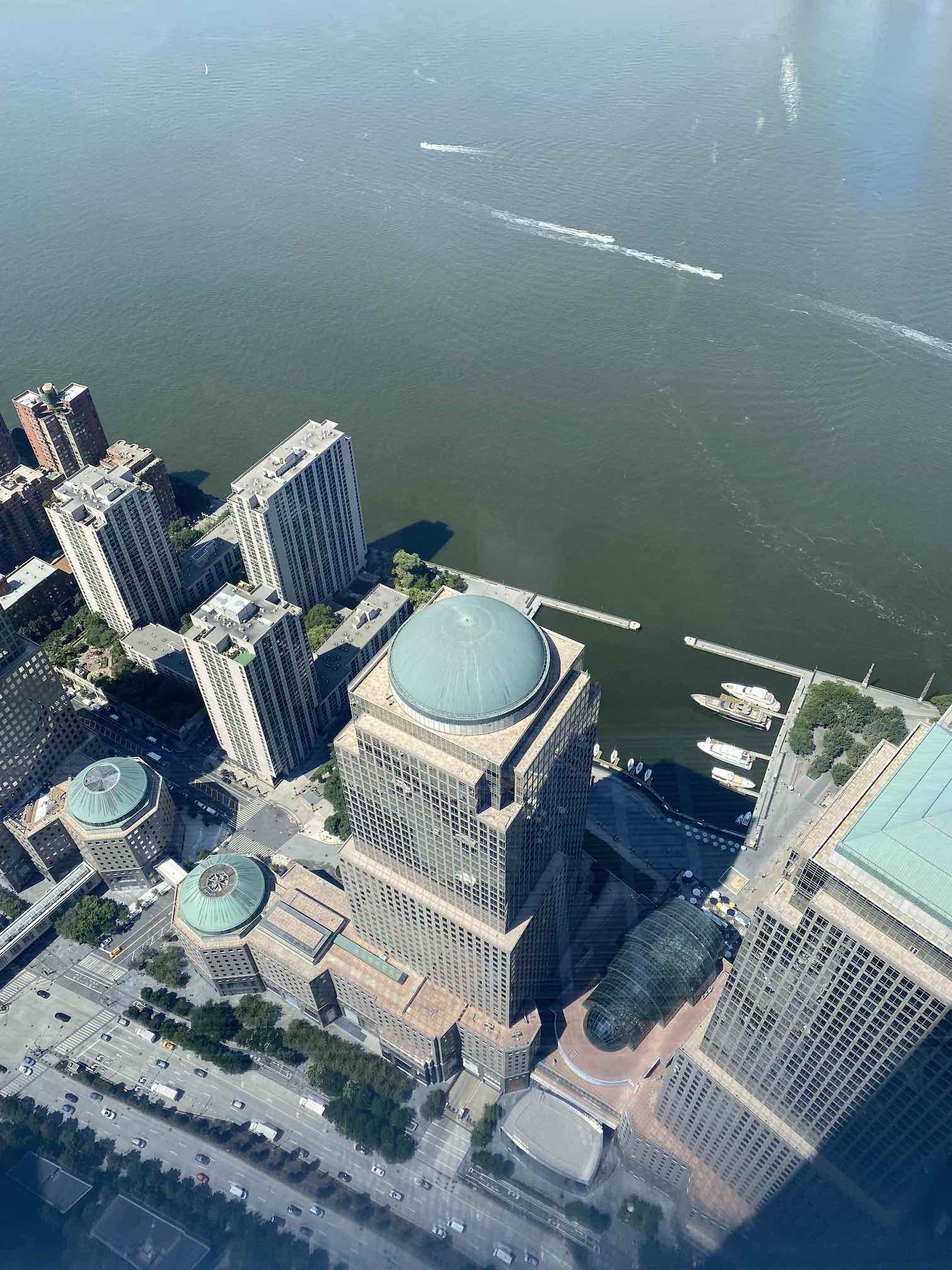

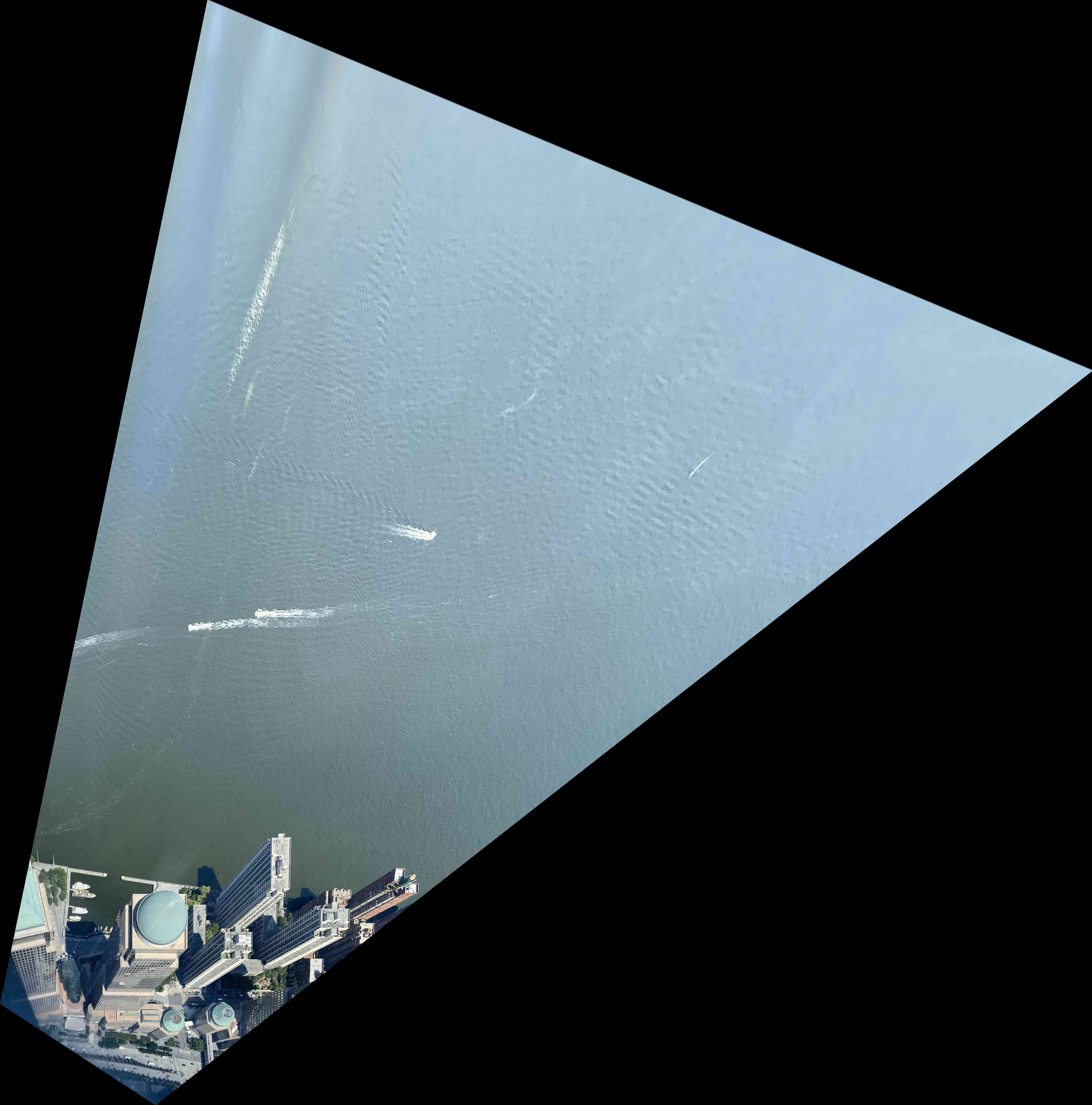

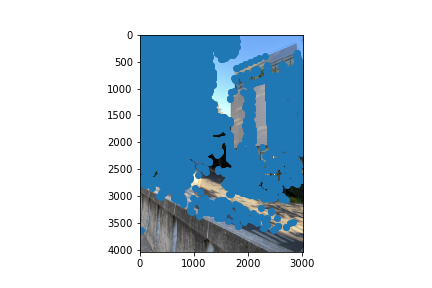

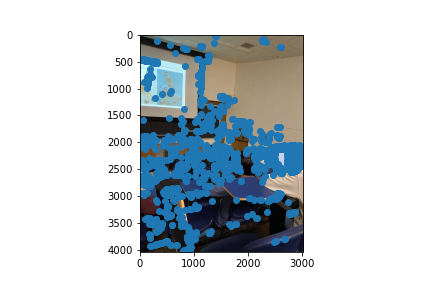

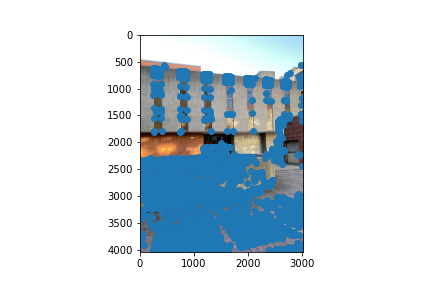

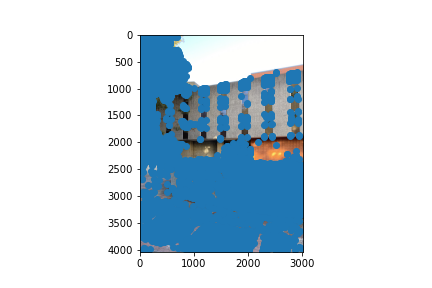

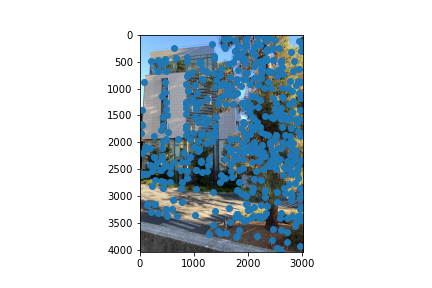

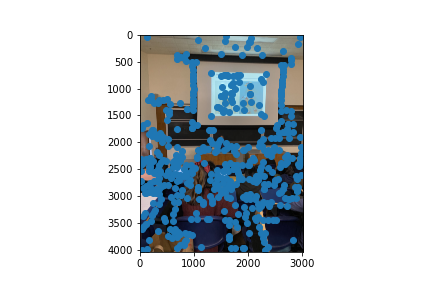

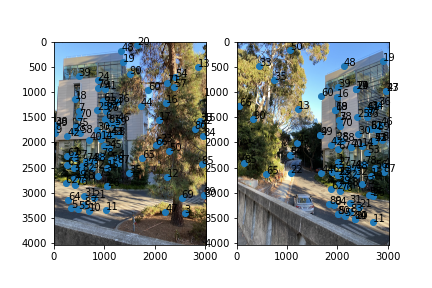

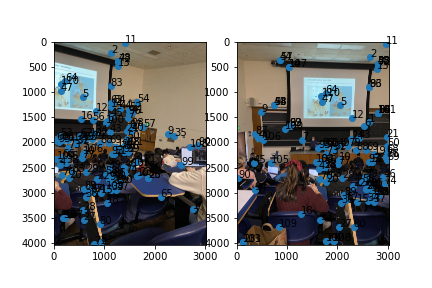

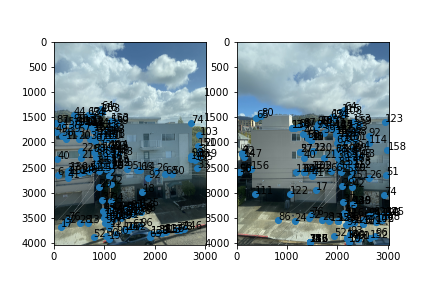

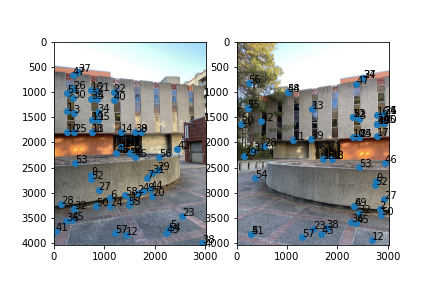

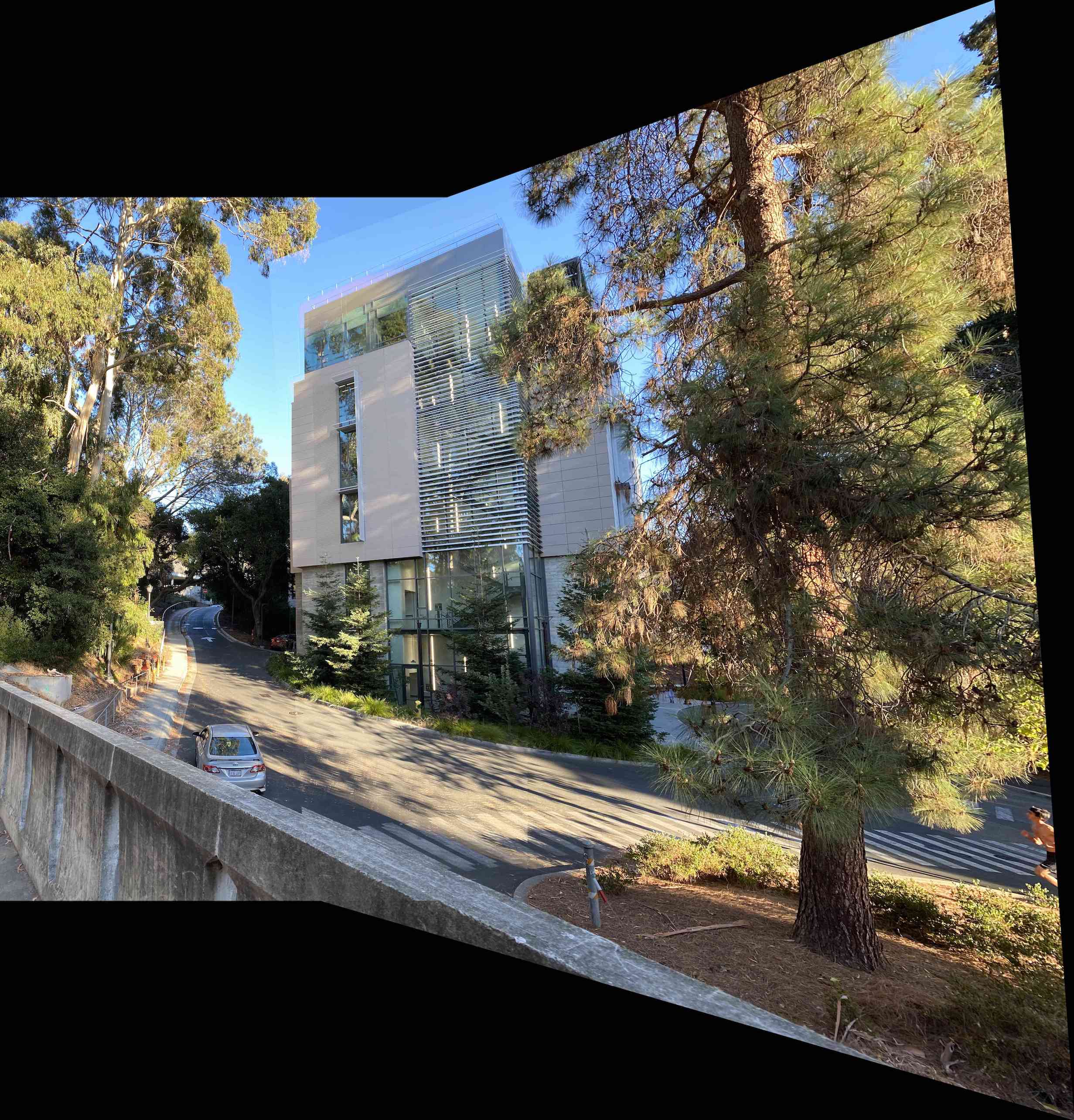

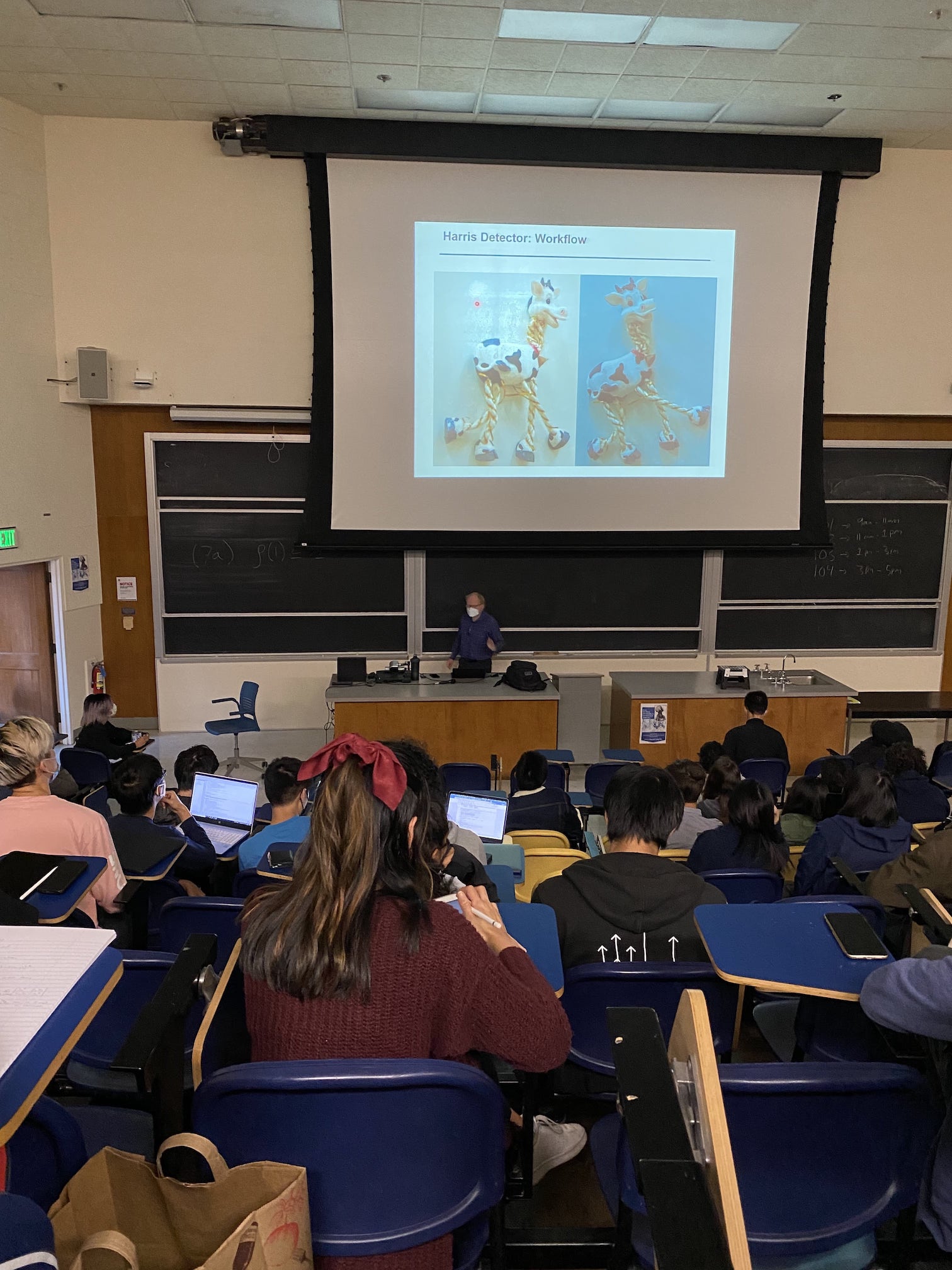

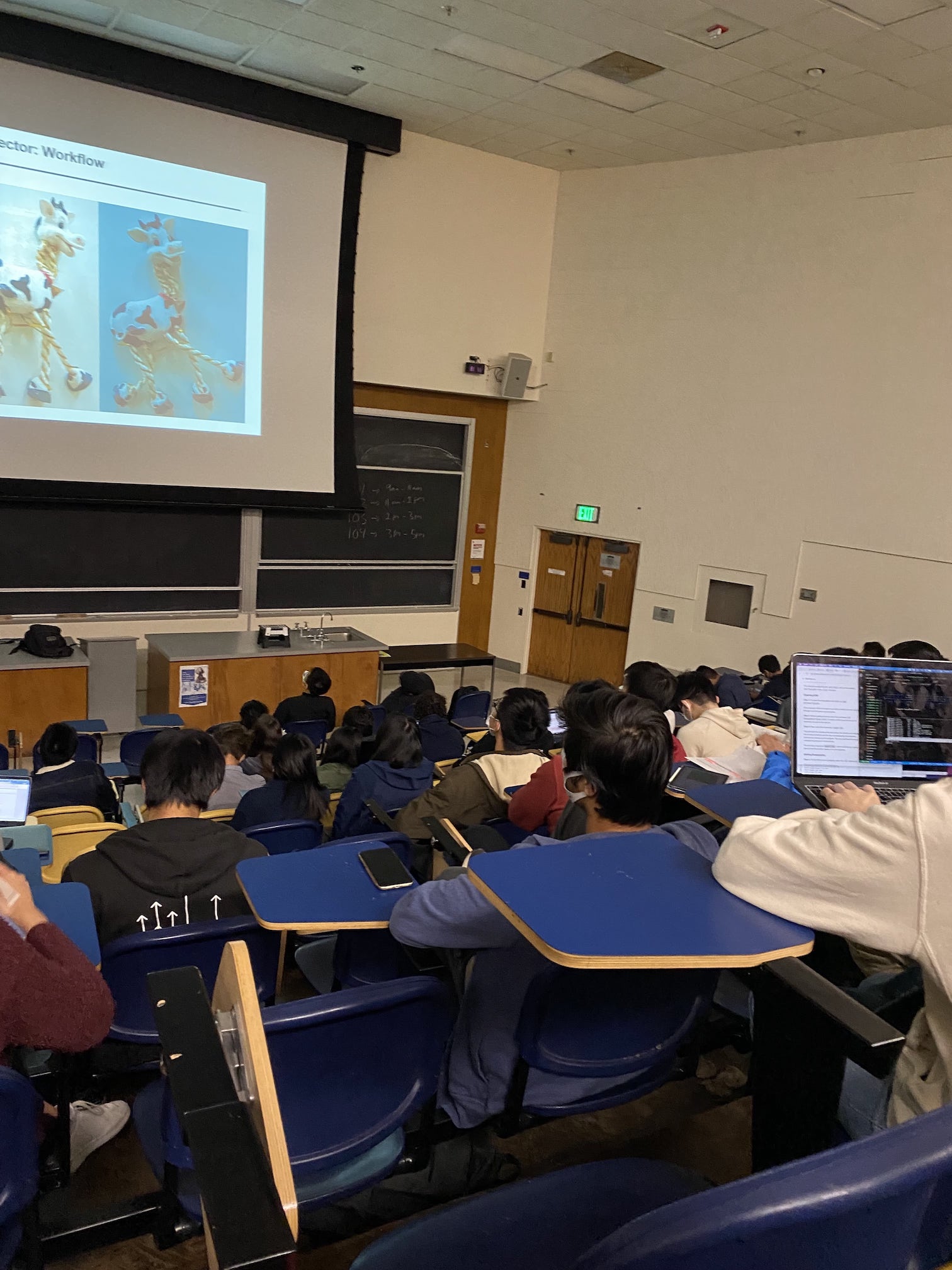

In this part of Project 4, I worked on image mosaicing with image warping: I took a few photographs and combined them into an image mosaic.

Shoot the Pictures

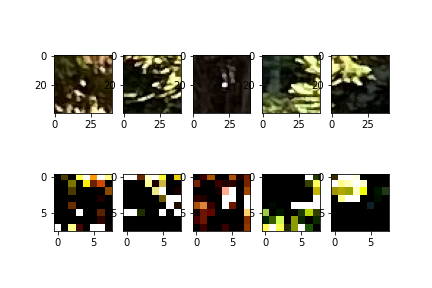

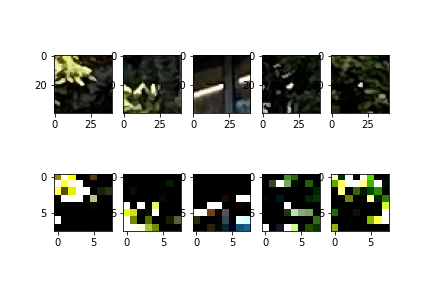

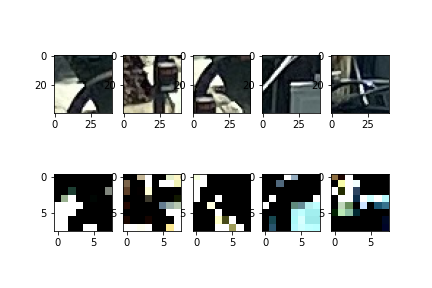

These are the photos I used for the mosaics. I shot them all from as close to the same point as possible, rotating only the camera between shots.

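Why shoot from one point and only rotate the camera? A minimal numerical sketch, assuming an ideal pinhole camera: when the camera only rotates about its center, pixels in the two views are related by a single 3x3 homography `H = K R K^-1`, whatever the depth of the scene. The intrinsics `K`, the 10-degree rotation, and the random 3D points below are made-up values for illustration. This is not the code used in the project.

```python
import numpy as np

def rotation_y(theta):
    """Rotation matrix for an angle theta (radians) about the camera's y axis."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, 0.0, s],
                     [0.0, 1.0, 0.0],
                     [-s, 0.0, c]])

def project(K, R, X):
    """Project 3D points X of shape (N, 3) with x ~ K R X; returns (N, 2) pixels."""
    x = (K @ R @ X.T).T
    return x[:, :2] / x[:, 2:3]

# Hypothetical intrinsics: focal length 800 px, principal point (320, 240).
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
R = rotation_y(np.deg2rad(10.0))  # assumed pan between the two shots

# Random scene points at very different depths (2 to 50 units from the camera).
rng = np.random.default_rng(0)
X = np.column_stack([rng.uniform(-1, 1, 20),
                     rng.uniform(-1, 1, 20),
                     rng.uniform(2, 50, 20)])

p1 = project(K, np.eye(3), X)  # pixels in the first view
p2 = project(K, R, X)          # pixels in the rotated view

# Homography induced by a pure rotation about the camera center.
H = K @ R @ np.linalg.inv(K)

# Map view-1 pixels through H and convert back from homogeneous coordinates.
p1_h = np.column_stack([p1, np.ones(len(p1))])
mapped = (H @ p1_h.T).T
mapped = mapped[:, :2] / mapped[:, 2:3]

# Largest pixel disagreement between the mapped points and the true view-2 points.
max_error = np.abs(mapped - p2).max()
print(f"max reprojection error: {max_error:.2e} px")
```

The error stays at floating-point noise even though the points sit at very different depths. If the camera also translated between shots, the mapping would depend on depth and no single homography would align the whole scene, which is why keeping the camera at one point matters.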