CS194-26 Project 4: (Auto) Stitching of Images

Overview:

Last time (in part 4A, even though that page reads "project 3"), we created a mosaic of connected images shot at different angles, using warping and blending to keep the original feel and angle of each image.

In that process, we had to manually pick points that we knew corresponded across all images. But what if we didn't need to find points manually, or couldn't?

This time, we will determine the points automatically, following the paper "Multi-Image Matching using Multi-Scale Oriented Patches."

We'll use the same images as before.

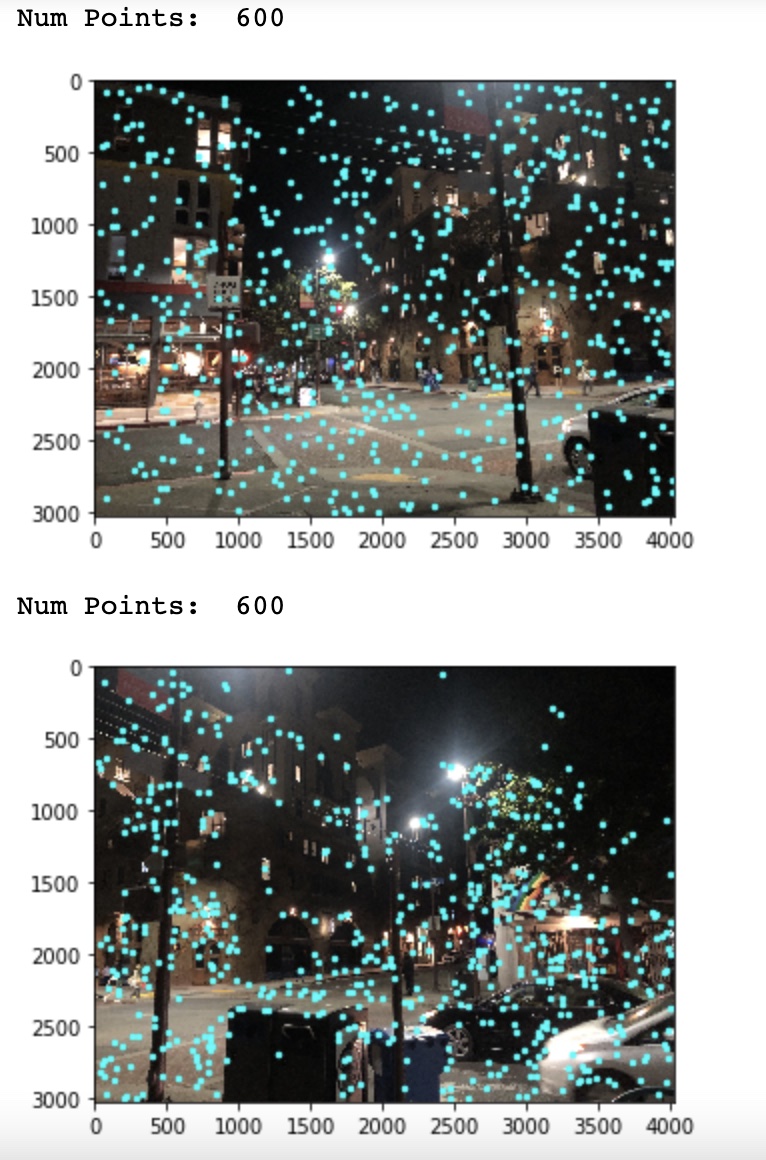

The first image set is a scene from Telegraph Avenue:

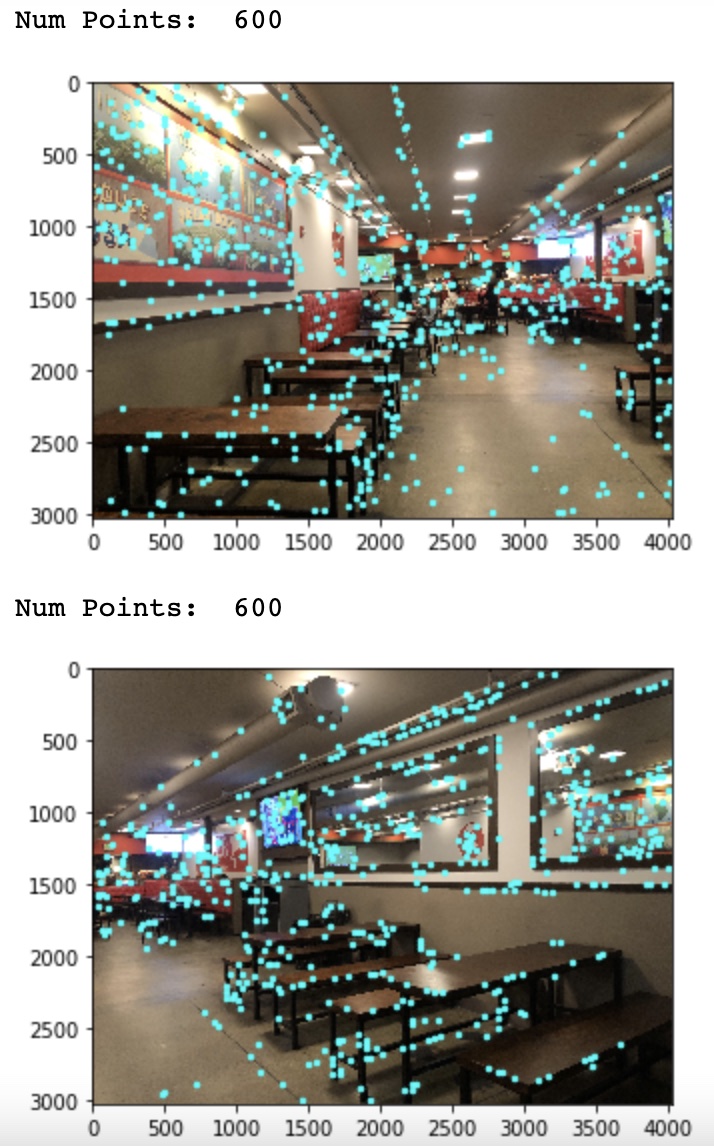

The next set is a scene from inside Slivers:

My third set is a different set of images from a slightly different vantage point on Telegraph (but the same corner):

This time, we'll use the Slivers set for the demonstration.

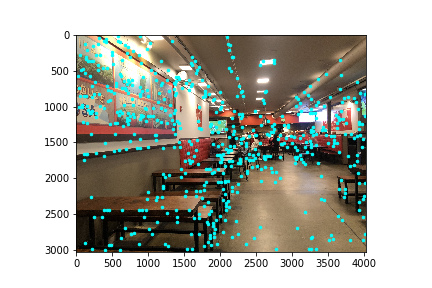

Step 1: Harris Corners

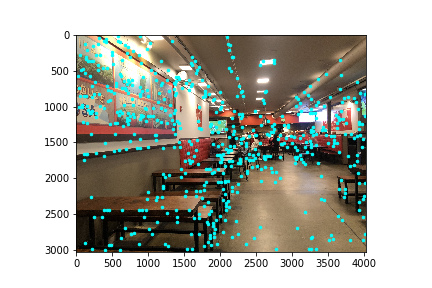

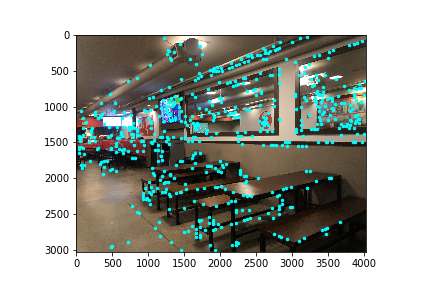

We use the Harris corner detector from the scikit-image library, as provided in the sample code.
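As a rough illustration of this step, here is a minimal sketch of Harris corner detection with scikit-image. It is not the course's sample code: the function name `detect_harris_corners` and the `sigma`, `min_distance`, and `threshold_rel` values are assumptions chosen for the example.

```python
import numpy as np
from skimage.feature import corner_harris, peak_local_max


def detect_harris_corners(gray, sigma=1.0, min_distance=1, threshold_rel=0.1):
    """Return (coords, strengths) for Harris corners in a grayscale image.

    coords is an (N, 2) array of (row, col) positions; strengths holds the
    Harris response at each position. Parameter values are illustrative.
    """
    # Per-pixel corner response: high at corners, negative along edges.
    response = corner_harris(gray, sigma=sigma)
    # Keep local maxima of the response that exceed a relative threshold.
    coords = peak_local_max(
        response, min_distance=min_distance, threshold_rel=threshold_rel
    )
    strengths = response[coords[:, 0], coords[:, 1]]
    return coords, strengths


# Synthetic example: a bright square on a dark background has four corners.
image = np.zeros((40, 40))
image[10:30, 10:30] = 1.0
coords, strengths = detect_harris_corners(image)
```

The strengths are returned alongside the coordinates because the suppression step that follows needs them to rank the corners.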

For the left and right Slivers images, the result looks like this:

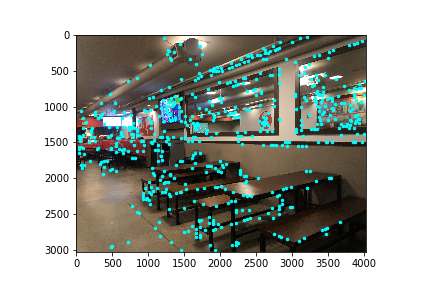

Step 2: Adaptive Non-Maximal Suppression

Here, we want to thin out the abundance of candidate Harris corners returned by the detector, using adaptive non-maximal suppression (ANMS).
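The idea behind ANMS can be sketched as follows: each corner gets a suppression radius, the distance to the nearest corner that is sufficiently stronger, and only the corners with the largest radii are kept, which spreads the points evenly over the image. This is a minimal sketch, not the code used for the results here; the function name `anms` and the defaults `num_keep=500` and `c_robust=0.9` are assumptions.

```python
import numpy as np


def anms(coords, strengths, num_keep=500, c_robust=0.9):
    """Return indices of the corners kept by adaptive non-maximal suppression.

    coords: (N, 2) corner positions; strengths: (N,) corner responses.
    num_keep and c_robust are illustrative defaults.
    """
    coords = np.asarray(coords, dtype=float)
    strengths = np.asarray(strengths, dtype=float)

    # Pairwise squared distances between all corners (O(N^2) memory).
    diff = coords[:, None, :] - coords[None, :, :]
    dist_sq = np.sum(diff ** 2, axis=-1)

    # Corner j suppresses corner i when f_i < c_robust * f_j.
    suppresses = strengths[:, None] < c_robust * strengths[None, :]

    # Suppression radius: distance to the nearest suppressing corner
    # (infinite for a corner that nothing suppresses).
    radii = np.sqrt(np.where(suppresses, dist_sq, np.inf).min(axis=1))

    # Keep the corners with the largest radii.
    order = np.argsort(-radii)
    return order[:num_keep]


# Three clustered strong corners plus two isolated weaker ones.
coords = np.array([[0, 0], [0, 1], [1, 0], [50, 50], [100, 0]])
strengths = np.array([10.0, 8.0, 7.0, 5.0, 1.0])
keep = anms(coords, strengths, num_keep=3)
```

In this toy example the strongest corner survives together with the two isolated corners, while the two slightly weaker corners right next to the strongest one are dropped.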

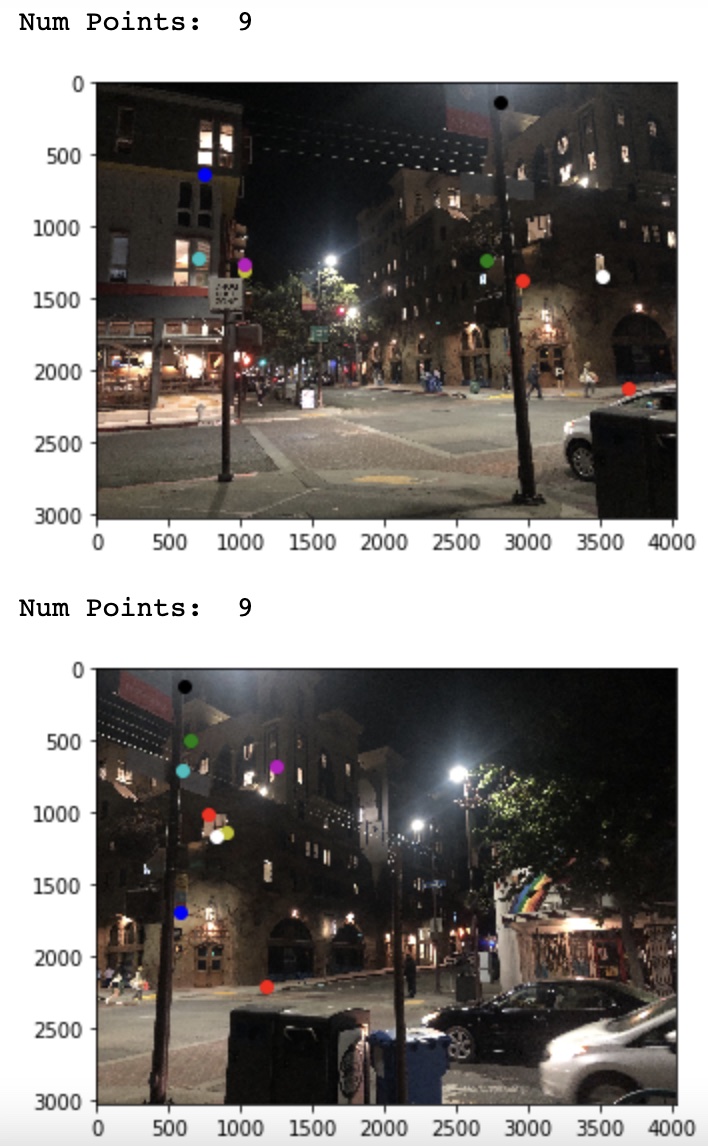

Here is the reduced set of points for the right Slivers image:

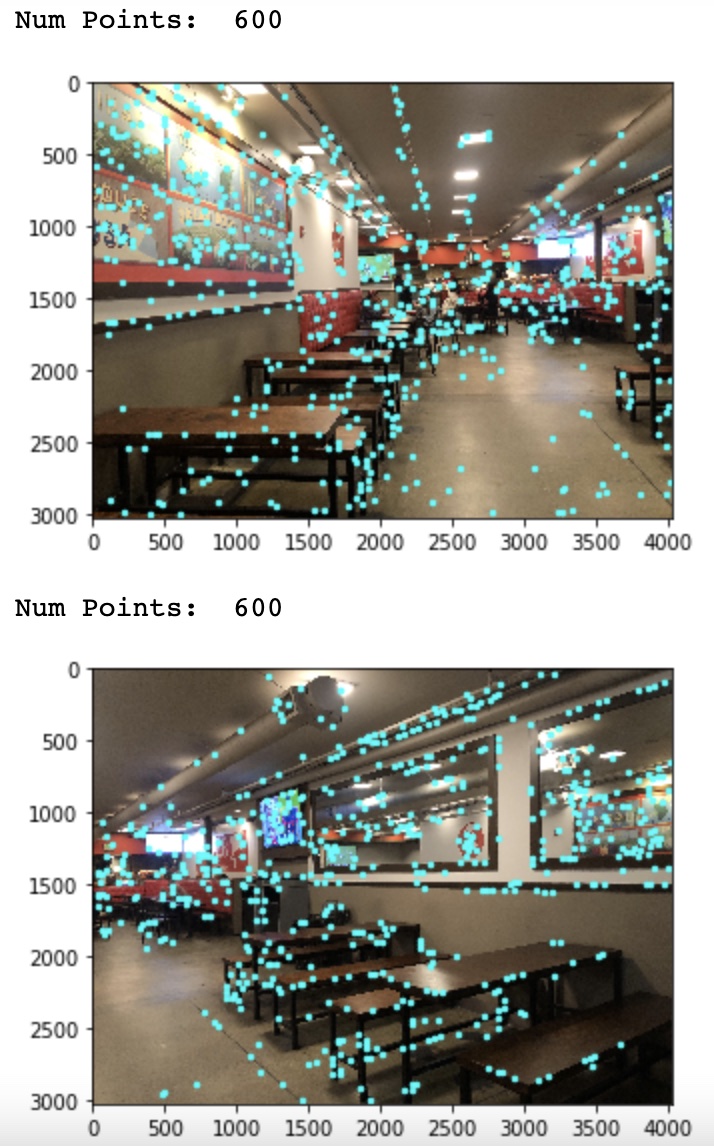

Step 3: Feature Descriptor and Matching

From the previous set of Harris Corners, we need to find the points that are most likely to be matching features in both images.

We extract a patch around each feature point, then match each patch to its nearest neighbor in the opposing image by distance, keeping a match only if it falls under a threshold.
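To make the descriptor part concrete, here is a minimal sketch of one way to turn each feature point into a vector. The 40x40 window, the 8x8 output size, the block averaging, and the name `extract_descriptors` are all assumptions for illustration; the write-up does not state the sizes actually used.

```python
import numpy as np


def extract_descriptors(gray, coords, window=40, size=8):
    """Build one normalized descriptor per (row, col) point.

    Returns (descriptors, kept), where kept holds the indices of the points
    whose window fits entirely inside the image. Sizes are illustrative.
    """
    half = window // 2
    step = window // size
    descriptors, kept = [], []
    for idx, (r, c) in enumerate(coords):
        r, c = int(r), int(c)
        # Skip points whose window would fall outside the image.
        if (r - half < 0 or c - half < 0
                or r + half > gray.shape[0] or c + half > gray.shape[1]):
            continue
        patch = gray[r - half:r + half, c - half:c + half]
        # Average each step x step block: a crude blur plus subsample.
        small = patch.reshape(size, step, size, step).mean(axis=(1, 3))
        # Bias/gain normalization: zero mean, unit standard deviation.
        small = (small - small.mean()) / (small.std() + 1e-8)
        descriptors.append(small.ravel())
        kept.append(idx)
    descriptors = np.array(descriptors).reshape(-1, size * size)
    return descriptors, np.array(kept, dtype=int)


rng = np.random.default_rng(0)
gray = rng.random((100, 100))
points = np.array([[50, 50], [5, 5], [60, 40]])
descs, kept = extract_descriptors(gray, points)
```

Normalizing each patch makes the comparison less sensitive to brightness and contrast differences between the two photos.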

Thresholds: Sliver = 0.3, Telegraph 1 = 0.7, Telegraph 2 = 0.7
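The matching step could look roughly like the sketch below. One assumption to flag loudly: the threshold is interpreted here as a ratio test (best squared distance divided by second-best squared distance), which is one common reading of values such as 0.3 and 0.7, but the write-up only says that matches must be under a threshold. The name `match_features` is also hypothetical.

```python
import numpy as np


def match_features(desc_a, desc_b, ratio_threshold=0.7):
    """Match descriptors in desc_a to their nearest neighbors in desc_b.

    Returns an (M, 2) integer array of (index_in_a, index_in_b) pairs.
    Assumes desc_b has at least two rows. The ratio test is an assumed
    interpretation of the threshold, not confirmed by the write-up.
    """
    # Pairwise squared Euclidean distances, shape (len(a), len(b)).
    diff = desc_a[:, None, :] - desc_b[None, :, :]
    dist_sq = np.sum(diff ** 2, axis=-1)

    # Indices of the nearest and second-nearest neighbor for each row of a.
    order = np.argsort(dist_sq, axis=1)
    rows = np.arange(len(desc_a))
    best, second = order[:, 0], order[:, 1]

    # Accept a match only when the best is clearly better than the runner-up.
    ratio = dist_sq[rows, best] / (dist_sq[rows, second] + 1e-12)
    keep = ratio < ratio_threshold
    return np.column_stack([rows[keep], best[keep]])


desc_a = np.array([[0.0, 0.0], [10.0, 10.0], [5.0, 5.0]])
desc_b = np.array([[0.0, 0.1], [10.0, 10.1], [5.0, 0.0], [0.0, 5.0]])
matches = match_features(desc_a, desc_b)
```

In the toy data the third descriptor in `desc_a` is equally far from two candidates, so its ratio is close to 1 and it is rejected as ambiguous.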

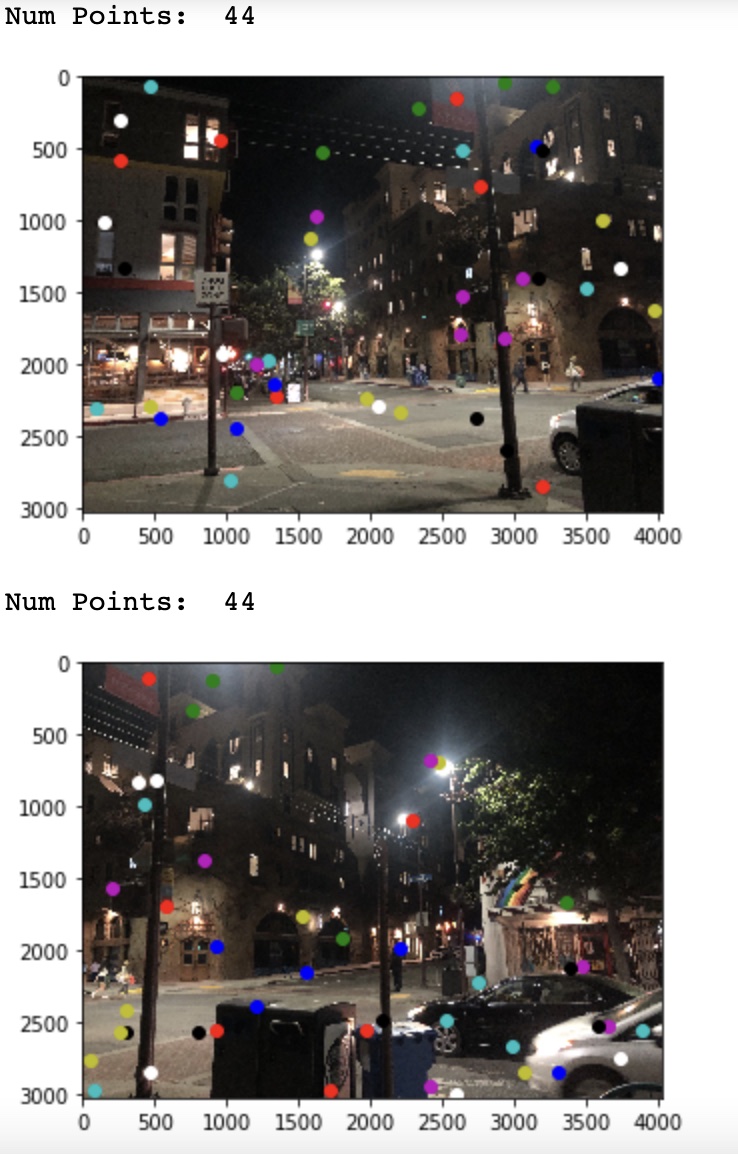

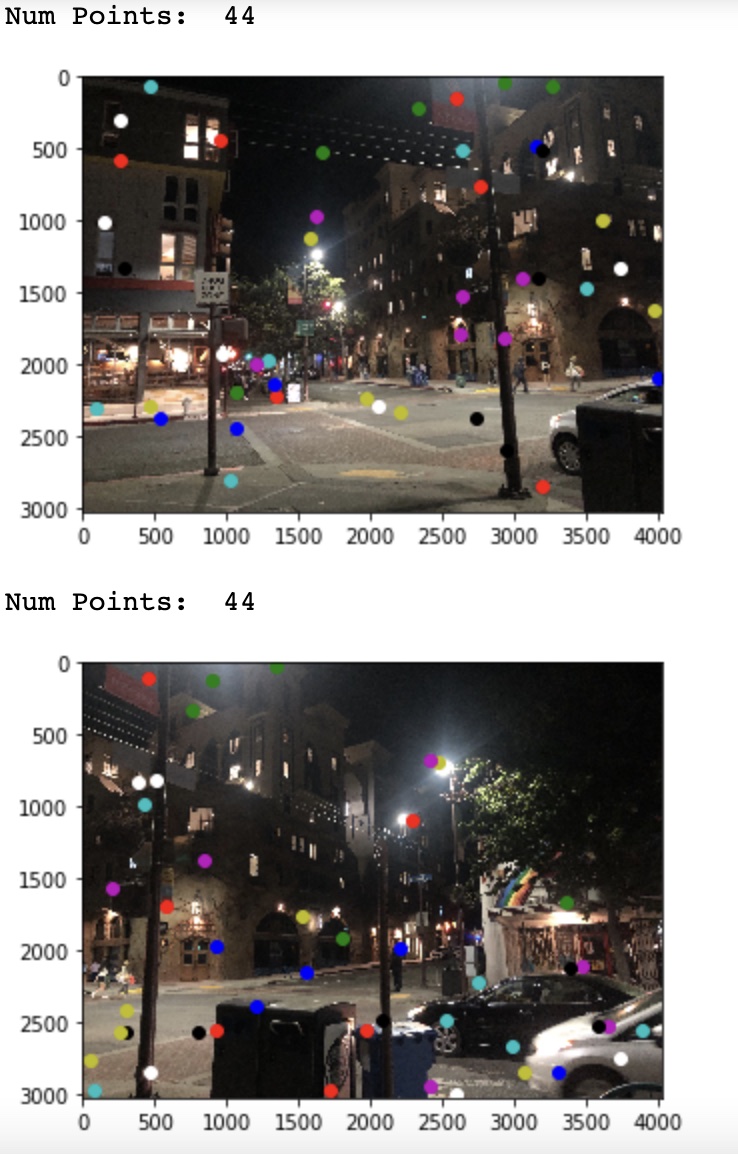

Here are the results for the Telegraph and Slivers images:

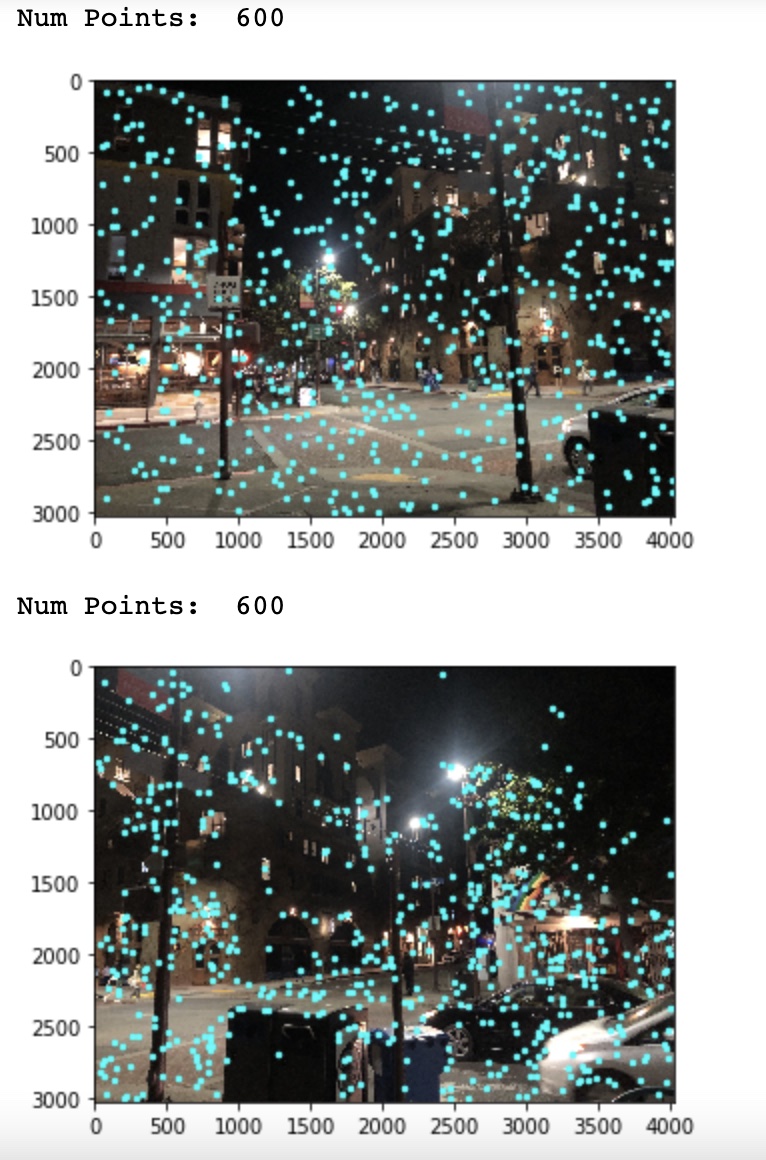

Step 4: RANSAC

Thresholds: Sliver = 4.2e7, Telegraph 1 = 1.23e6, Telegraph 2 = 1.4e6

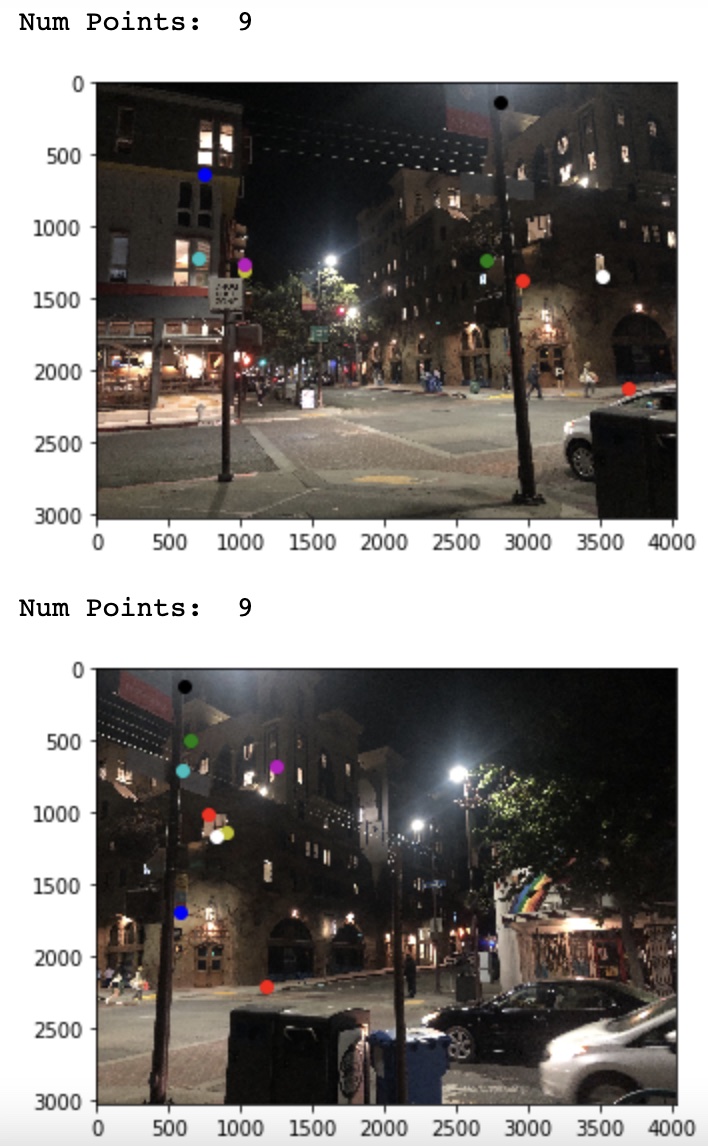

Then, we choose the most robust points using the RANSAC method.
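For reference, a generic 4-point RANSAC loop for a homography can be sketched as below. This is a sketch under stated assumptions rather than the code behind the results: the helper names, the iteration count, and the inlier tolerance (a reprojection distance in pixels) are hypothetical, and the tolerance is not on the same scale as the thresholds listed in this section.

```python
import numpy as np


def fit_homography(src, dst):
    """Least-squares homography (unnormalized) mapping src to dst via SVD."""
    rows = []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([-x, -y, -1, 0, 0, 0, u * x, u * y, u])
        rows.append([0, 0, 0, -x, -y, -1, v * x, v * y, v])
    _, _, vt = np.linalg.svd(np.asarray(rows, dtype=float))
    return vt[-1].reshape(3, 3)


def apply_homography(H, pts):
    """Map (N, 2) points through H using homogeneous coordinates."""
    mapped = np.column_stack([pts, np.ones(len(pts))]) @ H.T
    return mapped[:, :2] / mapped[:, 2:3]


def ransac_homography(src, dst, n_iters=1000, inlier_tol=2.0, seed=0):
    """Return (H, inlier_mask) for matched points src -> dst."""
    rng = np.random.default_rng(seed)
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    best = np.zeros(len(src), dtype=bool)
    for _ in range(n_iters):
        # Fit an exact homography to four random correspondences.
        sample = rng.choice(len(src), size=4, replace=False)
        H = fit_homography(src[sample], dst[sample])
        # Degenerate samples may divide by zero; treat those as non-inliers.
        with np.errstate(divide="ignore", invalid="ignore"):
            err = np.linalg.norm(apply_homography(H, src) - dst, axis=1)
            inliers = err < inlier_tol
        if inliers.sum() > best.sum():
            best = inliers
    if best.sum() < 4:
        raise ValueError("RANSAC found fewer than 4 inliers")
    # Refit on all inliers of the best model, scaled so H[2, 2] == 1.
    H = fit_homography(src[best], dst[best])
    return H / H[2, 2], best
```

Refitting on every inlier at the end uses all of the consistent matches instead of only the four sampled ones, which usually gives a steadier homography.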

Here are the results for the Telegraph and Slivers images:

Step 5: Putting it all together

Finally, we build the warped mosaic by adding the two images together at the appropriate rectified location and blending, similar to before.
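As a simple illustration of the "add and blend" step, the sketch below averages two images that have already been warped onto a shared canvas. Plain weighted averaging in the overlap is an assumption made for the example; the blending actually used in part A may differ. The name `blend_on_canvas` and the mask arguments are hypothetical.

```python
import numpy as np


def blend_on_canvas(img_a, mask_a, img_b, mask_b):
    """Blend two canvas-sized images using their coverage masks.

    Where only one image covers a pixel, that image is used; where both do,
    the result is their average. Works for grayscale or color arrays.
    """
    extra_dims = (1,) * (img_a.ndim - 2)
    w_a = mask_a.astype(float).reshape(mask_a.shape + extra_dims)
    w_b = mask_b.astype(float).reshape(mask_b.shape + extra_dims)
    total = w_a + w_b
    # Avoid dividing by zero where neither image covers the canvas.
    total = np.where(total > 0, total, 1.0)
    return (img_a * w_a + img_b * w_b) / total


# Two overlapping grayscale strips on a 4 x 6 canvas.
img_a = np.full((4, 6), 2.0)
img_b = np.full((4, 6), 4.0)
mask_a = np.zeros((4, 6), dtype=bool)
mask_b = np.zeros((4, 6), dtype=bool)
mask_a[:, :4] = True
mask_b[:, 2:] = True
mosaic = blend_on_canvas(img_a, mask_a, img_b, mask_b)
```

Replacing the binary masks with weights that fall off toward each image's border would soften the visible seam in the overlap.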

The part A and part B mosaics are shown on the left and right, respectively.

For the first Telegraph set:

For the Sliver set:

For set 3:

What did we learn?

Part A of the project gave a nice perspective on image warping and blending. It took more work than expected, but was still an understandable task.

The understandable part (at least from a newbie's perspective) was using known points to align the images (or at least, to create the homography matrix).

In part B, we followed a published paper that takes the human out of the loop by finding features automatically.

This was a much more interesting prospect than part A, as it is a novel idea that combines Harris corners with feature matching, providing a form of intelligence for image recognition.

This intelligence is the cool part of the project in my opinion.