Project1: LightField Camera

Part I: Depth Refocusing

In this part, I chose the center image to be 08_08, and shift all other images uv toward the center image. More specifically, I shift the images by alpha*(u-u_middle), alpha*(v-v_middle). After the shifting, I add the images together and take the average.

I tested a few values for the alpha, and it turns out that when alpha equals 0.2/0.3 the result is the best, as alpha value goes up, the image gets more blurry.

|

|

|

|

|

Part II: Aperture Adjustment

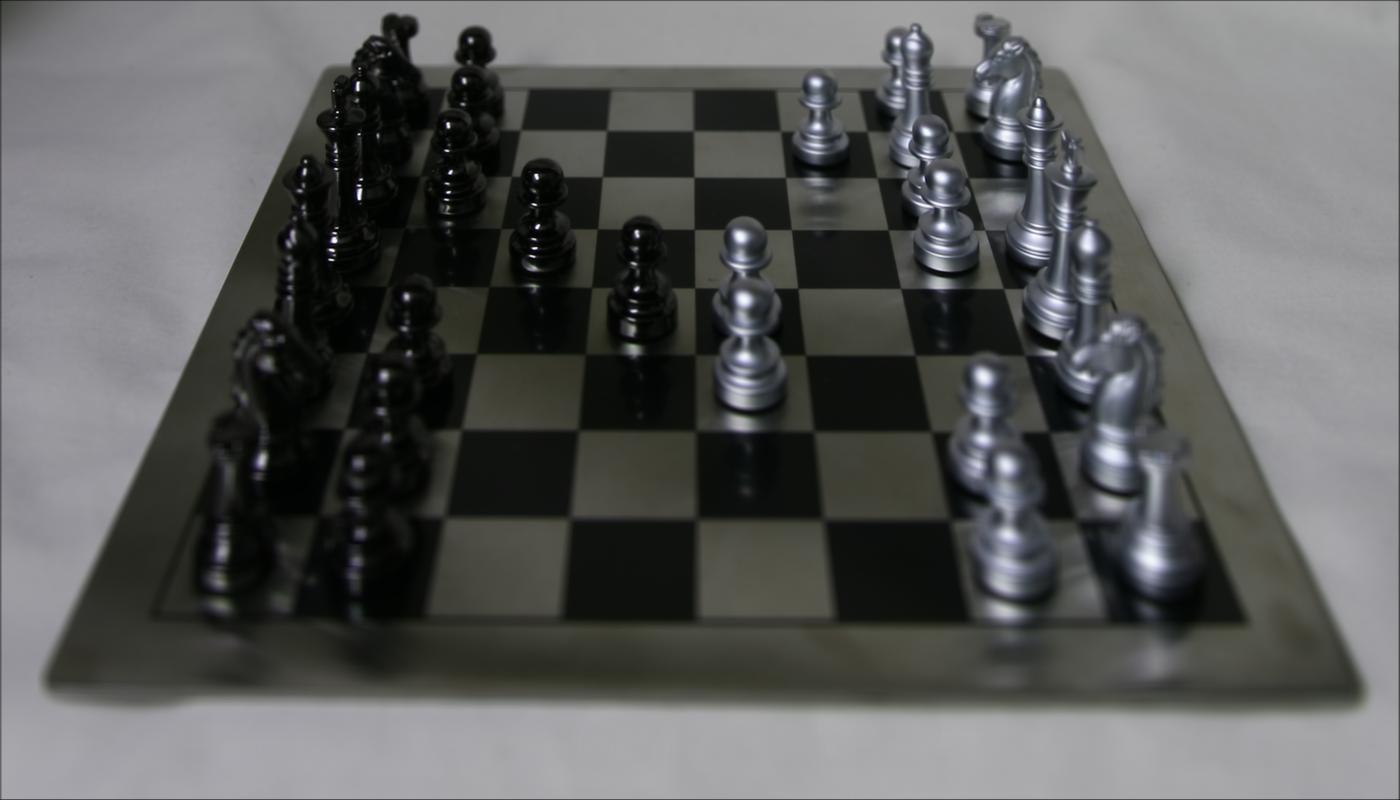

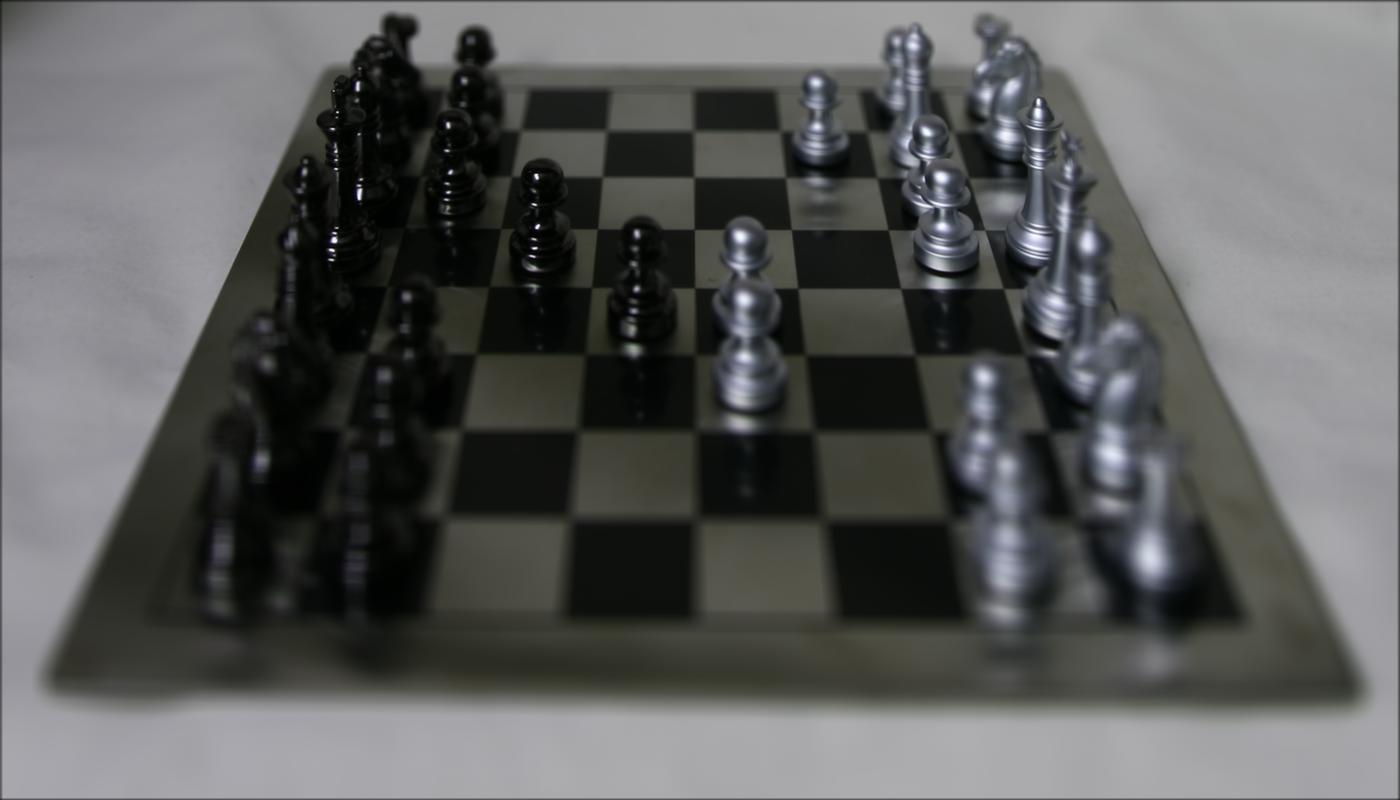

In this part, instead of using all the images to compute result, I used only the images around the center images based on input radius. For example, if the radius is 2, I would select images from 07_07 to 09_09 to compute the result.

As I increase the radius, the effect of depth-of-field increases.

|

|

|

|