Due Date: 11:59pm on Monday, Mar 30, 2020 [START EARLY]

Classification and Segmentation

In this problem, we will classify images in the Fashion-MNIST dataset and perform semantic segmentation of images in the Mini Facade dataset using deep nets! For this question, you can use PyTorch, TensorFlow, or any other deep learning framework you like. We will primarily focus on PyTorch for the implementation details, but you can look for equivalent functions in TensorFlow.

You will run your code on Google Colab, or on your own GPU if you prefer. If you choose to use Colab, once you create a new notebook, go to Runtime --> Change runtime type and set the hardware accelerator to GPU. Note that a Google Colab session has an idle timeout of 90 minutes and an absolute timeout of 12 hours, so please download your results and your trained model frequently.

Make sure to START EARLY if you are not familiar with Colab; we will not extend the due date for problems caused by that.

Once you are done, export your code from Colab as .py and submit it to bCourses.

Part 1: Image Classification

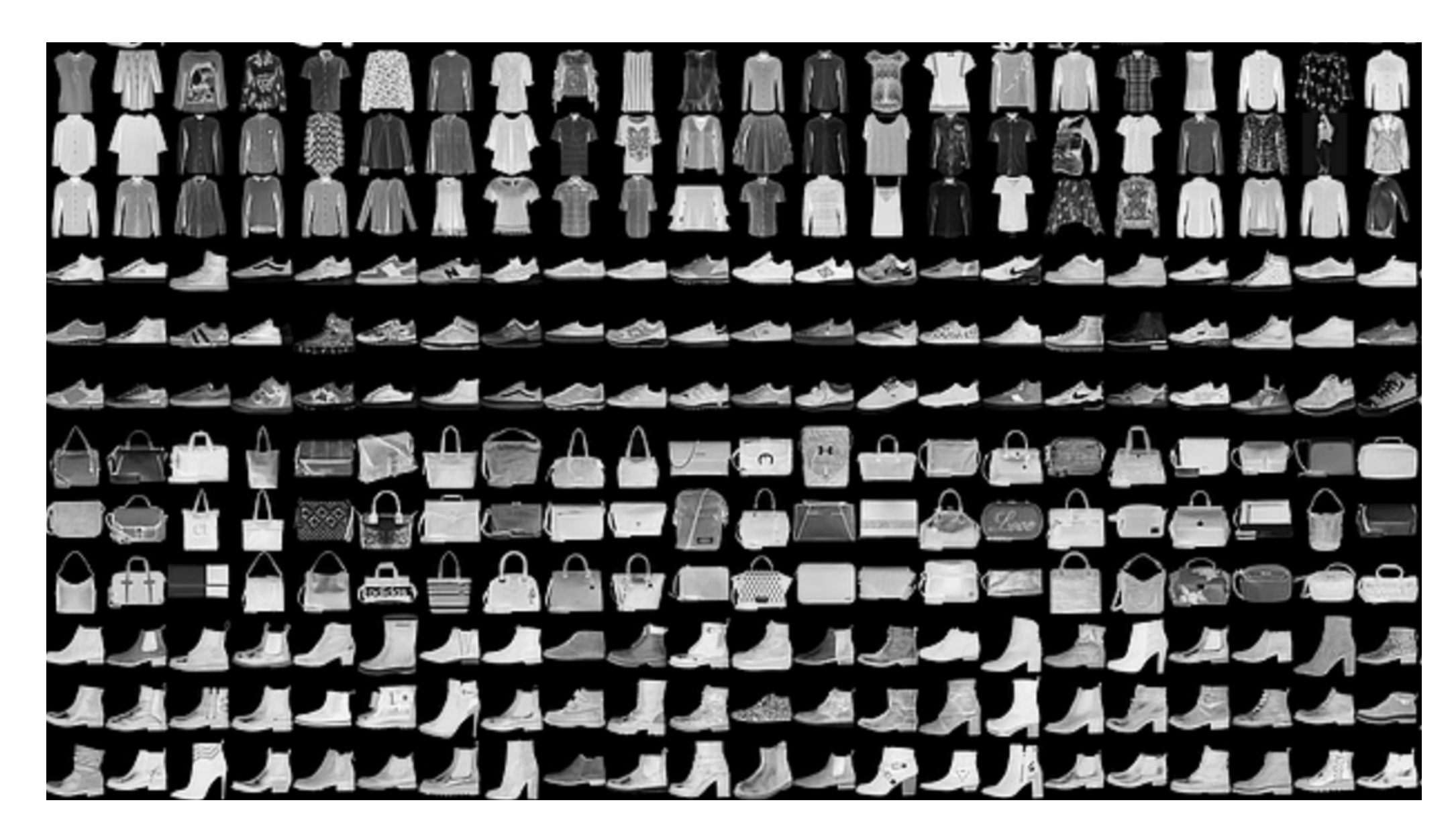

We will use the Fashion-MNIST dataset, available as torchvision.datasets.FashionMNIST, for training our model. Fashion-MNIST has 10 classes, 60000 train + validation images, and 10000 test images.
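As a minimal sketch of how the 60000 train + validation images might be divided and batched, the example below splits a dataset with torch.utils.data.random_split and wraps each part in a DataLoader. The 5:1 split ratio, the batch size of 64, and the fixed seed are assumptions, not requirements of the assignment. Random tensors stand in for the real images so the sketch runs without a download; the comment shows where torchvision.datasets.FashionMNIST would go.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset, random_split

# Stand-in for the real dataset, which would be created with something like
#   torchvision.datasets.FashionMNIST(root="data", train=True, download=True,
#                                     transform=torchvision.transforms.ToTensor())
# Random tensors keep this sketch offline and fast.
num_images = 600                                   # the real train split has 60000 images
images = torch.rand(num_images, 1, 28, 28)         # floats in [0, 1), like ToTensor output
labels = torch.randint(0, 10, (num_images,))       # class indices 0..9
full_train = TensorDataset(images, labels)

# Hold out 1/6 of the data for validation (assumed ratio: 50000/10000 at full size).
num_val = num_images // 6
generator = torch.Generator().manual_seed(0)       # fixed seed so the split is reproducible
train_set, val_set = random_split(
    full_train, [num_images - num_val, num_val], generator=generator
)

# Shuffle only the training data; validation order does not matter.
train_loader = DataLoader(train_set, batch_size=64, shuffle=True)
val_loader = DataLoader(val_set, batch_size=64, shuffle=False)

batch_images, batch_labels = next(iter(train_loader))
print(batch_images.shape, batch_labels.shape)
```

Keeping the validation set separate from the start makes it harder to tune hyperparameters on the 10000 test images by accident.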

- Dataloader: Use the dataloader from torch.utils.data.DataLoader. This tutorial gives an example of how to use a torchvision dataset with the dataloader class. We will cast the problem as a 10-way classification problem, so the labels will be from 0 to 9. To prepare the data for the task, use torchvision.transforms.ToTensor to convert uint8 values from 0 to 255 to normalized float values in the range 0 to 1. Once you have the dataloader, sample a few images and display them along with their class.

- CNN: Once you have the dataloader, write a convolutional neural network using torch.nn.Module. This tutorial gives an example of how to write a neural network in PyTorch. Our CNNs will use a convolutional layer (torch.nn.Conv2d), a max pooling layer (torch.nn.MaxPool2d), and the Rectified Linear Unit as the non-linearity (torch.nn.ReLU). The architecture of your neural network should be 2 conv layers with 32 channels each, where each conv layer is followed by a ReLU and then a maxpool. This should be followed by 2 fully connected layers. Apply ReLU after the first fc layer (but not after the last fc layer). You should play around with different kinds of non-linearities and different numbers of channels to improve your result.

- Loss Function and Optimizer: Now that you have the predictor (CNN) and the dataloader, you need to define the loss function and the optimizer to start training your CNN. You will use cross entropy loss (torch.nn.CrossEntropyLoss) as the prediction loss. Train your neural network with Adam (torch.optim.Adam) using a learning rate of 0.01. Run the training loop until convergence. Try different optimizers, different learning rates, and different values of weight decay (which is the same as L2 regularization).

- Results: Once you have trained your model, show the following results for the network:

- Plot the train and validation accuracy during the training process.

- Compute the per-class accuracy of your classifier on the validation and test datasets. Which classes are the hardest to get? Show 2 images from each class which the network classifies correctly, and 2 more images which it classifies incorrectly.

- Visualize the learned filters.
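The Part 1 steps above (architecture, loss, optimizer, and per-class accuracy) can be sketched roughly as follows. This is one possible reading of the specification, not a reference solution: the 3x3 kernels with padding 1, the hidden size of 128, and the names FashionCNN and per_class_accuracy are all assumptions, and a random batch stands in for real Fashion-MNIST data.

```python
import torch
import torch.nn as nn


class FashionCNN(nn.Module):
    """2 x (conv -> ReLU -> maxpool) followed by 2 fully connected layers."""

    def __init__(self, channels=32, hidden=128, num_classes=10):
        super().__init__()
        self.features = nn.Sequential(
            nn.Conv2d(1, channels, kernel_size=3, padding=1),  # assumed 3x3 kernel
            nn.ReLU(),
            nn.MaxPool2d(2),                                   # 28x28 -> 14x14
            nn.Conv2d(channels, channels, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2),                                   # 14x14 -> 7x7
        )
        self.classifier = nn.Sequential(
            nn.Flatten(),
            nn.Linear(channels * 7 * 7, hidden),
            nn.ReLU(),                       # ReLU after the first fc layer only
            nn.Linear(hidden, num_classes),  # raw logits; CrossEntropyLoss applies softmax
        )

    def forward(self, x):
        return self.classifier(self.features(x))


def per_class_accuracy(logits, labels, num_classes=10):
    """Fraction of correctly predicted samples per class (NaN if a class is absent)."""
    preds = logits.argmax(dim=1)
    acc = torch.full((num_classes,), float("nan"))
    for c in range(num_classes):
        mask = labels == c
        if mask.any():
            acc[c] = (preds[mask] == c).float().mean()
    return acc


torch.manual_seed(0)
model = FashionCNN()
criterion = nn.CrossEntropyLoss()
optimizer = torch.optim.Adam(model.parameters(), lr=0.01, weight_decay=0.0)

# Random batch standing in for one batch from the training dataloader.
images = torch.rand(16, 1, 28, 28)
labels = torch.randint(0, 10, (16,))

# One training step; a real loop repeats this over all batches and epochs.
model.train()
optimizer.zero_grad()
logits = model(images)
loss = criterion(logits, labels)
loss.backward()
optimizer.step()

# Evaluation without gradient tracking.
model.eval()
with torch.no_grad():
    acc = per_class_accuracy(model(images), labels)
print(loss.item(), acc)
```

In this sketch the first-layer filters live in model.features[0].weight, a tensor of shape (32, 1, 3, 3), which is one place to start when visualizing the learned filters.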

Part 2: Semantic Segmentation

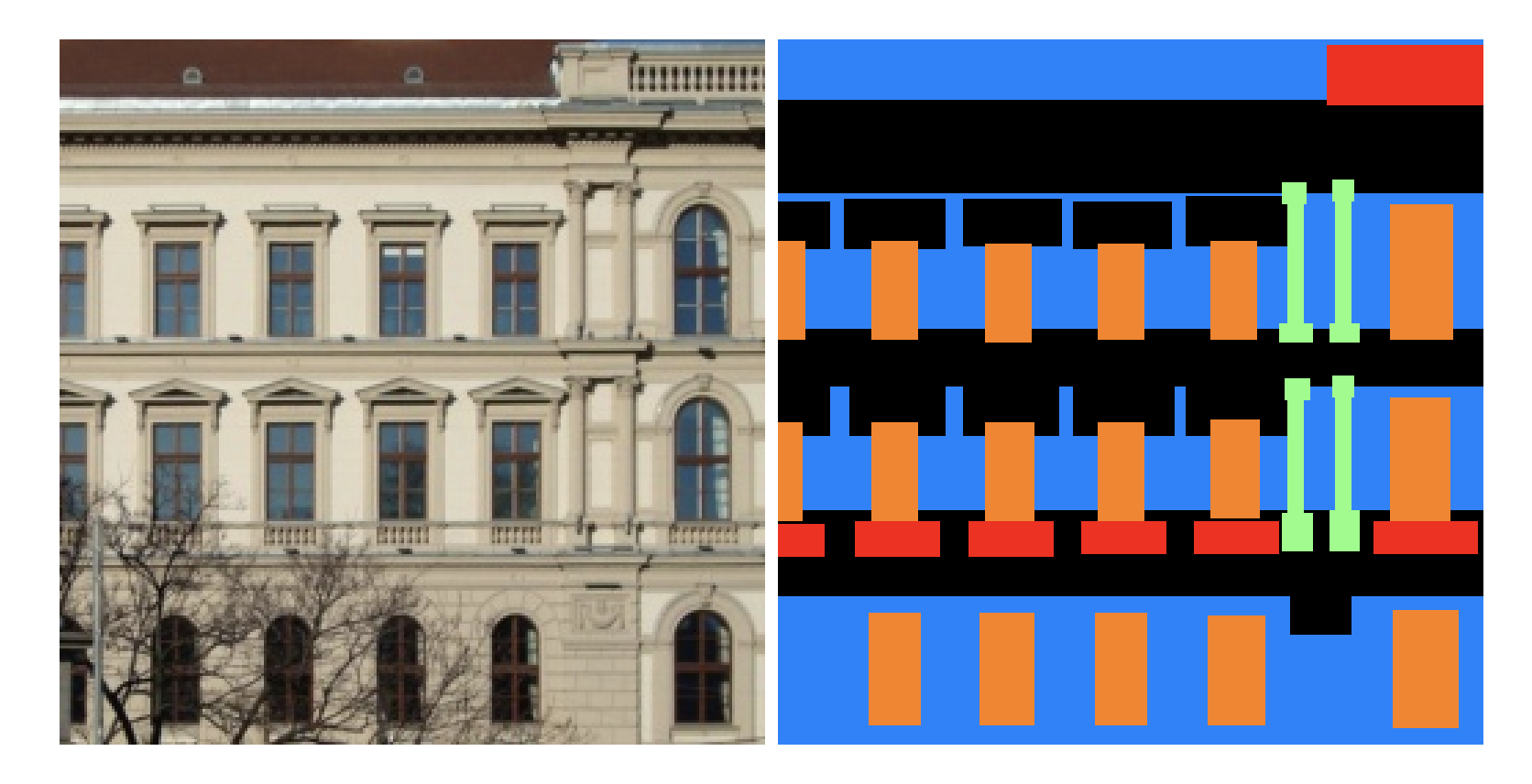

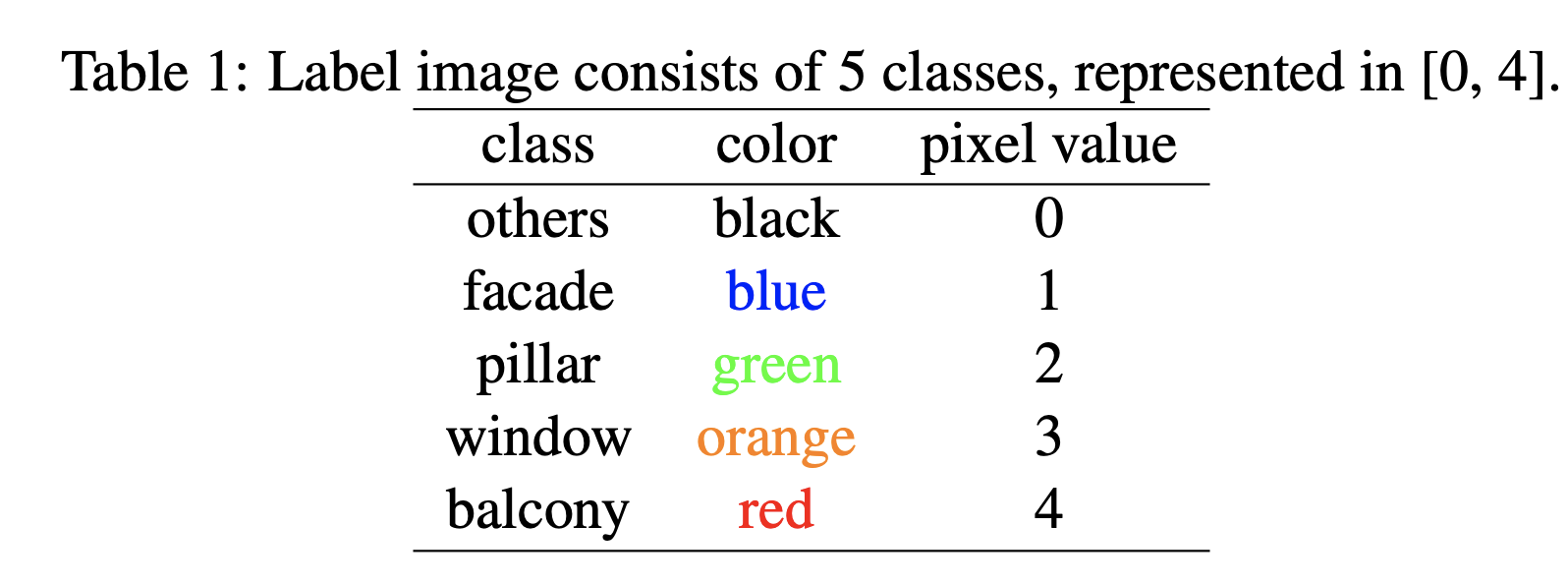

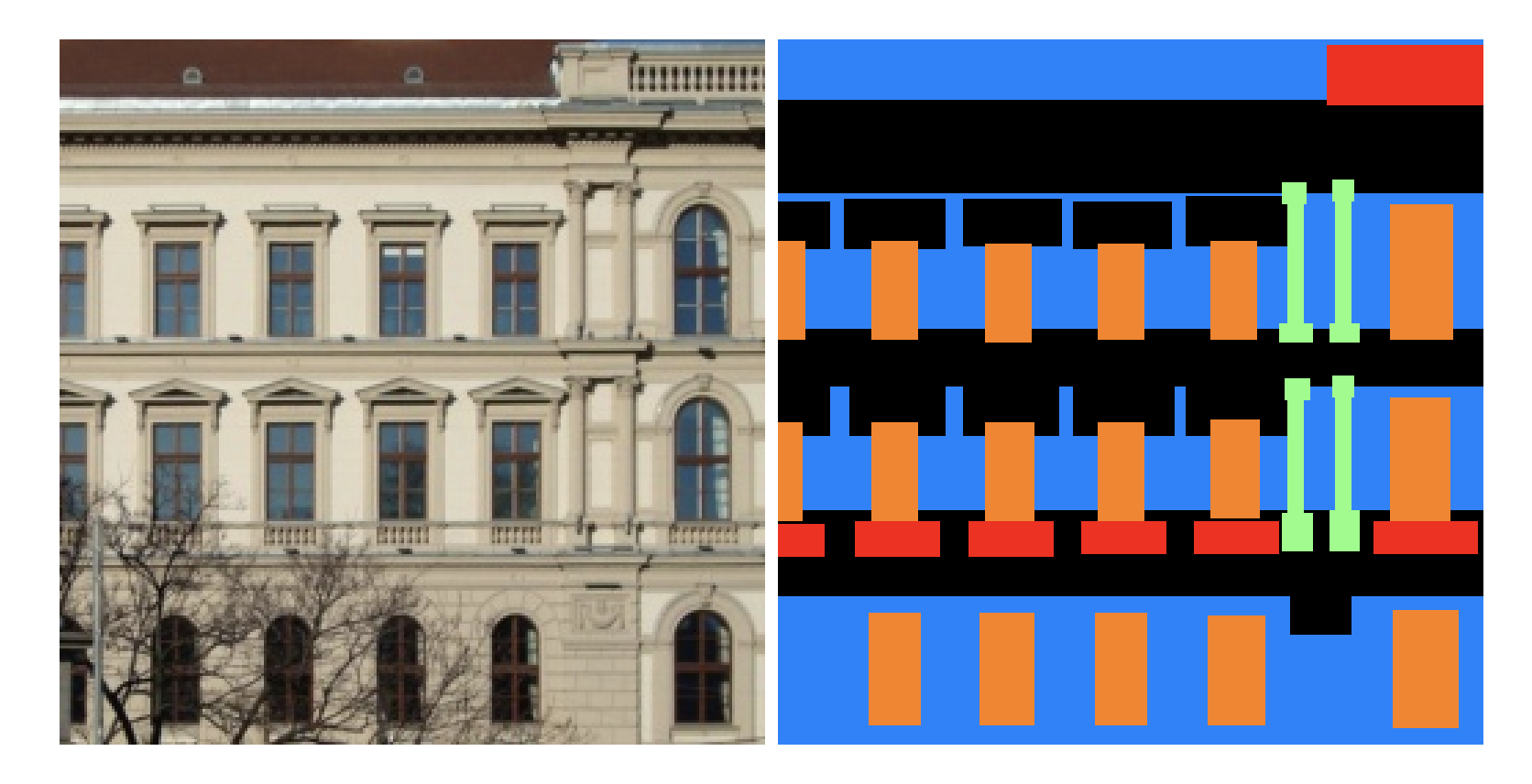

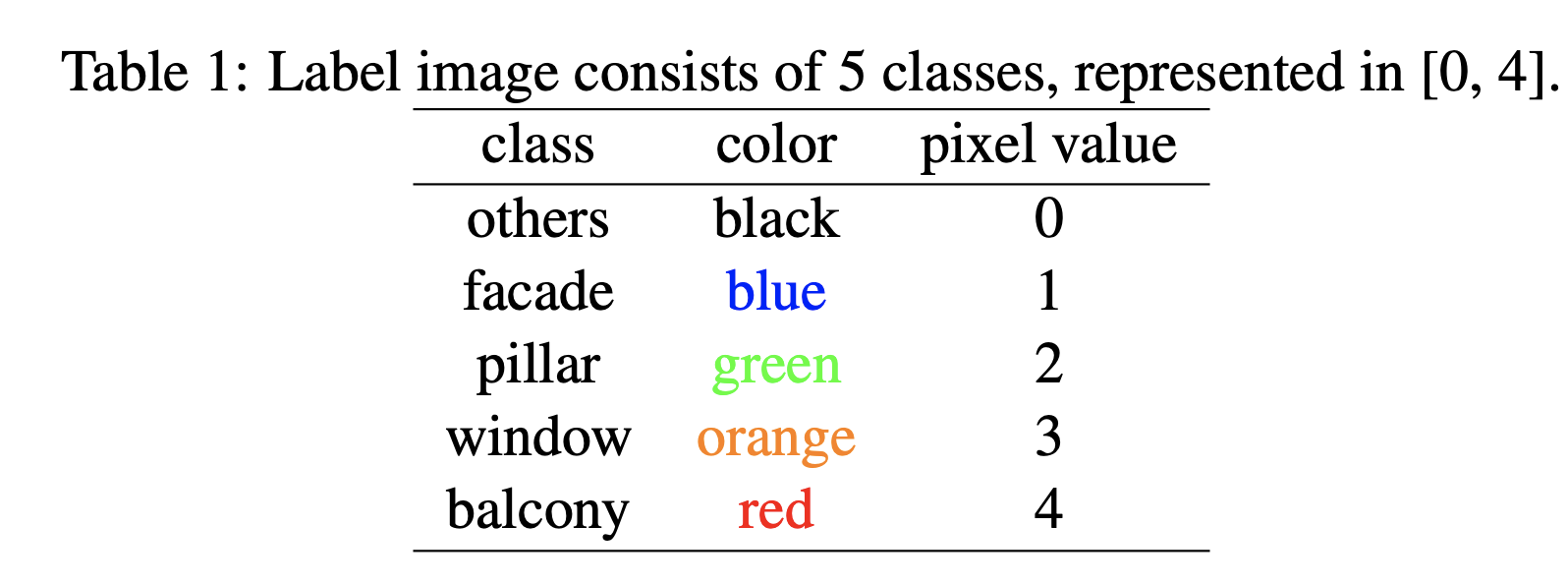

Semantic segmentation refers to labeling each pixel in the image with its correct object class. We will use the Mini Facade dataset. The starter code and data for this part are available here. The Mini Facade dataset consists of images from different cities around the world with diverse architectural styles (in .jpg format), shown as the image on the left. It also contains semantic segmentation labels (in .png format) in 5 different classes: balcony, window, pillar, facade and others. Your task is to train a network to convert the image on the left to the labels on the right. The color to label map is shown in the table below.

- Dataloader: The starter code has the dataloader written for the Mini Facade dataset. Split the data into training and validation sets, and evaluate on the test set only once, after you have tuned all your hyperparameters on the validation set.

- CNN: The starter code in part3/train.py provides a dummy network. The output of the network should be 5 numbers for each pixel in the input image (HxWx5 sized output). Write a CNN with 5-6 convolution layers for this task. Each convolution should be followed by a ReLU. If you choose to use maxpool after a conv layer, you might consider using torch.nn.Upsample or torch.nn.ConvTranspose2d to finally match the resolution of the input image.

- Loss Function and Optimizer: You will use cross entropy loss (torch.nn.CrossEntropyLoss) as the prediction loss. The optimizer in the starter code is Adam, with a learning rate of 1e-3 and weight decay of 1e-5. Run the training loop until convergence. You should try different optimizers, different learning rates, and different values of weight decay (which is the same as L2 regularization) to improve your results. Note that we will use Average Precision (AP) on the test set to evaluate the learned model. The starter code contains the code to evaluate AP. Your submission should achieve an AP of at least 0.45 on the test set.

- Results: Once you have trained your model, show the following results for the network:

- Report the detailed architecture of your model. Include information on hyperparameters chosen for training and a plot showing both training and validation loss across iterations.

- Report the average precision on the test set. You can use the provided function to calculate AP on the test set. You should only evaluate your model on the test set once. All hyperparameter tuning should be done on the validation set. You should try to achieve an AP of 0.45 on the test set.

- Try running the trained model on a photo of a building from your own collection. Which parts of the image does it get right? Which ones does it fail on?
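For Part 2, the per-pixel output and loss described above can be sketched as below. This is a hedged illustration rather than the starter code's network: TinySegNet, its channel widths, and the 64x64 input size are assumptions, and random tensors stand in for facade images and label maps. Note that PyTorch stores the "HxWx5" output as a tensor of shape (N, 5, H, W), and torch.nn.CrossEntropyLoss expects integer labels of shape (N, H, W). The final convolution is left without a ReLU so that it produces raw logits.

```python
import torch
import torch.nn as nn


class TinySegNet(nn.Module):
    """Five conv layers with two maxpools, upsampled back to the input resolution."""

    def __init__(self, num_classes=5):
        super().__init__()
        self.net = nn.Sequential(
            nn.Conv2d(3, 32, kernel_size=3, padding=1), nn.ReLU(),
            nn.MaxPool2d(2),                                      # H -> H/2
            nn.Conv2d(32, 64, kernel_size=3, padding=1), nn.ReLU(),
            nn.MaxPool2d(2),                                      # H/2 -> H/4
            nn.Conv2d(64, 64, kernel_size=3, padding=1), nn.ReLU(),
            nn.ConvTranspose2d(64, 64, kernel_size=2, stride=2),  # H/4 -> H/2
            nn.ReLU(),
            nn.Conv2d(64, 32, kernel_size=3, padding=1), nn.ReLU(),
            nn.Upsample(scale_factor=2, mode="nearest"),          # H/2 -> H
            nn.Conv2d(32, num_classes, kernel_size=3, padding=1), # per-pixel logits
        )

    def forward(self, x):
        return self.net(x)


torch.manual_seed(0)
model = TinySegNet()
criterion = nn.CrossEntropyLoss()
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3, weight_decay=1e-5)

# Random stand-ins: 2 RGB images and their per-pixel class labels in 0..4.
# Height and width are assumed to be divisible by 4 so the two pools invert cleanly.
images = torch.rand(2, 3, 64, 64)
targets = torch.randint(0, 5, (2, 64, 64))

# One training step on the per-pixel cross entropy loss.
optimizer.zero_grad()
logits = model(images)              # shape (N, 5, H, W)
loss = criterion(logits, targets)   # averaged over all pixels
loss.backward()
optimizer.step()

# Predicted label map: the most likely class at each pixel.
pred = logits.argmax(dim=1)         # shape (N, H, W)
print(logits.shape, pred.shape, loss.item())
```

Mixing torch.nn.ConvTranspose2d and torch.nn.Upsample here is only to show both options mentioned in the assignment; a real model would typically pick one.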

Resources

[1] Introductory Pytorch Tutorial

[2] Tutorial on writing a custom Dataloader

[3] Example of a neural network class

[4] Google Colab Tutorial (Using this should be very similar to using an ipython notebook)

Acknowledgements

The assignment has been adapted from David Fouhey's Computer Vision course at the University of Michigan.

Programming Project #4 (proj4)
