Background and Overview

Sergei Mikhailovich Prokudin-Gorskii traveled across the Russian Empire taking color photographs of people,
buildings, landscapes, railroads, and more. For each scene he recorded three exposures onto a glass plate,
one each through a red, a green, and a blue filter. While his color photos never materialized, his RGB glass
plate negatives survive in the Library of Congress.

This project takes the digitized Prokudin-Gorskii glass plate images and uses image processing techniques to
produce a color image with as few visual artifacts as possible.

Single Scale Processing

The first algorithm searches exhaustively over a window of possible displacements,
in my case [-15, 15] pixels, to find the displacement with the best score. For the score I used the
L2 norm, computed as the sum of squared differences (SSD) between the intensities of the two images
being compared; the displacement with the lowest SSD is the best match. It was the simplest metric to use
and handled most images quite successfully. I applied this method to align the g channel to the b channel
and the r channel to the b channel, then stacked the shifted channels to produce the color image.
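
The search described above can be sketched as follows. This is a minimal illustration, not the code used to produce the results below: it assumes each channel is a 2D float NumPy array of the same size, that displacements are reported as (dx, dy), and that shifting is done with np.roll, which wraps pixels around the border. The names ssd, align_single_scale, and colorize are hypothetical.

```python
import numpy as np

def ssd(a, b):
    """Sum of squared differences between two equally sized arrays."""
    return float(np.sum((a - b) ** 2))

def align_single_scale(channel, reference, window=15):
    """Return the (dx, dy) shift in [-window, window] with the lowest SSD."""
    best_score, best_shift = float("inf"), (0, 0)
    for dy in range(-window, window + 1):
        for dx in range(-window, window + 1):
            # Assumption: np.roll wraps around; rows move by dy, columns by dx.
            shifted = np.roll(channel, (dy, dx), axis=(0, 1))
            score = ssd(shifted, reference)
            if score < best_score:
                best_score, best_shift = score, (dx, dy)
    return best_shift

def colorize(r, g, b, window=15):
    """Align g and r to b, then stack the shifted channels into an RGB image."""
    gx, gy = align_single_scale(g, b, window)
    rx, ry = align_single_scale(r, b, window)
    g_aligned = np.roll(g, (gy, gx), axis=(0, 1))
    r_aligned = np.roll(r, (ry, rx), axis=(0, 1))
    return np.dstack([r_aligned, g_aligned, b]), (gx, gy), (rx, ry)
```

Because np.roll wraps pixels around the edges, the wrapped rows and columns also contribute to the score; on real plates it may help to score only an interior region, which this sketch does not do.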

cathedral: g[2,5]; r[3,12]

monastery: g[2,-3]; r[2,3]

tobolsk: g[3,3]; r[3,6]

Multi Scale Processing

The next algorithm uses an image pyramid to compute displacements
on significantly larger images. The exhaustive search used in single-scale processing would be
too expensive and too slow on high-resolution images. Instead, the image pyramid technique, again scored
with SSD, was used to find the offsets. By recursively downsizing to a coarser image, estimating the
offset there, and updating that offset at each level, we obtain a progressively more accurate displacement
as we recurse back up to each finer level.
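
A rough sketch of the coarse-to-fine recursion is shown here. It rests on several assumptions that are not stated in this write-up: each level halves the resolution by averaging 2x2 blocks, the coarsest level is searched over [-15, 15] pixels, and each finer level refines the doubled estimate within 2 pixels. The names search, downsample, and align_pyramid, and the parameters min_size, coarse_radius, and refine_radius, are hypothetical.

```python
import numpy as np

def ssd(a, b):
    """Sum of squared differences between two equally sized arrays."""
    return float(np.sum((a - b) ** 2))

def search(channel, reference, center, radius):
    """Exhaustive SSD search over (dx, dy) shifts within `radius` of `center`."""
    cx, cy = center
    best_score, best = float("inf"), center
    for dy in range(cy - radius, cy + radius + 1):
        for dx in range(cx - radius, cx + radius + 1):
            shifted = np.roll(channel, (dy, dx), axis=(0, 1))
            score = ssd(shifted, reference)
            if score < best_score:
                best_score, best = score, (dx, dy)
    return best

def downsample(img):
    """Halve the resolution by averaging 2x2 blocks (odd edges are trimmed)."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    img = img[:h, :w]
    return (img[0::2, 0::2] + img[1::2, 0::2]
            + img[0::2, 1::2] + img[1::2, 1::2]) / 4.0

def align_pyramid(channel, reference, min_size=64,
                  coarse_radius=15, refine_radius=2):
    """Estimate (dx, dy) coarse-to-fine: recurse down, then refine on the way up."""
    if min(channel.shape) <= min_size:
        # Coarsest level: a full window search is cheap here.
        return search(channel, reference, (0, 0), coarse_radius)
    cx, cy = align_pyramid(downsample(channel), downsample(reference),
                           min_size, coarse_radius, refine_radius)
    # One pixel at the coarser level spans two pixels at this level.
    return search(channel, reference, (2 * cx, 2 * cy), refine_radius)
```

With these settings the expensive full-window search runs only on the smallest image, while every larger level evaluates just 25 candidate shifts around the doubled estimate.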

The only image that was not successfully aligned was emir.tif. Because the intensity values differ
significantly among its color channels, SSD on raw intensities does not produce reliable scores, which
leads to poor alignment.

emir: g[24,49]; r[-305,93]

harvesters: g[16,59]; r[13,124]

icon: g[17,41]; r[23,89]

lady: g[9,51]; r[12,111]

melons: g[10,81]; r[13,178]

onion_church: g[26,51]; r[36,108]

self_portrait: g[29,78]; r[37,176]

three_generations: g[14,53]; r[11,112]

train: g[5,42]; r[32,87]

village: g[12,64]; r[22,137]

workshop: g[0,53]; r[-12,105]

Bells and Whistles: Automatic Cropping

To implement automatic cropping, I used the offsets produced by aligning
the green and red channels to the blue. Since the displacement vectors are already at hand, we know how
far each channel has shifted in the positive or negative direction along the x and y axes. For each axis,
I took the maximum of the positive offsets across the red and green channels and the minimum of the
negative offsets to determine the crop boundaries of the image.
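
The boundary rule above could look roughly like this. It is a sketch under assumptions: offsets are (dx, dy) pairs, a positive offset leaves invalid pixels on the left or top edge, and a negative offset leaves them on the right or bottom edge. The names crop_bounds and auto_crop are hypothetical.

```python
import numpy as np

def crop_bounds(shape, g_shift, r_shift):
    """Return (top, bottom, left, right) of the region covered by all channels."""
    h, w = shape[:2]
    xs = (g_shift[0], r_shift[0])
    ys = (g_shift[1], r_shift[1])
    left = max(0, *xs)        # largest positive x offset
    right = w + min(0, *xs)   # most negative x offset trims the right edge
    top = max(0, *ys)         # largest positive y offset
    bottom = h + min(0, *ys)  # most negative y offset trims the bottom edge
    return top, bottom, left, right

def auto_crop(image, g_shift, r_shift):
    """Crop a stacked image to the bounds implied by the two offsets."""
    top, bottom, left, right = crop_bounds(image.shape, g_shift, r_shift)
    return image[top:bottom, left:right]

# Usage with the workshop offsets listed earlier on a made-up 1000x800 image.
image = np.zeros((1000, 800, 3))
cropped = auto_crop(image, g_shift=(0, 53), r_shift=(-12, 105))
print(cropped.shape)  # (895, 788, 3)
```

This removes only the strips where the shifted channels no longer overlap; any border that is part of the original plate scan would remain.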

three generations no crop | three generations with cropped edges | train no crop | train with cropped edges

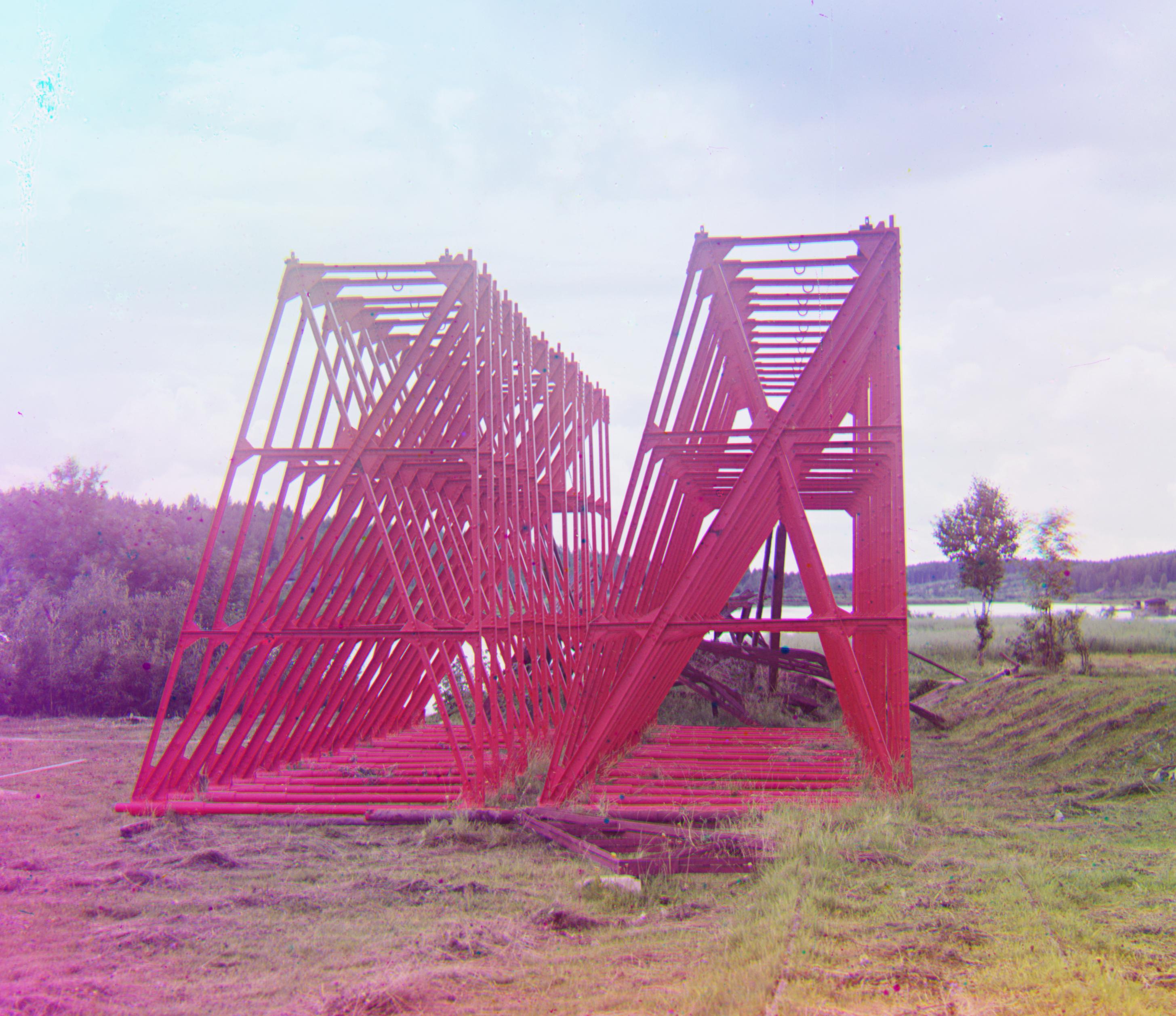

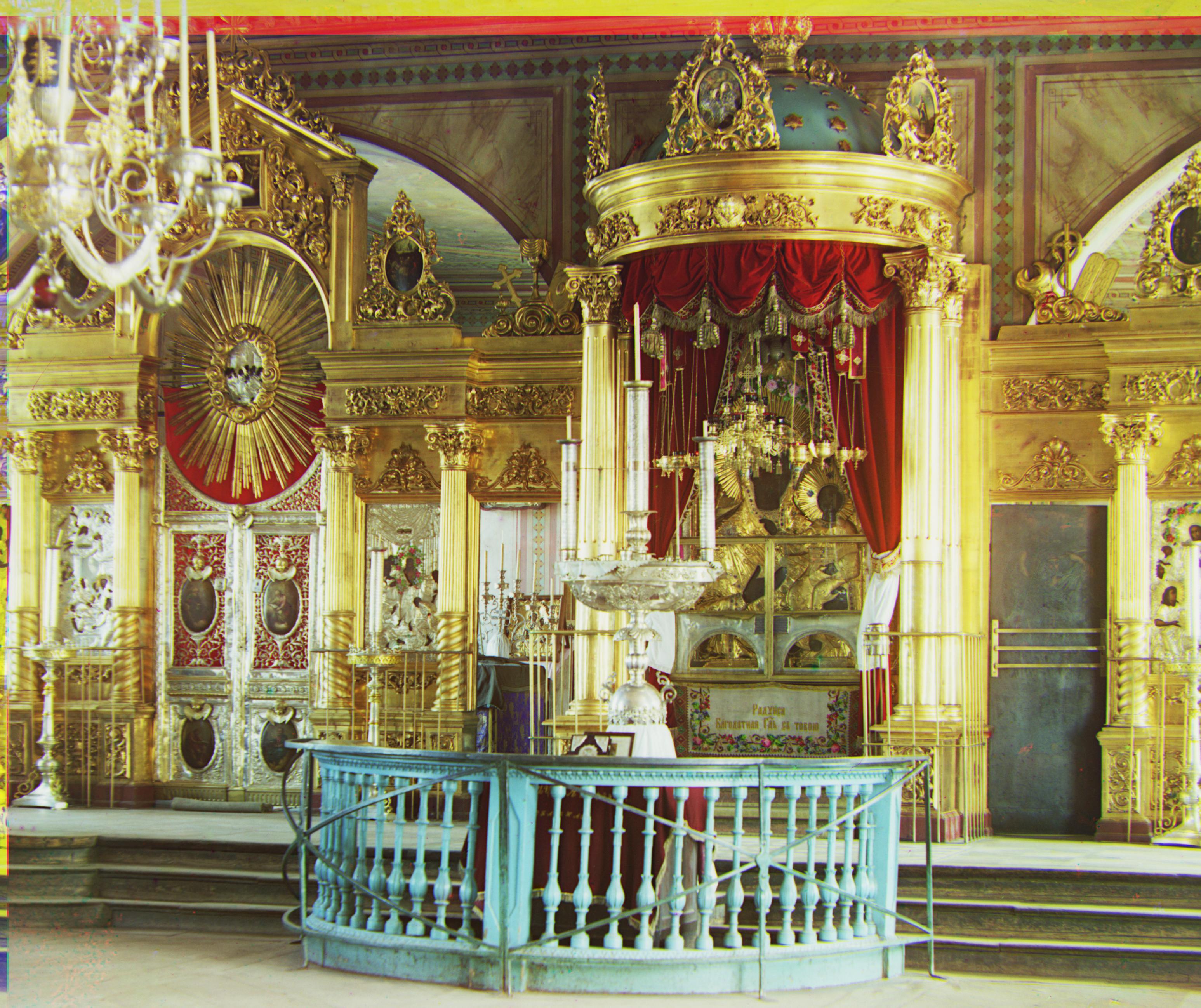

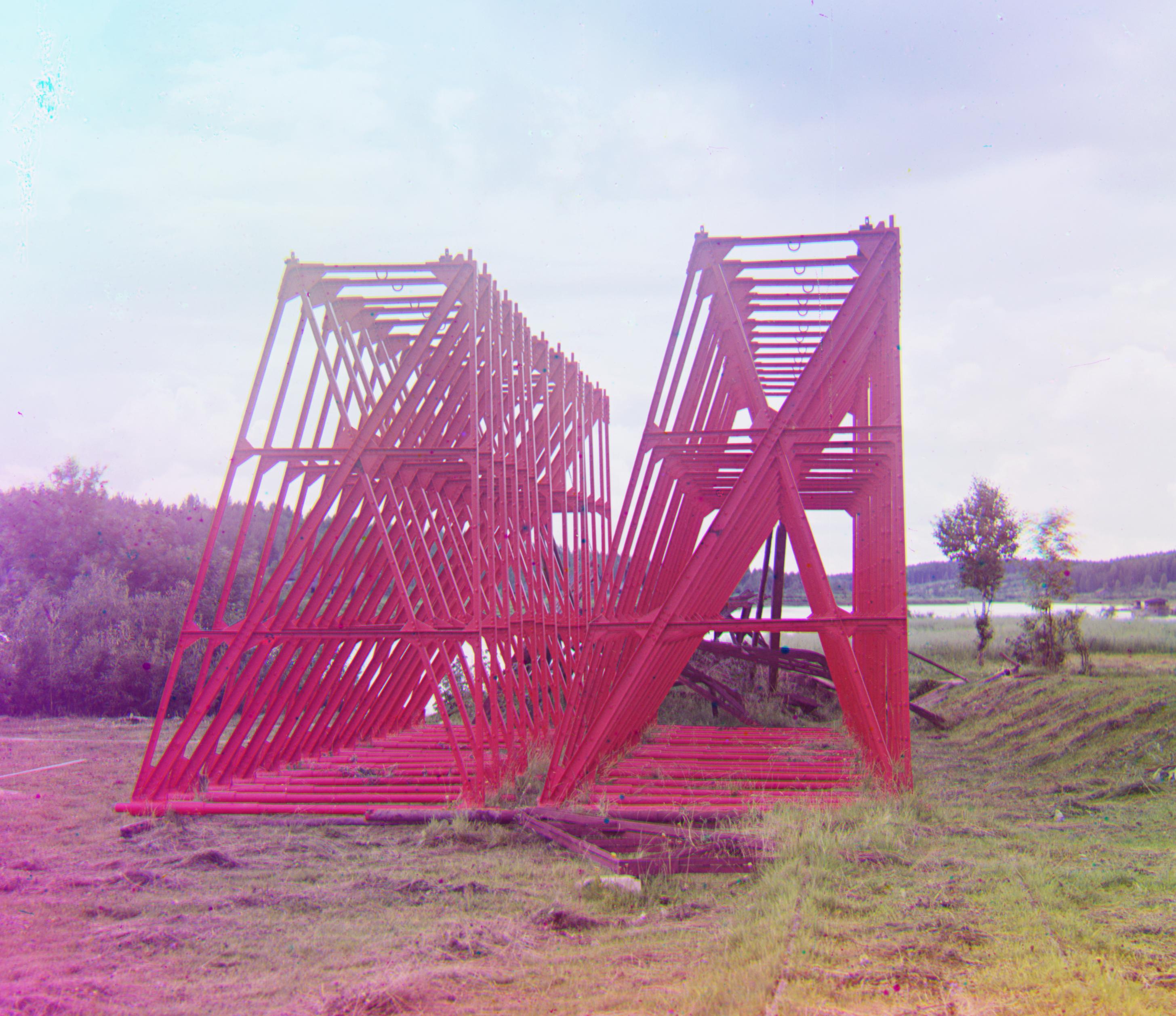

Extra Examples from Prokudin-Gorskii Photo Collection

cathedral_peter: g[11,26]; r[16,63]

diverting_current: g[15,14]; r[19,38]

harvest_time: g[27,22]; r[38,143]

prison: g[30,18]; r[37,42]

reserve_girders: g[21,15]; r[27,43]

trinity_cathedral: g[9,-15]; r[10,11]