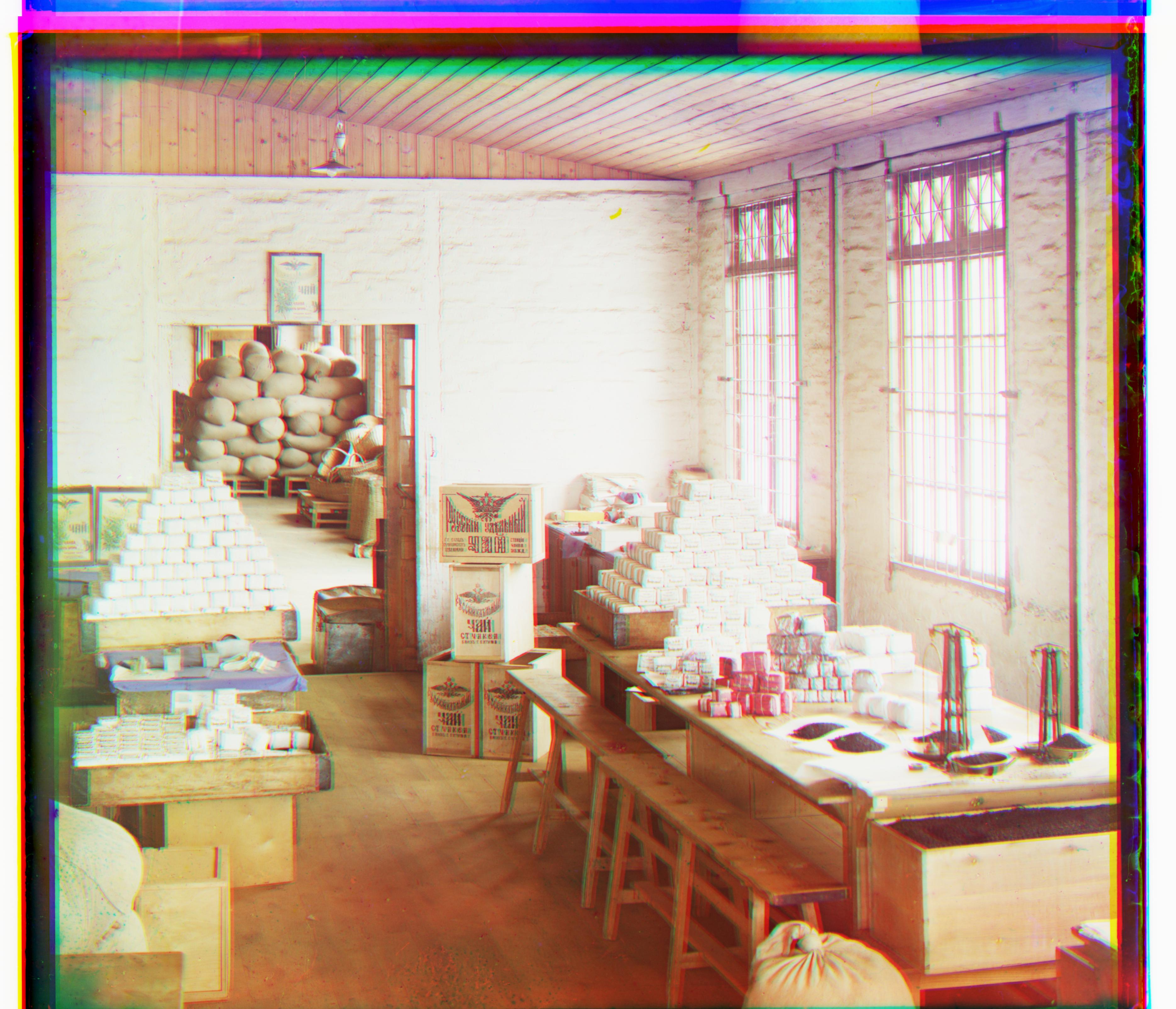

The general idea behind recreating the images in color is to align the three color channel images and then stack them on top of each other to form a single RGB color image. The main difficulty of the project is therefore figuring out how to align the channels correctly. To do this, I used the blue channel as the reference and aligned the other two channels to it. Using the L2 norm as the metric and two for loops, I shifted the red and green channels over a displacement range of [-15, 15] pixels in each direction and kept the shift that minimized the norm of the difference between each channel and the blue one. Initially, I found the results of the alignment unsatisfactory, and tackled the problem by cropping the images to remove the edges before comparing the channels. This reduced the noise that the borders added to the comparison, and recreated the following small color images with relative precision:

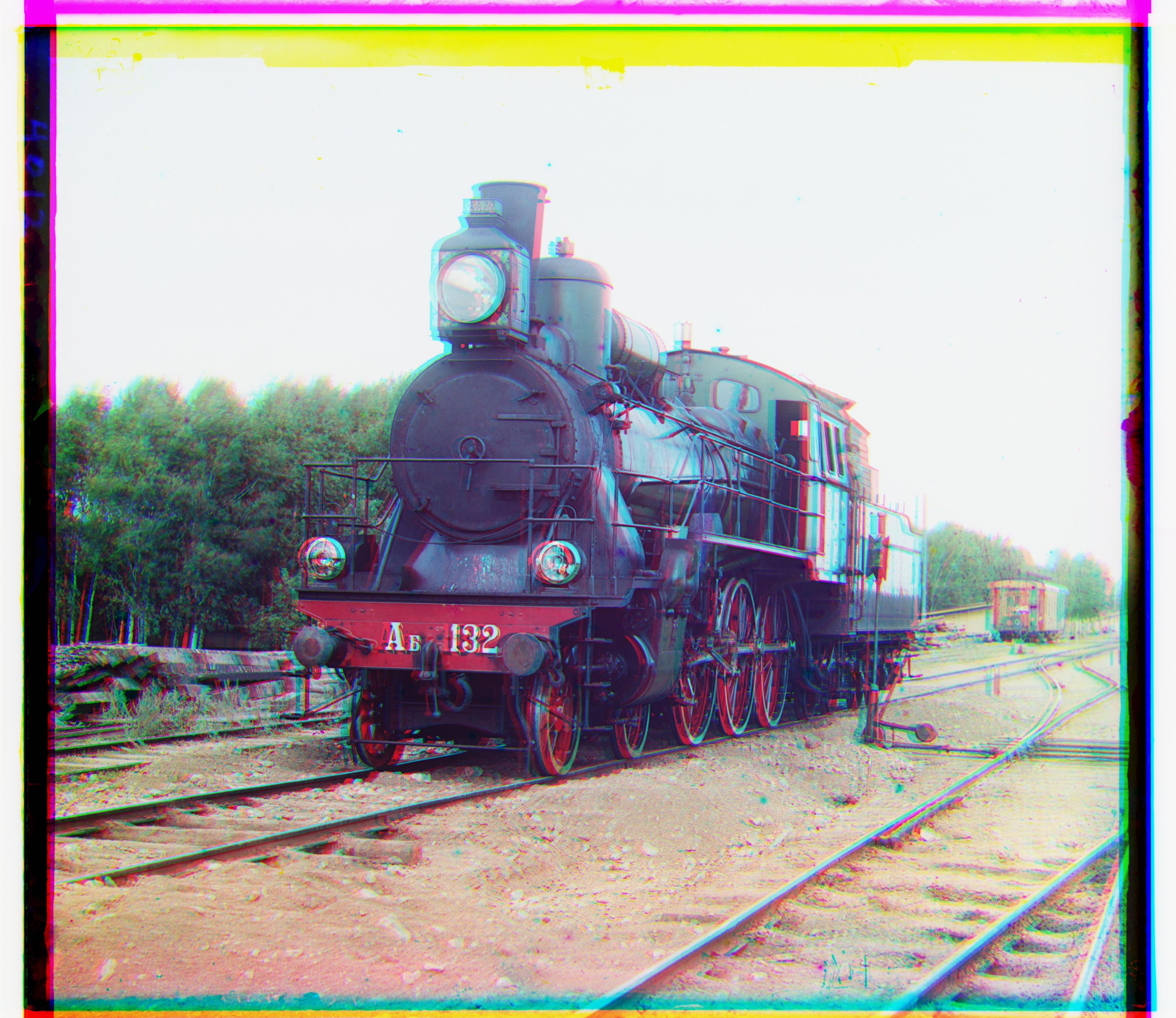
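The exhaustive search described above can be sketched roughly as follows. This is a minimal illustration in Python with NumPy, not the original project code: the function names `crop_interior` and `find_offset`, the 10% crop fraction, and the use of `np.roll` (which wraps pixels around the border) are all assumptions made for the example.

```python
import numpy as np

def crop_interior(img, frac=0.1):
    """Drop a border of `frac` of each dimension (assumed crop size)."""
    h, w = img.shape
    dh, dw = int(h * frac), int(w * frac)
    return img[dh:h - dh, dw:w - dw]

def find_offset(channel, reference, radius=15):
    """Return the (dy, dx) shift of `channel` that minimizes the squared
    L2 distance to `reference`, searching [-radius, radius] on each axis."""
    channel = channel.astype(np.float64)      # avoid uint8 wrap-around
    ref = crop_interior(reference.astype(np.float64))
    best_score, best_offset = np.inf, (0, 0)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            shifted = np.roll(channel, (dy, dx), axis=(0, 1))
            # Compare only the interior so the borders do not add noise.
            score = np.sum((crop_interior(shifted) - ref) ** 2)
            if score < best_score:
                best_score, best_offset = score, (dy, dx)
    return best_offset
```

With offsets for the green and red channels in hand, the color image could then be assembled with something like `np.dstack([np.roll(r, r_off, axis=(0, 1)), np.roll(g, g_off, axis=(0, 1)), b])`.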

While this naive algorithm worked well for small images, shifting and comparing larger images directly made the process incredibly slow. To combat this, I built an image pyramid. I first changed the naive algorithm so that it returned the computed offset instead of the shifted image. Then I took the original color channels and downsized them by a factor of 2 on each recursive call. At the base case of a 400 x 400 pixel image, I ran the naive algorithm on the coarse image to get an offset. This offset was then propagated back up through the recursive calls, being multiplied by 2 at each level to match the doubled resolution. Within every call, I used the offset from the coarser level to reduce the search space, searching only within some chosen radius around it. This made the calculation much faster and produced properly aligned color versions of all the remaining images except emir.tif.
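The recursion described above might look something like the sketch below. It is only an illustration under stated assumptions: the names `l2_search`, `downsample`, and `pyramid_offset` are hypothetical, the 2x2 block averaging and the refinement radius of 2 are choices made for the example, and the border crop is left out for brevity. Only the factor of 2 and the 400-pixel base case come from the description.

```python
import numpy as np

def l2_search(channel, reference, center, radius):
    """Best (dy, dx) within `radius` of `center`, by squared L2 distance."""
    channel = channel.astype(np.float64)
    reference = reference.astype(np.float64)
    best_score, best = np.inf, center
    for dy in range(center[0] - radius, center[0] + radius + 1):
        for dx in range(center[1] - radius, center[1] + radius + 1):
            shifted = np.roll(channel, (dy, dx), axis=(0, 1))
            score = np.sum((shifted - reference) ** 2)
            if score < best_score:
                best_score, best = score, (dy, dx)
    return best

def downsample(img):
    """Halve the resolution by averaging 2x2 blocks (assumed method)."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    return img[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def pyramid_offset(channel, reference, base_size=400,
                   base_radius=15, refine_radius=2):
    """Coarse-to-fine offset estimate via recursive downsampling."""
    if max(channel.shape) <= base_size:
        # Base case: full search on the coarsest image.
        return l2_search(channel, reference, (0, 0), base_radius)
    coarse = pyramid_offset(downsample(channel), downsample(reference),
                            base_size, base_radius, refine_radius)
    # One coarse pixel covers two fine pixels, so double the offset,
    # then search only a small window around it.
    center = (2 * coarse[0], 2 * coarse[1])
    return l2_search(channel, reference, center, refine_radius)
```

The saving comes from the search window: the full [-15, 15] search runs only on the smallest image, while each larger level evaluates just a few candidate shifts around the doubled estimate.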

Because the color channels of emir.tif have very different brightness values, it did not align well, so I looked for a different way to align the channels. The first thing I tried was to align the red channel to the already-aligned green channel instead of to the reference blue channel. This by itself gave significantly better results, although the image still wasn't too sharp:

However, after rerunning this new algorithm on the rest of the images, I noticed that although the quality of most pictures was essentially unchanged, lady.tif was aligned significantly worse:

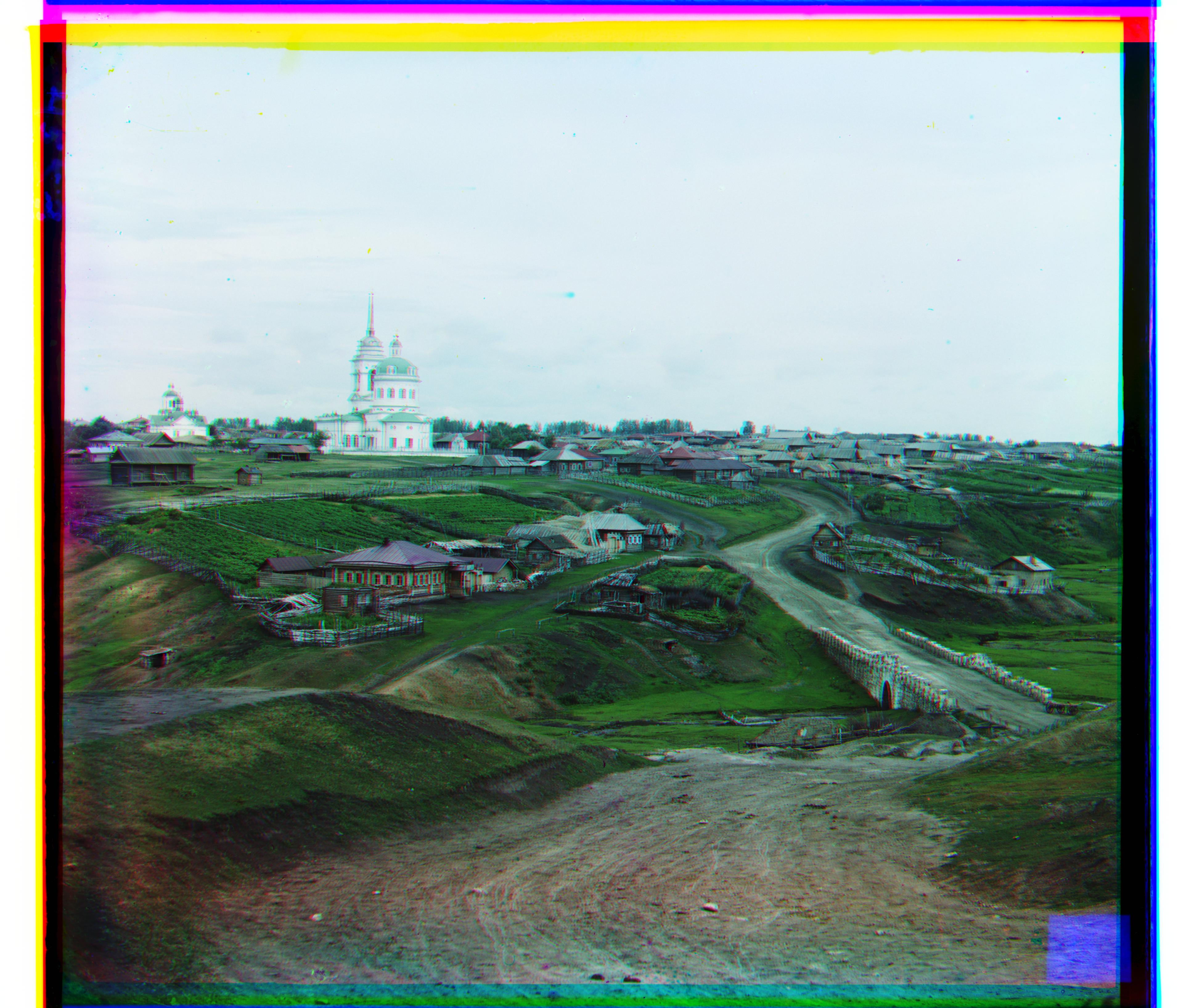

Based on this, I decided to go back to the drawing board and try again. After looking through the different options for bells and whistles on the project spec, and then researching a bit online, I came across the idea of using Canny Edge Detection to align the channels. My new algorithm takes the color channels, crops the edges as usual, applies Canny Edge Detection to get binary (black and white) edge images, and then uses the same L2 norm metric to align those edge images. To do this easily, I imported the feature module from skimage. With this approach, the images that became significantly better aligned were emir.tif, lady.tif, and melons.tif. The rest had either the same, or only a slightly different result.
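The reason edge maps help with channels of different brightness can be seen in a small sketch. To keep the example dependent on NumPy only, it swaps in a thresholded gradient magnitude as a crude stand-in for Canny; in the approach described above, that step would be `skimage.feature.canny` applied to each channel. The names `edge_map` and `align_on_edges` and the 0.2 threshold are hypothetical, and the border crop is omitted.

```python
import numpy as np

def edge_map(img, threshold=0.2):
    """Binary edge image from gradient magnitude.
    NOTE: a simplified stand-in for skimage.feature.canny, not Canny itself."""
    gy, gx = np.gradient(img.astype(np.float64))
    magnitude = np.hypot(gx, gy)
    # Threshold relative to the strongest edge, so overall brightness
    # and contrast of the channel do not change the result.
    return (magnitude > threshold * magnitude.max()).astype(np.float64)

def align_on_edges(channel, reference, radius=15):
    """Find the (dy, dx) shift minimizing the squared L2 distance
    between the edge maps of the two channels."""
    ch_edges, ref_edges = edge_map(channel), edge_map(reference)
    best_score, best = np.inf, (0, 0)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            shifted = np.roll(ch_edges, (dy, dx), axis=(0, 1))
            score = np.sum((shifted - ref_edges) ** 2)
            if score < best_score:
                best_score, best = score, (dy, dx)
    return best
```

Because the metric now compares where edges are rather than how bright the pixels are, two channels that render the same object at very different intensities can still produce matching edge images.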

Below are the results of my algorithm on various chosen pictures from the Prokudin-Gorskii Collection. bamboo.tif seemed to have some trouble aligning properly due to the predominance of green, which may have caused the L2 norm image metric to not work as well: