CS194-26 Project 1: Images of the Russian Empire

Buyi Zhang, cs194-26-aei

Overview

The goal of this assignment is to take the digitized Prokudin-Gorskii glass plate images and, using image processing techniques, automatically produce a color image with as few visual artifacts as possible. To do this, the three color channel images are extracted, placed on top of each other, and aligned so that they form a single RGB color image.

Approach

Single-scale version

To align the RGB channels, I first implemented a single-scale version. It searches a displacement range of [-15, 15] pixels for the shift of the G and R channels that best aligns each of them with the B channel. The B, G, and R channels are obtained by slicing the original image into three parts of equal height.
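The slicing step can be sketched as follows. This is a minimal illustration rather than the project's actual code: the function name `split_channels` is hypothetical, and it assumes the plates are stacked top to bottom in B, G, R order and that any leftover rows at the bottom can be dropped.

```python
import numpy as np

def split_channels(plate):
    """Split a stacked glass-plate scan into three equal-height channels.

    Assumes the plates are stacked top to bottom as B, G, R.
    """
    height = plate.shape[0] // 3  # leftover rows at the bottom are dropped
    b = plate[:height]
    g = plate[height:2 * height]
    r = plate[2 * height:3 * height]
    return b, g, r

# Toy 7x2 "scan": 7 rows do not divide evenly by 3, so the last row is ignored.
plate = np.arange(14, dtype=float).reshape(7, 2)
b, g, r = split_channels(plate)
print(b.shape, g.shape, r.shape)
```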

To measure the "goodness of alignment", I used the SSD and NCC metrics:

- SSD metric: the sum of squared differences between the raw pixels of the two images.

- NCC metric: the dot product of the two flattened and normalized images. I subtract this value from one so that the optimal displacement has the smallest score.
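The two metrics can be written in a few lines of NumPy. This is a sketch under assumptions: the function names `ssd` and `ncc_score` are illustrative, and "normalized" is taken to mean dividing each flattened image by its Euclidean norm, without subtracting the mean.

```python
import numpy as np

def ssd(a, b):
    """Sum of squared differences between two equally sized images."""
    return float(np.sum((a - b) ** 2))

def ncc_score(a, b):
    """One minus the dot product of the flattened, unit-norm images.

    Lower is better, so it can be minimized just like SSD.
    """
    a = a.ravel().astype(float)
    b = b.ravel().astype(float)
    a = a / np.linalg.norm(a)
    b = b / np.linalg.norm(b)
    return 1.0 - float(np.dot(a, b))

x = np.array([[1.0, 2.0], [3.0, 4.0]])
print(ssd(x, x + 1))        # every pixel differs by 1
print(ncc_score(x, 2 * x))  # a brightness scaling leaves the NCC score near 0
```

Note that the NCC score ignores a global brightness scaling while SSD does not, which is one reason to prefer it when the three plates have different exposures.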

I used NCC as the default metric. One caveat when computing the metrics is that the channel borders cause a lot of trouble. Because the provided images have uneven borders, I did not compute the metric over the edges of each channel (defined as the outer 10% of each image dimension).
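Putting the pieces together, the single-scale search might look like the sketch below. The helper names (`interior`, `ncc_score`, `align`) are hypothetical, and shifting with `np.roll` (which wraps pixels around the image) is an assumption; the wrapped pixels fall mostly in the border region that is excluded from the score.

```python
import numpy as np

def interior(img, frac=0.10):
    """Drop the outer `frac` of each dimension so uneven borders are ignored."""
    h, w = img.shape
    dh, dw = int(h * frac), int(w * frac)
    return img[dh:h - dh, dw:w - dw]

def ncc_score(a, b):
    """One minus the dot product of the flattened, unit-norm images."""
    a = a.ravel() / np.linalg.norm(a)
    b = b.ravel() / np.linalg.norm(b)
    return 1.0 - float(np.dot(a, b))

def align(channel, reference, center=(0, 0), radius=15):
    """Exhaustively try every shift within `radius` of `center`.

    Returns the (dy, dx) shift of `channel` with the lowest score.
    """
    best_score, best_shift = np.inf, center
    for dy in range(center[0] - radius, center[0] + radius + 1):
        for dx in range(center[1] - radius, center[1] + radius + 1):
            shifted = np.roll(channel, (dy, dx), axis=(0, 1))
            score = ncc_score(interior(shifted), interior(reference))
            if score < best_score:
                best_score, best_shift = score, (dy, dx)
    return best_shift

# Synthetic check: displace a random image and recover the inverse shift.
rng = np.random.default_rng(0)
reference = rng.random((40, 40))
channel = np.roll(reference, (-3, 2), axis=(0, 1))
shift = align(channel, reference, radius=5)
print(shift)
```

The `center` argument is not needed for the single-scale case, but it lets the same function be reused to refine an estimate that came from elsewhere.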

Pyramid version

Exhaustive search becomes prohibitively expensive when the pixel displacement is large (as is the case for high-resolution glass plate scans).

For this case, I implemented a faster search procedure based on an image pyramid. An image pyramid represents the image at multiple scales (usually scaled by a factor of 2). The image is downscaled until its total size is less than 2*10^5; processing then starts at this coarsest scale (smallest image) and proceeds down the pyramid, updating the displacement estimate at each level. This is easy to implement by adding recursive calls to my original single-scale implementation with a user-specified displacement window.

Assuming the alignment at the lower resolution is successful, the search range at the next level, where each dimension is doubled, is set to [-3, 3], which greatly reduces the computational burden.
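The recursion can be sketched as below. This is an illustrative version, not the project's code: all function names are hypothetical, downscaling by 2x2 block averaging is an assumption (the write-up does not say how images were resized), and shifts are applied with `np.roll`. The 2*10^5 size threshold, the [-15, 15] base window, and the [-3, 3] refinement window come from the description above.

```python
import numpy as np

def score(a, b, frac=0.10):
    """1 - NCC computed over the interior of two equally sized images."""
    h, w = a.shape
    dh, dw = int(h * frac), int(w * frac)
    a = a[dh:h - dh, dw:w - dw].ravel()
    b = b[dh:h - dh, dw:w - dw].ravel()
    return 1.0 - float(np.dot(a / np.linalg.norm(a), b / np.linalg.norm(b)))

def search(channel, reference, center, radius):
    """Exhaustive search over shifts within `radius` of `center`."""
    best_score, best_shift = np.inf, center
    for dy in range(center[0] - radius, center[0] + radius + 1):
        for dx in range(center[1] - radius, center[1] + radius + 1):
            s = score(np.roll(channel, (dy, dx), axis=(0, 1)), reference)
            if s < best_score:
                best_score, best_shift = s, (dy, dx)
    return best_shift

def halve(img):
    """Downscale by 2 with 2x2 block averaging (an odd last row/column is dropped)."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    return img[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def pyramid_align(channel, reference, min_size=2e5, base_radius=15, refine_radius=3):
    """Coarse-to-fine alignment: solve at half resolution, then refine."""
    if channel.size < min_size:
        # Coarsest level: full-window search starting from zero displacement.
        return search(channel, reference, (0, 0), base_radius)
    dy, dx = pyramid_align(halve(channel), halve(reference),
                           min_size, base_radius, refine_radius)
    # A shift of (dy, dx) at half resolution is (2*dy, 2*dx) at this level.
    return search(channel, reference, (2 * dy, 2 * dx), refine_radius)

# Small synthetic check; min_size is lowered so the recursion is exercised.
rng = np.random.default_rng(0)
reference = rng.random((64, 64))
channel = np.roll(reference, (-6, 4), axis=(0, 1))
shift = pyramid_align(channel, reference, min_size=2000)
print(shift)
```

With a [-3, 3] refinement window, each level above the coarsest evaluates 49 candidate shifts instead of 961, which is where the savings come from.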

Bells & Whistles (Extras)

Better features

Instead of aligning based on raw RGB pixel similarity, I tried aligning on edges. I used the Canny edge detector provided in the Python skimage package. Since it produces an image whose pixels are either 0 or 1, I can treat the edge image as just another input for computing the metrics.
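As a rough sketch of the edge feature, the snippet below converts a channel into a 0/1 edge map with `skimage.feature.canny`. The wrapper name `edge_map` and the choice of `sigma=1.0` are assumptions; the write-up does not state which detector parameters were used.

```python
import numpy as np
from skimage.feature import canny

def edge_map(channel, sigma=1.0):
    """Return a float image that is 1.0 on Canny edges and 0.0 elsewhere."""
    return canny(channel, sigma=sigma).astype(float)

# Toy channel: a bright square on a dark background.
channel = np.zeros((32, 32))
channel[8:24, 8:24] = 1.0
edges = edge_map(channel)
print(edges.shape, int(edges.sum()))
```

The resulting edge maps of two channels can be passed to the same metric and search code in place of the raw pixel intensities.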

Results

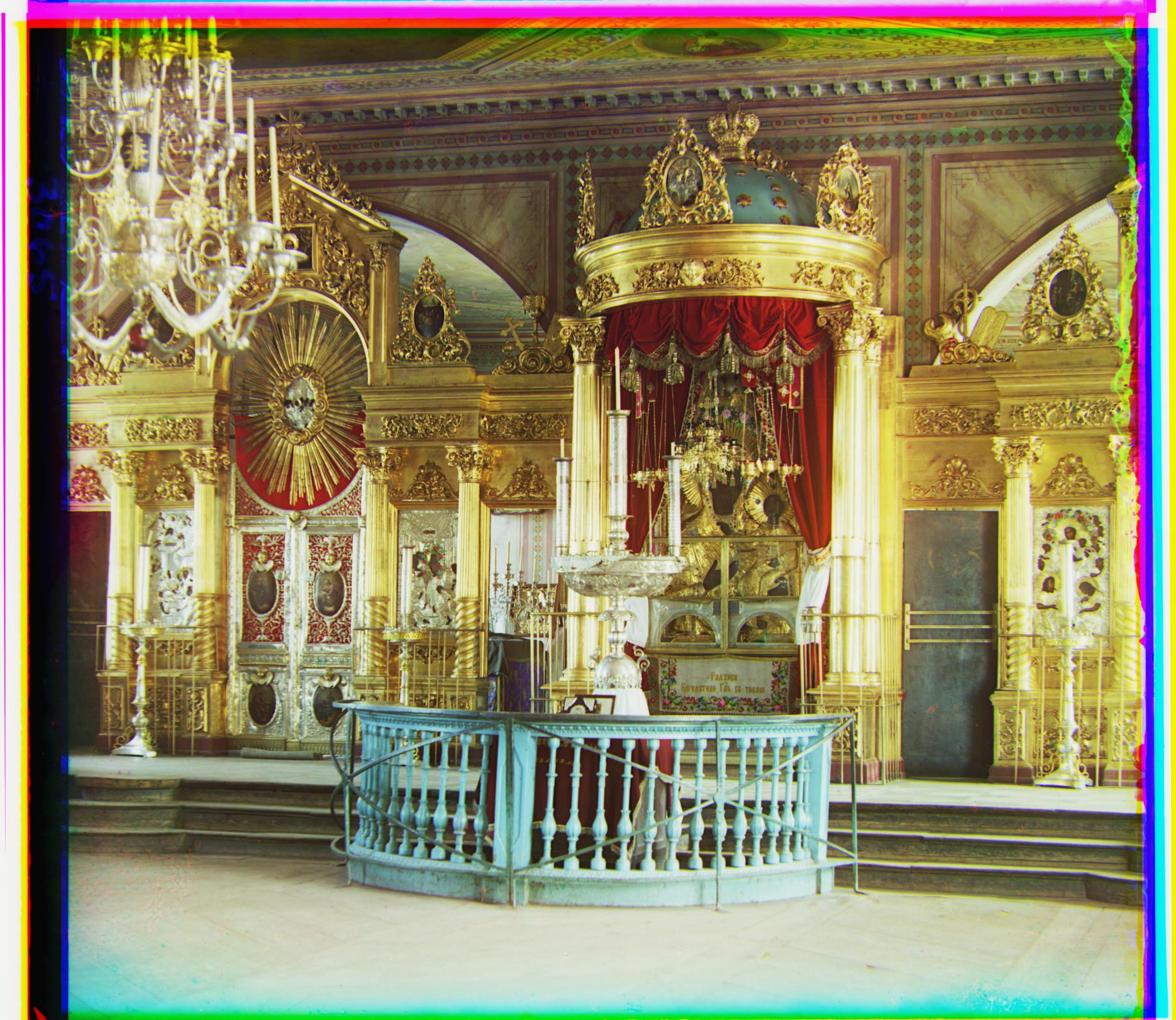

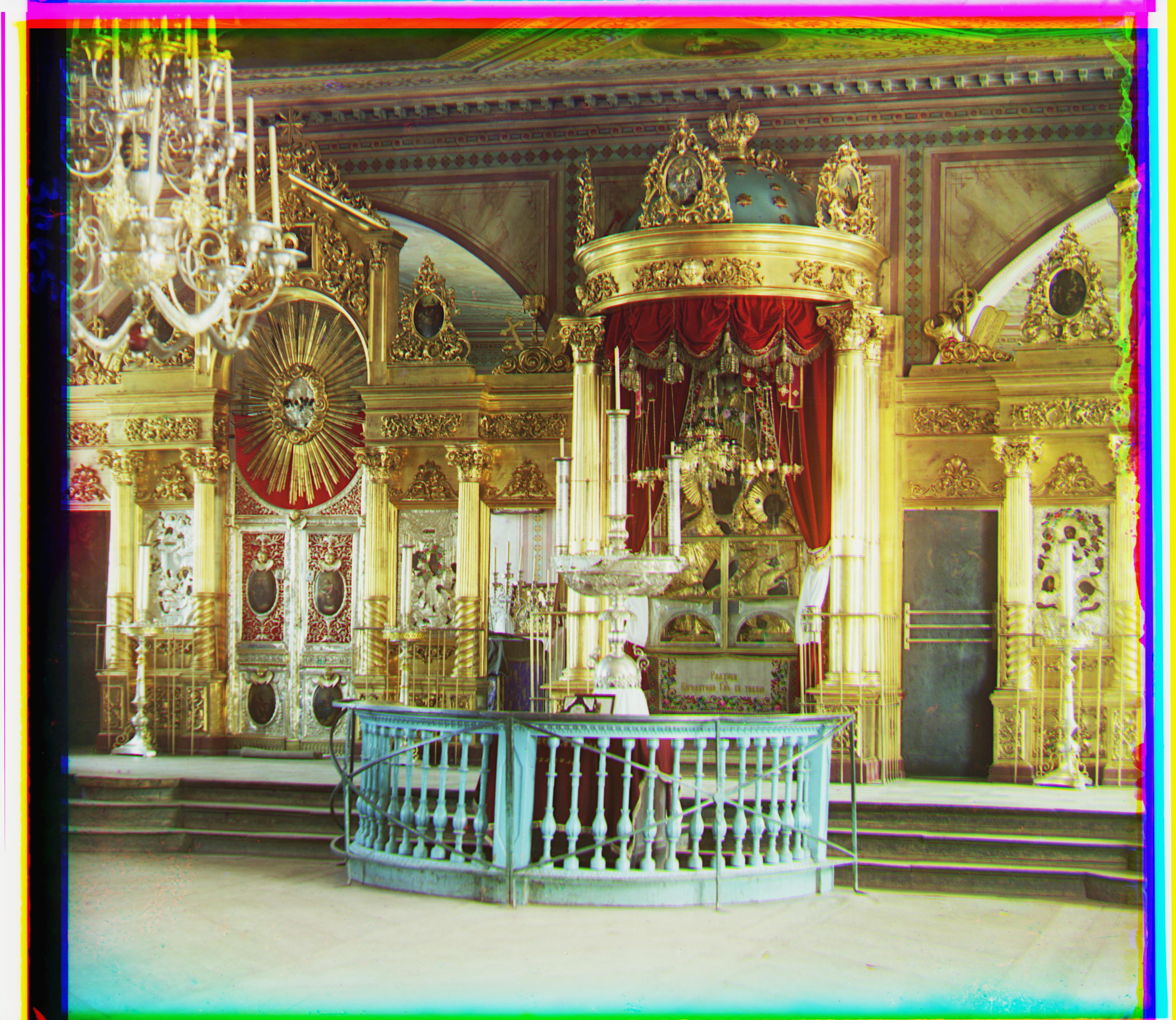

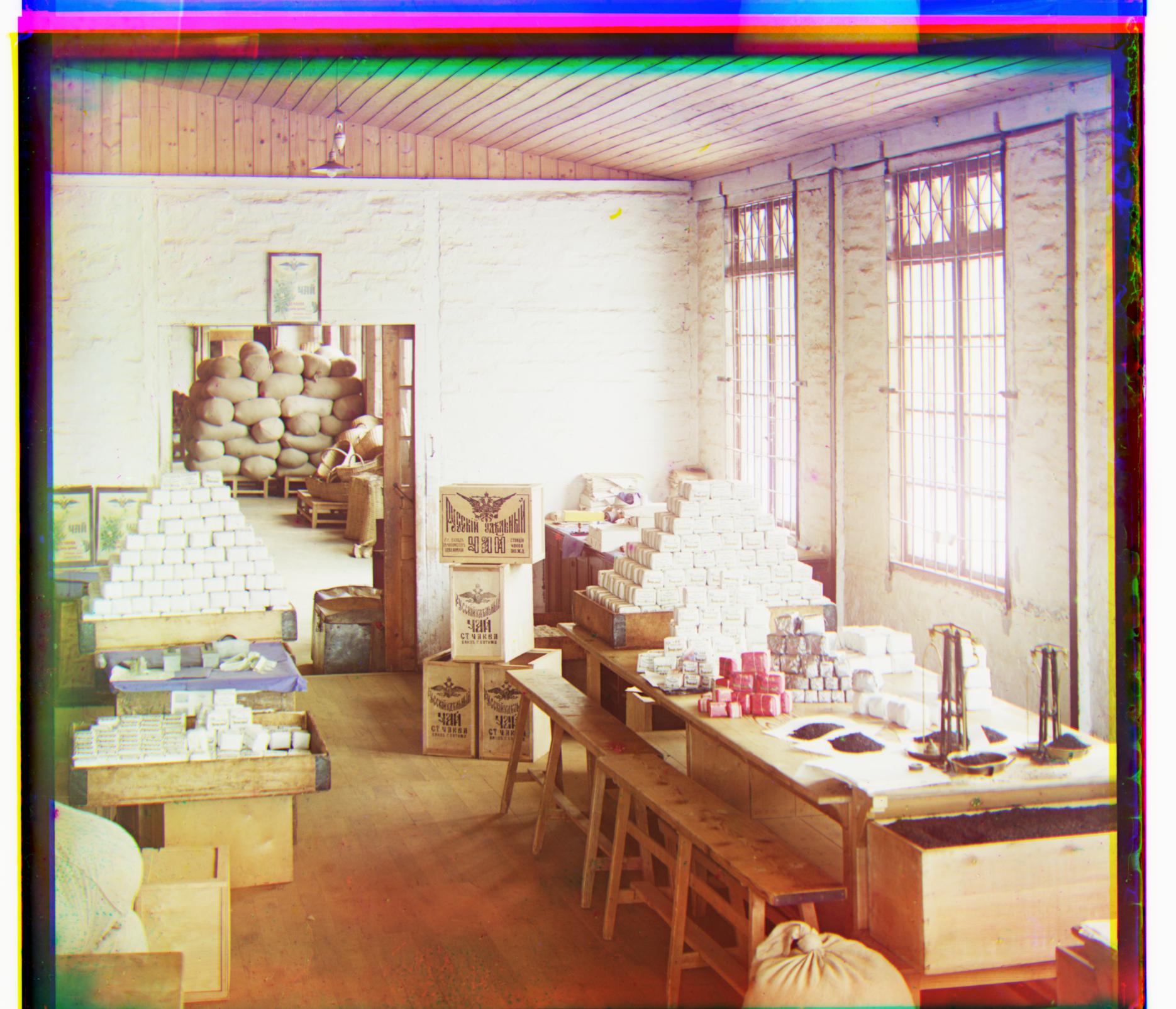

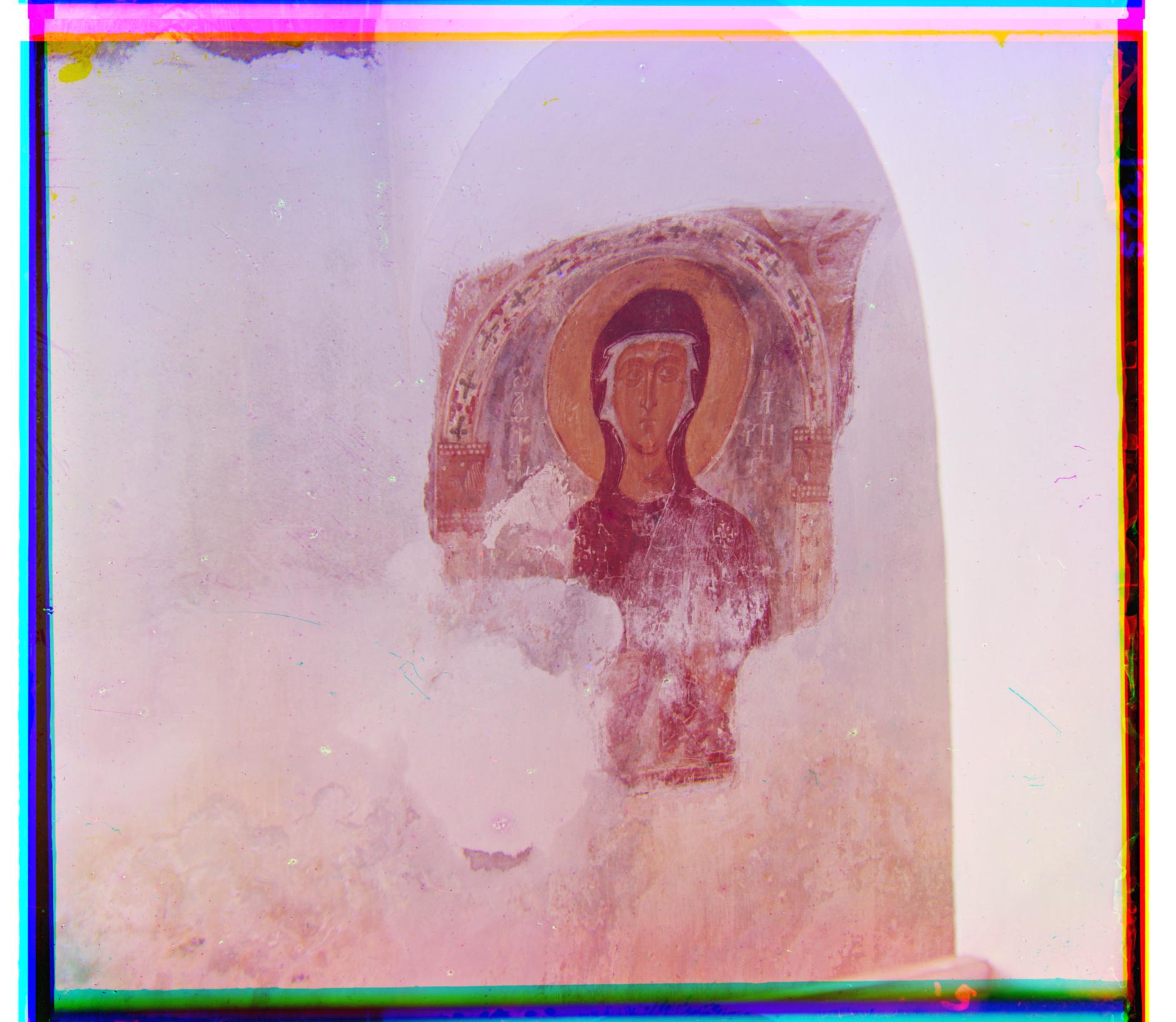

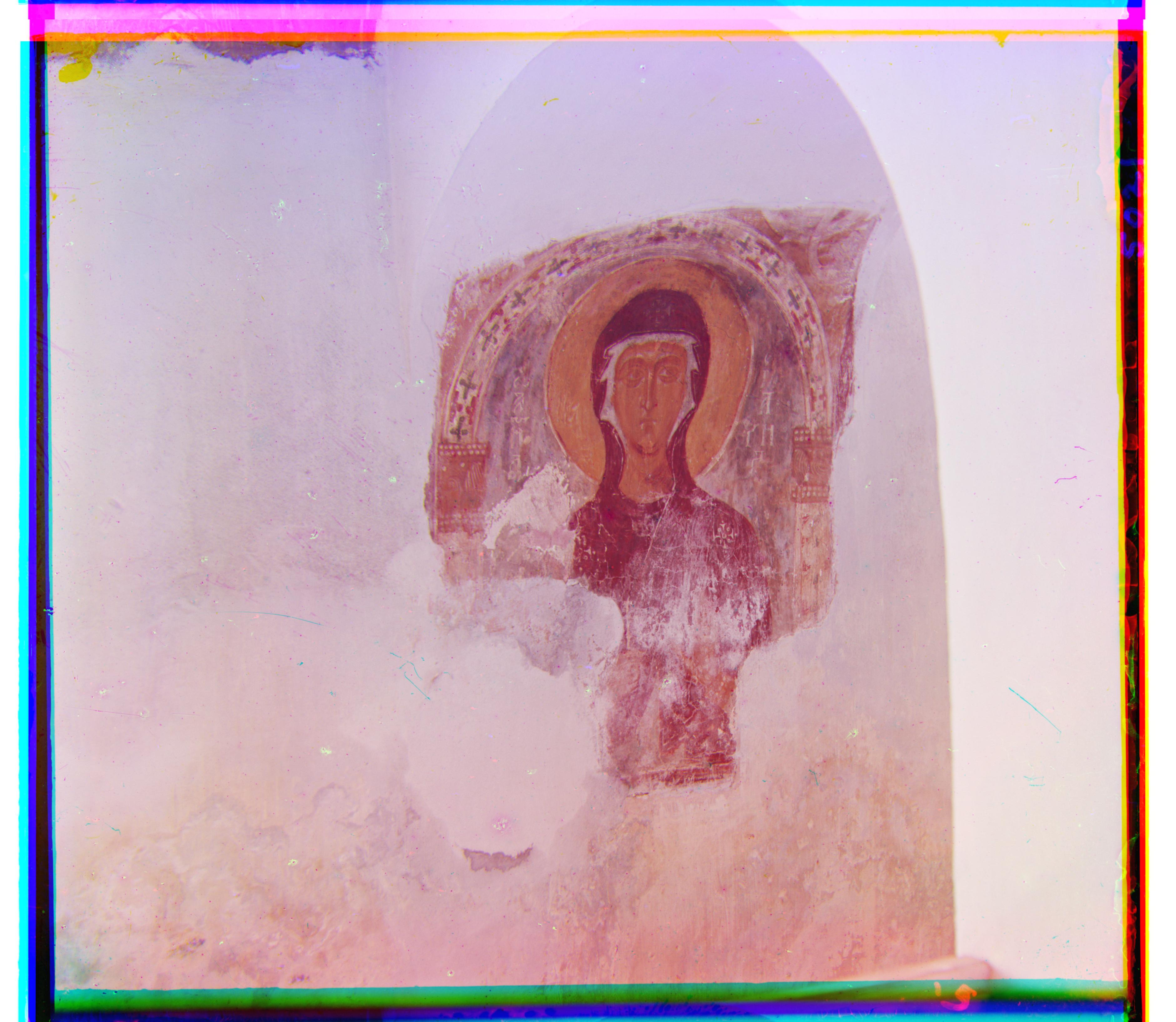

The results of my algorithm on three examples of my own choosing, downloaded from the Prokudin-Gorskii collection, are shown last.

The Edge method performs reasonably well on all images. I think this is because the edge detector uses gradient information, and its smoothing, filtering, and post-processing of edges also help it perform well on images with large changes in gradient.

| Image | Pixels_NCC G offset | Pixels_NCC R offset | Edge_NCC G offset | Edge_NCC R offset |
| --- | --- | --- | --- | --- |
| cathedral | [5, 2] | [12, 3] | [5, 2] | [12, 3] |
| monastery | [-3, 2] | [3, 2] | [-3, 2] | [3, 2] |
| tobolsk | [3, 3] | [6, 3] | [3, 3] | [6, 3] |
| emir | [49, 24] | [104, 56] | [49, 23] | [107, 40] |
| harvesters | [60, 17] | [124, 13] | [60, 18] | [118, 11] |

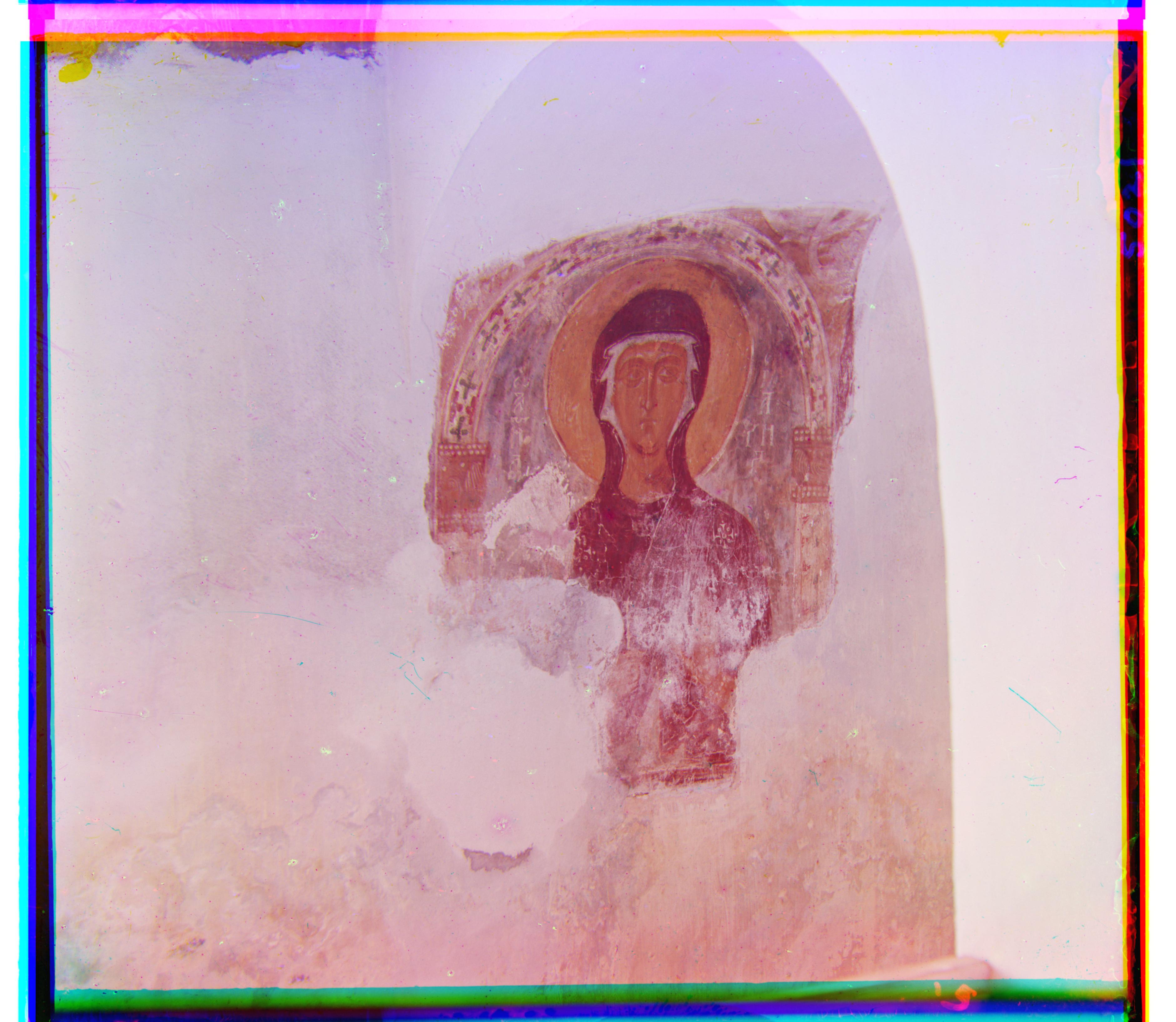

| Image | Pixels_NCC G offset | Pixels_NCC R offset | Edge_NCC G offset | Edge_NCC R offset |
| --- | --- | --- | --- | --- |
| icon | [41, 17] | [89, 23] | [38, 16] | [90, 22] |
| lady | [55, 9] | [117, 11] | [57, 9] | [120, 13] |
| melons | [82, 11] | [178, 13] | [79, 9] | [173, 11] |
| onion_church | [51, 26] | [108, 36] | [52, 24] | [107, 34] |
| self_portrait | [79, 29] | [176, 37] | [77, 29] | [165, 34] |
| three_generations | [53, 14] | [112, 11] | [58, 17] | [115, 12] |
| train | [43, 6] | [87, 32] | [40, 8] | [85, 29] |
| village | [65, 12] | [138, 22] | [67, 13] | [138, 22] |
| workshop | [53, 0] | [105, -12] | [54, -1] | [105, -12] |
| z0 | [60, -20] | [132, -44] | [62, -19] | [132, -44] |
| z1 | [9, 4] | [27, -2] | [9, 3] | [28, -1] |
| z2 | [15, 9] | [17, 9] | [15, 8] | [16, 7] |