-

Overview

In this assignment, I use warping and cross-dissolving to produce a "morphing" animation between two face images. I also use this dataset to generate a "mean face" and a caricature of myself.

-

Defining Correspondences

For our warping to work, we first need to select anchor points in the two images that correspond to each other. I used a matplotlib GUI and ginput to collect the corresponding points according to this order. I then used Delaunay triangulation to divide the images into triangles.

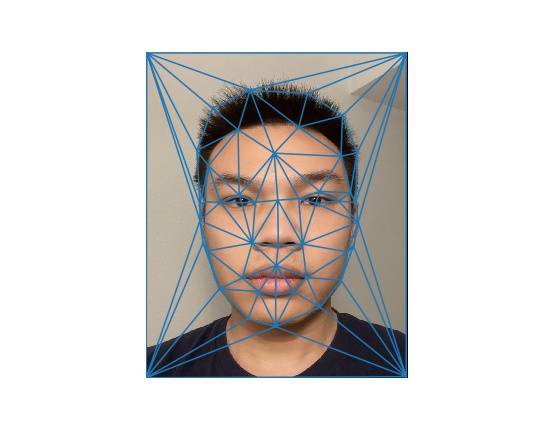

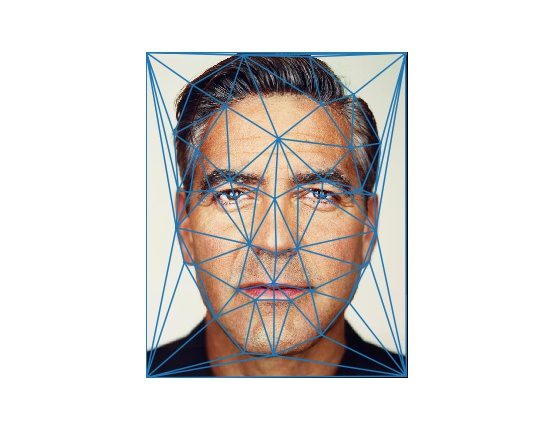

Figures: My Picture after Triangulation; George Clooney after Triangulation
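The triangulation step can be sketched as follows. This is a minimal illustration, not the code used for the figures: it assumes `scipy.spatial.Delaunay` as the triangulation routine and triangulates the average of the two point sets, so that one set of triangle indices is valid for both images. The function name and the sample points are hypothetical.

```python
import numpy as np
from scipy.spatial import Delaunay

def triangulate_mean_shape(points_a, points_b):
    """Triangulate the average of two corresponding point sets.

    points_a, points_b: (N, 2) arrays of (x, y) points in matching order.
    Returns an (M, 3) integer array; each row holds the indices of one
    triangle's vertices and can be applied to either point set.
    """
    points_a = np.asarray(points_a, dtype=float)
    points_b = np.asarray(points_b, dtype=float)
    mean_points = (points_a + points_b) / 2.0
    return Delaunay(mean_points).simplices

# Hypothetical points: four image corners plus one interior landmark.
pts_a = np.array([[0, 0], [100, 0], [100, 100], [0, 100], [40, 50]])
pts_b = np.array([[0, 0], [100, 0], [100, 100], [0, 100], [60, 50]])
triangles = triangulate_mean_shape(pts_a, pts_b)
```

Because the triangles are stored as vertex indices rather than coordinates, `pts_a[triangles]` and `pts_b[triangles]` give the matching triangles in each image.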

-

Computing the "Mid-way Face"

To find the corresponding pixels between the original images and the warped images, we need the transformation matrix for each warp. We can use the following formula to find the affine transformation matrix between two triangles:

\( \begin{bmatrix} c & d & e \\ f & g & h \\ 0 & 0 & 1 \end{bmatrix} \begin{bmatrix} a_1 & a_2 & a_3 \\ b_1 & b_2 & b_3 \\ 1 & 1 & 1 \end{bmatrix} = \begin{bmatrix} x_1 & x_2 & x_3 \\ y_1 & y_2 & y_3 \\ 1 & 1 & 1 \end{bmatrix} \)

Where \((a_i, b_i)\) are the vertices of the source triangle, and \((x_i, y_i)\) are the vertices of the destination triangle.
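As a minimal sketch of solving the equation above with numpy: writing it as \(T A = X\), the matrix \(T\) can be obtained from the transposed system \(A^\top T^\top = X^\top\). The function name `compute_affine` and the sample triangles are illustrative assumptions.

```python
import numpy as np

def compute_affine(src_tri, dst_tri):
    """Return the 3x3 matrix T such that T @ [a, b, 1] = [x, y, 1].

    src_tri, dst_tri: (3, 2) arrays of triangle vertices (x, y).
    """
    # Stack vertices as columns in homogeneous coordinates: rows are x, y, 1.
    src = np.vstack([np.asarray(src_tri, dtype=float).T, np.ones(3)])
    dst = np.vstack([np.asarray(dst_tri, dtype=float).T, np.ones(3)])
    # T @ src = dst  is equivalent to  src.T @ T.T = dst.T
    return np.linalg.solve(src.T, dst.T).T

# Hypothetical triangles: scale x by 2, y by 4, then translate by (2, 3).
src = np.array([[0, 0], [1, 0], [0, 1]])
dst = np.array([[2, 3], [4, 3], [2, 7]])
T = compute_affine(src, dst)
```

For this example `T` comes out as a scale of 2 in x and 4 in y followed by a translation of (2, 3), with last row (0, 0, 1).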

We can use numpy to solve for the transformation matrix \(T\), and then apply the inverse transformation \(T^{-1}\) to each pixel in the destination image to find its corresponding pixel in the source image. We then average the pixel values of the two images at the corresponding pixels to obtain the "mid-way" face.

Figures: Mid-way Face; Mid-way Face with Triangulation
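The inverse-warping step can be sketched as below. This is one possible implementation under stated assumptions, not necessarily the one used here: pixels inside the destination triangle are found with a barycentric test, and the source image is sampled with nearest-neighbor rounding. The helper names and the toy image are hypothetical.

```python
import numpy as np

def affine_matrix(src_tri, dst_tri):
    """3x3 matrix T mapping homogeneous source vertices to destination vertices."""
    src = np.vstack([np.asarray(src_tri, dtype=float).T, np.ones(3)])
    dst = np.vstack([np.asarray(dst_tri, dtype=float).T, np.ones(3)])
    return np.linalg.solve(src.T, dst.T).T

def warp_triangle(src_img, out_img, src_tri, dst_tri):
    """Fill dst_tri in out_img by sampling src_img through the inverse warp."""
    dst_tri = np.asarray(dst_tri, dtype=float)
    h, w = out_img.shape[:2]
    # Candidate pixels: bounding box of the destination triangle, clipped to the image.
    x0, y0 = np.floor(dst_tri.min(axis=0)).astype(int)
    x1, y1 = np.ceil(dst_tri.max(axis=0)).astype(int)
    xs, ys = np.meshgrid(np.arange(max(x0, 0), min(x1, w - 1) + 1),
                         np.arange(max(y0, 0), min(y1, h - 1) + 1))
    pix = np.vstack([xs.ravel(), ys.ravel(), np.ones(xs.size)])  # 3 x K, homogeneous
    # Barycentric coordinates; a pixel is inside when all three are non-negative.
    tri_h = np.vstack([dst_tri.T, np.ones(3)])
    bary = np.linalg.solve(tri_h, pix)
    pix = pix[:, np.all(bary >= -1e-9, axis=0)]
    # Map destination pixels back into the source image with T^{-1}.
    src_xy = np.linalg.inv(affine_matrix(src_tri, dst_tri)) @ pix
    sx = np.clip(np.rint(src_xy[0]).astype(int), 0, src_img.shape[1] - 1)
    sy = np.clip(np.rint(src_xy[1]).astype(int), 0, src_img.shape[0] - 1)
    out_img[pix[1].astype(int), pix[0].astype(int)] = src_img[sy, sx]
    return out_img

# Toy example: a triangle translated by (2, 2) in a 10x10 image.
src_img = np.arange(100, dtype=float).reshape(10, 10)
out = np.full((10, 10), -1.0)
warp_triangle(src_img, out, [[0, 0], [4, 0], [0, 4]], [[2, 2], [6, 2], [2, 6]])
```

Looping over the destination pixels (rather than pushing source pixels forward) avoids holes in the output. A mid-way face would then be the average of the two images after each has been warped, triangle by triangle, into the average shape.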

-

The Morph Sequence

By changing the mix ratio between the two images, we can generate the intermediate images of the morph sequence. For a mix ratio \(\alpha\), each intermediate vertex is \(\alpha\) times its coordinates in image A plus \(1-\alpha\) times its coordinates in image B, and the pixel values are blended with the same weights. Note that every frame reuses the triangulation computed on the mean vertex positions, so that each triangle maps to the same region at every point in the morph sequence.
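The weighting described above can be written out as a short sketch. The function names and sample points are illustrative assumptions; the warped images passed to `cross_dissolve` are assumed to have already been warped into the intermediate shape.

```python
import numpy as np

def intermediate_shape(points_a, points_b, alpha):
    """Vertex positions for one frame: alpha * A + (1 - alpha) * B."""
    points_a = np.asarray(points_a, dtype=float)
    points_b = np.asarray(points_b, dtype=float)
    return alpha * points_a + (1 - alpha) * points_b

def cross_dissolve(warped_a, warped_b, alpha):
    """Blend two images (already warped to the same shape) with the same weights."""
    warped_a = np.asarray(warped_a, dtype=float)
    warped_b = np.asarray(warped_b, dtype=float)
    return alpha * warped_a + (1 - alpha) * warped_b

# Hypothetical corresponding points in image A and image B.
pts_a = [[0, 0], [10, 20]]
pts_b = [[4, 8], [30, 40]]
shape_quarter = intermediate_shape(pts_a, pts_b, alpha=0.25)

# One shape per frame; alpha = 0 reproduces B's shape and alpha = 1 reproduces A's.
frame_shapes = [intermediate_shape(pts_a, pts_b, a) for a in np.linspace(0.0, 1.0, 5)]
```

With this convention the sequence starts at image B and ends at image A; reversing the order of the `alpha` values plays the morph in the other direction.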

We show the results below:

Figures: Sample Morph Sequence; Sample Morph Sequence with Triangulation

-

The "Mean face" of a population

Using this annotated dataset, we can calculate a "mean face" of the population. We can also generate "meaned" versions of individual faces. Below, I also show my face morphed into the mean shape, and the mean face morphed into my face shape.
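One way to compute a mean face is sketched below, assuming the mean shape is the per-point average of all annotated shapes and the mean face is the pixel-wise average of every image after warping it into that shape. The `warp_fn` argument is a hypothetical placeholder for whatever triangle-based warp is available; the identity warp in the example exists only so the sketch runs on its own.

```python
import numpy as np

def mean_face(images, shapes, warp_fn):
    """Average shape and average appearance of a set of annotated faces.

    images:  sequence of K images with identical dimensions.
    shapes:  (K, N, 2) annotated points, in the same order for every face.
    warp_fn: callable (image, src_points, dst_points) -> warped image.
    """
    shapes = np.asarray(shapes, dtype=float)
    mean_shape = shapes.mean(axis=0)
    warped = [warp_fn(img, shape, mean_shape) for img, shape in zip(images, shapes)]
    return mean_shape, np.mean(warped, axis=0)

def identity_warp(image, src_points, dst_points):
    """Stand-in warp used only for this example; it leaves the image unchanged."""
    return image

images = [np.zeros((2, 2)), np.full((2, 2), 4.0)]
shapes = [[[0, 0], [2, 0], [0, 2]], [[0, 0], [4, 0], [0, 6]]]
mean_shape, face = mean_face(images, shapes, identity_warp)
```

Warping an individual into `mean_shape` gives the "meaned" version of that face, and warping the mean face into an individual's shape goes the other way.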

We can see that the morphing doesn't work very well when different parts of the face are visible in the source image and destination image. For sample 4, the person's head is turned to the side, and we can't effectively hallucinate the hidden side of her face.

Figures: Mean Face; Mean Face with Triangulation

Figures: Sample Individual 1; Sample Individual 1 morphed into mean

Figures: Sample Individual 2; Sample Individual 2 morphed into mean

Figures: Sample Individual 3; Sample Individual 3 morphed into mean

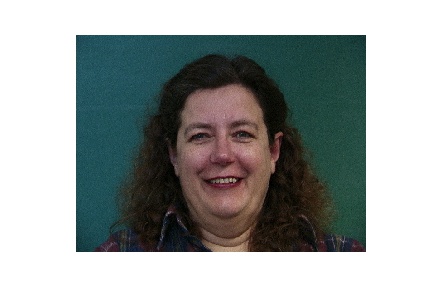

Figures: Sample Individual 4; Sample Individual 4 morphed into mean

Figures: My Face in Mean Shape; Mean Face in My Shape

-

Caricatures: Extrapolating from the mean

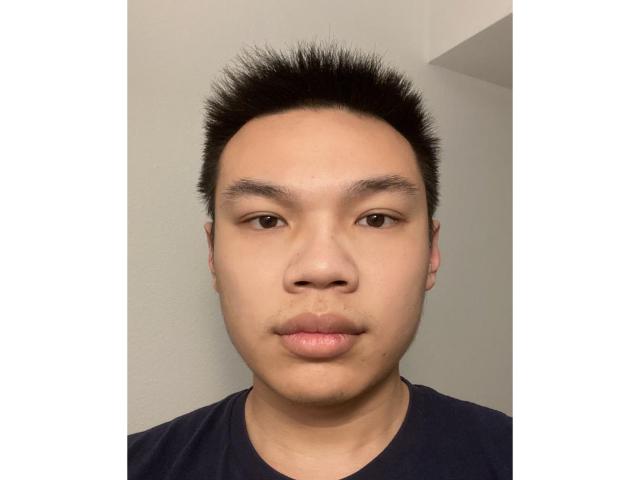

We can extrapolate from the mean by using an \(\alpha\) outside the range [0, 1]. Doing so enhances the features that distinguish an individual face from the mean. Below I show my face with differing amounts of extrapolation from the mean.
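A sketch of the shape extrapolation follows. How the "degree" maps to \(\alpha\) is an assumption here: the sketch takes \(\alpha = 1 + \text{degree}\), so a degree of 0 returns the original shape and larger degrees push each point further away from the mean. The function name and sample points are hypothetical.

```python
import numpy as np

def caricature_shape(my_points, mean_points, degree):
    """Extrapolate a shape away from the mean shape.

    Assumes alpha = 1 + degree, which equals
    my_points + degree * (my_points - mean_points).
    """
    my_points = np.asarray(my_points, dtype=float)
    mean_points = np.asarray(mean_points, dtype=float)
    alpha = 1.0 + degree
    return alpha * my_points + (1 - alpha) * mean_points

# Hypothetical landmark: 2 pixels to the right of its mean position.
mine = [[10.0, 10.0]]
mean = [[8.0, 10.0]]
exaggerated = caricature_shape(mine, mean, degree=0.5)
```

The caricature image would then be produced by warping the original face into the extrapolated shape.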

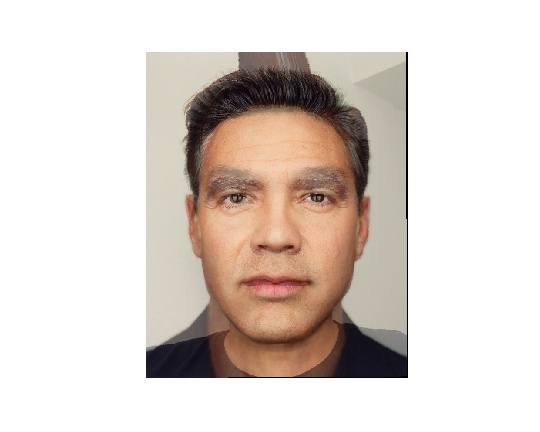

Figures: Original Image; Extrapolation with degree 0.2

Figures: Extrapolation with degree 0.4; Extrapolation with degree 0.6

As the extrapolation degree increases, my eyes visibly get bigger, my hair shrinks, and my mouth becomes more slanted.

-

Bells and Whistles: PCA

I tried using PCA to extract a "mean" feature vector and to blend that mean with the feature vector generated from my face. I also show the face for the "mean" feature vector, and visualize faces obtained by amplifying some features in the feature vector.
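A minimal PCA sketch along these lines is shown below. It assumes each face is flattened into a row vector and that the components come from an SVD of the mean-centered data; the function names and the random stand-in data are hypothetical, and the actual feature representation used for the figures may differ.

```python
import numpy as np

def fit_pca(faces, num_components):
    """Return the mean vector and the top principal components (as rows)."""
    X = np.asarray(faces, dtype=float)          # (K, D): one flattened face per row
    mean = X.mean(axis=0)
    _, _, vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, vt[:num_components]

def reconstruct(face, mean, components, scale=1.0):
    """Project a face onto the components, scale the coefficients, and rebuild.

    scale may be a scalar or one value per component: values above 1 amplify
    a component, values between 0 and 1 pull the face toward the mean.
    """
    coeffs = components @ (np.asarray(face, dtype=float) - mean)
    return mean + components.T @ (scale * coeffs)

# Random stand-in data: 6 "faces" with 4 features each.
rng = np.random.default_rng(0)
faces = rng.normal(size=(6, 4))
mean, comps = fit_pca(faces, num_components=4)

same = reconstruct(faces[0], mean, comps, scale=1.0)
amplified = reconstruct(faces[0], mean, comps, scale=np.array([2.0, 2.0, 1.0, 1.0]))
blended = reconstruct(faces[0], mean, comps, scale=0.5)
```

Under this centering convention, the coefficients of the training faces average to zero by construction, so the all-zero feature vector reconstructs to the mean face.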

Figures: Mean Face from PCA; Amplified Face (reconstructed by scaling the 20 largest principal components)

Figures: Amplified Face (reconstructed by scaling the 5 largest principal components more); Reconstructed face from my feature vector mixed with the mean

PCA wasn't as effective as morphing in the spatial domain, possibly because there was not enough data. Interestingly, the mean feature vector had values very close to 0, which possibly means that the mean face is a face with no detailed features. This is consistent with the PCA mean face being very blurry.