Project 4: mAcHIne lEarnIng :)

Aniruddha Nrusimha

Part 1: Image Classification

The network I ended up using had the following structure:

- 1 3x3 32 channel convolution

- 1 2x2 Maxpool

- 1 3x3 32 channel convolution

- 1 fully connected layer with (7x7x32) inputs and (7x7x32) outputs

- 1 fully connected layer with (7x7x32) inputs and 10 outputs
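As a sanity check on the layer sizes, here is a sketch that traces a 28x28 single-channel input through the layers and counts parameters. The input size is an assumption (it matches Fashion-MNIST, whose ten classes match the table below). The list above shows one max-pool, but with "same" padding on the 3x3 convs that only reaches 14x14x32, so flattening to 7x7x32 needs a second halving. This sketch assumes padding 1 on each conv and a second 2x2 max-pool after the second conv; both, and the helper names, are hypothetical.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution or pooling window."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_classifier(h=28, w=28, in_ch=1, n_classes=10):
    """Return (flattened feature size, total parameter count).

    Assumes padding=1 on both 3x3 convs and a 2x2 max-pool after each conv
    (the second pool is an assumption, not listed in the report).
    """
    params = 0
    # conv1: 3x3, in_ch -> 32 channels (weights + biases), then 2x2 max-pool
    params += 3 * 3 * in_ch * 32 + 32
    h, w = conv_out(h, 3, padding=1), conv_out(w, 3, padding=1)
    h, w = conv_out(h, 2, stride=2), conv_out(w, 2, stride=2)
    # conv2: 3x3, 32 -> 32 channels, then the assumed second 2x2 max-pool
    params += 3 * 3 * 32 * 32 + 32
    h, w = conv_out(h, 3, padding=1), conv_out(w, 3, padding=1)
    h, w = conv_out(h, 2, stride=2), conv_out(w, 2, stride=2)
    flat = h * w * 32
    # fc1: flat -> flat, fc2: flat -> n_classes (weights + biases)
    params += flat * flat + flat
    params += flat * n_classes + n_classes
    return flat, params


flat, params = trace_classifier()
print(flat, params)  # 1568 2485450
```

Under these assumptions nearly all of the roughly 2.5M parameters sit in the first fully connected layer (1568 x 1568 weights).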

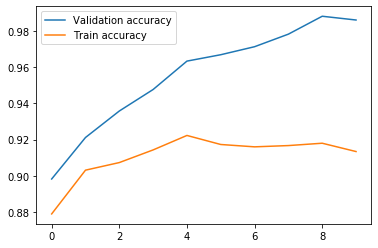

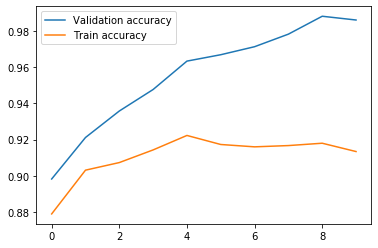

It trained pretty well. Here are the training and validation accuracy curves versus epoch.

Validation and Training Accuracy versus epochs

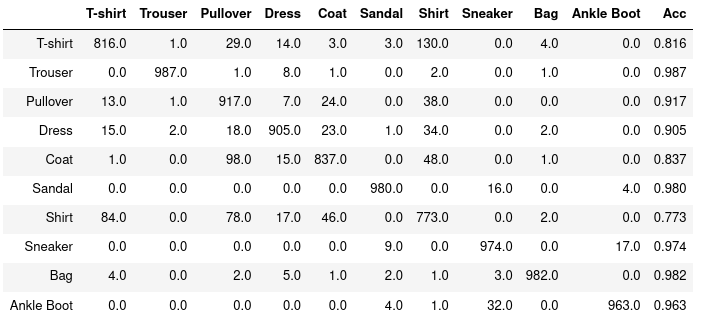

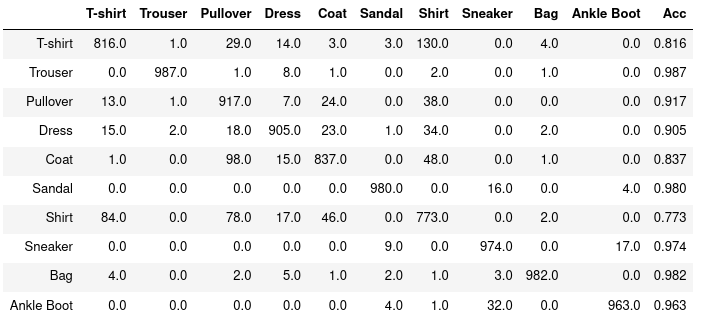

To investigate which mistakes the classifier was making, I made a confusion matrix. Here it is!

Table. Rows are actual labels, columns are predicted labels, and the rightmost column is per-class accuracy.

Shirts and T-shirts are often mixed up, and as a result they have the two lowest per-class accuracies.
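A minimal sketch of how such a table and its accuracy column can be computed from lists of actual and predicted label indices. The function names and the toy three-class labels are hypothetical, not taken from the project code.

```python
def confusion_matrix(actual, predicted, n_classes):
    """Rows are actual labels, columns are predicted labels."""
    matrix = [[0] * n_classes for _ in range(n_classes)]
    for a, p in zip(actual, predicted):
        matrix[a][p] += 1
    return matrix


def per_class_accuracy(matrix):
    """Diagonal entry over row total: fraction of each actual class predicted correctly."""
    accs = []
    for i, row in enumerate(matrix):
        total = sum(row)
        accs.append(row[i] / total if total else 0.0)
    return accs


# Toy example with 3 classes (hypothetical labels)
actual = [0, 0, 1, 1, 2, 2, 2]
predicted = [0, 1, 1, 1, 2, 0, 2]
m = confusion_matrix(actual, predicted, 3)
print(m)                      # [[1, 1, 0], [0, 2, 0], [1, 0, 2]]
print(per_class_accuracy(m))  # [0.5, 1.0, 0.6666666666666666]
```

Off-diagonal entries show which pairs get confused; a large value at row "Shirt", column "T-shirts" (and vice versa) is what drags those two accuracies down.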

Here are some correct and incorrect classifications:

| Class | First Correct | Second Correct | First Wrong | Second Wrong |
|---|---|---|---|---|
| Ankle Boot | | | | |
| Bag | | | | |
| Coat | | | | |
| Dress | | | | |
| Pullover | | | | |
| Sandals | | | | |
| Shirt | | | | |
| Sneaker | | | | |
| Trousers | | | | |
| T-shirts | | | | |

As you can see, the misclassified images tend to resemble one particular other class. For example, the misclassified sandals look like sneakers and vice versa, and the same holds for T-shirts and shirts.
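The report doesn't say how the examples in the table were chosen. One simple way is to scan the validation set in order and keep, for each actual class, the first two correctly and first two incorrectly classified indices. This is a sketch with a hypothetical helper name and toy labels.

```python
def pick_examples(actual, predicted, n_classes, k=2):
    """For each actual class, indices of the first k correct and first k wrong predictions."""
    correct = {c: [] for c in range(n_classes)}
    wrong = {c: [] for c in range(n_classes)}
    for idx, (a, p) in enumerate(zip(actual, predicted)):
        bucket = correct if a == p else wrong
        if len(bucket[a]) < k:
            bucket[a].append(idx)
    return correct, wrong


actual = [0, 0, 1, 1, 0, 1, 0]
predicted = [0, 1, 1, 0, 0, 1, 1]
correct, wrong = pick_examples(actual, predicted, 2)
print(correct)  # {0: [0, 4], 1: [2, 5]}
print(wrong)    # {0: [1, 6], 1: [3]}
```

A class can end up with fewer than k wrong examples (class 1 above) if the model rarely misclassifies it.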

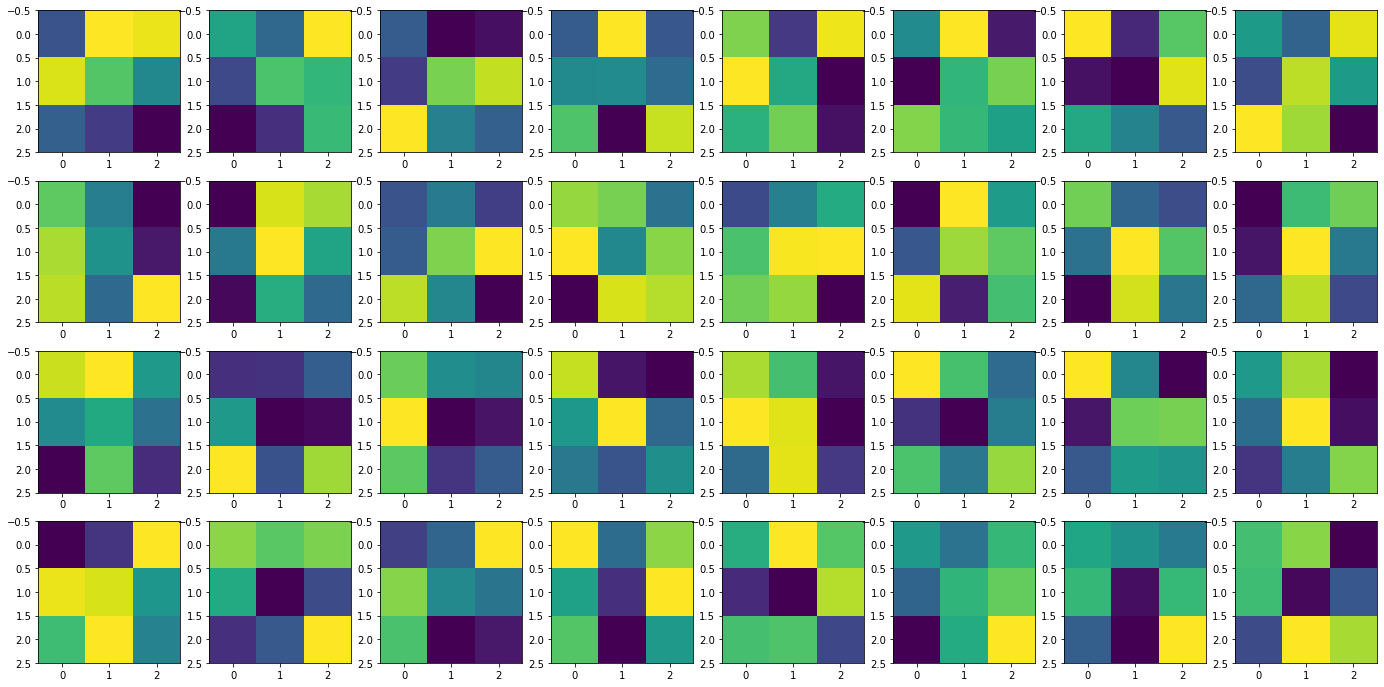

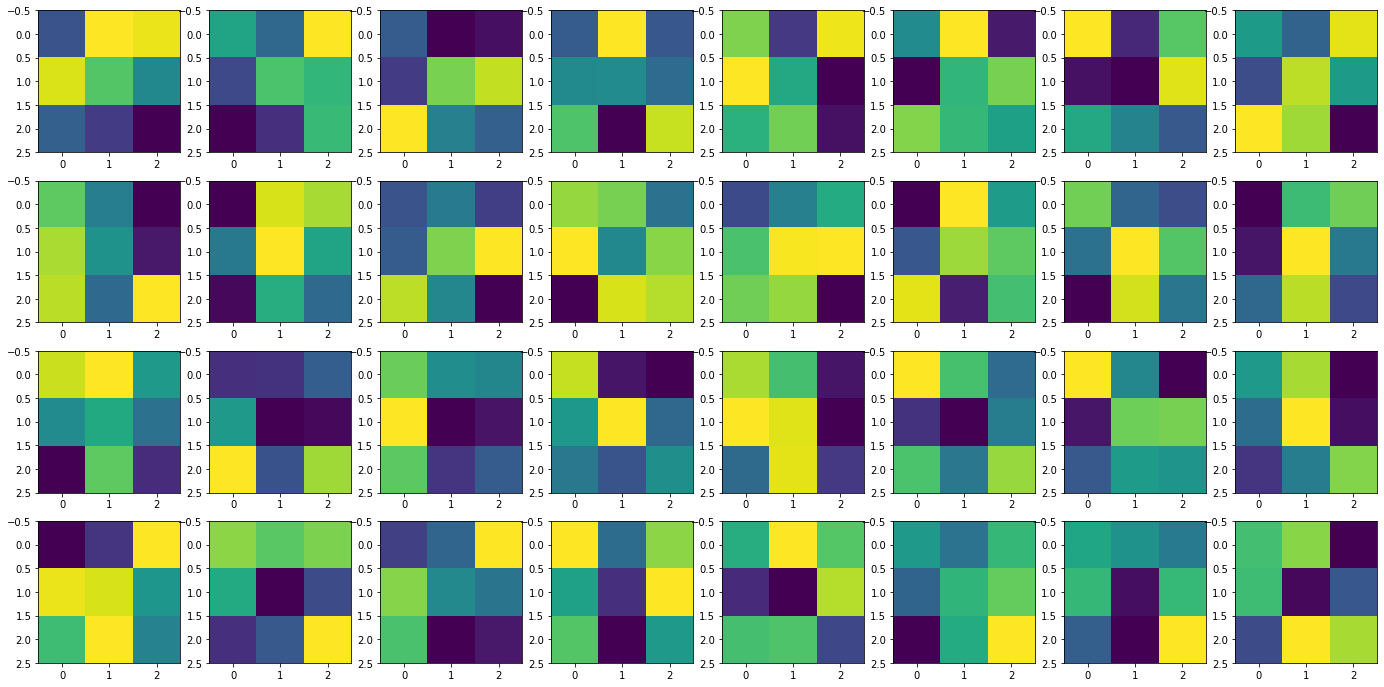

Here are the learned filters for the first layer. Brighter pixels correspond to higher weights.

Learned filters in the first layer. 32 total
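To display weights as brightness, each 3x3 kernel has to be mapped into a displayable range. A minimal sketch of one common choice, min-max scaling each filter independently to [0, 1] so that its largest weight is brightest. Per-filter (rather than shared) scaling is an assumption, since the report doesn't say which was used.

```python
def normalize_filter(kernel):
    """Min-max scale a 2D kernel (list of rows) to [0, 1] for display."""
    flat = [v for row in kernel for v in row]
    lo, hi = min(flat), max(flat)
    span = hi - lo
    if span == 0:
        # constant filter: show it as uniform mid-gray
        return [[0.5 for _ in row] for row in kernel]
    return [[(v - lo) / span for v in row] for row in kernel]


kernel = [[-1.0, 0.0, 1.0],
          [-2.0, 0.0, 2.0],
          [-1.0, 0.0, 1.0]]
print(normalize_filter(kernel))
# [[0.25, 0.5, 0.75], [0.0, 0.5, 1.0], [0.25, 0.5, 0.75]]
```

With per-filter scaling, brightness is only comparable within a filter; a shared min and max across all 32 filters would make brightness comparable between filters instead.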

Segmentation

Here is the architecture I ended up with. I first defined a building block that is common in the literature:

- Enough padding to preserve the spatial size (I chose reflection padding because it seemed to suit the highly symmetrical buildings)

- Conv2D layer with variable stride and filter size

- Batchnorm

- ReLU
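For a stride-1 convolution with an odd kernel size k, padding (k - 1) / 2 pixels on each side keeps the output the same size as the input. The sketch below shows that arithmetic and what reflection padding does: it mirrors the interior pixels without repeating the edge pixel, which is the behavior of PyTorch's `nn.ReflectionPad2d`. The helper names are illustrative only.

```python
def same_padding(kernel):
    """Padding per side that preserves size for a stride-1 conv with an odd kernel."""
    assert kernel % 2 == 1, "odd kernel sizes only"
    return (kernel - 1) // 2


def reflect_pad_1d(row, pad):
    """Mirror `pad` values at each end, excluding the edge value itself."""
    assert 0 <= pad < len(row)
    left = row[1:pad + 1][::-1]
    right = row[-pad - 1:-1][::-1]
    return left + row + right


def reflect_pad_2d(image, pad):
    """Reflection-pad each row, then each column of the row-padded image."""
    rows = [reflect_pad_1d(r, pad) for r in image]
    cols = [reflect_pad_1d(list(c), pad) for c in zip(*rows)]
    return [list(r) for r in zip(*cols)]


print(same_padding(7), same_padding(3))  # 3 1
print(reflect_pad_1d([0, 1, 2, 3], 2))   # [2, 1, 0, 1, 2, 3, 2, 1]
```

Compared with zero padding, reflection avoids an artificial dark border, so repeated structures such as window rows continue plausibly past the image edge.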

These blocks were then arranged as follows, where C denotes the channel size, which I experimented with:

- Layer 1: 7x7 conv with C channels and stride 1

- Layer 2: 3x3 conv with 2 * C channels and stride 2

- Layer 3: 3x3 conv with 2 * C channels and stride 1

- Layer 4: 3x3 conv with 2 * C channels and stride 1

- A 2D upsampling layer (2x) to restore the feature map to the original resolution

- Layer 5: 3x3 conv with 2 * C channels and stride 1

- A concatenation of the outputs of Layers 1 and 5

- 1x1 conv with 5 output channels (one per class)
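To check that the concatenation lines up, this sketch traces (channels, height, width) through the layers for C = 32. It assumes "same" padding in every block, a 2x upsample, and a 256x256 RGB input; the input size and channel count are assumptions for illustration, since the report doesn't state them. Because Layer 2 halves the resolution and the upsample doubles it back, Layers 1 and 5 match spatially for even input sizes, and concatenating C and 2C channels gives 3C = 96 channels going into the final 1x1 conv.

```python
def conv_shape(shape, out_ch, kernel, stride=1):
    """(C, H, W) after a conv block padded by (kernel - 1) // 2 on each side."""
    _, h, w = shape
    pad = (kernel - 1) // 2

    def out(n):
        return (n + 2 * pad - kernel) // stride + 1

    return (out_ch, out(h), out(w))


def trace_segmenter(h=256, w=256, in_ch=3, c=32, n_classes=5):
    x = (in_ch, h, w)
    l1 = conv_shape(x, c, 7)              # Layer 1: 7x7, C, stride 1
    x = conv_shape(l1, 2 * c, 3, 2)       # Layer 2: 3x3, 2C, stride 2
    x = conv_shape(x, 2 * c, 3)           # Layer 3
    x = conv_shape(x, 2 * c, 3)           # Layer 4
    x = (x[0], x[1] * 2, x[2] * 2)        # 2x upsampling
    l5 = conv_shape(x, 2 * c, 3)          # Layer 5
    assert l1[1:] == l5[1:], "skip connection needs matching H, W"
    cat = (l1[0] + l5[0], l5[1], l5[2])   # channel-wise concatenation
    out = conv_shape(cat, n_classes, 1)   # 1x1 conv to per-class scores
    return cat, out


cat, out = trace_segmenter()
print(cat, out)  # (96, 256, 256) (5, 256, 256)
```

With an odd input size the stride-2 layer rounds up and the upsample overshoots by one pixel, which the assertion would catch.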

I used the following hyperparameters:

- Channel size: C = 32. C = 16 performed worse, while 32 and 64 performed similarly, so I kept the faster C = 32.

- I used standard (unweighted) cross-entropy loss. I tried a class-weighted loss to improve performance on the rarer classes, but it actually decreased my mAP.

- I used Adam with its default parameters (initial learning rate 1e-3, betas = (0.9, 0.999)) and a weight decay of 1e-5. Higher learning rates initially gave better results, but after 10 epochs 1e-3 performed best.

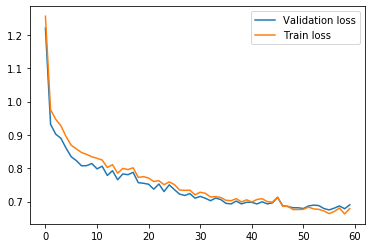

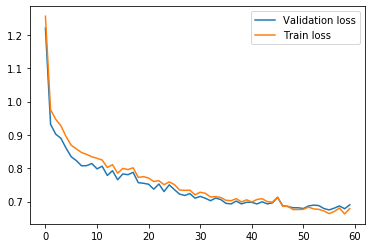

- I trained for 60 epochs for my final results. The training and validation loss curves are below.

Validation and Training Loss over 60 epochs

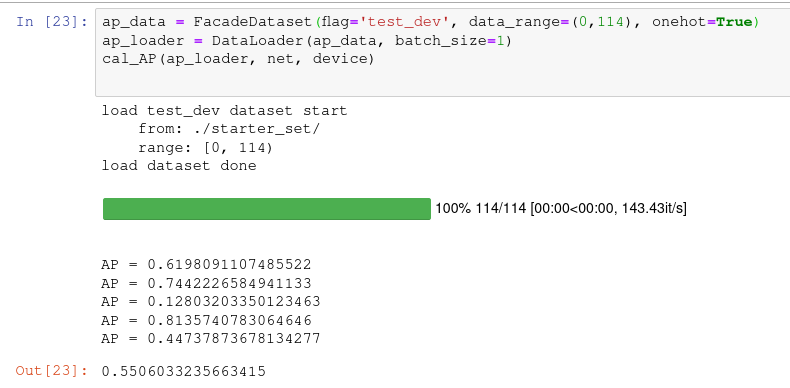

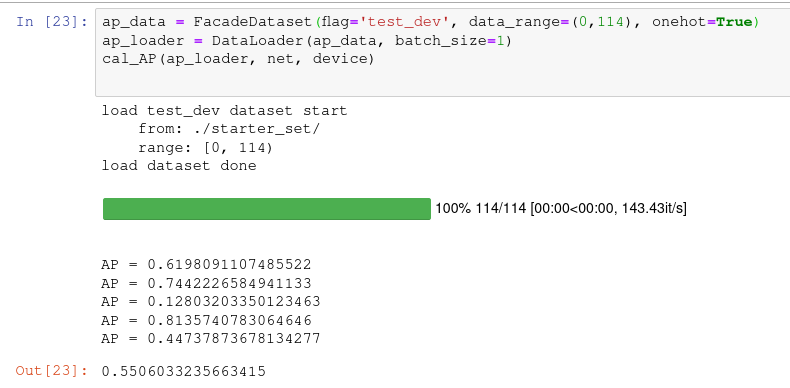

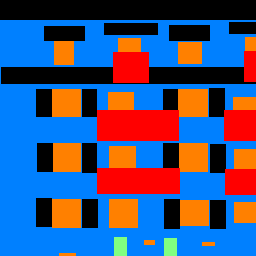
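The loss and optimizer settings above can be sketched in plain Python for a single pixel and a single scalar weight. Two parts are assumptions: the class-weighted variant uses inverse-frequency weights (the report doesn't say which weighting was tried), and weight decay is applied as in PyTorch's `torch.optim.Adam`, by adding `weight_decay * param` to the gradient before the moment updates (unlike AdamW's decoupled decay). All function names are hypothetical.

```python
import math


def cross_entropy(logits, target, weights=None):
    """Softmax cross-entropy for one pixel; `weights` optionally scales by true class."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    loss = log_sum - logits[target]
    return loss * (weights[target] if weights is not None else 1.0)


def inverse_frequency_weights(pixel_counts):
    """Hypothetical weighting: rarer classes get proportionally larger weights."""
    total = sum(pixel_counts)
    n = len(pixel_counts)
    return [total / (n * c) for c in pixel_counts]


def adam_step(param, grad, state, lr=1e-3, betas=(0.9, 0.999), eps=1e-8, weight_decay=1e-5):
    """One Adam update with L2 weight decay folded into the gradient."""
    b1, b2 = betas
    grad = grad + weight_decay * param
    state["t"] += 1
    state["m"] = b1 * state["m"] + (1 - b1) * grad
    state["v"] = b2 * state["v"] + (1 - b2) * grad * grad
    m_hat = state["m"] / (1 - b1 ** state["t"])  # bias-corrected first moment
    v_hat = state["v"] / (1 - b2 ** state["t"])  # bias-corrected second moment
    return param - lr * m_hat / (math.sqrt(v_hat) + eps)


print(round(cross_entropy([0.0, 0.0], 0), 4))  # 0.6931 (= ln 2)
print(inverse_frequency_weights([900, 100]))   # [0.5555555555555556, 5.0]
state = {"t": 0, "m": 0.0, "v": 0.0}
print(adam_step(1.0, 0.5, state))  # about 0.999: the first step moves by roughly lr
```

A weighted loss like this pushes the model to predict rare classes more often, which can cost precision on them; that is one plausible reason it lowered mAP here, though the report doesn't diagnose it.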

Here is my per-class AP (on the first try :) )

Average Precision for each of the five classes

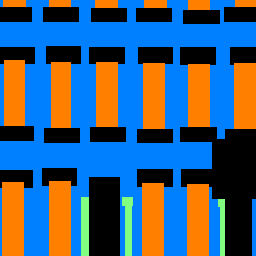

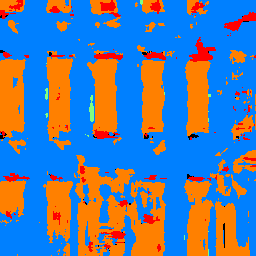

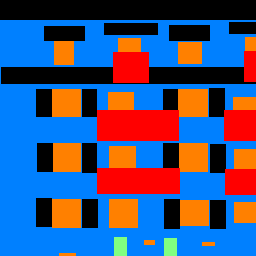

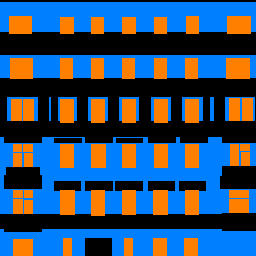

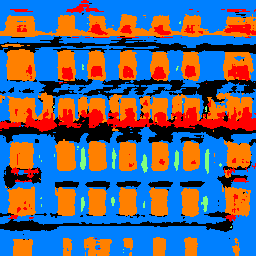
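A minimal sketch of per-class average precision for segmentation, assuming each pixel's predicted probability for a class is its score and the pixels of that class are the positives. AP is then the precision averaged over the rank of each positive pixel, i.e. the step-wise area under the precision-recall curve, and mAP is the mean over the five classes. The function name is hypothetical, and tied scores are not grouped here (scikit-learn's `average_precision_score` treats tied scores as one threshold, so results can differ slightly when scores tie).

```python
def average_precision(scores, labels):
    """Sum over positives of (recall increase) * (precision at that rank).

    scores: predicted probability of the class for each pixel
    labels: 1 if the pixel truly belongs to the class, else 0
    """
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    n_pos = sum(labels)
    if n_pos == 0:
        return 0.0
    tp = 0
    ap = 0.0
    for rank, i in enumerate(order, start=1):
        if labels[i]:
            tp += 1
            ap += (tp / rank) / n_pos  # recall rises by 1 / n_pos at each positive
    return ap


scores = [0.9, 0.8, 0.7, 0.6]
labels = [1, 0, 1, 0]
print(average_precision(scores, labels))  # about 0.8333, i.e. (1/1 + 2/3) / 2
```

For the full images, `scores` and `labels` would be the flattened per-pixel values for one class over the whole validation set.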

|

Image

|

Ground Truth

|

Prediction

|

Image 0

Image 0

|

Image 0 Label

Image 0 Label

|

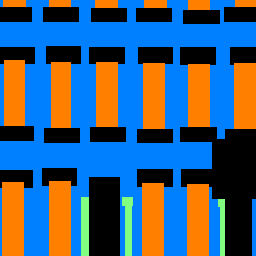

Image 0 Prediction

Image 0 Prediction

|

Image 1

Image 1

|

Image 1 Label

Image 1 Label

|

Image 1 Prediction

Image 1 Prediction

|

Image 2

Image 2

|

Image 2 Label

Image 2 Label

|

Image 2 Prediction

Image 2 Prediction

|

As you can see, the model struggles a bit with borders but overall performs very well.